This page applies to Apigee and Apigee hybrid.

View Apigee Edge documentation.


Apigee enhances the availability of your APIs by providing built-in support for load balancing and failover across multiple backend server instances.

TargetServers decouple concrete endpoint URLs from TargetEndpoint configurations. Instead of defining a concrete URL in the configuration, you can configure one or more named TargetServers. Then, each TargetServer is referenced by name in a TargetEndpoint HTTPConnection.

Videos

Watch the following videos to learn more about API routing and load balancing using target servers:

| Video | Description |
|---|---|
| Load balancing using target servers | Load balancing APIs across target servers. |
| API routing based on environment using target servers | Route an API to a different target server based on the environment. |

About the TargetServer configuration

A TargetServer configuration consists of a name, a host, a protocol, and a port, with an additional element to indicate whether the TargetServer is enabled or disabled.

The following code provides an example of a TargetServer configuration:

{

"name": "target1",

"host": "1.mybackendservice.com",

"protocol": "http",

"port": "80",

"isEnabled": "true"

}

For more information on the TargetServers API, see organizations.environments.targetservers.

See the schema for TargetServer and other entities on GitHub.
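As a rough illustration, the fields described above can be assembled and sanity-checked in a few lines of Python before they are sent to the API. The helper name `make_target_server` and its validation rules are assumptions made for this sketch; they are not part of Apigee.

```python
import json


def make_target_server(name, host, port, protocol="http", enabled=True):
    """Build a TargetServer definition as a plain dict (illustrative helper)."""
    if not name or not host:
        raise ValueError("name and host are required")
    if not 1 <= int(port) <= 65535:
        raise ValueError("port must be between 1 and 65535")
    return {
        "name": name,
        "host": host,
        "protocol": protocol,
        "port": int(port),
        "isEnabled": bool(enabled),
    }


target1 = make_target_server("target1", "1.mybackendservice.com", 80)
print(json.dumps(target1, indent=2))
```

Building the definition in code makes it easier to generate one TargetServer per environment from the same template.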

Creating TargetServers

Create TargetServers using the Apigee UI or API as described in the following sections.

Apigee in Cloud console

To create TargetServers using Apigee in Cloud console:

- Sign in to the Apigee UI in Cloud console (https://console.cloud.google.com/apigee).

- Select Management > Environments > TargetServers.

- Select the environment in which you want to define a new TargetServer.

- Click Target Servers at the top of the pane.

- Click + Create Target Server.

Enter a name, host, protocol and port in the fields provided. The options for Protocol are: HTTP for REST-based target servers, gRPC - Target for gRPC-based target servers, or gRPC - External callout. See Creating gRPC API proxies for information on gRPC proxy support.

Optionally, you can select Enable SSL. See SSL certificates overview.

- Click Create.

Classic Apigee

To create TargetServers using the classic Apigee UI:

- Sign in to the Apigee UI.

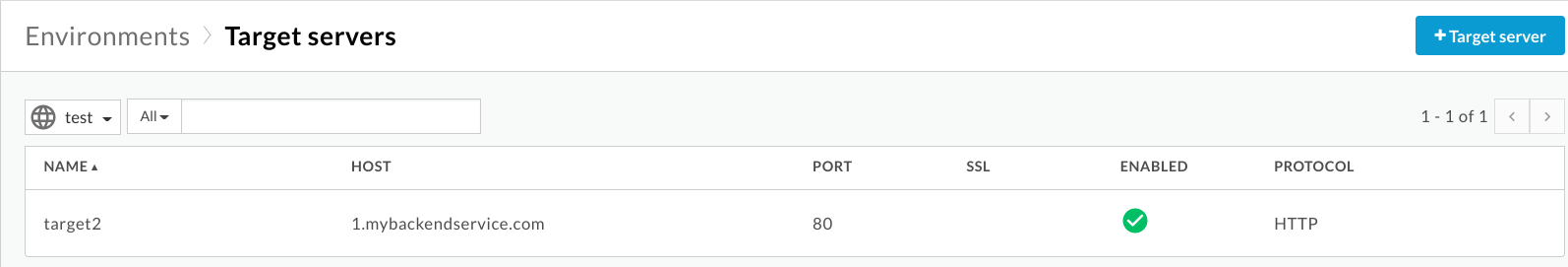

- Select Admin > Environments > TargetServers.

- From the Environment drop-down list, select the environment in which you want to define a new TargetServer.

The Apigee UI displays a list of current TargetServers in the selected environment:

- Click +Target server to add a new TargetServer to the selected environment.

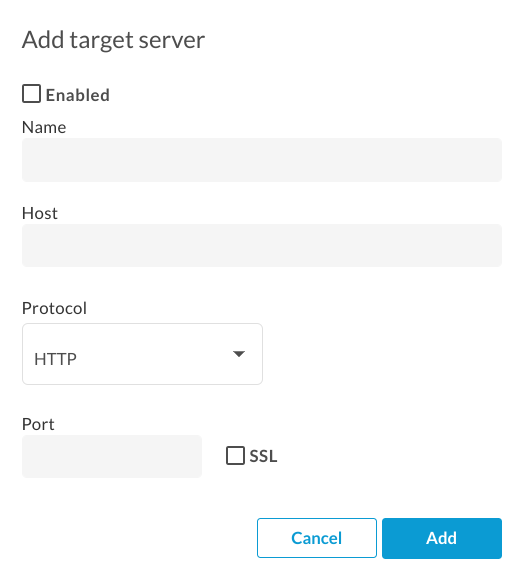

The Add target server dialog box displays:

- Click Enabled to enable the new TargetServer definition immediately after you create it.

Enter a name, host, protocol and port in the fields provided. The options for Protocol are HTTP or GRPC.

Optionally, you can select the SSL checkbox. For more information about these fields, see TargetServers (4MV4D video).

- Click Add.

Apigee creates the new TargetServer definition.

- After you create a new TargetServer, you can edit your API proxy and change the <HTTPTargetConnection> element to reference the new definition.

Apigee API

The following sections provide examples of using the Apigee API to create and list TargetServers.

- Creating a TargetServer in an environment using the API

- Listing the TargetServers in an environment using the API

For more information, see the TargetServers API.

Creating a TargetServer in an environment using the API

To create a TargetServer named target1 that connects to 1.mybackendservice.com on port 80, use the following API call:

curl "https://apigee.googleapis.com/v1/organizations/$ORG/environments/$ENV/targetservers" \

-X POST \

-H "Content-Type:application/json" \

-H "Authorization: Bearer $TOKEN" \

-d '

{

"name": "target1",

"host": "1.mybackendservice.com",

"protocol": "http",

"port": "80",

"isEnabled": "true"

}'

Where $TOKEN is set to your OAuth 2.0 access token, as described in Obtaining an OAuth 2.0 access token. For information about the curl options used in this example, see Using curl. For a description of the environment variables used, see Setting environment variables for Apigee API requests.

The following provides an example of the response:

{

"host" : "1.mybackendservice.com",

"protocol": "http",

"isEnabled" : true,

"name" : "target1",

"port" : 80

}

Create a second TargetServer using the following API call. By defining two TargetServers, you provide two URLs that a TargetEndpoint can use for load balancing:

curl "https://apigee.googleapis.com/v1/organizations/$ORG/environments/$ENV/targetservers" \

-X POST \

-H "Content-Type:application/json" \

-H "Authorization: Bearer $TOKEN" \

-d '

{

"name": "target2",

"host": "2.mybackendservice.com",

"protocol": "http",

"port": "80",

"isEnabled": "true"

}'

The following provides an example of the response:

{

"host" : "2.mybackendservice.com",

"protocol": "http",

"isEnabled" : true,

"name" : "target2",

"port" : 80

}
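The same POST call can be prepared from a script. The following is a minimal sketch using only the Python standard library; the function name `build_create_request` and the placeholder values `my-org`, `test`, and `ACCESS_TOKEN` are assumptions for illustration. The request is built but not sent, so the sketch can be inspected without credentials.

```python
import json
import urllib.request

API_ROOT = "https://apigee.googleapis.com/v1"


def build_create_request(org, env, token, target_server):
    """Return a POST request that creates a TargetServer (not sent here)."""
    url = f"{API_ROOT}/organizations/{org}/environments/{env}/targetservers"
    body = json.dumps(target_server).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
    )


# Placeholder values; substitute your own organization, environment, and token.
request = build_create_request(
    "my-org",
    "test",
    "ACCESS_TOKEN",
    {"name": "target1", "host": "1.mybackendservice.com",
     "protocol": "http", "port": 80, "isEnabled": True},
)
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request)
```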

Listing the TargetServers in an environment using the API

To list the TargetServers in an environment, use the following API call:

curl "https://apigee.googleapis.com/v1/organizations/$ORG/environments/$ENV/targetservers" \
-H "Authorization: Bearer $TOKEN"

The following provides an example of the response:

[ "target2", "target1" ]

There are now two TargetServers available for use by API proxies deployed in the test environment. To load balance traffic across these TargetServers, you configure the HTTP connection in an API proxy's target endpoint to use the TargetServers.

Configuring a TargetEndpoint to load balance across named TargetServers

Now that you have two TargetServers available, you can modify the TargetEndpoint HTTP connection setting to reference those two TargetServers by name:

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Server name="target1" />

<Server name="target2" />

</LoadBalancer>

<Path>/test</Path>

</HTTPTargetConnection>

</TargetEndpoint>

The configuration above is the most basic load balancing configuration. The load balancer supports three load balancing algorithms: Round Robin, Weighted, and Least Connection. Round Robin is the default algorithm. Since no algorithm is specified in the configuration above, outbound requests from the API proxy to the backend servers will alternate, one for one, between target1 and target2.

The <Path> element forms the base path of the TargetEndpoint URI for all TargetServers. It is used only when <LoadBalancer> is used; otherwise, it is ignored. In the example above, a request routed to target1 is sent to http://target1/test, and likewise for the other TargetServers.
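The alternation and the role of the path can be pictured with a small simulation. This is only a sketch of the round robin idea under the assumptions stated in the comments, not Apigee's implementation; the variable names are illustrative.

```python
from itertools import cycle, islice

servers = ["target1", "target2"]  # order as listed in <LoadBalancer>
base_path = "/test"               # value of <Path>

# Round robin: hand out servers in listed order, wrapping around at the end.
rotation = cycle(servers)
urls = [f"http://{name}{base_path}" for name in islice(rotation, 4)]
print(urls)
```

Four consecutive requests alternate between the two servers, and each URL carries the same base path.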

Configuring load balancer options

You can tune availability by configuring the options for load balancing and failover at the load balancer and TargetServer level as described in the following sections.

Algorithm

Sets the algorithm used by <LoadBalancer>. The available algorithms are RoundRobin, Weighted, and LeastConnections, each of which is documented below.

Round robin

The default algorithm, round robin, forwards a request to each TargetServer in the order in which the servers are listed in the target endpoint HTTP connection. For example:

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Algorithm>RoundRobin</Algorithm>

<Server name="target1" />

<Server name="target2" />

</LoadBalancer>

<Path>/test</Path>

</HTTPTargetConnection>

</TargetEndpoint>

Weighted

The weighted load balancing algorithm enables you to configure proportional traffic loads for your TargetServers. The weighted LoadBalancer distributes requests to your TargetServers in direct proportion to each TargetServer's weight. Therefore, the weighted algorithm requires you to set a weight attribute for each TargetServer. For example:

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Algorithm>Weighted</Algorithm>

<Server name="target1">

<Weight>1</Weight>

</Server>

<Server name="target2">

<Weight>2</Weight>

</Server>

</LoadBalancer>

<Path>/test</Path>

</HTTPTargetConnection>

</TargetEndpoint>

In this example, two requests will be routed to target2 for every one request routed to target1.
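One simple way to picture the 1:2 split is to repeat each server name once per unit of weight and cycle through the result. This is an illustrative sketch only; the actual order in which Apigee interleaves weighted requests is not described here and may differ.

```python
from collections import Counter
from itertools import cycle, islice

weights = {"target1": 1, "target2": 2}  # values of <Weight>

# Repeat each server name once per unit of weight, then cycle through the result.
schedule = [name for name, weight in weights.items() for _ in range(weight)]
picks = list(islice(cycle(schedule), 6))
counts = Counter(picks)
print(counts)
```

Over six requests, the counts stay in the same proportion as the configured weights.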

Least Connection

LoadBalancers configured to use the least connection algorithm route outbound requests to the TargetServer with fewest open HTTP connections. For example:

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Algorithm>LeastConnections</Algorithm>

<Server name="target1" />

<Server name="target2" />

</LoadBalancer>

<Path>/test</Path>

</HTTPTargetConnection>

</TargetEndpoint>
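The selection rule can be sketched as picking the server with the smallest count of open connections. The function name and the tie-breaking rule below are assumptions of this sketch, not documented Apigee behavior.

```python
def pick_least_connections(open_connections):
    """Return the server name with the fewest open connections.

    Ties go to the server listed first (an assumption of this sketch).
    """
    return min(open_connections, key=open_connections.get)


open_connections = {"target1": 7, "target2": 3}
chosen = pick_least_connections(open_connections)
open_connections[chosen] += 1  # the new request opens a connection
print(chosen, open_connections)
```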

Maximum failures

The maximum number of failed requests from the API proxy to the TargetServer that results in the request being redirected to another TargetServer.

A response failure means Apigee doesn't receive any response from a TargetServer. When this happens, the failure counter increments by one.

However, when Apigee does receive a response from a target, even if the response is an HTTP error (such as 500), that counts as a response from the TargetServer, and the failure counter is reset. To help ensure that bad HTTP responses (such as 500) also increment the failure counter to take an unhealthy server out of load balancing rotation as soon as possible, you can add the <ServerUnhealthyResponse> element with <ResponseCode> child elements to your load balancer configuration. Apigee will also count responses with those codes as failures.

In the following example, target1 will be removed from rotation after five failed requests, where 500, 502, and 503 responses from the TargetServer also count as failures.

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Algorithm>RoundRobin</Algorithm>

<Server name="target1" />

<Server name="target2" />

<MaxFailures>5</MaxFailures>

<ServerUnhealthyResponse>

<ResponseCode>500</ResponseCode>

<ResponseCode>502</ResponseCode>

<ResponseCode>503</ResponseCode>

</ServerUnhealthyResponse>

</LoadBalancer>

<Path>/test</Path>

</HTTPTargetConnection>

</TargetEndpoint>

The MaxFailures default is 0. This means that Apigee always tries to connect to the target for each request and never removes the TargetServer from the rotation.

It is best to use MaxFailures > 0 with a HealthMonitor. If you configure MaxFailures > 0, the TargetServer is removed from rotation when the target fails the number of times you indicate. When a HealthMonitor is in place, Apigee automatically puts the TargetServer back in rotation after the target is up and running again, according to the configuration of that HealthMonitor. See Health monitoring for more information.

Alternatively, if you configure MaxFailures > 0 and you do not configure a HealthMonitor, Apigee will automatically take the TargetServer out of rotation when the first failure is detected. Apigee will check the health of the TargetServer every five minutes and return it to the rotation when it responds normally.
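The counting rules described in this section can be modeled with a small class. This is a toy model for reasoning about the configuration, not Apigee code: the class name `FailureTracker` is hypothetical, and the sketch deliberately ignores how a HealthMonitor returns a server to rotation.

```python
class FailureTracker:
    """Toy model of the per-TargetServer failure counter (not Apigee code)."""

    def __init__(self, max_failures, unhealthy_codes=()):
        self.max_failures = max_failures
        self.unhealthy_codes = set(unhealthy_codes)
        self.failures = 0
        self.in_rotation = True

    def record(self, status):
        """status is an HTTP status code, or None when no response arrived."""
        if status is None or status in self.unhealthy_codes:
            self.failures += 1
        else:
            self.failures = 0  # any other response resets the counter
        # MaxFailures of 0 means the server is never removed.
        if self.max_failures > 0 and self.failures >= self.max_failures:
            self.in_rotation = False


tracker = FailureTracker(max_failures=5, unhealthy_codes=(500, 502, 503))
for status in (500, None, 503, 502, 500):
    tracker.record(status)
print(tracker.failures, tracker.in_rotation)
```

Because a healthy response resets the counter, only an unbroken run of failures reaches the threshold in this model.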

Retry

If retry is enabled, a request will be retried whenever a response failure (I/O error or HTTP timeout) occurs or the response received matches a value set by <ServerUnhealthyResponse>. See Maximum failures above for more on setting <ServerUnhealthyResponse>.

By default, <RetryEnabled> is set to true. Set it to false to disable retry.

For example:

<RetryEnabled>false</RetryEnabled>

IsFallback

One (and only one) TargetServer can be set as the fallback server. The fallback TargetServer is not included in load balancing routines until all other TargetServers are identified as unavailable by the load balancer. When the load balancer determines that all TargetServers are unavailable, all traffic is routed to the fallback server. For example:

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Algorithm>RoundRobin</Algorithm>

<Server name="target1" />

<Server name="target2" />

<Server name="target3">

<IsFallback>true</IsFallback>

</Server>

</LoadBalancer>

<Path>/test</Path>

</HTTPTargetConnection>

</TargetEndpoint>

The configuration above results in round robin load balancing between target1 and target2 until both target1 and target2 are unavailable. When target1 and target2 are unavailable, all traffic is routed to target3.
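The fallback rule can be expressed as a short selection function. The sketch below assumes availability is already known for each server; the function name `eligible_servers` and its data shapes are illustrative only.

```python
def eligible_servers(servers, healthy):
    """Return the servers that should receive traffic.

    servers: list of (name, is_fallback) in configuration order.
    healthy: set of names currently considered available.
    """
    primaries = [n for n, is_fallback in servers if not is_fallback and n in healthy]
    if primaries:
        return primaries
    # Every regular server is unavailable: route everything to the fallback.
    return [n for n, is_fallback in servers if is_fallback]


servers = [("target1", False), ("target2", False), ("target3", True)]
all_up = eligible_servers(servers, {"target1", "target2", "target3"})
one_down = eligible_servers(servers, {"target2", "target3"})
both_down = eligible_servers(servers, {"target3"})
print(all_up, one_down, both_down)
```

Note that the fallback server receives no traffic while at least one regular server is still available.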

Path

Path defines a URI fragment that will be appended to all requests issued by the TargetServer to the backend server.

This element accepts a literal string path or a message template. A message template lets you perform variable string substitution at runtime.

For example, in the following target endpoint definition, the value of {mypath} is used for the path:

<HTTPTargetConnection>

<SSLInfo>

<Enabled>true</Enabled>

</SSLInfo>

<LoadBalancer>

<Server name="testserver"/>

</LoadBalancer>

<Path>{mypath}</Path>

</HTTPTargetConnection>
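Conceptually, a message template replaces each name in curly braces with the value of the matching flow variable at runtime. The following sketch imitates that substitution; the function `expand_template`, the sample value `/v2/orders`, and the choice to leave unknown names untouched are all assumptions of this example, not a description of how Apigee resolves missing variables.

```python
import re


def expand_template(template, variables):
    """Replace each {name} with variables[name]; leave unknown names untouched."""
    def substitute(match):
        name = match.group(1)
        return str(variables.get(name, match.group(0)))
    return re.sub(r"\{([^{}]+)\}", substitute, template)


resolved = expand_template("{mypath}", {"mypath": "/v2/orders"})
print(resolved)
```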

Configuring a TargetServer for TLS/SSL

If you are using a TargetServer to define the backend service, and the backend service requires the connection to use the HTTPS protocol, then you must enable TLS/SSL in the TargetServer definition. This is necessary because the host tag does not let you specify the connection protocol. Shown below is the TargetServer definition for one-way TLS/SSL where Apigee makes HTTPS requests to the backend service:

{

"name": "target1",

"host": "mocktarget.apigee.net",

"protocol": "http",

"port": "443",

"isEnabled": "true",

"sSLInfo": {

"enabled": "true"

}

}

If the backend service requires two-way, or mutual, TLS/SSL, then you configure the TargetServer by using the same TLS/SSL configuration settings as TargetEndpoints:

{

"name": "TargetServer 1",

"host": "www.example.com",

"protocol": "http",

"port": "443",

"isEnabled": "true",

"sSLInfo": {

"enabled": "true",

"clientAuthEnabled": "true",

"keyStore": "keystore-name",

"keyAlias": "keystore-alias",

"trustStore": "truststore-name",

"ignoreValidationErrors": "false",

"ciphers": []

}

}

To create a TargetServer with SSL using an API call:

curl "https://apigee.googleapis.com/v1/organizations/$ORG/environments/$ENV/targetservers" \

-X POST \

-H "Content-Type:application/json" \

-H "Authorization: Bearer $TOKEN" \

-d '

{

"name": "TargetServer 1",

"host": "www.example.com",

"protocol": "http",

"port": 443,

"isEnabled": true,

"sSLInfo":

{

"enabled":true,

"clientAuthEnabled":true,

"keyStore":"keystore-name",

"keyAlias":"keystore-alias",

"ciphers":[],

"protocols":[],

"ignoreValidationErrors":false,

"trustStore":"truststore-name"

}

}'

Health monitoring

Health monitoring enables you to enhance load balancing configurations by actively polling the backend service URLs defined in the TargetServer configurations. With health monitoring enabled, TargetServers that are unreachable or return an error response are marked unhealthy. A failed TargetServer is automatically put back into rotation when the HealthMonitor determines that the TargetServer is active. No proxy re-deployments are required.

The HealthMonitor acts as a simple client that invokes a backend service over TCP or HTTP:

- A TCP client simply ensures that a socket can be opened.

- You configure the HTTP client to submit a valid HTTP request to the backend service. You can define HTTP GET, PUT, POST, or DELETE operations. The response of the HTTP monitor call must match the configured settings in the <SuccessResponse> block.

Successes and failures

When you enable health monitoring, Apigee begins sending health checks to your target server. A health check is a request sent to the TargetServer that determines whether the TargetServer is healthy or not.

A health check can have one of two possible results:

- Success: The TargetServer is considered healthy when a successful health check occurs. This is typically the result of one or more of the following:

  - TargetServer accepts a new connection to the specified port, responds to a request on that port, and then closes the port within the specified timeframe. The response from the TargetServer contains Connection: close.

  - TargetServer responds to a health check request with a 200 (OK) or other HTTP status code that you determine is acceptable.

  - TargetServer responds to a health check request with a message body that matches the expected message body.

  When Apigee determines that a server is healthy, Apigee continues or resumes sending requests to it.

- Failure: The TargetServer can fail a health check in different ways, depending on the type of check. A failure can be logged when the TargetServer:

  - Refuses a connection from Apigee to the health check port.

  - Does not respond to a health check request within a specified period of time.

  - Returns an unexpected HTTP status code.

  - Responds with a message body that does not match the expected message body.

  When a TargetServer fails a health check, Apigee increments that server's failure count. If the number of failures for that server meets or exceeds a predefined threshold (<MaxFailures>), Apigee stops sending requests to that server.

Enabling a HealthMonitor

To create a HealthMonitor, you add the <HealthMonitor> element to the TargetEndpoint's HTTPConnection configuration for a proxy. You cannot do this in the UI. Instead, you create a proxy configuration and upload it as a ZIP file to Apigee. A proxy configuration is a structured description of all aspects of an API proxy. Proxy configurations consist of XML files in a pre-defined directory structure. For more information, see API Proxy Configuration Reference.

A simple HealthMonitor defines an IntervalInSec combined with either a TCPMonitor or an HTTPMonitor. The <MaxFailures> element specifies the maximum number of failed requests from the API proxy to the TargetServer that results in the request being redirected to another TargetServer. By default <MaxFailures> is 0, which means Apigee performs no corrective action. When configuring a health monitor, ensure that you set <MaxFailures> in the <HTTPTargetConnection> tag of the <TargetEndpoint> tag to a non-zero value.

TCPMonitor

The configuration below defines a HealthMonitor that polls each TargetServer by opening a connection on port 80 every five seconds. (Port is optional. If not specified, the TCPMonitor port is the TargetServer port.)

- If the connection fails or takes more than 10 seconds to connect, then the failure count increments by 1 for that TargetServer.

- If the connection succeeds, then the failure count for the TargetServer is reset to 0.

You can add a HealthMonitor as a child element of the TargetEndpoint's HTTPTargetConnection element, as shown below:

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Algorithm>RoundRobin</Algorithm>

<Server name="target1" />

<Server name="target2" />

<MaxFailures>5</MaxFailures>

</LoadBalancer>

<Path>/test</Path>

<HealthMonitor>

<IsEnabled>true</IsEnabled>

<IntervalInSec>5</IntervalInSec>

<TCPMonitor>

<ConnectTimeoutInSec>10</ConnectTimeoutInSec>

<Port>80</Port>

</TCPMonitor>

</HealthMonitor>

</HTTPTargetConnection>

</TargetEndpoint>
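What a TCP check does can be approximated with the Python standard library: try to open a socket within the connect timeout, then update the failure count. The function names below are hypothetical, and the demonstration probes a throwaway listener on the local machine rather than a real TargetServer.

```python
import socket


def tcp_check(host, port, connect_timeout_sec):
    """Return True if a TCP connection opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=connect_timeout_sec):
            return True
    except OSError:
        return False


def update_failure_count(failures, check_passed):
    """A failed check adds 1; a successful check resets the count to 0."""
    return 0 if check_passed else failures + 1


# Demonstrate against a throwaway listener on the local machine.
listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
listener.listen(1)
port = listener.getsockname()[1]

up = tcp_check("127.0.0.1", port, connect_timeout_sec=10)
listener.close()
down = tcp_check("127.0.0.1", port, connect_timeout_sec=10)
print(up, down)
```

A monitor would repeat this probe every IntervalInSec seconds and feed each result into the failure count.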

HealthMonitor with TCPMonitor configuration elements

The following table describes the TCPMonitor configuration elements:

| Name | Description | Default | Required? |
|---|---|---|---|
| IsEnabled | A boolean that enables or disables the HealthMonitor. | false | No |
| IntervalInSec | The time interval, in seconds, between each polling TCP request. | 0 | Yes |
| ConnectTimeoutInSec | Time in which connection to the TCP port must be established to be considered a success. Failure to connect in the specified interval counts as a failure, incrementing the load balancer's failure count for the TargetServer. | 0 | Yes |
| Port | Optional. The port on which the TCP connection will be established. If not specified, the TCPMonitor port is the TargetServer port. | 0 | No |

HTTPMonitor

A sample HealthMonitor that uses an HTTPMonitor will submit a GET request to the backend service once every five seconds. The sample below adds an HTTP Basic Authorization header to the request message. The Response configuration defines settings that will be compared against the actual response from the backend service. In the example below, the expected response is an HTTP response code 200 and a custom HTTP header ImOK whose value is YourOK. If the response does not match, then the request will be treated as a failure by the load balancer configuration.

The HTTPMonitor supports backend services configured to use HTTP and one-way HTTPS protocols. However, it does not support two-way HTTPS, also called two-way TLS/SSL, or self-signed certificates.

Note that all of the Request and Response settings in an HTTP monitor will be specific to the backend service that must be invoked.

<TargetEndpoint name="default">

<HTTPTargetConnection>

<LoadBalancer>

<Algorithm>RoundRobin</Algorithm>

<Server name="target1" />

<Server name="target2" />

<MaxFailures>5</MaxFailures>

</LoadBalancer>

<Path>/test</Path>

<HealthMonitor>

<IsEnabled>true</IsEnabled>

<IntervalInSec>5</IntervalInSec>

<HTTPMonitor>

<Request>

<ConnectTimeoutInSec>10</ConnectTimeoutInSec>

<SocketReadTimeoutInSec>30</SocketReadTimeoutInSec>

<Port>80</Port>

<Verb>GET</Verb>

<Path>/healthcheck</Path>

<Header name="Authorization">Basic 12e98yfw87etf</Header>

<IncludeHealthCheckIdHeader>true</IncludeHealthCheckIdHeader>

</Request>

<SuccessResponse>

<ResponseCode>200</ResponseCode>

<Header name="ImOK">YourOK</Header>

</SuccessResponse>

</HTTPMonitor>

</HealthMonitor>

</HTTPTargetConnection>

</TargetEndpoint>
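The comparison against the SuccessResponse settings can be sketched as a small predicate. The function name and the case-insensitive header-name comparison are assumptions of this example; the exact matching rules Apigee applies are not spelled out on this page.

```python
def matches_success_response(status, headers, expected_codes, expected_headers):
    """Return True when a health check response satisfies the expectations.

    Header names are compared case-insensitively, as HTTP header names are
    case-insensitive; header values must match exactly.
    """
    if expected_codes and status not in expected_codes:
        return False
    actual = {name.lower(): value for name, value in headers.items()}
    return all(
        actual.get(name.lower()) == value
        for name, value in expected_headers.items()
    )


ok = matches_success_response(200, {"ImOK": "YourOK"}, {200}, {"ImOK": "YourOK"})
bad_code = matches_success_response(503, {"ImOK": "YourOK"}, {200}, {"ImOK": "YourOK"})
bad_header = matches_success_response(200, {}, {200}, {"ImOK": "YourOK"})
print(ok, bad_code, bad_header)
```

Only the first response, with status 200 and the expected ImOK header, counts as a success in this sketch.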

<HTTPMonitor> configuration elements

The following table describes the top-level <HTTPMonitor> configuration elements:

| Name | Description | Default | Required? |
|---|---|---|---|
| IsEnabled | A boolean that enables or disables the HealthMonitor. | false | No |
| IntervalInSec | The time interval, in seconds, between each polling request. | 0 | Yes |

<HTTPMonitor>/<Request> configuration elements

Configuration options for the outbound request message sent by the HealthMonitor to the TargetServers in the rotation. Note that <Request> is a required element.

| Name | Description | Default | Required? |
|---|---|---|---|
| ConnectTimeoutInSec | Time, in seconds, in which the TCP connection handshake to the HTTP service must complete to be considered a success. Failure to connect in the specified interval counts as a failure, incrementing the LoadBalancer's failure count for the TargetServer. | 0 | No |
| SocketReadTimeoutInSec | Time, in seconds, in which data must be read from the HTTP service to be considered a success. Failure to read in the specified interval counts as a failure, incrementing the LoadBalancer's failure count for the TargetServer. | 0 | No |
| Port | The port on which the HTTP connection to the backend service will be established. | Target server port | No |
| Verb | The HTTP verb used for each polling HTTP request to the backend service. | N/A | No |
| Path | The path appended to the URL defined in the TargetServer. Use the Path element to configure a 'polling endpoint' on your HTTP service. Note that the Path element does not support variables. | N/A | No |
| IncludeHealthCheckIdHeader | Allows you to track the healthcheck requests on upstream systems. The IncludeHealthCheckIdHeader takes a Boolean value, and defaults to false. If you set it to true, then a header named X-Apigee-Healthcheck-Id is injected into the healthcheck request. The value of the header is dynamically assigned, and takes the form ORG/ENV/SERVER_UUID/N, where ORG is the organization name, ENV is the environment name, SERVER_UUID is a unique ID identifying the MP, and N is the number of milliseconds elapsed since January 1, 1970. Example resulting request header: X-Apigee-Healthcheck-Id: orgname/envname/E8C4D2EE-3A69-428A-8616-030ABDE864E0/1586802968123 | false | No |
| Payload | The HTTP body generated for each polling HTTP request. Note that this element is not required for GET requests. | N/A | No |
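On the backend side, the X-Apigee-Healthcheck-Id value can be split into its four parts for logging or filtering. This is a minimal sketch based on the ORG/ENV/SERVER_UUID/N form described in the table; the function name `parse_healthcheck_id` is hypothetical.

```python
from datetime import datetime, timezone


def parse_healthcheck_id(value):
    """Split an X-Apigee-Healthcheck-Id value into its four parts."""
    org, env, server_uuid, millis = value.split("/")
    # N is milliseconds since January 1, 1970, so convert to seconds first.
    sent_at = datetime.fromtimestamp(int(millis) / 1000, tz=timezone.utc)
    return {"org": org, "env": env, "server_uuid": server_uuid, "sent_at": sent_at}


parsed = parse_healthcheck_id(
    "orgname/envname/E8C4D2EE-3A69-428A-8616-030ABDE864E0/1586802968123"
)
print(parsed["org"], parsed["env"], parsed["sent_at"].isoformat())
```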

<HTTPMonitor>/<SuccessResponse> configuration elements

(Optional) Matching options for the inbound HTTP response message generated by the polled backend service. Responses that do not match increment the failure count by 1.

| Name | Description | Default | Required? |
|---|---|---|---|
| ResponseCode | The HTTP response code expected to be received from the polled TargetServer. A code different than the code specified results in a failure, and the count being incremented for the polled backend service. You can define multiple ResponseCode elements. | N/A | No |
| Headers | A list of one or more HTTP headers and values expected to be received from the polled backend service. Any HTTP headers or values on the response that are different from those specified result in a failure, and the count for the polled TargetServer is incremented by 1. You can define multiple Header elements. | N/A | No |