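This guide shows security practitioners how to onboard Google Cloud logs for use in security analytics. Security analytics helps your organization prevent, detect, and respond to threats such as malware, phishing, ransomware, and poorly configured assets.

This guide shows you how to do the following:

- Enable the logs to be analyzed.

- Route those logs to a single destination, depending on your choice of security analytics tool, such as Log Analytics, BigQuery, Google Security Operations, or a third-party security information and event management (SIEM) technology.

- Analyze those logs to audit your cloud usage and detect potential threats to your data and workloads, using sample queries from the Community Security Analytics (CSA) project.

The information in this guide is part of Google Cloud Autonomic Security Operations, which includes engineering-led transformation of detection and response practices, and security analytics to improve threat detection capabilities.

In this guide, logs provide the data source to be analyzed. However, you can apply the concepts from this guide to the analysis of other complementary security-related data from Google Cloud, such as security findings from Security Command Center. Security Command Center Premium provides a list of regularly updated managed detectors that identify threats, vulnerabilities, and misconfigurations in your systems in near real time. By analyzing these signals from Security Command Center and correlating them with the logs ingested in your security analytics tool as described in this guide, you can gain a broader view of potential security threats.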

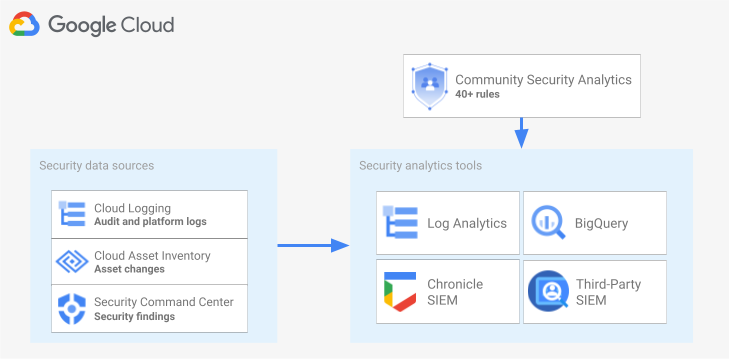

下圖顯示安全資料來源、安全分析工具和 CSA 查詢如何協同運作。

這張圖表開頭是下列安全性資料來源:Cloud Logging 的記錄、Cloud Asset Inventory 的資產變更,以及 Security Command Center 的安全性發現項目。接著,這張圖會顯示這些安全資料來源如何傳送至您選擇的安全分析工具:Cloud Logging 中的記錄檔分析、BigQuery、Google Security Operations 或第三方 SIEM。最後,圖表顯示如何使用 CSA 查詢和分析工具,分析彙整的安全資料。

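Security log analytics workflow

This section describes the steps to set up security log analytics in Google Cloud. The workflow consists of the three steps shown in the following diagram and described in the following paragraphs:

Enable logs: Google Cloud provides many security logs. Each log contains different information that can help answer specific security questions. Some logs, such as Admin Activity audit logs, are enabled by default. Others must be enabled manually because they incur additional ingestion costs in Cloud Logging. The first step in the workflow is therefore to prioritize the security logs that are most relevant to your security analysis needs and to enable those specific logs individually.

To help you evaluate logs in terms of the visibility and threat detection coverage that they provide, this guide includes a log scoping tool. The tool maps each log to the relevant threat tactics and techniques in the MITRE ATT&CK® Matrix for Enterprise. It also maps Event Threat Detection rules in Security Command Center to the logs on which they rely. You can use the log scoping tool to evaluate logs regardless of the analytics tool that you use.

Route logs: After you identify and enable the logs to be analyzed, the next step is to route and aggregate the logs from your organization, including any contained folders, projects, and billing accounts. How you route logs depends on the analytics tool that you use.

This guide describes common log routing destinations and shows you how to use a Cloud Logging aggregated sink to route organization-wide logs into a Cloud Logging log bucket or a BigQuery dataset, depending on whether you choose Log Analytics or BigQuery for analysis.

Analyze logs: After you route the logs to an analytics tool, the next step is to analyze them to identify potential security threats. How you analyze the logs depends on the analytics tool that you use. With Log Analytics or BigQuery, you analyze the logs by using SQL queries. With Google Security Operations, you analyze the logs by using YARA-L rules. With a third-party SIEM tool, you use the query language specified by that tool.

This guide provides SQL queries that you can use to analyze the logs in either Log Analytics or BigQuery. The queries come from the Community Security Analytics (CSA) project. CSA is an open-source set of foundational security analytics that provides a baseline of pre-built queries and rules that you can reuse to start analyzing your Google Cloud logs.

The following sections describe in detail how to set up and apply each step in the security log analytics workflow.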

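Enable logs

The process of enabling logs involves the following steps:

- Identify the logs you need by using the log scoping tool in this guide.

- Record the log filter that the log scoping tool generates. You use it later when you configure the log sink.

- Enable logging for each identified log type or Google Cloud service. Depending on the service, you might also have to enable the corresponding Data Access audit logs, as detailed later in this section.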

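Identify logs using the log scoping tool

To help you identify the logs that meet your security and compliance needs, you can use the log scoping tool shown in this section. The tool provides an interactive table that lists valuable security-relevant logs across Google Cloud, including Cloud Audit Logs, Access Transparency logs, network logs, and several platform logs. The tool maps each log type to the following areas:

- MITRE ATT&CK threat tactics and techniques that can be monitored with that log.

- CIS Google Cloud Computing Platform compliance violations.

- Event Threat Detection rules that rely on that log.

The log scoping tool also generates a log filter, which appears immediately below the table. As you identify the logs that you need, select them in the tool to automatically update the log filter.

The following short procedures explain how to use the log scoping tool:

- To select or remove a log in the log scoping tool, click the toggle next to the name of the log.

- To select or remove all the logs, click the toggle next to the "Log type" heading.

- To see which MITRE ATT&CK techniques can be monitored by each log type, click the icon next to the "MITRE ATT&CK tactics and techniques" heading.

Log scoping tool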

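Record the log filter

The log filter that the log scoping tool generates automatically contains all of the logs that you selected in the tool. You can use the filter as is, or you can refine it further to match your requirements. For example, you can include (or exclude) resources only in one or more specific projects. After you have a log filter that meets your logging requirements, save it for use when you route the logs. For instance, you can save the filter in a text editor, or save it in an environment variable as follows:

- In the "Auto-generated log filter" section below the tool, copy the code for the log filter.

- Optional: Edit the copied code to refine the filter.

In Cloud Shell, create a variable to save the log filter:

    export LOG_FILTER='LOG_FILTER'

Replace LOG_FILTER with the code for the log filter.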
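As a rough illustration of what ends up in that variable, the following Python sketch assembles a filter from a list of log IDs and quotes it for the shell. The log IDs shown and the helper name `build_log_filter` are assumptions made for this example. The filter that the log scoping tool generates remains the authoritative one.

```python
import shlex


def build_log_filter(log_ids):
    """OR together one log_id() clause per selected log (illustrative only)."""
    clauses = [f'log_id("{log_id}")' for log_id in log_ids]
    return " OR ".join(clauses)


# Hypothetical selection; replace with the logs you picked in the tool.
selected = [
    "cloudaudit.googleapis.com/activity",
    "cloudaudit.googleapis.com/data_access",
]

log_filter = build_log_filter(selected)

# shlex.quote wraps the filter in single quotes so the embedded double
# quotes survive when the line is pasted into Cloud Shell.
export_line = f"export LOG_FILTER={shlex.quote(log_filter)}"
print(export_line)
```

Quoting the whole filter in single quotes matters because the filter itself contains double quotes and parentheses that the shell would otherwise interpret.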

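Enable service-specific platform logs

Each platform log that you select in the log scoping tool must be enabled on a service-by-service basis, typically at the resource level. For example, Cloud DNS logs are enabled at the VPC network level. Likewise, VPC Flow Logs are enabled at the subnet level for all VMs in the subnet, and Firewall Rules Logging is enabled at the individual firewall rule level.

Each platform log has its own instructions for enabling logging. However, you can use the log scoping tool to quickly open the relevant instructions for each platform log.

To learn how to enable logging for a specific platform log, do the following:

- In the log scoping tool, locate the platform log that you want to enable.

- In the "Enabled by default" column, click the "Enable" link that corresponds to that log. The link takes you to detailed instructions on how to enable logging for that service.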

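Enable the Data Access audit logs

As the log scoping tool shows, Data Access audit logs from Cloud Audit Logs provide broad threat detection coverage. However, their volume can be quite large. Enabling them can therefore result in additional charges for ingesting, storing, exporting, and processing these logs. This section explains how to enable these logs and presents some best practices to help you balance value against cost.

Data Access audit logs, except for BigQuery, are disabled by default. To configure Data Access audit logs for Google Cloud services other than BigQuery, you must explicitly enable them, either by using the Google Cloud console or by using the Google Cloud CLI to edit Identity and Access Management (IAM) policy objects. When you enable Data Access audit logs, you can also configure which types of operations are recorded. There are three Data Access audit log types:

- ADMIN_READ: Records operations that read metadata or configuration information.

- DATA_READ: Records operations that read user-provided data.

- DATA_WRITE: Records operations that write user-provided data.

Note that you can't configure the recording of ADMIN_WRITE operations, which are operations that write metadata or configuration information. ADMIN_WRITE operations are included in Admin Activity audit logs from Cloud Audit Logs and therefore can't be disabled.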

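Manage the volume of Data Access audit logs

When you enable Data Access audit logs, the goal is to maximize their value in terms of security visibility while limiting their cost and management overhead. To help you achieve that goal, we recommend that you do the following to filter out low-value, high-volume logs:

- Prioritize relevant services, such as services that host sensitive workloads, keys, and data. For specific examples of services that you might want to prioritize, see "Example Data Access audit log configuration".

- Prioritize relevant projects, such as projects that host production workloads rather than projects that host developer and staging environments. To filter out all logs from a particular project, add the following expression to the log filter for your sink. Replace PROJECT_ID with the ID of the project whose logs you want to filter out:

| Project | Log filter expression |
|---|---|
| Exclude all logs from a given project | NOT logName =~ "^projects/PROJECT_ID" |

- Prioritize a subset of data access operations, such as ADMIN_READ, DATA_READ, or DATA_WRITE, to record a minimal set of operations. For example, some services like Cloud DNS write all three types of operations, but you can choose to enable logging for ADMIN_READ operations only. After you have configured one or more of these three types of data access operations, you might want to exclude specific operations that are particularly high volume. You can exclude these high-volume operations by modifying the sink's log filter. For example, suppose you decide to enable full Data Access audit logging, including DATA_READ operations on some critical storage services. To exclude specific high-traffic data read operations in this situation, add the following recommended log filter expressions to your sink's log filter:

| Service | Log filter expression |
|---|---|
| Exclude high-volume logs from Cloud Storage | NOT (resource.type="gcs_bucket" AND (protoPayload.methodName="storage.buckets.get" OR protoPayload.methodName="storage.buckets.list")) |
| Exclude high-volume logs from Cloud SQL | NOT (resource.type="cloudsql_database" AND protoPayload.request.cmd="select") |

- Prioritize relevant resources, such as resources that host your most sensitive workloads and data. You can classify your resources based on the value of the data that they process and their security risk, such as whether they are externally accessible. Although Data Access audit logs are enabled per service, you can filter out specific resources or resource types through the log filter.

- Exclude specific principals from having their data accesses recorded. For example, you can exempt your internal testing accounts from having their operations recorded. For more information, see "Set exemptions" in the Data Access audit logs documentation.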
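The exclusion expressions in this section are all appended to the sink's log filter with AND NOT. The following Python sketch shows one way to compose them. The helper name `add_exclusions`, the base filter, and the project ID `my-dev-project` are hypothetical values chosen for illustration.

```python
def add_exclusions(base_filter, exclusions):
    """Wrap the base filter and append one 'NOT (...)' clause per exclusion."""
    parts = [f"({base_filter})"]
    parts.extend(f"NOT ({condition})" for condition in exclusions)
    return " AND ".join(parts)


# Hypothetical base filter, standing in for the one saved from the scoping tool.
base = 'log_id("cloudaudit.googleapis.com/data_access")'

exclusions = [
    # Drop every log from a development project (project ID is made up).
    'logName =~ "^projects/my-dev-project"',
    # Drop high-volume Cloud Storage reads, as recommended above.
    'resource.type="gcs_bucket" AND '
    '(protoPayload.methodName="storage.buckets.get" OR '
    'protoPayload.methodName="storage.buckets.list")',
]

refined_filter = add_exclusions(base, exclusions)
print(refined_filter)
```

Parenthesizing the base filter first keeps its own OR clauses from binding to the exclusions that follow.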

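Example Data Access audit log configuration

The following table provides a baseline Data Access audit log configuration that you can use for Google Cloud projects to limit log volumes while gaining valuable security visibility:

| Tier | Services | Data Access audit log types | MITRE ATT&CK tactics |
|---|---|---|---|
| Authentication and authorization services | IAM, Identity-Aware Proxy (IAP)¹, Cloud KMS, Secret Manager, Resource Manager | ADMIN_READ, DATA_READ | Discovery, Credential Access, Privilege Escalation |
| Storage services | BigQuery (enabled by default), Cloud Storage¹ ² | DATA_READ, DATA_WRITE | Collection, Exfiltration |
| Infrastructure services | Compute Engine, Organization Policy | ADMIN_READ | Discovery |

¹ Enabling Data Access audit logs for IAP or Cloud Storage can generate large log volumes when there is high traffic to IAP-protected web resources or to Cloud Storage objects.

² Enabling Data Access audit logs for Cloud Storage might break the use of authenticated browser downloads for non-public objects. For more details and suggested workarounds, see the Cloud Storage troubleshooting guide.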

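In the example configuration, notice how services are grouped in tiers of sensitivity based on their underlying data, metadata, or configuration. These tiers demonstrate the following recommended granularity of Data Access audit logging:

- Authentication and authorization services: For this tier of services, we recommend auditing all data access operations. This level of auditing helps you monitor access to your sensitive keys, secrets, and IAM policies. Monitoring this access might help you detect MITRE ATT&CK tactics like Discovery, Credential Access, and Privilege Escalation.

- Storage services: For this tier of services, we recommend auditing data access operations that involve user-provided data. This level of auditing helps you monitor access to your valuable and sensitive data. Monitoring this access might help you detect MITRE ATT&CK tactics like Collection and Exfiltration against your data.

- Infrastructure services: For this tier of services, we recommend auditing data access operations that involve metadata or configuration information. This level of auditing helps you monitor for scanning of infrastructure configuration. Monitoring this access might help you detect MITRE ATT&CK tactics like Discovery against your workloads.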
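The tiers above can be turned into the `auditConfigs` section of an IAM policy, which is the object you edit when you enable Data Access audit logs with the Google Cloud CLI. The following Python sketch is one possible way to generate that section. The API service names (for example `cloudkms.googleapis.com`) are assumptions that you should verify for your environment, and BigQuery is omitted because its Data Access audit logs are enabled by default.

```python
import json

# Tier -> services and log types, mirroring the example table.
# Service names are assumed API identifiers, not taken from this guide.
TIERS = {
    "authentication_and_authorization": {
        "services": [
            "iam.googleapis.com",
            "iap.googleapis.com",
            "cloudkms.googleapis.com",
            "secretmanager.googleapis.com",
            "cloudresourcemanager.googleapis.com",
        ],
        "log_types": ["ADMIN_READ", "DATA_READ"],
    },
    "storage": {
        "services": ["storage.googleapis.com"],
        "log_types": ["DATA_READ", "DATA_WRITE"],
    },
    "infrastructure": {
        "services": ["compute.googleapis.com", "orgpolicy.googleapis.com"],
        "log_types": ["ADMIN_READ"],
    },
}


def build_audit_configs(tiers):
    """Return an IAM policy fragment with one auditConfig entry per service."""
    configs = []
    for tier in tiers.values():
        for service in tier["services"]:
            configs.append({
                "service": service,
                "auditLogConfigs": [{"logType": t} for t in tier["log_types"]],
            })
    return {"auditConfigs": configs}


policy_fragment = build_audit_configs(TIERS)
print(json.dumps(policy_fragment, indent=2))
```

The printed fragment would be merged into the existing policy for the project, folder, or organization rather than replacing it.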

路徑記錄

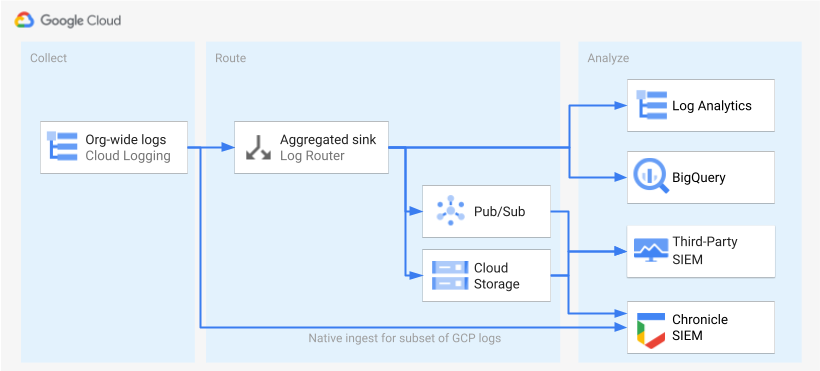

找出並啟用記錄後,下一步是將記錄傳送至單一目的地。轉送目的地、路徑和複雜度會因您使用的 Analytics 工具而異,如下圖所示。

下圖顯示下列路由選項:

如果您使用記錄檔分析,則需要匯總接收器,將整個 Google Cloud 機構的記錄檔匯總至單一 Cloud Logging bucket。

如果您使用 BigQuery,則需要匯總接收器,將整個 Google Cloud 機構的記錄檔匯總至單一 BigQuery 資料集。

如果您使用 Google Security Operations,且這個預先定義的記錄子集符合您的安全分析需求,您可以使用內建的 Google Security Operations 擷取功能,自動將這些記錄匯總到 Google Security Operations 帳戶。您也可以查看記錄範圍設定工具的「可直接匯出至 Google Security Operations」欄,瞭解這組預先定義的記錄。如要進一步瞭解如何匯出這些預先定義的記錄,請參閱「將記錄擷取至 Google Security Operations」。 Google Cloud

如果您使用 BigQuery 或第三方 SIEM,或是想將擴充記錄集匯出至 Google Security Operations,則下圖顯示啟用記錄和分析記錄之間需要額外步驟。這個額外步驟包括設定匯總接收器,適當轉送所選記錄。如果您使用 BigQuery,只要有這個接收器,就能將記錄檔傳送至 BigQuery。如果您使用第三方 SIEM,必須先讓接收器在 Pub/Sub 或 Cloud Storage 中彙整所選記錄,才能將記錄匯入分析工具。

本指南未涵蓋 Google Security Operations 和第三方 SIEM 的路由選項。不過,以下各節會詳細說明將記錄檔傳送至 Log Analytics 或 BigQuery 的步驟:

- 設定單一目的地

- 建立匯總記錄接收器。

- 授予接收器存取權。

- 設定目的地的讀取權限。

- 確認記錄檔已傳送至目的地。

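Set up a single destination

Log Analytics

Open the Google Cloud console in the Google Cloud project that you want to aggregate logs into.

In a Cloud Shell terminal, run the following gcloud command to create a log bucket:

    gcloud logging buckets create BUCKET_NAME \
      --location=BUCKET_LOCATION \
      --project=PROJECT_ID

Replace the following:

- PROJECT_ID: the ID of the Google Cloud project where the aggregated logs will be stored.

- BUCKET_NAME: the name of the new log bucket.

- BUCKET_LOCATION: the geographic location of the new log bucket. The supported locations are global, us, or eu. To learn more about these storage regions, see "Supported regions". If you don't specify a location, the global region is used, which means that the logs could be physically located in any region.

Verify that the bucket was created:

    gcloud logging buckets list --project=PROJECT_ID

(Optional) Set the retention period of the logs in the bucket. The following example extends the retention of logs stored in the bucket to 365 days:

    gcloud logging buckets update BUCKET_NAME \
      --location=BUCKET_LOCATION \
      --project=PROJECT_ID \
      --retention-days=365

Upgrade your new bucket to use Log Analytics.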

BigQuery

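Open the Google Cloud console in the Google Cloud project that you want to aggregate logs into.

In a Cloud Shell terminal, run the following bq mk command to create a dataset:

    bq --location=DATASET_LOCATION mk \
      --dataset \
      --default_partition_expiration=PARTITION_EXPIRATION \
      PROJECT_ID:DATASET_ID

Replace the following:

- PROJECT_ID: the ID of the Google Cloud project where the aggregated logs will be stored.

- DATASET_ID: the ID of the new BigQuery dataset.

- DATASET_LOCATION: the geographic location of the dataset. After a dataset is created, its location can't be changed.

- PARTITION_EXPIRATION: the default lifetime (in seconds) for the partitions in the partitioned tables that are created by the log sink. You configure the log sink in the next section. The log sink that you configure uses partitioned tables that are partitioned by day based on the log entry's timestamp. Partitions (including associated log entries) are deleted PARTITION_EXPIRATION seconds after the partition's date.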
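Because PARTITION_EXPIRATION is expressed in seconds while retention requirements are usually stated in days, a small conversion helps avoid mistakes. The following Python sketch shows the arithmetic. The helper name `partition_expiration_seconds` and the 365-day figure are illustrative choices, not values mandated by this guide.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds in one day


def partition_expiration_seconds(retention_days):
    """Convert a retention period in days to the seconds value bq expects."""
    if retention_days <= 0:
        raise ValueError("retention_days must be positive")
    return retention_days * SECONDS_PER_DAY


# Example: keep each daily partition for one year.
expiration = partition_expiration_seconds(365)
print(f"--default_partition_expiration={expiration}")
```

For a 365-day retention period, the value to pass is 31536000.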

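Create an aggregated log sink

You route your organization's logs to your destination by creating an aggregated sink at the organization level. To include in the sink all the logs that you selected in the log scoping tool, configure the sink with the log filter that the tool generated.

Log Analytics

In a Cloud Shell terminal, run the following gcloud command to create an aggregated sink at the organization level:

    gcloud logging sinks create SINK_NAME \
      logging.googleapis.com/projects/PROJECT_ID/locations/BUCKET_LOCATION/buckets/BUCKET_NAME \
      --log-filter="LOG_FILTER" \
      --organization=ORGANIZATION_ID \
      --include-children

Replace the following:

- SINK_NAME: the name of the sink that routes the logs.

- PROJECT_ID: the ID of the Google Cloud project where the aggregated logs will be stored.

- BUCKET_LOCATION: the location of the Logging bucket that you created for log storage.

- BUCKET_NAME: the name of the Logging bucket that you created for log storage.

- LOG_FILTER: the log filter that you saved from the log scoping tool.

- ORGANIZATION_ID: the resource ID for your organization.

The --include-children flag is important so that logs from all the Google Cloud projects within your organization are also included. For more information, see "Collate and route organization-level logs to supported destinations".

Verify that the sink was created:

    gcloud logging sinks list --organization=ORGANIZATION_ID

Get the name of the service account associated with the sink that you just created:

    gcloud logging sinks describe SINK_NAME --organization=ORGANIZATION_ID

The output looks similar to the following:

    writerIdentity: serviceAccount:p1234567890-12345@logging-o1234567890.iam.gserviceaccount.com

Copy the entire string for writerIdentity, starting with serviceAccount:. This identifier is the sink's service account. Until you grant this service account write access to the log bucket, log routing from this sink fails. You grant write access to the sink's writer identity in the next section.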

BigQuery

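In a Cloud Shell terminal, run the following gcloud command to create an aggregated sink at the organization level:

    gcloud logging sinks create SINK_NAME \
      bigquery.googleapis.com/projects/PROJECT_ID/datasets/DATASET_ID \
      --log-filter="LOG_FILTER" \
      --organization=ORGANIZATION_ID \
      --use-partitioned-tables \
      --include-children

Replace the following:

- SINK_NAME: the name of the sink that routes the logs.

- PROJECT_ID: the ID of the Google Cloud project that you want to aggregate the logs into.

- DATASET_ID: the ID of the BigQuery dataset that you created.

- LOG_FILTER: the log filter that you saved from the log scoping tool.

- ORGANIZATION_ID: the resource ID for your organization.

The --include-children flag is important so that logs from all the Google Cloud projects within your organization are also included. For more information, see "Collate and route organization-level logs to supported destinations".

The --use-partitioned-tables flag is important so that data is partitioned by day based on the log entry's timestamp field. Partitioning simplifies querying the data and reduces query costs by reducing the amount of data that queries scan. Another benefit of partitioned tables is that you can set a default partition expiration at the dataset level to meet your log retention requirements. You already set a default partition expiration when you created the dataset destination in the previous section. You can also choose to set a partition expiration at the individual table level, which gives you fine-grained data retention controls based on log type.

Verify that the sink was created:

    gcloud logging sinks list --organization=ORGANIZATION_ID

Get the name of the service account associated with the sink that you just created:

    gcloud logging sinks describe SINK_NAME --organization=ORGANIZATION_ID

The output looks similar to the following:

    writerIdentity: serviceAccount:p1234567890-12345@logging-o1234567890.iam.gserviceaccount.com

Copy the entire string for writerIdentity, starting with serviceAccount:. This identifier is the sink's service account. Until you grant this service account write access to the BigQuery dataset, log routing from this sink fails. You grant write access to the sink's writer identity in the next section.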
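The writer identity is copied with its serviceAccount: prefix, but the console fields used when granting access expect the bare email address. The following Python sketch shows that conversion. The helper name `principal_from_writer_identity` is hypothetical, and the identity string is the sample value from the output above.

```python
PREFIX = "serviceAccount:"


def principal_from_writer_identity(writer_identity):
    """Return the service account email without the 'serviceAccount:' prefix."""
    value = writer_identity.strip()
    if not value.startswith(PREFIX):
        raise ValueError(f"expected a value starting with {PREFIX!r}")
    return value[len(PREFIX):]


# Sample value taken from the example output of 'gcloud logging sinks describe'.
writer_identity = (
    "serviceAccount:p1234567890-12345@logging-o1234567890.iam.gserviceaccount.com"
)
principal = principal_from_writer_identity(writer_identity)
print(principal)
```

Rejecting values without the prefix guards against pasting an unrelated string, such as the sink name, into the access grant.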

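Grant access to the sink

After you create the log sink, you must grant the sink permission to write to its destination, whether that is the Logging bucket or the BigQuery dataset.

Log Analytics

To add the permissions to the sink's service account, follow these steps:

In the Google Cloud console, go to the "IAM" page.

Make sure that you've selected the destination Google Cloud project that contains the Logging bucket that you created for central log storage.

Click "Grant access".

In the "New principals" field, enter the sink's service account without the serviceAccount: prefix. Recall that this identity comes from the writerIdentity field that you retrieved in the previous section after you created the sink.

In the "Select a role" drop-down menu, select "Logs Bucket Writer".

Click "Add IAM condition" to restrict the service account's access to only the log bucket that you created.

Enter a "Title" and "Description" for the condition.

In the "Condition type" drop-down menu, select "Resource" > "Name".

In the "Operator" drop-down menu, select "Ends with".

In the "Value" field, enter the bucket's location and name as follows:

    locations/BUCKET_LOCATION/buckets/BUCKET_NAME

Click "Save" to add the condition.

Click "Save" to set the permissions.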
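The "Resource" > "Name" condition with the "Ends with" operator corresponds to an IAM condition expression written in Common Expression Language (CEL). The following Python sketch builds the expression that such a condition would typically contain. Treat the exact expression text as an assumption to compare against what the console generates, and the bucket values as made-up examples.

```python
def bucket_condition_expression(location, bucket_name):
    """Build a CEL expression limiting access to a single log bucket."""
    suffix = f"locations/{location}/buckets/{bucket_name}"
    return f'resource.name.endsWith("{suffix}")'


# Hypothetical bucket location and name.
expression = bucket_condition_expression("global", "central-security-logs")
print(expression)
```

Matching on the end of the resource name means that the same condition works regardless of which project ID precedes the bucket path.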

BigQuery

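To add the permissions to the sink's service account, follow these steps:

In the Google Cloud console, go to BigQuery.

Open the BigQuery dataset that you created for central log storage.

In the "Dataset info" tab, click the "Sharing" drop-down menu, and then click "Permissions".

In the "Dataset Permissions" side panel, click "Add principal".

In the "New principals" field, enter the sink's service account without the serviceAccount: prefix. Recall that this identity comes from the writerIdentity field that you retrieved in the previous section after you created the sink.

In the "Role" drop-down menu, select "BigQuery Data Editor".

Click "Save".

After you grant access to the sink, log entries begin to populate the sink destination: the Logging bucket or the BigQuery dataset.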

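Configure read access to the destination

Now that your log sink routes logs from your entire organization into a single destination, you can search across all of these logs. Use IAM permissions to manage permissions and grant access as needed.

Log Analytics

To grant access to view and query the logs in your new log bucket, follow these steps.

In the Google Cloud console, go to the "IAM" page.

Make sure that you've selected the Google Cloud project that you're using to aggregate the logs.

Click "Add".

In the "New principal" field, add your email account.

In the "Select a role" drop-down menu, select "Logs Views Accessor".

This role gives the newly added principal read access to all views for any bucket in the Google Cloud project. To limit a user's access, add a condition that lets the user read only from your new bucket.

Click "Add condition".

Enter a "Title" and "Description" for the condition.

In the "Condition type" drop-down menu, select "Resource" > "Name".

In the "Operator" drop-down menu, select "Ends with".

In the "Value" field, enter the bucket's location and name, and the default log view _AllLogs, as follows:

    locations/BUCKET_LOCATION/buckets/BUCKET_NAME/views/_AllLogs

Click "Save" to add the condition.

Click "Save" to set the permissions.

BigQuery

To grant access to view and query the logs in your BigQuery dataset, follow the steps in the "Granting access to a dataset" section of the BigQuery documentation.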

確認記錄檔已傳送至目的地

記錄檔分析

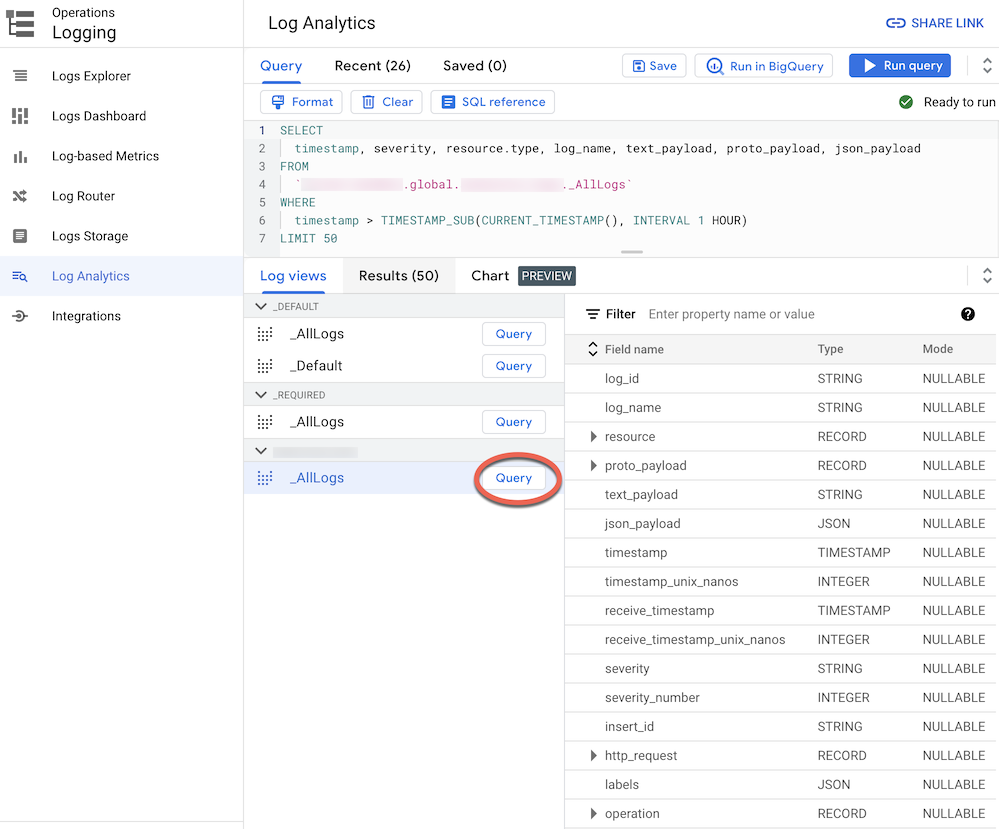

將記錄檔轉送至已升級為記錄檔分析的記錄檔 bucket 後,您就能透過單一記錄檔檢視畫面,查看及查詢所有記錄項目,且所有記錄檔類型都會採用統一的結構定義。請按照下列步驟確認記錄是否已正確路由。

在 Google Cloud 控制台中,前往「記錄檔分析」頁面:

請確認您已選取用於匯總記錄的 Google Cloud 專案。

按一下「記錄檢視畫面」分頁標籤。

如果尚未展開,請展開您建立的記錄檔儲存空間 (即

BUCKET_NAME) 下方的記錄檔檢視畫面。選取預設記錄檢視畫面

_AllLogs。現在您可以在右側面板檢查整個記錄檔結構定義,如下方螢幕截圖所示:

點按「

_AllLogs」旁的「查詢」。這會使用 SQL 範例查詢填入「查詢」編輯器,以擷取最近路由的記錄項目。按一下「執行查詢」,即可查看最近路由的記錄項目。

視貴機構專案中的活動量而定,您可能需要稍候幾分鐘,系統才會產生部分記錄,然後將這些記錄傳送至記錄值區。 Google Cloud

BigQuery

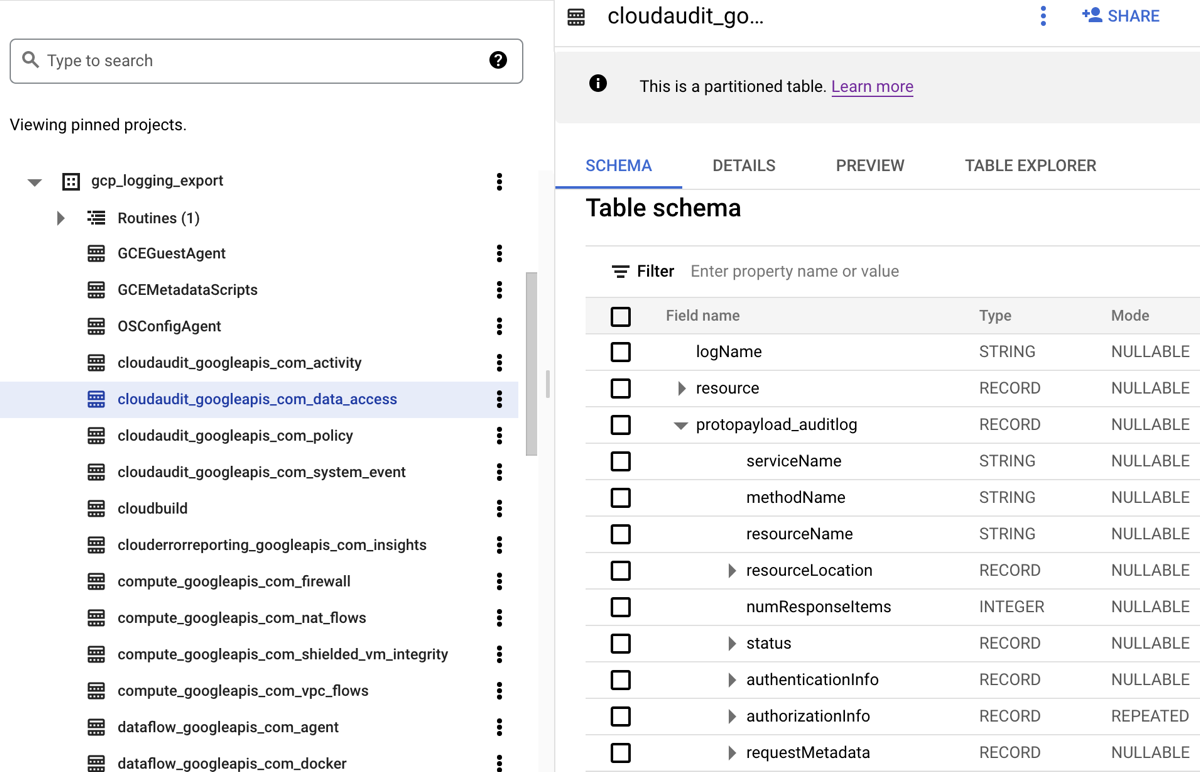

將記錄檔傳送至 BigQuery 資料集時,Cloud Logging 會建立 BigQuery 資料表來保留記錄項目,如下列螢幕截圖所示:

螢幕截圖顯示 Cloud Logging 如何根據記錄項目所屬記錄的名稱,為每個 BigQuery 表格命名。舉例來說,螢幕截圖中選取的 cloudaudit_googleapis_com_data_access 表格包含記錄 ID 為 cloudaudit.googleapis.com%2Fdata_access 的資料存取稽核記錄。除了根據對應的記錄項目命名,每個資料表也會根據每個記錄項目的時間戳記進行分區。

視貴機構專案中的活動量而定,系統可能需要幾分鐘才能產生部分記錄,然後將這些記錄傳送至 BigQuery 資料集。 Google Cloud
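To anticipate which table a given log lands in, you can approximate the naming pattern seen in the example above. The following Python sketch decodes the log ID and replaces every non-alphanumeric character with an underscore. This rule is inferred from the single example in this section and is an assumption. The actual Cloud Logging naming rules might differ for other log IDs.

```python
import re
from urllib.parse import unquote


def table_name_for_log_id(log_id):
    """Approximate the BigQuery table name for a URL-encoded log ID."""
    decoded = unquote(log_id)  # turns %2F back into "/"
    return re.sub(r"[^A-Za-z0-9]", "_", decoded)


table_name = table_name_for_log_id("cloudaudit.googleapis.com%2Fdata_access")
print(table_name)
```

For the Data Access audit log ID used in this section, the sketch yields cloudaudit_googleapis_com_data_access, which matches the table shown in the screenshot.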

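Analyze logs

You can run a broad range of queries against your audit and platform logs. The following list provides a set of sample security questions that you might want to ask of your own logs. For each question in this list, there are two versions of the corresponding CSA query: one for use with Log Analytics and one for use with BigQuery. Use the query version that matches the sink destination that you previously set up.

Log Analytics

Before using any of the following SQL queries, replace MY_PROJECT_ID with the ID of the Google Cloud project where you created the log bucket (that is, PROJECT_ID), and replace MY_DATASET_ID with the region and name of that log bucket (that is, BUCKET_LOCATION.BUCKET_NAME).

BigQuery

Before using any of the following SQL queries, replace MY_PROJECT_ID with the ID of the Google Cloud project where you created the BigQuery dataset (that is, PROJECT_ID), and replace MY_DATASET_ID with the name of that dataset (that is, DATASET_ID).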
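The placeholder substitution can be scripted so that the same query text is reused across environments. The following Python sketch fills in both placeholders. The query template, the project ID `my-logs-project`, and the bucket and dataset names are hypothetical stand-ins, not actual CSA queries.

```python
def fill_placeholders(query, project_id, dataset_id):
    """Replace the MY_PROJECT_ID and MY_DATASET_ID placeholders in a query."""
    return (
        query.replace("MY_PROJECT_ID", project_id)
             .replace("MY_DATASET_ID", dataset_id)
    )


# Hypothetical template that only illustrates where the placeholders sit.
template = "SELECT timestamp FROM `MY_PROJECT_ID.MY_DATASET_ID._AllLogs` LIMIT 10"

# Log Analytics: the dataset part is BUCKET_LOCATION.BUCKET_NAME.
log_analytics_query = fill_placeholders(
    template, "my-logs-project", "global.central-security-logs"
)

# BigQuery: the dataset part is simply DATASET_ID.
bigquery_query = fill_placeholders(
    "SELECT 1 FROM `MY_PROJECT_ID.MY_DATASET_ID.some_table`",
    "my-logs-project",
    "central_logs",
)
print(log_analytics_query)
print(bigquery_query)
```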

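Login and access questions

These sample queries perform analysis to detect suspicious login attempts or initial access attempts to your Google Cloud environment.

Any suspicious login attempt flagged by Google Workspace?

By searching Cloud Identity logs that are part of Google Workspace Login Audit, the following query detects suspicious login attempts flagged by Google Workspace. Such login attempts might come from the Google Cloud console, the Admin console, or the gcloud CLI.

Log Analytics

BigQuery

Any excessive login failures from any user identity?

By searching Cloud Identity logs that are part of Google Workspace Login Audit, the following query detects users who have had three or more successive login failures within the last 24 hours.

Log Analytics

BigQuery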
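To make the "three or more successive login failures within the last 24 hours" criterion concrete, the following Python sketch applies the same idea to an in-memory list of login events. The event tuple shape, the function name, and the sample users are assumptions for illustration. The CSA SQL query remains the reference implementation.

```python
from datetime import datetime, timedelta


def users_with_consecutive_failures(events, now, threshold=3, window_hours=24):
    """Return users with at least `threshold` back-to-back failed logins.

    events: iterable of (timestamp, user, succeeded) tuples (assumed shape).
    """
    window_start = now - timedelta(hours=window_hours)
    current_run = {}  # user -> length of the failure streak in progress
    longest_run = {}  # user -> longest failure streak seen in the window
    for ts, user, succeeded in sorted(events, key=lambda e: e[0]):
        if ts < window_start or ts > now:
            continue  # ignore events outside the 24-hour window
        if succeeded:
            current_run[user] = 0  # a successful login breaks the streak
        else:
            current_run[user] = current_run.get(user, 0) + 1
            longest_run[user] = max(longest_run.get(user, 0), current_run[user])
    return sorted(u for u, run in longest_run.items() if run >= threshold)


now = datetime(2024, 6, 1, 12, 0)
events = [
    (now - timedelta(hours=5), "alice@example.com", False),
    (now - timedelta(hours=4), "alice@example.com", False),
    (now - timedelta(hours=3), "alice@example.com", False),
    (now - timedelta(hours=6), "bob@example.com", False),
    (now - timedelta(hours=5), "bob@example.com", True),
    (now - timedelta(hours=2), "bob@example.com", False),
    (now - timedelta(hours=1), "bob@example.com", False),
    (now - timedelta(hours=30), "carol@example.com", False),
    (now - timedelta(hours=29), "carol@example.com", False),
    (now - timedelta(hours=28), "carol@example.com", False),
]
flagged = users_with_consecutive_failures(events, now)
print(flagged)
```

In this sample data, only the first user is flagged: the second user's streak is interrupted by a successful login, and the third user's failures fall outside the window.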

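Any access attempts violating VPC Service Controls?

By analyzing Policy Denied audit logs from Cloud Audit Logs, the following query detects access attempts blocked by VPC Service Controls. Any query results might indicate potential malicious activity, such as access attempts from unauthorized networks using stolen credentials.

Log Analytics

BigQuery

Any access attempts violating IAP access controls?

By analyzing external Application Load Balancer logs, the following query detects access attempts blocked by IAP. Any query results might indicate an initial access attempt or a vulnerability exploit attempt.

Log Analytics

BigQuery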

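Permission changes questions

These sample queries perform analysis over administrator activity that changes permissions, including changes in IAM policies, groups and group memberships, service accounts, and any associated keys. Such permission changes might provide a high level of access to sensitive data or environments.

Any user added to highly-privileged groups?

By analyzing Google Workspace Admin Audit logs, the following query detects users who have been added to any of the highly-privileged groups listed in the query. You use the regular expression in the query to define which groups (such as admin@example.com or prod@example.com) to monitor. Any query results might indicate a malicious or accidental privilege escalation.

Log Analytics

BigQuery

Any permissions granted over a service account?

By analyzing Admin Activity audit logs from Cloud Audit Logs, the following query detects any permissions that have been granted to any principal over a service account. Examples include permissions to impersonate that service account or to create service account keys. Any query results might indicate an instance of privilege escalation or a risk of credential leakage.

Log Analytics

BigQuery

Any service accounts or keys created by a non-approved identity?

By analyzing Admin Activity audit logs, the following query detects any service accounts or keys that have been manually created by a user. For example, you might follow a best practice of allowing service accounts to be created only by an approved service account as part of an automated workflow. Any service account created outside of that workflow is therefore considered non-compliant and possibly malicious.

Log Analytics

BigQuery

Any user added to (or removed from) a sensitive IAM policy?

By searching Admin Activity audit logs, the following query detects any user or group access change for an IAP-secured resource, such as a Compute Engine backend service. The query searches all IAM policy updates for IAP resources that involve the IAM role roles/iap.httpsResourceAccessor. This role provides permissions to access the HTTPS resource or the backend service. Any query results might indicate attempts to bypass the defenses of a backend service that might be exposed to the internet.

Log Analytics

BigQuery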

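Provisioning activity questions

These sample queries perform analysis to detect suspicious or anomalous admin activity, such as provisioning and configuring resources.

Any changes made to logging settings?

By searching Admin Activity audit logs, the following query detects any change made to logging settings. Monitoring logging settings helps you detect accidental or malicious disabling of audit logs and similar defense evasion techniques.

Log Analytics

BigQuery

Any VPC Flow Logs actively disabled?

By searching Admin Activity audit logs, the following query detects any subnet whose VPC Flow Logs were actively disabled. Monitoring VPC Flow Logs settings helps you detect accidental or malicious disabling of VPC Flow Logs and similar defense evasion techniques.

Log Analytics

BigQuery

Any unusually high number of firewall rules modified in the past week?

By searching Admin Activity audit logs, the following query detects any unusually high number of firewall rule changes on any given day in the past week. To determine whether there is an outlier, the query performs statistical analysis over the daily counts of firewall rule changes. The mean and standard deviation are computed for each day by looking back at the preceding daily counts with a lookback window of 90 days. A day is considered an outlier when its count is more than two standard deviations above the mean. You can configure the query, including the standard deviation factor and the lookback window, to fit your cloud provisioning activity profile and to minimize false positives.

Log Analytics

BigQuery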
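The outlier rule described for firewall rule changes (a daily count more than two standard deviations above the mean of the lookback window) can be sketched outside SQL as follows. This Python example is a simplified illustration under stated assumptions: it uses one shared lookback window for all recent days, the sample standard deviation, and made-up daily counts. It is not the CSA query itself.

```python
from statistics import mean, stdev


def find_outlier_days(lookback_counts, recent_counts, stddev_factor=2.0):
    """Return the recent days whose count exceeds mean + factor * stddev.

    lookback_counts: daily counts from the lookback window (for example, 90 days).
    recent_counts: dict of day label -> count for the days being checked.
    """
    threshold = mean(lookback_counts) + stddev_factor * stdev(lookback_counts)
    return [day for day, count in recent_counts.items() if count > threshold]


# Made-up history: 45 days with 5 changes and 45 days with 7 changes.
lookback = [5] * 45 + [7] * 45

# Made-up counts for three days of the past week.
recent = {"2024-05-01": 6, "2024-05-02": 9, "2024-05-03": 8}

outliers = find_outlier_days(lookback, recent)
print(outliers)
```

Raising `stddev_factor` or lengthening the lookback window makes the check less sensitive, which is the same tuning knob the text describes for reducing false positives.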

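Any VMs deleted in the past week?

By searching Admin Activity audit logs, the following query lists any Compute Engine instances deleted in the past week. This query can help you audit resource deletions and detect potential malicious activity.

Log Analytics

BigQuery

Workload usage questions

These sample queries perform analysis to understand who and what is consuming your cloud workloads and APIs, and to help you detect potential malicious behavior internally or externally.

Any unusually high API usage by any user identity in the past week?

By analyzing all Cloud Audit Logs, the following query detects unusually high API usage by any user identity on any given day in the past week. Such unusually high usage might indicate API abuse, an insider threat, or leaked credentials. To determine whether there is an outlier, the query performs statistical analysis over the daily count of actions per principal. The mean and standard deviation are computed for each day and for each principal by looking back at the preceding daily counts with a lookback window of 60 days. A day is considered an outlier when the daily count for a user is more than three standard deviations above that user's mean. You can configure the query, including the standard deviation factor and the lookback window, to fit your cloud provisioning activity profile and to minimize false positives.

Log Analytics

BigQuery

What is the autoscaling usage per day in the past month?

By analyzing Admin Activity audit logs, the following query reports the autoscaling usage by day for the last month. You can use this query to identify patterns or anomalies that warrant further security investigation.

Log Analytics

BigQuery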

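Data access questions

These sample queries perform analysis to understand who is accessing or modifying data in Google Cloud.

Which users most frequently accessed data in the past week?

The following query uses the Data Access audit logs to find the user identities that most frequently accessed BigQuery table data over the past week.

Log Analytics

BigQuery

Which users accessed the data in the "accounts" table last month?

The following query uses the Data Access audit logs to find the user identities that most frequently queried a given accounts table over the past month. Besides the MY_DATASET_ID and MY_PROJECT_ID placeholders for your BigQuery export destination, the query uses the DATASET_ID and PROJECT_ID placeholders. Replace the DATASET_ID and PROJECT_ID placeholders to specify the target table whose access is being analyzed, such as the accounts table in this example.

Log Analytics

BigQuery

What tables are most frequently accessed and by whom?

The following query uses the Data Access audit logs to find the BigQuery tables whose data was most frequently read and modified over the past month. It displays the associated user identity along with a breakdown of the total number of times data was read versus modified.

Log Analytics

BigQuery

What are the top 10 queries against BigQuery in the past week?

The following query uses the Data Access audit logs to find the most common queries over the past week. It also lists the corresponding users and the referenced tables.

Log Analytics

BigQuery

What are the most common actions recorded in the data access log over the past month?

The following query uses all logs from Cloud Audit Logs to find the 100 most frequent actions recorded over the past month.

Log Analytics

BigQuery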

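Network security questions

These sample queries perform analysis over your network activity in Google Cloud.

Any connections from a new IP address to a specific subnetwork?

By analyzing VPC Flow Logs, the following query detects connections from any new source IP address to a given subnet. In this example, a source IP address is considered new if it was seen for the first time in the last 24 hours over a lookback window of 60 days. You might want to use and tune this query on a subnet that is in scope for a particular compliance requirement such as PCI.

Log Analytics

BigQuery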
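The definition of a new source IP address (first seen in the last 24 hours within a 60-day lookback window) can be illustrated with a short Python sketch over in-memory connection records. The record shape, the function name, and the sample addresses are assumptions for this example. The CSA query applies the same idea to VPC Flow Logs in SQL.

```python
from datetime import datetime, timedelta


def find_new_source_ips(connections, now, lookback_days=60, new_window_hours=24):
    """Return source IPs first seen within the last `new_window_hours`.

    connections: iterable of (timestamp, source_ip) tuples (assumed shape).
    Records older than the lookback window are ignored entirely.
    """
    lookback_start = now - timedelta(days=lookback_days)
    new_window_start = now - timedelta(hours=new_window_hours)
    first_seen = {}  # source_ip -> earliest timestamp inside the lookback window
    for ts, ip in connections:
        if lookback_start <= ts <= now:
            if ip not in first_seen or ts < first_seen[ip]:
                first_seen[ip] = ts
    return sorted(ip for ip, ts in first_seen.items() if ts >= new_window_start)


now = datetime(2024, 6, 1, 12, 0)
connections = [
    (now - timedelta(days=30), "10.0.0.5"),      # known address
    (now - timedelta(hours=2), "10.0.0.5"),
    (now - timedelta(hours=3), "203.0.113.7"),   # first seen today
    (now - timedelta(days=70), "198.51.100.9"),  # outside the lookback window
    (now - timedelta(hours=1), "198.51.100.9"),
]
new_ips = find_new_source_ips(connections, now)
print(new_ips)
```

Note that an address last seen before the lookback window counts as new again, which follows from bounding the history at 60 days.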

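Any connections blocked by Google Cloud Armor?

The following query helps detect potential exploit attempts by analyzing external Application Load Balancer logs to find any connection blocked by the security policy configured in Google Cloud Armor. This query assumes that you have a Google Cloud Armor security policy configured on your external Application Load Balancer. It also assumes that you have enabled external Application Load Balancer logging as described in the instructions provided by the "Enable" link in the log scoping tool.

Log Analytics

BigQuery

Any high-severity virus or malware detected by Cloud IDS?

By searching Cloud IDS Threat Logs, the following query shows any high-severity virus or malware detected by Cloud IDS. This query assumes that you have a Cloud IDS endpoint configured.

Log Analytics

BigQuery

What are the top Cloud DNS queried domains from your VPC network?

The following query lists the top 10 Cloud DNS queried domains from your VPC networks over the last 60 days. This query assumes that you have enabled Cloud DNS logging for your VPC networks as described in the instructions provided by the "Enable" link in the log scoping tool.

Log Analytics

BigQuery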

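What's next

- Ingest Google Cloud logs to Google Security Operations.

- Explore reference architectures, diagrams, and best practices about Google Cloud. Take a look at our Cloud Architecture Center.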