This tutorial shows you how to set up, configure, and use Binary Authorization for Google Kubernetes Engine (GKE). Binary authorization is the process of creating attestations on container images for the purpose of verifying that certain criteria are met before you can deploy the images to GKE.

For example, Binary Authorization can verify that an app passed its unit tests or that an app was built using a specific set of systems. For more information, see Software Delivery Shield overview.

This tutorial is intended for practitioners who want to better understand container vulnerability scanning and binary authorization, as well as their implementation and application in a CI/CD pipeline.

This tutorial assumes you are familiar with the following topics and technologies:

- Continuous integration and continuous deployment

- Common vulnerabilities and exposures (CVE) vulnerability scanning

- GKE

- Artifact Registry

- Cloud Build

- Cloud Key Management Service (Cloud KMS)

Objectives

- Deploy GKE clusters for staging and production.

- Create multiple attestors and attestations.

- Deploy a CI/CD pipeline using Cloud Build.

- Test the deployment pipeline.

- Develop a break-glass process.

Costs

In this document, you use the following billable components of Google Cloud:

To generate a cost estimate based on your projected usage, use the pricing calculator.

When you finish the tasks that are described in this document, you can avoid continued billing by deleting the resources that you created. For more information, see Clean up.

Before you begin

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

- Make sure that billing is enabled for your Google Cloud project.

- Enable the Binary Authorization, Cloud Build, Cloud KMS, GKE, Artifact Registry, Artifact Analysis, Resource Manager, and Cloud Source Repositories APIs.

- In the Google Cloud console, activate Cloud Shell.

  At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

All commands in this tutorial are run in Cloud Shell.

Architecture of the CI/CD pipeline

An important aspect of the software development lifecycle (SDLC) is ensuring and enforcing that app deployments follow your organization's approved processes. One method for establishing these checks and balances is with Binary Authorization on GKE. First, Binary Authorization attaches notes to container images. Then, GKE verifies that the required notes are present before you can deploy the app.

These notes, or attestations, make statements about the image. Attestations are completely configurable, but here are a few common examples:

- The app passed unit tests.

- The app was verified by the Quality Assurance (QA) team.

- The app was scanned for vulnerabilities and none were found.

The following diagram depicts an SDLC where a single attestation is applied after vulnerability scanning finishes with no known vulnerabilities.

In this tutorial, you create a CI/CD pipeline using Cloud Source Repositories, Cloud Build, Artifact Registry, and GKE. The following diagram illustrates the CI/CD pipeline.

This CI/CD pipeline consists of the following steps:

1. Builds a container image with app source code.

2. Pushes the container image into Artifact Registry.

3. Artifact Analysis scans the container image for known security vulnerabilities, or CVEs.

4. If the image contains no CVEs with a severity score greater than five, the image is attested as having no critical CVEs and is automatically deployed to staging. A score greater than five indicates a medium to critical vulnerability, so the image is neither attested nor deployed.

5. A QA team inspects the app in the staging cluster. If it passes their requirements, they apply a manual attestation that the container image is of sufficient quality for deployment to production. The production manifests are updated and the app is deployed to the production GKE cluster.

The GKE clusters are configured to examine the container images for attestations and reject any deployments that don't have the required attestations. The staging GKE cluster only requires the vulnerability scan attestation, but the production GKE cluster requires both a vulnerability scan and QA attestation.

In this tutorial, you introduce failures into the CI/CD pipeline to test and verify this enforcement. Finally, you implement a break-glass procedure for bypassing these deployment checks in GKE in case of an emergency.
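The severity gate described above (attest only when no CVE scores higher than five) can be sketched as a small shell check. This is a minimal illustration, not the logic of the attestor image used later in the tutorial: the function name `cvss_within_threshold` and its arguments are hypothetical.

```shell
# Hypothetical helper: succeeds when the highest CVSS score found
# does not exceed the configured threshold.
# $1 = highest score reported for the image, $2 = threshold
cvss_within_threshold() {
  # awk performs the decimal comparison; shell arithmetic is integer-only.
  awk -v score="$1" -v max="$2" 'BEGIN { exit !(score + 0 <= max + 0) }'
}

# Example: a score of 4.9 passes a threshold of 5, a score of 7.5 does not.
if cvss_within_threshold 4.9 5; then
  echo "within threshold: create the attestation"
fi
if ! cvss_within_threshold 7.5 5; then
  echo "above threshold: fail the build, no attestation"
fi
```

A score equal to the threshold passes here because the text only rejects scores greater than five.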

Setting up your environment

This tutorial uses the following environment variables. You can change these values to match your requirements, but all steps in the tutorial assume these environment variables exist and contain a valid value.

In Cloud Shell, set the Google Cloud project where you deploy and manage all resources used in this tutorial:

    export PROJECT_ID="${DEVSHELL_PROJECT_ID}"
    gcloud config set project ${PROJECT_ID}

Set the region in which you deploy these resources:

    export REGION="us-central1"

The GKE cluster and the Cloud KMS keys reside in this region. In this tutorial, the region is us-central1. For more information about regions, see Geography and regions.

Set the Cloud Build project number:

    export PROJECT_NUMBER="$(gcloud projects describe "${PROJECT_ID}" \
      --format='value(projectNumber)')"

Set the Cloud Build service account email:

    export CLOUD_BUILD_SA_EMAIL="${PROJECT_NUMBER}@cloudbuild.gserviceaccount.com"
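Because every later step assumes these four variables exist and are non-empty, it can help to check them before continuing, especially after a Cloud Shell session restarts. The following is a minimal sketch; `require_vars` is a hypothetical helper, not part of the Google Cloud CLI.

```shell
# Hypothetical helper: reports each named variable that is unset or empty.
# Succeeds only when all of them have a value.
require_vars() {
  missing=0
  for name in "$@"; do
    # Look up the value of the variable whose name is stored in $name.
    eval "value=\${${name}:-}"
    if [ -z "${value}" ]; then
      echo "missing: ${name}" >&2
      missing=1
    fi
  done
  [ "${missing}" -eq 0 ]
}

# Usage, with the variable names from this section:
#   require_vars PROJECT_ID REGION PROJECT_NUMBER CLOUD_BUILD_SA_EMAIL \
#     || echo "Set the missing variables before continuing."
```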

Creating GKE clusters

Create two GKE clusters and grant Identity and Access Management (IAM) permissions for Cloud Build to deploy apps to GKE. Creating GKE clusters can take a few minutes.

In Cloud Shell, create a GKE cluster for staging:

    gcloud container clusters create "staging-cluster" \
      --project "${PROJECT_ID}" \
      --machine-type "n1-standard-1" \
      --region "${REGION}" \
      --num-nodes "1" \
      --binauthz-evaluation-mode=PROJECT_SINGLETON_POLICY_ENFORCE

Create a GKE cluster for production:

    gcloud container clusters create "prod-cluster" \
      --project "${PROJECT_ID}" \
      --machine-type "n1-standard-1" \
      --region "${REGION}" \
      --num-nodes "1" \
      --binauthz-evaluation-mode=PROJECT_SINGLETON_POLICY_ENFORCE

Grant the Cloud Build service account permission to deploy to GKE:

    gcloud projects add-iam-policy-binding "${PROJECT_ID}" \
      --member "serviceAccount:${CLOUD_BUILD_SA_EMAIL}" \
      --role "roles/container.developer"

Creating signing keys

Create two Cloud KMS asymmetric keys for signing attestations.

In Cloud Shell, create a Cloud KMS key ring named binauthz:

    gcloud kms keyrings create "binauthz" \
      --project "${PROJECT_ID}" \
      --location "${REGION}"

Create an asymmetric Cloud KMS key named vulnz-signer, which is used to sign and verify vulnerability scan attestations:

    gcloud kms keys create "vulnz-signer" \
      --project "${PROJECT_ID}" \
      --location "${REGION}" \
      --keyring "binauthz" \
      --purpose "asymmetric-signing" \
      --default-algorithm "rsa-sign-pkcs1-4096-sha512"

Create an asymmetric Cloud KMS key named qa-signer to sign and verify QA attestations:

    gcloud kms keys create "qa-signer" \
      --project "${PROJECT_ID}" \
      --location "${REGION}" \
      --keyring "binauthz" \
      --purpose "asymmetric-signing" \
      --default-algorithm "rsa-sign-pkcs1-4096-sha512"

Configuring attestations

In this section, you create the notes that are attached to container images, grant the Cloud Build service account permission to view and attach those notes, and create the attestors using the keys from the previous steps.

Create the vulnerability scanner attestation

In Cloud Shell, create an Artifact Analysis note named vulnz-note:

    curl "https://containeranalysis.googleapis.com/v1/projects/${PROJECT_ID}/notes/?noteId=vulnz-note" \
      --request "POST" \
      --header "Content-Type: application/json" \
      --header "Authorization: Bearer $(gcloud auth print-access-token)" \
      --header "X-Goog-User-Project: ${PROJECT_ID}" \
      --data-binary @- <<EOF
        {
          "name": "projects/${PROJECT_ID}/notes/vulnz-note",
          "attestation": {
            "hint": {
              "human_readable_name": "Vulnerability scan note"
            }
          }
        }
    EOF

Grant the Cloud Build service account permission to view and attach the vulnz-note note to container images:

    curl "https://containeranalysis.googleapis.com/v1/projects/${PROJECT_ID}/notes/vulnz-note:setIamPolicy" \
      --request POST \
      --header "Content-Type: application/json" \
      --header "Authorization: Bearer $(gcloud auth print-access-token)" \
      --header "X-Goog-User-Project: ${PROJECT_ID}" \
      --data-binary @- <<EOF
        {
          "resource": "projects/${PROJECT_ID}/notes/vulnz-note",
          "policy": {
            "bindings": [
              {
                "role": "roles/containeranalysis.notes.occurrences.viewer",
                "members": [
                  "serviceAccount:${CLOUD_BUILD_SA_EMAIL}"
                ]
              },
              {
                "role": "roles/containeranalysis.notes.attacher",
                "members": [
                  "serviceAccount:${CLOUD_BUILD_SA_EMAIL}"
                ]
              }
            ]
          }
        }
    EOF

Create the vulnerability scan attestor:

    gcloud container binauthz attestors create "vulnz-attestor" \
      --project "${PROJECT_ID}" \
      --attestation-authority-note-project "${PROJECT_ID}" \
      --attestation-authority-note "vulnz-note" \
      --description "Vulnerability scan attestor"

Add the public key for the attestor's signing key:

    gcloud beta container binauthz attestors public-keys add \
      --project "${PROJECT_ID}" \
      --attestor "vulnz-attestor" \
      --keyversion "1" \
      --keyversion-key "vulnz-signer" \
      --keyversion-keyring "binauthz" \
      --keyversion-location "${REGION}" \
      --keyversion-project "${PROJECT_ID}"

Grant the Cloud Build service account permission to view attestations made by vulnz-attestor:

    gcloud container binauthz attestors add-iam-policy-binding "vulnz-attestor" \
      --project "${PROJECT_ID}" \
      --member "serviceAccount:${CLOUD_BUILD_SA_EMAIL}" \
      --role "roles/binaryauthorization.attestorsViewer"

Grant the Cloud Build service account permission to sign objects using the vulnz-signer key:

    gcloud kms keys add-iam-policy-binding "vulnz-signer" \
      --project "${PROJECT_ID}" \
      --location "${REGION}" \
      --keyring "binauthz" \
      --member "serviceAccount:${CLOUD_BUILD_SA_EMAIL}" \
      --role 'roles/cloudkms.signerVerifier'

Create the QA attestation

In Cloud Shell, create an Artifact Analysis note named qa-note:

    curl "https://containeranalysis.googleapis.com/v1/projects/${PROJECT_ID}/notes/?noteId=qa-note" \
      --request "POST" \
      --header "Content-Type: application/json" \
      --header "Authorization: Bearer $(gcloud auth print-access-token)" \
      --header "X-Goog-User-Project: ${PROJECT_ID}" \
      --data-binary @- <<EOF
        {
          "name": "projects/${PROJECT_ID}/notes/qa-note",
          "attestation": {
            "hint": {
              "human_readable_name": "QA note"
            }
          }
        }
    EOF

Grant the Cloud Build service account permission to view and attach the qa-note note to container images:

    curl "https://containeranalysis.googleapis.com/v1/projects/${PROJECT_ID}/notes/qa-note:setIamPolicy" \
      --request POST \
      --header "Content-Type: application/json" \
      --header "Authorization: Bearer $(gcloud auth print-access-token)" \
      --header "X-Goog-User-Project: ${PROJECT_ID}" \
      --data-binary @- <<EOF
        {
          "resource": "projects/${PROJECT_ID}/notes/qa-note",
          "policy": {
            "bindings": [
              {
                "role": "roles/containeranalysis.notes.occurrences.viewer",
                "members": [
                  "serviceAccount:${CLOUD_BUILD_SA_EMAIL}"
                ]
              },
              {
                "role": "roles/containeranalysis.notes.attacher",
                "members": [
                  "serviceAccount:${CLOUD_BUILD_SA_EMAIL}"
                ]
              }
            ]
          }
        }
    EOF

Create the QA attestor:

    gcloud container binauthz attestors create "qa-attestor" \
      --project "${PROJECT_ID}" \
      --attestation-authority-note-project "${PROJECT_ID}" \
      --attestation-authority-note "qa-note" \
      --description "QA attestor"

Add the public key for the attestor's signing key:

    gcloud beta container binauthz attestors public-keys add \
      --project "${PROJECT_ID}" \
      --attestor "qa-attestor" \
      --keyversion "1" \
      --keyversion-key "qa-signer" \
      --keyversion-keyring "binauthz" \
      --keyversion-location "${REGION}" \
      --keyversion-project "${PROJECT_ID}"

Grant the Cloud Build service account permission to view attestations made by qa-attestor:

    gcloud container binauthz attestors add-iam-policy-binding "qa-attestor" \
      --project "${PROJECT_ID}" \
      --member "serviceAccount:${CLOUD_BUILD_SA_EMAIL}" \
      --role "roles/binaryauthorization.attestorsViewer"

Grant your QA team permission to sign attestations:

    gcloud kms keys add-iam-policy-binding "qa-signer" \
      --project "${PROJECT_ID}" \
      --location "${REGION}" \
      --keyring "binauthz" \
      --member "group:qa-team@example.com" \
      --role 'roles/cloudkms.signerVerifier'

Setting the Binary Authorization policy

Even though you created the GKE clusters with --binauthz-evaluation-mode=PROJECT_SINGLETON_POLICY_ENFORCE, you must author a policy that instructs GKE on what attestations the binaries require to run in the cluster. Binary Authorization policies exist at the project level, but contain configuration for the cluster level.

The following policy alters the default policy in the following ways:

- Changes the default evaluationMode to ALWAYS_DENY. Only exempted images or images with the required attestations are admitted to run in the cluster.

- Enables globalPolicyEvaluationMode, which changes the default allowlist to only include system images provided by Google.

- Defines the following cluster admission rules:

  - staging-cluster requires attestations from vulnz-attestor.

  - prod-cluster requires attestations from vulnz-attestor and qa-attestor.

For more information on Binary Authorization policies, see Policy YAML reference.

In Cloud Shell, create a YAML file that describes the Binary Authorization policy for the Google Cloud project:

    cat > ./binauthz-policy.yaml <<EOF
    admissionWhitelistPatterns:
    - namePattern: docker.io/istio/*
    defaultAdmissionRule:
      enforcementMode: ENFORCED_BLOCK_AND_AUDIT_LOG
      evaluationMode: ALWAYS_DENY
    globalPolicyEvaluationMode: ENABLE
    clusterAdmissionRules:
      # Staging cluster
      ${REGION}.staging-cluster:
        evaluationMode: REQUIRE_ATTESTATION
        enforcementMode: ENFORCED_BLOCK_AND_AUDIT_LOG
        requireAttestationsBy:
        - projects/${PROJECT_ID}/attestors/vulnz-attestor

      # Production cluster
      ${REGION}.prod-cluster:
        evaluationMode: REQUIRE_ATTESTATION
        enforcementMode: ENFORCED_BLOCK_AND_AUDIT_LOG
        requireAttestationsBy:
        - projects/${PROJECT_ID}/attestors/vulnz-attestor
        - projects/${PROJECT_ID}/attestors/qa-attestor
    EOF

Upload the new policy to the Google Cloud project:

    gcloud container binauthz policy import ./binauthz-policy.yaml \
      --project "${PROJECT_ID}"
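To make the effect of the cluster admission rules concrete, the following sketch models the decision in plain shell: staging requires one attestor, production requires two, and any other cluster falls through to the ALWAYS_DENY default. It is a simplified illustration only. The functions `required_attestors` and `would_admit` are hypothetical, and the model ignores exempted images and the Google-provided system image allowlist.

```shell
# Attestors each cluster requires, mirroring clusterAdmissionRules in the policy.
required_attestors() {
  case "$1" in
    staging-cluster) echo "vulnz-attestor" ;;
    prod-cluster)    echo "vulnz-attestor qa-attestor" ;;
    *)               return 1 ;;  # no cluster rule: the ALWAYS_DENY default applies
  esac
}

# Succeeds only if every attestor required by cluster $1 appears in the
# space-separated list of attestors ($2) that have signed the image.
would_admit() {
  required="$(required_attestors "$1")" || return 1
  for attestor in ${required}; do
    case " $2 " in
      *" ${attestor} "*) ;;       # this required attestation is present
      *) return 1 ;;              # a required attestation is missing
    esac
  done
}

# Example: an image signed only by vulnz-attestor runs in staging but not production.
would_admit staging-cluster "vulnz-attestor" && echo "staging: admitted"
would_admit prod-cluster "vulnz-attestor" || echo "production: denied"
```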

Creating the vulnerability scan checker and enabling the API

Create a container image that is used as a build step in Cloud Build. This container compares the severity scores of any detected vulnerabilities against the configured threshold. If the score is within the threshold, Cloud Build creates an attestation on the container. If the score is outside of the threshold, the build fails, and no attestation is created.

In Cloud Shell, create a new Artifact Registry repository to store the attestor image:

    gcloud artifacts repositories create cloudbuild-helpers \
      --repository-format=DOCKER --location=${REGION}

Clone the binary authorization tools and sample application source:

    git clone https://github.com/GoogleCloudPlatform/gke-binary-auth-tools ~/binauthz-tools

Build and push the vulnerability scan attestor container named attestor to the cloudbuild-helpers Artifact Registry repository:

    gcloud builds submit \
      --project "${PROJECT_ID}" \
      --tag "${REGION}-docker.pkg.dev/${PROJECT_ID}/cloudbuild-helpers/attestor" \
      ~/binauthz-tools

Setting up the Cloud Build pipeline

Create a Cloud Source Repositories repository and Cloud Build trigger for the sample app and Kubernetes manifests.

Create the hello-app Cloud Source Repositories and Artifact Registry repository

In Cloud Shell, create a Cloud Source Repositories repository for the sample app:

    gcloud source repos create hello-app \
      --project "${PROJECT_ID}"

Clone the repository locally:

    gcloud source repos clone hello-app ~/hello-app \
      --project "${PROJECT_ID}"

Copy the sample code into the repository:

    cp -R ~/binauthz-tools/examples/hello-app/* ~/hello-app

Create a new Artifact Registry repository to store application images:

    gcloud artifacts repositories create applications \
      --repository-format=DOCKER --location=${REGION}

Create the hello-app Cloud Build trigger

In the Google Cloud console, go to the Triggers page.

Click Manage Repositories.

For the hello-app repository, click the ... and select Add Trigger.

In the Trigger Settings window, enter the following details:

- In the Name field, enter build-vulnz-deploy.

- For Event, choose Push to a branch.

- In the Repository field, choose hello-app from the menu.

- In the Branch field, enter master.

- For Configuration, select Cloud Build configuration file (yaml or json).

- For Location, select Repository and enter the default value /cloudbuild.yaml.

Add the following Substitution Variable pairs:

- _COMPUTE_REGION with the value us-central1 (or the region you chose in the beginning).

- _KMS_KEYRING with the value binauthz.

- _KMS_LOCATION with the value us-central1 (or the region you chose in the beginning).

- _PROD_CLUSTER with the value prod-cluster.

- _QA_ATTESTOR with the value qa-attestor.

- _QA_KMS_KEY with the value qa-signer.

- _QA_KMS_KEY_VERSION with the value 1.

- _STAGING_CLUSTER with the value staging-cluster.

- _VULNZ_ATTESTOR with the value vulnz-attestor.

- _VULNZ_KMS_KEY with the value vulnz-signer.

- _VULNZ_KMS_KEY_VERSION with the value 1.

Click Create.

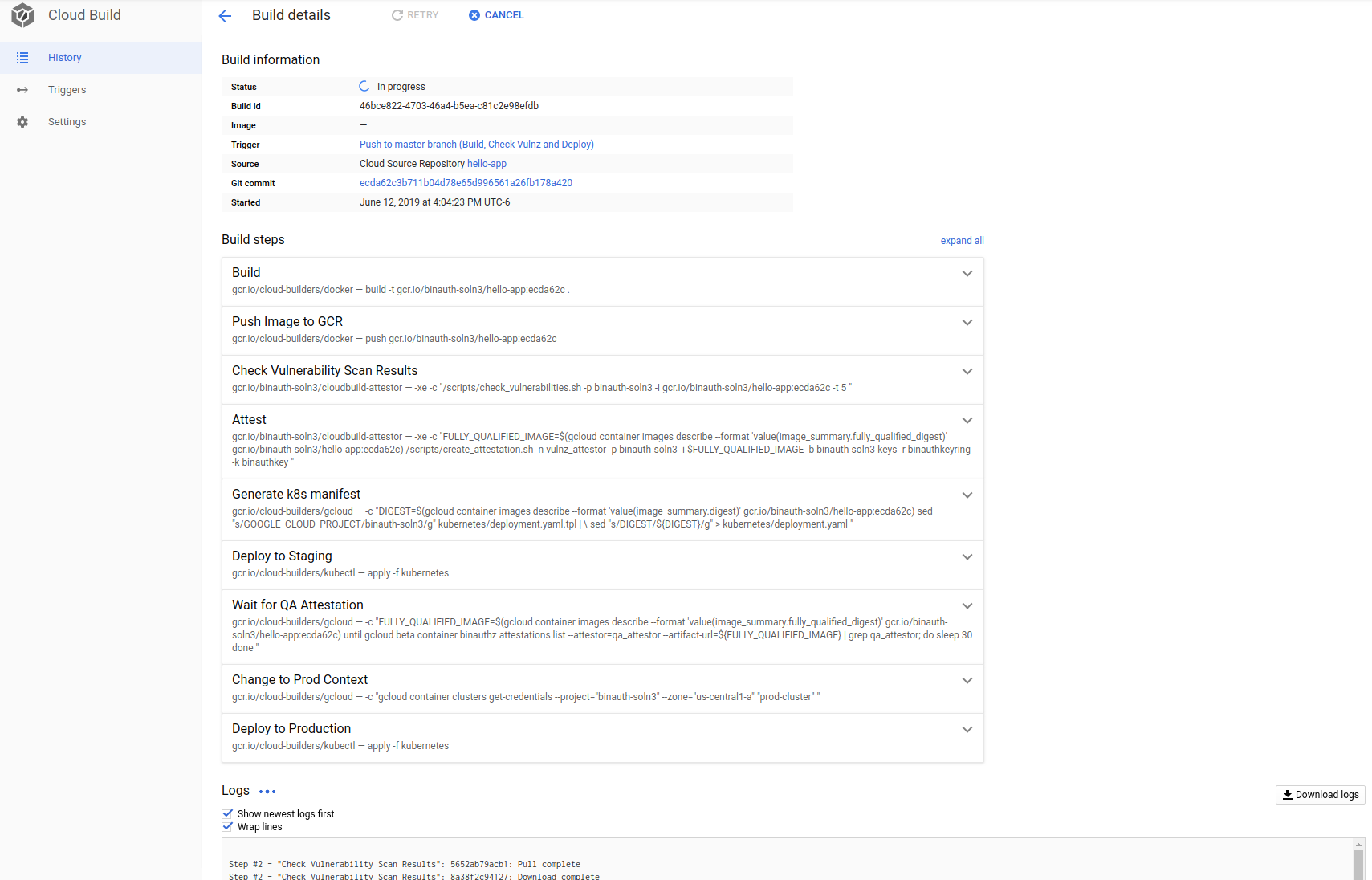

Test the Cloud Build pipeline

Test the CI/CD pipeline by committing and pushing the sample app to the Cloud Source Repositories repository. Cloud Build detects the change, builds, and deploys the app to staging-cluster. The pipeline waits for up to 10 minutes for QA verification. After the deployment is verified by the QA team, the process continues and the production Kubernetes manifests are updated and Cloud Build deploys the app to prod-cluster.
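The wait for QA verification is a bounded poll: check repeatedly for a condition and give up after a deadline. The sketch below shows that general pattern in shell. It is not the implementation of the build step in the sample repository; `wait_for` is a hypothetical helper, and the command it polls is whatever check you supply.

```shell
# wait_for TIMEOUT_SECONDS INTERVAL_SECONDS COMMAND [ARGS...]
# Re-runs COMMAND until it succeeds or TIMEOUT_SECONDS have elapsed.
wait_for() {
  timeout="$1"
  interval="$2"
  shift 2
  elapsed=0
  while [ "${elapsed}" -lt "${timeout}" ]; do
    if "$@"; then
      return 0                      # condition met before the deadline
    fi
    sleep "${interval}"
    elapsed=$((elapsed + interval))
  done
  return 1                          # deadline reached without success
}

# Usage: poll a placeholder check every 10 seconds for up to 10 minutes.
#   wait_for 600 10 my_attestation_check || echo "QA attestation not found in time"
```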

In Cloud Shell, commit and push the hello-app files to the Cloud Source Repositories repository to trigger a build:

    cd ~/hello-app

    git add .
    git commit -m "Initial commit"
    git push origin master

In the Google Cloud console, go to the History page.

To watch the build progress, click the most recent run of Cloud Build.

When the deployment to staging-cluster is finished, go to the Services page.

To verify that the app is working, click the Endpoints link for the app.

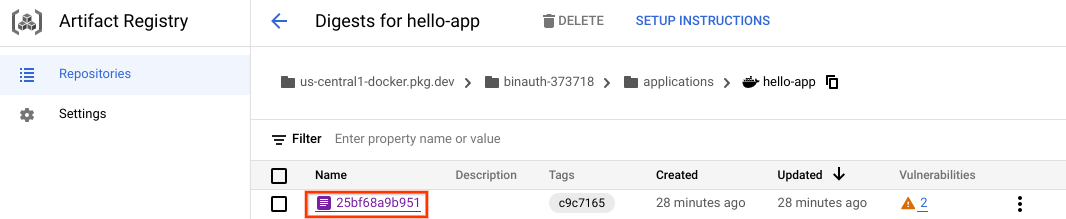

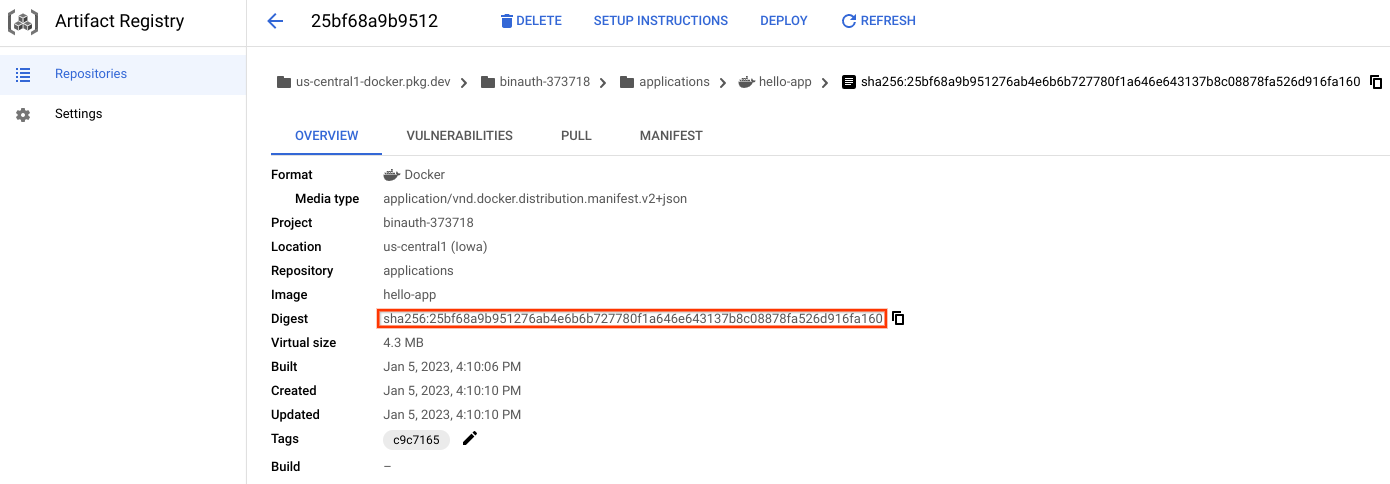

Go to the Repositories page.

Click applications.

Click hello-app.

Click the image that you validated in the staging deployment.

Copy the Digest value from the image details. This information is needed in the next step.

To apply the manual QA attestation, replace ... with the value you copied from the image details. The DIGEST variable should be in the format sha256:hash-value.

The Await QA attestation build step also outputs a copy-pasteable command, shown below.

    DIGEST="sha256:..." # Replace with your value

    gcloud beta container binauthz attestations sign-and-create \
      --project "${PROJECT_ID}" \
      --artifact-url "${REGION}-docker.pkg.dev/${PROJECT_ID}/applications/hello-app@${DIGEST}" \
      --attestor "qa-attestor" \
      --attestor-project "${PROJECT_ID}" \
      --keyversion "1" \
      --keyversion-key "qa-signer" \
      --keyversion-location "${REGION}" \
      --keyversion-keyring "binauthz" \
      --keyversion-project "${PROJECT_ID}"

To verify the app was deployed, go to the Services page.

To view the app, click the endpoint link.

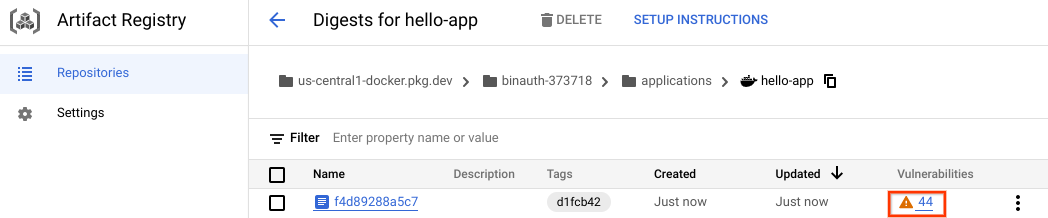
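A mistyped or truncated digest is an easy way for the manual QA attestation to fail, so it can be worth checking the value before signing. The following is a minimal sketch under stated assumptions: `is_sha256_digest` and `artifact_url` are hypothetical helpers, and the URL shape is taken from the --artifact-url flag in the sign-and-create command in this section.

```shell
# Hypothetical helper: succeeds when $1 looks like
# sha256: followed by 64 lowercase hexadecimal characters.
is_sha256_digest() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Builds the artifact URL for the hello-app image in the applications repository.
# $1 = region, $2 = project ID, $3 = digest
artifact_url() {
  is_sha256_digest "$3" || { echo "invalid digest: $3" >&2; return 1; }
  printf '%s-docker.pkg.dev/%s/applications/hello-app@%s\n' "$1" "$2" "$3"
}

# Usage, with the variables from this tutorial:
#   artifact_url "${REGION}" "${PROJECT_ID}" "${DIGEST}"
```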

Deploying an unattested image

So far, the sample app didn't have any vulnerabilities. Update the app to output a different message and change the base image.

In Cloud Shell, change the output from Hello World to Binary Authorization, and change the base image from distroless to debian:

    cd ~/hello-app
    sed -i "s/Hello World/Binary Authorization/g" main.go
    sed -i "s/FROM gcr\.io\/distroless\/static-debian11/FROM debian/g" Dockerfile

Commit and push the changes:

    git add .
    git commit -m "Change message and base image"
    git push origin master

To monitor the status of the CI/CD pipeline, in the Google Cloud console, go to the History page.

This build fails because of detected CVEs in the image.

To examine the identified CVEs, go to the Images page.

Click hello-app.

To review the identified CVEs, click the vulnerability summary for the most recent image.

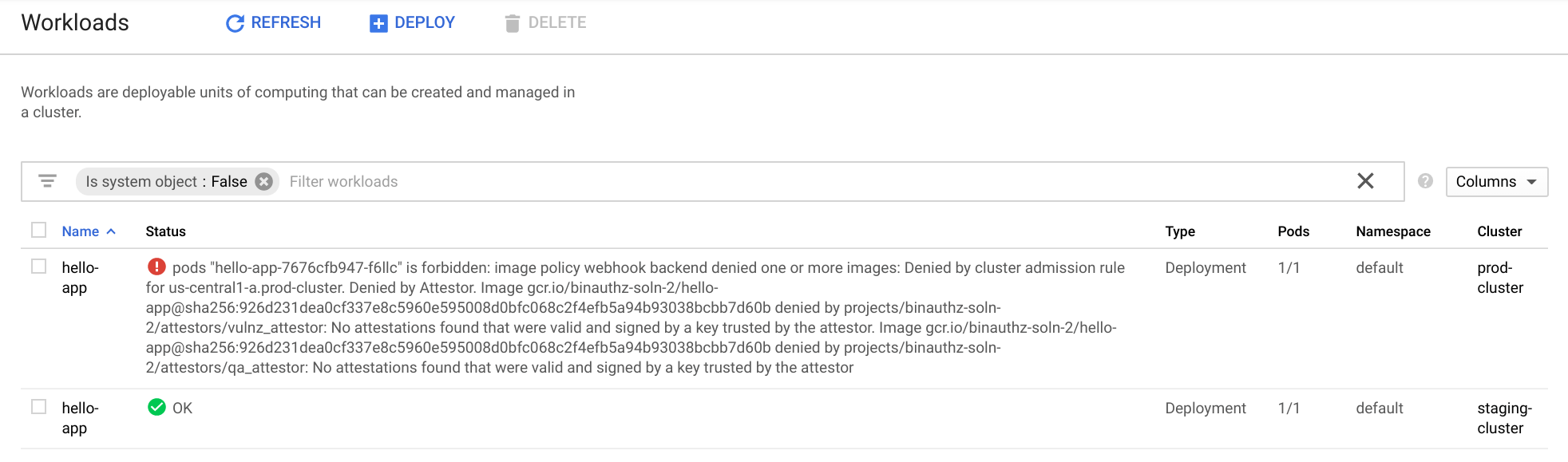

In Cloud Shell, attempt to deploy the new image to production without the attestation from the vulnerability scan:

    export SHA_DIGEST="[SHA_DIGEST_VALUE]"

    cd ~/hello-app
    sed "s/REGION/${REGION}/g" kubernetes/deployment.yaml.tpl | \
      sed "s/GOOGLE_CLOUD_PROJECT/${PROJECT_ID}/g" | \
      sed -e "s/DIGEST/${SHA_DIGEST}/g" > kubernetes/deployment.yaml

    gcloud container clusters get-credentials \
      --project=${PROJECT_ID} \
      --region="${REGION}" prod-cluster

    kubectl apply -f kubernetes

In the Google Cloud console, go to the Workloads page.

The image failed to deploy because it wasn't signed by the vulnz-attestor and the qa-attestor.
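The sed pipeline in this section fills three placeholders (REGION, GOOGLE_CLOUD_PROJECT, and DIGEST) in the deployment template. The sketch below shows the same substitution as a single function applied to one template line. The function name `render_manifest` and the sample line are hypothetical and are not copied from the repository's deployment.yaml.tpl.

```shell
# Hypothetical helper: reads a template on stdin and fills the three placeholders.
# $1 = region, $2 = project ID, $3 = image digest
render_manifest() {
  sed -e "s/REGION/$1/g" \
      -e "s/GOOGLE_CLOUD_PROJECT/$2/g" \
      -e "s/DIGEST/$3/g"
}

# Example with an illustrative template line:
echo 'image: REGION-docker.pkg.dev/GOOGLE_CLOUD_PROJECT/applications/hello-app@DIGEST' \
  | render_manifest "us-central1" "demo-project" "sha256:abc"
```

The substitution uses / as the sed delimiter, which is safe here because region names, project IDs, and digests don't contain a slash.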

Break-glass procedure

Occasionally, you need to allow changes that are outside the normal workflow. To allow image deployments without the required attestations, the pod definition is annotated with a break-glass policy flag. Enabling this flag still causes GKE to check for the required attestations, but allows the container image to be deployed and logs violations.

For more information about bypassing attestation checks, see Override a policy.

In Cloud Shell, uncomment the break-glass annotation in the Kubernetes manifest:

    sed -i "31s/^#//" kubernetes/deployment.yaml

Use kubectl to apply the changes:

    kubectl apply -f kubernetes

To verify the change was deployed to prod-cluster, go to the Workloads page in the Google Cloud console.

The deployment error message is now gone.

To verify the app was deployed, go to the Services page.

To view the app, click the endpoint link.
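The sed command in this section targets line 31, which only works while the manifest keeps its original layout. A pattern-based variant is sketched below as an alternative. It assumes the commented-out line contains the text break-glass, as in an annotation such as alpha.image-policy.k8s.io/break-glass; both the helper `enable_break_glass` and the two-line excerpt are illustrative assumptions, not the contents of the sample manifest.

```shell
# Hypothetical helper: removes the leading # from every line that mentions
# break-glass, regardless of its line number. A backup is kept as FILE.bak.
enable_break_glass() {
  sed -i.bak '/break-glass/s/^#//' "$1"
}

# Example on an illustrative excerpt written to a temporary file:
manifest="$(mktemp)"
printf '%s\n' \
  '      annotations:' \
  '#        alpha.image-policy.k8s.io/break-glass: "true"' > "${manifest}"
enable_break_glass "${manifest}"
cat "${manifest}"
```

Lines that don't mention break-glass are left untouched, so other comments in the manifest stay commented.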

Clean up

To avoid incurring charges to your Google Cloud account for the resources used in this tutorial, either delete the project that contains the resources, or keep the project and delete the individual resources.

Delete the project

- In the Google Cloud console, go to the Manage resources page.

- In the project list, select the project that you want to delete, and then click Delete.

- In the dialog, type the project ID, and then click Shut down to delete the project.

What's next

- Best practices for building containers.

- Deploying a containerized web application.

- GitOps-style continuous delivery with Cloud Build.

- Managed base images.

- Explore reference architectures, diagrams, and best practices about Google Cloud. Take a look at our Cloud Architecture Center.