This topic describes a multi-region deployment for Apigee hybrid on GKE and Anthos GKE deployed on-prem.

Topologies for multi-region deployment include the following:

- Active-Active: Use this topology when you have applications deployed in multiple geographic locations and require low-latency API responses. You can deploy hybrid in the geographic locations nearest to your clients, for example: US West Coast, US East Coast, Europe, and APAC.

- Active-Passive: Use this topology when you have a primary region and a failover or disaster recovery region.

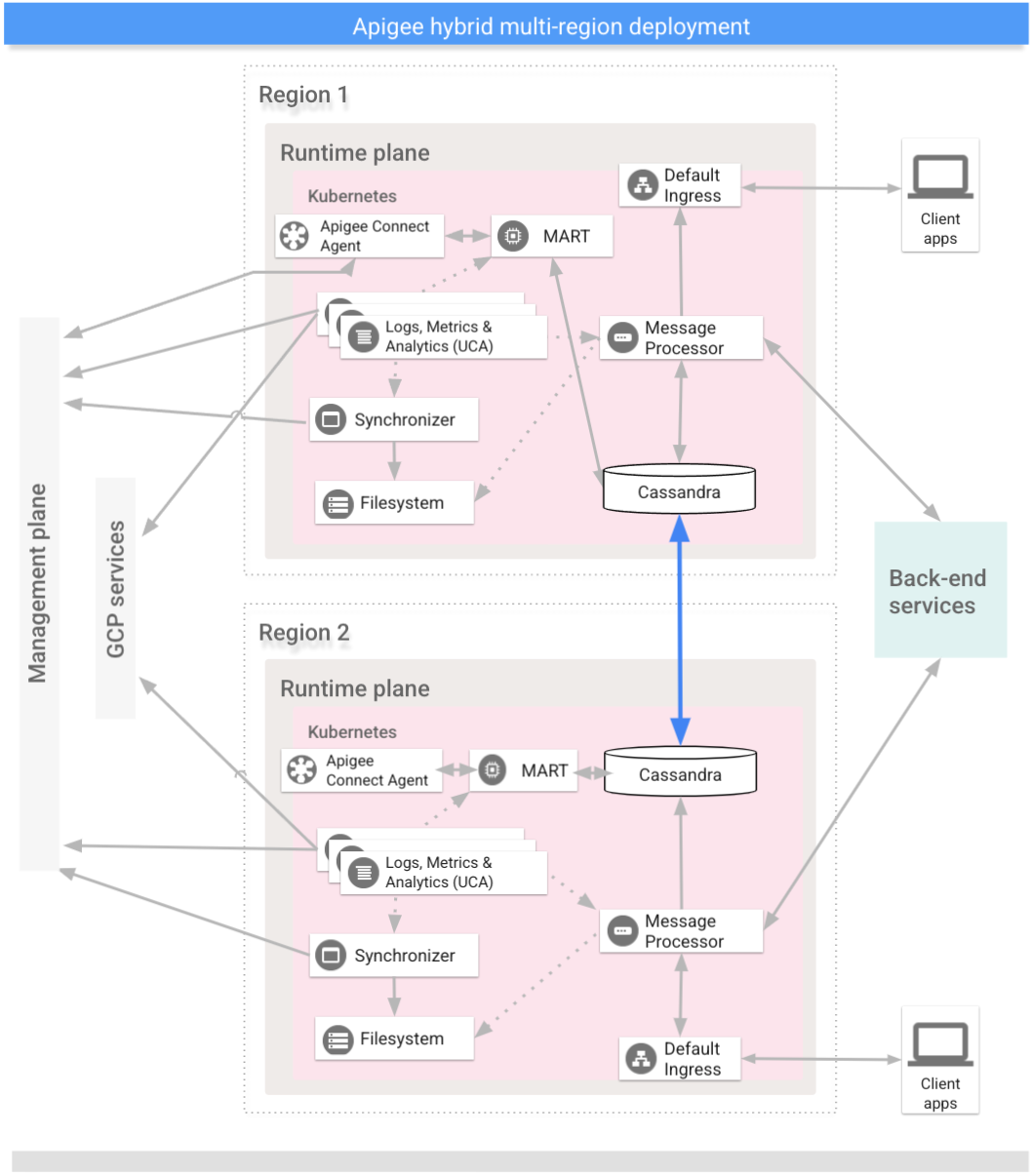

The regions in a multi-region hybrid deployment communicate via Cassandra, as the following image shows:

Load balancing the MART connection

Each regional cluster must have its own MART IP and hostname; however, you only need to connect the management plane to one of them. Cassandra propagates information to all of the clusters. The best option for high availability for MART is to load balance the individual MART IP addresses and configure your organization to talk to the load balanced MART URL.

Prerequisites

Before configuring hybrid for multiple regions, you must complete the following prerequisites:

- Set up Kubernetes clusters in multiple regions with different CIDR blocks

- Set up cross-region communication

- Cassandra multi-region requirements:

  - Make sure the pod network namespace has connectivity across the regions, including firewalls, VPN, VPC peering, and vNet peering. This is the case for most GKE installations.

  - If the pod network namespace does not have connectivity between pods in different clusters (the clusters are running in "island network mode", for example in GKE on-prem installations), enable the Kubernetes `hostNetwork` feature by setting `cassandra.hostNetwork: true` in the overrides file for all of the regions in your Apigee hybrid multi-region installation. For information on the Kubernetes `hostNetwork` feature, see Host namespaces in the Kubernetes documentation.

  - Enable `hostNetwork` on existing clusters before expanding your multi-region configuration to new regions.

  - When `hostNetwork` is enabled, make sure worker nodes can perform reverse DNS lookup. Apigee Cassandra uses both forward and reverse DNS lookup to obtain the host IP while starting.

  - Open Cassandra ports 7000 and 7001 between Kubernetes clusters across all regions to enable worker nodes across regions and data centers to communicate. See Configure ports.

For detailed information, see Kubernetes documentation.
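The first prerequisite calls for a different CIDR block in each region. As a quick sanity check before creating clusters, the following shell sketch tests whether two IPv4 CIDR blocks overlap. The function name `cidrs_overlap` and the sample ranges are illustrative assumptions, not part of Apigee hybrid or `kubectl`, and reading "different" as "non-overlapping" is also an assumption.

```shell
# Hypothetical helper (not an Apigee or kubectl command): exits 0 when two
# IPv4 CIDR blocks overlap and exits 1 when they are disjoint.
cidrs_overlap() {
  awk -v a="$1" -v b="$2" '
    # First address of a CIDR block, as a number.
    function first(cidr,    p, ip, size) {
      split(cidr, p, "[./]")
      ip = p[1] * 16777216 + p[2] * 65536 + p[3] * 256 + p[4]
      size = 2 ^ (32 - p[5])
      return ip - (ip % size)
    }
    # Last address of a CIDR block, as a number.
    function last(cidr,    p) {
      split(cidr, p, "[./]")
      return first(cidr) + 2 ^ (32 - p[5]) - 1
    }
    # Two ranges overlap when each one starts before the other ends.
    BEGIN { exit !(first(a) <= last(b) && first(b) <= last(a)) }
  '
}

# Usage (sample ranges are placeholders):
#   cidrs_overlap 10.0.0.0/14 10.4.0.0/14 && echo "overlap" || echo "no overlap"
```

The check only compares address ranges; it does not verify routing, firewall rules, or peering between the regions.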

Configure the multi-region seed host

This section describes how to expand the existing Cassandra cluster to a new region. This setup allows the new region to bootstrap the cluster and join the existing data center. Without this configuration, the multi-region Kubernetes clusters would not know about each other.

- Run the following `kubectl` command to identify a seed host address for Cassandra in the current region. A seed host address allows a new regional instance to find the original cluster on its very first startup and learn the topology of the cluster. The seed host address is designated as the contact point in the cluster.

      kubectl get pods -o wide -n apigee

      NAME                         READY   STATUS    RESTARTS   AGE   IP          NODE                                      NOMINATED NODE
      apigee-cassandra-default-0   1/1     Running   0          5d    10.0.0.11   gke-k8s-dc-2-default-pool-a2206492-p55d
      apigee-cassandra-default-1   1/1     Running   0          5d    10.0.2.4    gke-k8s-dc-2-default-pool-e9daaab3-tjmz
      apigee-cassandra-default-2   1/1     Running   0          5d    10.0.3.5    gke-k8s-dc-2-default-pool-e589awq3-kjch

- Decide which of the IPs returned from the previous command will be the multi-region seed host.

  The configuration in this step depends on whether you are on GKE or GKE on-prem:

  GKE only: In data center 2, configure `cassandra.multiRegionSeedHost` and `cassandra.datacenter` as described in Manage runtime plane components, where `multiRegionSeedHost` is one of the IPs returned by the previous command:

      cassandra:
        multiRegionSeedHost: seed_host_IP
        datacenter: data_center_name
        rack: rack_name
        hostNetwork: false # Set this to true for non-GKE platforms.

  For example:

      cassandra:
        multiRegionSeedHost: 10.0.0.11
        datacenter: "dc-2"
        rack: "ra-1"
        hostNetwork: false

  GKE on-prem only: In data center 2, configure `cassandra.multiRegionSeedHost` in your overrides file, where `multiRegionSeedHost` is one of the IPs returned by the previous command:

      cassandra:
        hostNetwork: true
        multiRegionSeedHost: seed_host_IP
        datacenter: data_center_name

  For example:

      cassandra:
        hostNetwork: true
        multiRegionSeedHost: 10.0.0.11
        datacenter: "dc-2"

- In the new data center/region, before you install hybrid, set the same TLS certificates and credentials in `overrides.yaml` as you set in the first region.
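For the first step of this section, the candidate seed host IPs can be pulled out of the `kubectl get pods -o wide` output with a small filter. This is a minimal sketch: the function name `cassandra_pod_ips` is hypothetical, and it assumes the column layout shown in the sample output above (IP in the sixth column, a single-token RESTARTS column).

```shell
# Hypothetical helper: reads `kubectl get pods -o wide` output on stdin and
# prints the IP (6th column) of each Running apigee-cassandra pod.
# Assumes the column layout of the sample output; a RESTARTS value that
# contains spaces would shift the columns.
cassandra_pod_ips() {
  awk '$1 ~ /^apigee-cassandra-/ && $3 == "Running" { print $6 }'
}

# Usage (assumes kubectl points at the existing region's cluster):
#   kubectl get pods -o wide -n apigee | cassandra_pod_ips
```

Any one of the printed addresses can then be used as the `multiRegionSeedHost` value.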

Set up the new region

After you configure the seed host, you can set up the new region.

To set up the new region:

- Copy your certificate from the existing cluster to the new cluster. The new CA root is used by Cassandra and other hybrid components for mTLS. Therefore, it is essential to have consistent certificates across the cluster.

  - Set the context to the original cluster:

        kubectl config use-context original-cluster-name

  - Export the current namespace configuration to a file:

        kubectl get namespace namespace -o yaml > apigee-namespace.yaml

  - Export the `apigee-ca` secret to a file:

        kubectl -n cert-manager get secret apigee-ca -o yaml > apigee-ca.yaml

  - Set the context to the new region's cluster name:

        kubectl config use-context new-cluster-name

  - Import the namespace configuration to the new cluster. Be sure to update the "namespace" in the file if you're using a different namespace in the new region:

        kubectl apply -f apigee-namespace.yaml

  - Import the secret to the new cluster:

        kubectl -n cert-manager apply -f apigee-ca.yaml


- Install hybrid in the new region. Be sure that the `overrides-DC_name.yaml` file includes the same TLS certificates that are configured in the first region, as explained in the previous section.

  Execute the following two commands to install hybrid in the new region:

      apigeectl init -f overrides/overrides-DC_name.yaml
      apigeectl apply -f overrides/overrides-DC_name.yaml

- Verify that the hybrid installation is successful by running the following command:

      apigeectl check-ready -f overrides_your_cluster_name.yaml

- Verify the Cassandra cluster setup by running the following command. The output should show both the existing and new data centers.

      kubectl exec apigee-cassandra-default-0 -n apigee \
        -- nodetool -u JMX_user -pw JMX_password status

  Example showing a successful setup:

      Datacenter: dc-1
      ====================
      Status=Up/Down
      |/ State=Normal/Leaving/Joining/Moving
      --  Address        Load       Tokens  Owns  Host ID                               Rack
      UN  10.132.87.93   68.07 GiB  256     ?     fb51465c-167a-42f7-98c9-b6eba1de34de  c
      UN  10.132.84.94   69.9 GiB   256     ?     f621a5ac-e7ee-48a9-9a14-73d69477c642  b
      UN  10.132.84.105  76.95 GiB  256     ?     0561086f-e95b-4232-ba6c-ad519ff30336  d

      Datacenter: dc-2
      ====================
      Status=Up/Down
      |/ State=Normal/Leaving/Joining/Moving
      --  Address        Load       Tokens  Owns  Host ID                               Rack
      UN  10.132.0.8     71.61 GiB  256     ?     8894a98b-8406-45de-99e2-f404ab10b5d6  c
      UN  10.132.9.204   75.1 GiB   256     ?     afa0ffa3-630b-4f1e-b46f-fc3df988092e  a
      UN  10.132.3.133   68.08 GiB  256     ?     25ae39ab-b39e-4d4f-9cb7-de095ab873db  b

- Set up Cassandra on all the pods in the new data centers.

  - Get `apigeeorg` from the cluster with the following command. The jq filter is quoted so that the shell does not try to interpret the `[]` characters:

        kubectl get apigeeorg -n apigee -o json | jq '.items[].metadata.name'

    For example:

        kubectl get apigeeorg -n apigee -o json | jq '.items[].metadata.name'
        "rg-hybrid-b7d3b9c"

  - Create a Cassandra data replication custom resource (YAML) file. The file can have any name. In the following examples, the file is named `datareplication.yaml`. The file must contain the following:

        apiVersion: apigee.cloud.google.com/v1alpha1
        kind: CassandraDataReplication
        metadata:
          name: REGION_EXPANSION
          namespace: NAMESPACE
        spec:
          organizationRef: APIGEEORG_VALUE
          force: false
          source:
            region: SOURCE_REGION

    Where:

    - REGION_EXPANSION is the name you are giving this metadata. You can use any name.

    - NAMESPACE is the same namespace that is provided in `overrides.yaml`. This is usually "apigee".

    - APIGEEORG_VALUE is the value output from the `kubectl get apigeeorg -n apigee -o json | jq '.items[].metadata.name'` command in the previous step. For example, `rg-hybrid-b7d3b9c`.

    - SOURCE_REGION is the datacenter name in the source region. This is the value set for `cassandra:datacenter:` in your `overrides.yaml`.

    For example:

        apiVersion: apigee.cloud.google.com/v1alpha1
        kind: CassandraDataReplication
        metadata:
          name: region-expansion
          namespace: apigee
        spec:
          organizationRef: rg-hybrid-b7d3b9c
          force: false
          source:
            region: "dc-1"

  - Apply the `CassandraDataReplication` with the following command:

        kubectl apply -f datareplication.yaml
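If you expand to several regions, the custom resource file can be generated from its four variable values instead of being edited by hand. The following is a minimal sketch: `make_datareplication` is a hypothetical helper name, and the arguments in the usage comment are the sample values from this topic, not values from your installation.

```shell
# Hypothetical helper: prints a CassandraDataReplication manifest.
# Arguments: REGION_EXPANSION NAMESPACE APIGEEORG_VALUE SOURCE_REGION
make_datareplication() {
  cat <<EOF
apiVersion: apigee.cloud.google.com/v1alpha1
kind: CassandraDataReplication
metadata:
  name: $1
  namespace: $2
spec:
  organizationRef: $3
  force: false
  source:
    region: "$4"
EOF
}

# Usage (sample values from this topic):
#   make_datareplication region-expansion apigee rg-hybrid-b7d3b9c dc-1 > datareplication.yaml
#   kubectl apply -f datareplication.yaml
```

The helper does no validation, so review the generated file before applying it.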

  - Verify the rebuild status using the following command:

        kubectl -n apigee get apigeeds -o json | jq '.items[].status.cassandraDataReplication'

    The results should look something like:

        {
          "rebuildDetails": {
            "apigee-cassandra-default-0": {
              "state": "complete",
              "updated": 1623105760
            },
            "apigee-cassandra-default-1": {
              "state": "complete",
              "updated": 1623105765
            },
            "apigee-cassandra-default-2": {
              "state": "complete",
              "updated": 1623105770
            }
          },
          "state": "complete",
          "updated": 1623105770
        }
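The status JSON can also be checked mechanically rather than by eye. The sketch below assumes the JSON shape shown in the sample result (a top-level `state` plus one `state` per pod under `rebuildDetails`) and a single datastore in the namespace; `rebuild_complete` is a hypothetical helper name, and it relies on `jq` being installed.

```shell
# Hypothetical helper: reads the cassandraDataReplication status JSON on
# stdin and succeeds only if the overall state and every pod's state are
# "complete". Requires jq.
rebuild_complete() {
  jq -e '.state == "complete"
         and ([.rebuildDetails[]? | .state] | all(. == "complete"))' >/dev/null
}

# Usage (assumes one apigeeds resource in the namespace):
#   kubectl -n apigee get apigeeds -o json \
#     | jq '.items[].status.cassandraDataReplication' \
#     | rebuild_complete && echo "rebuild complete"
```

The function only reports success or failure through its exit status, which makes it easy to call from a polling loop.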


- Verify the rebuild processes from the logs. Also, verify the data size using the `nodetool status` command:

      kubectl logs apigee-cassandra-default-0 -f -n apigee

      kubectl exec apigee-cassandra-default-0 -n apigee -- nodetool -u JMX_user -pw JMX_password status

  The following shows example log entries:

      INFO 01:42:24 rebuild from dc: dc-1, (All keyspaces), (All tokens)
      INFO 01:42:24 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] Executing streaming plan for Rebuild
      INFO 01:42:24 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] Starting streaming to /10.12.1.45
      INFO 01:42:25 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889, ID#0] Beginning stream session with /10.12.1.45
      INFO 01:42:25 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] Starting streaming to /10.12.4.36
      INFO 01:42:25 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889 ID#0] Prepare completed. Receiving 1 files(0.432KiB), sending 0 files(0.000KiB)
      INFO 01:42:25 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] Session with /10.12.1.45 is complete
      INFO 01:42:25 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889, ID#0] Beginning stream session with /10.12.4.36
      INFO 01:42:25 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] Starting streaming to /10.12.5.22
      INFO 01:42:26 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889 ID#0] Prepare completed. Receiving 1 files(0.693KiB), sending 0 files(0.000KiB)
      INFO 01:42:26 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] Session with /10.12.4.36 is complete
      INFO 01:42:26 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889, ID#0] Beginning stream session with /10.12.5.22
      INFO 01:42:26 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889 ID#0] Prepare completed. Receiving 3 files(0.720KiB), sending 0 files(0.000KiB)
      INFO 01:42:26 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] Session with /10.12.5.22 is complete
      INFO 01:42:26 [Stream #3a04e810-580d-11e9-a5aa-67071bf82889] All sessions completed
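When the logs are long, a simple filter can confirm that the final streaming message appeared. This is a rough sketch that assumes the `All sessions completed` wording from the sample log entries; `rebuild_sessions_completed` is a hypothetical helper name.

```shell
# Hypothetical helper: reads Cassandra pod logs on stdin and succeeds if the
# "All sessions completed" message from the sample log entries is present.
rebuild_sessions_completed() {
  grep -q 'All sessions completed'
}

# Usage (without -f, so that the logs command returns):
#   kubectl logs apigee-cassandra-default-0 -n apigee | rebuild_sessions_completed \
#     && echo "streaming finished on apigee-cassandra-default-0"
```

A match only shows that streaming finished on that pod; the `nodetool status` data size check is still worth running.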

- Update the seed hosts. Remove `multiRegionSeedHost: 10.0.0.11` from `overrides-DC_name.yaml` and reapply.

      apigeectl apply -f overrides/overrides-DC_name.yaml

Check the Cassandra cluster status

The following command is useful to see if the cluster setup is successful in two data centers. The command checks the nodetool status for the two regions.

    kubectl exec apigee-cassandra-default-0 -n apigee -- nodetool -u JMX_user -pw JMX_password status

    Datacenter: dc-1
    ================
    Status=Up/Down
    |/ State=Normal/Leaving/Joining/Moving
    --  Address     Load        Tokens  Owns (effective)  Host ID                               Rack
    UN  10.12.1.45  112.09 KiB  256     100.0%            3c98c816-3f4d-48f0-9717-03d0c998637f  ra-1
    UN  10.12.4.36  95.27 KiB   256     100.0%            0a36383d-1d9e-41e2-924c-7b62be12d6cc  ra-1
    UN  10.12.5.22  88.7 KiB    256     100.0%            3561f4fa-af3d-4ea4-93b2-79ac7e938201  ra-1

    Datacenter: dc-2
    ================
    Status=Up/Down
    |/ State=Normal/Leaving/Joining/Moving
    --  Address     Load        Tokens  Owns (effective)  Host ID                               Rack
    UN  10.0.4.33   78.69 KiB   256     0.0%              a200217d-260b-45cd-b83c-182b27ff4c99  ra-1
    UN  10.0.0.21   78.68 KiB   256     0.0%              9f3364b9-a7a1-409c-9356-b7d1d312e52b  ra-1
    UN  10.0.1.26   15.46 KiB   256     0.0%              1666df0f-702e-4c5b-8b6e-086d0f2e47fa  ra-1
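To summarize this output per data center, the node rows can be counted with a short filter. The sketch below assumes the `nodetool status` layout shown above, where each data center starts with a `Datacenter:` line and each node row starts with a two-letter status/state code such as `UN`; `nodetool_dc_summary` is a hypothetical helper name.

```shell
# Hypothetical helper: reads `nodetool status` output on stdin and prints
# one line per data center: "<datacenter> <nodes in UN state> <total nodes>".
nodetool_dc_summary() {
  awk '
    # A "Datacenter: <name>" line starts a new data center section.
    /^Datacenter:/ { dc = $2; order[++n] = dc; next }
    # Node rows start with a status/state code: U or D, then N, L, J, or M.
    dc != "" && $1 ~ /^[UD][NLJM]$/ {
      total[dc]++
      if ($1 == "UN") up[dc]++
    }
    END {
      for (i = 1; i <= n; i++)
        printf "%s %d %d\n", order[i], up[order[i]], total[order[i]]
    }
  '
}

# Usage:
#   kubectl exec apigee-cassandra-default-0 -n apigee -- \
#     nodetool -u JMX_user -pw JMX_password status | nodetool_dc_summary
```

For the sample output above, this would print `dc-1 3 3` and `dc-2 3 3`, meaning all three nodes in each data center are up and in the normal state.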

Troubleshooting

See Cassandra data replication failure.