SCENE 1: An introductory panel similar to our panel from Part 1. We see Martha, Flip, Bit, and Octavius in isolated circles, surrounded by a couple Neural Network-related icons. TITLE: Learning NEURAL NETWORKS. An online comic from Google AI. Caption/Arrow: Starring MARTHA, who is getting the hang of this. Martha: I THINK I know what’s happening? Caption: With FLIP Caption: And BIT Caption: And introducing OCTAVIUS! Octavius: Hello!

SCENE 2: Martha turns the key in the door to Neural Network land. Caption: Previously on Machine Learning Adventures… Martha: Next stop: Neural Networks!

SCENE 3: Martha throws open the door, beaming and shouting. Martha: HELLO WORL—

SCENE 4: Camera swings around behind Martha and we see an endless vista of interconnected nodes, massive and overwhelming through the doorway. Maybe some smoke pouring through the door. Martha cowers in silhouette. Martha: ...eep!

SCENE 5: Back on the other side of the door. Martha’s thrown it shut, eyes wide, hair in disarray. Flip and Bit regard her stoically. Sound F/X: SLAM

SCENE 6: Same as before, but Martha’s eyes have travelled down to Flip and Bit. A voice issues from behind the door. Octavius (from behind door): Sorry, sorry! Wasn’t ready yet. Okay, come in.

SCENE 7: A beat, Martha, Flip, and Bit peer tentatively around the door.

SCENE 8: Octavius, a cute baby octopus, floats beside a simple technological neuron. Octavius: Hi! I’m Octavius! Let’s start with the BASICS shall we?

SCENE 9: Martha looks relieved, checking over her shoulder for the overwhelming vista from before. Flip and Bit wave to Octavius. Martha: *Whew* Yes, please. I’m Martha. Octavius: Hi, Martha! So, NEURAL NETWORKS are made up of simple building blocks, and the SIMPLEST is “THE NEURON.” Oh, Hi Flip! Hi Bit! Flip: Yo. Bit: Hey, Doc.

SCENE 10: Martha kneels beside two descriptive panels that show biological and technological neurons side-by-side as Octavius explains. Octavius: Just like their biological namesake, these “neurons” accept multiple INPUTS, and combine them to produce OUTPUTS. Martha: “Inputs,” as in…?

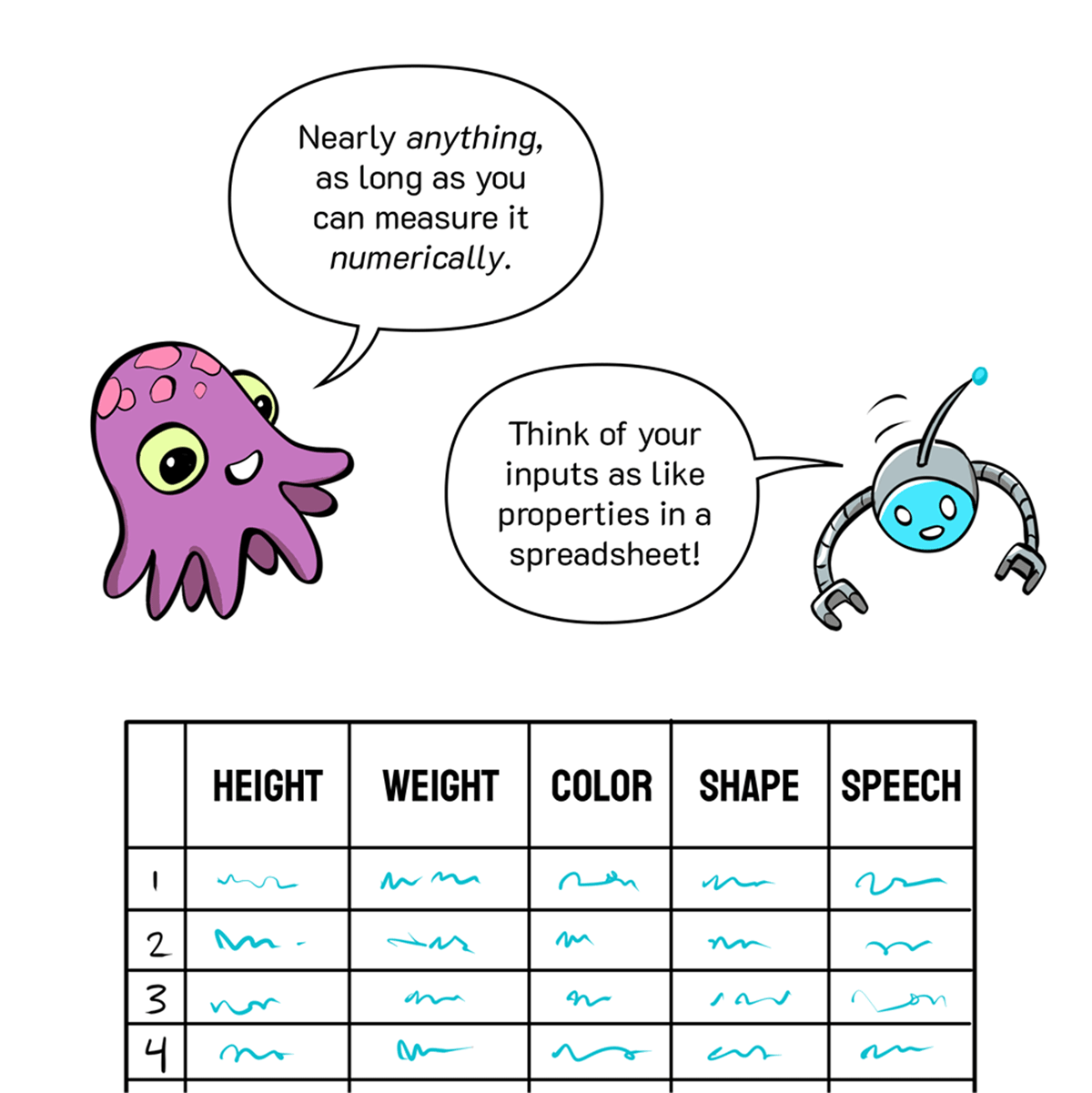

SCENE 11: Octavius and Bit chat above a table of features with columns of data. Octavius: Nearly ANYTHING, as long as you can measure it NUMERICALLY. Bit: Think of your inputs as like properties in a spreadsheet!

SCENE 12: Flip knocks the table over with her hind legs while Bit gestures. Flip: ...but KICKED on its SIDE! Bit: (Not strictly necessary. It just looks better going left-to-right…)

SCENE 13: Octavius and Martha regard the now-horizontal table, where we see arrows traveling from each column of data into a series of round nodes (the inputs). Octavius: This is where it starts—our first LAYER of INPUTS.

SCENE 14: Octavius floats between the previous layer of inputs and gestures to a simple binary classification at panel right: Cat or Dog. Cat is highlighted. Octavius: Our GOAL then is to use the values generated by that INPUT LAYER—no matter how complex—to generate an OUTPUT layer on the other end with a nice simple ANSWER.

SCENE 15: Zoom out, the crew are all standing in a clean space. Octavius smiles, Martha looks nonplussed. Octavius: And that’s it. Any questions? Martha: …

SCENE 16: As before, but Flip and Bit are snickering. Bit (vocal F/X): *snort*

SCENE 17: As before, but Martha is now yelling in confusion. The rest of the crew are outright laughing now. Martha: BUT—WHAT HAPPENS IN BETWEEN?! Octavius: Exactly!

SCENE 18: Octavius looks on as a small animated classification icon moves across the hidden layers of a standard NN diagram... Octavius: Those ‘hidden layers’ in-between are performing a series of simple [classification] tasks to arrive at a complex answer.

SCENE 19: Octavius gestures to a simple neuron with labelled inputs (X1, X2) converging on a node with a sigma (Σ). Flip chimes in from below. Octavius: Each feature’s numeric value (X) is simply added up in the neuron. Flip: And that sum (∑) helps determine the slope of the line.

SCENE 20: As before, but now the lines between the inputs and the sum node are labelled with W1 and W2, and have different thicknesses. Octavius: But some features deserve more WEIGHT than others, so first those inputs are adjusted up or down in strength.

SCENE 21: Martha pops in, gesturing to the lines, which now have dials set to Low and High (à la our explanation in Part 1). Martha: Oh! So the weights are one of our DIALS! Octavius: Yup!

SCENE 22: Martha and Octavius examine the simple neuron, which now has an additional node labelled “b” entering the sum from below. It, too, has a weight on its line. Octavius: Another is the BIAS; an offset to the whole sum that’s also adjustable by weight.

SCENE 23: Martha’s word balloons contain two animated graphs, showing the alteration of the slope and y intercept as weight and bias are changed on loop. Octavius and Flip chime in from below. Martha: So… Adjusting WEIGHT adjusts SLOPE… … And adjusting BIAS adjusts the Y-INTERCEPT? Octavius: Just so! Flip: Told’ja she was a fast learner.

SCENE 24: Our neuron now shows a sloped line in place of the sum node, and Octavius indicates the next step: a squashed line (like a sigmoid shape) denoting the activation function. Bit floats below with a placard. Octavius: Then we squash that linear classifier into a NONLINEAR form like a sigmoid function… Caption (held by Bit): Learn more.

SCENE 25: Octavius floats at the y intercept of an enlarged sigmoid graph, Martha frames the picture with her fingers at lower right. Octavius: This “ACTIVATION FUNCTION” allows for nonlinear relationships… …and smoother adjustments in the learning process. Martha: Hunh.

SCENE 26: We now see our full neuron with all its labels, Octavius and Martha observe from below. It’s meant to look a little overwhelming with all the components in play. Labels: Node, Weight, Edge, Sum, Bias, Activation function, and Non-linear function. (e.g., Sigmoid, tanh, Softmax, Swish, ReLU, Leaky ReLU, Diet ReLU, ReLU with Chips, ReLU, Spam, Spam, ReLU, and Spam.) Octavius: Here it is, fully unpacked. Martha: Yikes.
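
The unpacked neuron in the scenes above can be sketched in a few lines of Python. This is only an illustration, assuming a sigmoid activation; the function names and the values for X1, X2, W1, W2, and b are made up for the example and do not come from the comic.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weight each input, add everything up with the bias, then activate."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(total)

# Hypothetical values for X1, X2, W1, W2 and b, chosen only for illustration.
output = neuron(inputs=[0.5, -1.0], weights=[2.0, 1.0], bias=0.0)
print(output)  # 0.5, because the weighted sum here is exactly 0
```

Turning the weight "dials" changes how strongly each input pulls on the sum, while the bias shifts the whole sum before the squashing step.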

SCENE 27: We return to our simple, three-node neuron, showing X1 and X2 for inputs, and the sigmoid curve for the activation function. Martha looks relieved as Octavius explains why they’re going back to the simple view. Octavius: But, for simplicity’s sake, let’s combine sum, bias, and activation function into one node… …and use line thickness to indicate weights. Martha: *whew* Yeah, LET’S!

SCENE 28: The single neuron now has animated weighted lines connecting its nodes, and a new output line moving off to the right. Octavius: And now the OUTPUT of THAT node — Martha: OH!

SCENE 29: Neuron 1 has joined with a second neuron. The outputs of N1 become the inputs of N2. Lines between neurons are still animated, showing the flow of information between nodes. Martha: …can be the INPUT for another! Octavius: That’s the stuff!

SCENE 30: The network grows, showing six layers of interconnected neurons with an animated web of lines flowing between them. Octavius: …and ANOTHER, and ANOTHER… Martha: whoah.
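
Feeding one neuron's output into the next, layer after layer, might look roughly like the sketch below. The layer sizes and every weight are hypothetical placeholders, and a sigmoid activation is assumed throughout.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def layer(inputs, weight_rows, biases):
    """Run one layer: each row of weights belongs to one neuron."""
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weight_rows, biases)
    ]

def forward(inputs, layers):
    """Pass values through the network; each layer's outputs feed the next."""
    values = inputs
    for weight_rows, biases in layers:
        values = layer(values, weight_rows, biases)
    return values

# Hypothetical network: 2 inputs -> 3 hidden neurons -> 1 output neuron.
network = [
    ([[0.5, -0.5], [1.0, 1.0], [-1.0, 0.5]], [0.0, 0.0, 0.0]),
    ([[1.0, -1.0, 0.5]], [0.0]),
]
prediction = forward([1.0, 2.0], network)
print(prediction)  # a single value between 0 and 1
```

Adding "ANOTHER, and ANOTHER" layer is just a matter of appending more entries to the list.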

SCENE 31: Below Martha’s dialogue we see two little animated squares calling back to Part 1: a ball rolling back and forth to settle at the bottom of a curve and a line rotating back and forth to settle on the appropriate classification trajectory for a collection of Xs and Os. Bit holds a placard linking us back to Part 1. Martha: So, when we train neural networks using BACKPROPAGATION and GRADIENT DESCENT*… …that process ADJUSTS those weights and biases? Octavius: Yup! Footnote (held up by Bit): *See Part One.
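
As a rough sketch of what "adjusting those weights and biases" can mean, the code below runs plain gradient descent on a single sigmoid neuron. With no hidden layers, backpropagation collapses to a single gradient step per dial. The toy dataset (logical OR), the cross-entropy loss, the learning rate, and the step count are all assumptions made for this illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(inputs, weights, bias):
    return sigmoid(sum(x * w for x, w in zip(inputs, weights)) + bias)

def train(samples, steps=5000, learning_rate=1.0):
    """Batch gradient descent on one neuron, starting with all dials at zero."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(steps):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for inputs, target in samples:
            # For a sigmoid neuron with cross-entropy loss, the gradient
            # with respect to the weighted sum is (prediction - target).
            error = predict(inputs, weights, bias) - target
            for i, x in enumerate(inputs):
                grad_w[i] += error * x / len(samples)
            grad_b += error / len(samples)
        # Step downhill: nudge every dial against its gradient.
        weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b
    return weights, bias

# Hypothetical training data: the logical OR of two inputs.
or_samples = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0),
              ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]
weights, bias = train(or_samples)
labels = [1 if predict(x, weights, bias) > 0.5 else 0 for x, _ in or_samples]
print(labels)
```

Nobody sets the final weights by hand here; the loop finds them, which is the "Look, Ma! No hands!" part.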

SCENE 32: A simple neuron with three inputs shows animated dials adjusting line thickness to convey weight. Octavius explains from above, Bit throws his hands in the air, Martha sits cross-legged on the floor, gesturing. Octavius: We call those automated adjustments “MODEL TRAINING.” Bit: Look, Ma! No hands! Martha: That’s cool, but where do we ENGINEERS come in?

SCENE 33: Martha pokes at a multi-layered network of nodes floating before her while Flip sits on her back and chimes in. Bit floats below. Flip: Oh, ALL OVER! Engineers choose the right architecture, tweak the number of layers or nodes, select activation functions — all kinds of decisions. Martha: Hunh. Bit: We call that stuff “HYPERPARAMETER TUNING.”

SCENE 34: Martha and Octavius chat in front of a graph-like grid background. Octavius: Information has STRUCTURE. A well-trained neural network can help you NAVIGATE that structure.

SCENE 35: Martha and Octavius peer over a wall to observe two tiny Marthas performing regression (drawing a trend line through a map of data) and classification (drawing a line to delineate two groups of data). Octavius: That’s what you’re doing when you draw those REGRESSION and CLASSIFICATION lines… Easy to do BY HAND when there are only one or two features… Martha: Aww… Lookit ‘em go!

SCENE 36: We return to our spreadsheet on its side, with an attendant layer of input nodes. Martha and Octavius observe. Octavius: But with multiple features, come multiple INPUTS. And that means—

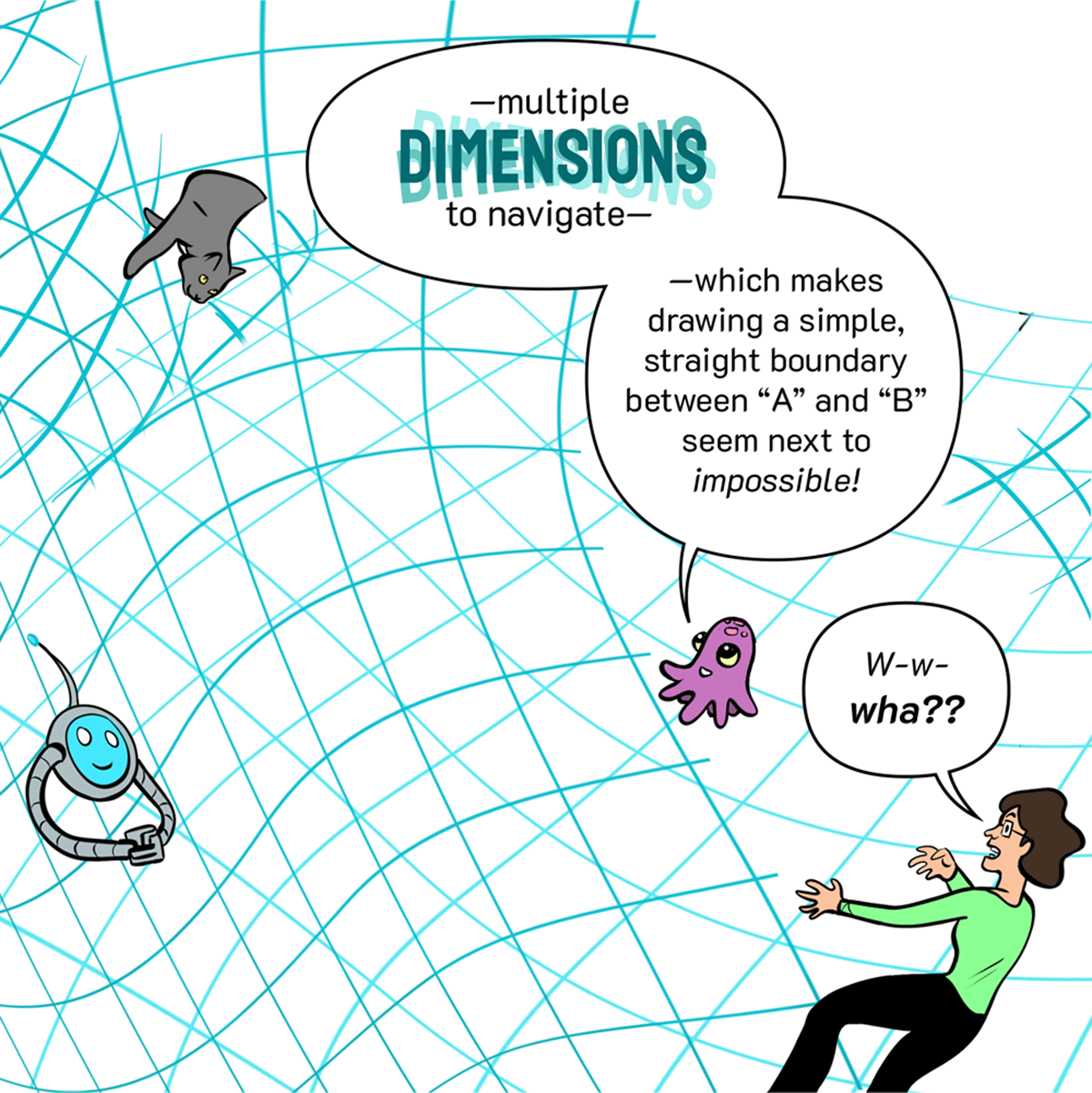

SCENE 37: Martha, Octavius, Flip, and Bit are suddenly floating in a twisty-turny world of data. Streams of integers and letters fly around them, Martha looks terrified. Bit is warping into a strangely distended shape. Flip is walking upside down through an Escherian strand of numbers. Octavius is unfazed. Octavius: —multiple DIMENSIONS to navigate— —which makes drawing a simple, straight boundary between “A” and “B” seem next to IMPOSSIBLE! Martha: W-W-WHA??

SCENE 38: The multidimensional landscape fades out towards the top of the panel. Bit looks casual and confident while Martha trips out in the corner. Octavius: Luckily, our DIGITAL cousins see other dimensions MATHEMATICALLY and can find us paths THROUGH that structure. Bit: Oh yeah, piece a’ cake. Just gotta bend the underlying topography.

SCENE 39: Bit gestures to a graph showing two intertwined spirals of data. Flip chimes in from right. Bit: For example, it seems like no straight line could separate these two shapes, but neural networks find a way. Flip: This is where those “HIDDEN LAYERS” shine…

SCENE 40: Bit twists the data spirals between his hands in three stages, turning them into a pair of squiggles that can be easily divided by a single line. Bit: They TRANSFORM data — — they STRETCH and SQUISH space — — but never CUT, BREAK or FOLD it in pursuit of an answer.

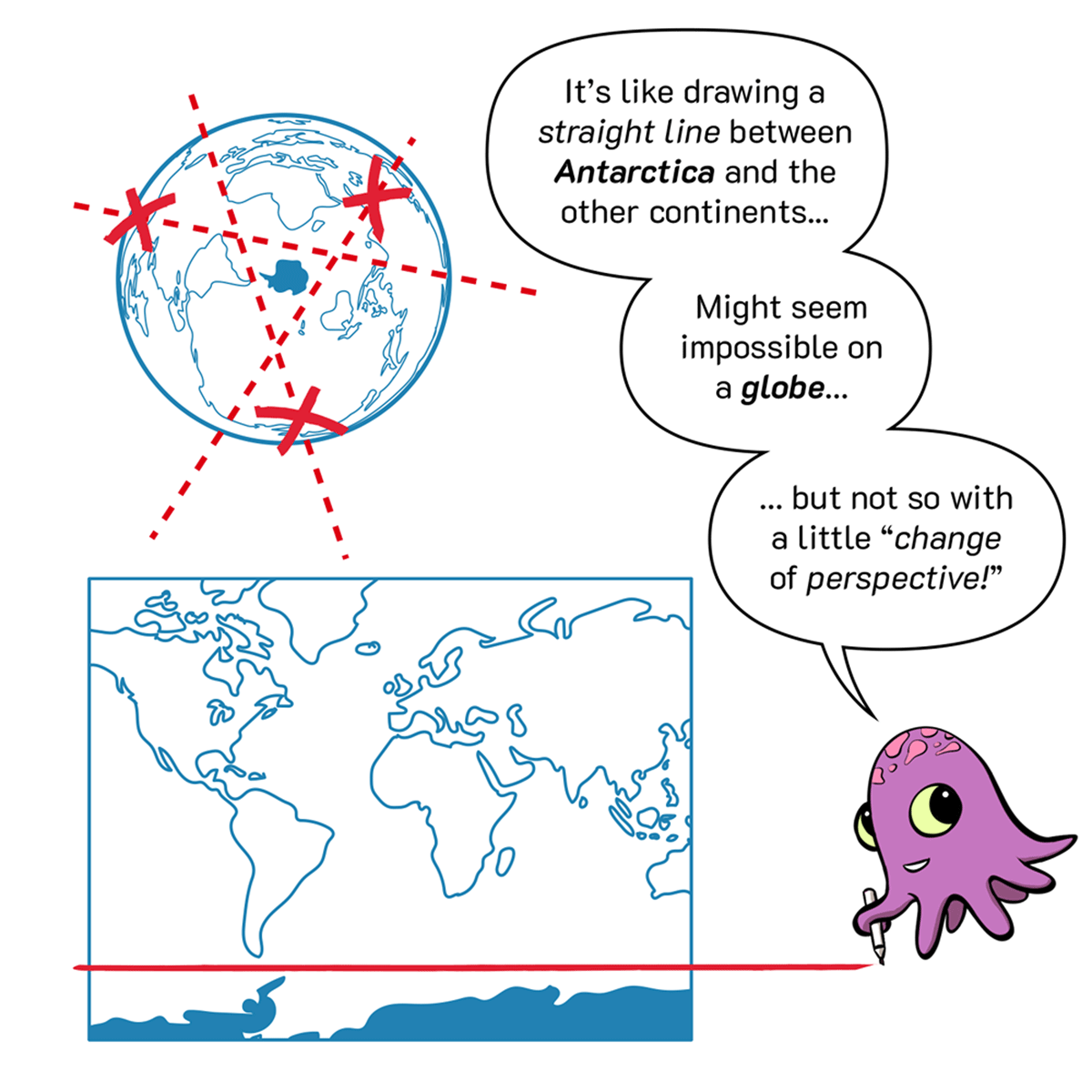

SCENE 41: A tilted globe floats above Octavius, showing a dotted circle drawn around Antarctica with a little question mark after it. Below, Octavius draws a straight line across a Mercator projection. Octavius: It’s like drawing a STRAIGHT LINE between ANTARCTICA and the other continents… Might seem impossible on a GLOBE… … but not so with a little “CHANGE of PERSPECTIVE!”

SCENE 42: We see three nodes with lines dividing sets of data within them. The first two (in a single layer) are combined to create a curved line in the output node, which successfully separates the desired dataset. Bit holds a Learn More placard linking to the Neural Network playground demo. Octavius: If each neuron contains a different linear function, COMBINING them gets us more COMPLEX shapes for DATA-FITTING. Caption/Link (held by Bit): Learn More.
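
One small way to see linear pieces combining into a shape no single line can draw is the XOR pattern. The sketch below uses hand-picked weights and a hard step activation instead of a sigmoid, purely to keep the arithmetic readable; none of these numbers come from the comic.

```python
def step(z):
    """Hard threshold: fire (1) when the sum is positive, else stay quiet (0)."""
    return 1 if z > 0 else 0

def linear_neuron(inputs, weights, bias):
    return step(sum(x * w for x, w in zip(inputs, weights)) + bias)

def xor_network(x1, x2):
    # Two neurons in one layer, each drawing its own straight line.
    either = linear_neuron([x1, x2], [1.0, 1.0], -0.5)  # fires if x1 OR x2
    both = linear_neuron([x1, x2], [1.0, 1.0], -1.5)    # fires if x1 AND x2
    # The output neuron combines them: "either, but not both".
    return linear_neuron([either, both], [1.0, -1.0], -0.5)

for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x1, x2, xor_network(x1, x2))
```

No single straight line separates the two XOR classes, but two lines combined in a second layer do.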

SCENE 43: Martha looks devious, gloating over a cloud of nodes floating between her hands. Octavius puts a tentacle on her shoulder and looks concerned. Martha: OOH! So with ENOUGH neurons, I could fit ANY dataset, no matter how complex?? Mwa-ha-ha... Octavius: Not so fast! TOO MANY neurons may lead to OVERFITTING!

SCENE 44: The dotted line extends slightly downward from left to right, as Flip starts slowly walking. Flip: ...that array of peaks and valleys we call an “error function” or “loss function”... ...can only be revealed—

SCENE 45: Martha, Bit, Octavius, and Flip chat in white space. Martha: SO MUCH FOR. So why DO we call them “HIDDEN LAYERS”? Bit: Hoo-boy. Good question. Octavius: Welll… We know what features go IN… We know what answers come OUT… Flip: … and we can even describe HOW hidden layers work…

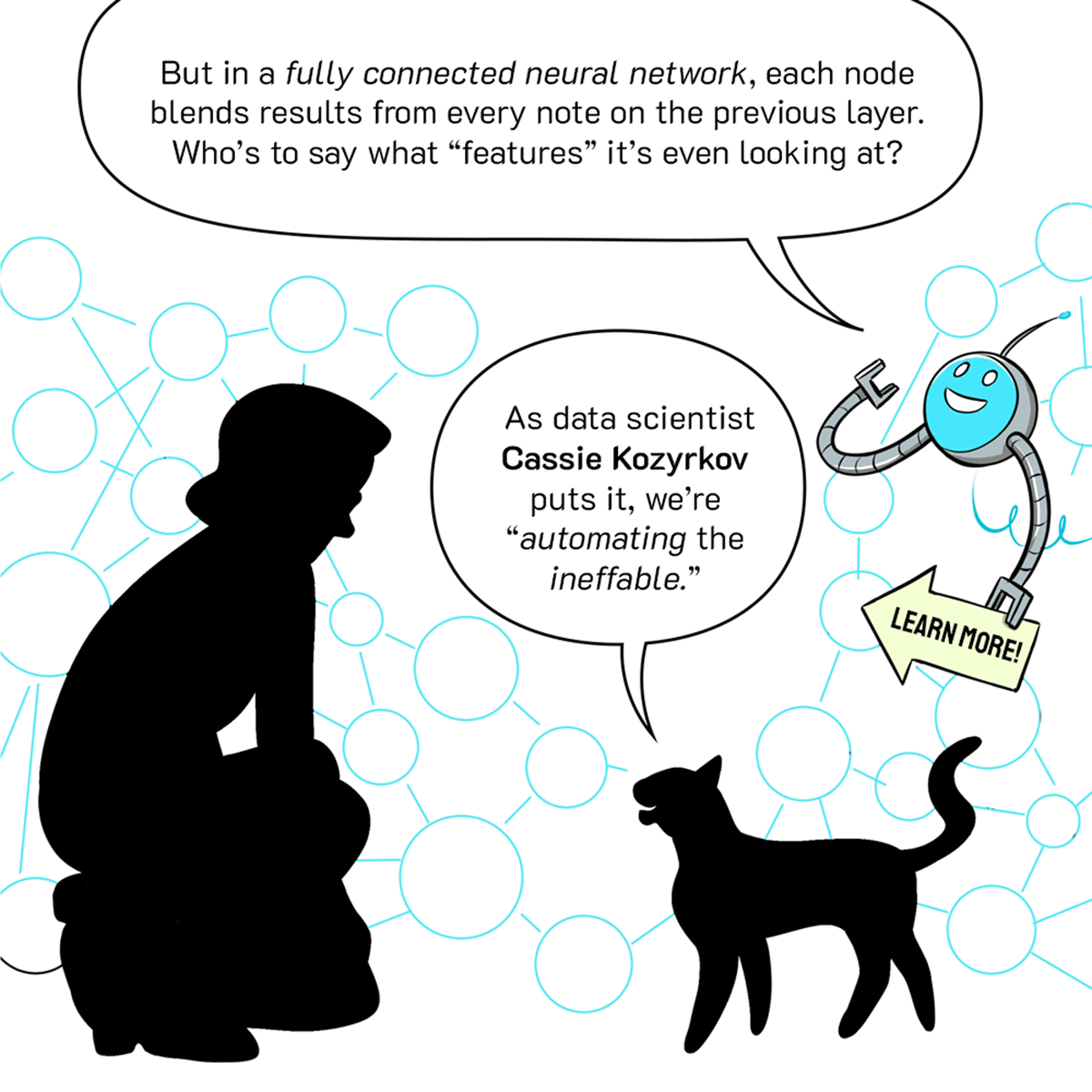

SCENE 46: Martha kneels to listen to Flip, silhouetted against a complex web of nodes. Bit holds a Learn More sign off to the right, pointing at Flip’s dialogue and linking to Cassie’s research. Bit: But in a FULLY CONNECTED NEURAL NETWORK, each node blends results from every node on the previous layer. Who’s to say what “features” it’s even looking at? Flip: As data scientist Cassie Kozyrkov puts it, we’re “AUTOMATING the INEFFABLE.”

SCENE 47: Octavius appears panel right, wearing a conductor’s hat next to a little mining cart. The crew make moves to hop in. Octavius: Now, a FULLY CONNECTED neural network is just one kind of architecture… Martha: Nice hat. Octavius: Thanks!

SCENE 48: Octavius sits in the mining cart in front of a transit map with various stops labelled according to their network architecture symbols. Octavius: Let’s ride to ANOTHER popular stop on the neural network map.

SCENE 49: The whole crew ride the cart through curving loops of track with different architecture icons floating around them. Martha is having a thrill ride, Octavius is blithely explaining as they go. Octavius: This whole field is in FLUX — forever “under construction.” Some of today’s most popular destinations were just PROTOTYPES ten years ago!

SCENE 50: The cart travels in three stages through a loop of track that resembles a clock face. Figures too small to make out. Octavius: RECURRENT NEURAL NETWORKS (such as LSTMs) loop back on themselves repeatedly — — addressing problems with a TEMPORAL ELEMENT — — such as SPEECH RECOGNITION. Caption / link: Learn more.

SCENE 51: The cart moves through a track interchange, seen from above. Multiple options converging and diverging. Octavius operates an interchange switch while explaining. Octavius: Others, like AUTO-ENCODERS, help make sense of unsupervised data — — reducing the DIMENSIONALITY of overflowing big data. Caption / link: Learn more.

SCENE 52: Our crew hurtle by in the foreground, with other tracks and carts seen in the distance. As they scoot by, Martha interrupts Octavius to ask about the passengers in other carts. He maintains a chipper, eyes-front demeanor. Octavius: The network we are heading for has been especially popular for analyzing — Martha: Hey, who are THEY? Octavius: Oh, that’s the G.A.N. line. None of those passengers exist! Don’t make eye contact.

SCENE 53: The cart pulls up to a platform with an arched doorway labelled “CNNs”. Everyone hops out to investigate. Octavius: Here we are. All out for CONVOLUTIONAL NEURAL NETWORKS! Martha: Aha, I’ve heard of THESE. Bit: They get a lotta press.

SCENE 54: The crew enter a gallery full of ornately framed artworks, but every canvas is a mess of 1s and 0s. Bit is enraptured by one of the canvases, Martha looks confused. Bit: Oh, I LOVE this one. She really captured the 11001101010, don’t you think? Martha: Uh… Octavius: Images are all just grids of numbers to a CNN!

SCENE 55: Martha holds up one of the canvases, peering through the numbers within. Octavius explains their contents. Bit holds up a microphone above a soundwave bottom right to demonstrate how CNNs can parse data that’s rendered visually. Martha: Well, isn’t that true of ANY neural network that handles images? Octavius: Sure! But CNNs offer a unique method for parsing and obtaining all that image-based data. Bit: (Including any data type that can be REPRESENTED as images.)

SCENE 56: The crew regard a 32x32 pixel greyscale photo of a cat. Octavius: Even a LOW RESOLUTION image like this contains a wealth of information. Every one of those 1024 PIXELS is a separate input — THREE, in fact, if you count the red, green, and blue channels. Flip: It’s uncle Rufus!

SCENE 57: Octavius explains the multiplicity of color images from panel left, above a set of layered inputs showing Red, Green, and Blue channels, with the corresponding number of total inputs (3072). A vast layer of inputs stretches off to his right. Martha recoils in horror. Octavius: Talk about a MULTI-DIMENSIONAL INPUT LAYER… And that’s not even high-res or video! Martha: AAAH! Getting FLASHBACKS here.
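
The input count in these two scenes is simple arithmetic, sketched below. The all-black placeholder image and the `flatten_image` helper are hypothetical, used only to show where the 3072 comes from.

```python
def flatten_image(image):
    """Turn rows of (red, green, blue) pixels into one flat list of inputs."""
    return [channel for row in image for pixel in row for channel in pixel]

width, height, channels = 32, 32, 3
# A hypothetical all-black image: every pixel is (0, 0, 0).
image = [[(0, 0, 0) for _ in range(width)] for _ in range(height)]
inputs = flatten_image(image)

print(width * height)  # 1024 pixels
print(len(inputs))     # 3072 inputs, one per pixel per color channel
```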

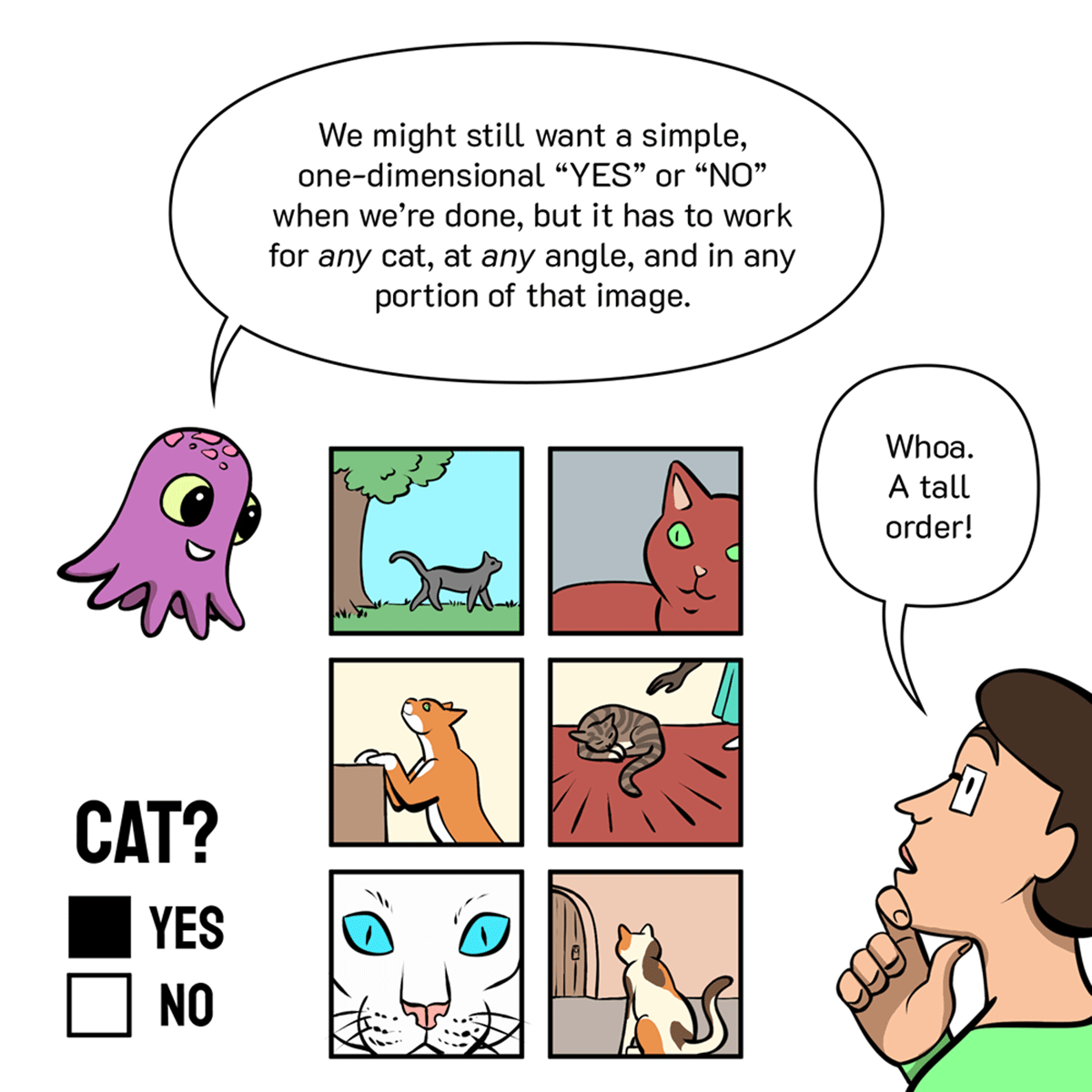

SCENE 58: Octavius continues explaining beside a series of six cat-related images showing cats close up, at different rotational positions, in multiple slots within a single image etc. A classification node bottom left shows Cat: YES. Octavius: We might still want a simple, one-dimensional “YES” or “NO” when we’re done, but it has to work for ANY cat, at ANY angle, and in any portion of that image. Martha: Whoah. A tall order!

SCENE 59: Bit appears in a matrix of pixels where each block is exploding outward into a separate unit panel right. Octavius: Indeed. And CNNs FILL that order, by BREAKING DOWN those huge matrices of pixel data into MANAGEABLE CHUNKS, layer by layer.

SCENE 60: Octavius tosses Martha a small matrix with handles (the filter). Octavius: Let’s start by building a map of CAT-RELATED FEATURES in our source image. CATCH! Martha: What’s this? Octavius: A FILTER. It starts out as a matrix of random weights, but the algorithm tunes it up over time.

SCENE 61: Tiny Martha and Octavius tiptoe across two animated matrices. The first shows the filter moving across the input data stride by stride, the second shows the resulting feature map being generated square by square. Flip pops up lower right to chime in. Octavius: A “CONVOLUTION” involves moving this filter across the entire image by a variable interval known as a “STRIDE.” Martha: Oh! It’s multiplying the SOURCE DATA by that matrix! Flip: The result is called a FEATURE MAP!
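
A bare-bones version of sliding a filter across an image could look like the sketch below. It assumes no padding and, as most CNN libraries do under the name "convolution", skips flipping the filter. The tiny image and the hand-written edge filter are made up for the example; in a real CNN the filter values would be learned.

```python
def convolve(image, kernel, stride=1):
    """Slide the kernel over the image and build a feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = (len(image) - kh) // stride + 1
    out_w = (len(image[0]) - kw) // stride + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            top, left = i * stride, j * stride
            # Multiply the patch under the filter element by element, then sum.
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[top + di][left + dj] * kernel[di][dj]
            row.append(total)
        feature_map.append(row)
    return feature_map

# Hypothetical 4x4 image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hypothetical 2x2 filter that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
print(convolve(image, vertical_edge, stride=1))  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
print(convolve(image, vertical_edge, stride=2))  # [[0, 0], [0, 0]]
```

With a stride of 1 the feature map lights up exactly where the edge sits. With a stride of 2 the filter happens to step right over it, which is one reason stride is a tuning choice.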

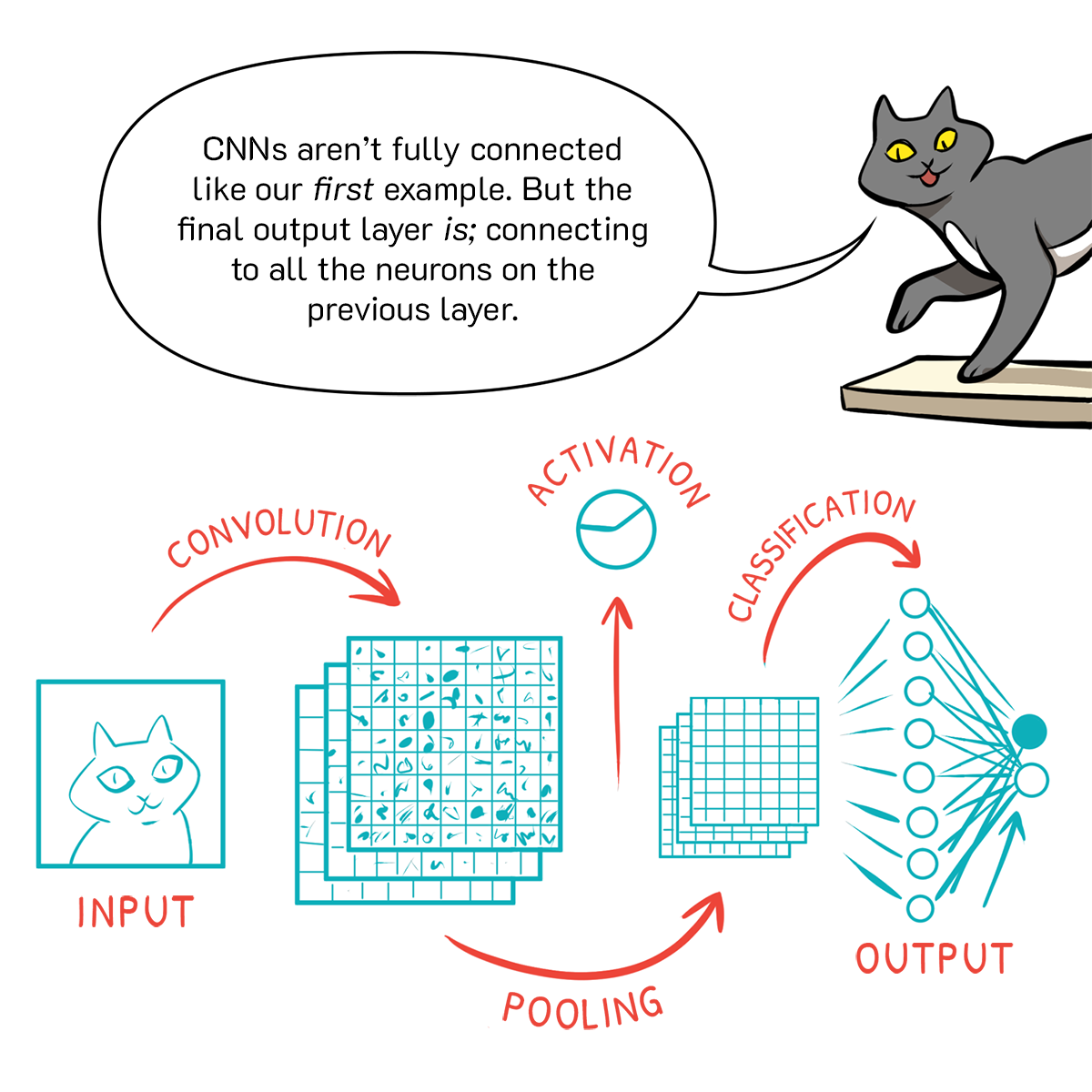

SCENE 62: Octavius, sporting a pool floaty and sunglasses, explains the walkthrough of CNNs. We see the input image, the multiple, simpler images generated through convolution, then an activation function, and finally the smaller images generated through pooling. Martha stands on top of them, surveying the scene. Octavius: These feature maps go through POOLING to further reduce their computational size. Martha: Ooh, So you can keep stacking them to hunt down more features!
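
Pooling comes in several flavors. The sketch below assumes max pooling over non-overlapping 2x2 windows, one common choice, which keeps only the strongest response in each window. The feature map values are invented for the example.

```python
def max_pool(feature_map, size=2):
    """Shrink a feature map by keeping the largest value in each window."""
    pooled = []
    for top in range(0, len(feature_map) - size + 1, size):
        row = []
        for left in range(0, len(feature_map[0]) - size + 1, size):
            window = [
                feature_map[top + di][left + dj]
                for di in range(size)
                for dj in range(size)
            ]
            row.append(max(window))
        pooled.append(row)
    return pooled

# Hypothetical 4x4 feature map.
feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 1, 5, 6],
    [2, 2, 7, 8],
]
print(max_pool(feature_map))  # [[4, 2], [2, 8]]
```

The 4x4 map shrinks to 2x2, so the next convolution has a quarter as many values to process.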

SCENE 63: Octavius floats over to four low-res filters showing vague edges (horizontal, vertical, slanted left and right). Bit chimes in from an inset panel below, his hand resting on a brain with goofy eyes. Octavius: In their earliest stages, filters may detect no more than EDGES and ORIENTATION…* Bit: *FUN FACT: your brain’s visual cortex initiates detection this way too… In fact, it was an early inspiration for CNNs. Caption: Learn more.

SCENE 64: Three layers of features are shown in grids at left: rough edges, clearer elements, and complete characters or figures—including a tiny Octavius! Real Octavius explains from panel right. Octavius: But with every subsequent layer, COMPOSITE features start to emerge from the noise. See? It ME!

SCENE 65: Martha and Octavius peer from above at a glowing filter. Martha: So, it goes from LINES and ANGLES, through WHISKERS, PAWS, and FUR, and then finally to “CATS” or “DOGS?” Octavius: Well… *heh* YES, NO, and YES! We may never know exactly what features it sees in the MIDDLE — — but whatever they are, it can FIND ‘EM no matter where they’re hiding!

SCENE 66: Back to our exploded view of CNN stages, but now there’s an added Classification layer at the end, showing a full line of nodes with interconnected lines moving to a binary output. Flip pops in panel right to explain. Flip: CNNs aren’t FULLY connected like our FIRST example. But the final output layer IS, connecting to all the neurons on the previous layer.

SCENE 67: Martha, Octavius, Bit, and Flip stand in white space. They each have boxes around them corresponding to the network’s predictions. Martha is 98% Human, Octavius 94% Octopus, Flip 89% Cat, but Bit is 60% Basketball. Octavius: This CLASSIFICATION LAYER delivers the accurate answers we all— Bit: Uh… Guys? Labels: 98% Human 99% Octopus 60% Basketball 89% Cat

SCENE 68: Martha leans over in concern. Everyone’s boxes but Bit’s have vanished. Octavius floats above looking concerned. Bit mopes. Martha: Uh-oh. What’s this? Were there no FLYING ROBOTS in the training data? Octavius: Well, there SHOULD’VE been! Label: 60% Basketball

SCENE 69: Flip and Martha investigate training and testing data spread out on the floor. Bit looks resolute and rebellious in the background. Flip: NOPE! Only the walking kind. Classic SELECTION BIAS. Martha: Well, that’s not fair! Expecting all robots to walk… Bit: YOU CAN’T TAKE THE SKY FROM ME! Label: 60% Basketball

SCENE 70: Bit and Flip head off-panel left. Martha moves to stand up. Martha: Guess I always thought of data as being UNBIASED. Just “numbers” and all… Octavius: Datasets are products of the time and place they’re assembled. Label: 60% Basketball

SCENE 71: Octavius holds a variety of sock puppets on his tentacles. They each sport speech balloons containing different symbols. Octavius: Even if you proactively try to create “neutral” data, the people doing the compiling may have THEIR OWN implicit biases.

SCENE 72: Martha slumps down among the data images. Flip and Bit look dejected. Octavius flips through some more images at panel right. Martha: This is more complicated than I thought. Octavius: Hmmm… We might be dealing with something called LATENT BIAS too… Take a look at all these basketball photos and see what they all have in common… Label: 60% Basketball

SCENE 73: Octavius shows Martha a spread of photos. They all show basketball players’ arms and hands holding basketballs. Octavius: See anything FAMILIAR? Martha: I dunno, just looks like a bunch of arms holding—

SCENE 74: Martha looks back at Bit in his Basketball Box and has a realization. Martha: OOOHHH. Label: 60% Basketball

SCENE 75: Martha and Octavius discuss. Octavius: ENGINEERS and ETHICISTS are still coming to grips with how neural networks see the world— —and the roles we see FOR them in the coming years.

SCENE 76: Martha, Octavius, Flip, and Bit stand on a mirrored surface, looking down at flipped versions of themselves. Octavius: By simply turning a CNN upside down, researchers took a peerless image classifier… …and gave birth to GENERATIVE ADVERSARIAL NETWORKS or G.A.N.s… … Which can generate beautiful surreal images, but also those notorious “deep fakes.” Caption: Learn More.

SCENE 77: Martha looks determined, Octavius encouraging. Flip and Bit chime in from below. Martha: Yeah, I want to be part of the solution, not part of the problem… I just hope I can tell the DIFFERENCE when the time comes. Octavius: Hey, just learning how it all WORKS is a great place to START. Flip and Bit (off-panel): Hear! Hear!

SCENE 78: Martha beams at the sky, optimistic, but she’s cut off by her boss, Mel. Octavius looks indignant. Martha: Well, look out world! Because I’m 99% certain I can — Mel (off-panel): HEY, MARTHA!

SCENE 79: Mel looms over the edge of their fantasy explain-o-land, cutting off Martha’s attempts to reply to his questions. The whole crew look agitated. Flip hisses. Mel: Ooh! Where am I? What’s that? Who are THESE guys? Martha: Uh, This is — Mel: Actually, I DON’T CARE!

SCENE 80: Martha slides down the wall next to Bit and Octavius. All look dejected as Mel prattles on. Mel: I need you on deck in, like, TEN MINUTES to present to the shareholders. Octavius: Is he always like this? Martha: Yep.

SCENE 81: Mel disappears with a wave. Martha starts to pick herself up as the team rally around her. Mel: KTHX BYEEE! Octavius: Oh dear, that was a lot of info we just… Flip: I’ll go sabotage the meeting room to buy some time.

SCENE 82: Octavius stares into the middle distance in panic, but Martha holds up a hand to stop him. Octavius: I knew I should’ve gone slower! They’re going to laugh me out of the Cephalopod Teaching Academy! Martha: NO.

SCENE 83: Close on Martha, determined and excited. Martha: I’VE GOT THIS.

SCENE 84: Martha presents to a room full of execs, gesturing to a whiteboard with NNs and other elements visible. The audience is rapt. We close with a call to action for readers to learn more.

Now it's your turn! Continue your journey into the world of machine learning with Google's ML crash course, or explore Google Cloud AI!

- Story, Design, and Layout by Lucy Bellwood, Dylan Meconis, Scott McCloud

- Line Art by Leila del Duca

- Color by Jenn Manley Lee

- Japanese localization by Kaz Sato, Mariko Ogawa

- Produced by the Google Comics Factory (Allen Tsai, Alison Lentz, Michael Richardson)