Introduction to optimizing query performance

This document provides an overview of optimization techniques that can improve query performance in BigQuery. In general, queries that do less work perform better. They run faster and consume fewer resources, which can result in lower costs and fewer failures.

Query performance

Evaluating query performance in BigQuery involves several factors:

- Input data and data sources (I/O): How many bytes does your query read?

- Communication between nodes (shuffling): How many bytes does your query pass to the next stage? How many bytes does your query pass to each slot?

- Computation: How much CPU work does your query require?

- Outputs (materialization): How many bytes does your query write?

- Capacity and concurrency: How many slots are available and how many other queries are running at the same time?

- Query patterns: Are your queries following SQL best practices?

To evaluate specific queries or whether you are experiencing resource contention, you can use Cloud Monitoring or the BigQuery administrative resource charts to monitor how your BigQuery jobs consume resources over time. If you identify a slow or resource-intensive query, you can focus your performance optimizations on that query.

Some query patterns, especially those generated by business intelligence tools, can be accelerated by using BigQuery BI Engine. BI Engine is a fast, in-memory analysis service that accelerates many SQL queries in BigQuery by intelligently caching the data you use most frequently. BI Engine is built into BigQuery, which means you can often get better performance without any query modifications.

As with any systems, optimizing for performance sometimes involves tradeoffs. For example, using advanced SQL syntax can sometimes introduce complexity and reduce the queries' understandability for people who aren't SQL experts. Spending time on micro-optimizations for noncritical workloads could also divert resources away from building new features for your applications or from identifying more important optimizations. To help you achieve the highest possible return on investment, we recommend that you focus your optimizations on the workloads that matter most to your data analytics pipelines.

Optimization for capacity and concurrency

BigQuery offers two pricing models for queries: on-demand pricing and capacity-based pricing. The on-demand model provides a shared pool of capacity, and pricing is based on the amount of data that is processed by each query you run.

The capacity-based model is recommended if you want to budget a consistent, monthly expenditure or if you need more capacity than is available with the on-demand model. When you use capacity-based pricing, you allocate dedicated query processing capacity that is measured in slots. The cost of all bytes processed is included in the capacity-based price. In addition to fixed slot commitments, you can use autoscaling slots, which provide dynamic capacity based on your query workload.

The performance of queries that are run repeatedly on the same data can vary, and the variation is generally larger for queries using on-demand slots than it is for queries using slot reservations.

During SQL query processing, BigQuery breaks down the computational capacity required to execute each stage of a query into slots. BigQuery automatically determines the number of queries that can run concurrently as follows:

- On-demand model: number of slots available in the project

- Capacity-based model: number of slots available in the reservation

Queries that require more slots than are available are queued until processing resources become available. After a query begins execution, BigQuery calculates how many slots each query stage uses based on the stage size and complexity and the number of slots available. BigQuery uses a technique called fair scheduling to ensure that each query has enough capacity to progress.

Access to more slots doesn't always result in faster performance for a query. However, a larger pool of slots can improve the performance of large or complex queries, and the performance of highly concurrent workloads. To improve query performance, you can modify your slot reservations or set a higher limit for slots autoscaling.

Query plan and timeline

BigQuery generates a query plan each time that you run a query. Understanding this plan is critical for effective query optimization. The query plan includes execution statistics such as bytes read and slot time consumed. The query plan also includes details about the different stages of execution, which can help you diagnose and improve query performance. The query execution graph provides a graphical interface for viewing the query plan and diagnosing query performance issues.

You can also use the jobs.get API method

or the INFORMATION_SCHEMA.JOBS view

to retrieve the query plan and timeline information. This information is used by

BigQuery Visualiser,

an open source tool that visually represents the flow of execution stages in a

BigQuery job.

When BigQuery executes a query job, it converts the declarative SQL statement into a graph of execution. This graph is broken up into a series of query stages, which themselves are composed of more granular sets of execution steps. BigQuery uses a heavily distributed parallel architecture to run these queries. The BigQuery stages model the units of work that many potential workers might execute in parallel. Stages communicate with one another through a fast, distributed shuffle architecture.

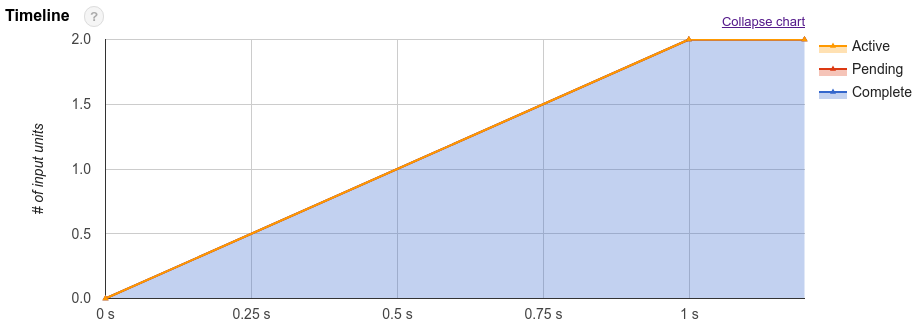

In addition to the query plan, query jobs also expose a timeline of execution. This timeline provides an accounting of units of work completed, pending, and active within query workers. A query might have multiple stages with active workers simultaneously, so the timeline is intended to show overall progress of the query.

To estimate how computationally expensive a query is, you can look at the total number of slot seconds the query consumes. The lower the number of slot seconds, the better, because it means more resources are available to other queries running in the same project at the same time.

The query plan and timeline statistics can help you understand how

BigQuery executes queries and if certain stages dominate resource

utilization. For example, a JOIN stage that generates far more output rows than

input rows might indicate an opportunity to filter earlier in the query.

However, the managed nature of the service limits whether some details are

directly actionable. For best practices and techniques to improve query

execution and performance, see

Optimize query computation.

What's next

- Learn how to troubleshoot query execution issues using the BigQuery audit logs.

- Learn other cost-controlling techniques for BigQuery.

- View near real-time metadata about BigQuery jobs using

the

INFORMATION_SHEMA.JOBSview. - Learn how to monitor your BigQuery usage using the BigQuery System Tables Reports.