Use tuning and evaluation to improve model performance

This document shows you how to create a BigQuery ML remote model that references a Vertex AI gemini-1.5-flash-002 model. You then use supervised tuning to tune the model with new training data, followed by evaluating the model with the ML.EVALUATE function.

Tuning can help you address scenarios where you need to customize the hosted Vertex AI model, such as when the expected behavior of the model is hard to concisely define in a prompt, or when prompts don't produce expected results consistently enough. Supervised tuning also influences the model in the following ways:

- Guides the model to return specific response styles, for example, being more concise or more verbose.

- Teaches the model new behaviors, for example, responding to prompts as a specific persona.

- Causes the model to update itself with new information.

In this tutorial, the goal is to have the model generate text whose style and content conform as closely as possible to the provided ground truth content.

Required permissions

To create a connection, you need the following Identity and Access Management (IAM) role:

roles/bigquery.connectionAdmin

To grant permissions to the connection's service account, you need the following permission:

resourcemanager.projects.setIamPolicy

To create the model using BigQuery ML, you need the following IAM permissions:

- bigquery.jobs.create
- bigquery.models.create
- bigquery.models.getData
- bigquery.models.updateData
- bigquery.models.updateMetadata

To run inference, you need the following permissions:

- bigquery.tables.getData on the table
- bigquery.models.getData on the model
- bigquery.jobs.create

Before you begin

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

- Make sure that billing is enabled for your Google Cloud project.

- Enable the BigQuery, BigQuery Connection, Vertex AI, and Compute Engine APIs.

Costs

In this document, you use the following billable components of Google Cloud:

- BigQuery: You incur costs for the queries that you run in BigQuery.

- BigQuery ML: You incur costs for the model that you create and the processing that you perform in BigQuery ML.

- Vertex AI: You incur costs for calls to the gemini-1.5-flash-002 model and for supervised tuning of that model.

To generate a cost estimate based on your projected usage, use the pricing calculator.


Create a dataset

Create a BigQuery dataset to store your ML model.

Console

In the Google Cloud console, go to the BigQuery page.

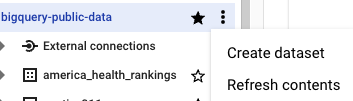

In the Explorer pane, click your project name.

Click View actions > Create dataset.

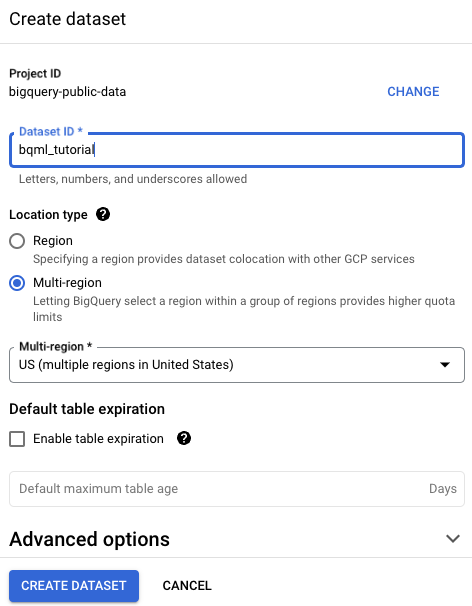

On the Create dataset page, do the following:

- For Dataset ID, enter bqml_tutorial.

- For Location type, select Multi-region, and then select US (multiple regions in United States).

  The public datasets are stored in the US multi-region. For simplicity, store your dataset in the same location.

- Leave the remaining default settings as they are, and click Create dataset.

bq

To create a new dataset, use the bq mk command with the --location flag. For a full list of possible parameters, see the bq mk --dataset command reference.

Create a dataset named bqml_tutorial with the data location set to US and a description of BigQuery ML tutorial dataset:

bq --location=US mk -d \
    --description "BigQuery ML tutorial dataset." \
    bqml_tutorial

Instead of using the --dataset flag, the command uses the -d shortcut. If you omit -d and --dataset, the command defaults to creating a dataset.

Confirm that the dataset was created:

bq ls

API

Call the datasets.insert method with a defined dataset resource.

{ "datasetReference": { "datasetId": "bqml_tutorial" } }
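The body above sets only the dataset ID. The Console and bq steps also place the dataset in the US multi-region and give it a description. To match them, the dataset resource can carry the location and description fields as well. The following Python sketch builds the REST URL and JSON body for that call without sending anything. `build_dataset_insert_request` is a hypothetical helper, not part of any client library, and `my-project` is a placeholder project ID.

```python
import json
from typing import Optional, Tuple


def build_dataset_insert_request(project_id: str, dataset_id: str,
                                 location: str = "US",
                                 description: Optional[str] = None) -> Tuple[str, str]:
    """Return the (URL, JSON body) for a datasets.insert call; nothing is sent."""
    url = f"https://bigquery.googleapis.com/bigquery/v2/projects/{project_id}/datasets"
    body = {
        "datasetReference": {"projectId": project_id, "datasetId": dataset_id},
        "location": location,  # same multi-region as the public datasets
    }
    if description is not None:
        body["description"] = description
    return url, json.dumps(body)


url, body = build_dataset_insert_request(
    "my-project", "bqml_tutorial", description="BigQuery ML tutorial dataset.")
print("POST", url)
print(body)
```

You would still need to authenticate the POST request yourself, for example with the credentials described in the Terraform section.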

Create a connection

Create a Cloud resource connection and get the connection's service account ID. Create the connection in the same location as the dataset that you created in the previous step.

Select one of the following options:

Console

Go to the BigQuery page.

To create a connection, click Add, and then click Connections to external data sources.

In the Connection type list, select Vertex AI remote models, remote functions and BigLake (Cloud Resource).

In the Connection ID field, enter a name for your connection.

Click Create connection.

Click Go to connection.

In the Connection info pane, copy the service account ID for use in a later step.

bq

In a command-line environment, create a connection:

bq mk --connection --location=REGION --project_id=PROJECT_ID \
    --connection_type=CLOUD_RESOURCE CONNECTION_ID

The --project_id parameter overrides the default project.

Replace the following:

- REGION: your connection region
- PROJECT_ID: your Google Cloud project ID
- CONNECTION_ID: an ID for your connection

When you create a connection resource, BigQuery creates a unique system service account and associates it with the connection.

Troubleshooting: If you get the following connection error, update the Google Cloud SDK:

Flags parsing error: flag --connection_type=CLOUD_RESOURCE: value should be one of...

Retrieve and copy the service account ID for use in a later step:

bq show --connection PROJECT_ID.REGION.CONNECTION_ID

The output is similar to the following:

name                       properties
1234.REGION.CONNECTION_ID  {"serviceAccountId": "connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com"}
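When you grant access with gcloud later in this tutorial, the service account ID must be prefixed with `serviceAccount:` in the --member flag. The following Python sketch assumes you copied the JSON text from the properties column shown above. It extracts serviceAccountId and builds that member string. The exact layout of the bq show output can differ between bq versions, so treat the parsing as an assumption. `service_account_member` is a hypothetical helper name.

```python
import json

# JSON copied from the `properties` column of the sample bq show output above.
properties = ('{"serviceAccountId": '
              '"connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com"}')


def service_account_member(properties_json: str) -> str:
    """Return the value for gcloud's --member flag from the properties JSON."""
    service_account_id = json.loads(properties_json)["serviceAccountId"]
    return f"serviceAccount:{service_account_id}"


print(service_account_member(properties))
# serviceAccount:connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com
```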

Terraform

Use the google_bigquery_connection resource.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

The following example creates a Cloud resource connection named

my_cloud_resource_connection in the US region:

To apply your Terraform configuration in a Google Cloud project, complete the steps in the following sections.

Prepare Cloud Shell

- Launch Cloud Shell.

- Set the default Google Cloud project where you want to apply your Terraform configurations.

  You only need to run this command once per project, and you can run it in any directory.

  export GOOGLE_CLOUD_PROJECT=PROJECT_ID

  Environment variables are overridden if you set explicit values in the Terraform configuration file.

Prepare the directory

Each Terraform configuration file must have its own directory (also called a root module).

- In Cloud Shell, create a directory and a new file within that directory. The filename must have the .tf extension, for example main.tf. In this tutorial, the file is referred to as main.tf.

  mkdir DIRECTORY && cd DIRECTORY && touch main.tf

- If you are following a tutorial, you can copy the sample code in each section or step.

  Copy the sample code into the newly created main.tf.

  Optionally, copy the code from GitHub. This is recommended when the Terraform snippet is part of an end-to-end solution.

- Review and modify the sample parameters to apply to your environment.

- Save your changes.

- Initialize Terraform. You only need to do this once per directory.

  terraform init

  Optionally, to use the latest Google provider version, include the -upgrade option:

  terraform init -upgrade

Apply the changes

- Review the configuration and verify that the resources that Terraform is going to create or update match your expectations:

  terraform plan

  Make corrections to the configuration as necessary.

- Apply the Terraform configuration by running the following command and entering yes at the prompt:

  terraform apply

  Wait until Terraform displays the "Apply complete!" message.

- Open your Google Cloud project to view the results. In the Google Cloud console, navigate to your resources in the UI to make sure that Terraform has created or updated them.

Give the connection's service account access

Grant your service account the Vertex AI Service Agent role so that the service account can access Vertex AI. Failure to grant this role results in an error. Select one of the following options:

Console

Go to the IAM & Admin page.

Click Grant access.

The Add principals dialog opens.

In the New principals field, enter the service account ID that you copied earlier.

Click Select a role.

In Filter, type Vertex AI Service Agent and then select that role.

Click Save.

gcloud

Use the gcloud projects add-iam-policy-binding command:

gcloud projects add-iam-policy-binding 'PROJECT_NUMBER' --member='serviceAccount:MEMBER' --role='roles/aiplatform.serviceAgent' --condition=None

Replace the following:

- PROJECT_NUMBER: your project number
- MEMBER: the service account ID that you copied earlier

The service account associated with your connection is an instance of the BigQuery Connection Delegation Service Agent, so it is acceptable to assign a service agent role to it.

Create test tables

Create tables of training and evaluation data based on the public task955_wiki_auto_style_transfer dataset from Hugging Face.

Open the Cloud Shell.

In the Cloud Shell, run the following commands to create tables of training and evaluation data:

python3 -m pip install pandas pyarrow fsspec huggingface_hub
python3 -c "import pandas as pd; df_train = pd.read_parquet('hf://datasets/Lots-of-LoRAs/task955_wiki_auto_style_transfer/data/train-00000-of-00001.parquet').drop('id', axis=1); df_train['output'] = [x[0] for x in df_train['output']]; df_train.to_json('wiki_auto_style_transfer_train.jsonl', orient='records', lines=True);"
python3 -c "import pandas as pd; df_valid = pd.read_parquet('hf://datasets/Lots-of-LoRAs/task955_wiki_auto_style_transfer/data/valid-00000-of-00001.parquet').drop('id', axis=1); df_valid['output'] = [x[0] for x in df_valid['output']]; df_valid.to_json('wiki_auto_style_transfer_valid.jsonl', orient='records', lines=True);"
bq rm -f -t bqml_tutorial.wiki_auto_style_transfer_train
bq rm -f -t bqml_tutorial.wiki_auto_style_transfer_valid
bq load --source_format=NEWLINE_DELIMITED_JSON bqml_tutorial.wiki_auto_style_transfer_train wiki_auto_style_transfer_train.jsonl input:STRING,output:STRING
bq load --source_format=NEWLINE_DELIMITED_JSON bqml_tutorial.wiki_auto_style_transfer_valid wiki_auto_style_transfer_valid.jsonl input:STRING,output:STRING

The bq rm commands delete tables left over from a previous run. The -f flag skips the confirmation prompt. On a first run, these commands report that the tables are not found, and you can ignore that error.
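The python3 -c one-liners above are dense. The following Python sketch shows the same per-row transformation without pandas. It assumes, as the commands imply, that each record has an id field, an input field, and a list-valued output field. The sketch drops id and keeps only the first element of output, which gives each JSONL line the shape that bq load reads with the input:STRING,output:STRING schema. The `to_jsonl` name and the sample record are made up for illustration; the real rows come from the Hugging Face dataset.

```python
import json
from typing import Any, Dict, Iterable


def to_jsonl(records: Iterable[Dict[str, Any]]) -> str:
    """Drop `id`, keep the first `output` element, and emit one JSON object per line."""
    lines = []
    for record in records:
        row = {key: value for key, value in record.items() if key != "id"}
        row["output"] = row["output"][0]  # keep only the first reference rewrite
        lines.append(json.dumps(row) + "\n")
    return "".join(lines)


# Made-up record for illustration only.
sample = [{"id": "example-1", "input": "Original sentence.",
           "output": ["Rewritten sentence.", "Another rewrite."]}]
print(to_jsonl(sample), end="")
# {"input": "Original sentence.", "output": "Rewritten sentence."}
```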

Create a baseline model

Create a remote model over the Vertex AI gemini-1.5-flash-002 model.

In the Google Cloud console, go to the BigQuery page.

In the query editor, run the following statement to create a remote model:

CREATE OR REPLACE MODEL `bqml_tutorial.gemini_baseline`
  REMOTE WITH CONNECTION `LOCATION.CONNECTION_ID`
  OPTIONS (ENDPOINT = 'gemini-1.5-flash-002');

Replace the following:

- LOCATION: the connection location.
- CONNECTION_ID: the ID of your BigQuery connection.

  When you view the connection details in the Google Cloud console, the CONNECTION_ID is the value in the last section of the fully qualified connection ID that is shown in Connection ID. For example, in projects/myproject/locations/connection_location/connections/myconnection, the CONNECTION_ID is myconnection.
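If you copy the fully qualified connection ID from the console, you can derive the `LOCATION.CONNECTION_ID` value for the REMOTE WITH CONNECTION clause from it. The following Python sketch assumes the ID always has the projects/.../locations/.../connections/... shape shown above, and it raises an error otherwise. `connection_reference` is a hypothetical helper name.

```python
import re

# Assumed shape of a fully qualified connection ID, as shown in the console.
_CONNECTION_RE = re.compile(
    r"^projects/(?P<project>[^/]+)/locations/(?P<location>[^/]+)"
    r"/connections/(?P<connection>[^/]+)$"
)


def connection_reference(full_id: str) -> str:
    """Return the `LOCATION.CONNECTION_ID` string used in REMOTE WITH CONNECTION."""
    match = _CONNECTION_RE.match(full_id)
    if match is None:
        raise ValueError(f"unexpected connection ID format: {full_id!r}")
    return f"{match['location']}.{match['connection']}"


print(connection_reference(
    "projects/myproject/locations/connection_location/connections/myconnection"))
# connection_location.myconnection
```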

The query takes several seconds to complete, after which the gemini_baseline model appears in the bqml_tutorial dataset in the Explorer pane. Because the query uses a CREATE MODEL statement to create a model, there are no query results.

Check baseline model performance

Run the ML.GENERATE_TEXT function with the remote model to see how it performs on the evaluation data without any tuning.

In the Google Cloud console, go to the BigQuery page.

In the query editor, run the following statement:

SELECT ml_generate_text_llm_result, ground_truth
FROM ML.GENERATE_TEXT(
  MODEL `bqml_tutorial.gemini_baseline`,
  (
    SELECT input AS prompt, output AS ground_truth
    FROM `bqml_tutorial.wiki_auto_style_transfer_valid`
    LIMIT 10
  ),
  STRUCT(TRUE AS flatten_json_output));

If you examine the output data and compare the ml_generate_text_llm_result and ground_truth values, you see that while the baseline model generates text that accurately reflects the facts provided in the ground truth content, the style of the text is fairly different.

Evaluate the baseline model

To perform a more detailed evaluation of the model performance, use the ML.EVALUATE function. This function computes model metrics that measure the accuracy and quality of the generated text, so that you can see how the model's responses compare to ideal responses.
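To show what the ROUGE-L columns in the ML.EVALUATE output measure, the following Python sketch computes ROUGE-L for one generated text and one reference. It finds their longest common subsequence (LCS) of tokens. Precision is the LCS length divided by the generated length, recall is the LCS length divided by the reference length, and F1 is their harmonic mean. This is an illustration only. The lowercased whitespace tokenization is an assumption, and this document doesn't state how ML.EVALUATE tokenizes text or aggregates scores across rows, so the numbers won't necessarily match.

```python
from typing import List, Tuple


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            # Extend the diagonal on a match, otherwise carry the best so far.
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate: str, reference: str) -> Tuple[float, float, float]:
    """Return ROUGE-L (precision, recall, F1) for one candidate/reference pair.

    Assumption: lowercased whitespace tokenization.
    """
    cand, ref = candidate.lower().split(), reference.lower().split()
    if not cand or not ref:
        return 0.0, 0.0, 0.0
    lcs = lcs_length(cand, ref)
    precision = lcs / len(cand)  # share of generated tokens found, in order, in the reference
    recall = lcs / len(ref)      # share of reference tokens recovered, in order
    f1 = 0.0 if lcs == 0 else 2 * precision * recall / (precision + recall)
    return precision, recall, f1


print(rouge_l("The cat sat on the mat", "The cat is on the mat"))
```

For this pair, the longest common subsequence is "the cat on the mat", which is 5 of 6 tokens on each side. Precision, recall, and F1 are therefore all 5/6.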

In the Google Cloud console, go to the BigQuery page.

In the query editor, run the following statement:

SELECT *
FROM ML.EVALUATE(
  MODEL `bqml_tutorial.gemini_baseline`,
  (
    SELECT input AS input_text, output AS output_text
    FROM `bqml_tutorial.wiki_auto_style_transfer_valid`
  ),
  STRUCT('text_generation' AS task_type));

The output looks similar to the following:

+---------------------+---------------------+---------------------+---------------------+-------------------------------+
| bleu4_score         | rouge-l_precision   | rouge-l_recall      | rouge-l_f1_score    | evaluation_status             |
+---------------------+---------------------+---------------------+---------------------+-------------------------------+
| 0.15289758194680161 | 0.24925921915413246 | 0.44622484204944518 | 0.30851122211104348 | {                             |
|                     |                     |                     |                     |   "num_successful_rows": 176, |
|                     |                     |                     |                     |   "num_total_rows": 176       |
|                     |                     |                     |                     | }                             |
+---------------------+---------------------+---------------------+---------------------+-------------------------------+

You can see that the baseline model performance is reasonable, but the evaluation metrics show that the similarity of the generated text to the ground truth is low. This suggests that it is worth trying supervised tuning to improve model performance for this use case.
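The bleu4_score column is based on n-gram overlap rather than a single longest subsequence. The following Python sketch shows the textbook sentence-level BLEU-4: clipped 1- to 4-gram precisions combined by a geometric mean with equal weights, times a brevity penalty for candidates shorter than the reference. It uses lowercased whitespace tokens, one reference, and no smoothing. All of these are assumptions, because this document doesn't state which BLEU variant ML.EVALUATE implements.

```python
import math
from collections import Counter
from typing import List


def ngram_counts(tokens: List[str], n: int) -> Counter:
    """Count the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu4(candidate: str, reference: str) -> float:
    """Unsmoothed sentence-level BLEU-4 against a single reference.

    Assumptions: lowercased whitespace tokens, uniform 1/4 weights, no smoothing.
    """
    cand, ref = candidate.lower().split(), reference.lower().split()
    if not cand:
        return 0.0
    log_precision_sum = 0.0
    for n in range(1, 5):
        cand_ngrams = ngram_counts(cand, n)
        total = sum(cand_ngrams.values())
        # Clip each candidate n-gram count at its count in the reference.
        clipped = sum((cand_ngrams & ngram_counts(ref, n)).values())
        if total == 0 or clipped == 0:
            return 0.0  # without smoothing, any zero precision zeroes the score
        log_precision_sum += 0.25 * math.log(clipped / total)
    # Brevity penalty: candidates shorter than the reference are penalized.
    brevity_penalty = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return brevity_penalty * math.exp(log_precision_sum)


print(bleu4("The cat sat on the mat", "The cat is on the mat"))  # prints 0.0: no shared 4-gram
```

Because BLEU-4 requires matching 4-grams, it penalizes differences in phrasing more strongly than ROUGE-L does. This is one reason why the scores in the evaluation output are low even when the facts agree.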

Create a tuned model

Create a remote model similar to the one that you created in Create a baseline model, but this time specify the AS SELECT clause to provide the training data that is used to tune the model.
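If you script this tutorial, note that the tuned statement differs from the baseline statement only in its tuning options and the AS SELECT clause. The following Python sketch uses a hypothetical helper, `create_remote_model_sql`, to render both statements as strings. It does not run them. The helper hardcodes the input and output column names of the tutorial tables and does no SQL escaping, so pass only trusted identifiers.

```python
from typing import Optional


def create_remote_model_sql(model: str, connection: str,
                            endpoint: str = "gemini-1.5-flash-002",
                            training_table: Optional[str] = None,
                            max_iterations: int = 500) -> str:
    """Render a CREATE MODEL statement for a remote Gemini model.

    Tuning options and the AS SELECT clause are added only when a
    training table is given. No escaping: pass trusted identifiers only.
    """
    options = [f"endpoint = '{endpoint}'"]
    training_query = ""
    if training_table is not None:
        options += [f"max_iterations = {max_iterations}",
                    "data_split_method = 'no_split'"]
        # Column names follow the tutorial's training table (input, output).
        training_query = ("\nAS SELECT input AS prompt, output AS label"
                          f"\nFROM `{training_table}`")
    return (f"CREATE OR REPLACE MODEL `{model}`\n"
            f"REMOTE WITH CONNECTION `{connection}`\n"
            f"OPTIONS ({', '.join(options)})"
            + training_query + ";")


print(create_remote_model_sql("bqml_tutorial.gemini_baseline", "LOCATION.CONNECTION_ID"))
print(create_remote_model_sql("bqml_tutorial.gemini_tuned", "LOCATION.CONNECTION_ID",
                              training_table="bqml_tutorial.wiki_auto_style_transfer_train"))
```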

In the Google Cloud console, go to the BigQuery page.

In the query editor, run the following statement to create a remote model:

CREATE OR REPLACE MODEL `bqml_tutorial.gemini_tuned`
  REMOTE WITH CONNECTION `LOCATION.CONNECTION_ID`
  OPTIONS (
    endpoint = 'gemini-1.5-flash-002',
    max_iterations = 500,
    data_split_method = 'no_split')
AS
SELECT input AS prompt, output AS label
FROM `bqml_tutorial.wiki_auto_style_transfer_train`;

Replace the following:

- LOCATION: the connection location.
- CONNECTION_ID: the ID of your BigQuery connection.

  When you view the connection details in the Google Cloud console, the CONNECTION_ID is the value in the last section of the fully qualified connection ID that is shown in Connection ID. For example, in projects/myproject/locations/connection_location/connections/myconnection, the CONNECTION_ID is myconnection.

The query takes a few minutes to complete, after which the gemini_tuned model appears in the bqml_tutorial dataset in the Explorer pane. Because the query uses a CREATE MODEL statement to create a model, there are no query results.

Check tuned model performance

Run the ML.GENERATE_TEXT function to see how the tuned model performs on the evaluation data.

In the Google Cloud console, go to the BigQuery page.

In the query editor, run the following statement:

SELECT ml_generate_text_llm_result, ground_truth
FROM ML.GENERATE_TEXT(
  MODEL `bqml_tutorial.gemini_tuned`,
  (
    SELECT input AS prompt, output AS ground_truth
    FROM `bqml_tutorial.wiki_auto_style_transfer_valid`
    LIMIT 10
  ),
  STRUCT(TRUE AS flatten_json_output));

If you examine the output data, you see that the tuned model produces text that is much more similar in style to the ground truth content.

Evaluate the tuned model

Use the ML.EVALUATE function to see how the tuned model's responses compare to ideal responses.

In the Google Cloud console, go to the BigQuery page.

In the query editor, run the following statement:

SELECT *
FROM ML.EVALUATE(
  MODEL `bqml_tutorial.gemini_tuned`,
  (
    SELECT input AS prompt, output AS label
    FROM `bqml_tutorial.wiki_auto_style_transfer_valid`
  ),
  STRUCT('text_generation' AS task_type));

The output looks similar to the following:

+---------------------+---------------------+---------------------+---------------------+-------------------------------+
| bleu4_score         | rouge-l_precision   | rouge-l_recall      | rouge-l_f1_score    | evaluation_status             |
+---------------------+---------------------+---------------------+---------------------+-------------------------------+
| 0.19391708685890585 | 0.34170970869469058 | 0.46793189219384496 | 0.368190192211538   | {                             |
|                     |                     |                     |                     |   "num_successful_rows": 176, |
|                     |                     |                     |                     |   "num_total_rows": 176       |
|                     |                     |                     |                     | }                             |
+---------------------+---------------------+---------------------+---------------------+-------------------------------+

You can see that even though the training dataset contains only 1,408 examples, the tuned model scores higher on every evaluation metric. For example, the BLEU-4 score rises from about 0.153 to 0.194, and the ROUGE-L F1 score rises from about 0.309 to 0.368.
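To quantify the improvement, the following Python sketch computes the percentage change of each metric from the baseline evaluation to the tuned evaluation. It uses the sample values from the two ML.EVALUATE outputs in this tutorial. Your own run will produce somewhat different numbers, so substitute your results. `relative_change` is a hypothetical helper name.

```python
# Sample metric values copied from the two ML.EVALUATE outputs in this tutorial.
baseline = {
    "bleu4_score": 0.15289758194680161,
    "rouge-l_precision": 0.24925921915413246,
    "rouge-l_recall": 0.44622484204944518,
    "rouge-l_f1_score": 0.30851122211104348,
}
tuned = {
    "bleu4_score": 0.19391708685890585,
    "rouge-l_precision": 0.34170970869469058,
    "rouge-l_recall": 0.46793189219384496,
    "rouge-l_f1_score": 0.368190192211538,
}


def relative_change(before, after):
    """Percentage change from `before` to `after`, keyed by metric name."""
    return {name: 100 * (after[name] - before[name]) / before[name] for name in before}


for name, pct in relative_change(baseline, tuned).items():
    print(f"{name}: {baseline[name]:.3f} -> {tuned[name]:.3f} ({pct:+.1f}%)")
```

With these sample values, the largest relative gains are in BLEU-4 and ROUGE-L precision, and recall changes the least. This suggests that tuning mainly made the generated text closer to the reference phrasing, rather than making it cover more of the reference content.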

Clean up

- In the Google Cloud console, go to the Manage resources page.

- In the project list, select the project that you want to delete, and then click Delete.

- In the dialog, type the project ID, and then click Shut down to delete the project.