Transcribe audio files with the ML.TRANSCRIBE function

This document describes how to use the ML.TRANSCRIBE function with a remote model to transcribe audio files from an object table.

Supported locations

You must create the remote model used in this procedure in one of the following locations:

- asia-northeast1
- asia-south1
- asia-southeast1
- australia-southeast1
- eu
- europe-west1
- europe-west2
- europe-west3
- europe-west4
- northamerica-northeast1
- us
- us-central1
- us-east1
- us-east4
- us-west1

You must run the ML.TRANSCRIBE function in the same region as the remote model.
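You can check both location rules above before you create anything. The following is a minimal Python sketch and is not part of any Google Cloud library. The function name `check_transcribe_location` is hypothetical. The sketch also compares locations case-insensitively, so that `US` as shown in the console matches `us` in the list; that comparison is a convenience assumption.

```python
# Locations copied from the "Supported locations" list in this document.
SUPPORTED_MODEL_LOCATIONS = frozenset({
    "asia-northeast1", "asia-south1", "asia-southeast1", "australia-southeast1",
    "eu", "europe-west1", "europe-west2", "europe-west3", "europe-west4",
    "northamerica-northeast1", "us", "us-central1", "us-east1", "us-east4",
    "us-west1",
})


def check_transcribe_location(model_location: str, query_location: str) -> None:
    """Raise ValueError if either location breaks the rules in this section.

    Locations are compared case-insensitively (an assumption that lets the
    console's "US" match "us" in the list).
    """
    model = model_location.strip().lower()
    query = query_location.strip().lower()
    if model not in SUPPORTED_MODEL_LOCATIONS:
        raise ValueError(f"remote model location {model_location!r} is not supported")
    if query != model:
        raise ValueError(
            f"run ML.TRANSCRIBE in {model_location!r}, not {query_location!r}"
        )
```

For example, `check_transcribe_location("us-central1", "us-central1")` returns quietly. A model in `us-west2`, or a query in a different region from the model, raises `ValueError`.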

Required roles

To create a remote model and transcribe audio files, you need the following Identity and Access Management (IAM) roles at the project level:

- Create a speech recognizer: Cloud Speech Editor (roles/speech.editor)
- Create and use BigQuery datasets, tables, and models: BigQuery Data Editor (roles/bigquery.dataEditor)
- Create, delegate, and use BigQuery connections: BigQuery Connections Admin (roles/bigquery.connectionsAdmin)

  If you don't have a default connection configured, you can create and set one as part of running the CREATE MODEL statement. To do so, you must have BigQuery Admin (roles/bigquery.admin) on your project. For more information, see Configure the default connection.
- Grant permissions to the connection's service account: Project IAM Admin (roles/resourcemanager.projectIamAdmin)
- Create BigQuery jobs: BigQuery Job User (roles/bigquery.jobUser)

These predefined roles contain the permissions required to perform the tasks in this document. The exact permissions that are required are listed in the following section.

Required permissions

- Create a dataset: bigquery.datasets.create
- Create, delegate, and use a connection: bigquery.connections.*
- Set service account permissions: resourcemanager.projects.getIamPolicy and resourcemanager.projects.setIamPolicy
- Create a model and run inference:
  - bigquery.jobs.create
  - bigquery.models.create
  - bigquery.models.getData
  - bigquery.models.updateData
  - bigquery.models.updateMetadata
- Create an object table: bigquery.tables.create and bigquery.tables.update
- Create a speech recognizer:
  - speech.recognizers.create
  - speech.recognizers.get
  - speech.recognizers.recognize
  - speech.recognizers.update

You might also be able to get these permissions with custom roles or other predefined roles.
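If you rely on custom roles, you can compare the permissions they grant against the preceding list. The following Python sketch is a hypothetical helper, not a Google Cloud API. It treats the `bigquery.connections.*` entry as covered when at least one granted permission matches that pattern. That is only a loose check, so confirm the full set of connection permissions in the IAM permissions reference.

```python
from fnmatch import fnmatchcase

# Task -> required permissions, copied from the "Required permissions" list.
REQUIRED_PERMISSIONS = {
    "Create a dataset": ["bigquery.datasets.create"],
    "Create, delegate, and use a connection": ["bigquery.connections.*"],
    "Set service account permissions": [
        "resourcemanager.projects.getIamPolicy",
        "resourcemanager.projects.setIamPolicy",
    ],
    "Create a model and run inference": [
        "bigquery.jobs.create",
        "bigquery.models.create",
        "bigquery.models.getData",
        "bigquery.models.updateData",
        "bigquery.models.updateMetadata",
    ],
    "Create an object table": ["bigquery.tables.create", "bigquery.tables.update"],
    "Create a speech recognizer": [
        "speech.recognizers.create",
        "speech.recognizers.get",
        "speech.recognizers.recognize",
        "speech.recognizers.update",
    ],
}


def missing_permissions(granted: set[str]) -> dict[str, list[str]]:
    """Return, per task, the required permissions that `granted` doesn't cover.

    A wildcard entry such as "bigquery.connections.*" counts as covered when at
    least one granted permission matches it (a deliberately loose check).
    """
    missing: dict[str, list[str]] = {}
    for task, patterns in REQUIRED_PERMISSIONS.items():
        gaps = [p for p in patterns if not any(fnmatchcase(g, p) for g in granted)]
        if gaps:
            missing[task] = gaps
    return missing
```

An empty result means every listed permission, or wildcard pattern, is matched by the set you passed in.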

Before you begin

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role. You can select any project that you've been granted a role on.
  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.

- Enable the BigQuery, BigQuery Connection, and Speech-to-Text APIs.

  Roles required to enable APIs

  To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.
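If you prefer the command line, you can enable the three APIs with `gcloud services enable`. The following Python sketch only assembles that command and doesn't run it. The service names `bigquery.googleapis.com`, `bigqueryconnection.googleapis.com`, and `speech.googleapis.com` are the standard identifiers for these APIs. The helper name `enable_apis_command` is hypothetical.

```python
import shlex

# Service names of the APIs listed in "Before you begin".
REQUIRED_SERVICES = (
    "bigquery.googleapis.com",            # BigQuery API
    "bigqueryconnection.googleapis.com",  # BigQuery Connection API
    "speech.googleapis.com",              # Speech-to-Text API
)


def enable_apis_command(project_id: str) -> str:
    """Build (but don't run) the gcloud command that enables the required APIs."""
    return shlex.join(
        ["gcloud", "services", "enable", *REQUIRED_SERVICES, f"--project={project_id}"]
    )
```

`shlex.join` quotes any argument that the shell would otherwise split, so you can paste the printed command into Cloud Shell as-is.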


Create a recognizer

Speech-to-Text supports resources called recognizers. Recognizers represent stored and reusable recognition configurations. You can create a recognizer to logically group together transcriptions or traffic for your application.

Creating a speech recognizer is optional. If you choose to create a speech recognizer, note the project number, location, and recognizer ID of the recognizer. You use these values in the SPEECH_RECOGNIZER option of the CREATE MODEL statement.

If you choose not to create a speech recognizer, you must specify a value for the recognition_config argument of the ML.TRANSCRIBE function.

You can only use the chirp transcription model in the speech recognizer or recognition_config value that you provide.
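The SPEECH_RECOGNIZER option combines the values you note down into a single resource name of the form projects/PROJECT_NUMBER/locations/LOCATION/recognizers/RECOGNIZER_ID. The following Python sketch splits such a name into its parts and rebuilds it, which helps catch a mistyped path before it reaches the CREATE MODEL statement. Its names (`RecognizerRef`, `parse_recognizer_name`) are hypothetical. The sketch checks only the shape of the name. It doesn't check whether the recognizer exists or whether it uses the chirp model.

```python
import re
from typing import NamedTuple

# Shape of the SPEECH_RECOGNIZER value used in the CREATE MODEL statement.
_RECOGNIZER_NAME = re.compile(
    r"^projects/(?P<project>[^/]+)"
    r"/locations/(?P<location>[^/]+)"
    r"/recognizers/(?P<recognizer_id>[^/]+)$"
)


class RecognizerRef(NamedTuple):
    project: str
    location: str
    recognizer_id: str

    def resource_name(self) -> str:
        """Rebuild the full name for the SPEECH_RECOGNIZER option."""
        return (
            f"projects/{self.project}/locations/{self.location}"
            f"/recognizers/{self.recognizer_id}"
        )


def parse_recognizer_name(name: str) -> RecognizerRef:
    """Split a recognizer resource name into project, location, and ID."""
    match = _RECOGNIZER_NAME.match(name)
    if match is None:
        raise ValueError(f"not a recognizer resource name: {name!r}")
    return RecognizerRef(**match.groupdict())
```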

Create a dataset

Create a BigQuery dataset to contain your resources:

Console

In the Google Cloud console, go to the BigQuery page.

In the left pane, click Explorer.

If you don't see the left pane, click Expand left pane to open the pane.

In the Explorer pane, click your project name.

Click View actions > Create dataset.

On the Create dataset page, do the following:

For Dataset ID, type a name for the dataset.

For Location type, select Region or Multi-region.

- If you selected Region, then select a location from the Region list.

- If you selected Multi-region, then select US or Europe from the Multi-region list.

Click Create dataset.

bq
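With the bq tool, you typically create a dataset with `bq mk --dataset`, passing the location through the global `--location` flag. The following Python sketch assembles that command as an argument list that you could pass to `subprocess.run`. The helper name `make_dataset_command` is hypothetical. Running the command assumes that the bq tool is installed and authenticated.

```python
def make_dataset_command(project_id: str, dataset_id: str, location: str) -> list[str]:
    """Return the argv for `bq mk --dataset`; nothing is executed here.

    --location is a global bq flag, so it comes before the `mk` command.
    """
    return [
        "bq",
        f"--location={location}",
        "mk",
        "--dataset",
        f"{project_id}:{dataset_id}",
    ]
```

For example, `subprocess.run(make_dataset_command("myproject", "mydataset", "us-central1"), check=True)` creates the dataset. Choose a location from the supported locations so that you can create the remote model in the same place.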

Create a connection

You can skip this step if you either have a default connection configured, or you have the BigQuery Admin role.

Create a Cloud resource connection for the remote model to use, and get the connection's service account. Create the connection in the same location as the dataset that you created in the previous step.

Select one of the following options:

Console

Go to the BigQuery page.

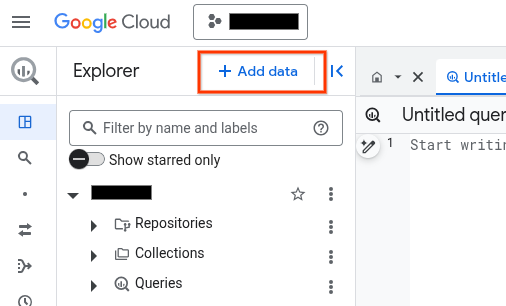

In the Explorer pane, click Add data.

The Add data dialog opens.

In the Filter By pane, in the Data Source Type section, select Business Applications.

Alternatively, in the Search for data sources field, you can enter Vertex AI.

In the Featured data sources section, click Vertex AI.

Click the Vertex AI Models: BigQuery Federation solution card.

In the Connection type list, select Vertex AI remote models, remote functions, BigLake and Spanner (Cloud Resource).

In the Connection ID field, enter a name for your connection.

Click Create connection.

Click Go to connection.

In the Connection info pane, copy the service account ID for use in a later step.

bq

In a command-line environment, create a connection:

bq mk --connection --location=REGION --project_id=PROJECT_ID \
    --connection_type=CLOUD_RESOURCE CONNECTION_ID

The --project_id parameter overrides the default project.

Replace the following:

- REGION: your connection region
- PROJECT_ID: your Google Cloud project ID
- CONNECTION_ID: an ID for your connection

When you create a connection resource, BigQuery creates a unique system service account and associates it with the connection.

Troubleshooting: If you get the following connection error, update the Google Cloud SDK:

Flags parsing error: flag --connection_type=CLOUD_RESOURCE: value should be one of...

Retrieve and copy the service account ID for use in a later step:

bq show --connection PROJECT_ID.REGION.CONNECTION_ID

The output is similar to the following:

name                        properties
1234.REGION.CONNECTION_ID   {"serviceAccountId": "connection-1234-9u56h9@gcp-sa-bigquery-condel.iam.gserviceaccount.com"}
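The service account ID is the value of the serviceAccountId field in that output. The following Python sketch extracts it from the captured text so that you can reuse it when you grant access. The helper `service_account_from_bq_output` is hypothetical and isn't part of the bq tool. It searches for the field by pattern, so it assumes that the output contains a serviceAccountId field formatted like the sample above.

```python
import re

# Matches "serviceAccountId": "..." anywhere in the `bq show --connection` output.
_SERVICE_ACCOUNT = re.compile(r'"serviceAccountId"\s*:\s*"([^"]+)"')


def service_account_from_bq_output(output: str) -> str:
    """Return the connection's service account ID from captured bq output."""
    match = _SERVICE_ACCOUNT.search(output)
    if match is None:
        raise ValueError("no serviceAccountId found in bq output")
    return match.group(1)
```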

Terraform

Use the google_bigquery_connection resource.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

The following example creates a Cloud resource connection named my_cloud_resource_connection in the US region:

To apply your Terraform configuration in a Google Cloud project, complete the steps in the following sections.

Prepare Cloud Shell

- Launch Cloud Shell.
- Set the default Google Cloud project where you want to apply your Terraform configurations.

  You only need to run this command once per project, and you can run it in any directory.

  export GOOGLE_CLOUD_PROJECT=PROJECT_ID

  Environment variables are overridden if you set explicit values in the Terraform configuration file.

Prepare the directory

Each Terraform configuration file must have its own directory (also called a root module).

- In Cloud Shell, create a directory and a new file within that directory. The filename must have the .tf extension, for example main.tf. In this tutorial, the file is referred to as main.tf.

  mkdir DIRECTORY && cd DIRECTORY && touch main.tf

- If you are following a tutorial, you can copy the sample code in each section or step.

  Copy the sample code into the newly created main.tf.

  Optionally, copy the code from GitHub. This is recommended when the Terraform snippet is part of an end-to-end solution.
- Review and modify the sample parameters to apply to your environment.
- Save your changes.
- Initialize Terraform. You only need to do this once per directory.

  terraform init

  Optionally, to use the latest Google provider version, include the -upgrade option:

  terraform init -upgrade

Apply the changes

- Review the configuration and verify that the resources that Terraform is going to create or update match your expectations:

  terraform plan

  Make corrections to the configuration as necessary.
- Apply the Terraform configuration by running the following command and entering yes at the prompt:

  terraform apply

  Wait until Terraform displays the "Apply complete!" message.
- Open your Google Cloud project to view the results. In the Google Cloud console, navigate to your resources in the UI to make sure that Terraform has created or updated them.

Grant access to the service account

Select one of the following options:

Console

Go to the IAM & Admin page.

Click Grant Access.

The Add principals dialog opens.

In the New principals field, enter the service account ID that you copied earlier.

Click the Select a role field, and then type Cloud Speech Client in Filter.

Click Add another role.

In the Select a role field, select Cloud Storage, and then select Storage Object Viewer.

Click Save.

gcloud

Use the gcloud projects add-iam-policy-binding command:

gcloud projects add-iam-policy-binding 'PROJECT_NUMBER' --member='serviceAccount:MEMBER' --role='roles/speech.client' --condition=None
gcloud projects add-iam-policy-binding 'PROJECT_NUMBER' --member='serviceAccount:MEMBER' --role='roles/storage.objectViewer' --condition=None

Replace the following:

- PROJECT_NUMBER: your project number.
- MEMBER: the service account ID that you copied earlier.

Failure to grant the permission results in a Permission denied error.
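When you script this step, you can generate both gcloud commands from the project number and the service account ID. The following Python sketch builds the same two commands shown in the gcloud option. Its names (`SERVICE_ACCOUNT_ROLES`, `service_account_grant_commands`) are hypothetical. The sketch also rejects a member that doesn't end in `.gserviceaccount.com`. That suffix check is an assumption added for safety, not a gcloud requirement.

```python
import shlex

# Roles granted to the connection's service account in this step.
SERVICE_ACCOUNT_ROLES = ("roles/speech.client", "roles/storage.objectViewer")


def service_account_grant_commands(project_number: str, service_account_id: str) -> list[str]:
    """Build (but don't run) one add-iam-policy-binding command per role."""
    if not service_account_id.endswith(".gserviceaccount.com"):
        raise ValueError(f"not a service account email: {service_account_id!r}")
    return [
        shlex.join([
            "gcloud", "projects", "add-iam-policy-binding", project_number,
            f"--member=serviceAccount:{service_account_id}",
            f"--role={role}",
            "--condition=None",
        ])
        for role in SERVICE_ACCOUNT_ROLES
    ]
```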

Create an object table

Create an object table over a set of audio files in Cloud Storage. The audio files in the object table must be of a supported type.

The Cloud Storage bucket used by the object table should be in the same project where you plan to create the model and call the ML.TRANSCRIBE function. If you want to call the ML.TRANSCRIBE function in a different project than the one that contains the Cloud Storage bucket used by the object table, you must grant the Storage Admin role at the bucket level to the service-A@gcp-sa-aiplatform.iam.gserviceaccount.com service account.
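You create object tables with a CREATE EXTERNAL TABLE statement that sets object_metadata = 'SIMPLE' and lists Cloud Storage URIs. The following Python sketch assembles such a statement for a path of audio files. The helper name `object_table_ddl` is hypothetical, and the bucket path in the usage example is a placeholder. The sketch assumes that the connection is the one whose service account was granted Storage Object Viewer in the previous step.

```python
def object_table_ddl(table: str, connection: str, uris: list[str]) -> str:
    """Build a CREATE EXTERNAL TABLE statement for an object table.

    Backslashes and single quotes in URIs are escaped for BigQuery string literals.
    """
    quoted = ", ".join(
        "'" + uri.replace("\\", "\\\\").replace("'", "\\'") + "'" for uri in uris
    )
    return (
        f"CREATE EXTERNAL TABLE `{table}`\n"
        f"WITH CONNECTION `{connection}`\n"
        f"OPTIONS (object_metadata = 'SIMPLE', uris = [{quoted}]);"
    )
```

For example, `object_table_ddl("myproject.mydataset.audio", "myproject.us.myconnection", ["gs://my-bucket/audio/*"])` produces a statement for the `audio` table used in the examples later in this document.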

Create a model

Create a remote model with a REMOTE_SERVICE_TYPE of CLOUD_AI_SPEECH_TO_TEXT_V2:

CREATE OR REPLACE MODEL `PROJECT_ID.DATASET_ID.MODEL_NAME`
REMOTE WITH CONNECTION {DEFAULT | `PROJECT_ID.REGION.CONNECTION_ID`}
OPTIONS (
  REMOTE_SERVICE_TYPE = 'CLOUD_AI_SPEECH_TO_TEXT_V2',
  SPEECH_RECOGNIZER = 'projects/PROJECT_NUMBER/locations/LOCATION/recognizers/RECOGNIZER_ID'
);

Replace the following:

- PROJECT_ID: your project ID.
- DATASET_ID: the ID of the dataset to contain the model.
- MODEL_NAME: the name of the model.
- REGION: the region used by the connection.
- CONNECTION_ID: the connection ID, for example myconnection.

  When you view the connection details in the Google Cloud console, the connection ID is the value in the last section of the fully qualified connection ID that is shown in Connection ID, for example projects/myproject/locations/connection_location/connections/myconnection.
- PROJECT_NUMBER: the project number of the project that contains the speech recognizer. You can find this value on the Project info card in the Dashboard page of the Google Cloud console.
- LOCATION: the location used by the speech recognizer. You can find this value in the Location field on the List recognizers page of the Google Cloud console.
- RECOGNIZER_ID: the speech recognizer ID. You can find this value in the ID field on the List recognizers page of the Google Cloud console.

  The SPEECH_RECOGNIZER option isn't required. If you don't specify a value for it, a default recognizer is used. In that case, you must specify a value for the recognition_config parameter of the ML.TRANSCRIBE function to provide a configuration for the default recognizer. You can only use the chirp transcription model in the recognition_config value that you provide.
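The statement above has two choices: a named connection or DEFAULT, and SPEECH_RECOGNIZER included or omitted. The following Python sketch builds any of these variants as text. The helper name `create_model_sql` is hypothetical. The sketch doesn't submit the statement; you could pass the result to a client such as the google-cloud-bigquery library's `Client.query` method.

```python
from typing import Optional


def create_model_sql(
    model: str,
    connection: Optional[str] = None,
    speech_recognizer: Optional[str] = None,
) -> str:
    """Build the CREATE OR REPLACE MODEL statement for a Speech-to-Text remote model.

    connection=None uses the DEFAULT connection. speech_recognizer=None omits
    SPEECH_RECOGNIZER, so ML.TRANSCRIBE then needs a recognition_config value.
    """
    conn = "DEFAULT" if connection is None else f"`{connection}`"
    options = ["REMOTE_SERVICE_TYPE = 'CLOUD_AI_SPEECH_TO_TEXT_V2'"]
    if speech_recognizer is not None:
        options.append(f"SPEECH_RECOGNIZER = '{speech_recognizer}'")
    return (
        f"CREATE OR REPLACE MODEL `{model}`\n"
        f"REMOTE WITH CONNECTION {conn}\n"
        f"OPTIONS ({', '.join(options)});"
    )
```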

Transcribe audio files

Transcribe audio files with the ML.TRANSCRIBE function:

SELECT *
FROM ML.TRANSCRIBE(
  MODEL `PROJECT_ID.DATASET_ID.MODEL_NAME`,
  TABLE `PROJECT_ID.DATASET_ID.OBJECT_TABLE_NAME`,
  RECOGNITION_CONFIG => ( JSON 'recognition_config')
);

Replace the following:

- PROJECT_ID: your project ID.
- DATASET_ID: the ID of the dataset that contains the model.
- MODEL_NAME: the name of the model.
- OBJECT_TABLE_NAME: the name of the object table that contains the URIs of the audio files to process.
- recognition_config: a RecognitionConfig resource in JSON format.

  If a recognizer has been specified for the remote model by using the SPEECH_RECOGNIZER option, you can't specify a recognition_config value.

  If no recognizer has been specified for the remote model by using the SPEECH_RECOGNIZER option, you must specify a recognition_config value. This value is used to provide a configuration for the default recognizer.

  You can only use the chirp transcription model in the recognition_config value that you provide.
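The rules for recognition_config come down to this: exactly one of SPEECH_RECOGNIZER and recognition_config supplies the configuration, and any recognition_config must use the chirp model. The following Python sketch encodes those rules. The helper name `check_recognition_config` is hypothetical. It requires an explicit "model": "chirp" key, which is a conservative assumption because this document doesn't say what happens when that key is omitted.

```python
from typing import Optional


def check_recognition_config(
    model_has_recognizer: bool, recognition_config: Optional[dict]
) -> None:
    """Raise ValueError if the arguments break the recognition_config rules."""
    if model_has_recognizer:
        # The recognizer set by SPEECH_RECOGNIZER already supplies the configuration.
        if recognition_config is not None:
            raise ValueError("model sets SPEECH_RECOGNIZER; omit recognition_config")
        return
    # The default recognizer needs a configuration, and only chirp is allowed.
    if recognition_config is None:
        raise ValueError("model has no SPEECH_RECOGNIZER; recognition_config is required")
    if recognition_config.get("model") != "chirp":
        raise ValueError('recognition_config must use "model": "chirp"')
```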

Examples

Example 1

The following example transcribes the audio files represented by the audio table without overriding the recognizer's default configuration. It assumes that the transcribe_model model was created with the SPEECH_RECOGNIZER option:

SELECT *
FROM ML.TRANSCRIBE(
  MODEL `myproject.mydataset.transcribe_model`,
  TABLE `myproject.mydataset.audio`
);

Example 2

The following example transcribes the audio files represented by the audio table and provides a configuration for the default recognizer. It assumes that the transcribe_model model was created without the SPEECH_RECOGNIZER option:

SELECT *
FROM ML.TRANSCRIBE(
  MODEL `myproject.mydataset.transcribe_model`,
  TABLE `myproject.mydataset.audio`,
  recognition_config => (
    JSON '{"language_codes": ["en-US"], "model": "chirp", "auto_decoding_config": {}}')
);
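If you build the recognition configuration in code, you must serialize it to JSON and embed it in a single-quoted SQL string literal. The following Python sketch does this with `json.dumps`. It then escapes backslashes and single quotes so that the BigQuery string literal keeps the JSON intact. With the dictionary shown in the test, it reproduces the second example's query. The helper name `transcribe_sql` is hypothetical.

```python
import json
from typing import Optional


def transcribe_sql(model: str, table: str, recognition_config: Optional[dict] = None) -> str:
    """Build an ML.TRANSCRIBE query, embedding recognition_config as a JSON literal."""
    args = [f"MODEL `{model}`", f"TABLE `{table}`"]
    if recognition_config is not None:
        literal = json.dumps(recognition_config)
        # Escape backslashes first, then single quotes, for the SQL string literal.
        literal = literal.replace("\\", "\\\\").replace("'", "\\'")
        args.append(f"recognition_config => (JSON '{literal}')")
    return "SELECT *\nFROM ML.TRANSCRIBE(\n  " + ",\n  ".join(args) + "\n);"
```

Leave `recognition_config` as `None` when the model was created with SPEECH_RECOGNIZER, as in the first example.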

What's next

- For more information about model inference in BigQuery ML, see Model inference overview.

- For more information about using Cloud AI APIs to perform AI tasks, see AI application overview.

- For more information about supported SQL statements and functions for generative AI models, see End-to-end user journeys for generative AI models.