En este instructivo, se muestra cómo usar HammerDB para realizar pruebas de carga en una instancia de SQL Server de Compute Engine. Puedes obtener información sobre cómo instalar una instancia de SQL Server, si consultas los siguientes instructivos:

Hay una gran cantidad de herramientas para pruebas de carga disponibles. Algunas son gratuitas y de código abierto, pero otras requieren licencias. HammerDB es una herramienta de código abierto que, por lo general, es útil para demostrar el rendimiento de tu base de datos de SQL Server. En este instructivo, se muestran los pasos básicos para usar HammerDB, pero hay otras herramientas disponibles y deberías elegir las que se adapten mejor a tus cargas de trabajo específicas.

Configura la instancia de SQL Server para pruebas de carga

Antes de comenzar, asegúrate de que las reglas del firewall de Windows están configuradas para permitir el tráfico desde la dirección IP de la instancia de Windows nueva que creaste. Luego, crea una base de datos nueva para las pruebas de carga TPCC y sigue estos pasos a fin de configurar una cuenta de usuario:

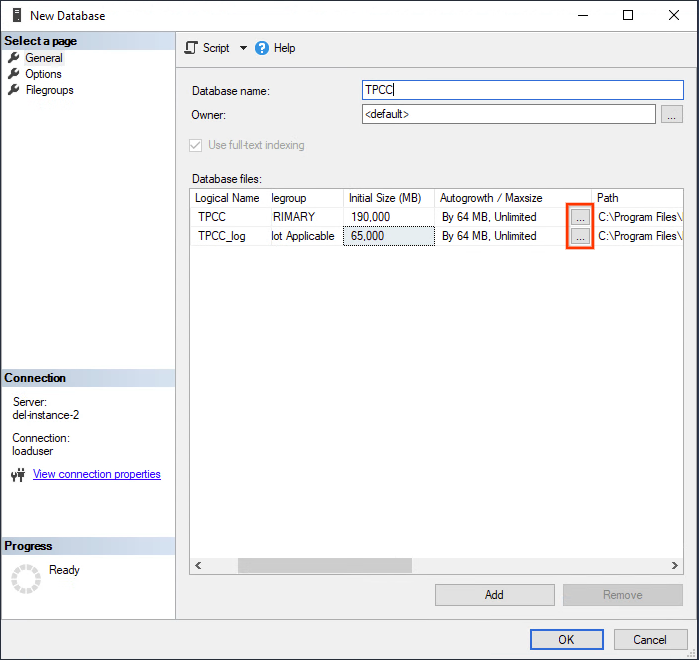

- Haz clic derecho en la carpeta Bases de datos en SQL Server Management Studio y elige Base de datos nueva.

- Otórgale el nombre “TPCC”.

- Establece el tamaño inicial del archivo de datos en 190,000 MB y del archivo de registro en 65,000 MB.

Haz clic en el botón de elipsis para establecer los límites de Crecimiento automático (Autogrowth) en valores más altos, como se muestra en la siguiente captura de pantalla:

Establece el crecimiento del archivo de datos en 64 MB a un tamaño ilimitado.

Establece el archivo de registro para inhabilitar el crecimiento automático.

Haz clic en Aceptar.

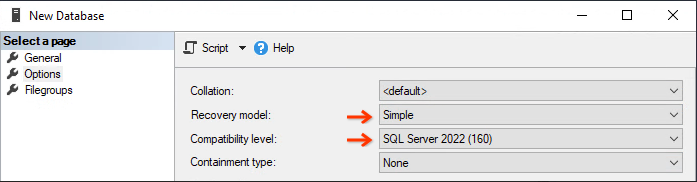

En el cuadro de diálogo Base de datos nueva (New database), en el panel de la izquierda, selecciona la página Opciones (Options).

Establece el Nivel de compatibilidad en SQL Server 2022 (160).

Establece el Modelo de recuperación (Recovery model) en Simple (Simple), para que la carga no llene los registros de transacciones.

Haz clic en Aceptar para crear la base de datos TPCC; esto puede tardar unos minutos en completarse.

La imagen de SQL Server preconfigurada solo viene con la autenticación de Windows activada, por lo que deberás habilitar el modo de autenticación mixto dentro de SSMS con esta guía.

Sigue estos pasos para crear una cuenta de usuario de SQL Server nueva en tu servidor de base de datos que tiene el permiso DBOwner. Otórgale el nombre “loaduser” y establece una contraseña segura.

Toma nota de la dirección IP interna de SQL Serve; para ello, usa el comando

Get-NetIPAddress, ya que es importante para el rendimiento y la seguridad usar la IP interna.

Instala HammerDB

Puedes ejecutar HammerDB directamente en tu instancia de SQL Server. Sin embargo, para una prueba más precisa, crea una instancia de Windows nueva y prueba la instancia de SQL Server de forma remota.

Crea una instancia

Sigue estos pasos para crear una instancia de Compute Engine nueva:

En la consola de Google Cloud , ve a la página Crear una instancia.

En Nombre, ingresa

hammerdb-instance.En la sección Configuración de la máquina, selecciona el tipo de máquina con al menos la mitad de la cantidad de CPU como instancia de base de datos.

En la sección Disco de arranque, haz clic en Cambiar y, luego, haz lo siguiente:

- En la pestaña Imágenes públicas, elige un sistema operativo de Windows Server.

- En la lista Versión, haz clic en Windows Server 2022 Datacenter.

- En la lista Tipo de disco de arranque, selecciona Disco persistente estándar.

- Para confirmar las opciones del disco de arranque, haz clic en Seleccionar.

Para crear y, también, iniciar la VM, haz clic en Crear.

Instala el software

Cuando esté listo, usa un cliente de RDP para conectarte a tu nueva instancia de Windows Server e instala el siguiente software:

Ejecuta HammerDB

Después de instalar HammerDB, ejecuta el archivo hammerdb.bat. HammberDB no aparece en la lista de aplicaciones del menú de inicio. Usa el siguiente comando para ejecutar HammerDB:

C:\Program Files\HammerDB-VERSION\hammerdb.batReemplaza VERSION por la versión de HammerDB instalada.

Crea la conexión y el esquema

Cuando la aplicación se está ejecutando, el primer paso es configurar la conexión para compilar el esquema.

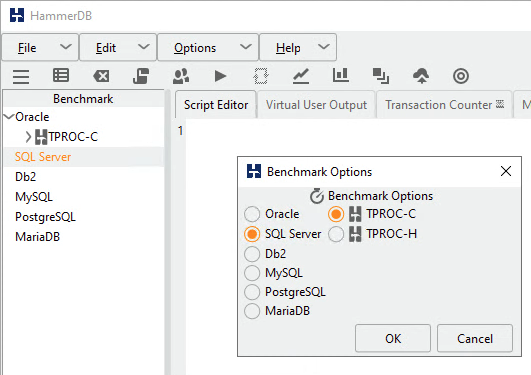

- Haz doble clic en SQL Server en el panel Comparativas (Benchmark).

- Selecciona TPROC-C.

Desde el sitio de HammerDB:

TPROC-C es la carga de trabajo de OLTP implementada en HammerDB derivada de la especificación de TPROC-C con modificaciones para que la ejecución de HammerDB sea sencilla y rentable en cualquiera de los entornos de bases de datos admitidos. La carga de trabajo de HammerDB TPROC-C es una carga de trabajo de código abierto derivada del estándar de comparativas de TPROC-C y, como tal, no es comparable con los resultados publicados de TPROC-C, ya que los resultados cumplen con un subconjunto en lugar del estándar de comparativas de TPROC-C completo. El nombre de la carga de trabajo de HammerDB TPROC-C significa "comparativa de procesamiento de transacciones derivada de la especificación "C" del TPC".

Haz clic en Aceptar.

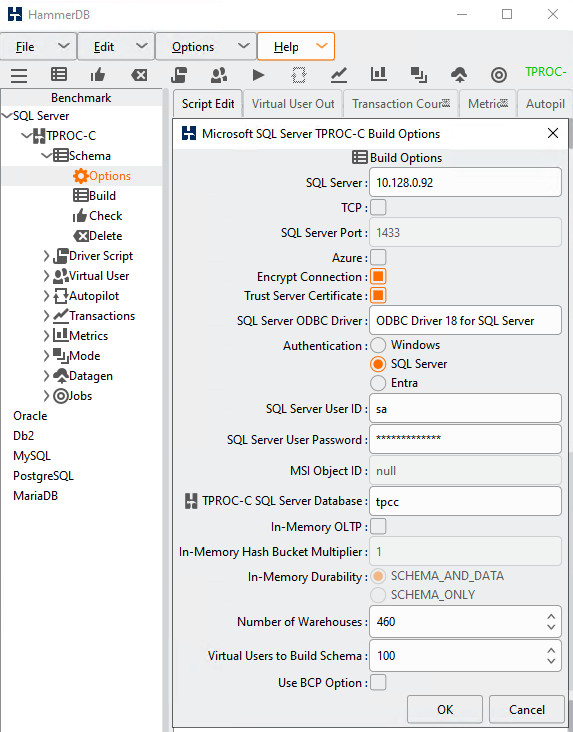

Haz clic en Esquema y, luego, haz doble clic en Opciones.

Completa el formulario con tu dirección IP, nombre de usuario y contraseña, como se muestra en la siguiente imagen:

Configura el controlador ODBC de SQL Server en el controlador OBDC 18 para SQL Server

En este caso, Cantidad de almacenes (la escala) se establece en 460, pero puedes elegir un valor diferente. Algunos lineamientos sugieren de 10 a 100 almacenes de datos por CPU. En este instructivo, establece este valor en la cantidad de núcleos multiplicada por 10: 160 para una instancia de 16 núcleos.

En Usuarios virtuales para compilar el esquema (Virtual Users to Build Schema), elige un número entre 1 y 2 veces la cantidad de CPU virtuales cliente. Puedes hacer clic en la barra gris junto al control deslizante para aumentar la cantidad.

Borra la opción Usar la opción de BPC.

Haz clic en Aceptar.

Haz doble clic en la opción Compilar debajo de la sección Compilación del esquema para crear el esquema y cargar las tablas. Cuando se complete, haz clic en el ícono de la linterna roja en la parte superior central de la pantalla para borrar el usuario virtual y continuar con el siguiente paso.

Si creaste tu base de datos con el modelo de recuperación Simple, te recomendamos volver a cambiarlo a Full en este momento para obtener una prueba más precisa de una situación de producción. Este cambio no tendrá efecto hasta que realices una copia de seguridad completa o diferencial para activar el inicio de una cadena de registro nueva.

Crea la secuencia de comandos del controlador

HammerDB usa la secuencia de comandos del controlador a fin de dirigir el flujo de las instrucciones de SQL a la base de datos para generar la carga necesaria.

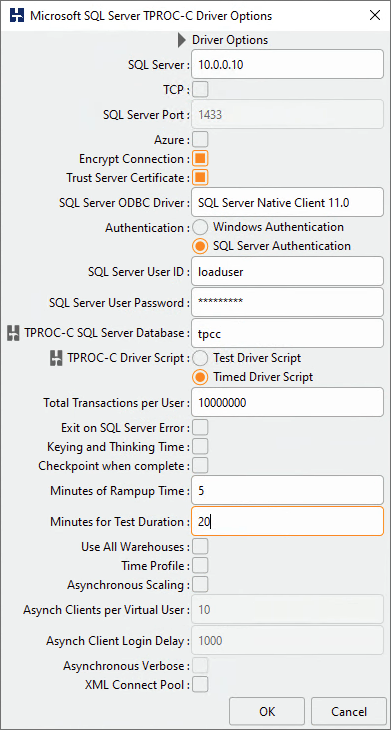

- En el panel Comparativas (Benchmark), expande la sección Secuencia de comandos del controlador (Driver Script) y haz doble clic en Opciones (Options).

- Verifica que la configuración coincida con la que usaste en el cuadro de diálogo Compilación del esquema.

- Elige Secuencia de comandos del controlador con marcas de tiempo.

- La opción Punto de control cuando se completa (Checkpoint when complete) obliga a la base de datos a escribir todo en el disco al final de la prueba. Marca esta opción solo si ejecutarás varias pruebas consecutivas.

- Para garantizar una prueba exhaustiva, establece Minutos de tiempo de arranque (Minutes of rampup time) en 5 y Minutos de duración de la prueba (Minutes for test duration) en 20.

- Haz clic en Aceptar (OK) para salir del cuadro de diálogo.

- Haz doble clic en Cargar (Load) en la sección Secuencia de comandos del controlador (Driver Script) del panel Comparativas (Benchmark) para activar la secuencia de comandos del controlador.

Crea usuarios virtuales

Por lo general, la creación de una carga real requiere secuencias de comandos que se ejecuten como varios usuarios diferentes. Crea algunos usuarios virtuales para la prueba.

- Expande la sección Usuarios virtuales y haz doble clic en Opciones.

- Si configuras la cantidad de almacenes de datos (escala) en 160, establece Usuarios virtuales en 16, debido a que los lineamientos TPROC-C recomiendan una proporción 10 veces menor para evitar el bloqueo de las filas. Selecciona la casilla de verificación Mostrar resultado para habilitar los mensajes de error en la consola.

- Haz clic en Aceptar.

Recopila estadísticas de entorno de ejecución

HammerDB y SQL Server no recopilan estadísticas detalladas del entorno de ejecución con facilidad. A pesar de que las estadísticas están disponibles en profundidad en SQL Server, deben capturarse y calcularse con frecuencia. Si todavía no tienes un procedimiento o una herramienta para ayudarte a capturar estos datos, puedes usar el procedimiento de esta sección para capturar algunas métricas útiles durante tu prueba. Los resultados se escribirán en un archivo CSV en el directorio temp de Windows. Puedes copiar los datos en una Hoja de cálculo de Google con la opción Pegado especial > Pegar CSV.

Si deseas usar este procedimiento, primero debes habilitar de forma temporal los Procedimientos de automatización OLE para escribir el archivo en el disco. Recuerda inhabilitarlos luego de la prueba:

sp_configure 'show advanced options', 1; GO RECONFIGURE; GO sp_configure 'Ole Automation Procedures', 1; GO RECONFIGURE; GO

Este es el código para crear el procedimiento sp_write_performance_counters en SQL Server Management Studio. Antes de comenzar la prueba de carga, ejecutarás este procedimiento en Management Studio:

USE [master]

GO

SET ANSI_NULLS ON

GO

SET QUOTED_IDENTIFIER ON

GO

/***

LogFile path has to be in a directory that SQL Server can Write To.

*/

CREATE PROCEDURE [dbo].[sp_write_performance_counters] @LogFile varchar (2000) = 'C:\\WINDOWS\\TEMP\\sqlPerf.log', @SecondsToRun int =1600, @RunIntervalSeconds int = 2

AS

BEGIN

--File writing variables

DECLARE @OACreate INT, @OAFile INT, @FileName VARCHAR(2000), @RowText VARCHAR(500), @Loops int, @LoopCounter int, @WaitForSeconds varchar (10)

--Variables to save last counter values

DECLARE @LastTPS BIGINT, @LastLRS BIGINT, @LastLTS BIGINT, @LastLWS BIGINT, @LastNDS BIGINT, @LastAWT BIGINT, @LastAWT_Base BIGINT, @LastALWT BIGINT, @LastALWT_Base BIGINT

--Variables to save current counter values

DECLARE @TPS BIGINT, @Active BIGINT, @SCM BIGINT, @LRS BIGINT, @LTS BIGINT, @LWS BIGINT, @NDS BIGINT, @AWT BIGINT, @AWT_Base BIGINT, @ALWT BIGINT, @ALWT_Base BIGINT, @ALWT_DIV BIGINT, @AWT_DIV BIGINT

SELECT @Loops = case when (@SecondsToRun % @RunIntervalSeconds) > 5 then @SecondsToRun / @RunIntervalSeconds + 1 else @SecondsToRun / @RunIntervalSeconds end

SET @LoopCounter = 0

SELECT @WaitForSeconds = CONVERT(varchar, DATEADD(s, @RunIntervalSeconds , 0), 114)

SELECT @FileName = @LogFile + FORMAT ( GETDATE(), '-MM-dd-yyyy_m', 'en-US' ) + '.txt'

--Create the File Handler and Open the File

EXECUTE sp_OACreate 'Scripting.FileSystemObject', @OACreate OUT

EXECUTE sp_OAMethod @OACreate, 'OpenTextFile', @OAFile OUT, @FileName, 2, True, -2

--Write the Header

EXECUTE sp_OAMethod @OAFile, 'WriteLine', NULL,'Transactions/sec, Active Transactions, SQL Cache Memory (KB), Lock Requests/sec, Lock Timeouts/sec, Lock Waits/sec, Number of Deadlocks/sec, Average Wait Time (ms), Average Latch Wait Time (ms)'

--Collect Initial Sample Values

SET ANSI_WARNINGS OFF

SELECT

@LastTPS= max(case when counter_name = 'Transactions/sec' then cntr_value end),

@LastLRS = max(case when counter_name = 'Lock Requests/sec' then cntr_value end),

@LastLTS = max(case when counter_name = 'Lock Timeouts/sec' then cntr_value end),

@LastLWS = max(case when counter_name = 'Lock Waits/sec' then cntr_value end),

@LastNDS = max(case when counter_name = 'Number of Deadlocks/sec' then cntr_value end),

@LastAWT = max(case when counter_name = 'Average Wait Time (ms)' then cntr_value end),

@LastAWT_Base = max(case when counter_name = 'Average Wait Time base' then cntr_value end),

@LastALWT = max(case when counter_name = 'Average Latch Wait Time (ms)' then cntr_value end),

@LastALWT_Base = max(case when counter_name = 'Average Latch Wait Time base' then cntr_value end)

FROM sys.dm_os_performance_counters

WHERE counter_name IN (

'Transactions/sec',

'Lock Requests/sec',

'Lock Timeouts/sec',

'Lock Waits/sec',

'Number of Deadlocks/sec',

'Average Wait Time (ms)',

'Average Wait Time base',

'Average Latch Wait Time (ms)',

'Average Latch Wait Time base') AND instance_name IN( '_Total' ,'')

SET ANSI_WARNINGS ON

WHILE @LoopCounter <= @Loops

BEGIN

WAITFOR DELAY @WaitForSeconds

SET ANSI_WARNINGS OFF

SELECT

@TPS= max(case when counter_name = 'Transactions/sec' then cntr_value end) ,

@Active = max(case when counter_name = 'Active Transactions' then cntr_value end) ,

@SCM = max(case when counter_name = 'SQL Cache Memory (KB)' then cntr_value end) ,

@LRS = max(case when counter_name = 'Lock Requests/sec' then cntr_value end) ,

@LTS = max(case when counter_name = 'Lock Timeouts/sec' then cntr_value end) ,

@LWS = max(case when counter_name = 'Lock Waits/sec' then cntr_value end) ,

@NDS = max(case when counter_name = 'Number of Deadlocks/sec' then cntr_value end) ,

@AWT = max(case when counter_name = 'Average Wait Time (ms)' then cntr_value end) ,

@AWT_Base = max(case when counter_name = 'Average Wait Time base' then cntr_value end) ,

@ALWT = max(case when counter_name = 'Average Latch Wait Time (ms)' then cntr_value end) ,

@ALWT_Base = max(case when counter_name = 'Average Latch Wait Time base' then cntr_value end)

FROM sys.dm_os_performance_counters

WHERE counter_name IN (

'Transactions/sec',

'Active Transactions',

'SQL Cache Memory (KB)',

'Lock Requests/sec',

'Lock Timeouts/sec',

'Lock Waits/sec',

'Number of Deadlocks/sec',

'Average Wait Time (ms)',

'Average Wait Time base',

'Average Latch Wait Time (ms)',

'Average Latch Wait Time base') AND instance_name IN( '_Total' ,'')

SET ANSI_WARNINGS ON

SELECT @AWT_DIV = case when (@AWT_Base - @LastAWT_Base) > 0 then (@AWT_Base - @LastAWT_Base) else 1 end ,

@ALWT_DIV = case when (@ALWT_Base - @LastALWT_Base) > 0 then (@ALWT_Base - @LastALWT_Base) else 1 end

SELECT @RowText = '' + convert(varchar, (@TPS - @LastTPS)/@RunIntervalSeconds) + ', ' +

convert(varchar, @Active) + ', ' +

convert(varchar, @SCM) + ', ' +

convert(varchar, (@LRS - @LastLRS)/@RunIntervalSeconds) + ', ' +

convert(varchar, (@LTS - @LastLTS)/@RunIntervalSeconds) + ', ' +

convert(varchar, (@LWS - @LastLWS)/@RunIntervalSeconds) + ', ' +

convert(varchar, (@NDS - @LastNDS)/@RunIntervalSeconds) + ', ' +

convert(varchar, (@AWT - @LastAWT)/@AWT_DIV) + ', ' +

convert(varchar, (@ALWT - @LastALWT)/@ALWT_DIV)

SELECT @LastTPS = @TPS,

@LastLRS = @LRS,

@LastLTS = @LTS,

@LastLWS = @LWS,

@LastNDS = @NDS,

@LastAWT = @AWT,

@LastAWT_Base = @AWT_Base,

@LastALWT = @ALWT,

@LastALWT_Base = @ALWT_Base

EXECUTE sp_OAMethod @OAFile, 'WriteLine', Null, @RowText

SET @LoopCounter = @LoopCounter + 1

END

--CLEAN UP

EXECUTE sp_OADestroy @OAFile

EXECUTE sp_OADestroy @OACreate

print 'Completed Logging Performance Metrics to file: ' + @FileName

END

GO

Ejecuta la prueba de carga TPROC-C

En SQL Server Management Studio, ejecuta el procedimiento de recopilación con la siguiente secuencia de comandos:

Use master Go exec dbo.sp_write_performance_counters

En la instancia de Compute Engine en la que instalaste HammerDB, inicia la prueba en la aplicación HammerDB:

- En el panel Comparativas, en Usuarios virtuales haz doble clic en Crear para crear los usuarios virtuales, que activarán la pestaña Resultado de usuario virtual.

- Haz doble clic en Ejecutar debajo de la opción Crear para iniciar la prueba.

- Cuando se complete la prueba, verás el cálculo de transacciones por minuto (TPM) en la pestaña Resultado de usuario virtual.

- Puedes encontrar los resultados de tu procedimiento de recopilación en el directorio

c:\Windows\temp. - Guarda todos los valores en una Hoja de cálculo de Google y úsalos para comparar varias ejecuciones de pruebas.