Higher network bandwidths can improve the performance of your GPU instances to support distributed workloads that are running on Compute Engine.

The maximum network bandwidth that is available for instances with attached GPUs on Compute Engine is as follows:

- For A4X accelerator-optimized instances, you can get a maximum network bandwidth of up to 2,000 Gbps, based on the machine type.

- For A4 and A3 accelerator-optimized instances, you can get a maximum network bandwidth of up to 3,600 Gbps, based on the machine type.

- For G4 accelerator-optimized instances, you can get a maximum network bandwidth of up to 400 Gbps, based on the machine type.

- For A2 and G2 accelerator-optimized instances, you can get a maximum network bandwidth of up to 100 Gbps, based on the machine type.

- For N1 general-purpose instances that have P100 and P4 GPUs attached, a maximum network bandwidth of 32 Gbps is available. This is similar to the maximum rate available to N1 instances that don't have GPUs attached. For more information about network bandwidths, see maximum egress data rate.

- For N1 general-purpose instances that have T4 and V100 GPUs attached, you can get a maximum network bandwidth of up to 100 Gbps, based on the combination of GPU and vCPU count.

Review network bandwidth and NIC arrangement

Use the following section to review the network arrangement and bandwidth speed for each GPU machine type.

A4X machine types

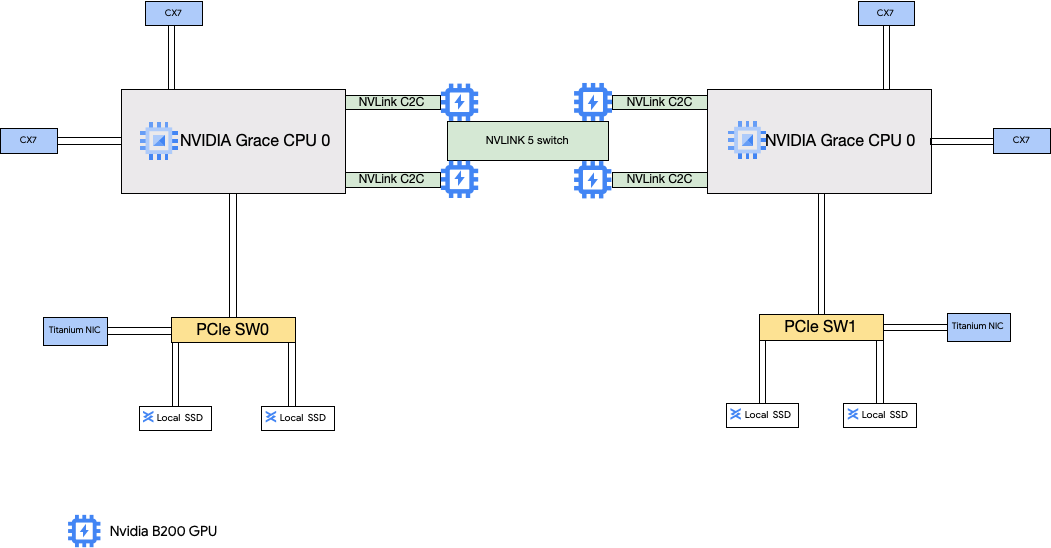

The A4X machine types have NVIDIA GB200 Superchips attached. These Superchips have NVIDIA B200 GPUs.

This machine type has four NVIDIA ConnectX-7 (CX-7) network interface cards (NICs) and two Titanium NICs. The four CX-7 NICs deliver a total network bandwidth of 1,600 Gbps. These CX-7 NICs are dedicated solely to high-bandwidth GPU-to-GPU communication and can't be used for other networking needs such as public internet access. The two Titanium NICs are smart NICs that provide an additional 400 Gbps of network bandwidth for general-purpose networking requirements. Combined, the NICs provide a total maximum network bandwidth of 2,000 Gbps for these machines.
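The bandwidth arithmetic in the preceding paragraph can be checked with a short sketch. The per-NIC figures are derived by dividing each stated total evenly across the NICs in that group, which is an assumption rather than a documented value. The `NicGroup` type and the variable names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NicGroup:
    """A group of identical NICs on one instance (illustrative type)."""
    name: str
    count: int
    total_gbps: int  # total bandwidth of the group, as stated in the text

    @property
    def per_nic_gbps(self) -> float:
        # Assumption: bandwidth is split evenly across the NICs in the group.
        return self.total_gbps / self.count


# Figures for A4X taken from the paragraph above.
A4X_NICS = [
    NicGroup("CX-7 (GPU-to-GPU only)", count=4, total_gbps=1600),
    NicGroup("Titanium (general purpose)", count=2, total_gbps=400),
]


def total_bandwidth_gbps(groups: list[NicGroup]) -> int:
    """Sum the stated totals of every NIC group."""
    return sum(g.total_gbps for g in groups)


if __name__ == "__main__":
    for g in A4X_NICS:
        print(f"{g.name}: {g.count} x {g.per_nic_gbps:.0f} Gbps")
    print(f"Total: {total_bandwidth_gbps(A4X_NICS)} Gbps")
```

Only the Titanium share of this total is available for general-purpose traffic, so plan non-GPU traffic against that figure rather than against the combined total.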

A4X is an exascale platform based on the NVIDIA GB200 NVL72 rack-scale architecture. It introduces the NVIDIA Grace Blackwell Superchip architecture, which delivers NVIDIA Blackwell GPUs and NVIDIA Grace CPUs that are connected by the high-bandwidth NVIDIA NVLink Chip-to-Chip (C2C) interconnect.

The A4X networking architecture uses a rail-aligned design, which is a topology where the corresponding network card of one Compute Engine instance is connected to the network card of another. The four CX-7 NICs on each instance are physically isolated on a 4-way rail-aligned network topology, which allows A4X to scale out in groups of 72 GPUs to thousands of GPUs in a single non-blocking cluster. This hardware-integrated approach provides predictable, low-latency performance essential for large-scale, distributed workloads.

To use these multiple NICs, you need to create 3 Virtual Private Cloud networks as follows:

- 2 VPC networks: each gVNIC must attach to a different VPC network

- 1 VPC network with the RDMA network profile: all four CX-7 NICs share the same VPC network

To set up these networks, see Create VPC networks in the AI Hypercomputer documentation.

Attached NVIDIA GB200 Grace Blackwell Superchips

| Machine type | vCPU count¹ | Instance memory (GB) | Attached Local SSD (GiB) | Physical NIC count | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM3e) |
|---|---|---|---|---|---|---|---|
| a4x-highgpu-4g | 140 | 884 | 12,000 | 6 | 2,000 | 4 | 720 |

¹ A vCPU is implemented as a single hardware hyper-thread on one of the available CPU platforms.

² Maximum egress bandwidth cannot exceed the number given. Actual egress bandwidth depends on the destination IP address and other factors. For more information about network bandwidth, see Network bandwidth.

³ GPU memory is the memory on a GPU device that can be used for temporary storage of data. It is separate from the instance's memory and is specifically designed to handle the higher bandwidth demands of your graphics-intensive workloads.

A4 and A3 Ultra machine types

The A4 machine types have NVIDIA B200 GPUs attached and A3 Ultra machine types have NVIDIA H200 GPUs attached.

These machine types provide eight NVIDIA ConnectX-7 (CX-7) network interface cards (NICs) and two Google virtual NICs (gVNIC). The eight CX-7 NICs deliver a total network bandwidth of 3,200 Gbps. These NICs are dedicated solely to high-bandwidth GPU-to-GPU communication and can't be used for other networking needs such as public internet access. As outlined in the following diagram, each CX-7 NIC is aligned with one GPU to optimize non-uniform memory access (NUMA). All eight GPUs can rapidly communicate with each other by using the all-to-all NVLink bridge that connects them. The two gVNIC network interface cards are smart NICs that provide an additional 400 Gbps of network bandwidth for general-purpose networking requirements. Combined, the NICs provide a total maximum network bandwidth of 3,600 Gbps for these machines.

To use these multiple NICs, you need to create 3 Virtual Private Cloud networks as follows:

- 2 regular VPC networks: each gVNIC must attach to a different VPC network

- 1 RoCE VPC network: all eight CX-7 NICs share the same RoCE VPC network

To set up these networks, see Create VPC networks in the AI Hypercomputer documentation.
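The NIC-to-network assignment described above can be sketched as a small planning helper. This is a minimal illustration, not a provisioning script. The network and NIC names (`gvnic-net-0`, `roce-net`, `cx7-0`) are hypothetical placeholders, and the function models only the two rules from the list: one regular VPC network per gVNIC, and one shared RoCE VPC network for all CX-7 NICs.

```python
def plan_vpc_networks(gvnic_count: int, cx7_count: int) -> dict[str, list[str]]:
    """Map hypothetical VPC network names to the NICs attached to them."""
    if gvnic_count < 0 or cx7_count < 0:
        raise ValueError("NIC counts must be non-negative")

    plan: dict[str, list[str]] = {}

    # Rule 1: each gVNIC attaches to its own regular VPC network.
    for i in range(gvnic_count):
        plan[f"gvnic-net-{i}"] = [f"gvnic-{i}"]

    # Rule 2: all CX-7 NICs share a single RoCE VPC network.
    if cx7_count > 0:
        plan["roce-net"] = [f"cx7-{i}" for i in range(cx7_count)]

    return plan


if __name__ == "__main__":
    # A4 and A3 Ultra: two gVNICs and eight CX-7 NICs.
    for network, nics in plan_vpc_networks(gvnic_count=2, cx7_count=8).items():
        print(f"{network}: {', '.join(nics)}")
```

For the A4 and A3 Ultra layout, the plan contains three networks, which matches the count given in the text. Calling the helper with two gVNICs and four CX-7 NICs gives the equivalent three-network plan for A4X.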

A4 VMs

Attached NVIDIA B200 Blackwell GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Attached Local SSD (GiB) | Physical NIC count | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM3e) |
|---|---|---|---|---|---|---|---|
| a4-highgpu-8g | 224 | 3,968 | 12,000 | 10 | 3,600 | 8 | 1,440 |

¹ A vCPU is implemented as a single hardware hyper-thread on one of the available CPU platforms.

² Maximum egress bandwidth cannot exceed the number given. Actual egress bandwidth depends on the destination IP address and other factors. For more information about network bandwidth, see Network bandwidth.

³ GPU memory is the memory on a GPU device that can be used for temporary storage of data. It is separate from the instance's memory and is specifically designed to handle the higher bandwidth demands of your graphics-intensive workloads.

A3 Ultra VMs

Attached NVIDIA H200 GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Attached Local SSD (GiB) | Physical NIC count | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM3e) |
|---|---|---|---|---|---|---|---|
| a3-ultragpu-8g | 224 | 2,952 | 12,000 | 10 | 3,600 | 8 | 1,128 |

¹ A vCPU is implemented as a single hardware hyper-thread on one of the available CPU platforms.

² Maximum egress bandwidth cannot exceed the number given. Actual egress bandwidth depends on the destination IP address and other factors. For more information about network bandwidth, see Network bandwidth.

³ GPU memory is the memory on a GPU device that can be used for temporary storage of data. It is separate from the instance's memory and is specifically designed to handle the higher bandwidth demands of your graphics-intensive workloads.

A3 Mega, High, and Edge machine types

These machine types have H100 GPUs attached. Each of these machine types has a fixed GPU count, vCPU count, and memory size.

- Single NIC A3 VMs: For A3 VMs with 1 to 4 GPUs attached, only a single physical network interface card (NIC) is available.

- Multi-NIC A3 VMs: For A3 VMs with 8 GPUs attached, multiple physical NICs are available. For these A3 machine types, the NICs are arranged as follows on a Peripheral Component Interconnect Express (PCIe) bus:

  - For the A3 Mega machine type: a NIC arrangement of 8+1 is available. With this arrangement, 8 NICs share the same PCIe bus, and 1 NIC resides on a separate PCIe bus.

  - For the A3 High machine type: a NIC arrangement of 4+1 is available. With this arrangement, 4 NICs share the same PCIe bus, and 1 NIC resides on a separate PCIe bus.

  - For the A3 Edge machine type: a NIC arrangement of 4+1 is available. With this arrangement, 4 NICs share the same PCIe bus, and 1 NIC resides on a separate PCIe bus. These 5 NICs provide a total network bandwidth of 400 Gbps for each VM.

NICs that share the same PCIe bus have a non-uniform memory access (NUMA) alignment of one NIC per two NVIDIA H100 GPUs. These NICs are ideal for dedicated high-bandwidth GPU-to-GPU communication. The physical NIC that resides on a separate PCIe bus is ideal for other networking needs. For instructions on how to set up networking for A3 High and A3 Edge VMs, see set up jumbo frame MTU networks.
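The "one NIC per two GPUs" alignment for the 4+1 arrangement (A3 High and A3 Edge with 8 GPUs) can be pictured with the following sketch. It assumes that GPUs are paired with shared-bus NICs in index order, which is a simplification for illustration. The actual pairing depends on the PCIe topology of the VM, and the function name is hypothetical.

```python
def shared_bus_nic_for_gpu(
    gpu_index: int, gpu_count: int = 8, shared_bus_nics: int = 4
) -> int:
    """Return the index of the shared-bus NIC paired with a GPU.

    Assumption: GPUs are grouped onto NICs in index order, two per NIC.
    The real pairing is determined by the PCIe topology of the VM.
    """
    if not 0 <= gpu_index < gpu_count:
        raise ValueError(f"gpu_index must be in [0, {gpu_count})")
    gpus_per_nic = gpu_count // shared_bus_nics  # 8 // 4 == 2
    return gpu_index // gpus_per_nic


if __name__ == "__main__":
    for gpu in range(8):
        print(f"GPU {gpu} -> shared-bus NIC {shared_bus_nic_for_gpu(gpu)}")
```

The fifth NIC, which sits on its own PCIe bus, is deliberately left out of the mapping because the text reserves it for other networking needs.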

A3 Mega

Attached NVIDIA H100 GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Attached Local SSD (GiB) | Physical NIC count | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM3) |
|---|---|---|---|---|---|---|---|
| a3-megagpu-8g | 208 | 1,872 | 6,000 | 9 | 1,800 | 8 | 640 |

A3 High

Attached NVIDIA H100 GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Attached Local SSD (GiB) | Physical NIC count | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM3) |
|---|---|---|---|---|---|---|---|
| a3-highgpu-1g | 26 | 234 | 750 | 1 | 25 | 1 | 80 |
| a3-highgpu-2g | 52 | 468 | 1,500 | 1 | 50 | 2 | 160 |
| a3-highgpu-4g | 104 | 936 | 3,000 | 1 | 100 | 4 | 320 |
| a3-highgpu-8g | 208 | 1,872 | 6,000 | 5 | 1,000 | 8 | 640 |

A3 Edge

Attached NVIDIA H100 GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Attached Local SSD (GiB) | Physical NIC count | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM3) |
|---|---|---|---|---|---|---|---|
| a3-edgegpu-8g | 208 | 1,872 | 6,000 | 5 | | 8 | 640 |

¹ A vCPU is implemented as a single hardware hyper-thread on one of the available CPU platforms.

² Maximum egress bandwidth cannot exceed the number given. Actual egress bandwidth depends on the destination IP address and other factors. For more information about network bandwidth, see Network bandwidth.

³ GPU memory is the memory on a GPU device that can be used for temporary storage of data. It is separate from the instance's memory and is specifically designed to handle the higher bandwidth demands of your graphics-intensive workloads.

A2 machine types

Each A2 machine type has a fixed number of NVIDIA A100 40GB or NVIDIA A100 80GB GPUs attached. Each machine type also has a fixed vCPU count and memory size.

The A2 machine series is available in two types:

- A2 Ultra: these machine types have A100 80GB GPUs and Local SSD disks attached.

- A2 Standard: these machine types have A100 40GB GPUs attached.

A2 Ultra

Attached NVIDIA A100 80GB GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Attached Local SSD (GiB) | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM2e) |
|---|---|---|---|---|---|---|
| a2-ultragpu-1g | 12 | 170 | 375 | 24 | 1 | 80 |
| a2-ultragpu-2g | 24 | 340 | 750 | 32 | 2 | 160 |
| a2-ultragpu-4g | 48 | 680 | 1,500 | 50 | 4 | 320 |
| a2-ultragpu-8g | 96 | 1,360 | 3,000 | 100 | 8 | 640 |

A2 Standard

Attached NVIDIA A100 40GB GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Local SSD supported | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB HBM2) |
|---|---|---|---|---|---|---|
| a2-highgpu-1g | 12 | 85 | Yes | 24 | 1 | 40 |
| a2-highgpu-2g | 24 | 170 | Yes | 32 | 2 | 80 |
| a2-highgpu-4g | 48 | 340 | Yes | 50 | 4 | 160 |
| a2-highgpu-8g | 96 | 680 | Yes | 100 | 8 | 320 |
| a2-megagpu-16g | 96 | 1,360 | Yes | 100 | 16 | 640 |

¹ A vCPU is implemented as a single hardware hyper-thread on one of the available CPU platforms.

² Maximum egress bandwidth cannot exceed the number given. Actual egress bandwidth depends on the destination IP address and other factors. For more information about network bandwidth, see Network bandwidth.

³ GPU memory is the memory on a GPU device that can be used for temporary storage of data. It is separate from the instance's memory and is specifically designed to handle the higher bandwidth demands of your graphics-intensive workloads.

G4 machine types

G4 accelerator-optimized machine types use NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs (nvidia-rtx-pro-6000) and are suitable for NVIDIA Omniverse simulation workloads, graphics-intensive applications, video transcoding, and virtual desktops. G4 machine types also provide a low-cost solution for performing single-host inference and model tuning compared with A series machine types.

Attached NVIDIA RTX PRO 6000 GPUs

| Machine type | vCPU count¹ | Instance memory (GB) | Maximum Titanium SSD supported (GiB)² | Physical NIC count | Maximum network bandwidth (Gbps)³ | GPU count | GPU memory⁴ (GB GDDR7) |
|---|---|---|---|---|---|---|---|
| g4-standard-48 | 48 | 180 | 1,500 | 1 | 50 | 1 | 96 |
| g4-standard-96 | 96 | 360 | 3,000 | 1 | 100 | 2 | 192 |
| g4-standard-192 | 192 | 720 | 6,000 | 1 | 200 | 4 | 384 |
| g4-standard-384 | 384 | 1,440 | 12,000 | 2 | 400 | 8 | 768 |

¹ A vCPU is implemented as a single hardware hyper-thread on one of the available CPU platforms.

² You can add Titanium SSD disks when creating a G4 instance. For the number of disks you can attach, see Machine types that require you to choose a number of Local SSD disks.

³ Maximum egress bandwidth cannot exceed the number given. Actual egress bandwidth depends on the destination IP address and other factors. See Network bandwidth.

⁴ GPU memory is the memory on a GPU device that can be used for temporary storage of data. It is separate from the instance's memory and is specifically designed to handle the higher bandwidth demands of your graphics-intensive workloads.

G2 machine types

G2 accelerator-optimized machine types have NVIDIA L4 GPUs attached and are ideal for cost-optimized inference, graphics-intensive, and high performance computing workloads.

Each G2 machine type also has a default memory and a custom memory range. The custom memory range defines the amount of memory that you can allocate to your instance for each machine type. You can also add Local SSD disks when creating a G2 instance. For the number of disks you can attach, see Machine types that require you to choose a number of Local SSD disks.

To get the higher network bandwidth rates (50 Gbps or higher) applied to most GPU instances, it is recommended that you use Google Virtual NIC (gVNIC). For more information about creating GPU instances that use gVNIC, see Creating GPU instances that use higher bandwidths.

Attached NVIDIA L4 GPUs

| Machine type | vCPU count¹ | Default instance memory (GB) | Custom instance memory range (GB) | Max Local SSD supported (GiB) | Maximum network bandwidth (Gbps)² | GPU count | GPU memory³ (GB GDDR6) |
|---|---|---|---|---|---|---|---|
| g2-standard-4 | 4 | 16 | 16 to 32 | 375 | 10 | 1 | 24 |
| g2-standard-8 | 8 | 32 | 32 to 54 | 375 | 16 | 1 | 24 |
| g2-standard-12 | 12 | 48 | 48 to 54 | 375 | 16 | 1 | 24 |
| g2-standard-16 | 16 | 64 | 54 to 64 | 375 | 32 | 1 | 24 |
| g2-standard-24 | 24 | 96 | 96 to 108 | 750 | 32 | 2 | 48 |
| g2-standard-32 | 32 | 128 | 96 to 128 | 375 | 32 | 1 | 24 |
| g2-standard-48 | 48 | 192 | 192 to 216 | 1,500 | 50 | 4 | 96 |
| g2-standard-96 | 96 | 384 | 384 to 432 | 3,000 | 100 | 8 | 192 |

¹ A vCPU is implemented as a single hardware hyper-thread on one of the available CPU platforms.

² Maximum egress bandwidth cannot exceed the number given. Actual egress bandwidth depends on the destination IP address and other factors. For more information about network bandwidth, see Network bandwidth.

³ GPU memory is the memory on a GPU device that can be used for temporary storage of data. It is separate from the instance's memory and is specifically designed to handle the higher bandwidth demands of your graphics-intensive workloads.

N1 + GPU machine types

For N1 general-purpose instances that have T4 and V100 GPUs attached, you can get a maximum network bandwidth of up to 100 Gbps, based on the combination of GPU and vCPU count. For all other N1 GPU instances, see Overview.

Review the following section to calculate the maximum network bandwidth that is available for your T4 and V100 instances based on the GPU model, vCPU, and GPU count.

5 vCPUs or fewer

For T4 and V100 instances that have 5 vCPUs or fewer, a maximum network bandwidth of 10 Gbps is available.

More than 5 vCPUs

For T4 and V100 instances that have more than 5 vCPUs, maximum network bandwidth is calculated based on the number of vCPUs and GPUs for that VM.

To get the higher network bandwidth rates (50 Gbps or higher) applied to most GPU instances, it is recommended that you use Google Virtual NIC (gVNIC). For more information about creating GPU instances that use gVNIC, see Creating GPU instances that use higher bandwidths.

| GPU model | Number of GPUs | Maximum network bandwidth calculation |
|---|---|---|
| NVIDIA V100 | 1 | min(vcpu_count * 2, 32) |
| NVIDIA V100 | 2 | min(vcpu_count * 2, 32) |
| NVIDIA V100 | 4 | min(vcpu_count * 2, 50) |
| NVIDIA V100 | 8 | min(vcpu_count * 2, 100) |
| NVIDIA T4 | 1 | min(vcpu_count * 2, 32) |
| NVIDIA T4 | 2 | min(vcpu_count * 2, 50) |
| NVIDIA T4 | 4 | min(vcpu_count * 2, 100) |
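The calculation rules for T4 and V100 instances can be expressed as a short function. The following is a sketch of the rules stated in this section, not an official tool. The function name, the lookup dictionary, and the short model keys (`"V100"`, `"T4"`) are illustrative choices.

```python
# Caps in Gbps from the table above, keyed by (GPU model, number of GPUs).
BANDWIDTH_CAP_GBPS = {
    ("V100", 1): 32,
    ("V100", 2): 32,
    ("V100", 4): 50,
    ("V100", 8): 100,
    ("T4", 1): 32,
    ("T4", 2): 50,
    ("T4", 4): 100,
}


def n1_max_bandwidth_gbps(gpu_model: str, gpu_count: int, vcpu_count: int) -> int:
    """Maximum network bandwidth (Gbps) for an N1 instance with T4 or V100 GPUs."""
    if vcpu_count < 1:
        raise ValueError("vcpu_count must be at least 1")
    try:
        cap = BANDWIDTH_CAP_GBPS[(gpu_model, gpu_count)]
    except KeyError:
        raise ValueError(
            f"no rule for {gpu_count} x {gpu_model} in the table"
        ) from None

    # Instances with 5 vCPUs or fewer are limited to 10 Gbps.
    if vcpu_count <= 5:
        return 10

    # Otherwise: 2 Gbps per vCPU, up to the cap for the GPU configuration.
    return min(vcpu_count * 2, cap)


if __name__ == "__main__":
    print(n1_max_bandwidth_gbps("T4", 4, 48))    # 2 Gbps per vCPU, below the cap
    print(n1_max_bandwidth_gbps("V100", 8, 96))  # limited by the 100 Gbps cap
```

Because the cap depends on the GPU count, adding vCPUs beyond half the cap value does not raise the limit. For example, a 4-GPU T4 instance reaches its cap at 50 vCPUs.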

MTU settings and GPU machine types

To maximize network bandwidth, set a higher maximum transmission unit (MTU) value for your VPC networks. Higher MTU values increase the packet size and reduce the packet-header overhead, which in turn increases payload data throughput.

For GPU machine types, we recommend the following MTU settings for your VPC networks.

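| GPU machine type | Recommended MTU for a VPC network (bytes) | Recommended MTU for a VPC network with RDMA profiles (bytes) |
|---|---|---|
| A4X, A4, and A3 Ultra | 8896 | 8896 |
| A3 Mega, A3 High, and A3 Edge | 8244 | N/A |
| Other GPU machine types (A2, G4, G2, and N1 with attached GPUs) | 8896 | N/A |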

When setting the MTU value, note the following:

- 8192 is two 4 KB pages.

- 8244 is recommended in A3 Mega, A3 High, and A3 Edge VMs for GPU NICs that have header split enabled.

- Use a value of 8896 unless otherwise indicated in the table.
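To see why a larger MTU increases payload throughput, the following sketch estimates the fraction of on-wire bytes that carry application data. It is a rough model under stated assumptions: plain IPv4 and TCP headers without options (40 bytes inside the MTU) and standard Ethernet framing overhead (38 bytes outside the MTU). Cloud networks add their own encapsulation, so treat the results as illustrative rather than as measured values. The value 1460 is included only as a smaller MTU for comparison.

```python
IP_TCP_HEADER_BYTES = 40       # IPv4 header (20) + TCP header (20), no options
ETHERNET_OVERHEAD_BYTES = 38   # preamble/SFD (8) + header (14) + FCS (4) + inter-frame gap (12)


def payload_efficiency(mtu: int) -> float:
    """Fraction of on-wire bytes that carry application payload."""
    if mtu <= IP_TCP_HEADER_BYTES:
        raise ValueError("MTU must be larger than the IP and TCP headers")
    payload = mtu - IP_TCP_HEADER_BYTES
    on_wire = mtu + ETHERNET_OVERHEAD_BYTES
    return payload / on_wire


if __name__ == "__main__":
    for mtu in (1460, 8244, 8896):
        print(f"MTU {mtu}: {payload_efficiency(mtu):.2%} payload")
```

Under these assumptions, the two recommended values differ by well under one percentage point, while both are noticeably more efficient than the smaller comparison value. A larger MTU also means fewer packets for the same amount of data, a per-packet processing saving that this model does not capture.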

Create high bandwidth GPU machines

To create GPU instances that use higher network bandwidths, use one of the following methods based on the machine type:

- To create A2, G2, and N1 instances that use higher network bandwidths, see Use higher network bandwidth for A2, G2, and N1 instances. To test or verify the bandwidth for these machines, you can use the benchmarking test. For more information, see Checking network bandwidth.

- To create A3 Mega instances that use higher network bandwidths, see Deploy an A3 Mega Slurm cluster for ML training. To test or verify the bandwidth for these machines, use a benchmarking test by following the steps in Checking network bandwidth.

- To create A3 High and A3 Edge instances that use higher network bandwidths, see Create an A3 VM with GPUDirect-TCPX enabled. To test or verify the bandwidth for these machines, you can use the benchmarking test. For more information, see Checking network bandwidth.

- For other accelerator-optimized machine types, no action is required to use higher network bandwidth; creating an instance as documented already uses high network bandwidth. To learn how to create instances for other accelerator-optimized machine types, see Create a VM that has attached GPUs.

What's next?

- Learn more about GPU platforms.

- Learn how to create instances with attached GPUs.

- Learn how to use higher network bandwidth.

- Learn about GPU pricing.