Create and use tables

This document describes how to create and use standard (built-in) tables in BigQuery. For information about creating other table types, see the following:

After creating a table, you can do the following:

- Control access to your table data.

- Get information about your tables.

- List the tables in a dataset.

- Get table metadata.

For more information about managing tables including updating table properties, copying a table, and deleting a table, see Managing tables.

Before you begin

Grant Identity and Access Management (IAM) roles that give users the necessary permissions to perform each task in this document.

Required roles

To get the permissions that you need to create a table, ask your administrator to grant you the following IAM roles:

-

BigQuery Job User (

roles/bigquery.jobUser) on the project if you're creating a table by loading data or by saving query results to a table. -

BigQuery Data Editor (

roles/bigquery.dataEditor) on the dataset where you're creating the table.

For more information about granting roles, see Manage access to projects, folders, and organizations.

These predefined roles contain the permissions required to create a table. To see the exact permissions that are required, expand the Required permissions section:

Required permissions

The following permissions are required to create a table:

-

bigquery.tables.createon the dataset where you're creating the table. -

bigquery.tables.getDataon all tables and views that your query references if you're saving query results as a table. -

bigquery.jobs.createon the project if you're creating the table by loading data or by saving query results to a table. -

bigquery.tables.updateDataon the table if you're appending to or overwriting a table with query results.

You might also be able to get these permissions with custom roles or other predefined roles.

Table naming

When you create a table in BigQuery, the table name must be unique per dataset. The table name can:

- Contain characters with a total of up to 1,024 UTF-8 bytes.

- Contain Unicode characters in category L (letter), M (mark), N (number), Pc (connector, including underscore), Pd (dash), Zs (space). For more information, see General Category.

The following are all examples of valid table names:

table 01, ग्राहक, 00_お客様, étudiant-01.

Caveats:

- Table names are case-sensitive by default.

mytableandMyTablecan coexist in the same dataset, unless they are part of a dataset with case-sensitivity turned off. - Some table names and table name prefixes are reserved. If you receive an error saying that your table name or prefix is reserved, then select a different name and try again.

If you include multiple dot operators (

.) in a sequence, the duplicate operators are implicitly stripped.For example, this:

project_name....dataset_name..table_nameBecomes this:

project_name.dataset_name.table_name

Create tables

You can create a table in BigQuery in the following ways:

- Manually by using the Google Cloud console or the bq command-line tool

bq mkcommand. - Programmatically by calling the

tables.insertAPI method. - By using the client libraries.

- From query results.

- By defining a table that references an external data source.

- When you load data.

- By using a

CREATE TABLEdata definition language (DDL) statement.

Create an empty table with a schema definition

You can create an empty table with a schema definition in the following ways:

- Enter the schema using the Google Cloud console.

- Provide the schema inline using the bq command-line tool.

- Submit a JSON schema file using the bq command-line tool.

- Provide the schema in a table resource

when calling the APIs

tables.insertmethod.

For more information about specifying a table schema, see Specifying a schema.

After the table is created, you can load data into it or populate it by writing query results to it.

To create an empty table with a schema definition:

Console

In the Google Cloud console, go to the BigQuery page.

- In the left pane, click Explorer.

- In the Explorer pane, expand your project, click Datasets, and then select a dataset.

- In the Dataset info section, click Create table.

- In the Create table pane, specify the following details:

- In the Source section, select Empty table in the Create table from list.

- In the Destination section, specify the following details:

- For Dataset, select the dataset in which you want to create the table.

- In the Table field, enter the name of the table that you want to create.

- Verify that the Table type field is set to Native table.

- In the Schema section, enter the schema

definition.

You can enter schema information manually by using one of

the following methods:

- Option 1: Click Edit as text and paste the schema in the form of a

JSON array. When you use a JSON array, you generate the schema using the

same process as creating a JSON schema file.

You can view the schema of an existing table in JSON format by entering the following

command:

bq show --format=prettyjson dataset.table

- Option 2: Click Add field and enter the table schema. Specify each field's Name, Type, and Mode.

- Option 1: Click Edit as text and paste the schema in the form of a

JSON array. When you use a JSON array, you generate the schema using the

same process as creating a JSON schema file.

You can view the schema of an existing table in JSON format by entering the following

command:

- Optional: Specify Partition and cluster settings. For more information, see Creating partitioned tables and Creating and using clustered tables.

- Optional: In the Advanced options section, if you want to use a customer-managed encryption key, then select the Use a customer-managed encryption key (CMEK) option. By default, BigQuery encrypts customer content stored at rest by using a Google-owned and Google-managed encryption key.

- Click Create table.

SQL

The following example creates a table named newtable that expires on

January 1, 2023:

In the Google Cloud console, go to the BigQuery page.

In the query editor, enter the following statement:

CREATE TABLE mydataset.newtable ( x INT64 OPTIONS (description = 'An optional INTEGER field'), y STRUCT < a ARRAY <STRING> OPTIONS (description = 'A repeated STRING field'), b BOOL > ) OPTIONS ( expiration_timestamp = TIMESTAMP '2023-01-01 00:00:00 UTC', description = 'a table that expires in 2023', labels = [('org_unit', 'development')]);

Click Run.

For more information about how to run queries, see Run an interactive query.

bq

-

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Use the

bq mkcommand with the--tableor-tflag. You can supply table schema information inline or with a JSON schema file. For a full list of parameters, see thebq mk --tablereference. Some optional parameters include:--expiration--description--time_partitioning_field--time_partitioning_type--range_partitioning--clustering_fields--destination_kms_key--label

--time_partitioning_field,--time_partitioning_type,--range_partitioning,--clustering_fields, and--destination_kms_keyare not demonstrated here. Refer to the following links for more information on these optional parameters:- For more information about

--time_partitioning_field,--time_partitioning_type, and--range_partitioningsee partitioned tables. - For more information about

--clustering_fields, see clustered tables. - For more information about

--destination_kms_key, see customer-managed encryption keys.

If you are creating a table in a project other than your default project, add the project ID to the dataset in the following format:

project_id:dataset.To create an empty table in an existing dataset with a schema definition, enter the following:

bq mk \ --table \ --expiration=integer \ --description=description \ --label=key_1:value_1 \ --label=key_2:value_2 \ --add_tags=key_3:value_3[,...] \ project_id:dataset.table \ schema

Replace the following:

- integer is the default lifetime (in seconds) for the table. The minimum value is 3600 seconds (one hour). The expiration time evaluates to the current UTC time plus the integer value. If you set the expiration time when you create a table, the dataset's default table expiration setting is ignored.

- description is a description of the table in quotes.

- key_1:value_1 and key_2:value_2 are key-value pairs that specify labels.

- key_3:value_3 are key-value pairs that specify tags. Add multiple tags under the same flag with commas between key:value pairs.

- project_id is your project ID.

- dataset is a dataset in your project.

- table is the name of the table you're creating.

- schema is an inline schema definition in the format field:data_type,field:data_type or the path to the JSON schema file on your local machine.

When you specify the schema on the command line, you cannot include a

RECORD(STRUCT) type, you cannot include a column description, and you cannot specify the column mode. All modes default toNULLABLE. To include descriptions, modes, andRECORDtypes, supply a JSON schema file instead.Examples:

Enter the following command to create a table using an inline schema definition. This command creates a table named

mytableinmydatasetin your default project. The table expiration is set to 3600 seconds (1 hour), the description is set toThis is my table, and the label is set toorganization:development. The command uses the-tshortcut instead of--table. The schema is specified inline as:qtr:STRING,sales:FLOAT,year:STRING.bq mk \ -t \ --expiration 3600 \ --description "This is my table" \ --label organization:development \ mydataset.mytable \ qtr:STRING,sales:FLOAT,year:STRING

Enter the following command to create a table using a JSON schema file. This command creates a table named

mytableinmydatasetin your default project. The table expiration is set to 3600 seconds (1 hour), the description is set toThis is my table, and the label is set toorganization:development. The path to the schema file is/tmp/myschema.json.bq mk \ --table \ --expiration 3600 \ --description "This is my table" \ --label organization:development \ mydataset.mytable \ /tmp/myschema.json

Enter the following command to create a table using an JSON schema file. This command creates a table named

mytableinmydatasetinmyotherproject. The table expiration is set to 3600 seconds (1 hour), the description is set toThis is my table, and the label is set toorganization:development. The path to the schema file is/tmp/myschema.json.bq mk \ --table \ --expiration 3600 \ --description "This is my table" \ --label organization:development \ myotherproject:mydataset.mytable \ /tmp/myschema.json

After the table is created, you can update the table's expiration, description, and labels. You can also modify the schema definition.

Terraform

Use the

google_bigquery_table

resource.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Create a table

The following example creates a table named mytable:

Create a table and grant access to it

The following example creates a table named mytable, then uses the

google_bigquery_table_iam_policy resource to grant

access to it. Take this step only if you want to grant access

to the table to principals who don't have access to the dataset in which

the table resides.

Create a table with a customer-managed encryption key

The following example creates a table named mytable, and also uses the

google_kms_crypto_key

and

google_kms_key_ring

resources to specify a

Cloud Key Management Service key for the

table. You must

enable the Cloud Key Management Service API before running this example.

To apply your Terraform configuration in a Google Cloud project, complete the steps in the following sections.

Prepare Cloud Shell

- Launch Cloud Shell.

-

Set the default Google Cloud project where you want to apply your Terraform configurations.

You only need to run this command once per project, and you can run it in any directory.

export GOOGLE_CLOUD_PROJECT=PROJECT_ID

Environment variables are overridden if you set explicit values in the Terraform configuration file.

Prepare the directory

Each Terraform configuration file must have its own directory (also called a root module).

-

In Cloud Shell, create a directory and a new

file within that directory. The filename must have the

.tfextension—for examplemain.tf. In this tutorial, the file is referred to asmain.tf.mkdir DIRECTORY && cd DIRECTORY && touch main.tf

-

If you are following a tutorial, you can copy the sample code in each section or step.

Copy the sample code into the newly created

main.tf.Optionally, copy the code from GitHub. This is recommended when the Terraform snippet is part of an end-to-end solution.

- Review and modify the sample parameters to apply to your environment.

- Save your changes.

-

Initialize Terraform. You only need to do this once per directory.

terraform init

Optionally, to use the latest Google provider version, include the

-upgradeoption:terraform init -upgrade

Apply the changes

-

Review the configuration and verify that the resources that Terraform is going to create or

update match your expectations:

terraform plan

Make corrections to the configuration as necessary.

-

Apply the Terraform configuration by running the following command and entering

yesat the prompt:terraform apply

Wait until Terraform displays the "Apply complete!" message.

- Open your Google Cloud project to view the results. In the Google Cloud console, navigate to your resources in the UI to make sure that Terraform has created or updated them.

API

Call the tables.insert

method with a defined table resource.

C#

Before trying this sample, follow the C# setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery C# API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

PHP

Before trying this sample, follow the PHP setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery PHP API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Ruby

Before trying this sample, follow the Ruby setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Ruby API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Create an empty table without a schema definition

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Create a table from a query result

To create a table from a query result, write the results to a destination table.

Console

Open the BigQuery page in the Google Cloud console.

In the left pane, click Explorer:

If you don't see the left pane, click Expand left pane to open the pane.

In the Explorer pane, expand your project, click Datasets, and then select a dataset.

In the query editor, enter a valid SQL query.

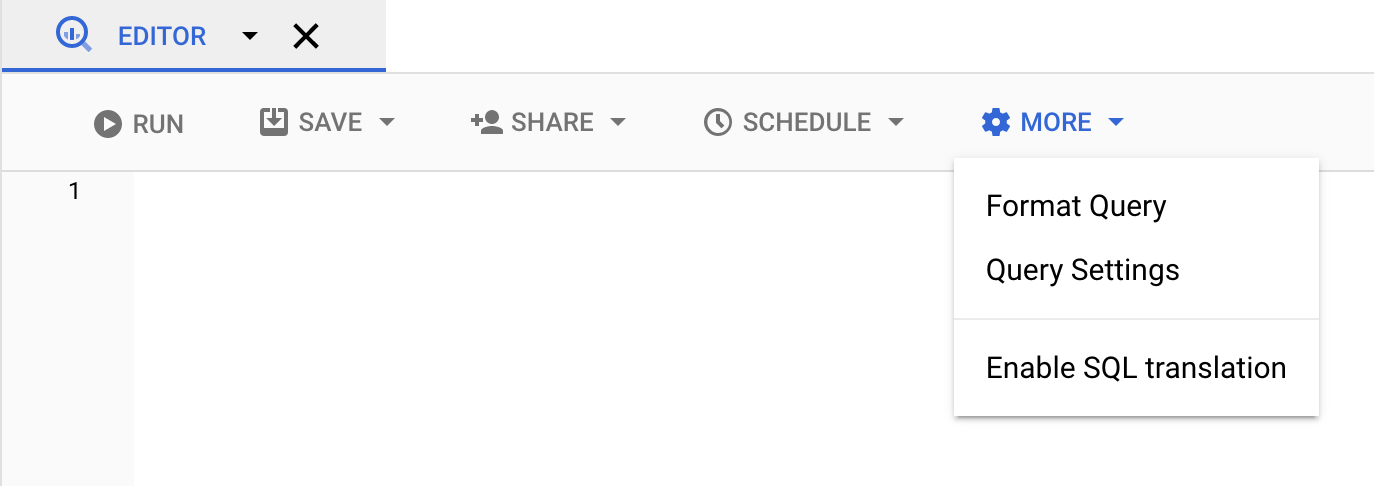

Click More and then select Query settings.

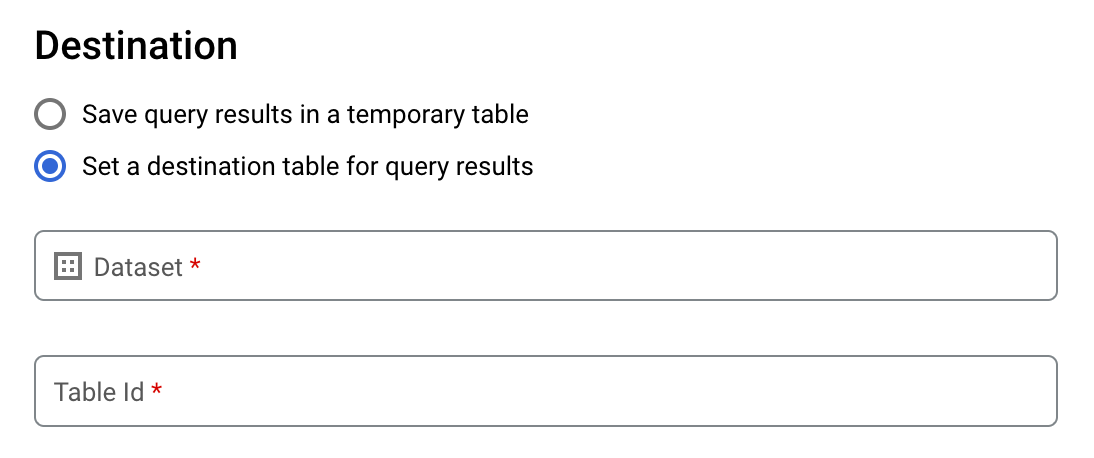

Select the Set a destination table for query results option.

In the Destination section, select the Dataset in which you want to create the table, and then choose a Table Id.

In the Destination table write preference section, choose one of the following:

- Write if empty — Writes the query results to the table only if the table is empty.

- Append to table — Appends the query results to an existing table.

- Overwrite table — Overwrites an existing table with the same name using the query results.

Optional: For Data location, choose your location.

To update the query settings, click Save.

Click Run. This creates a query job that writes the query results to the table you specified.

Alternatively, if you forget to specify a destination table before running your query, you can copy the cached results table to a permanent table by clicking the Save Results button above the editor.

SQL

The following example uses the

CREATE TABLE statement

to create the trips table from data in the public

bikeshare_trips table:

In the Google Cloud console, go to the BigQuery page.

In the query editor, enter the following statement:

CREATE TABLE mydataset.trips AS ( SELECT bike_id, start_time, duration_minutes FROM bigquery-public-data.austin_bikeshare.bikeshare_trips );

Click Run.

For more information about how to run queries, see Run an interactive query.

For more information, see Creating a new table from an existing table.

bq

-

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Enter the

bq querycommand and specify the--destination_tableflag to create a permanent table based on the query results. Specify theuse_legacy_sql=falseflag to use GoogleSQL syntax. To write the query results to a table that is not in your default project, add the project ID to the dataset name in the following format:project_id:dataset.Optional: Supply the

--locationflag and set the value to your location.To control the write disposition for an existing destination table, specify one of the following optional flags:

--append_table: If the destination table exists, the query results are appended to it.--replace: If the destination table exists, it is overwritten with the query results.bq --location=location query \ --destination_table project_id:dataset.table \ --use_legacy_sql=false 'query'

Replace the following:

locationis the name of the location used to process the query. The--locationflag is optional. For example, if you are using BigQuery in the Tokyo region, you can set the flag's value toasia-northeast1. You can set a default value for the location by using the.bigqueryrcfile.project_idis your project ID.datasetis the name of the dataset that contains the table to which you are writing the query results.tableis the name of the table to which you're writing the query results.queryis a query in GoogleSQL syntax.If no write disposition flag is specified, the default behavior is to write the results to the table only if it is empty. If the table exists and it is not empty, the following error is returned:

BigQuery error in query operation: Error processing job project_id:bqjob_123abc456789_00000e1234f_1: Already Exists: Table project_id:dataset.table.Examples:

Enter the following command to write query results to a destination table named

mytableinmydataset. The dataset is in your default project. Since no write disposition flag is specified in the command, the table must be new or empty. Otherwise, anAlready existserror is returned. The query retrieves data from the USA Name Data public dataset.bq query \ --destination_table mydataset.mytable \ --use_legacy_sql=false \ 'SELECT name, number FROM `bigquery-public-data`.usa_names.usa_1910_current WHERE gender = "M" ORDER BY number DESC'

Enter the following command to use query results to overwrite a destination table named

mytableinmydataset. The dataset is in your default project. The command uses the--replaceflag to overwrite the destination table.bq query \ --destination_table mydataset.mytable \ --replace \ --use_legacy_sql=false \ 'SELECT name, number FROM `bigquery-public-data`.usa_names.usa_1910_current WHERE gender = "M" ORDER BY number DESC'

Enter the following command to append query results to a destination table named

mytableinmydataset. The dataset is inmy-other-project, not your default project. The command uses the--append_tableflag to append the query results to the destination table.bq query \ --append_table \ --use_legacy_sql=false \ --destination_table my-other-project:mydataset.mytable \ 'SELECT name, number FROM `bigquery-public-data`.usa_names.usa_1910_current WHERE gender = "M" ORDER BY number DESC'

The output for each of these examples looks like the following. For readability, some output is truncated.

Waiting on bqjob_r123abc456_000001234567_1 ... (2s) Current status: DONE +---------+--------+ | name | number | +---------+--------+ | Robert | 10021 | | John | 9636 | | Robert | 9297 | | ... | +---------+--------+

API

To save query results to a permanent table, call the

jobs.insert method,

configure a query job, and include a value for the destinationTable

property. To control the write disposition for an existing destination

table, configure the writeDisposition property.

To control the processing location for the query job, specify the location

property in the jobReference section of the job resource.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

To save query results to a permanent table, set the destination table to the desired TableId in a QueryJobConfiguration.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the

BigQuery quickstart using

client libraries.

For more information, see the

BigQuery Python API

reference documentation.

To authenticate to BigQuery, set up Application Default Credentials.

For more information, see

Set up authentication for client libraries.

Create a table that references an external data source

An external data source is a data source that you can query directly from BigQuery, even though the data is not stored in BigQuery storage. For example, you might have data in a different Google Cloud database, in files in Cloud Storage, or in a different cloud product altogether that you would like to analyze in BigQuery, but that you aren't prepared to migrate.

For more information, see Introduction to external data sources.

Create a table when you load data

When you load data into BigQuery, you can load data into a new table or partition, you can append data to an existing table or partition, or you can overwrite a table or partition. You don't need to create an empty table before loading data into it. You can create the new table and load your data at the same time.

When you load data into BigQuery, you can supply the table or partition schema, or for supported data formats, you can use schema auto-detection.

For more information about loading data, see Introduction to loading data into BigQuery.

Control access to tables

To configure access to tables and views, you can grant an IAM role to an entity at the following levels, listed in order of range of resources allowed (largest to smallest):

- a high level in the Google Cloud resource hierarchy such as the project, folder, or organization level

- the dataset level

- the table or view level

You can also restrict data access within tables, by using the following methods:

Access with any resource protected by IAM is additive. For example, if an entity does not have access at the high level such as a project, you could grant the entity access at the dataset level, and then the entity will have access to the tables and views in the dataset. Similarly, if the entity does not have access at the high level or the dataset level, you could grant the entity access at the table or view level.

Granting IAM roles at a higher level in the Google Cloud resource hierarchy such as the project, folder, or organization level gives the entity access to a broad set of resources. For example, granting a role to an entity at the project level gives that entity permissions that apply to all datasets throughout the project.

Granting a role at the dataset level specifies the operations an entity is allowed to perform on tables and views in that specific dataset, even if the entity does not have access at a higher level. For information on configuring dataset-level access controls, see Controlling access to datasets.

Granting a role at the table or view level specifies the operations an entity is allowed to perform on specific tables and views, even if the entity does not have access at a higher level. For information on configuring table-level access controls, see Controlling access to tables and views.

You can also create IAM custom roles. If you create a custom role, the permissions you grant depend on the specific operations you want the entity to be able to perform.

You can't set a "deny" permission on any resource protected by IAM.

For more information about roles and permissions, see Understanding roles in the IAM documentation and the BigQuery IAM roles and permissions.

Get information about tables

You can get information or metadata about tables in the following ways:

- Using the Google Cloud console.

- Using the bq command-line tool

bq showcommand. - Calling the

tables.getAPI method. - Using the client libraries.

- Querying the

INFORMATION_SCHEMA.VIEWSview.

Required permissions

At a minimum, to get information about tables, you must be granted

bigquery.tables.get permissions. The following predefined IAM

roles include bigquery.tables.get permissions:

bigquery.metadataViewerbigquery.dataViewerbigquery.dataOwnerbigquery.dataEditorbigquery.admin

In addition, if a user has bigquery.datasets.create permissions, when that

user creates a dataset, they are granted bigquery.dataOwner access to it.

bigquery.dataOwner access gives the user the ability to retrieve table

metadata.

For more information on IAM roles and permissions in BigQuery, see Access control.

Get table information

To get information about tables:

Console

In the navigation panel, in the Resources section, expand your project, and then select a dataset.

Click the dataset name to expand it. The tables and views in the dataset appear.

Click the table name.

In the Details panel, click Details to display the table's description and table information.

Optionally, switch to the Schema tab to view the table's schema definition.

bq

-

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Issue the

bq showcommand to display all table information. Use the--schemaflag to display only table schema information. The--formatflag can be used to control the output.If you are getting information about a table in a project other than your default project, add the project ID to the dataset in the following format:

project_id:dataset.bq show \ --schema \ --format=prettyjson \ project_id:dataset.table

Where:

- project_id is your project ID.

- dataset is the name of the dataset.

- table is the name of the table.

Examples:

Enter the following command to display all information about

mytableinmydataset.mydatasetis in your default project.bq show --format=prettyjson mydataset.mytable

Enter the following command to display all information about

mytableinmydataset.mydatasetis inmyotherproject, not your default project.bq show --format=prettyjson myotherproject:mydataset.mytable

Enter the following command to display only schema information about

mytableinmydataset.mydatasetis inmyotherproject, not your default project.bq show --schema --format=prettyjson myotherproject:mydataset.mytable

API

Call the tables.get

method and provide any relevant parameters.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

PHP

Before trying this sample, follow the PHP setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery PHP API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Get table information using INFORMATION_SCHEMA

INFORMATION_SCHEMA is a series of views that provide access to metadata

about datasets, routines, tables, views, jobs, reservations, and streaming data.

You can query the following views to get table information:

- Use the

INFORMATION_SCHEMA.TABLESandINFORMATION_SCHEMA.TABLE_OPTIONSviews to retrieve metadata about tables and views in a project. - Use the

INFORMATION_SCHEMA.COLUMNSandINFORMATION_SCHEMA.COLUMN_FIELD_PATHSviews to retrieve metadata about the columns (fields) in a table. - Use the

INFORMATION_SCHEMA.TABLE_STORAGEviews to retrieve metadata about current and historical storage usage by a table.

The TABLES and TABLE_OPTIONS views also contain high-level

information about views. For detailed information, query the

INFORMATION_SCHEMA.VIEWS view

instead.

TABLES view

When you query the INFORMATION_SCHEMA.TABLES view, the query results contain

one row for each table or view in a dataset. For detailed information about

views, query the INFORMATION_SCHEMA.VIEWS

view instead.

The INFORMATION_SCHEMA.TABLES view has the following schema:

| Column name | Data type | Value |

|---|---|---|

table_catalog |

STRING |

The project ID of the project that contains the dataset. |

table_schema |

STRING |

The name of the dataset that contains the table or view. Also referred

to as the datasetId. |

table_name |

STRING |

The name of the table or view. Also referred to as the

tableId. |

table_type |

STRING |

The table type; one of the following:

|

managed_table_type |

STRING |

This column is in Preview. The managed table type; one of the following:

|

is_insertable_into |

STRING |

YES or NO depending on whether the table

supports DML INSERT

statements |

is_fine_grained_mutations_enabled |

STRING |

YES or NO depending on whether

fine-grained DML mutations

are enabled on the table

|

is_typed |

STRING |

The value is always NO |

is_change_history_enabled |

STRING |

YES or NO depending on whether

change history

is enabled |

creation_time |

TIMESTAMP |

The table's creation time |

base_table_catalog |

STRING |

For table clones

and table snapshots,

the base table's project. Applicable only to

tables with table_type set to CLONE or

SNAPSHOT.

|

base_table_schema |

STRING |

For table clones

and table snapshots,

the base table's dataset. Applicable only to tables with

table_type set to CLONE or

SNAPSHOT. |

base_table_name |

STRING |

For table clones

and table snapshots,

the base table's name. Applicable only to tables with

table_type set to CLONE or

SNAPSHOT. |

snapshot_time_ms |

TIMESTAMP |

For table clones

and table snapshots,

the time when the clone

or snapshot

operation was run on the base table to create this table. If

time travel was used, then this

field contains the time travel timestamp. Otherwise, the

snapshot_time_ms field is the same as the

creation_time field. Applicable only to

tables with table_type set to CLONE or

SNAPSHOT.

|

replica_source_catalog |

STRING |

For materialized view replicas, the base materialized view's project. |

replica_source_schema |

STRING |

For materialized view replicas, the base materialized view's dataset. |

replica_source_name |

STRING |

For materialized view replicas, the base materialized view's name. |

replication_status |

STRING |

For

materialized view replicas,

the status of the replication from the base materialized view to the

materialized view replica; one of the following:

|

replication_error |

STRING |

If replication_status indicates a replication issue for a

materialized view replica,

replication_error provides further details about the issue. |

ddl |

STRING |

The DDL statement

that can be used to recreate the table, such as

CREATE TABLE

or CREATE VIEW |

default_collation_name |

STRING |

The name of the default collation specification

if it exists; otherwise, NULL.

|

upsert_stream_apply_watermark |

TIMESTAMP |

For tables that use change data capture (CDC), the time when row modifications were last applied. For more information, see Monitor table upsert operation progress. |

Examples

Example 1:

The following example retrieves table metadata for all of the tables in the

dataset named mydataset. The metadata that's

returned is for all types of tables in mydataset in your default project.

mydataset contains the following tables:

mytable1: a standard BigQuery tablemyview1: a BigQuery view

To run the query against a project other than your default project, add the

project ID to the dataset in the following format:

`project_id`.dataset.INFORMATION_SCHEMA.view;

for example, `myproject`.mydataset.INFORMATION_SCHEMA.TABLES.

SELECT table_catalog, table_schema, table_name, table_type, is_insertable_into, creation_time, ddl FROM mydataset.INFORMATION_SCHEMA.TABLES;

The result is similar to the following. For readability, some columns are excluded from the result.

+----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+ | table_catalog | table_schema | table_name | table_type | is_insertable_into | creation_time | ddl | +----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+ | myproject | mydataset | mytable1 | BASE TABLE | YES | 2018-10-29 20:34:44 | CREATE TABLE `myproject.mydataset.mytable1` | | | | | | | | ( | | | | | | | | id INT64 | | | | | | | | ); | | myproject | mydataset | myview1 | VIEW | NO | 2018-12-29 00:19:20 | CREATE VIEW `myproject.mydataset.myview1` | | | | | | | | AS SELECT 100 as id; | +----------------+---------------+----------------+------------+--------------------+---------------------+---------------------------------------------+

Example 2:

The following example retrieves table metadata for all tables of type CLONE

or SNAPSHOT from the INFORMATION_SCHEMA.TABLES view. The metadata returned

is for tables in mydataset in your default project.

To run the query against a project other than your default project, add the

project ID to the dataset in the following format:

`project_id`.dataset.INFORMATION_SCHEMA.view;

for example, `myproject`.mydataset.INFORMATION_SCHEMA.TABLES.

SELECT table_name, table_type, base_table_catalog, base_table_schema, base_table_name, snapshot_time_ms FROM mydataset.INFORMATION_SCHEMA.TABLES WHERE table_type = 'CLONE' OR table_type = 'SNAPSHOT';

The result is similar to the following. For readability, some columns are excluded from the result.

+--------------+------------+--------------------+-------------------+-----------------+---------------------+ | table_name | table_type | base_table_catalog | base_table_schema | base_table_name | snapshot_time_ms | +--------------+------------+--------------------+-------------------+-----------------+---------------------+ | items_clone | CLONE | myproject | mydataset | items | 2018-10-31 22:40:05 | | orders_bk | SNAPSHOT | myproject | mydataset | orders | 2018-11-01 08:22:39 | +--------------+------------+--------------------+-------------------+-----------------+---------------------+

Example 3:

The following example retrieves table_name and ddl columns from the INFORMATION_SCHEMA.TABLES

view for the population_by_zip_2010 table in the

census_bureau_usa

dataset. This dataset is part of the BigQuery

public dataset program.

Because the table you're querying is in another project, you add the project ID to the dataset in

the following format:

`project_id`.dataset.INFORMATION_SCHEMA.view.

In this example, the value is

`bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.TABLES.

SELECT table_name, ddl FROM `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.TABLES WHERE table_name = 'population_by_zip_2010';

The result is similar to the following:

+------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| table_name | ddl |

+------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| population_by_zip_2010 | CREATE TABLE `bigquery-public-data.census_bureau_usa.population_by_zip_2010` |

| | ( |

| | geo_id STRING OPTIONS(description="Geo code"), |

| | zipcode STRING NOT NULL OPTIONS(description="Five digit ZIP Code Tabulation Area Census Code"), |

| | population INT64 OPTIONS(description="The total count of the population for this segment."), |

| | minimum_age INT64 OPTIONS(description="The minimum age in the age range. If null, this indicates the row as a total for male, female, or overall population."), |

| | maximum_age INT64 OPTIONS(description="The maximum age in the age range. If null, this indicates the row as having no maximum (such as 85 and over) or the row is a total of the male, female, or overall population."), |

| | gender STRING OPTIONS(description="male or female. If empty, the row is a total population summary.") |

| | ) |

| | OPTIONS( |

| | labels=[("freebqcovid", "")] |

| | ); |

+------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

TABLE_OPTIONS view

When you query the INFORMATION_SCHEMA.TABLE_OPTIONS view, the query results

contain one row for each option, for each table or view in a dataset. For

detailed information about

views, query the

INFORMATION_SCHEMA.VIEWS view

instead.

The INFORMATION_SCHEMA.TABLE_OPTIONS view has the following schema:

| Column name | Data type | Value |

|---|---|---|

TABLE_CATALOG |

STRING |

The project ID of the project that contains the dataset |

TABLE_SCHEMA |

STRING |

The name of the dataset that contains the table or view also referred

to as the datasetId |

TABLE_NAME |

STRING |

The name of the table or view also referred to as the tableId |

OPTION_NAME |

STRING |

One of the name values in the options table |

OPTION_TYPE |

STRING |

One of the data type values in the options table |

OPTION_VALUE |

STRING |

One of the value options in the options table |

Options table

|

|

|

|---|---|---|

|

|

A description of the table |

|

|

Whether automatic refresh is enabled for a materialized view |

|

|

The time when this table expires |

|

|

The table's descriptive name |

|

|

The name of the Cloud KMS key used to encrypt the table |

|

|

An array of STRUCT's that represent the labels on the

table |

|

|

The configured table's maximum staleness for BigQuery change data capture (CDC) upserts |

|

|

The default lifetime, in days, of all partitions in a partitioned table |

|

|

How frequently a materialized view is refreshed |

|

|

Whether queries over the table require a partition filter |

|

|

Tags attached to a table in a namespaced <key, value> syntax. For more information, see Tags and conditional access. |

For external tables, the following options are possible:

| Options | |

|---|---|

allow_jagged_rows |

If Applies to CSV data. |

allow_quoted_newlines |

If Applies to CSV data. |

bigtable_options |

Only required when creating a Bigtable external table. Specifies the schema of the Bigtable external table in JSON format. For a list of Bigtable table definition options, see

|

column_name_character_map |

Defines the scope of supported column name characters and the

handling behavior of unsupported characters. The default setting is

Supported values include:

Applies to CSV and Parquet data. |

compression |

The compression type of the data source. Supported values include:

Applies to CSV and JSON data. |

decimal_target_types |

Determines how to convert a Example: |

description |

A description of this table. |

enable_list_inference |

If Applies to Parquet data. |

enable_logical_types |

If Applies to Avro data. |

encoding |

The character encoding of the data. Supported values include:

Applies to CSV data. |

enum_as_string |

If Applies to Parquet data. |

expiration_timestamp |

The time when this table expires. If not specified, the table does not expire. Example: |

field_delimiter |

The separator for fields in a CSV file. Applies to CSV data. |

format |

The format of the external data.

Supported values for

Supported values for

The value |

hive_partition_uri_prefix |

A common prefix for all source URIs before the partition key encoding begins. Applies only to hive-partitioned external tables. Applies to Avro, CSV, JSON, Parquet, and ORC data. Example: |

file_set_spec_type |

Specifies how to interpret source URIs for load jobs and external tables. Supported values include:

For example, if you have a source URI of |

ignore_unknown_values |

If Applies to CSV and JSON data. |

json_extension |

For JSON data, indicates a particular JSON interchange format. If not specified, BigQuery reads the data as generic JSON records. Supported values include: |

max_bad_records |

The maximum number of bad records to ignore when reading the data. Applies to: CSV, JSON, and Google Sheets data. |

max_staleness |

Applicable for BigLake tables and object tables. Specifies whether cached metadata is used by operations against the table, and how fresh the cached metadata must be in order for the operation to use it. To disable metadata caching, specify 0. This is the default. To enable metadata caching, specify an

interval literal

value between 30 minutes and 7 days. For example, specify

|

null_marker |

The string that represents Applies to CSV data. |

null_markers |

(Preview) The list of strings that represent This option cannot be used with Applies to CSV data. |

object_metadata |

Only required when creating an object table. Set the value of this option to |

preserve_ascii_control_characters |

If Applies to CSV data. |

projection_fields |

A list of entity properties to load. Applies to Datastore data. |

quote |

The string used to quote data sections in a CSV file. If your data

contains quoted newline characters, also set the

Applies to CSV data. |

reference_file_schema_uri |

User provided reference file with the table schema. Applies to Parquet/ORC/AVRO data. Example: |

require_hive_partition_filter |

If Applies to Avro, CSV, JSON, Parquet, and ORC data. |

sheet_range |

Range of a Google Sheets spreadsheet to query from. Applies to Google Sheets data. Example: |

skip_leading_rows |

The number of rows at the top of a file to skip when reading the data. Applies to CSV and Google Sheets data. |

source_column_match |

(Preview) This controls the strategy used to match loaded columns to the schema. If this value is unspecified, then the default is based on how the schema is provided. If autodetect is enabled, then the default behavior is to match columns by name. Otherwise, the default is to match columns by position. This is done to keep the behavior backward-compatible. Supported values include:

|

tags |

<ARRAY<STRUCT<STRING, STRING>>>

An array of IAM tags for the table, expressed as key-value pairs. The key should be the namespaced key name, and the value should be the short name. |

time_zone |

(Preview) Default time zone that will apply when parsing timestamp values that have no specific time zone. Check valid time zone names. If this value is not present, the timestamp values without specific time zone is parsed using default time zone UTC. Applies to CSV and JSON data. |

date_format |

(Preview)

Format elements

that define how the DATE values are formatted in the input files (for

example, If this value is present, this format is the only compatible DATE format. Schema autodetection will also decide DATE column type based on this format instead of the existing format. If this value is not present, the DATE field is parsed with the default formats. Applies to CSV and JSON data. |

datetime_format |

(Preview)

Format elements

that define how the DATETIME values are formatted in the input files

(for example, If this value is present, this format is the only compatible DATETIME format. Schema autodetection will also decide DATETIME column type based on this format instead of the existing format. If this value is not present, the DATETIME field is parsed with the default formats. Applies to CSV and JSON data. |

time_format |

(Preview)

Format elements

that define how the TIME values are formatted in the input files (for

example, If this value is present, this format is the only compatible TIME format. Schema autodetection will also decide TIME column type based on this format instead of the existing format. If this value is not present, the TIME field is parsed with the default formats. Applies to CSV and JSON data. |

timestamp_format |

(Preview)

Format elements

that define how the TIMESTAMP values are formatted in the input files

(for example, If this value is present, this format is the only compatible TIMESTAMP format. Schema autodetection will also decide TIMESTAMP column type based on this format instead of the existing format. If this value is not present, the TIMESTAMP field is parsed with the default formats. Applies to CSV and JSON data. |

uris |

For external tables, including object tables, that aren't Bigtable tables:

An array of fully qualified URIs for the external data locations.

Each URI can contain one

asterisk ( The following examples show valid

For Bigtable tables:

The URI identifying the Bigtable table to use as a data source. You can only specify one Bigtable URI. Example:

For more information on constructing a Bigtable URI, see Retrieve the Bigtable URI. |

Examples

Example 1:

The following example retrieves the default table expiration times for all

tables in mydataset in your default project (myproject) by querying the

INFORMATION_SCHEMA.TABLE_OPTIONS view.

To run the query against a project other than your default project, add the

project ID to the dataset in the following format:

`project_id`.dataset.INFORMATION_SCHEMA.view;

for example, `myproject`.mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS.

SELECT * FROM mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS WHERE option_name = 'expiration_timestamp';

The result is similar to the following:

+----------------+---------------+------------+----------------------+-------------+--------------------------------------+ | table_catalog | table_schema | table_name | option_name | option_type | option_value | +----------------+---------------+------------+----------------------+-------------+--------------------------------------+ | myproject | mydataset | mytable1 | expiration_timestamp | TIMESTAMP | TIMESTAMP "2020-01-16T21:12:28.000Z" | | myproject | mydataset | mytable2 | expiration_timestamp | TIMESTAMP | TIMESTAMP "2021-01-01T21:12:28.000Z" | +----------------+---------------+------------+----------------------+-------------+--------------------------------------+

Example 2:

The following example retrieves metadata about all tables in mydataset that

contain test data. The query uses the values in the description option to find

tables that contain "test" anywhere in the description. mydataset is in your

default project — myproject.

To run the query against a project other than your default project, add the

project ID to the dataset in the following format:

`project_id`.dataset.INFORMATION_SCHEMA.view;

for example,

`myproject`.mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS.

SELECT * FROM mydataset.INFORMATION_SCHEMA.TABLE_OPTIONS WHERE option_name = 'description' AND option_value LIKE '%test%';

The result is similar to the following:

+----------------+---------------+------------+-------------+-------------+--------------+ | table_catalog | table_schema | table_name | option_name | option_type | option_value | +----------------+---------------+------------+-------------+-------------+--------------+ | myproject | mydataset | mytable1 | description | STRING | "test data" | | myproject | mydataset | mytable2 | description | STRING | "test data" | +----------------+---------------+------------+-------------+-------------+--------------+

COLUMNS view

When you query the INFORMATION_SCHEMA.COLUMNS view, the query results contain

one row for each column (field) in a table.

The INFORMATION_SCHEMA.COLUMNS view has the following schema:

| Column name | Data type | Value |

|---|---|---|

TABLE_CATALOG |

STRING |

The project ID of the project that contains the dataset |

TABLE_SCHEMA |

STRING |

The name of the dataset that contains the table also referred to as

the datasetId |

TABLE_NAME |

STRING |

The name of the table or view also referred to as the tableId |

COLUMN_NAME |

STRING |

The name of the column |

ORDINAL_POSITION |

INT64 |

The 1-indexed offset of the column within the table; if it's a pseudo

column such as _PARTITIONTIME or _PARTITIONDATE, the value is

NULL |

IS_NULLABLE |

STRING |

YES or NO depending on whether the column's

mode allows NULL values |

DATA_TYPE |

STRING |

The column's GoogleSQL data type |

IS_GENERATED |

STRING |

The value is always NEVER |

GENERATION_EXPRESSION |

STRING |

The value is always NULL |

IS_STORED |

STRING |

The value is always NULL |

IS_HIDDEN |

STRING |

YES or NO depending on whether the column is

a pseudo column such as _PARTITIONTIME or _PARTITIONDATE |

IS_UPDATABLE |

STRING |

The value is always NULL |

IS_SYSTEM_DEFINED |

STRING |

YES or NO depending on whether the column is

a pseudo column such as _PARTITIONTIME or _PARTITIONDATE |

IS_PARTITIONING_COLUMN |

STRING |

YES or NO depending on whether the column is

a partitioning column |

CLUSTERING_ORDINAL_POSITION |

INT64 |

The 1-indexed offset of the column within the table's

clustering columns; the value is NULL if the table is not a

clustered table |

COLLATION_NAME |

STRING |

The name of the collation specification

if it exists; otherwise, NULLIf a STRING or ARRAY<STRING> is passed

in, the collation specification is returned if it exists; otherwise

NULL is returned

|

COLUMN_DEFAULT |

STRING |

The default value of the

column if it exists; otherwise, the value is NULL

|

ROUNDING_MODE |

STRING |

The mode of rounding that's used for values written to the field if its

type is a parameterized NUMERIC or BIGNUMERIC;

otherwise, the value is NULL

|

POLICY_TAGS |

ARRAY<STRING> |

The list of policy tags that are attached to the column |

Examples

The following example retrieves metadata from the INFORMATION_SCHEMA.COLUMNS

view for the population_by_zip_2010 table in the

census_bureau_usa

dataset. This dataset is part of the BigQuery

public dataset program.

Because the table you're querying is in another project, the

bigquery-public-data project, you add the project ID to the dataset in the

following format:

`project_id`.dataset.INFORMATION_SCHEMA.view;

for example,

`bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.TABLES.

The following columns are excluded from the query results because they are currently reserved for future use:

IS_GENERATEDGENERATION_EXPRESSIONIS_STOREDIS_UPDATABLE

SELECT * EXCEPT(is_generated, generation_expression, is_stored, is_updatable) FROM `bigquery-public-data`.census_bureau_usa.INFORMATION_SCHEMA.COLUMNS WHERE table_name = 'population_by_zip_2010';

The result is similar to the following. For readability, some columns are excluded from the result.

+------------------------+-------------+------------------+-------------+-----------+-----------+-------------------+------------------------+-----------------------------+-------------+ | table_name | column_name | ordinal_position | is_nullable | data_type | is_hidden | is_system_defined | is_partitioning_column | clustering_ordinal_position | policy_tags | +------------------------+-------------+------------------+-------------+-----------+-----------+-------------------+------------------------+-----------------------------+-------------+ | population_by_zip_2010 | zipcode | 1 | NO | STRING | NO | NO | NO | NULL | 0 rows | | population_by_zip_2010 | geo_id | 2 | YES | STRING | NO | NO | NO | NULL | 0 rows | | population_by_zip_2010 | minimum_age | 3 | YES | INT64 | NO | NO | NO | NULL | 0 rows | | population_by_zip_2010 | maximum_age | 4 | YES | INT64 | NO | NO | NO | NULL | 0 rows | | population_by_zip_2010 | gender | 5 | YES | STRING | NO | NO | NO | NULL | 0 rows | | population_by_zip_2010 | population | 6 | YES | INT64 | NO | NO | NO | NULL | 0 rows | +------------------------+-------------+------------------+-------------+-----------+-----------+-------------------+------------------------+-----------------------------+-------------+

COLUMN_FIELD_PATHS view

When you query the INFORMATION_SCHEMA.COLUMN_FIELD_PATHS view, the query

results contain one row for each column

nested within a RECORD

(or STRUCT) column.

The INFORMATION_SCHEMA.COLUMN_FIELD_PATHS view has the following schema:

| Column name | Data type | Value |

|---|---|---|

TABLE_CATALOG |

STRING |

The project ID of the project that contains the dataset |

TABLE_SCHEMA |

STRING |

The name of the dataset that contains the table also referred to as

the datasetId |

TABLE_NAME |

STRING |

The name of the table or view also referred to as the tableId |

COLUMN_NAME |

STRING |

The name of the column |

FIELD_PATH |

STRING |

The path to a column nested within a `RECORD` or `STRUCT` column |

DATA_TYPE |

STRING |

The column's GoogleSQL data type |

DESCRIPTION |

STRING |

The column's description |

COLLATION_NAME |

STRING |

The name of the collation specification

if it exists; otherwise, NULLIf a STRING, ARRAY<STRING>, or

STRING field in a STRUCT is passed in, the

collation specification is returned if it exists; otherwise,

NULL is returned

|

ROUNDING_MODE |

STRING |

The mode of rounding that's used when applying precision and scale to

parameterized NUMERIC or BIGNUMERIC values;

otherwise, the value is NULL

|

POLICY_TAGS |

ARRAY<STRING> |

The list of policy tags that are attached to the column |

Examples

The following example retrieves metadata from the

INFORMATION_SCHEMA.COLUMN_FIELD_PATHS view for the commits table in the

github_repos dataset.

This dataset is part of the BigQuery

public dataset program.

Because the table you're querying is in another project, the

bigquery-public-data project, you add the project ID to the dataset in the

following format:

`project_id`.dataset.INFORMATION_SCHEMA.view;

for example,

`bigquery-public-data`.github_repos.INFORMATION_SCHEMA.COLUMN_FIELD_PATHS.

The commits table contains the following nested and nested and repeated

columns:

author: nestedRECORDcolumncommitter: nestedRECORDcolumntrailer: nested and repeatedRECORDcolumndifference: nested and repeatedRECORDcolumn

To view metadata about the author and difference columns, run the following query.

SELECT * FROM `bigquery-public-data`.github_repos.INFORMATION_SCHEMA.COLUMN_FIELD_PATHS WHERE table_name = 'commits' AND (column_name = 'author' OR column_name = 'difference');

The result is similar to the following. For readability, some columns are excluded from the result.

+------------+-------------+---------------------+-----------------------------------------------------------------------------------------------------------------------------------------------------+-------------+-------------+ | table_name | column_name | field_path | data_type | description | policy_tags | +------------+-------------+---------------------+-----------------------------------------------------------------------------------------------------------------------------------------------------+-------------+-------------+ | commits | author | author | STRUCT<name STRING, email STRING, time_sec INT64, tz_offset INT64, date TIMESTAMP> | NULL | 0 rows | | commits | author | author.name | STRING | NULL | 0 rows | | commits | author | author.email | STRING | NULL | 0 rows | | commits | author | author.time_sec | INT64 | NULL | 0 rows | | commits | author | author.tz_offset | INT64 | NULL | 0 rows | | commits | author | author.date | TIMESTAMP | NULL | 0 rows | | commits | difference | difference | ARRAY<STRUCT<old_mode INT64, new_mode INT64, old_path STRING, new_path STRING, old_sha1 STRING, new_sha1 STRING, old_repo STRING, new_repo STRING>> | NULL | 0 rows | | commits | difference | difference.old_mode | INT64 | NULL | 0 rows | | commits | difference | difference.new_mode | INT64 | NULL | 0 rows | | commits | difference | difference.old_path | STRING | NULL | 0 rows | | commits | difference | difference.new_path | STRING | NULL | 0 rows | | commits | difference | difference.old_sha1 | STRING | NULL | 0 rows | | commits | difference | difference.new_sha1 | STRING | NULL | 0 rows | | commits | difference | difference.old_repo | STRING | NULL | 0 rows | | commits | difference | difference.new_repo | STRING | NULL | 0 rows | +------------+-------------+---------------------+-----------------------------------------------------------------------------------------------------------------------------------------------------+-------------+-------------+

TABLE_STORAGE view

The TABLE_STORAGE and TABLE_STORAGE_BY_ORGANIZATION views have the following

schema:

| Column name | Data type | Value |

|---|---|---|

PROJECT_ID |

STRING |

The project ID of the project that contains the dataset. |

PROJECT_NUMBER |

INT64 |

The project number of the project that contains the dataset. |

TABLE_CATALOG |

STRING |

The project ID of the project that contains the dataset. |

TABLE_SCHEMA |

STRING |

The name of the dataset that contains the table or materialized view,

also referred to as the datasetId. |

TABLE_NAME |

STRING |

The name of the table or materialized view, also referred to as the

tableId. |

CREATION_TIME |

TIMESTAMP |

The creation time of the table. |

TOTAL_ROWS |

INT64 |

The total number of rows in the table or materialized view. |

TOTAL_PARTITIONS |

INT64 |

The number of partitions present in the table or materialized view. Unpartitioned tables return 0. |

TOTAL_LOGICAL_BYTES |

INT64 |

Total number of logical (uncompressed) bytes in the table or materialized view. |

ACTIVE_LOGICAL_BYTES |

INT64 |

Number of logical (uncompressed) bytes that are younger than 90 days. |

LONG_TERM_LOGICAL_BYTES |

INT64 |

Number of logical (uncompressed) bytes that are older than 90 days. |

CURRENT_PHYSICAL_BYTES |

INT64 |

Total number of physical bytes for the current storage of the table across all partitions. |

TOTAL_PHYSICAL_BYTES |

INT64 |

Total number of physical (compressed) bytes used for storage, including active, long-term, and time travel (deleted or changed data) bytes. Fail-safe (deleted or changed data retained after the time-travel window) bytes aren't included. |

ACTIVE_PHYSICAL_BYTES |

INT64 |

Number of physical (compressed) bytes younger than 90 days, including time travel (deleted or changed data) bytes. |

LONG_TERM_PHYSICAL_BYTES |

INT64 |

Number of physical (compressed) bytes older than 90 days. |

TIME_TRAVEL_PHYSICAL_BYTES |

INT64 |

Number of physical (compressed) bytes used by time travel storage (deleted or changed data). |

STORAGE_LAST_MODIFIED_TIME |

TIMESTAMP |

The most recent time that data was written to the table. |

DELETED |

BOOLEAN |

Indicates whether or not the table is deleted. |

TABLE_TYPE |

STRING |

The type of table. For example, BASE TABLE.

|

MANAGED_TABLE_TYPE |

STRING |

This column is in Preview. The managed type of the table. For example,

NATIVE or BIGLAKE.

|

FAIL_SAFE_PHYSICAL_BYTES |

INT64 |

Number of physical (compressed) bytes used by the fail-safe storage (deleted or changed data). |

LAST_METADATA_INDEX_REFRESH_TIME |

TIMESTAMP |

The last metadata index refresh time of the table. |

TABLE_DELETION_REASON |

STRING |

Table deletion reason if the DELETED field is true. The

possible values are as follows:

|

TABLE_DELETION_TIME |

TIMESTAMP |

The deletion time of the table. |

Examples

Example 1:

The following example shows you the total logical bytes billed for the current project.

SELECT SUM(total_logical_bytes) AS total_logical_bytes FROM `region-REGION`.INFORMATION_SCHEMA.TABLE_STORAGE;

The result is similar to the following:

+---------------------+ | total_logical_bytes | +---------------------+ | 971329178274633 | +---------------------+

Example 2:

The following example shows different storage bytes in GiB at the dataset(s) level for current project.

SELECT table_schema AS dataset_name, -- Logical SUM(total_logical_bytes) / power(1024, 3) AS total_logical_gib, SUM(active_logical_bytes) / power(1024, 3) AS active_logical_gib, SUM(long_term_logical_bytes) / power(1024, 3) AS long_term_logical_gib, -- Physical SUM(total_physical_bytes) / power(1024, 3) AS total_physical_gib, SUM(active_physical_bytes) / power(1024, 3) AS active_physical_gib, SUM(active_physical_bytes - time_travel_physical_bytes) / power(1024, 3) AS active_no_tt_physical_gib, SUM(long_term_physical_bytes) / power(1024, 3) AS long_term_physical_gib, SUM(time_travel_physical_bytes) / power(1024, 3) AS time_travel_physical_gib, SUM(fail_safe_physical_bytes) / power(1024, 3) AS fail_safe_physical_gib FROM `region-REGION`.INFORMATION_SCHEMA.TABLE_STORAGE WHERE table_type ='BASE TABLE' GROUP BY table_schema ORDER BY dataset_name

Example 3:

The following example shows you how to forecast the price difference per dataset between logical and physical billing models for the next 30 days. This example assumes that future storage usage is constant over the next 30 days from the moment the query was run. Note that the forecast is limited to base tables, it excludes all other types of tables within a dataset.

The prices used in the pricing variables for this query are for

the us-central1 region. If you want to run this query for a different region,

update the pricing variables appropriately. See

Storage pricing for pricing information.

Open the BigQuery page in the Google Cloud console.

Enter the following GoogleSQL query in the Query editor box.

INFORMATION_SCHEMArequires GoogleSQL syntax. GoogleSQL is the default syntax in the Google Cloud console.DECLARE active_logical_gib_price FLOAT64 DEFAULT 0.02; DECLARE long_term_logical_gib_price FLOAT64 DEFAULT 0.01; DECLARE active_physical_gib_price FLOAT64 DEFAULT 0.04; DECLARE long_term_physical_gib_price FLOAT64 DEFAULT 0.02; WITH storage_sizes AS ( SELECT table_schema AS dataset_name, -- Logical SUM(IF(deleted=false, active_logical_bytes, 0)) / power(1024, 3) AS active_logical_gib, SUM(IF(deleted=false, long_term_logical_bytes, 0)) / power(1024, 3) AS long_term_logical_gib, -- Physical SUM(active_physical_bytes) / power(1024, 3) AS active_physical_gib, SUM(active_physical_bytes - time_travel_physical_bytes) / power(1024, 3) AS active_no_tt_physical_gib, SUM(long_term_physical_bytes) / power(1024, 3) AS long_term_physical_gib, -- Restorable previously deleted physical SUM(time_travel_physical_bytes) / power(1024, 3) AS time_travel_physical_gib, SUM(fail_safe_physical_bytes) / power(1024, 3) AS fail_safe_physical_gib, FROM `region-REGION`.INFORMATION_SCHEMA.TABLE_STORAGE_BY_PROJECT WHERE total_physical_bytes + fail_safe_physical_bytes > 0 -- Base the forecast on base tables only for highest precision results AND table_type = 'BASE TABLE' GROUP BY 1 ) SELECT dataset_name, -- Logical ROUND(active_logical_gib, 2) AS active_logical_gib, ROUND(long_term_logical_gib, 2) AS long_term_logical_gib, -- Physical ROUND(active_physical_gib, 2) AS active_physical_gib, ROUND(long_term_physical_gib, 2) AS long_term_physical_gib, ROUND(time_travel_physical_gib, 2) AS time_travel_physical_gib, ROUND(fail_safe_physical_gib, 2) AS fail_safe_physical_gib, -- Compression ratio ROUND(SAFE_DIVIDE(active_logical_gib, active_no_tt_physical_gib), 2) AS active_compression_ratio, ROUND(SAFE_DIVIDE(long_term_logical_gib, long_term_physical_gib), 2) AS long_term_compression_ratio, -- Forecast costs logical ROUND(active_logical_gib * active_logical_gib_price, 2) AS forecast_active_logical_cost, ROUND(long_term_logical_gib * long_term_logical_gib_price, 2) AS forecast_long_term_logical_cost, -- Forecast costs physical ROUND((active_no_tt_physical_gib + time_travel_physical_gib + fail_safe_physical_gib) * active_physical_gib_price, 2) AS forecast_active_physical_cost, ROUND(long_term_physical_gib * long_term_physical_gib_price, 2) AS forecast_long_term_physical_cost, -- Forecast costs total ROUND(((active_logical_gib * active_logical_gib_price) + (long_term_logical_gib * long_term_logical_gib_price)) - (((active_no_tt_physical_gib + time_travel_physical_gib + fail_safe_physical_gib) * active_physical_gib_price) + (long_term_physical_gib * long_term_physical_gib_price)), 2) AS forecast_total_cost_difference FROM storage_sizes ORDER BY (forecast_active_logical_cost + forecast_active_physical_cost) DESC;

Click Run.

The result is similar to following:

+--------------+--------------------+-----------------------+---------------------+------------------------+--------------------------+-----------------------------+------------------------------+----------------------------------+-------------------------------+----------------------------------+--------------------------------+ | dataset_name | active_logical_gib | long_term_logical_gib | active_physical_gib | long_term_physical_gib | active_compression_ratio | long_term_compression_ratio | forecast_active_logical_cost | forecaset_long_term_logical_cost | forecast_active_physical_cost | forecast_long_term_physical_cost | forecast_total_cost_difference | +--------------+--------------------+-----------------------+---------------------+------------------------+--------------------------+-----------------------------+------------------------------+----------------------------------+-------------------------------+----------------------------------+--------------------------------+ | dataset1 | 10.0 | 10.0 | 1.0 | 1.0 | 10.0 | 10.0 | 0.2 | 0.1 | 0.04 | 0.02 | 0.24 |

List tables in a dataset

You can list tables in datasets in the following ways:

- Using the Google Cloud console.

- Using the bq command-line tool

bq lscommand. - Calling the

tables.listAPI method. - Using the client libraries.

Required permissions

At a minimum, to list tables in a dataset, you must be granted

bigquery.tables.list permissions. The following predefined IAM

roles include bigquery.tables.list permissions:

bigquery.userbigquery.metadataViewerbigquery.dataViewerbigquery.dataEditorbigquery.dataOwnerbigquery.admin

For more information on IAM roles and permissions in BigQuery, see Access control.

List tables

To list the tables in a dataset:

Console

In the Google Cloud console, in the navigation pane, click your dataset to expand it. This displays the tables and views in the dataset.

Scroll through the list to see the tables in the dataset. Tables and views are identified by different icons.

bq

-

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Issue the

bq lscommand. The--formatflag can be used to control the output. If you are listing tables in a project other than your default project, add the project ID to the dataset in the following format:project_id:dataset.Additional flags include:

--max_resultsor-n: An integer indicating the maximum number of results. The default value is50.

bq ls \ --format=pretty \ --max_results integer \ project_id:dataset

Where:

- integer is an integer representing the number of tables to list.

- project_id is your project ID.

- dataset is the name of the dataset.

When you run the command, the

Typefield displays eitherTABLEorVIEW. For example:+-------------------------+-------+----------------------+-------------------+ | tableId | Type | Labels | Time Partitioning | +-------------------------+-------+----------------------+-------------------+ | mytable | TABLE | department:shipping | | | myview | VIEW | | | +-------------------------+-------+----------------------+-------------------+

Examples:

Enter the following command to list tables in dataset

mydatasetin your default project.bq ls --format=pretty mydataset

Enter the following command to return more than the default output of 50 tables from

mydataset.mydatasetis in your default project.bq ls --format=pretty --max_results 60 mydataset

Enter the following command to list tables in dataset

mydatasetinmyotherproject.bq ls --format=pretty myotherproject:mydataset

API

To list tables using the API, call the tables.list

method.

C#

Before trying this sample, follow the C# setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery C# API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Node.js