Manage tables

This document describes how to manage tables in BigQuery, including how to update table properties, rename a table, and copy a table.

For information about how to restore (or undelete) a deleted table, see Restore deleted tables.

For more information about creating and using tables including getting table information, listing tables, and controlling access to table data, see Creating and using tables.

Before you begin

Grant Identity and Access Management (IAM) roles that give users the necessary permissions to perform each task in this document. The permissions required to perform a task (if any) are listed in the "Required permissions" section of the task.

Update table properties

You can update the following elements of a table:

- Description

- Expiration time

- Default rounding mode

- Schema definition

Required permissions

To get the permissions that you need to update table properties, ask your administrator to grant you the Data Editor (roles/bigquery.dataEditor) IAM role on a table.

For more information about granting roles, see Manage access to projects, folders, and organizations.

This predefined role contains the permissions required to update table properties. The following permissions are required:

- bigquery.tables.update

- bigquery.tables.get

You might also be able to get these permissions with custom roles or other predefined roles.

Additionally, if you have the bigquery.datasets.create permission, you can update the properties of the tables in the datasets that you create.

Update a table's description

You can update a table's description in the following ways:

- Using the Google Cloud console.

- Using a data definition language (DDL) ALTER TABLE statement.

- Using the bq command-line tool's bq update command.

- Calling the tables.patch API method.

- Using the client libraries.

- Generating a description with Gemini in BigQuery.

To update a table's description:

Console

You can't add a description when you create a table using the Google Cloud console. After the table is created, you can add a description on the Details page.

In the Explorer panel, expand your project and dataset, then select the table.

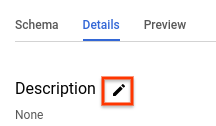

In the details panel, click Details.

In the Description section, click the pencil icon to edit the description.

Enter a description in the box, and click Update to save.

SQL

Use the ALTER TABLE SET OPTIONS statement. The following example updates the description of a table named mytable:

In the Google Cloud console, go to the BigQuery page.

In the query editor, enter the following statement:

ALTER TABLE mydataset.mytable
SET OPTIONS (
  description = 'Description of mytable');

Click Run.

For more information about how to run queries, see Run an interactive query.

bq

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Issue the bq update command with the --description flag. If you are updating a table in a project other than your default project, add the project ID to the dataset name in the following format: project_id:dataset.

bq update \
  --description "description" \
  project_id:dataset.table

Replace the following:

- description: the text describing the table, in quotes

- project_id: your project ID

- dataset: the name of the dataset that contains the table you're updating

- table: the name of the table you're updating

Examples:

To change the description of the mytable table in the mydataset dataset to "Description of mytable", enter the following command. The mydataset dataset is in your default project.

bq update --description "Description of mytable" mydataset.mytable

To change the description of the mytable table in the mydataset dataset to "Description of mytable", enter the following command. The mydataset dataset is in the myotherproject project, not your default project.

bq update \
  --description "Description of mytable" \
  myotherproject:mydataset.mytable

API

Call the tables.patch method and use the description property in the table resource to update the table's description. Because the tables.update method replaces the entire table resource, the tables.patch method is preferred.
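As a rough illustration of why a patch is the lighter option, the following Python sketch builds (but does not send) a tables.patch request that carries only the description field. The project, dataset, and table names are placeholders, the endpoint is assumed to be the standard BigQuery v2 REST path, and authentication is left out entirely.

```python
import json

# Hypothetical identifiers used only for illustration.
PROJECT_ID = "myproject"
DATASET_ID = "mydataset"
TABLE_ID = "mytable"


def build_description_patch(project_id, dataset_id, table_id, description):
    """Return (method, url, body) for a tables.patch call that sets only the description."""
    # Assumed REST path for the table resource in the BigQuery v2 API.
    url = (
        "https://bigquery.googleapis.com/bigquery/v2/"
        f"projects/{project_id}/datasets/{dataset_id}/tables/{table_id}"
    )
    # A patch body lists only the fields to change; everything else is left as is.
    body = {"description": description}
    return "PATCH", url, json.dumps(body)


method, url, body = build_description_patch(
    PROJECT_ID, DATASET_ID, TABLE_ID, "Description of mytable"
)
print(method, url)
print(body)
```

A real call would also need an OAuth 2.0 access token in the Authorization header, which this sketch omits.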

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Gemini

You can generate a table description with Gemini in BigQuery by using data insights. Data insights is an automated way to explore, understand, and curate your data.

For more information about data insights, including setup steps, required IAM roles, and best practices to improve the accuracy of the generated insights, see Generate data insights in BigQuery.

In the Google Cloud console, go to the BigQuery page.

In the Explorer panel, expand your project and dataset, then select the table.

In the details panel, click the Schema tab.

Click Generate.

Gemini generates a table description and insights about the table. It takes a few minutes for the information to be populated. You can view the generated insights on the table's Insights tab.

To edit and save the generated table description, do the following:

Click View column descriptions.

The current table description and the generated description are displayed.

In the Table description section, click Save to details.

To replace the current description with the generated description, click Copy suggested description.

Edit the table description as necessary, and then click Save to details.

The table description is updated immediately.

To close the Preview descriptions panel, click Close.

Update a table's expiration time

You can set a default table expiration time at the dataset level, or you can set a table's expiration time when the table is created. A table's expiration time is often referred to as "time to live" or TTL.

When a table expires, it is deleted along with all of the data it contains. If necessary, you can undelete the expired table within the time travel window specified for the dataset. For more information, see Restore deleted tables.

If you set the expiration when the table is created, the dataset's default table expiration is ignored. If you do not set a default table expiration at the dataset level, and you do not set a table expiration when the table is created, the table never expires and you must delete the table manually.

At any point after the table is created, you can update the table's expiration time in the following ways:

- Using the Google Cloud console.

- Using a data definition language (DDL) ALTER TABLE statement.

- Using the bq command-line tool's bq update command.

- Calling the tables.patch API method.

- Using the client libraries.

To update a table's expiration time:

Console

You can't add an expiration time when you create a table using the Google Cloud console. After a table is created, you can add or update a table expiration on the Table Details page.

In the Explorer panel, expand your project and dataset, then select the table.

In the details panel, click Details.

Click the pencil icon next to Table info.

For Table expiration, select Specify date. Then select the expiration date using the calendar widget.

Click Update to save. The updated expiration time appears in the Table info section.

SQL

Use the ALTER TABLE SET OPTIONS statement. The following example updates the expiration time of a table named mytable:

In the Google Cloud console, go to the BigQuery page.

In the query editor, enter the following statement:

ALTER TABLE mydataset.mytable
SET OPTIONS (
  -- Sets table expiration to timestamp 2025-02-03 12:34:56
  expiration_timestamp = TIMESTAMP '2025-02-03 12:34:56');

Click Run.

For more information about how to run queries, see Run an interactive query.

bq

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Issue the bq update command with the --expiration flag. If you are updating a table in a project other than your default project, add the project ID to the dataset name in the following format: project_id:dataset.

bq update \
  --expiration integer \
  project_id:dataset.table

Replace the following:

- integer: the default lifetime (in seconds) for the table. The minimum value is 3600 seconds (one hour). The expiration time evaluates to the current time plus the integer value. If you specify 0, the table expiration is removed, and the table never expires. Tables with no expiration must be manually deleted.

- project_id: your project ID.

- dataset: the name of the dataset that contains the table you're updating.

- table: the name of the table you're updating.

Examples:

To update the expiration time of the mytable table in the mydataset dataset to 5 days (432000 seconds), enter the following command. The mydataset dataset is in your default project.

bq update --expiration 432000 mydataset.mytable

To update the expiration time of the mytable table in the mydataset dataset to 5 days (432000 seconds), enter the following command. The mydataset dataset is in the myotherproject project, not your default project.

bq update --expiration 432000 myotherproject:mydataset.mytable
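The seconds value is easy to get wrong by hand, so the following Python sketch shows one way to derive it from a duration and assemble the bq update arguments. The helper name and its parameters are hypothetical; the 3600-second minimum and the meaning of 0 come from the flag description above. The sketch only builds the argument list and does not run bq.

```python
import shlex
from datetime import timedelta

MIN_EXPIRATION_SECONDS = 3600  # minimum lifetime stated for --expiration


def bq_update_expiration_args(table, lifetime, project_id=None):
    """Return the argument list for `bq update --expiration`.

    table is "dataset.table", lifetime is a timedelta, project_id is optional.
    This helper is illustrative and not part of the bq tool.
    """
    seconds = int(lifetime.total_seconds())
    # 0 is allowed: it removes the expiration so the table never expires.
    if seconds != 0 and seconds < MIN_EXPIRATION_SECONDS:
        raise ValueError(
            f"expiration must be 0 or at least {MIN_EXPIRATION_SECONDS} seconds"
        )
    target = f"{project_id}:{table}" if project_id else table
    return ["bq", "update", "--expiration", str(seconds), target]


args = bq_update_expiration_args("mydataset.mytable", timedelta(days=5))
print(shlex.join(args))
```

Passing the list to subprocess.run would execute the command, assuming the Google Cloud CLI is installed and authenticated.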

API

Call the tables.patch method and use the expirationTime property in the table resource to update the table expiration in milliseconds. Because the tables.update method replaces the entire table resource, the tables.patch method is preferred.
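Because expirationTime is expressed in milliseconds, a small conversion step is usually needed. The following Python sketch converts a timezone-aware datetime into epoch milliseconds and wraps it in a patch body. The helper name is hypothetical, and serializing the value as a string is an assumption based on how int64 fields are commonly represented in JSON.

```python
import json
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def expiration_patch_body(expires_at):
    """Build a tables.patch body that sets expirationTime from an aware datetime."""
    if expires_at.tzinfo is None:
        raise ValueError("expires_at must be timezone-aware")
    # Integer division of timedeltas avoids floating-point rounding.
    millis = (expires_at - EPOCH) // timedelta(milliseconds=1)
    # Assumption: the int64 value is sent as a decimal string.
    return {"expirationTime": str(millis)}


body = expiration_patch_body(
    datetime(2025, 2, 3, 12, 34, 56, tzinfo=timezone.utc)
)
print(json.dumps(body))
```

The example timestamp matches the one used in the SQL tab, interpreted here as UTC.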

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

To update the default dataset partition expiration time:

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Update a table's rounding mode

You can update a table's default rounding mode by using the ALTER TABLE SET OPTIONS DDL statement. The following example updates the default rounding mode for mytable to ROUND_HALF_EVEN:

ALTER TABLE mydataset.mytable
SET OPTIONS (
  default_rounding_mode = "ROUND_HALF_EVEN");

When you add a NUMERIC or BIGNUMERIC field to a table and do not specify a rounding mode, the rounding mode is automatically set to the table's default rounding mode. Changing a table's default rounding mode doesn't alter the rounding mode of existing fields.
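To see what half-even rounding changes in practice, the following Python sketch compares it with rounding ties away from zero. It uses Python's decimal module purely as a stand-in for the arithmetic rule and does not call BigQuery. The mapping of decimal.ROUND_HALF_UP to BigQuery's ROUND_HALF_AWAY_FROM_ZERO mode is an assumption made for illustration.

```python
from decimal import Decimal, ROUND_HALF_EVEN, ROUND_HALF_UP


def round_to(value, quantum, mode):
    """Round a decimal string to the precision of quantum using the given mode."""
    return Decimal(value).quantize(Decimal(quantum), rounding=mode)


# Ties are the only inputs on which the two modes disagree.
for value in ["2.25", "2.35", "-2.25"]:
    half_even = round_to(value, "0.1", ROUND_HALF_EVEN)
    # decimal.ROUND_HALF_UP rounds ties away from zero.
    half_away = round_to(value, "0.1", ROUND_HALF_UP)
    print(value, "half even:", half_even, "half away from zero:", half_away)
```

Only the exact ties 2.25 and -2.25 differ between the two modes here; 2.35 rounds to 2.4 either way because 4 is the even neighbor.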

Update a table's schema definition

For more information about updating a table's schema definition, see Modifying table schemas.

Rename a table

You can rename a table after it has been created by using the ALTER TABLE RENAME TO statement.

The following example renames mytable to mynewtable:

ALTER TABLE mydataset.mytable RENAME TO mynewtable;

Limitations on renaming tables

- If you want to rename a table that has data streaming into it, you must stop the streaming, commit any pending streams, and wait for BigQuery to indicate that streaming is not in use.

- While a table can usually be renamed 5 hours after the last streaming operation, it might take longer.

- Existing table ACLs and row access policies are preserved, but table ACL and row access policy updates made during the table rename are not preserved.

- You can't concurrently rename a table and run a DML statement on that table.

- Renaming a table removes all Data Catalog tags (deprecated) and Dataplex Universal Catalog aspects on the table.

- You can't rename external tables.

Copy a table

This section describes how to create a full copy of a table. For information about other types of table copies, see table clones and table snapshots.

You can copy a table in the following ways:

- Use the Google Cloud console.

- Use the bq cp command.

- Use a data definition language (DDL) CREATE TABLE COPY statement.

- Call the jobs.insert API method and configure a copy job.

- Use the client libraries.

Limitations on copying tables

Table copy jobs are subject to the following limitations:

- You can't stop a table copy operation after you start it. A table copy operation runs asynchronously and doesn't stop even when you cancel the job. You are also charged for data transfer for a cross-region table copy and for storage in the destination region.

- When you copy a table, the name of the destination table must adhere to the same naming conventions as when you create a table.

- Table copies are subject to BigQuery limits on copy jobs.

- The Google Cloud console supports copying only one table at a time. You can't overwrite an existing table in the destination dataset. The table must have a unique name in the destination dataset.

- Copying multiple source tables into a destination table is not supported by the Google Cloud console.

- When copying multiple source tables to a destination table using the API, bq command-line tool, or the client libraries, all source tables must have identical schemas, including any partitioning or clustering.

- Certain table schema updates, such as dropping or renaming columns, can cause tables to have apparently identical schemas but different internal representations. This might cause a table copy job to fail with the error Maximum limit on diverging physical schemas reached. In this case, you can use the CREATE TABLE LIKE statement to ensure that your source table's schema matches the destination table's schema exactly.

- The time that BigQuery takes to copy tables might vary significantly across different runs because the underlying storage is managed dynamically.

- You can't copy and append a source table to a destination table that has more columns than the source table, and the additional columns have default values. Instead, you can run INSERT destination_table SELECT * FROM source_table to copy over the data.

- If the copy operation overwrites an existing table, then the table-level access for the existing table is maintained. Tags from the source table aren't copied to the overwritten table, while tags on the existing table are retained. However, when you copy tables across regions, tags on the existing table are removed.

- If the copy operation creates a new table, then the table-level access for the new table is determined by the access policies of the dataset in which the new table is created. Additionally, tags are copied from the source table to the new table.

- When you copy multiple source tables to a destination table, all source tables must have identical tags.

Required roles

To perform the tasks in this document, you need the following permissions.

Roles to copy tables and partitions

To get the permissions that you need to copy tables and partitions, ask your administrator to grant you the Data Editor (roles/bigquery.dataEditor) IAM role on the source and destination datasets.

For more information about granting roles, see Manage access to projects, folders, and organizations.

This predefined role contains the permissions required to copy tables and partitions. The following permissions are required:

- bigquery.tables.getData on the source and destination datasets

- bigquery.tables.get on the source and destination datasets

- bigquery.tables.create on the destination dataset

- bigquery.tables.update on the destination dataset

You might also be able to get these permissions with custom roles or other predefined roles.

Permission to run a copy job

To get the permission that you need to run a copy job, ask your administrator to grant you the Job User (roles/bigquery.jobUser) IAM role on the source and destination datasets.

For more information about granting roles, see Manage access to projects, folders, and organizations.

This predefined role contains the bigquery.jobs.create permission, which is required to run a copy job.

You might also be able to get this permission with custom roles or other predefined roles.

Copy a single source table

You can copy a single table in the following ways:

- Using the Google Cloud console.

- Using the bq command-line tool's bq cp command.

- Using a data definition language (DDL) CREATE TABLE COPY statement.

- Calling the jobs.insert API method, configuring a copy job, and specifying the sourceTable property.

- Using the client libraries.

The Google Cloud console and the CREATE TABLE COPY statement support only one source table and one destination table in a copy job. To copy multiple source tables to a destination table, you must use the bq command-line tool or the API.

To copy a single source table:

Console

In the Explorer panel, expand your project and dataset, then select the table.

In the details panel, click Copy table.

In the Copy table dialog, under Destination:

- For Project name, choose the project that will store the copied table.

- For Dataset name, select the dataset where you want to store the copied table. The source and destination datasets must be in the same location.

- For Table name, enter a name for the new table. The name must be unique in the destination dataset. You can't overwrite an existing table in the destination dataset using the Google Cloud console. For more information about table name requirements, see Table naming.

Click Copy to start the copy job.

SQL

Use the CREATE TABLE COPY statement to copy a table named table1 to a new table named table1copy:

In the Google Cloud console, go to the BigQuery page.

In the query editor, enter the following statement:

CREATE TABLE myproject.mydataset.table1copy
COPY myproject.mydataset.table1;

Click Run.

For more information about how to run queries, see Run an interactive query.

bq

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Issue the bq cp command. Optional flags can be used to control the write disposition of the destination table:

- -a or --append_table appends the data from the source table to an existing table in the destination dataset.

- -f or --force overwrites an existing table in the destination dataset and doesn't prompt you for confirmation.

- -n or --no_clobber returns the following error message if the table exists in the destination dataset: Table 'project_id:dataset.table' already exists, skipping. If -n is not specified, the default behavior is to prompt you to choose whether to replace the destination table.

- --destination_kms_key is the customer-managed Cloud KMS key used to encrypt the destination table.

--destination_kms_key is not demonstrated here. See Protecting data with Cloud Key Management Service keys for more information.

If the source or destination dataset is in a project other than your default project, add the project ID to the dataset names in the following format: project_id:dataset.

(Optional) Supply the --location flag and set the value to your location.

bq --location=location cp \
  -a -f -n \
  project_id:dataset.source_table \
  project_id:dataset.destination_table

Replace the following:

- location: the name of your location. The --location flag is optional. For example, if you are using BigQuery in the Tokyo region, you can set the flag's value to asia-northeast1. You can set a default value for the location using the .bigqueryrc file.

- project_id: your project ID.

- dataset: the name of the source or destination dataset.

- source_table: the table you're copying.

- destination_table: the name of the table in the destination dataset.

Examples:

To copy the mydataset.mytable table to the mydataset2.mytable2 table, enter the following command. Both datasets are in your default project.

bq cp mydataset.mytable mydataset2.mytable2

To copy the mydataset.mytable table and to overwrite a destination table with the same name, enter the following command. The source dataset is in your default project. The destination dataset is in the myotherproject project. The -f shortcut is used to overwrite the destination table without a prompt.

bq cp -f \
  mydataset.mytable \
  myotherproject:myotherdataset.mytable

To copy the mydataset.mytable table and to return an error if the destination dataset contains a table with the same name, enter the following command. The source dataset is in your default project. The destination dataset is in the myotherproject project. The -n shortcut is used to prevent overwriting a table with the same name.

bq cp -n \
  mydataset.mytable \
  myotherproject:myotherdataset.mytable

To copy the mydataset.mytable table and to append the data to a destination table with the same name, enter the following command. The source dataset is in your default project. The destination dataset is in the myotherproject project. The -a shortcut is used to append to the destination table.

bq cp -a mydataset.mytable myotherproject:myotherdataset.mytable

API

You can copy an existing table through the API by calling the bigquery.jobs.insert method, and configuring a copy job. Specify your location in the location property in the jobReference section of the job resource.

You must specify the following values in your job configuration:

"copy": {
  "sourceTable": {       // Required
    "projectId": string, // Required
    "datasetId": string, // Required
    "tableId": string    // Required
  },
  "destinationTable": {  // Required
    "projectId": string, // Required
    "datasetId": string, // Required
    "tableId": string    // Required
  },
  "createDisposition": string,  // Optional
  "writeDisposition": string,   // Optional
},

Where sourceTable provides information about the table to be copied, destinationTable provides information about the new table, createDisposition specifies whether to create the table if it doesn't exist, and writeDisposition specifies whether to overwrite or append to an existing table.
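The configuration above can be assembled programmatically. The following Python sketch builds a jobs.insert request body for a single-table copy without sending it. The helper names are hypothetical, the table identifiers are placeholders, and the WRITE_EMPTY value is taken from the BigQuery job configuration reference as an assumption, since this page does not list the allowed disposition values.

```python
import json


def table_ref(project_id, dataset_id, table_id):
    """Return a table reference with the three required fields."""
    return {"projectId": project_id, "datasetId": dataset_id, "tableId": table_id}


def build_copy_job(source, destination, location=None,
                   create_disposition=None, write_disposition=None):
    """Build a job resource containing a copy configuration."""
    copy_config = {"sourceTable": source, "destinationTable": destination}
    # Optional properties are included only when the caller sets them.
    if create_disposition is not None:
        copy_config["createDisposition"] = create_disposition
    if write_disposition is not None:
        copy_config["writeDisposition"] = write_disposition
    job = {"configuration": {"copy": copy_config}}
    if location is not None:
        # The location goes in the jobReference section of the job resource.
        job["jobReference"] = {"location": location}
    return job


job = build_copy_job(
    table_ref("myproject", "mydataset", "table1"),
    table_ref("myproject", "mydataset", "table1copy"),
    location="asia-northeast1",
    write_disposition="WRITE_EMPTY",  # assumed value: fail if the table has data
)
print(json.dumps(job, indent=2))
```

Leaving both dispositions unset keeps the request minimal and defers to the service defaults.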

C#

Before trying this sample, follow the C# setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery C# API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

PHP

Before trying this sample, follow the PHP setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery PHP API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Copy multiple source tables

You can copy multiple source tables to a destination table in the following ways:

- Using the bq command-line tool's bq cp command.

- Calling the jobs.insert method, configuring a copy job, and specifying the sourceTables property.

- Using the client libraries.

All source tables must have identical schemas and tags, and only one destination table is allowed.

Source tables must be specified as a comma-separated list. You can't use wildcards when you copy multiple source tables.

To copy multiple source tables, select one of the following choices:

bq

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Issue the bq cp command and include multiple source tables as a comma-separated list. Optional flags can be used to control the write disposition of the destination table:

- -a or --append_table appends the data from the source tables to an existing table in the destination dataset.

- -f or --force overwrites an existing destination table in the destination dataset and doesn't prompt you for confirmation.

- -n or --no_clobber returns the following error message if the table exists in the destination dataset: Table 'project_id:dataset.table' already exists, skipping. If -n is not specified, the default behavior is to prompt you to choose whether to replace the destination table.

- --destination_kms_key is the customer-managed Cloud Key Management Service key used to encrypt the destination table.

--destination_kms_key is not demonstrated here. See Protecting data with Cloud Key Management Service keys for more information.

If the source or destination dataset is in a project other than your default project, add the project ID to the dataset names in the following format: project_id:dataset.

(Optional) Supply the --location flag and set the value to your location.

bq --location=location cp \
  -a -f -n \
  project_id:dataset.source_table,project_id:dataset.source_table \
  project_id:dataset.destination_table

Replace the following:

- location: the name of your location. The --location flag is optional. For example, if you are using BigQuery in the Tokyo region, you can set the flag's value to asia-northeast1. You can set a default value for the location using the .bigqueryrc file.

- project_id: your project ID.

- dataset: the name of the source or destination dataset.

- source_table: the table that you're copying.

- destination_table: the name of the table in the destination dataset.

Examples:

To copy the mydataset.mytable table and the mydataset.mytable2 table to the mydataset2.tablecopy table, enter the following command. All datasets are in your default project.

bq cp \
  mydataset.mytable,mydataset.mytable2 \
  mydataset2.tablecopy

To copy the mydataset.mytable table and the mydataset.mytable2 table to the myotherdataset.mytable table and to overwrite a destination table with the same name, enter the following command. The destination dataset is in the myotherproject project, not your default project. The -f shortcut is used to overwrite the destination table without a prompt.

bq cp -f \
  mydataset.mytable,mydataset.mytable2 \
  myotherproject:myotherdataset.mytable

To copy the myproject:mydataset.mytable table and the myproject:mydataset.mytable2 table and to return an error if the destination dataset contains a table with the same name, enter the following command. The destination dataset is in the myotherproject project. The -n shortcut is used to prevent overwriting a table with the same name.

bq cp -n \
  myproject:mydataset.mytable,myproject:mydataset.mytable2 \
  myotherproject:myotherdataset.mytable

To copy the mydataset.mytable table and the mydataset.mytable2 table and to append the data to a destination table with the same name, enter the following command. The source dataset is in your default project. The destination dataset is in the myotherproject project. The -a shortcut is used to append to the destination table.

bq cp -a \
  mydataset.mytable,mydataset.mytable2 \
  myotherproject:myotherdataset.mytable
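When the list of source tables is generated by a script, it can help to assemble the comma-separated argument in code. The following Python sketch builds the bq cp argument list and rejects wildcard characters up front, since wildcards aren't supported for multi-table copies. The helper name and the mode keywords are hypothetical; only the -a, -f, and -n flags come from the description above. Nothing is executed.

```python
import shlex

# Hypothetical mode names mapped to the bq cp shortcuts described above.
MODE_FLAGS = {"append": "-a", "force": "-f", "no_clobber": "-n"}


def bq_cp_args(sources, destination, mode=None):
    """Return the argument list for copying several source tables with bq cp."""
    if not sources:
        raise ValueError("at least one source table is required")
    for source in sources:
        if "*" in source:
            raise ValueError(f"wildcards are not supported: {source}")
    args = ["bq", "cp"]
    if mode is not None:
        args.append(MODE_FLAGS[mode])
    # Source tables are passed as a single comma-separated argument.
    args.append(",".join(sources))
    args.append(destination)
    return args


args = bq_cp_args(
    ["mydataset.mytable", "mydataset.mytable2"],
    "myotherproject:myotherdataset.mytable",
    mode="force",
)
print(shlex.join(args))
```

Accepting a single mode rather than several flags reflects that appending, overwriting, and refusing to overwrite are alternatives.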

API

To copy multiple tables using the API, call the jobs.insert method, configure a table copy job, and specify the sourceTables property.

Specify your region in the location property in the jobReference section of the job resource.
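As a sketch of how the sourceTables property might be populated, the following Python example parses table names written in the project_id:dataset.table form used by the bq examples and builds a copy job body with several sources. The parser, its default-project fallback, and the WRITE_APPEND value are assumptions for illustration; the request is built but not sent.

```python
import json


def parse_table_id(spec, default_project):
    """Split "project:dataset.table" or "dataset.table" into a table reference."""
    project, sep, rest = spec.rpartition(":")
    if not sep:
        # No project prefix, so fall back to the caller's default project.
        project = default_project
    dataset, dot, table = rest.partition(".")
    if not dot or not dataset or not table:
        raise ValueError(f"expected dataset.table, got: {spec}")
    return {"projectId": project, "datasetId": dataset, "tableId": table}


def build_multi_copy_job(sources, destination, default_project, location=None):
    """Build a job resource that copies several source tables into one destination."""
    copy_config = {
        "sourceTables": [parse_table_id(s, default_project) for s in sources],
        "destinationTable": parse_table_id(destination, default_project),
        "writeDisposition": "WRITE_APPEND",  # assumed value: append to the destination
    }
    job = {"configuration": {"copy": copy_config}}
    if location is not None:
        job["jobReference"] = {"location": location}
    return job


job = build_multi_copy_job(
    ["mydataset.mytable", "mydataset.mytable2"],
    "myotherproject:myotherdataset.mytable",
    default_project="myproject",
)
print(json.dumps(job, indent=2))
```

The service still requires that all source tables have identical schemas and tags; this sketch does not check that.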

Go

Before trying this sample, follow the Go setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Go API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Node.js

Before trying this sample, follow the Node.js setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Node.js API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Python

Before trying this sample, follow the Python setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Python API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Copy tables across regions

You can copy a table, table snapshot, or table clone from one BigQuery region or multi-region to another. This includes any tables that have customer-managed encryption keys (CMEK) from Cloud KMS applied.

Copying a table across regions incurs additional data transfer charges according to BigQuery pricing. Additional charges are incurred even if you cancel the cross-region table copy job before it has been completed.

To copy a table across regions, select one of the following options:

bq

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Run the bq cp command:

bq cp \
-f -n \
SOURCE_PROJECT:SOURCE_DATASET.SOURCE_TABLE \
DESTINATION_PROJECT:DESTINATION_DATASET.DESTINATION_TABLE

Replace the following:

- SOURCE_PROJECT: source project ID. If the source dataset is in a project other than your default project, add the project ID to the source dataset name.

- DESTINATION_PROJECT: destination project ID. If the destination dataset is in a project other than your default project, add the project ID to the destination dataset name.

- SOURCE_DATASET: the name of the source dataset.

- DESTINATION_DATASET: the name of the destination dataset.

- SOURCE_TABLE: the table that you are copying.

- DESTINATION_TABLE: the name of the table in the destination dataset.

The following example copies the mydataset_us.mytable table from the us multi-region to the mydataset_eu.mytable2 table in the eu multi-region. Both datasets are in the default project.

bq cp --sync=false mydataset_us.mytable mydataset_eu.mytable2

To copy a table across regions into a CMEK-enabled destination dataset, you must enable CMEK on the table with a key from the table's region. The CMEK on the table doesn't have to be the same CMEK in use by the destination dataset. The following example copies a CMEK-enabled table to a destination dataset using the bq cp command.

bq cp source-project-id:source-dataset-id.source-table-id destination-project-id:destination-dataset-id.destination-table-id

Conversely, to copy a CMEK-enabled table across regions into a destination dataset, you can enable CMEK on the destination dataset with a key from the destination dataset's region. You can also use the --destination_kms_key flag in the bq cp command, as shown in the following example:

bq cp --destination_kms_key=projects/project_id/locations/eu/keyRings/eu_key/cryptoKeys/eu_region mydataset_us.mytable mydataset_eu.mytable2
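If you script cross-region copies, a small wrapper can assemble the bq cp arguments shown in the preceding examples. The following Python sketch is one possible shape; the function name bq_cp_command and its parameters are hypothetical, and it only builds the argument list rather than running the command.

```python
import shlex
from typing import List, Optional


def bq_cp_command(source: str, destination: str,
                  kms_key: Optional[str] = None,
                  sync: bool = True) -> List[str]:
    """Build the argument list for a single-source `bq cp` invocation."""
    args = ["bq", "cp"]
    if not sync:
        # Same flag as in the us-to-eu example above.
        args.append("--sync=false")
    if kms_key:
        # Key from the destination dataset's region.
        args.append(f"--destination_kms_key={kms_key}")
    args += [source, destination]
    return args


cmd = bq_cp_command(
    "mydataset_us.mytable",
    "mydataset_eu.mytable2",
    kms_key="projects/project_id/locations/eu/keyRings/eu_key/cryptoKeys/eu_region",
)
# shlex.join quotes arguments only when the shell would need it.
print(shlex.join(cmd))
```

Returning a list instead of a string lets you pass the result to subprocess.run without invoking a shell, which avoids quoting problems if a table name ever contains unusual characters.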

API

To copy a table across regions using the API, call the jobs.insert method and configure a table copy job.

Specify your region in the location property in the jobReference section of the job resource.

C#, Go, Java, Node.js, PHP, Python

Before trying a sample in one of these languages, follow that language's setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery API reference documentation for that language.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Limitations

Copying a table across regions is subject to the following limitations:

- You can't copy a table using the Google Cloud console or the TABLE COPY DDL statement.

- You can't copy a table if there are any policy tags on the source table.

- You can't copy a table if the source table is larger than 20 physical TiB. To find the physical size of the source table, see Get information about tables. Additionally, source tables that are larger than 1 physical TiB might need multiple retries to copy successfully across regions.

- You can't copy IAM policies associated with the tables. You can apply the same policies to the destination after the copy is completed.

- If the copy operation overwrites an existing table, tags on the existing table are removed.

- You can't copy multiple source tables into a single destination table.

- You can't copy tables in append mode.

- Time travel information is not copied to the destination region.

- When you copy a table clone or snapshot to a new region, a full copy of the table is created. This incurs additional storage costs.
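Some of these limitations can be checked before you submit a copy job. The following Python sketch is an illustrative pre-flight check, not part of any BigQuery library: the CopyPlan class and check_cross_region_copy function are hypothetical, and the caller is assumed to already know the source table's physical size and whether it has policy tags.

```python
from dataclasses import dataclass
from typing import List, Tuple

TIB = 1024 ** 4  # bytes in one tebibyte


@dataclass
class CopyPlan:
    """Hypothetical description of a planned cross-region copy."""
    source_tables: List[str]
    source_physical_bytes: int
    source_has_policy_tags: bool = False
    append: bool = False


def check_cross_region_copy(plan: CopyPlan) -> Tuple[List[str], List[str]]:
    """Return (errors, warnings) based on the limitations listed above."""
    errors: List[str] = []
    warnings: List[str] = []
    if len(plan.source_tables) > 1:
        errors.append("multiple source tables can't be copied into one destination")
    if plan.append:
        errors.append("append mode isn't supported across regions")
    if plan.source_has_policy_tags:
        errors.append("source table has policy tags")
    if plan.source_physical_bytes > 20 * TIB:
        errors.append("source table is larger than 20 physical TiB")
    elif plan.source_physical_bytes > 1 * TIB:
        warnings.append("source table is larger than 1 physical TiB; retries may be needed")
    return errors, warnings


errors, warnings = check_cross_region_copy(
    CopyPlan(["mydataset_us.mytable"], source_physical_bytes=5 * TIB)
)
print("errors:", errors)
print("warnings:", warnings)
```

The check covers only the limitations that can be evaluated from a few inputs. Items such as IAM policies, tags on an overwritten table, and time travel data describe what the copy does not carry over, so they are left to the reader to handle after the job completes.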

View current quota usage

You can view your current usage of query, load, extract, or copy jobs by running an INFORMATION_SCHEMA query to view metadata about the jobs that ran over a specified time period. You can compare your current usage against the quota limit to determine your quota usage for a particular type of job. The following example query uses the INFORMATION_SCHEMA.JOBS_BY_PROJECT view to list the number of query, load, extract, and copy jobs created in the project on the current date:

SELECT
  SUM(CASE WHEN job_type = "QUERY" THEN 1 ELSE 0 END) AS QRY_CNT,
  SUM(CASE WHEN job_type = "LOAD" THEN 1 ELSE 0 END) AS LOAD_CNT,
  SUM(CASE WHEN job_type = "EXTRACT" THEN 1 ELSE 0 END) AS EXT_CNT,
  SUM(CASE WHEN job_type = "COPY" THEN 1 ELSE 0 END) AS CPY_CNT
FROM
  `region-REGION_NAME`.INFORMATION_SCHEMA.JOBS_BY_PROJECT
WHERE
  DATE(creation_time) = CURRENT_DATE()

To view the quota limits for copy jobs, see Quotas and limits - Copy jobs.
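To make the comparison between usage and limit concrete, the following Python sketch fills in the REGION_NAME placeholder of the query above and computes usage as a fraction of a limit. The function names are hypothetical, the query is only built as a string (you would still run it yourself), and the limit in the usage example is a made-up number, not an actual BigQuery quota.

```python
import re

JOB_COUNT_QUERY = """
SELECT
  SUM(CASE WHEN job_type = 'QUERY' THEN 1 ELSE 0 END) AS QRY_CNT,
  SUM(CASE WHEN job_type = 'LOAD' THEN 1 ELSE 0 END) AS LOAD_CNT,
  SUM(CASE WHEN job_type = 'EXTRACT' THEN 1 ELSE 0 END) AS EXT_CNT,
  SUM(CASE WHEN job_type = 'COPY' THEN 1 ELSE 0 END) AS CPY_CNT
FROM `region-{region}`.INFORMATION_SCHEMA.JOBS_BY_PROJECT
WHERE DATE(creation_time) = CURRENT_DATE()
"""


def job_count_query(region_name: str) -> str:
    """Return the job-count query for one region, for example 'us'."""
    # Restrict the region to simple characters so it is safe to interpolate.
    if not re.fullmatch(r"[a-z0-9-]+", region_name):
        raise ValueError(f"unexpected region name: {region_name!r}")
    return JOB_COUNT_QUERY.format(region=region_name).strip()


def quota_usage(job_count: int, quota_limit: int) -> float:
    """Return usage as a fraction of the limit (0.25 means 25% used)."""
    if quota_limit <= 0:
        raise ValueError("quota_limit must be positive")
    return job_count / quota_limit


print(job_count_query("us"))
# Placeholder numbers: look up the real copy-job limit in Quotas and limits.
print(f"copy job quota used: {quota_usage(250, 1000):.0%}")
```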

Delete tables

You can delete a table in the following ways:

- Using the Google Cloud console.

- Using a data definition language (DDL) DROP TABLE statement.

- Using the bq command-line tool bq rm command.

- Calling the tables.delete API method.

- Using the client libraries.

To delete all of the tables in the dataset, delete the dataset.

When you delete a table, any data in the table is also deleted. To automatically delete tables after a specified period of time, set the default table expiration for the dataset or set the expiration time when you create the table.

Deleting a table also deletes any permissions associated with this table. When you recreate a deleted table, you must also manually reconfigure any access permissions previously associated with it.

Required roles

To get the permissions that you need to delete a table, ask your administrator to grant you the Data Editor (roles/bigquery.dataEditor) IAM role on the dataset.

For more information about granting roles, see Manage access to projects, folders, and organizations.

This predefined role contains the permissions required to delete a table. To see the exact permissions that are required, expand the Required permissions section:

Required permissions

The following permissions are required to delete a table:

- bigquery.tables.delete

- bigquery.tables.get

You might also be able to get these permissions with custom roles or other predefined roles.

Delete a table

To delete a table:

Console

In the Explorer panel, expand your project and dataset, then select the table.

In the details panel, click Delete table.

Type "delete" in the dialog, then click Delete to confirm.

SQL

Use the DROP TABLE statement.

The following example deletes a table named mytable:

In the Google Cloud console, go to the BigQuery page.

In the query editor, enter the following statement:

DROP TABLE mydataset.mytable;

Click Run.

For more information about how to run queries, see Run an interactive query.

bq

In the Google Cloud console, activate Cloud Shell.

At the bottom of the Google Cloud console, a Cloud Shell session starts and displays a command-line prompt. Cloud Shell is a shell environment with the Google Cloud CLI already installed and with values already set for your current project. It can take a few seconds for the session to initialize.

Use the bq rm command with the --table flag (or -t shortcut) to delete a table. When you use the bq command-line tool to remove a table, you must confirm the action. You can use the --force flag (or -f shortcut) to skip confirmation.

If the table is in a dataset in a project other than your default project, add the project ID to the dataset name in the following format: project_id:dataset.

bq rm \
-f \
-t \
project_id:dataset.table

Replace the following:

- project_id: your project ID

- dataset: the name of the dataset that contains the table

- table: the name of the table that you're deleting

Examples:

To delete the mytable table from the mydataset dataset, enter the following command. The mydataset dataset is in your default project.

bq rm -t mydataset.mytable

To delete the mytable table from the mydataset dataset, enter the following command. The mydataset dataset is in the myotherproject project, not your default project.

bq rm -t myotherproject:mydataset.mytable

To delete the mytable table from the mydataset dataset, enter the following command. The mydataset dataset is in your default project. The command uses the -f shortcut to bypass confirmation.

bq rm -f -t mydataset.mytable

API

Call the tables.delete API method and specify the table to delete using the tableId parameter.
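For orientation, the following Python sketch shows how a tables.delete call could be addressed over REST. It assumes the public BigQuery v2 endpoint and the projects/datasets/tables path layout; the function name tables_delete_request is hypothetical, and the sketch only builds the HTTP method and URL without sending a request or handling authentication.

```python
from typing import Tuple
from urllib.parse import quote

# Assumed base URL of the BigQuery v2 REST API.
BASE_URL = "https://bigquery.googleapis.com/bigquery/v2"


def tables_delete_request(project_id: str, dataset_id: str,
                          table_id: str) -> Tuple[str, str]:
    """Return the (HTTP method, URL) pair for deleting one table."""
    # Percent-encode each path segment so unusual characters stay in place.
    path = "/projects/{}/datasets/{}/tables/{}".format(
        quote(project_id, safe=""),
        quote(dataset_id, safe=""),
        quote(table_id, safe=""),
    )
    return "DELETE", BASE_URL + path


method, url = tables_delete_request("myotherproject", "mydataset", "mytable")
print(method, url)
```

In practice, a client library wraps this call and supplies credentials for you, so a hand-built request like this is mainly useful for understanding which identifiers the method needs.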

C#, Go, Java, Node.js, PHP, Python, Ruby

Before trying a sample in one of these languages, follow that language's setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery API reference documentation for that language.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Restore deleted tables

To learn how to restore or undelete deleted tables, see Restore deleted tables.

Table security

To control access to tables in BigQuery, see Control access to resources with IAM.

What's next

- For more information about creating and using tables, see Creating and using tables.

- For more information about handling data, see Working With table data.

- For more information about specifying table schemas, see Specifying a schema.

- For more information about modifying table schemas, see Modifying table schemas.

- For more information about datasets, see Introduction to datasets.

- For more information about views, see Introduction to views.