Migrate schema and data from Amazon Redshift

This document describes how to migrate data from Amazon Redshift to BigQuery using public IP addresses.

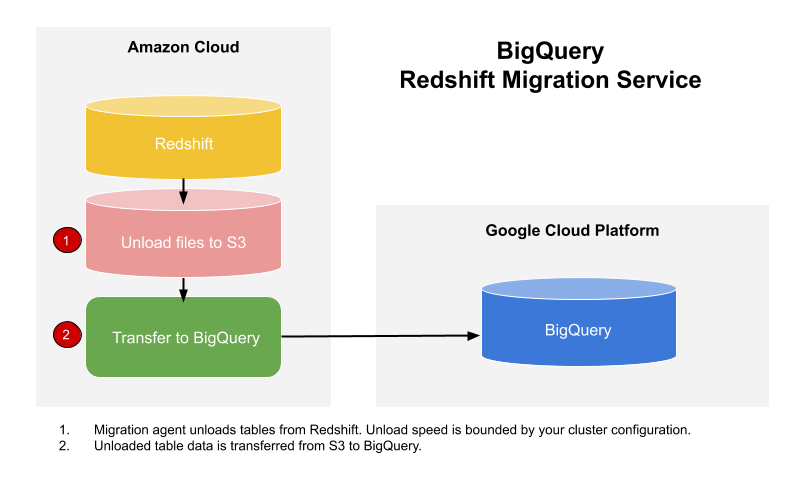

You can use the BigQuery Data Transfer Service to copy your data from an Amazon Redshift data warehouse to BigQuery. The service engages migration agents in GKE and triggers an unload operation from Amazon Redshift to a staging area in an Amazon S3 bucket. The BigQuery Data Transfer Service then transfers the data from the Amazon S3 bucket to BigQuery.

The preceding diagram shows the overall flow of data between an Amazon Redshift data warehouse and BigQuery during a migration.

If you want to transfer data from your Amazon Redshift instance through a virtual private cloud (VPC) using private IP addresses, see Migrate Amazon Redshift data with VPC.

Before you begin

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

- Make sure that billing is enabled for your Google Cloud project.

- Enable the BigQuery and BigQuery Data Transfer Service APIs.

Set required permissions

Before creating an Amazon Redshift transfer, do the following:

Make sure that the principal creating the transfer has the following permissions in the project that contains the transfer job:

- The `bigquery.transfers.update` permission to create the transfer
- The `bigquery.datasets.get` and `bigquery.datasets.update` permissions on the target dataset

The predefined Identity and Access Management (IAM) role `roles/bigquery.admin` includes the `bigquery.transfers.update`, `bigquery.datasets.update`, and `bigquery.datasets.get` permissions. For more information about IAM roles in the BigQuery Data Transfer Service, see Access control.

Consult the Amazon S3 documentation and make sure that you have configured the permissions needed to enable the transfer. At a minimum, the Amazon S3 source data must have the AWS managed policy `AmazonS3ReadOnlyAccess` applied to it.

Create a dataset

Create a BigQuery dataset to store your data. You don't need to create any tables.

Grant access to your Amazon Redshift cluster

Follow the instructions in Configure inbound rules for SQL clients to add the following IP addresses to the allowlist. You can allowlist only the IP addresses that correspond to your dataset's location, or you can allowlist all of the IP addresses in the following tables. These Google-owned IP addresses are reserved for Amazon Redshift data migrations.

Regional locations

| Region description | Region name | IP addresses |
|---|---|---|
| Americas | | |
| Columbus, Ohio | us-east5 | 34.162.72.184 34.162.173.185 34.162.205.205 34.162.81.45 34.162.182.149 34.162.59.92 34.162.157.190 34.162.191.145 |
| Dallas | us-south1 | 34.174.172.89 34.174.40.67 34.174.5.11 34.174.96.109 34.174.148.99 34.174.176.19 34.174.253.135 34.174.129.163 |
| Iowa | us-central1 | 34.121.70.114 34.71.81.17 34.122.223.84 34.121.145.212 35.232.1.105 35.202.145.227 35.226.82.216 35.225.241.102 |
| Las Vegas | us-west4 | 34.125.53.201 34.125.69.174 34.125.159.85 34.125.152.1 34.125.195.166 34.125.50.249 34.125.68.55 34.125.91.116 |
| Los Angeles | us-west2 | 35.236.59.167 34.94.132.139 34.94.207.21 34.94.81.187 34.94.88.122 35.235.101.187 34.94.238.66 34.94.195.77 |
| Montreal | northamerica-northeast1 | 34.95.20.253 35.203.31.219 34.95.22.233 34.95.27.99 35.203.12.23 35.203.39.46 35.203.116.49 35.203.104.223 |
| Northern Virginia | us-east4 | 35.245.95.250 35.245.126.228 35.236.225.172 35.245.86.140 35.199.31.35 35.199.19.115 35.230.167.48 35.245.128.132 35.245.111.126 35.236.209.21 |
| Oregon | us-west1 | 35.197.117.207 35.199.178.12 35.197.86.233 34.82.155.140 35.247.28.48 35.247.31.246 35.247.106.13 34.105.85.54 |
| Salt Lake City | us-west3 | 34.106.37.58 34.106.85.113 34.106.28.153 34.106.64.121 34.106.246.131 34.106.56.150 34.106.41.31 34.106.182.92 |
| São Paulo | southamerica-east1 | 35.199.88.228 34.95.169.140 35.198.53.30 34.95.144.215 35.247.250.120 35.247.255.158 34.95.231.121 35.198.8.157 |
| Santiago | southamerica-west1 | 34.176.188.48 34.176.38.192 34.176.205.134 34.176.102.161 34.176.197.198 34.176.223.236 34.176.47.188 34.176.14.80 |
| South Carolina | us-east1 | 35.196.207.183 35.237.231.98 104.196.102.222 35.231.13.201 34.75.129.215 34.75.127.9 35.229.36.137 35.237.91.139 |
| Toronto | northamerica-northeast2 | 34.124.116.108 34.124.116.107 34.124.116.102 34.124.116.80 34.124.116.72 34.124.116.85 34.124.116.20 34.124.116.68 |
| Europe | | |
| Belgium | europe-west1 | 35.240.36.149 35.205.171.56 34.76.234.4 35.205.38.234 34.77.237.73 35.195.107.238 35.195.52.87 34.76.102.189 |
| Berlin | europe-west10 | 34.32.28.80 34.32.31.206 34.32.19.49 34.32.33.71 34.32.15.174 34.32.23.7 34.32.1.208 34.32.8.3 |
| Finland | europe-north1 | 35.228.35.94 35.228.183.156 35.228.211.18 35.228.146.84 35.228.103.114 35.228.53.184 35.228.203.85 35.228.183.138 |
| Frankfurt | europe-west3 | 35.246.153.144 35.198.80.78 35.246.181.106 35.246.211.135 34.89.165.108 35.198.68.187 35.242.223.6 34.89.137.180 |
| London | europe-west2 | 35.189.119.113 35.189.101.107 35.189.69.131 35.197.205.93 35.189.121.178 35.189.121.41 35.189.85.30 35.197.195.192 |
| Madrid | europe-southwest1 | 34.175.99.115 34.175.186.237 34.175.39.130 34.175.135.49 34.175.1.49 34.175.95.94 34.175.102.118 34.175.166.114 |
| Milan | europe-west8 | 34.154.183.149 34.154.40.104 34.154.59.51 34.154.86.2 34.154.182.20 34.154.127.144 34.154.201.251 34.154.0.104 |
| Netherlands | europe-west4 | 35.204.237.173 35.204.18.163 34.91.86.224 34.90.184.136 34.91.115.67 34.90.218.6 34.91.147.143 34.91.253.1 |
| Paris | europe-west9 | 34.163.76.229 34.163.153.68 34.155.181.30 34.155.85.234 34.155.230.192 34.155.175.220 34.163.68.177 34.163.157.151 |
| Turin | europe-west12 | 34.17.15.186 34.17.44.123 34.17.41.160 34.17.47.82 34.17.43.109 34.17.38.236 34.17.34.223 34.17.16.47 |
| Warsaw | europe-central2 | 34.118.72.8 34.118.45.245 34.118.69.169 34.116.244.189 34.116.170.150 34.118.97.148 34.116.148.164 34.116.168.127 |
| Zurich | europe-west6 | 34.65.205.160 34.65.121.140 34.65.196.143 34.65.9.133 34.65.156.193 34.65.216.124 34.65.233.83 34.65.168.250 |
| Asia Pacific | | |
| Delhi | asia-south2 | 34.126.212.96 34.126.212.85 34.126.208.224 34.126.212.94 34.126.208.226 34.126.212.232 34.126.212.93 34.126.212.206 |
| Hong Kong | asia-east2 | 34.92.245.180 35.241.116.105 35.220.240.216 35.220.188.244 34.92.196.78 34.92.165.209 35.220.193.228 34.96.153.178 |
| Jakarta | asia-southeast2 | 34.101.79.105 34.101.129.32 34.101.244.197 34.101.100.180 34.101.109.205 34.101.185.189 34.101.179.27 34.101.197.251 |
| Melbourne | australia-southeast2 | 34.126.196.95 34.126.196.106 34.126.196.126 34.126.196.96 34.126.196.112 34.126.196.99 34.126.196.76 34.126.196.68 |
| Mumbai | asia-south1 | 34.93.67.112 35.244.0.1 35.200.245.13 35.200.203.161 34.93.209.130 34.93.120.224 35.244.10.12 35.200.186.100 |
| Osaka | asia-northeast2 | 34.97.94.51 34.97.118.176 34.97.63.76 34.97.159.156 34.97.113.218 34.97.4.108 34.97.119.140 34.97.30.191 |
| Seoul | asia-northeast3 | 34.64.152.215 34.64.140.241 34.64.133.199 34.64.174.192 34.64.145.219 34.64.136.56 34.64.247.158 34.64.135.220 |
| Singapore | asia-southeast1 | 34.87.12.235 34.87.63.5 34.87.91.51 35.198.197.191 35.240.253.175 35.247.165.193 35.247.181.82 35.247.189.103 |
| Sydney | australia-southeast1 | 35.189.33.150 35.189.38.5 35.189.29.88 35.189.22.179 35.189.20.163 35.189.29.83 35.189.31.141 35.189.14.219 |
| Taiwan | asia-east1 | 35.221.201.20 35.194.177.253 34.80.17.79 34.80.178.20 34.80.174.198 35.201.132.11 35.201.223.177 35.229.251.28 35.185.155.147 35.194.232.172 |
| Tokyo | asia-northeast1 | 34.85.11.246 34.85.30.58 34.85.8.125 34.85.38.59 34.85.31.67 34.85.36.143 34.85.32.222 34.85.18.128 34.85.23.202 34.85.35.192 |
| Middle East | | |
| Dammam | me-central2 | 34.166.20.177 34.166.10.104 34.166.21.128 34.166.19.184 34.166.20.83 34.166.18.138 34.166.18.48 34.166.23.171 |
| Doha | me-central1 | 34.18.48.121 34.18.25.208 34.18.38.183 34.18.33.25 34.18.21.203 34.18.21.80 34.18.36.126 34.18.23.252 |
| Tel Aviv | me-west1 | 34.165.184.115 34.165.110.74 34.165.174.16 34.165.28.235 34.165.170.172 34.165.187.98 34.165.85.64 34.165.245.97 |
| Africa | | |
| Johannesburg | africa-south1 | 34.35.11.24 34.35.10.66 34.35.8.32 34.35.3.248 34.35.2.113 34.35.5.61 34.35.7.53 34.35.3.17 |

Multi-regional locations

| Multi-region description | Multi-region name | IP addresses |
|---|---|---|
| Data centers within member states of the European Union¹ | EU | 34.76.156.158 34.76.156.172 34.76.136.146 34.76.1.29 34.76.156.232 34.76.156.81 34.76.156.246 34.76.102.206 34.76.129.246 34.76.121.168 |
| Data centers in the United States | US | 35.185.196.212 35.197.102.120 35.185.224.10 35.185.228.170 35.197.5.235 35.185.206.139 35.197.67.234 35.197.38.65 35.185.202.229 35.185.200.120 |

¹ Data located in the EU multi-region is not stored in the europe-west2 (London) or europe-west6 (Zurich) data centers.
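As an illustration of the allowlisting step, the following minimal sketch turns the IP addresses for one region into single-host CIDR entries that could be pasted into inbound rules. The addresses are copied from the us-east5 row of the regional table. The rule dictionary shape and the `to_cidr_rules` helper are hypothetical, and port 5439 is only an assumption based on the example JDBC URL used later in this document; use the port your cluster actually listens on.

```python
import ipaddress

# Addresses copied from the us-east5 (Columbus, Ohio) row of the regional table.
US_EAST5_IPS = [
    "34.162.72.184", "34.162.173.185", "34.162.205.205", "34.162.81.45",
    "34.162.182.149", "34.162.59.92", "34.162.157.190", "34.162.191.145",
]


def to_cidr_rules(addresses, port=5439):
    """Return one hypothetical inbound-rule dict per address as a /32 CIDR."""
    rules = []
    for addr in addresses:
        # ip_address() raises ValueError if an entry was mistyped.
        ip = ipaddress.ip_address(addr)
        rules.append({"cidr": f"{ip}/32", "protocol": "tcp", "port": port})
    return rules


rules = to_cidr_rules(US_EAST5_IPS)
for rule in rules:
    print(rule["cidr"], rule["protocol"], rule["port"])
```

Validating each address before building the rule catches copy and paste mistakes early, before they reach the security group.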

Grant access to your Amazon S3 bucket

You must have an Amazon S3 bucket to use as a staging area for transferring the Amazon Redshift data to BigQuery. For detailed instructions, see the Amazon documentation.

We recommend that you create a dedicated Amazon IAM user and grant that user only read access to Amazon Redshift and read and write access to Amazon S3. To achieve this step, you can apply the following policies:

Create an Amazon IAM user access key pair.

Configure workload control with a separate migration queue

Optionally, you can define an Amazon Redshift queue for migration purposes to limit and separate the resources used for migration. You can configure this migration queue with a maximum concurrent query count. You can then associate a specific migration user group with the queue and use those credentials when setting up the migration to transfer data to BigQuery. The transfer service only has access to the migration queue.
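A dedicated queue of this kind could be expressed as a workload management (WLM) configuration. The sketch below only builds and prints a JSON document. The key names `user_group` and `query_concurrency` are assumed to follow the format of the Amazon Redshift `wlm_json_configuration` parameter and should be checked against the Amazon documentation; the group name `migration_users` and the concurrency values are hypothetical.

```python
import json


def migration_wlm_config(user_group="migration_users", concurrency=5):
    """Build a two-queue WLM layout: a migration queue, then a default queue.

    Key names are an assumption about the wlm_json_configuration format.
    """
    return [
        # Queue reserved for the hypothetical migration user group.
        {"user_group": [user_group], "query_concurrency": concurrency},
        # Default queue for every other user.
        {"query_concurrency": 5},
    ]


config = migration_wlm_config(concurrency=3)
print(json.dumps(config, indent=2))
```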

Gather transfer information

Gather the information that you need to set up the migration with the BigQuery Data Transfer Service:

- Follow these instructions to get the JDBC URL.

- Get the username and password of a user with appropriate permissions to your Amazon Redshift database.

- Follow the instructions in Grant access to your Amazon S3 bucket to get an AWS access key pair.

- Get the URI of the Amazon S3 bucket that you want to use for the transfer. We recommend that you set up a Lifecycle policy for this bucket to avoid unnecessary charges. The recommended expiration time is 24 hours, which allows enough time to transfer all data to BigQuery.
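The 24-hour expiration recommended above can be written as a lifecycle rule that expires staged objects after one day. This is a minimal sketch that only builds the rule as a dictionary; the shape is assumed to match what the AWS SDK for Python accepts as a bucket lifecycle configuration, and the rule ID and the `redshift-staging/` prefix are hypothetical.

```python
import json


def staging_lifecycle_config(prefix, days=1):
    """Build a lifecycle configuration that expires objects under `prefix`.

    One day is the smallest whole-day expiration and corresponds to the
    24-hour recommendation for the staging area.
    """
    if days < 1:
        raise ValueError("expiration must be at least one day")
    return {
        "Rules": [
            {
                "ID": "expire-redshift-staging",  # hypothetical rule name
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Expiration": {"Days": days},
            }
        ]
    }


lifecycle = staging_lifecycle_config("redshift-staging/")
print(json.dumps(lifecycle, indent=2))
```

Scoping the rule to a prefix keeps it from deleting unrelated objects when the bucket is shared with other workloads.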

Assess your data

As part of the data transfer, the BigQuery Data Transfer Service writes data from Amazon Redshift to Cloud Storage as CSV files. If these files contain the ASCII 0 character, they can't be loaded into BigQuery. We suggest that you assess your data to determine whether this could be an issue for you. If it is, you can work around it by exporting your data to Amazon S3 as Parquet files and then importing those files by using the BigQuery Data Transfer Service. For more information, see Overview of Amazon S3 transfers.
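One way to assess exported data is to scan sample files for the ASCII 0 byte before starting a transfer. The following sketch assumes you have a local copy of a representative CSV export; the `find_nul_byte` helper and the sample file contents are hypothetical.

```python
import os
import tempfile


def find_nul_byte(path, chunk_size=1 << 20):
    """Return the byte offset of the first ASCII 0 in the file, or None."""
    offset = 0
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                return None  # reached end of file without finding ASCII 0
            idx = chunk.find(b"\x00")
            if idx != -1:
                return offset + idx
            offset += len(chunk)


# Demonstration with a small temporary file that contains one ASCII 0 byte.
with tempfile.NamedTemporaryFile(delete=False, suffix=".csv") as tmp:
    tmp.write(b"id,name\n1,ab\x00c\n")
    sample_path = tmp.name

position = find_nul_byte(sample_path)
print("first ASCII 0 at byte offset:", position)
os.remove(sample_path)
```

Reading in fixed-size chunks keeps memory use flat for large exports, and a single-byte search cannot be split across a chunk boundary.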

Set up an Amazon Redshift transfer

Select one of the following options:

Console

In the Google Cloud console, go to the BigQuery page.

Click Data transfers.

Click Create transfer.

In the Source type section, select Migration: Amazon Redshift from the Source list.

In the Transfer config name section, enter a name for the transfer, such as `My migration`, in the Display name field. The display name can be any value that lets you easily identify the transfer if you need to modify it later.

In the Destination settings section, choose the dataset that you created from the Dataset list.

In the Data source details section, do the following:

- For JDBC connection url for Redshift, provide the JDBC URL to access your Amazon Redshift cluster.
- For Username of your database, enter the username for the Amazon Redshift database that you want to migrate.
- For Password of your database, enter the database password.
- For Access key ID and Secret access key, enter the access key pair that you obtained in Grant access to your Amazon S3 bucket.
- For Amazon S3 URI, enter the URI of the S3 bucket that you'll use as a staging area.
- For Redshift schema, enter the Amazon Redshift schema that you're migrating.
- For Table name patterns, specify a name or a pattern for matching the table names in the schema. You can use regular expressions to specify the pattern in the form `<table1Regex>;<table2Regex>`. The pattern must follow Java regular expression syntax. For example:
  - `lineitem;ordertb` matches the tables named `lineitem` and `ordertb`.
  - `.*` matches all tables.

  Leave this field empty to migrate all tables from the specified schema.
- For VPC and the reserved IP range, leave the field blank.

In the Service Account menu, select a service account from the service accounts associated with your Google Cloud project. You can associate a service account with your transfer instead of using your user credentials. For more information about using service accounts with data transfers, see Use service accounts.

- If you signed in with a federated identity, a service account is required to create a transfer. If you signed in with a Google Account, a service account for the transfer is optional.
- The service account must have the required permissions.

Optional: In the Notification options section, do the following:

- Click the toggle to enable email notifications. When you enable this option, the transfer administrator receives an email notification when a transfer run fails.
- For Select a Cloud Pub/Sub topic, choose your topic name or click Create a topic. This option configures Pub/Sub run notifications for your transfer.

Click Save.

The Google Cloud console displays all the transfer setup details, including a Resource name for this transfer.
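Before saving a configuration, it can help to preview which tables a Table name patterns value would select. The sketch below approximates the behavior described above with Python's `re` module. It assumes that each semicolon-separated pattern must match the whole table name and that an empty value selects every table; Java and Python regular expression syntax differ in some advanced features, so treat the result as an approximation. The table names are hypothetical.

```python
import re


def select_tables(table_names, patterns):
    """Return the table names matched by a ';'-separated pattern string."""
    if not patterns:
        # An empty field means "migrate all tables in the schema".
        return list(table_names)
    regexes = [re.compile(p) for p in patterns.split(";") if p]
    # Assumption: a pattern has to match the entire table name.
    return [t for t in table_names if any(r.fullmatch(t) for r in regexes)]


tables = ["lineitem", "ordertb", "orders_archive"]
print(select_tables(tables, "lineitem;ordertb"))
print(select_tables(tables, ".*"))
print(select_tables(tables, "order.*"))
```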

bq

Enter the `bq mk` command and supply the transfer creation flag `--transfer_config`. The following flags are also required:

- `--project_id`
- `--data_source`
- `--target_dataset`
- `--display_name`
- `--params`

bq mk \
--transfer_config \
--project_id=project_id \
--data_source=data_source \
--target_dataset=dataset \
--display_name=name \
--service_account_name=service_account \
--params='parameters'

Where:

- project_id is your Google Cloud project ID. If `--project_id` isn't specified, the default project is used.
- data_source is the data source: `redshift`.
- dataset is the BigQuery target dataset for the transfer configuration.
- name is the display name for the transfer configuration. The transfer name can be any value that lets you identify the transfer if you need to modify it later.
- service_account is the name of the service account used to authenticate your transfer. The service account must be owned by the same `project_id` that is used to create the transfer, and it must have all of the required permissions.
- parameters contains the parameters for the created transfer configuration in JSON format. For example: `--params='{"param":"param_value"}'`.

The parameters for an Amazon Redshift transfer configuration are:

- `jdbc_url`: The JDBC connection URL, used to locate the Amazon Redshift cluster.
- `database_username`: The username used to access your database to unload the specified tables.
- `database_password`: The password used with the username to access your database to unload the specified tables.
- `access_key_id`: The access key ID used to sign requests made to AWS.
- `secret_access_key`: The secret access key used with the access key ID to sign requests made to AWS.
- `s3_bucket`: The Amazon S3 URI, beginning with "s3://", that specifies a prefix for the temporary files to be used.
- `redshift_schema`: The Amazon Redshift schema that contains all the tables to be migrated.
- `table_name_patterns`: Table name patterns separated by a semicolon (;). The table pattern is a regular expression for the tables to migrate. If not provided, all tables in the database schema are migrated.

For example, the following command creates an Amazon Redshift transfer named My Transfer with a target dataset named mydataset in a project with the ID myproject.

bq mk \
--transfer_config \
--project_id=myproject \
--data_source=redshift \
--target_dataset=mydataset \
--display_name='My Transfer' \
--params='{"jdbc_url":"jdbc:postgresql://test-example-instance.sample.us-west-1.redshift.amazonaws.com:5439/dbname","database_username":"my_username","database_password":"1234567890","access_key_id":"A1B2C3D4E5F6G7H8I9J0","secret_access_key":"1234567890123456789012345678901234567890","s3_bucket":"s3://bucket/prefix","redshift_schema":"public","table_name_patterns":"table_name"}'
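Hand-writing the JSON passed to `--params` is error-prone, so one option is to generate the command from a dictionary. The following sketch only assembles and prints the command line; it does not run `bq`. The flags and parameter names are the ones listed above, while the `build_bq_mk_command` helper, the set of keys it treats as mandatory, and the placeholder values are assumptions for illustration.

```python
import json
import shlex

# Keys treated as mandatory in this sketch; table_name_patterns is left
# optional because omitting it migrates every table in the schema.
REQUIRED_PARAMS = {
    "jdbc_url", "database_username", "database_password",
    "access_key_id", "secret_access_key", "s3_bucket", "redshift_schema",
}


def build_bq_mk_command(project_id, dataset, display_name, params):
    """Return the bq mk argument list for an Amazon Redshift transfer."""
    missing = REQUIRED_PARAMS - params.keys()
    if missing:
        raise ValueError(f"missing parameters: {sorted(missing)}")
    if not params["s3_bucket"].startswith("s3://"):
        raise ValueError("s3_bucket must begin with s3://")
    return [
        "bq", "mk", "--transfer_config",
        f"--project_id={project_id}",
        "--data_source=redshift",
        f"--target_dataset={dataset}",
        f"--display_name={display_name}",
        f"--params={json.dumps(params)}",
    ]


example_params = {
    "jdbc_url": "jdbc:postgresql://test-example-instance.sample.us-west-1.redshift.amazonaws.com:5439/dbname",
    "database_username": "my_username",
    "database_password": "placeholder-password",
    "access_key_id": "placeholder-key-id",
    "secret_access_key": "placeholder-secret",
    "s3_bucket": "s3://bucket/prefix",
    "redshift_schema": "public",
}
command = build_bq_mk_command("myproject", "mydataset", "My Transfer", example_params)
# shlex.join quotes each argument so the line can be pasted into a shell.
print(shlex.join(command))
```

In practice, read the password and secret access key from a secret store or environment variables rather than embedding them in source code.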

API

Use the `projects.locations.transferConfigs.create` method and supply an instance of the `TransferConfig` resource.
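As a rough illustration of that call, the sketch below builds the request URL and JSON body without sending anything. The field names `destinationDatasetId`, `displayName`, `dataSourceId`, and `params` are taken from the `TransferConfig` resource as I understand it, and the `us` location and all values are hypothetical; verify them against the API reference before use.

```python
import json


def transfer_config_request(project_id, location, dataset, display_name, params):
    """Return the (url, body) pair for a transferConfigs.create request."""
    parent = f"projects/{project_id}/locations/{location}"
    url = f"https://bigquerydatatransfer.googleapis.com/v1/{parent}/transferConfigs"
    body = {
        "destinationDatasetId": dataset,
        "displayName": display_name,
        "dataSourceId": "redshift",
        # Same keys as the --params JSON of the bq command.
        "params": params,
    }
    return url, body


url, body = transfer_config_request(
    "myproject", "us", "mydataset", "My Transfer",
    {"redshift_schema": "public", "s3_bucket": "s3://bucket/prefix"},
)
print("POST", url)
print(json.dumps(body, indent=2))
```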

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Quotas and limits

BigQuery has a load quota of 15 TB for each load job for each table. Internally, Amazon Redshift compresses the table data, so the exported table size will be larger than the table size reported by Amazon Redshift. If you plan to migrate a table larger than 15 TB, contact Cloud Customer Care first.
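Because the exported size is larger than the size Amazon Redshift reports, a quick estimate can flag tables that might approach the 15 TB limit. The expansion factor in this sketch is purely an assumption for illustration; the real ratio depends on your data and column encodings, so measure it on a sample unload if the result is close to the limit.

```python
LOAD_QUOTA_TB = 15  # per load job, per table, as stated above


def may_exceed_load_quota(reported_tb, assumed_expansion=3.0):
    """Estimate exported size and whether it may exceed the load quota.

    `assumed_expansion` is a hypothetical ratio of exported (uncompressed)
    size to the compressed size reported by Amazon Redshift.
    """
    estimated_tb = reported_tb * assumed_expansion
    return estimated_tb, estimated_tb > LOAD_QUOTA_TB


for reported in (4, 6):
    estimated, over = may_exceed_load_quota(reported)
    print(f"reported {reported} TB, estimated export {estimated} TB, over quota: {over}")
```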

Using this service can incur costs outside of Google. For details, review the Amazon Redshift and Amazon S3 pricing pages.

Because of the Amazon S3 consistency model, some files might not be included in the transfer to BigQuery.

What's next

- Learn how to migrate private Amazon Redshift instances with VPC.

- Learn more about the BigQuery Data Transfer Service.

- Migrate SQL code with batch SQL translation.