Load YouTube Channel data into BigQuery

You can load data from YouTube Channel to BigQuery using the BigQuery Data Transfer Service for YouTube Channel connector. With the BigQuery Data Transfer Service, you can schedule recurring transfer jobs that add your latest data from YouTube Channel to BigQuery.

Connector overview

The BigQuery Data Transfer Service for the YouTube Channel connector supports the following options for your data transfer.

| Data transfer options | Support |
|---|---|
| Supported reports | The YouTube Channel connector supports the transfer of data from Channel reports. The YouTube Channel connector supports the June 18, 2018 API version. For information about how YouTube Channel reports are transformed into BigQuery tables and views, see YouTube Channel report transformation. |
| Repeat frequency | The YouTube Channel connector supports daily data transfers. By default, data transfers are scheduled at the time when the data transfer is created. You can configure the time of data transfer when you set up your data transfer. |
| Refresh window | The YouTube Channel connector retrieves YouTube Channel data from up to 1 day at the time the data transfer is run. For more information, see Refresh windows. |
| Backfill data availability | Run a data backfill to retrieve data outside of your scheduled data transfer. You can retrieve data as far back as the data retention policy on your data source allows. YouTube reports containing historical data are available for 30 days from the time that they are generated. (Reports that contain non-historical data are available for 60 days.) For more information, see Historical data. |

Data ingestion from YouTube Channel transfers

When you transfer data from a YouTube Channel into BigQuery, the data is loaded into BigQuery tables that are partitioned by date. The table partition that the data is loaded into corresponds to the date from the data source. If you schedule multiple transfers for the same date, BigQuery Data Transfer Service overwrites the partition for that specific date with the latest data. Multiple transfers in the same day or running backfills don't result in duplicate data, and partitions for other dates are not affected.

Refresh windows

A refresh window is the number of days that a data transfer retrieves data when a data transfer occurs. For example, if the refresh window is three days and a daily transfer occurs, the BigQuery Data Transfer Service retrieves all data from your source table from the past three days. In this example, when a daily transfer occurs, the BigQuery Data Transfer Service creates a new BigQuery destination table partition with a copy of your source table data from the current day, then automatically triggers backfill runs to update the BigQuery destination table partitions with your source table data from the past two days. The automatically triggered backfill runs will either overwrite or incrementally update your BigQuery destination table, depending on whether or not incremental updates are supported in the BigQuery Data Transfer Service connector.

When you run a data transfer for the first time, the data transfer retrieves all source data available within the refresh window. For example, if the refresh window is three days and you run the data transfer for the first time, the BigQuery Data Transfer Service retrieves all source data within three days.

To retrieve data outside the refresh window, such as historical data, or to recover data from any transfer outages or gaps, you can initiate or schedule a backfill run.
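The refresh-window arithmetic described above can be sketched in a few lines of Java. This is an illustration only, not part of the BigQuery Data Transfer Service API: the class and method names (RefreshWindowSketch, partitionDates) are hypothetical, and the sketch assumes that the window counts back from the run date and includes it, as in the three-day example.

```java
import java.time.LocalDate;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper (not a BigQuery API): lists the date partitions
// that a single transfer run would refresh for a given refresh window.
final class RefreshWindowSketch {

  // Returns the run date first, followed by the earlier dates in the window.
  static List<LocalDate> partitionDates(LocalDate runDate, int refreshWindowDays) {
    if (refreshWindowDays < 1) {
      throw new IllegalArgumentException("refreshWindowDays must be at least 1");
    }
    List<LocalDate> dates = new ArrayList<>();
    for (int offset = 0; offset < refreshWindowDays; offset++) {
      dates.add(runDate.minusDays(offset));
    }
    return dates;
  }

  public static void main(String[] args) {
    LocalDate runDate = LocalDate.of(2024, 3, 10);
    // Three-day window: the current day plus two automatically backfilled days.
    System.out.println(partitionDates(runDate, 3));
    // One-day window, the value this connector uses.
    System.out.println(partitionDates(runDate, 1));
  }
}
```

Any date that falls outside the returned list would need a manually initiated or scheduled backfill run.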

Limitations

- The maximum supported file size for each report is 1710 GB.

- The minimum frequency that you can schedule a data transfer for is once every 24 hours. By default, a data transfer starts at the time that you create the transfer. However, you can configure the data transfer start time when you set up your transfer.

- The BigQuery Data Transfer Service does not support incremental data transfers during a YouTube Channel transfer. When you specify a date for a data transfer, all of the data that is available for that date is transferred.

- You cannot create a YouTube Channel data transfer if you are signed in as a federated identity. You can only create a YouTube Channel transfer while signed in using a Google Account.

Before you begin

Before you create a YouTube Channel data transfer:

- Verify that you have completed all actions required to enable the BigQuery Data Transfer Service.

- Create a BigQuery dataset to store the YouTube data.

Required permissions

Creating a YouTube Channel data transfer requires the following:

- YouTube: Ownership of the YouTube Channel

- BigQuery: The following Identity and Access Management (IAM) permissions in BigQuery:

  - bigquery.transfers.update to create the transfer.

  - bigquery.datasets.get and bigquery.datasets.update on the target dataset.

  - If you intend to set up transfer run notifications for Pub/Sub, you must have pubsub.topics.setIamPolicy permissions. Pub/Sub permissions are not required if you just set up email notifications. For more information, see BigQuery Data Transfer Service run notifications.

The bigquery.admin predefined IAM role includes all of the BigQuery permissions that you need to create a YouTube Channel data transfer. For more information about IAM roles in BigQuery, see Predefined roles and permissions.

Set up a YouTube Channel transfer

Setting up a YouTube Channel data transfer requires a:

- Table Suffix: A user-friendly name for the channel provided by you when you set up the data transfer. The suffix is appended to the job ID to create the table name, for example reportTypeId_suffix. The suffix is used to prevent separate transfers from writing to the same tables. The table suffix must be unique across all transfers that load data into the same dataset, and the suffix should be short to minimize the length of the resulting table name.
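As a rough illustration of the naming rule in this bullet, the following Java sketch joins a report type ID and a suffix in the reportTypeId_suffix pattern and checks that a candidate suffix is not already used in the dataset. The class, the method names, and the sample values (channel_basic_a2, MT, CH2) are assumptions made for the example, and the names that the service actually creates may differ in detail.

```java
import java.util.Set;

// Hypothetical helper (not a BigQuery API): illustrates the
// reportTypeId_suffix naming pattern and the suffix uniqueness rule.
final class TableSuffixSketch {

  // Joins a report type ID and a table suffix with an underscore.
  static String tableName(String reportTypeId, String suffix) {
    return reportTypeId + "_" + suffix;
  }

  // Returns true if no other transfer into the same dataset uses the suffix.
  static boolean isSuffixAvailable(Set<String> suffixesInDataset, String candidate) {
    return !suffixesInDataset.contains(candidate);
  }

  public static void main(String[] args) {
    // Example values only; substitute your own report type ID and suffix.
    System.out.println(tableName("channel_basic_a2", "MT"));
    System.out.println(isSuffixAvailable(Set.of("MT"), "MT"));
    System.out.println(isSuffixAvailable(Set.of("MT"), "CH2"));
  }
}
```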

If you use the YouTube Reporting API and have existing reporting jobs, the BigQuery Data Transfer Service loads your report data. If you don't have existing reporting jobs, setting up the transfer automatically enables YouTube reporting jobs.

To create a YouTube Channel data transfer:

Console

Go to the Data transfers page in the Google Cloud console.

Click Create transfer.

On the Create Transfer page:

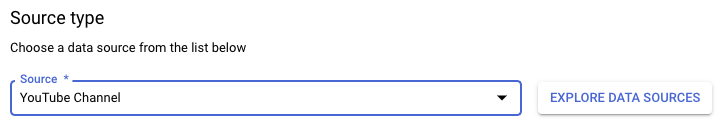

In the Source type section, for Source, choose YouTube Channel.

In the Transfer config name section, for Display name, enter a name for the data transfer such as My Transfer. The transfer name can be any value that lets you identify the transfer if you need to modify it later.

In the Schedule options section:

- For Repeat frequency, choose an option for how often to run the data transfer. If you select Days, provide a valid time in UTC.

- If applicable, select either Start now or Start at set time, and provide a start date and run time.

In the Destination settings section, for Destination dataset, choose the dataset you created to store your data.

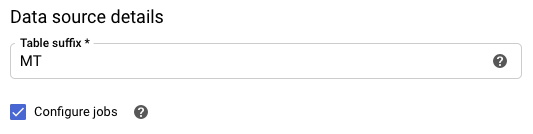

In the Data source details section:

- For Table suffix, enter a suffix such as MT.

- Check the box for Configure jobs to allow BigQuery to manage YouTube reporting jobs for you. If there are YouTube reports that don't yet exist for your account, new reporting jobs are created to enable them.

(Optional) In the Notification options section:

- Click the toggle to enable email notifications. When you enable this option, the transfer administrator receives an email notification when a transfer run fails.

- For Select a Pub/Sub topic, choose your topic name or click Create a topic. This option configures Pub/Sub run notifications for your data transfer.

Click Save.

bq

Enter the bq mk command and supply the transfer creation flag --transfer_config. The following flags are also required:

- --data_source

- --target_dataset

- --display_name

- --params

bq mk \
  --transfer_config \
  --project_id=project_id \
  --target_dataset=dataset \
  --display_name=name \
  --params='parameters' \
  --data_source=data_source

Where:

- project_id is your project ID.

- dataset is the target dataset for the transfer configuration.

- name is the display name for the transfer configuration. The data transfer name can be any value that lets you identify the transfer if you need to modify it later.

- parameters contains the parameters for the created transfer configuration in JSON format. For example: --params='{"param":"param_value"}'. For YouTube Channel data transfers, you must supply the table_suffix parameter. You may optionally set the configure_jobs parameter to true to allow the BigQuery Data Transfer Service to manage YouTube reporting jobs for you. If there are YouTube reports that don't exist for your channel, new reporting jobs are created to enable them.

- data_source is the data source: youtube_channel.

You can also supply the --project_id flag to specify a particular project. If --project_id isn't specified, the default project is used.

For example, the following command creates a YouTube Channel data transfer named My Transfer using table suffix MT, and target dataset mydataset. The data transfer is created in the default project:

bq mk \
  --transfer_config \
  --target_dataset=mydataset \
  --display_name='My Transfer' \
  --params='{"table_suffix":"MT","configure_jobs":"true"}' \
  --data_source=youtube_channel

API

Use the projects.locations.transferConfigs.create method and supply an instance of the TransferConfig resource.
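As a minimal sketch of what such a call might look like over REST, the following Java program builds a TransferConfig JSON body and posts it with the JDK's built-in HTTP client (Java 11 or later). The class name, the method names, and the command-line arguments are hypothetical. The sketch assumes that you already have an OAuth 2.0 access token, that the input values need no JSON escaping, and that the parameter values mirror the bq example above. Depending on how the YouTube data source is authorized, the request may need additional parameters that this sketch does not cover.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical sketch: calls projects.locations.transferConfigs.create over REST.
final class CreateTransferRestSketch {

  // Builds the TransferConfig body. Assumes the inputs need no JSON escaping.
  static String transferConfigJson(String datasetId, String displayName, String tableSuffix) {
    return "{"
        + "\"destinationDatasetId\":\"" + datasetId + "\","
        + "\"displayName\":\"" + displayName + "\","
        + "\"dataSourceId\":\"youtube_channel\","
        + "\"params\":{\"table_suffix\":\"" + tableSuffix + "\",\"configure_jobs\":\"true\"}"
        + "}";
  }

  // Builds the POST request for the given project and location (for example, us).
  static HttpRequest createRequest(
      String projectId, String location, String accessToken, String body) {
    URI uri = URI.create(
        "https://bigquerydatatransfer.googleapis.com/v1/projects/" + projectId
            + "/locations/" + location + "/transferConfigs");
    return HttpRequest.newBuilder(uri)
        .header("Authorization", "Bearer " + accessToken)
        .header("Content-Type", "application/json")
        .POST(HttpRequest.BodyPublishers.ofString(body))
        .build();
  }

  public static void main(String[] args) throws Exception {
    String body = transferConfigJson("mydataset", "My Transfer", "MT");
    if (args.length < 3) {
      // Without arguments, only print the request body; no network call is made.
      System.out.println(body);
      System.out.println("Usage: CreateTransferRestSketch PROJECT_ID LOCATION ACCESS_TOKEN");
      return;
    }
    HttpRequest request = createRequest(args[0], args[1], args[2], body);
    HttpResponse<String> response =
        HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.ofString());
    System.out.println(response.statusCode());
    System.out.println(response.body());
  }
}
```

For production use, the client library described in the next section is usually a better fit because it handles authentication and request encoding for you.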

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Query your data

When your data is transferred to BigQuery, the data is written to ingestion-time partitioned tables. For more information, see Introduction to partitioned tables.

If you query your tables directly instead of using the auto-generated views, you must use the _PARTITIONTIME pseudocolumn in your query. For more information, see Querying partitioned tables.
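To show how the _PARTITIONTIME filter might look, the following Java sketch assembles a query string for a single daily partition. The class name, the method name, and the project, dataset, and table names (my-project, mydataset, my_report_table) are placeholders rather than names that the transfer creates. The sketch assumes daily partitions, for which _PARTITIONTIME holds midnight UTC of the partition date.

```java
import java.time.LocalDate;

// Hypothetical helper: builds a query that reads one daily partition
// of an ingestion-time partitioned table.
final class PartitionQuerySketch {

  // LocalDate is rendered in ISO format (yyyy-MM-dd) when concatenated.
  static String dailyPartitionQuery(
      String project, String dataset, String table, LocalDate day) {
    return "SELECT *\n"
        + "FROM `" + project + "." + dataset + "." + table + "`\n"
        + "WHERE _PARTITIONTIME = TIMESTAMP('" + day + "')";
  }

  public static void main(String[] args) {
    // Placeholder names; replace them with your own project, dataset, and table.
    System.out.println(
        dailyPartitionQuery("my-project", "mydataset", "my_report_table",
            LocalDate.of(2024, 3, 10)));
  }
}
```

You can run the printed statement in the BigQuery console or pass it to any BigQuery client.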

Troubleshoot YouTube Channel transfer setup

If you are having issues setting up your data transfer, see YouTube transfer issues in Troubleshooting transfer configurations.