run method to run the script.

In this topic, you create the training script and then specify the command arguments for it.

Create a training script

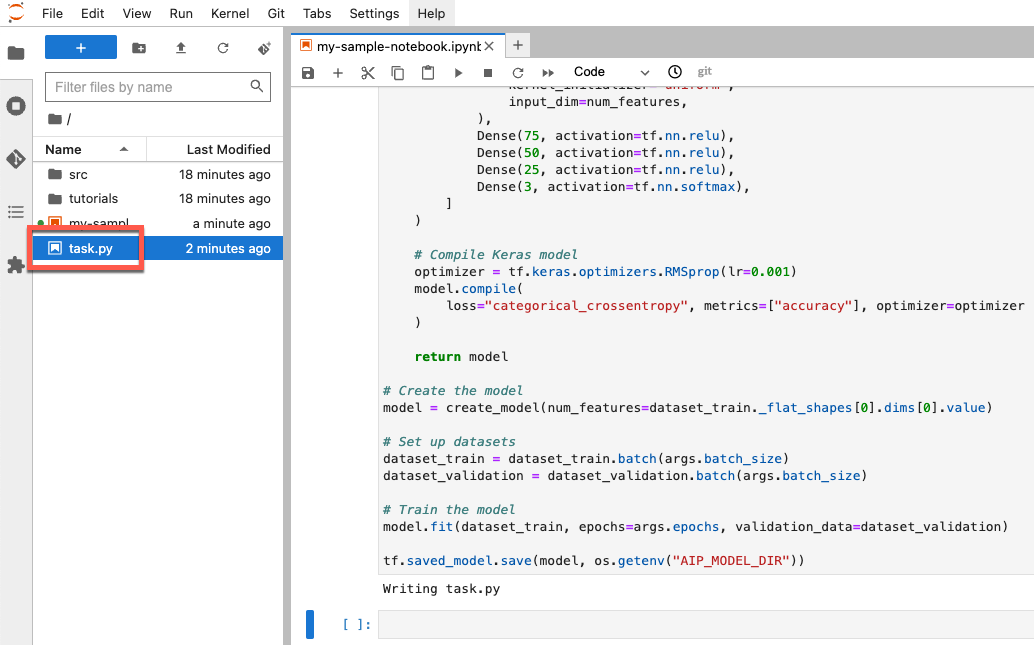

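In this section, you create a training script. The script is a new file named task.py in your notebook environment. Later in this tutorial, you pass this script to the aiplatform.CustomTrainingJob constructor. When the script runs, it does the following:

- Loads the data from the BigQuery dataset that you created.
- Uses the TensorFlow Keras API to build, compile, and train your model.
- Specifies the number of epochs and the batch size to use when the Keras Model.fit method is called.
- Specifies where to save model artifacts by using the AIP_MODEL_DIR environment variable. AIP_MODEL_DIR is set by Vertex AI and contains the URI of a directory for saving model artifacts. For more information, see Environment variables for special Cloud Storage directories.
- Exports a TensorFlow SavedModel to the model directory. For more information, see Using the SavedModel format on the TensorFlow website.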
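Because AIP_MODEL_DIR is set by Vertex AI, it is usually absent when the script is tried out anywhere else, and os.getenv then returns None. The following is a minimal sketch of a fallback, not part of the tutorial's script. The helper name resolve_model_dir and the default directory model_output are hypothetical.

```python
import os


def resolve_model_dir(default: str = "model_output") -> str:
    """Return the directory where model artifacts should be saved.

    Vertex AI sets AIP_MODEL_DIR for custom training jobs. When the
    variable is missing or empty (for example, in a local test run),
    fall back to `default`, which is a hypothetical local directory.
    """
    return os.environ.get("AIP_MODEL_DIR") or default
```

A script could then pass resolve_model_dir() as the export path instead of reading the environment variable directly.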

To create your training script, run the following code in your notebook:

%%writefile task.py

import argparse
import numpy as np
import os
import pandas as pd
import tensorflow as tf

from google.cloud import bigquery
from google.cloud import storage

# Read environment variables
training_data_uri = os.getenv("AIP_TRAINING_DATA_URI")
validation_data_uri = os.getenv("AIP_VALIDATION_DATA_URI")
test_data_uri = os.getenv("AIP_TEST_DATA_URI")

# Read args
parser = argparse.ArgumentParser()
parser.add_argument('--label_column', required=True, type=str)
parser.add_argument('--epochs', default=10, type=int)
parser.add_argument('--batch_size', default=10, type=int)
args = parser.parse_args()

# Set up training variables
LABEL_COLUMN = args.label_column

# See https://cloud.google.com/vertex-ai/docs/workbench/managed/executor#explicit-project-selection for issues regarding permissions.
PROJECT_NUMBER = os.environ["CLOUD_ML_PROJECT_ID"]
bq_client = bigquery.Client(project=PROJECT_NUMBER)


# Download a table
def download_table(bq_table_uri: str):
    # Remove bq:// prefix if present
    prefix = "bq://"
    if bq_table_uri.startswith(prefix):
        bq_table_uri = bq_table_uri[len(prefix) :]

    # Download the BigQuery table as a dataframe
    # This requires the "BigQuery Read Session User" role on the custom training service account.
    table = bq_client.get_table(bq_table_uri)
    return bq_client.list_rows(table).to_dataframe()


# Download dataset splits
df_train = download_table(training_data_uri)
df_validation = download_table(validation_data_uri)
df_test = download_table(test_data_uri)


def convert_dataframe_to_dataset(
    df_train: pd.DataFrame,
    df_validation: pd.DataFrame,
):
    # Split off the label column; pop() removes it from the feature dataframe
    df_train_x, df_train_y = df_train, df_train.pop(LABEL_COLUMN)
    df_validation_x, df_validation_y = df_validation, df_validation.pop(LABEL_COLUMN)

    y_train = tf.convert_to_tensor(np.asarray(df_train_y).astype("float32"))
    y_validation = tf.convert_to_tensor(np.asarray(df_validation_y).astype("float32"))

    # Convert to numpy representation
    x_train = tf.convert_to_tensor(np.asarray(df_train_x).astype("float32"))
    x_validation = tf.convert_to_tensor(np.asarray(df_validation_x).astype("float32"))

    # Convert to one-hot representation
    num_species = len(df_train_y.unique())
    y_train = tf.keras.utils.to_categorical(y_train, num_classes=num_species)
    y_validation = tf.keras.utils.to_categorical(y_validation, num_classes=num_species)

    dataset_train = tf.data.Dataset.from_tensor_slices((x_train, y_train))
    dataset_validation = tf.data.Dataset.from_tensor_slices((x_validation, y_validation))
    return (dataset_train, dataset_validation)


# Create datasets
dataset_train, dataset_validation = convert_dataframe_to_dataset(df_train, df_validation)

# Shuffle train set
dataset_train = dataset_train.shuffle(len(df_train))


def create_model(num_features):
    # Create model
    Dense = tf.keras.layers.Dense
    model = tf.keras.Sequential(
        [
            Dense(
                100,
                activation=tf.nn.relu,
                kernel_initializer="uniform",
                input_dim=num_features,
            ),
            Dense(75, activation=tf.nn.relu),
            Dense(50, activation=tf.nn.relu),
            Dense(25, activation=tf.nn.relu),
            Dense(3, activation=tf.nn.softmax),
        ]
    )

    # Compile Keras model
    optimizer = tf.keras.optimizers.RMSprop(learning_rate=0.001)
    model.compile(
        loss="categorical_crossentropy", metrics=["accuracy"], optimizer=optimizer
    )
    return model


# Create the model; the feature count is the length of one (unbatched) feature vector
model = create_model(num_features=dataset_train.element_spec[0].shape[0])

# Set up datasets
dataset_train = dataset_train.batch(args.batch_size)
dataset_validation = dataset_validation.batch(args.batch_size)

# Train the model
model.fit(dataset_train, epochs=args.epochs, validation_data=dataset_validation)

# Export the trained model to the directory that Vertex AI provides
tf.saved_model.save(model, os.getenv("AIP_MODEL_DIR"))

After you create the script, it appears in the root folder of your notebook.
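The script one-hot encodes the label column with tf.keras.utils.to_categorical before it trains with the categorical_crossentropy loss. The following pure-Python sketch is not the Keras implementation. It only illustrates what that encoding produces, assuming the labels are already integer class indices from 0 to num_classes - 1. The function name one_hot and the sample labels are hypothetical.

```python
def one_hot(labels, num_classes):
    """Map integer class indices to one-hot rows (illustration only)."""
    rows = []
    for label in labels:
        index = int(label)  # labels may arrive as floats such as 2.0
        if not 0 <= index < num_classes:
            raise ValueError(f"label {label} is outside 0..{num_classes - 1}")
        row = [0.0] * num_classes
        row[index] = 1.0
        rows.append(row)
    return rows


# Hypothetical labels for three classes
for row in one_hot([0.0, 2.0, 1.0], num_classes=3):
    print(row)
# [1.0, 0.0, 0.0]
# [0.0, 0.0, 1.0]
# [0.0, 1.0, 0.0]
```

Each row has one position per class, which is why the final Dense layer in the script has one output unit per class.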

Define arguments for your training script

You pass the following command-line arguments to your training script:

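- label_column: Identifies the column in your data that contains what you want to predict. In this case, that column is species. You defined this in a variable named LABEL_COLUMN when you processed your data. For more information, see Download, preprocess, and split the data.
- epochs: The number of epochs used when you train your model. An epoch is one complete pass over the training data. This tutorial uses 20 epochs.
- batch_size: The number of samples that are processed before your model updates. This tutorial uses a batch size of 10.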
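To see how epochs and batch_size interact, it can help to count the model updates they imply. The sketch below assumes that the last partial batch is kept, so the number of updates per epoch is rounded up. The sample count of 205 is hypothetical and is not taken from the tutorial's dataset.

```python
import math


def updates_per_epoch(num_samples: int, batch_size: int) -> int:
    """Number of batches (and so model updates) in one pass over the data."""
    return math.ceil(num_samples / batch_size)


def total_updates(num_samples: int, batch_size: int, epochs: int) -> int:
    """Number of model updates over the whole training run."""
    return updates_per_epoch(num_samples, batch_size) * epochs


num_samples = 205  # hypothetical training-set size
print(updates_per_epoch(num_samples, batch_size=10))         # 21
print(total_updates(num_samples, batch_size=10, epochs=20))  # 420
```

A smaller batch size gives more updates per epoch, and more epochs repeat those updates over the same data.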

To define the arguments that are passed to your script, run the following code:

JOB_NAME = "custom_job_unique"

EPOCHS = 20
BATCH_SIZE = 10

CMDARGS = [
    "--label_column=" + LABEL_COLUMN,
    "--epochs=" + str(EPOCHS),
    "--batch_size=" + str(BATCH_SIZE),
]
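As a quick check, the argument list can be parsed locally with the same argparse definitions that task.py uses. This is a sketch for illustration: build_parser is a hypothetical helper name, and LABEL_COLUMN is set to "species" here on the assumption that it holds the value described above.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Recreate the argument definitions from task.py."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--label_column", required=True, type=str)
    parser.add_argument("--epochs", default=10, type=int)
    parser.add_argument("--batch_size", default=10, type=int)
    return parser


LABEL_COLUMN = "species"  # assumed value, as described in the tutorial
EPOCHS = 20
BATCH_SIZE = 10

CMDARGS = [
    "--label_column=" + LABEL_COLUMN,
    "--epochs=" + str(EPOCHS),
    "--batch_size=" + str(BATCH_SIZE),
]

# Parse the list exactly as the script would parse its command line
args = build_parser().parse_args(CMDARGS)
print(args.label_column, args.epochs, args.batch_size)  # species 20 10
```

Because --epochs and --batch_size have defaults of 10 in the script, omitting them from CMDARGS would train for 10 epochs instead of 20, while omitting --label_column makes argparse exit with an error.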