For logging purposes, use the Vertex AI SDK for Python.

Supported metrics and parameters:

- summary metrics

- time series metrics

- parameters

- classification metrics

Vertex AI SDK for Python

Note: When the optional resume parameter is specified as TRUE, the previously started run resumes. When not specified, resume defaults to FALSE and a new run is created.

The samples on this page use the init method from the aiplatform package.
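As a minimal sketch of how init and the resume parameter fit together, the helper below sets the experiment context and then either creates or resumes a run. The helper name start_or_resume_run and its arguments are illustrative assumptions, not part of the SDK; only init and start_run are SDK calls.

```python
def start_or_resume_run(
    experiment_name: str,
    run_name: str,
    project: str,
    location: str,
    resume: bool = False,
):
    """Set the experiment context, then create or resume a run (illustrative helper)."""
    # Imported inside the function so this file can be loaded without the SDK installed.
    from google.cloud import aiplatform

    # init sets the experiment, project, and location used by later calls.
    aiplatform.init(experiment=experiment_name, project=project, location=location)

    # resume=False (the default) creates a new run;
    # resume=True continues a previously started run with the same name.
    return aiplatform.start_run(run=run_name, resume=resume)
```

Calling start_or_resume_run("my-experiment", "my-run", "my-project", "us-central1", resume=True) would continue an existing run named my-run; all four values are placeholders.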

Summary metrics

Summary metrics are single-value scalar metrics that are stored next to time series metrics and represent a final summary of an experiment run.

One example use case is early stopping, where a patience configuration allows training to continue but the candidate model is restored from an earlier step. The metrics calculated for the model at that step are represented as summary metrics, because the latest time series metric is not representative of the restored model. Use the log_metrics API to log summary metrics.
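The early stopping case can be illustrated with a small, SDK-free sketch. It assumes a simple patience rule (stop after a fixed number of consecutive steps without improvement in validation loss); the function name, the loss values, and the metric keys are made up for illustration.

```python
from typing import List


def best_step_with_patience(val_losses: List[float], patience: int) -> int:
    """Return the index of the step that early stopping would restore.

    Training stops once `patience` consecutive steps fail to improve on the
    best validation loss seen so far; the best step is the one restored.
    """
    if not val_losses:
        raise ValueError("val_losses must not be empty")
    best_step = 0
    stale = 0  # consecutive steps without improvement
    for step in range(1, len(val_losses)):
        if val_losses[step] < val_losses[best_step]:
            best_step, stale = step, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_step


# Hypothetical per-step validation losses (these would be time series metrics).
history = [0.90, 0.70, 0.55, 0.60, 0.58, 0.61]
best = best_step_with_patience(history, patience=3)

# The restored model is described by the metrics at the best step,
# not by the latest time series value (history[-1]).
summary_metrics = {"val_loss": history[best], "restored_step": best}

# With an active experiment run, these would be logged as summary metrics:
# aiplatform.log_metrics(summary_metrics)
```

Here the last logged loss is 0.61, while the restored model corresponds to step 2 with a loss of 0.55, which is why the summary metric is logged separately.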

Python

- experiment_name: Provide a name for your experiment. You can find your list of experiments in the Google Cloud console by selecting Experiments in the section nav.

- run_name: Specify a run name (see start_run).

- metrics: Metrics key-value pairs. For example: {'accuracy': 0.9}

- project: Your project ID. You can find it on the Google Cloud console welcome page.

- location: See List of available locations.
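The parameters above can be combined along the following lines. This is a sketch under the assumption that a new run is being created; the function name log_metrics_sample is illustrative, and the import is placed inside the function only so the snippet loads without the SDK installed.

```python
from typing import Dict


def log_metrics_sample(
    experiment_name: str,
    run_name: str,
    metrics: Dict[str, float],
    project: str,
    location: str,
) -> None:
    """Log summary metrics to a newly created experiment run."""
    from google.cloud import aiplatform

    aiplatform.init(experiment=experiment_name, project=project, location=location)

    # Creates a new run; pass resume=True instead to continue an existing one.
    aiplatform.start_run(run=run_name)

    # Each key-value pair is stored as a summary metric of the run.
    aiplatform.log_metrics(metrics)
```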

Time series metrics

To log time series metrics, Vertex AI Experiments requires a backing Vertex AI TensorBoard instance.

Assign backing Vertex AI TensorBoard resource for Time Series Metric Logging.

All metrics logged through log_time_series_metrics are stored as time series metrics. Vertex AI TensorBoard is the backing time series metric store.

The experiment_tensorboard can be set at both the experiment and experiment run levels. Setting the experiment_tensorboard at the run level overrides the setting at the experiment level. Once the experiment_tensorboard is set in a run, the run's experiment_tensorboard can't be changed.

- Set experiment_tensorboard at experiment level:

  aiplatform.init(experiment='my-experiment', experiment_tensorboard='projects/.../tensorboard/my-tb-resource')

- Set experiment_tensorboard at run level. Note: Overrides setting at experiment level.

  aiplatform.start_run(run='my-other-run', tensorboard='projects/.../.../other-resource')

  aiplatform.log_time_series_metrics(...)

Python

- experiment_name: Provide the name of your experiment. You can find your list of experiments in the Google Cloud console by selecting Experiments in the section nav.

- run_name: Specify a run name (see start_run).

- metrics: Dictionary where keys are metric names and values are metric values.

- step: Optional. Step index of this data point within the run.

- wall_time: Optional. Wall clock timestamp when this data point is generated by the end user. If not provided, wall_time is generated based on the value from time.time().

- project: Your project ID. You can find it on the Google Cloud console welcome page.

- location: See List of available locations.
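A typical use of these parameters is logging one data point per training step. The sketch below assumes a new run and an existing TensorBoard resource name supplied by the caller; the function name log_training_curve and its arguments are illustrative, and wall_time is left to its default.

```python
from typing import Dict, Iterable


def log_training_curve(
    experiment_name: str,
    run_name: str,
    tensorboard: str,
    per_step_metrics: Iterable[Dict[str, float]],
    project: str,
    location: str,
) -> None:
    """Log one set of time series metrics per training step."""
    from google.cloud import aiplatform

    # The backing TensorBoard is assigned at the experiment level here.
    aiplatform.init(
        experiment=experiment_name,
        experiment_tensorboard=tensorboard,
        project=project,
        location=location,
    )
    aiplatform.start_run(run=run_name)

    # Pass the step explicitly so each data point lands at a known index.
    for step, metrics in enumerate(per_step_metrics, start=1):
        aiplatform.log_time_series_metrics(metrics, step=step)

    aiplatform.end_run()
```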

Step and wall_time

The log_time_series_metrics API optionally accepts step and wall_time.

- step: Optional. Step index of this data point within the run. If not provided, an increment over the latest step among all time series metrics already logged is used. If the step exists for any of the provided metric keys, the step is overwritten.

- wall_time: Optional. The seconds after epoch of the logged metric. If this is not provided, the default is Python's time.time.

For example:

aiplatform.log_time_series_metrics({"mse": 2500.00, "rmse": 50.00})

Log to a specific step

aiplatform.log_time_series_metrics({"mse": 2500.00, "rmse": 50.00}, step=8)

Include wall_time

aiplatform.log_time_series_metrics({"mse": 2500.00, "rmse": 50.00}, step=10, wall_time=wall_time)
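The defaulting rules for step and wall_time can be made concrete with a toy in-memory model. This is not the SDK's implementation: the class TimeSeriesStore is hypothetical, and starting at step 1 when nothing has been logged yet is an assumption made for the illustration.

```python
import time
from typing import Dict, Optional, Tuple


class TimeSeriesStore:
    """Toy in-memory stand-in for a time series metric store (not the SDK)."""

    def __init__(self) -> None:
        # metric name -> {step: (value, wall_time)}
        self.series: Dict[str, Dict[int, Tuple[float, float]]] = {}

    def latest_step(self) -> int:
        """Latest step across all metrics logged so far (0 if none)."""
        steps = [s for points in self.series.values() for s in points]
        return max(steps, default=0)

    def log(
        self,
        metrics: Dict[str, float],
        step: Optional[int] = None,
        wall_time: Optional[float] = None,
    ) -> int:
        if step is None:
            # Default: one past the latest step among all metrics.
            step = self.latest_step() + 1
        if wall_time is None:
            # Default: current time in seconds since the epoch.
            wall_time = time.time()
        for name, value in metrics.items():
            # An existing point at the same step is overwritten.
            self.series.setdefault(name, {})[step] = (value, wall_time)
        return step


store = TimeSeriesStore()
first = store.log({"mse": 2500.0, "rmse": 50.0})    # no step given
store.log({"mse": 1600.0}, step=8)                  # explicit step
following = store.log({"rmse": 40.0})               # continues after step 8
store.log({"mse": 1500.0}, step=8, wall_time=0.0)   # overwrites mse at step 8
```

Note that the default step for rmse continues after step 8 even though only mse was logged there, because the rule looks at the latest step across all time series metrics.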

Parameters

Parameters are keyed input values that configure a run, regulate the behavior of the run, and affect the results of the run. Examples include learning rate, dropout rate, and number of training steps. Log parameters using the log_params method.

Python

aiplatform.log_params({"learning_rate": 0.01, "n_estimators": 10})

- experiment_name: Provide a name for your experiment. You can find your list of experiments in the Google Cloud console by selecting Experiments in the section nav.

- run_name: Specify a run name (see start_run).

- params: Parameter key-value pairs. For example: {'learning_rate': 0.1} (see log_params).

- project: Your project ID. You can find it on the Google Cloud console welcome page.

- location: See List of available locations.

Classification metrics

In addition to summary metrics and time series metrics, confusion matrices and ROC curves are commonly used metrics. They can be logged to Vertex AI Experiments using the log_classification_metrics API.

Python

- experiment_name: Provide a name for your experiment. You can find your list of experiments in the Google Cloud console by selecting Experiments in the section nav.

- run_name: Specify a run name (see start_run).

- project: Your project ID. You can find it on the Google Cloud console welcome page.

- location: See List of available locations.

- labels: List of label names for the confusion matrix. Must be set if 'matrix' is set.

- matrix: Values for the confusion matrix. Must be set if 'labels' is set.

- fpr: List of false positive rates for the ROC curve. Must be set if 'tpr' or 'threshold' is set.

- tpr: List of true positive rates for the ROC curve. Must be set if 'fpr' or 'threshold' is set.

- threshold: List of thresholds for the ROC curve. Must be set if 'fpr' or 'tpr' is set.

- display_name: The user-defined name for the classification metric artifact.
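To show what the labels, matrix, fpr, tpr, and threshold values might look like, the following SDK-free sketch computes them from made-up predictions. The helper names, the sample data, and the row and column orientation of the matrix (rows as true labels, columns as predicted labels) are assumptions for illustration; check the API reference for the orientation it expects.

```python
from typing import List, Sequence, Tuple


def confusion_matrix(
    y_true: Sequence[str], y_pred: Sequence[str], labels: List[str]
) -> List[List[int]]:
    """Count predictions; rows are true labels, columns are predicted labels."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for truth, pred in zip(y_true, y_pred):
        matrix[index[truth]][index[pred]] += 1
    return matrix


def roc_points(
    y_true: Sequence[int], scores: Sequence[float], thresholds: Sequence[float]
) -> Tuple[List[float], List[float]]:
    """Return (fpr, tpr), one entry per threshold, for binary labels 0 and 1."""
    positives = sum(y_true)
    negatives = len(y_true) - positives
    if positives == 0 or negatives == 0:
        raise ValueError("y_true must contain both classes")
    fpr, tpr = [], []
    for t in thresholds:
        # A sample is predicted positive when its score reaches the threshold.
        tp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 0)
        tpr.append(tp / positives)
        fpr.append(fp / negatives)
    return fpr, tpr


# Made-up data for illustration.
labels = ["cat", "dog"]
matrix = confusion_matrix(
    ["cat", "cat", "dog", "dog"], ["cat", "dog", "dog", "dog"], labels
)
thresholds = [0.0, 0.5, 1.0]
fpr, tpr = roc_points([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1], thresholds)

# labels/matrix are set together, and fpr/tpr/threshold are set together.
kwargs = dict(
    labels=labels,
    matrix=matrix,
    fpr=fpr,
    tpr=tpr,
    threshold=thresholds,
    display_name="my-classification-metrics",  # hypothetical name
)

# With an active experiment run:
# aiplatform.log_classification_metrics(**kwargs)
```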

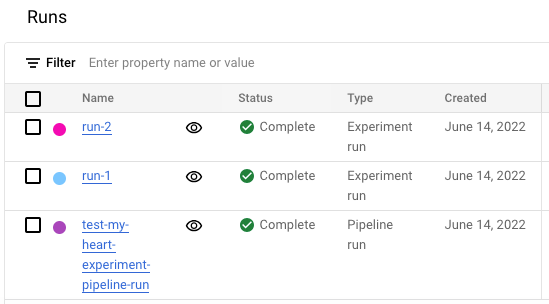

View experiment runs list in the Google Cloud console

- In the Google Cloud console, go to the Experiments page.

  Go to Experiments

  A list of experiments appears.

- Select the experiment that you want to check.

  A list of runs appears.

For more details, see Compare and analyze runs.