Connect to Amazon S3

As a BigQuery administrator, you can create a connection to let data analysts access data stored in Amazon Simple Storage Service (Amazon S3) buckets.

BigQuery Omni accesses Amazon S3 data through connections. Each connection has its unique Amazon Web Services (AWS) Identity and Access Management (IAM) user. You grant permissions to users through AWS IAM roles. The policies within the AWS IAM roles determine what data BigQuery can access for each connection.

Connections are required to query the Amazon S3 data and export query results from BigQuery to your Amazon S3 bucket.

Before you begin

Ensure that you've created the following resources:

- A Google Cloud project with BigQuery Connection API enabled.

- If you are on the capacity-based pricing model, then ensure that you have enabled BigQuery Reservation API for your project. For information about pricing, see BigQuery Omni pricing.

- An AWS account with permissions to modify IAM policies in AWS.

Required roles

To get the permissions that

you need to create a connection to access Amazon S3 data,

ask your administrator to grant you the

BigQuery Connection Admin (roles/bigquery.connectionAdmin)

IAM role on the project.

For more information about granting roles, see Manage access to projects, folders, and organizations.

You might also be able to get the required permissions through custom roles or other predefined roles.

Create an AWS IAM policy for BigQuery

Ensure that you follow security best practices for Amazon S3. We recommend that you do the following:

- Set up an AWS policy that prevents access to your Amazon S3 bucket through HTTP.

- Set up an AWS policy that prevents public access to your Amazon S3 bucket.

- Use Amazon S3 server-side encryption.

- Limit permissions granted to the Google Account to the required minimum.

- Set up CloudTrails and enable Amazon S3 data events.

To create an AWS IAM policy, use the AWS console or Terraform:

AWS console

Go to the AWS IAM console. Ensure that you're in the account that owns the Amazon S3 bucket that you want to access.

Select Policies > Create policy (opens in a new tab).

Click JSON and paste the following into the editor:

{ "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Action": [ "s3:ListBucket" ], "Resource": [ "arn:aws:s3:::BUCKET_NAME" ] }, { "Effect": "Allow", "Action": [ "s3:GetObject", EXPORT_PERM ], "Resource": [ "arn:aws:s3:::BUCKET_NAME", "arn:aws:s3:::BUCKET_NAME/*" ] } ] }

Replace the following:

BUCKET_NAME: the Amazon S3 bucket that you want BigQuery to access.EXPORT_PERM(optional): additional permission if you want to export data to an Amazon S3 bucket. Replace with"s3:PutObject"- To separate export access control, we recommend that you create another connection with a separate AWS IAM role and grant the role write-only access. For more granular access control, you can also limit a role's access to a specific path of the bucket.

In the Name field, enter a policy name, such as

bq_omni_read_only.Click Create policy.

Your policy is created with an Amazon Resource Name (ARN) in the following format:

arn:aws:iam::AWS_ACCOUNT_ID:policy/POLICY_NAME

Replace the following:

AWS_ACCOUNT_ID: the ID number of the connection's AWS IAM user.POLICY_NAME: the policy name you chose.

AWS CLI

To create an AWS IAM policy, use the

aws iam create-policy command:

aws iam create-policy \ --policy-name POLICY_NAME \ --policy-document '{ "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Action": [ "s3:ListBucket" ], "Resource": [ "arn:aws:s3:::BUCKET_NAME" ] }, { "Effect": "Allow", "Action": [ "s3:GetObject", EXPORT_PERM ], "Resource": [ "arn:aws:s3:::BUCKET_NAME", "arn:aws:s3:::BUCKET_NAME/*" ] } ] }'

Replace the following:

POLICY_NAME: the name of the policy you are creating.BUCKET_NAME: the Amazon S3 bucket that you want BigQuery to access.EXPORT_PERM(optional): additional permission if you want to export data to an Amazon S3 bucket. Replace with"s3:PutObject"- To separate export access control, we recommend that you create another connection with a separate AWS IAM role and grant the role write-only access. For more granular access control, you can also limit a role's access to a specific path of the bucket.

Your policy is created with an Amazon Resource Name (ARN) in the following format:

arn:aws:iam::AWS_ACCOUNT_ID:policy/POLICY_NAME

Replace the following:

AWS_ACCOUNT_ID: the ID number of the connection's AWS IAM user.POLICY_NAME: the policy name you chose.

Terraform

Add the following to your Terraform config to attach a policy to an Amazon S3 bucket resource:

resource "aws_iam_policy" "bigquery-omni-connection-policy" { name = "bigquery-omni-connection-policy" policy = <<-EOF { "Version": "2012-10-17", "Statement": [ { "Sid": "BucketLevelAccess", "Effect": "Allow", "Action": ["s3:ListBucket"], "Resource": ["arn:aws:s3:::BUCKET_NAME"] }, { "Sid": "ObjectLevelAccess", "Effect": "Allow", "Action": ["s3:GetObject",EXPORT_PERM], "Resource": [ "arn:aws:s3:::BUCKET_NAME", "arn:aws:s3:::BUCKET_NAME/*" ] } ] } EOF }

Replace the following:

BUCKET_NAME: the Amazon S3 bucket that you want BigQuery to access.EXPORT_PERM(optional): additional permission if you want to export data to an Amazon S3 bucket. Replace with"s3:PutObject"- To separate export access control, we recommend that you create another connection with a separate AWS IAM role and grant the role write-only access. For more granular access control, you can also limit a role's access to a specific path of the bucket.

Create an AWS IAM role for BigQuery

Next, create a role that allows access to the Amazon S3 bucket from within BigQuery. This role uses the policy that you created in the previous section.

To create an AWS IAM role, use the AWS console or Terraform:

AWS console

Go to the AWS IAM console. Ensure that you're in the account that owns the Amazon S3 bucket that you want to access.

Select Roles > Create role.

For Select type of trusted entity, select Web Identity.

For Identity Provider, select Google.

For Audience, enter

00000as a placeholder value. You'll replace the value later.Click Next: Permissions.

To grant the role access to your Amazon S3 data, attach an IAM policy to the role. Search for the policy that you created in the previous section, and click the toggle.

Click Next: Tags.

Click Next: Review. Enter a name for the role, such as

BQ_Read_Only.Click Create role.

AWS CLI

Use the following command to create an IAM role and assign the policy to the role created:

aws iam create-role \ --role-name bigquery-omni-connection \ --max-session-duration 43200 \ --assume-role-policy-document '{ "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "accounts.google.com" }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "accounts.google.com:sub": "00000" } } } ] }'

Terraform

Add the following to your Terraform config to create an IAM role and assign the policy to the role created:

resource "aws_iam_role" "bigquery-omni-connection-role" { name = "bigquery-omni-connection" max_session_duration = 43200 assume_role_policy = <<-EOF { "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "accounts.google.com" }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "accounts.google.com:sub": "00000" } } } ] } EOF } resource "aws_iam_role_policy_attachment" "bigquery-omni-connection-role-attach" { role = aws_iam_role.bigquery-omni-connection-role.name policy_arn = aws_iam_policy.bigquery-omni-connection-policy.arn } output "bigquery_omni_role" { value = aws_iam_role.bigquery-omni-connection-role.arn }

Then, attach the policy to the role:

aws iam attach-role-policy \ --role-name bigquery-omni-connection \ --policy-arn arn:aws:iam::AWS_ACCOUNT_ID:policy/POLICY_NAME

Replace the following:

AWS_ACCOUNT_ID: the ID number of the connection's AWS IAM user.POLICY_NAME: the policy name you chose.

Create connections

To connect to your Amazon S3 bucket, use the Google Cloud console, the bq command-line tool, or the client library:

Console

Go to the BigQuery page.

In the Explorer pane, click Add data.

The Add data dialog opens.

In the Filter By pane, in the Data Source Type section, select Storage/Data Lakes.

Alternatively, in the Search for data sources field, you can enter

awsorAmazon S3.In the Featured data sources section, click Amazon S3.

Click the Amazon S3 Omni: BigQuery Federation solution card.

In the Create table dialog, in the Connection ID field, select Create a new S3 connection.

In the External data source pane, enter the following information:

- For Connection type, select BigLake on AWS (via BigQuery Omni).

- For Connection ID, enter an identifier for the connection resource. You can use letters, numbers, dashes, and underscores.

- For Region, select the location where you want to create the connection.

- Optional: For Friendly name, enter a user-friendly name for

the connection, such as

My connection resource. The friendly name can be any value that helps you identify the connection resource if you need to modify it later. - Optional: For Description, enter a description for this connection resource.

- For AWS role id, enter the full IAM role ID that

you created in this format:

arn:aws:iam::AWS_ACCOUNT_ID:role/ROLE_NAME

Click Create connection.

Click Go to connection.

In the Connection info pane, copy the BigQuery Google identity. This is a Google principal that is specific to each connection. Example:

BigQuery Google identity: IDENTITY_ID

Terraform

resource "google_bigquery_connection" "connection" { connection_id = "bigquery-omni-aws-connection" friendly_name = "bigquery-omni-aws-connection" description = "Created by Terraform" location = "AWS_LOCATION" aws { access_role { # This must be constructed as a string instead of referencing the # AWS resources directly to avoid a resource dependency cycle # in Terraform. iam_role_id = "arn:aws:iam::AWS_ACCOUNT:role/IAM_ROLE_NAME" } } }

Replace the following:

AWS_LOCATION: an Amazon S3 location in Google CloudAWS_ACCOUNT: your AWS account ID.IAM_ROLE_NAME: the role that allows access to the Amazon S3 bucket from BigQuery. Use the value of thenameargument from theaws_iam_roleresource in Create an AWS IAM role for BigQuery.

bq

bq mk --connection --connection_type='AWS' \ --iam_role_id=arn:aws:iam::AWS_ACCOUNT_ID:role/ROLE_NAME \ --location=AWS_LOCATION \ CONNECTION_ID

Replace the following:

AWS_ACCOUNT_ID: the ID number of the connection's AWS IAM userROLE_NAME: the role policy name you choseAWS_LOCATION: an Amazon S3 location in Google CloudCONNECTION_ID: the ID that you give this connection resource.

The command line shows the following output:

Identity: IDENTITY_ID

The output contains the following:

IDENTITY_ID: a Google principal that Google Cloud controls that is specific to each connection.

Take note of the IDENTITY_ID value.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

Add a trust relationship to the AWS role

BigQuery Omni provides two methods for securely accessing data

from Amazon S3.

You can either grant the Google Cloud service account access to your

AWS role, or if your AWS account has a

custom identity provider

for accounts.google.com, then you must add the Google Cloud service

account as an audience to the provider:

Add a trust policy to the AWS role

The trust relationship lets the connection assume the role and access the Amazon S3 data as specified in the roles policy.

To add a trust relationship, use the AWS console or Terraform:

AWS console

Go to the AWS IAM console. Ensure that you're in the account that owns the Amazon S3 bucket that you want to access.

Select Roles.

Select the

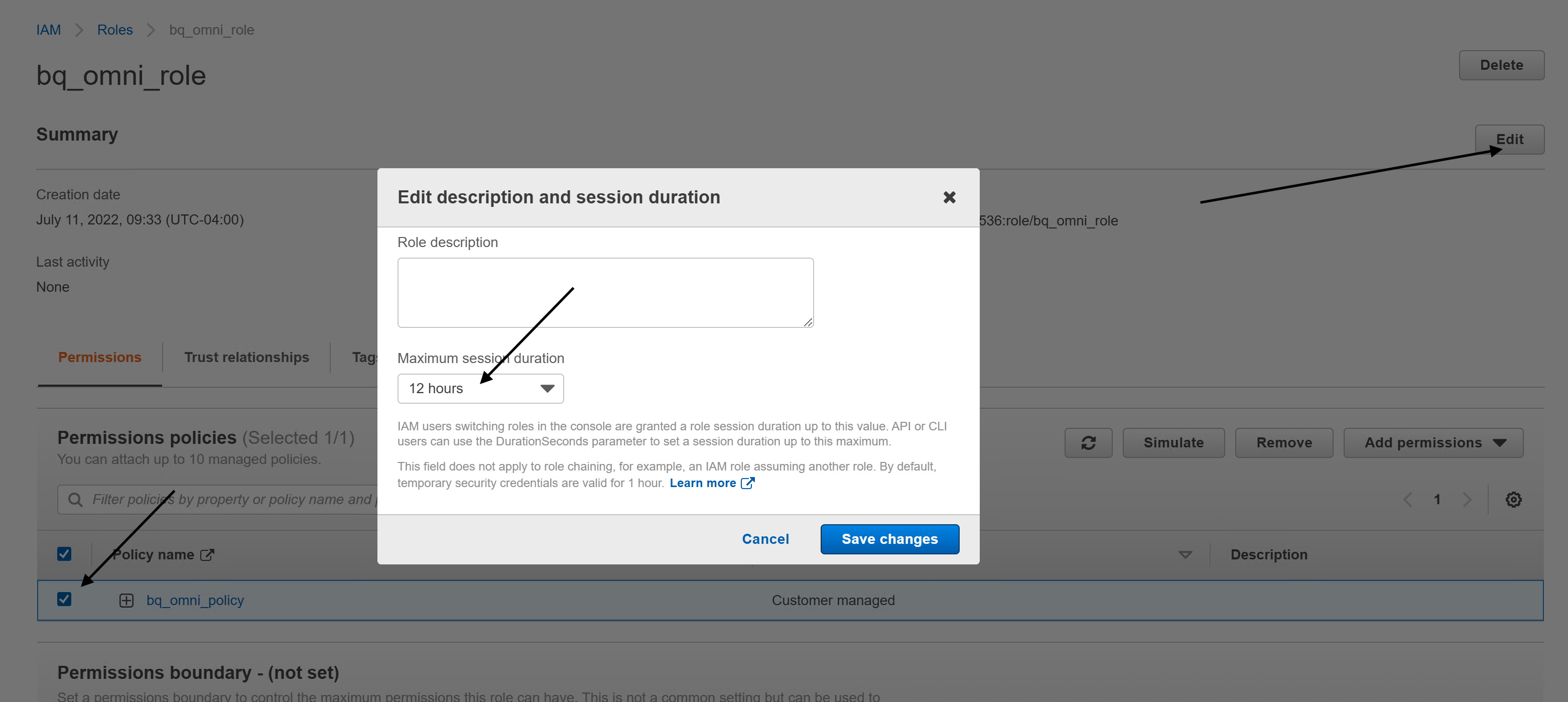

ROLE_NAMEthat you created.Click Edit and then do the following:

Set Maximum session duration to 12 hours. As each query can run for up to six hours, this duration allows for one additional retry. Increasing the session duration beyond 12 hours won't allow for additional retries. For more information, see the query/multi-statement query execution-time limit.

Click Save changes.

Select Trust Relationships and click Edit trust relationship. Replace the policy content with the following:

{ "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "accounts.google.com" }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "accounts.google.com:sub": "IDENTITY_ID" } } } ] }

Replace

IDENTITY_IDwith the BigQuery Google identity value, which you can find on the Google Cloud console for the connection you created.Click Update Trust Policy.

AWS CLI

To create a trust relationship with the BigQuery connection,

use the

aws iam update-assume-role-policy command:

aws iam update-assume-role-policy \ --role-name bigquery-omni-connection \ --policy-document '{ "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "accounts.google.com" }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "accounts.google.com:sub": "IDENTITY_ID" } } } ] }' aws iam update-assume-role-policy \ --role-name bigquery-omni-connection \ --policy-document '{ "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "accounts.google.com" }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "accounts.google.com:sub": "IDENTITY_ID" } } } ] }'

Replace the following:

IDENTITY_ID: the BigQuery Google identity value, which you can find on the Google Cloud console for the connection you created.

Terraform

Update the aws_iam_role resource in the Terraform configuration to add a

trust relationship:

resource "aws_iam_role" "bigquery-omni-connection-role" { name = "bigquery-omni-connection" max_session_duration = 43200 assume_role_policy = <<-EOF { "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Principal": { "Federated": "accounts.google.com" }, "Action": "sts:AssumeRoleWithWebIdentity", "Condition": { "StringEquals": { "accounts.google.com:sub": "${google_bigquery_connection.connection.aws[0].access_role[0].identity}" } } } ] } EOF }

The connection is now ready to use.

Configure a custom AWS identity provider

If your AWS account has a

custom identity provider

for accounts.google.com, you will need to add the IDENTITY_ID

as an audience to the provider. You can accomplish this by:

Go to the AWS IAM console. Ensure that you're in the account that owns the Amazon S3 bucket that you want to access.

Navigate to the IAM > Identity Providers.

Select the identity provider for accounts.google.com.

Click Add Audience and add the IDENTITY_ID as the audience.

The connection is now ready to use.

Share connections with users

You can grant the following roles to let users query data and manage connections:

roles/bigquery.connectionUser: enables users to use connections to connect with external data sources and run queries on them.roles/bigquery.connectionAdmin: enables users to manage connections.

For more information about IAM roles and permissions in BigQuery, see Predefined roles and permissions.

Select one of the following options:

Console

Go to the BigQuery page.

Connections are listed in your project, in a group called Connections.

In the left pane, click Explorer:

If you don't see the left pane, click Expand left pane to open the pane.

Click your project, click Connections, and then select a connection.

In the Details pane, click Share to share a connection. Then do the following:

In the Connection permissions dialog, share the connection with other principals by adding or editing principals.

Click Save.

bq

You cannot share a connection with the bq command-line tool. To share a connection, use the Google Cloud console or the BigQuery Connections API method to share a connection.

API

Use the

projects.locations.connections.setIAM method

in the BigQuery Connections REST API reference section, and

supply an instance of the policy resource.

Java

Before trying this sample, follow the Java setup instructions in the BigQuery quickstart using client libraries. For more information, see the BigQuery Java API reference documentation.

To authenticate to BigQuery, set up Application Default Credentials. For more information, see Set up authentication for client libraries.

What's next

- Learn about different connection types.

- Learn about managing connections.

- Learn about BigQuery Omni.

- Use the BigQuery Omni with AWS lab.

- Learn about BigLake tables.

- Learn how to query Amazon S3 data.

- Learn how to export query results to an Amazon S3 bucket.