Bigtable

Scale your latency-sensitive applications with the NoSQL pioneer

Low-latency, Cassandra, and HBase-compatible NoSQL database service for machine learning, operational analytics, and user-facing applications.

Get started with a free trial instance.

Features

Low latency and high throughput

Bigtable is a key-value and wide-column store ideal for fast access to structured, semi-structured, or unstructured data. This makes latency-sensitive workloads like personalization a perfect fit for Bigtable.

Distributed counters and high read and write throughput per dollar makes it also a great fit for clickstream and IoT use cases, and even batch analytics for high-performance computing (HPC) applications, including training ML models.

Hybrid storage architecture

Bigtable automatic, seamless data tiering between RAM, SSD and HDD helps deliver high performance, cost effectively. Deliver ultra-low latencies with RAM while eliminating hotspot problems without need for a caching layer, tier infrequently accessed data to HDD for significant cost savings without managing data pipelines and headache of keeping data in sync between multiple systems.

SQL and continuous materialized views

Bigtable SQL empowers users to build fully-managed, real-time applications using familiar SQL syntax, with specialized features that preserve Bigtable flexible schema. You can also use the SQL interface to build incremental materialized views that simplify the creation of real-time metrics. A Bigtable materialized view will automatically keep data up to date by processing the changes as they arrive without impacting your write and read performance and scales automatically in response to the traffic.

Data model flexibility

Bigtable lets your data model evolve organically. Store anything from scalars, JSON, Protocol Buffers, Avro, Arrow to embeddings, images, and dynamically add/remove new columns as needed. Deliver low-latency serving or high-performance batch analytics over raw, unstructured data in a single database.

Easy migration from NoSQL databases

Bigtable offers Apache Cassandra and HBase APIs and migration tooling that enables faster and simpler onboarding by ensuring accurate data migration with reduced effort. HBase Bigtable replication library and Cassandra Proxy allow for no-downtime live migrations while Bigtable Data Bridge and Aerospike Migration Tool simplify migrations from Amazon DynamoDB and Aerospike respectively.

From a single zone up to eight regions at once

Apps backed by Bigtable can deliver low-latency reads and writes with globally distributed multi-primary configurations, no matter where your users may be. Zonal instances are great for cost savings and can be seamlessly scaled up to multi-region deployments with automatic replication. When running a multi-region instance, your database is protected against a regional failure and offers industry-leading 99.999% availability.

Write and read scalability with no limits

Bigtable decouples compute resources from data storage, which makes it possible to transparently adjust processing resources. Each additional node can process reads and writes equally well, providing effortless horizontal scalability. Bigtable optimizes performance by automatically scaling resources to adapt to server traffic, handling the sharding, replication, and query processing.

High-performance, workload-isolated data processing

Bigtable Data Boost enables users to run analytical queries, batch ETL processes, train ML models, or export data faster without affecting transactional workloads. Data Boost does not require capacity planning or management. It allows directly querying data stored in Google’s distributed storage system, Colossus using on-demand capacity letting users easily handle mixed workloads and share data worry-free.

Rich application and tool support

Easily connect to the open source ecosystem with the Apache HBase API. Build data-driven applications faster with seamless integrations with Apache Spark, Hadoop, GKE, Dataflow, Dataproc, Vertex AI Vector Search, and BigQuery. Meet development teams where they are with SQL and client libraries for Java, Go, Python, C#, Node.js, PHP, Ruby, C++, HBase, and integration with LangChain.

Agentic development

With MCP support, Agent Development Kit (ADK) and LangChain integration, build Agentic applications to support process automation or conversational user experiences with ease. Using Bigtable Agent Skills with Antigravity, your favorite IDE or CLI take your developer productivity to next level, build more and faster with Bigtable.

No hidden costs

No IOPS charges, no cost for taking or restoring backups, no disproportionate read/write pricing to impact your budget as your workloads evolve.

Real-time change data capture and eventing

Use Bigtable change streams to capture change data from Bigtable databases and integrate it with other systems for analytics, event triggering, and compliance.

Enterprise-grade security and controls

Customer-managed encryption keys (CMEK) with Cloud External Key Manager support, IAM integration for access and controls, support for VPC-SC, Access Transparency, Access Approval, and comprehensive audit logging help ensure your data is protected and complies with regulations. Fine-grained access control lets you authorize access at table, column, or row level.

Observability

Monitor performance of Bigtable databases with server-side metrics. Analyze usage patterns with Key Visualizer interactive monitoring tool. Use query stats, table stats, and the hot tablets tool for troubleshooting query performance issues and quickly diagnose latency issues with client-side monitoring.

Disaster recovery

Take instant, incremental backups of your database cost-effectively and restore on demand. Store backups in different regions for additional resilience, easily restore between instances, or projects for test versus production scenarios.

How It Works

Bigtable instances provide compute and storage in one or more regions. Each Bigtable cluster can receive both reads and writes. Data is automatically "split" for scalability and replicated between clusters asynchronously. A distributed clock called TrueTime guarantees transactions are correctly ordered.

Bigtable instances provide compute and storage in one or more regions. Each Bigtable cluster can receive both reads and writes. Data is automatically "split" for scalability and replicated between clusters asynchronously. A distributed clock called TrueTime guarantees transactions are correctly ordered.

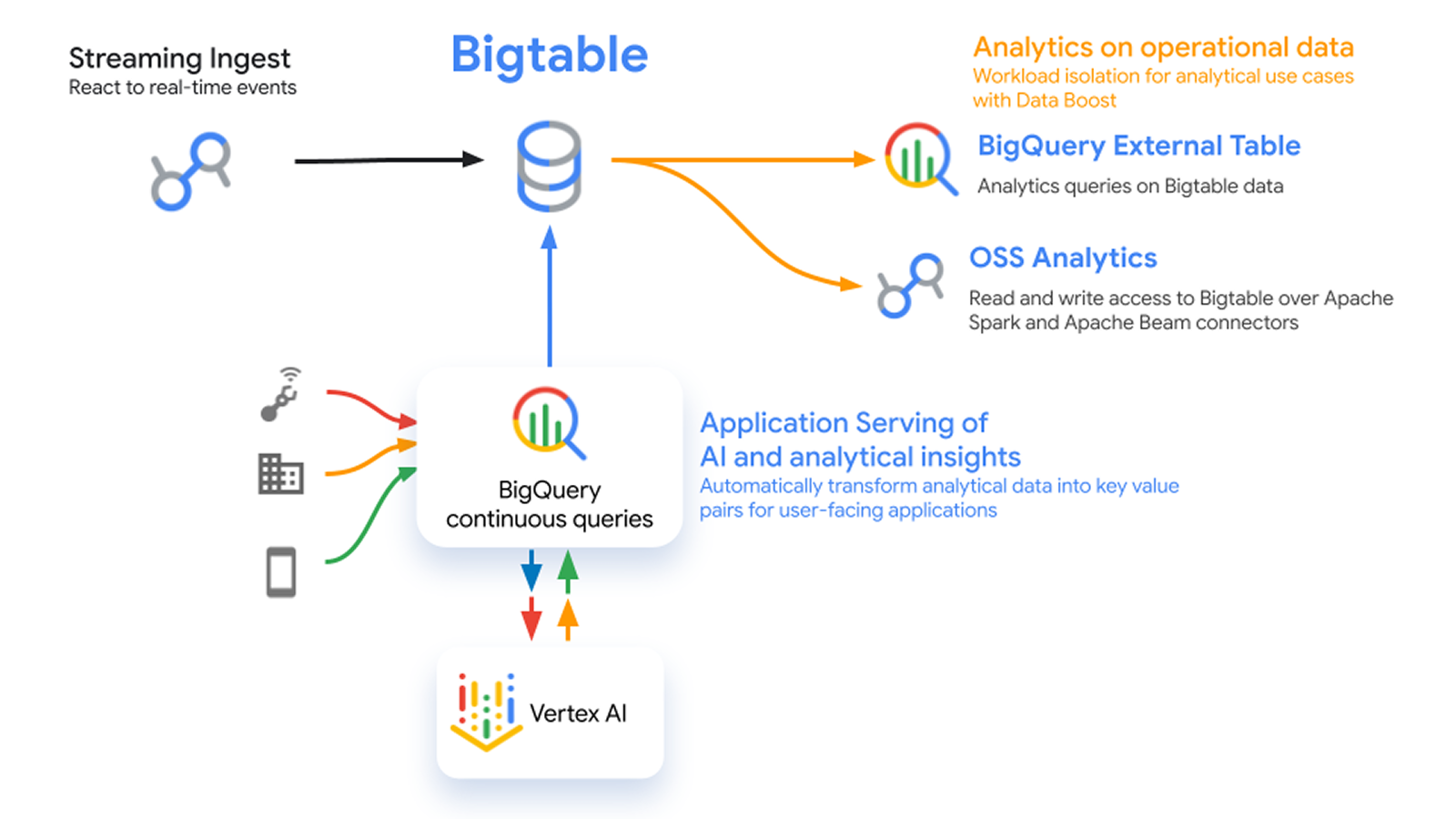

Real-time analytics

Increase data freshness and reduce query latency

Learning resources

Increase data freshness and reduce query latency

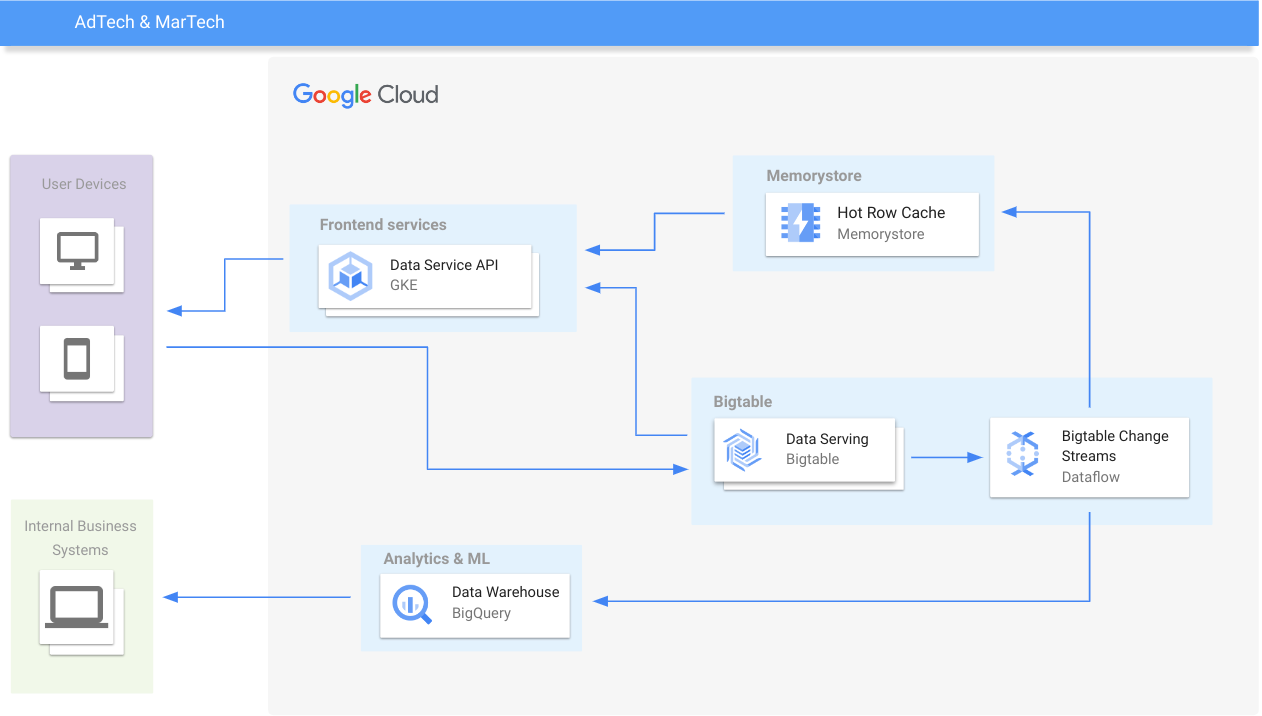

AdTech and retail

Personalize experiences in real time

Learning resources

Personalize experiences in real time

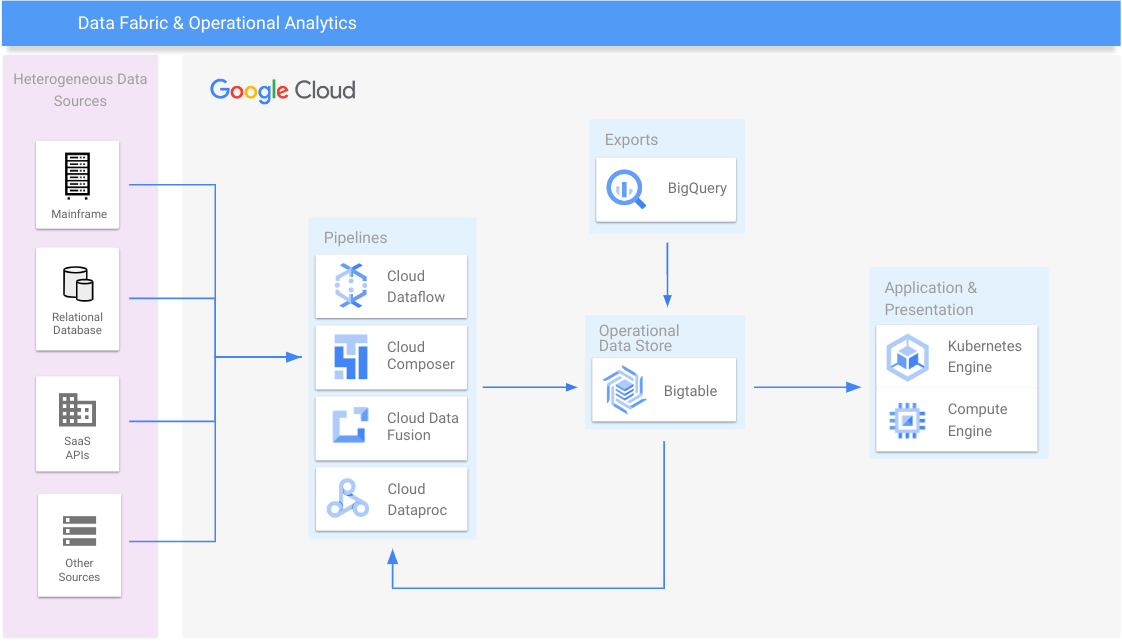

Data fabric and operational analytics

Consolidate data silos and scale out legacy systems

Learning resources

Consolidate data silos and scale out legacy systems

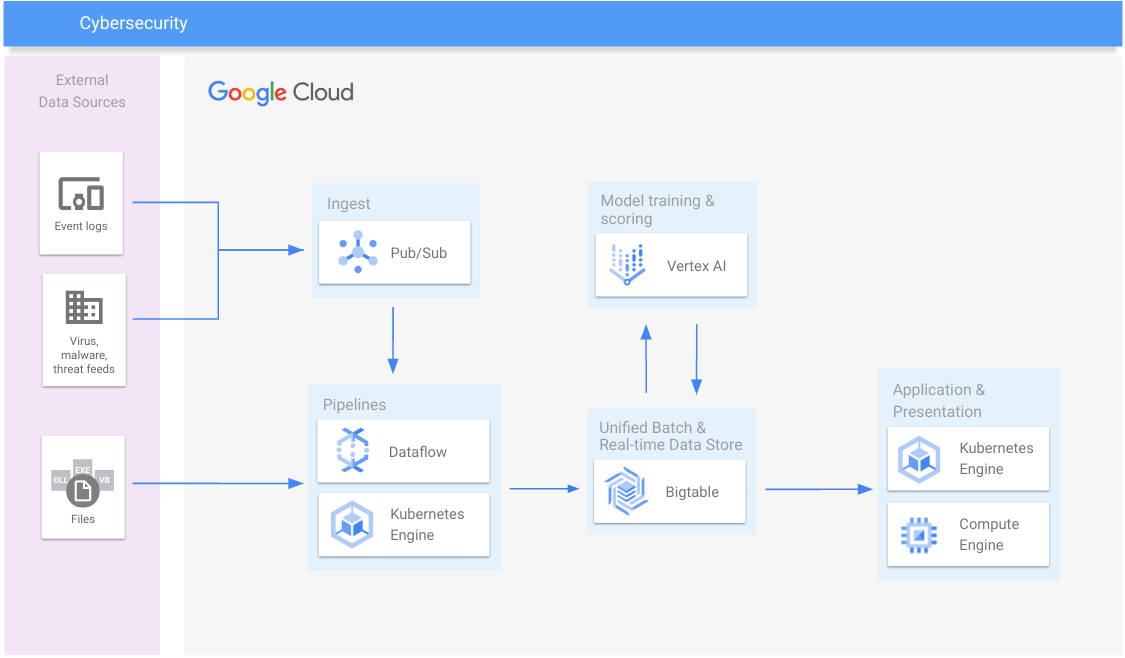

Cybersecurity

Detect malware, payment fraud, stop spam and scams

Learning resources

Detect malware, payment fraud, stop spam and scams

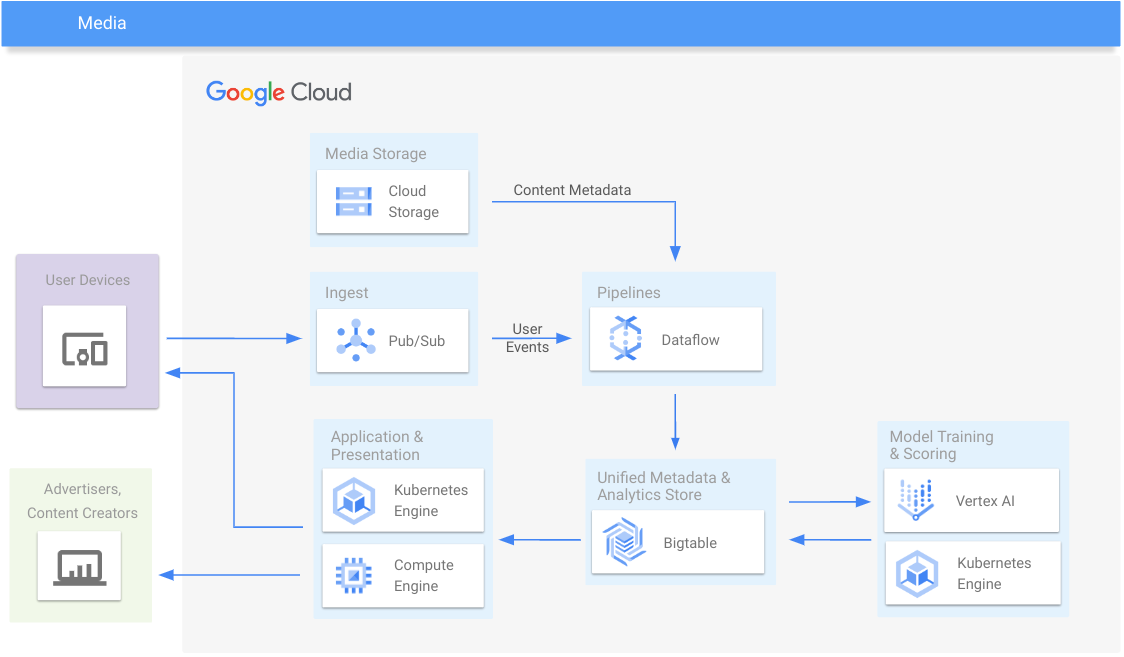

Media

Deliver media content and engagement analytics

Learning resources

Deliver media content and engagement analytics

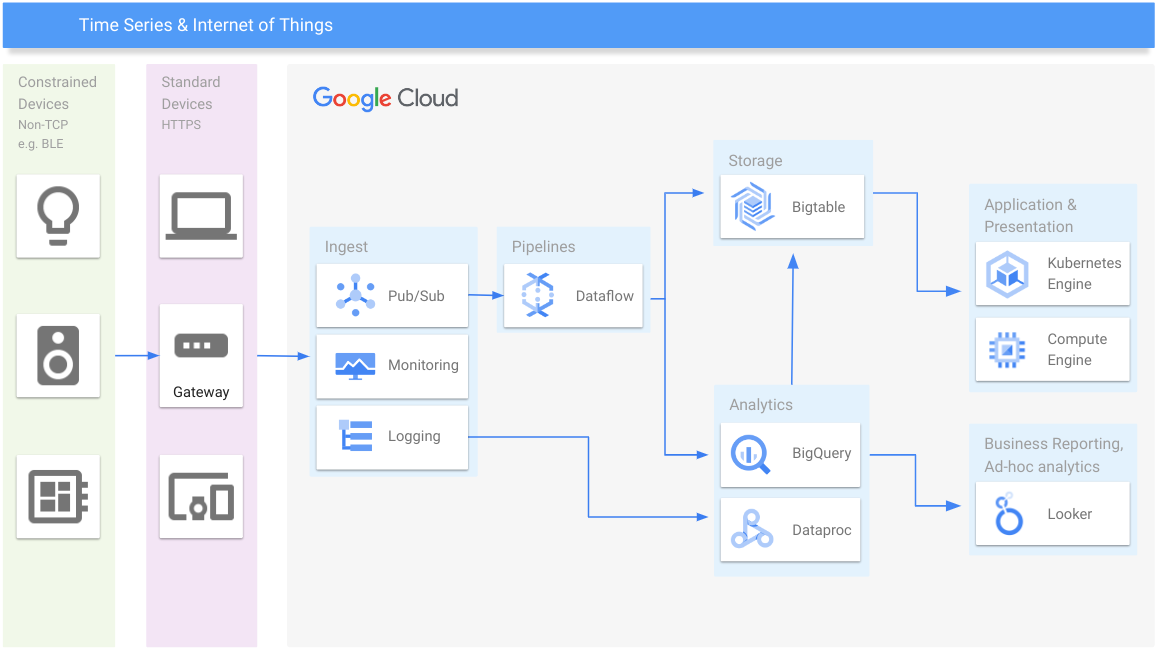

Time series and IoT

Manage time series data at any scale

Learning resources

Manage time series data at any scale

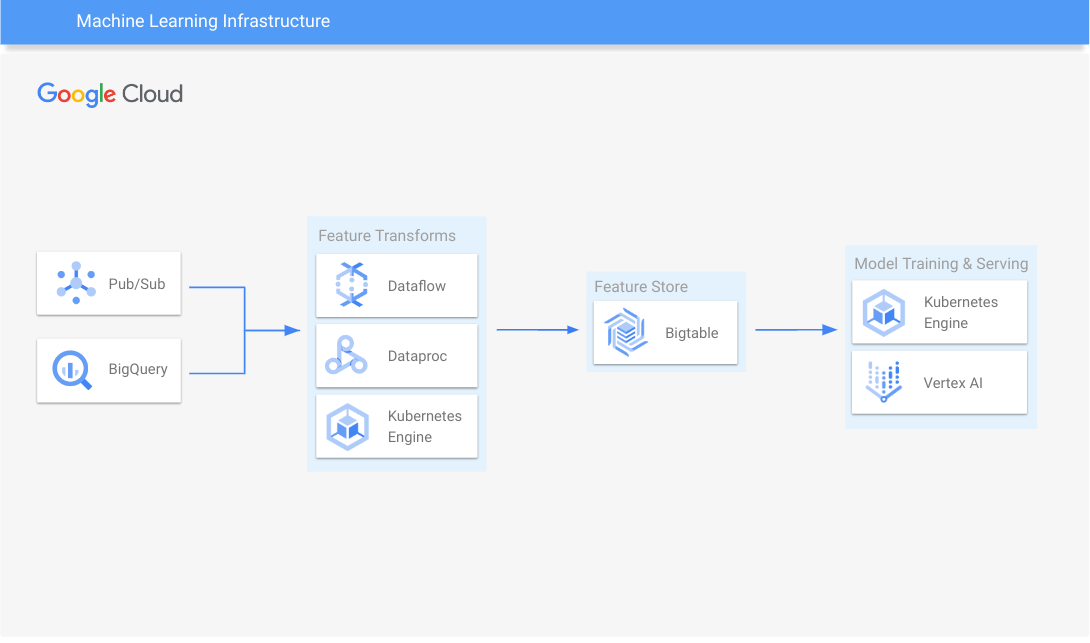

Machine learning infrastructure

Scale model training and serving

Learn how to use Bigtable with popular open-source feature stores.

Learning resources

Scale model training and serving

Learn how to use Bigtable with popular open-source feature stores.

Pricing

| How Bigtable pricing works | Bigtable pricing is based on compute capacity, database storage, backup storage, and network usage. Committed use discounts reduce the price further. | |

|---|---|---|

| Service | Description | Price |

Compute capacity | Enterprise edition Packed with key capabilities for machine learning, time series, operational analytics and user-facing applications at scale with low single-digit millisecond latency. Compute capacity is provisioned as nodes. | Starting at $0.65 per node per hour |

Enterprise Plus edition Support the most demanding workloads with the highest levels of configurability, performance, observability and governance. Includes all Enterprise edition capabilities and can deliver sub-millisecond latency. Compute capacity is provisioned as nodes. | Starting at $0.85 per node per hour | |

In-memory tier In-memory read throughput capacity for ultra-low latency reads with high hotspot resistance. Only available in Enterprise Plus edition. Read throughput is provisioned in 40,000 (1 KB) rows per second increments, up to 120,000 rows per second per node. | Starting at $0.20 per 40,000 rows per second capacity | |

Data Boost | On-demand, isolated compute resources for batch processing | Starting at $0.000845 per serverless processing unit per hour |

Data storage | SSD Pricing is based on the physical size of tables. Each replica is billed separately. Recommended for low-latency serving. | Starting at $0.17 per GB per month |

HDD Pricing is based on the physical size of tables. Each replica is billed separately. Infrequent Access (IA) uses HDD and is billed at same rate. | Starting at $0.026 per GB per month | |

Backups | Standard backups Pricing is based on the physical size of backups. Bigtable backups are incremental. | Starting at $0.026 per GB per month |

Hot backups Optimized for significantly reduced restore time. Pricing is based on the physical size of backups. | Starting at $0.12 per GB per month | |

Network | Ingress | Free |

Egress within same region | Free | |

Egress between regions | Starting at $0.10 per GB | |

Replication | Within same region | Free |

Between regions | Starting at $0.01 per GB | |

Learn more about Bigtable pricing and committed use discounts.

How Bigtable pricing works

Bigtable pricing is based on compute capacity, database storage, backup storage, and network usage. Committed use discounts reduce the price further.

Compute capacity

Enterprise edition

Packed with key capabilities for machine learning, time series, operational analytics and user-facing applications at scale with low single-digit millisecond latency.

Compute capacity is provisioned as nodes.

Starting at

$0.65

per node per hour

Enterprise Plus edition

Support the most demanding workloads with the highest levels of configurability, performance, observability and governance. Includes all Enterprise edition capabilities and can deliver sub-millisecond latency.

Compute capacity is provisioned as nodes.

Starting at

$0.85

per node per hour

In-memory tier

In-memory read throughput capacity for ultra-low latency reads with high hotspot resistance. Only available in Enterprise Plus edition.

Read throughput is provisioned in 40,000 (1 KB) rows per second increments, up to 120,000 rows per second per node.

Starting at

$0.20

per 40,000 rows per second capacity

Data Boost

On-demand, isolated compute resources for batch processing

Starting at

$0.000845

per serverless processing unit per hour

Data storage

SSD

Pricing is based on the physical size of tables. Each replica is billed separately. Recommended for low-latency serving.

Starting at

$0.17

per GB per month

HDD

Pricing is based on the physical size of tables. Each replica is billed separately. Infrequent Access (IA) uses HDD and is billed at same rate.

Starting at

$0.026

per GB per month

Backups

Standard backups

Pricing is based on the physical size of backups. Bigtable backups are incremental.

Starting at

$0.026

per GB per month

Hot backups

Optimized for significantly reduced restore time. Pricing is based on the physical size of backups.

Starting at

$0.12

per GB per month

Network

Ingress

Free

Egress within same region

Free

Egress between regions

Starting at

$0.10

per GB

Replication

Within same region

Free

Between regions

Starting at

$0.01

per GB

Learn more about Bigtable pricing and committed use discounts.

Business Case

Explore how other businesses built innovative apps to deliver great customer experiences, cut costs, and increase ROI with Bigtable

Explore how Box modernized their NoSQL databases with Bigtable

Box enhanced scalability and availability while reducing cost to manage, through a seamless migration.

Benefits and customers

Grow your business with innovative applications that scale limitlessly to meet any demand.

Get best-in-class price-performance and pay for what you use.

Migrate easily from other NoSQL databases and run hybrid or multicloud deployments with open source APIs and migration tools.

Partners & Integration

Take advantage of partners with Bigtable expertise to help you at every step of the journey, from assessments and business cases to migrations and building new apps on Bigtable.

System integrators

Want to get more details about which partner or third-party integration is best for your business? Go to the partner directory.

Learn more

FAQ

What type of database is Bigtable?

Bigtable is a NoSQL database service, specifically a key-value store that allows for very wide tables with tens of thousands of columns, hence also referred to as a wide-column database or a distributed multi-dimensional map. Bigtable is a NoSQL database in the "Not only SQL" sense, rather than "zero SQL." It supports many capabilities beyond key-value lookups including aggregations and global secondary indexes.

Bigtable is most similar to popular open source projects it inspired, such as Apache HBase and Cassandra, and hence is the most common destination for customers that deal with large data volumes looking for a high-performance, cost-effective, fully managed NoSQL database solution on Google Cloud.

Does Bigtable support SQL?

In addition to its key-value APIs, Bigtable also supports SQL queries in three different ways:

- For low-latency application development, Bigtable offers a SQL query API that builds upon GoogleSQL with extensions for the wide-column data model resembling Cassandra Query Language (CQL). It supports 200+ functions including aggregations (GROUP BY), windowing, geospatial and JSON processing functions and vector search (kNN). Bigtable supports both standard and piped query syntax.

- For data science use cases or other kinds of batch processing and ETL, Bigtable supports SparkSQL using its Spark client.

- For users who want to do post-hoc exploratory analysis or blend data from multiple sources for batch analytics, Bigtable data can also be accessed from BigQuery. Simply register your Bigtable tables in BigQuery and query like any other BigQuery table without any ETL or data duplication.

How do I migrate databases to Bigtable?

Bigtable offers Apache Cassandra and HBase APIs and migration tooling that enables faster and simpler onboarding by ensuring accurate data migration with reduced effort. Bigtable’s HBase replication library and Cassandra migration tools allow for no-downtime, live migrations. Bigtable also offers utilities to simplify migrations from DynamoDB and Aerospike.

Is Bigtable serverless?

Bigtable storage is billed per GB used similar to a serverless model. Bigtable also offers linear horizontal scaling and can automatically scale up and down compute resources in response to demand fluctuations. Hence it doesn’t require a long term capacity commitment for storage or compute. However, pricing for low-latency compute is capacity-based and billed per node, not per request where each node can serve up to 17K requests per second. This makes Bigtable price more favorable for larger workloads but less ideal for small applications, which may be more suitable for Google Cloud databases such as Firestore.

For batch data processing Bigtable offers Data Boost, which bills in Serverless Processing Units (SPU).

Is Bigtable a persistent cache?

Bigtable is a database with cache-like performance, not a cache with persistence. It offers powerful in-database processing capabilities, a SQL API, continuous materialized views and asynchronous secondary indexes while using RDMA technology to provide direct access to RAM for high throughput and performance not bound by database server's CPU capacity. Although it is possible to use Bigtable as a replacement for durable caching solutions like MemoryDB, the opposite isn't always true, as solutions like Redis were designed as a cache, not a database.