How Cloud Bigtable helps Ravelin detect retail fraud with low latency

Jono MacDougall

Software Engineer and Co-founder, Ravelin

Editor’s note: Today we are hearing from Jono MacDougall, Principal Software Engineer at Ravelin. Ravelin delivers market-leading online fraud detection and payment acceptance solutions for online retailers. To help us meet the scaling, throughput, and latency demands of our growing roster of large-scale clients, we migrated to Google Cloud and its suite of managed services, including Cloud Bigtable, the scalable NoSQL database for large workloads.

We are a fraud detection company for online retailers, so each new client brings new data that must be stored securely and new financial transactions to analyze. This means our data infrastructure must scale easily while keeping latency consistently low. Our goal is to bring these new organizations on quickly without interrupting their business. We help our clients with checkout flows, so we need latencies that won’t interrupt that process, a critical concern in the booming online retail sector.

We like Cloud Bigtable because it can quickly and securely ingest and process a high volume of data. Our software accesses data in Bigtable every time it makes a fraud decision. When a client’s customer places an order, we need to process their full history and as much data as possible about that customer in order to detect fraud, all while keeping their data secure. Bigtable excels at accessing and processing that data in a short time window. With a customer key, we can quickly access data, bring it into our feature extraction process, and generate features for our models and rules. The data stays encrypted at rest in Bigtable, which keeps us and our customers safe.

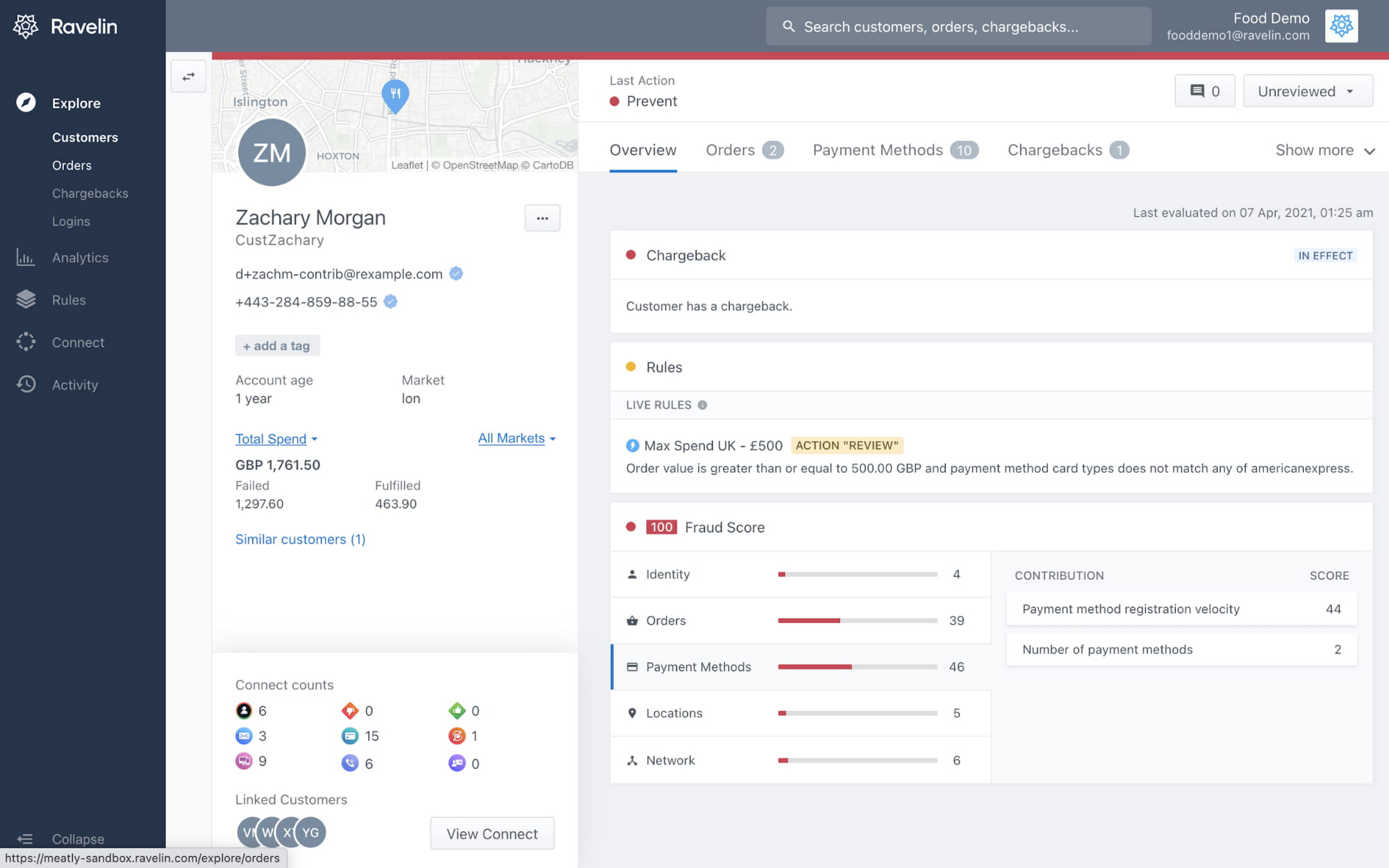

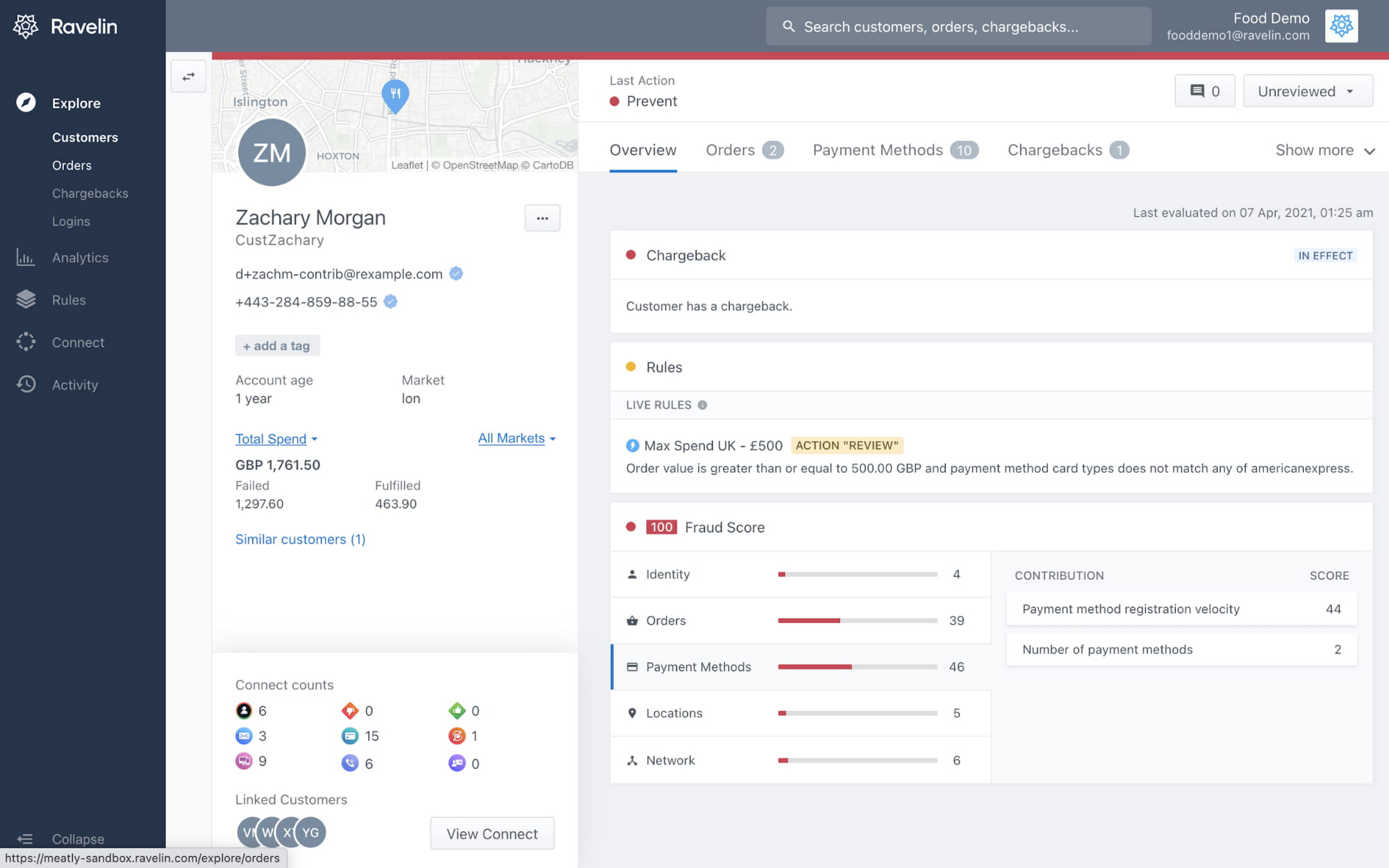

Bigtable also lets us present customer profiles in our dashboard to our client, so that if we make a fraud decision, our clients can confirm the fraud using the same data source we use.

We have configured our Bigtable clusters to be accessible only from within our private network, and we have restricted our pods' access to them using targeted service accounts. This way the majority of our code has no access to Bigtable, and only the parts that do the reading and writing have those privileges.

We also use Bigtable for debugging, logging, and tracing, because we have spare capacity and it's a fast, convenient location.

We have load tested Bigtable. We started at a low rate of ~10 Bigtable requests per second and peaked at ~167,000 mixed read and write requests per second. The only intervention needed to achieve this was pressing a single button to increase the number of nodes in the database. No other changes were made.

In terms of real traffic to our production system, we have seen ~22,000 req/s (combined read/write) on Bigtable in our live environment as a peak within the last 6 weeks.

Migrating seamlessly to Google Cloud

Like many startups, we started with Postgres, since it was easy and it was what we knew, but we quickly realized that scaling would be a challenge, and we didn't want to manage enormous Postgres instances. We looked for a key-value store, because we weren't doing crazy JOINs or complex WHERE clauses. We wanted to provide a customer ID and get everything we knew about that customer, and that's where a key-value store really shines.

I used Cassandra at a previous company, but we had to hire several people just to operate it. At Ravelin we wanted to move to managed services and save ourselves that headache. We were already heavy users and fans of BigQuery, Google Cloud’s serverless, scalable data warehouse, and we also wanted to start using Kubernetes. This was five years ago, and though quite a few providers offer Kubernetes services now, we still see Google Cloud at the top of that stack with Google Kubernetes Engine (GKE). We also like Bigtable’s versioning capability, which helped with a use case involving upserts. All of these features helped us choose Bigtable.

Migrations can be intimidating, especially in retail where downtime isn’t an option. We were migrating not just from Postgres to Bigtable, but also from AWS to Google Cloud. To prepare, we ran in AWS like always, but at the same time we set up a queue at our API level to mirror every request over to Google Cloud. We looked at those requests to see if any were failing, and checked whether the results and response times were the same as in AWS. We did that for a month, fine-tuning along the way.

Then we took the big step: we flipped a config flag, and 100% of our traffic moved over to Google Cloud. At the exact same time, we flipped the queue over to AWS so that we could still send traffic into our legacy environment. That way, if anything went wrong, we could fail back without missing data. We ran like that for about a month, and we never had to fail back. In the end, we pulled off a seamless, issue-free online migration to Google Cloud.
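
The mirroring approach described above can be sketched roughly as follows. This is a minimal in-memory illustration, not our production code: the `MirroringRouter` class, the two handler functions, and the request shape are all hypothetical, and the sketch compares only results, not response times. It assumes exactly two environments.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Dict, List

Handler = Callable[[dict], dict]


@dataclass
class MirroringRouter:
    """Serve from one environment and mirror every request to the other."""
    backends: Dict[str, Handler]      # assumed to hold exactly two environments
    primary: str                      # the "config flag": which one serves traffic
    mirror_queue: Deque[dict] = field(default_factory=deque)
    served: Dict[int, dict] = field(default_factory=dict)
    mismatches: List[dict] = field(default_factory=list)

    def _secondary(self) -> str:
        return next(name for name in self.backends if name != self.primary)

    def handle(self, request: dict) -> dict:
        response = self.backends[self.primary](request)
        self.served[request["id"]] = response
        self.mirror_queue.append(request)  # replayed later, off the hot path
        return response

    def drain_mirror(self) -> None:
        """Replay queued requests on the secondary and record any differences."""
        secondary = self.backends[self._secondary()]
        while self.mirror_queue:
            request = self.mirror_queue.popleft()
            if secondary(request) != self.served[request["id"]]:
                self.mismatches.append(request)

    def flip(self) -> None:
        """Swap roles: the old primary keeps receiving mirrored traffic."""
        self.drain_mirror()
        self.primary = self._secondary()


# Hypothetical stand-ins for the two environments.
def aws_env(request: dict) -> dict:
    return {"fraud_score": request["amount"] % 7}


def gcp_env(request: dict) -> dict:
    return {"fraud_score": request["amount"] % 7}


router = MirroringRouter({"aws": aws_env, "gcp": gcp_env}, primary="aws")
router.handle({"id": 1, "amount": 120})
router.drain_mirror()
router.flip()                          # Google Cloud now serves; AWS is mirrored
router.handle({"id": 2, "amount": 75})
router.drain_mirror()
```

Because the queue keeps feeding whichever environment is not serving, failing back is just another call to `flip()`.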

Flexing Bigtable’s features

For our database structure, we originally had everything spread across rows, and we'd use a hash of a customer ID as a prefix. Then we could scan each record of history, such as orders or transactions. But eventually we got customers that were too big, where the scanning wasn't fast enough. So we switched and put all of the customer data into one row and the history into columns. Then each cell was a different record, order, payment method, or transaction. Now, we can quickly look up the one row and get all the necessary details of that customer. Some of our clients send us test customers who place an order, say, every minute, and that quickly becomes problematic if you want to pull out enormous amounts of data without any limits on your row size. The garbage collection feature makes it easy to clean up big customers.
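
To make the one-row-per-customer layout concrete, here is a minimal in-memory sketch. The key format, the 8-character SHA-256 prefix, and the column family names are assumptions chosen for illustration; the `WideRowTable` class is a stand-in and not the Bigtable client API.

```python
import hashlib
from collections import defaultdict


def row_key(customer_id: str) -> bytes:
    """Build a row key: a hash prefix spreads customers across the key space,
    and the customer ID after it keeps the key unique."""
    prefix = hashlib.sha256(customer_id.encode("utf-8")).hexdigest()[:8]
    return f"{prefix}#{customer_id}".encode("utf-8")


class WideRowTable:
    """In-memory stand-in: row key -> column family -> column qualifier -> value."""

    def __init__(self):
        self._rows = defaultdict(lambda: defaultdict(dict))

    def write(self, customer_id, family, qualifier, value):
        # Each record (order, payment method, transaction) gets its own column.
        self._rows[row_key(customer_id)][family][qualifier] = value

    def read_customer(self, customer_id):
        """A single-row lookup returns every record stored for the customer."""
        row = self._rows.get(row_key(customer_id), {})
        return {family: dict(columns) for family, columns in row.items()}


table = WideRowTable()
table.write("cust-42", "orders", "order-1001", b'{"total": 25}')
table.write("cust-42", "orders", "order-1002", b'{"total": 90}')
table.write("cust-42", "payment_methods", "card-1", b'{"scheme": "visa"}')

profile = table.read_customer("cust-42")
```

Here `profile` holds both orders and the payment method from one lookup, which is the property that replaced the prefix scan in the original layout.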

We also use Bigtable replication to increase reliability, atomicity, and consistency. We need strong consistency guarantees within the context of a single request to our API, since we make multiple Bigtable requests within that scope. So within a request we always hit the same replica of Bigtable, and if we have a failure, we retry the whole request. That allows us to make use of the replica and some of the consistency guarantees, a nice little trade-off where we can choose where we want our consistency to live.
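
The pin-one-replica, retry-the-whole-request pattern might look something like the sketch below. Everything here is hypothetical: `PinnedSession`, `run_request`, and `InMemoryReplica` are illustrative names, and moving to the next replica on each retry is an assumption of this sketch rather than a description of our routing.

```python
class ReplicaUnavailable(Exception):
    """Raised by the hypothetical replica client when a call fails."""


class PinnedSession:
    """Routes every read and write in one API request to a single replica."""

    def __init__(self, replica):
        self.replica = replica

    def read(self, key):
        return self.replica.read(key)

    def write(self, key, value):
        self.replica.write(key, value)


def run_request(replicas, handler, max_attempts=3):
    """Run handler(session); on failure, retry the whole request from the start."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    last_error = None
    for attempt in range(max_attempts):
        # Assumption: each attempt pins the next replica in the list.
        session = PinnedSession(replicas[attempt % len(replicas)])
        try:
            return handler(session)
        except ReplicaUnavailable as err:
            last_error = err  # discard partial progress and start over
    raise last_error


class InMemoryReplica:
    """Stand-in replica that can be marked unhealthy."""

    def __init__(self, name, healthy=True):
        self.name, self.healthy, self.data = name, healthy, {}

    def _check(self):
        if not self.healthy:
            raise ReplicaUnavailable(self.name)

    def read(self, key):
        self._check()
        return self.data.get(key)

    def write(self, key, value):
        self._check()
        self.data[key] = value


def score_order(session):
    session.write("cust-42", "order-1001")
    return session.read("cust-42")  # same replica, so the write is visible


replicas = [InMemoryReplica("replica-a", healthy=False), InMemoryReplica("replica-b")]
result = run_request(replicas, score_order)
```

Because the handler never mixes replicas within one attempt, a read that follows a write always sees it, and a failed attempt leaves nothing half-done that the retry depends on.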

We also use BigQuery with Bigtable for training on customer records or queries with complicated WHERE clauses. We put the data in Bigtable, and also asynchronously in BigQuery using streaming inserts, which allows our data scientists to query it in every way you can imagine, build models, and investigate patterns and not worry about query engine limitations. Since our Bigtable production cluster is completely separate, doing a query on BigQuery has no impact on our response times. When we were on Postgres many years ago, it was used for both analysis and real time traffic and it was not the optimal solution for us. We also use Elasticsearch for powering text searches for our dashboard.
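
As a rough illustration of writing synchronously to the serving store and asynchronously to the analytics store, consider the sketch below. The `DualWriter` class is hypothetical; a plain dictionary stands in for the Bigtable table and a callback stands in for a BigQuery streaming insert, so no real client calls are shown.

```python
import queue
import threading


class DualWriter:
    """Write to the serving store inline and to analytics in the background."""

    def __init__(self, serving_store, analytics_sink):
        self._serving_store = serving_store    # stand-in for the Bigtable table
        self._analytics_sink = analytics_sink  # stand-in for a streaming insert
        self._pending = queue.Queue()
        self.failed = 0
        threading.Thread(target=self._drain, daemon=True).start()

    def write(self, key, record):
        self._serving_store[key] = record  # synchronous: fraud decisions read this
        self._pending.put((key, record))   # asynchronous: analytics may lag behind

    def _drain(self):
        while True:
            key, record = self._pending.get()
            try:
                self._analytics_sink(key, record)
            except Exception:
                self.failed += 1           # a real system would retry or alert
            finally:
                self._pending.task_done()

    def flush(self):
        """Block until every queued record has been handed to the sink."""
        self._pending.join()


serving = {}
analytics_rows = []
writer = DualWriter(
    serving,
    lambda key, record: analytics_rows.append({"key": key, **record}),
)
writer.write("cust-42", {"order_id": "order-1001", "total": 25})
writer.flush()
```

The request path only waits on the serving write, so slow or failing analytics inserts do not add to response times.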

If you’re using Bigtable, we recommend three features:

Key visualizer. If we get latency or errors coming back from Bigtable, we look at the key visualizer first. We may have a hot key or a wide row, and the visualizer will alert us and show the exact key range where the key lives, or the row in question. Then we can go in and fix it at that level. It’s useful to know how your data is hitting Bigtable, whether you're using any anti-patterns, and whether your clients have changed their traffic pattern in a way that exacerbates an issue.

Garbage collection. We can prevent big row issues by putting size limits in place with the garbage collection policies.

Cell versioning. Bigtable is effectively a three-dimensional array of rows, columns, and cells, where the cells hold the different versions of a value. You can use the versioning to get the history of a particular value or to build a time series within one row. Getting a single row is very fast in Bigtable, so as long as you can keep the data volume in check for that row, cell versions are a very powerful and fast option. There are patterns in the docs that are quite useful and not immediately obvious. For example, one trick is to reverse your timestamps (MAXINT64 - now). This effectively reverses the cell version sorting, so a read for the latest version returns the oldest one instead, if that is what you need.
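
The reversed-timestamp trick and a version cap can be illustrated with a small simulation. This is a sketch under stated assumptions: `VersionedCell` is a hypothetical in-memory stand-in that returns versions newest-timestamp-first, and it trims eagerly on write, whereas real garbage collection runs in the background rather than at write time.

```python
MAX_INT64 = 2**63 - 1


def reversed_timestamp_micros(event_time_micros: int) -> int:
    """MAXINT64 - now: earlier events get larger timestamps."""
    return MAX_INT64 - event_time_micros


class VersionedCell:
    """Stand-in for one column: versions are read in descending timestamp order."""

    def __init__(self, max_versions=None):
        self.max_versions = max_versions  # mimics a max-versions cleanup rule
        self._versions = {}               # timestamp -> value

    def write(self, timestamp_micros, value):
        self._versions[timestamp_micros] = value
        if self.max_versions is not None:
            keep = sorted(self._versions, reverse=True)[: self.max_versions]
            self._versions = {ts: self._versions[ts] for ts in keep}

    def read(self, limit=None):
        ordered = sorted(self._versions.items(), reverse=True)
        return [value for _, value in ordered[:limit]]


events = [(1_000, "created"), (2_000, "paid"), (3_000, "shipped")]

normal = VersionedCell()
flipped = VersionedCell()
capped = VersionedCell(max_versions=2)
for event_time, status in events:
    normal.write(event_time, status)
    flipped.write(reversed_timestamp_micros(event_time), status)
    capped.write(event_time, status)

latest = normal.read(limit=1)    # ["shipped"]
oldest = flipped.read(limit=1)   # ["created"]
bounded = capped.read()          # ["shipped", "paid"]
```

With normal timestamps a one-version read returns the most recent status; with reversed timestamps the same read returns the first one. The capped cell shows how a version limit keeps a busy row from growing without bound.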

Google Cloud and Bigtable help us meet the low-latency demands of the growing online retail sector, with speed and easy integration with other Google Cloud services like BigQuery. With their managed services, we freed up time to focus on innovations and meet the needs of bigger and bigger customers.

Learn more about Ravelin and Bigtable, and check out our recent blog, How BIG is Cloud Bigtable?