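This page describes how to import Spanner databases into Spanner using the Google Cloud console. To import Avro files from another source, see Import data from non-Spanner databases.

The process uses Dataflow to import data from a folder in a Cloud Storage bucket that contains a set of Avro files and JSON manifest files. The import process supports only Avro files exported from Spanner.

To import a Spanner database using the REST API or the gcloud CLI, complete the steps in the Before you begin section on this page, then see the detailed instructions in Cloud Storage Avro to Spanner.

Before you begin

To import a Spanner database, first enable the Spanner, Cloud Storage, Compute Engine, and Dataflow APIs: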

Roles required to enable APIs

To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.
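If you prefer the gcloud CLI to the console for this step, the four APIs can be enabled with a single `gcloud services enable` call. The sketch below only assembles that command as an argument list. It assumes the service names `spanner.googleapis.com`, `storage.googleapis.com`, `compute.googleapis.com`, and `dataflow.googleapis.com`, and `my-project` is a hypothetical project ID.

```python
import shlex

# Assumed service names for the four APIs listed above.
REQUIRED_SERVICES = [
    "spanner.googleapis.com",
    "storage.googleapis.com",
    "compute.googleapis.com",
    "dataflow.googleapis.com",
]


def enable_services_command(project_id: str) -> list[str]:
    """Return the argv for `gcloud services enable` covering all four APIs."""
    return ["gcloud", "services", "enable", *REQUIRED_SERVICES, f"--project={project_id}"]


if __name__ == "__main__":
    # "my-project" is a hypothetical project ID; pass the list to
    # subprocess.run(..., check=True) to actually execute it.
    print(shlex.join(enable_services_command("my-project")))
```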

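You also need sufficient quota and the required IAM permissions.

Quota requirements

The quota requirements for import jobs are as follows:

- Spanner: You must have enough compute capacity to support the amount of data that you are importing. Importing a database doesn't require any additional compute capacity, although you might need to add more compute capacity so that your job finishes in a reasonable amount of time. For details, see the Optimize jobs section.

- Cloud Storage: To import, you must have a bucket that contains your previously exported files. You don't need to set a size for your bucket.

- Dataflow: Import jobs are subject to the same CPU, disk usage, and IP address Compute Engine quotas as other Dataflow jobs.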

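- Compute Engine: Before running your import job, you must set up initial quotas for Compute Engine, which Dataflow uses. These quotas represent the maximum number of resources that you allow Dataflow to use for your job. Recommended starting values are:

  - CPUs: 200

  - In-use IP addresses: 200

  - Standard persistent disk: 50 TB

Generally, you don't have to make any other adjustments. Dataflow provides autoscaling, so you only pay for the resources actually used during the import. If your job could make use of more resources, the Dataflow UI displays a warning icon; the job should still finish successfully in this case.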
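To see how the recommended quotas translate into a worker cap, the following sketch computes the largest worker count that fits inside all three quotas at once. The per-worker figures are assumptions to replace with your own: one in-use IP address per worker and a 250 GB persistent disk per worker (adjust this to your job's actual disk size).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeQuota:
    """Compute Engine quotas; defaults are the recommended starting values."""
    cpus: int = 200
    in_use_ips: int = 200
    standard_pd_gb: int = 50_000  # 50 TB expressed in GB


def max_workers_within_quota(
    quota: ComputeQuota,
    vcpus_per_worker: int,
    disk_gb_per_worker: int,
    ips_per_worker: int = 1,
) -> int:
    """Largest number of workers that stays inside every quota simultaneously."""
    return min(
        quota.cpus // vcpus_per_worker,
        quota.in_use_ips // ips_per_worker,
        quota.standard_pd_gb // disk_gb_per_worker,
    )


if __name__ == "__main__":
    # Hypothetical 2-vCPU workers with an assumed 250 GB disk each:
    # CPU allows 100, IPs allow 200, disk allows 200, so CPU is the binding limit.
    print(max_workers_within_quota(ComputeQuota(), vcpus_per_worker=2, disk_gb_per_worker=250))
```

With these numbers the CPU quota is the binding limit, which is why raising only the disk or IP quotas would not let Dataflow add workers.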

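Required roles

To get the permissions that you need to import a database, ask your administrator to grant you the following IAM roles on the Dataflow worker service account:

- Cloud Spanner Viewer (roles/spanner.viewer)

- Dataflow Worker (roles/dataflow.worker)

- Storage Admin (roles/storage.admin)

- Spanner Database Reader (roles/spanner.databaseReader)

- Database Admin (roles/spanner.databaseAdmin)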
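A sketch of what the administrator's grants could look like with the gcloud CLI. It assumes project-level bindings are acceptable in your organization and that the job runs as the Compute Engine default service account (`PROJECT_NUMBER-compute@developer.gserviceaccount.com`). The project ID and project number are hypothetical, and the code only builds the commands.

```python
import shlex

# The five roles listed above.
IMPORT_ROLES = [
    "roles/spanner.viewer",
    "roles/dataflow.worker",
    "roles/storage.admin",
    "roles/spanner.databaseReader",
    "roles/spanner.databaseAdmin",
]


def default_worker_service_account(project_number: str) -> str:
    """Compute Engine default service account (assumed to be the Dataflow worker account)."""
    return f"{project_number}-compute@developer.gserviceaccount.com"


def grant_commands(project_id: str, service_account: str) -> list[list[str]]:
    """One `gcloud projects add-iam-policy-binding` argv per required role."""
    return [
        [
            "gcloud", "projects", "add-iam-policy-binding", project_id,
            f"--member=serviceAccount:{service_account}",
            f"--role={role}",
        ]
        for role in IMPORT_ROLES
    ]


if __name__ == "__main__":
    account = default_worker_service_account("123456789012")  # hypothetical number
    for argv in grant_commands("my-project", account):
        print(shlex.join(argv))
```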

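Optional: Find your database folder in Cloud Storage

To find the folder that contains your exported database in the Google Cloud console, go to the Cloud Storage browser and click the bucket that contains the exported folder.

The name of the folder that contains your exported data begins with your instance's ID, followed by the database name and the timestamp of your export job. The folder contains: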

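- A spanner-export.json file.

- A TableName-manifest.json file for each table in the database you exported.

- One or more TableName.avro-#####-of-##### files. The first number in the extension .avro-#####-of-##### represents the index of the Avro file, starting at zero, and the second represents the number of Avro files generated for each table. For example, Songs.avro-00001-of-00002 is the second of two files that contain the data for the Songs table.

- A ChangeStreamName-manifest.json file for each change stream in the database you exported.

- A ChangeStreamName.avro-00000-of-00001 file for each change stream. This file contains empty data with only the Avro schema of the change stream.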
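The .avro-#####-of-##### naming scheme makes it possible to check that an export folder is complete before you start an import. A minimal sketch, assuming you already have the object names (for example, from a bucket listing) as plain file names without the folder prefix; the function names are hypothetical.

```python
import re
from collections import defaultdict

# <name>.avro-<index>-of-<total>, e.g. Songs.avro-00001-of-00002
AVRO_DATA_FILE = re.compile(r"^(?P<name>.+)\.avro-(?P<index>\d+)-of-(?P<total>\d+)$")


def parse_avro_name(filename: str) -> tuple[str, int, int]:
    """Split an exported Avro file name into (table or change stream, index, total)."""
    match = AVRO_DATA_FILE.match(filename)
    if match is None:
        raise ValueError(f"not an exported Avro data file: {filename!r}")
    return match["name"], int(match["index"]), int(match["total"])


def missing_shards(filenames: list[str]) -> dict[str, list[int]]:
    """Map each table or change stream with gaps to its missing zero-based indexes."""
    seen: dict[str, set[int]] = defaultdict(set)
    totals: dict[str, int] = {}
    for filename in filenames:
        if not AVRO_DATA_FILE.match(filename):
            continue  # spanner-export.json and *-manifest.json files
        name, index, total = parse_avro_name(filename)
        seen[name].add(index)
        totals[name] = total
    result = {}
    for name, indexes in seen.items():
        missing = sorted(set(range(totals[name])) - indexes)
        if missing:
            result[name] = missing
    return result


if __name__ == "__main__":
    files = [
        "spanner-export.json",
        "Songs-manifest.json",
        "Songs.avro-00001-of-00002",
    ]
    print(parse_avro_name("Songs.avro-00001-of-00002"))  # ('Songs', 1, 2)
    print(missing_shards(files))  # {'Songs': [0]}
```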

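Import a database

To import your Spanner database from Cloud Storage to your instance, follow these steps:

Go to the Spanner Instances page.

Click the name of the instance that will contain the imported database.

Click the Import/Export menu item in the left pane, then click the Import button.

Under Choose a source folder, click Browse.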

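Find the bucket that contains your export in the initial list, or click Search to filter the list and find the bucket. Double-click the bucket to see the folders it contains.

Find the folder with your exported files and click to select it.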

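Click Select.

Enter a name for the new database that Spanner creates during the import. The name cannot already exist in your instance.

Choose the dialect for the new database (GoogleSQL or PostgreSQL).

(Optional) To protect the new database with a customer-managed encryption key, click Show encryption options and select Use a customer-managed encryption key (CMEK). Then select a key from the drop-down list.

Select a region in the Choose a region for the import job drop-down menu.

(Optional) To encrypt the Dataflow pipeline state with a customer-managed encryption key, click Show encryption options and select Use a customer-managed encryption key (CMEK). Then select a key from the drop-down list.

Select the checkbox under Confirm charges to acknowledge that there are charges in addition to those incurred by your existing Spanner instance.

Click Import.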

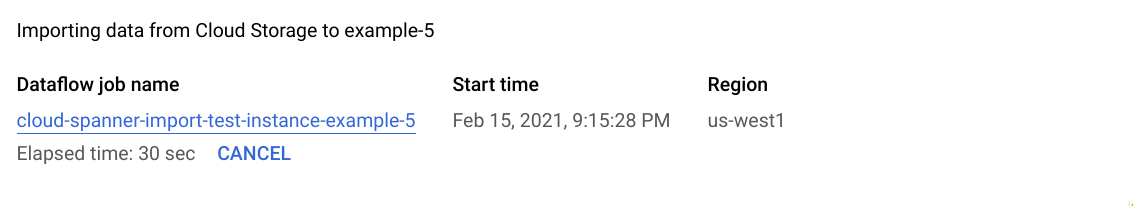

Google Cloud 控制台會顯示「Database details」(資料庫詳細資料) 頁面,此頁面會出現匯入工作的說明方塊,包括工作的經過時間:

When the job finishes or terminates, the Google Cloud console displays a message on the Database details page. If the job succeeds, a success message appears:

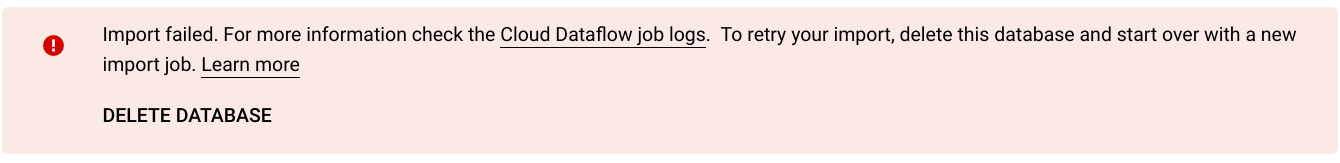

If the job does not succeed, a failure message appears.

If your job fails, check the job's Dataflow logs for error details and see Troubleshoot failed import jobs.

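Notes on importing generated columns and change streams

Spanner uses the definition of each generated column in the Avro schema to recreate that column. Spanner computes generated column values automatically during the import.

Similarly, Spanner uses the definition of each change stream in the Avro schema to recreate it during the import. Change stream data is neither exported nor imported through Avro, so all change streams associated with a freshly imported database have no change data records.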

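Notes on importing sequences

Each sequence (GoogleSQL, PostgreSQL) that Spanner exports uses the GET_INTERNAL_SEQUENCE_STATE() (GoogleSQL, PostgreSQL) function to capture its current state. Spanner adds a buffer of 1000 to the counter and writes the new counter value to the properties of the record field. This is only a best-effort approach to avoiding duplicate value errors after import. If there are more writes to the source database during the data export, adjust the actual sequence counter.

On import, the sequence starts from this new counter instead of the counter found in the schema. If needed, you can use the ALTER SEQUENCE (GoogleSQL, PostgreSQL) statement to update to a new counter.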
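The buffer arithmetic can be made concrete. This sketch assumes you read the source sequence's internal state again with GET_INTERNAL_SEQUENCE_STATE() after the export finishes, and that the GoogleSQL form `ALTER SEQUENCE ... SET OPTIONS (start_with_counter = N)` is how you restart the counter. `OrderIdSequence` and the numbers are hypothetical, and adding another 1000 as a margin is an arbitrary choice made here.

```python
from typing import Optional

SEQUENCE_BUFFER = 1000  # buffer Spanner adds to the exported counter


def imported_start_counter(exported_state: int) -> int:
    """Counter the imported sequence starts from: exported state plus the buffer."""
    return exported_state + SEQUENCE_BUFFER


def restart_statement(
    sequence_name: str, exported_state: int, latest_source_state: int
) -> Optional[str]:
    """Return GoogleSQL DDL to move the counter forward, or None if the buffer sufficed."""
    if latest_source_state < imported_start_counter(exported_state):
        return None  # source writes during export stayed within the buffer
    new_start = latest_source_state + SEQUENCE_BUFFER  # arbitrary extra margin
    return (
        f"ALTER SEQUENCE {sequence_name} "
        f"SET OPTIONS (start_with_counter = {new_start})"
    )


if __name__ == "__main__":
    # Hypothetical: state 5000 at export time, 7000 after heavy writes during export.
    print(restart_statement("OrderIdSequence", 5000, 7000))
```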

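Notes on importing interleaved tables and foreign keys

The Dataflow job can import interleaved tables, which lets you retain the parent-child relationships from your source files. However, foreign key constraints are not enforced during data loading. The Dataflow job creates all necessary foreign keys after the data load is complete.

If you have foreign key constraints on the Spanner database before the import starts, you might encounter write errors due to referential integrity violations. To avoid write errors, consider dropping any existing foreign keys before you start the import process.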
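If the target database does have foreign keys, the drop statements can be generated from `INFORMATION_SCHEMA`. A sketch for a GoogleSQL database, assuming all foreign keys live in the default schema (`TABLE_SCHEMA = ''`). Running the query and applying the DDL with your client of choice is left out, and the table and constraint names are hypothetical.

```python
# GoogleSQL query that lists foreign key constraints in the default schema.
FOREIGN_KEYS_QUERY = """
SELECT TABLE_NAME, CONSTRAINT_NAME
FROM INFORMATION_SCHEMA.TABLE_CONSTRAINTS
WHERE CONSTRAINT_TYPE = 'FOREIGN KEY' AND TABLE_SCHEMA = ''
"""


def drop_foreign_key_ddl(rows: list[tuple[str, str]]) -> list[str]:
    """Turn (table, constraint) rows from FOREIGN_KEYS_QUERY into DROP statements."""
    return [f"ALTER TABLE {table} DROP CONSTRAINT {constraint}" for table, constraint in rows]


if __name__ == "__main__":
    rows = [("Orders", "FK_CustomerOrder")]  # hypothetical query result
    for statement in drop_foreign_key_ddl(rows):
        print(statement)
```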

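Choose a region for your import job

You might want to choose a different region based on the location of your Cloud Storage bucket. To avoid outbound data transfer charges, choose a region that matches your Cloud Storage bucket's location.

If your Cloud Storage bucket location is a region, you can take advantage of free network usage by choosing the same region for your import job, assuming that region is available.

If your Cloud Storage bucket is in a dual-region, you can take advantage of free network usage by choosing one of the two regions that make up the dual-region for your import job, assuming one of them is available.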
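The two rules above can be expressed as a small lookup. This sketch assumes an illustrative subset of predefined dual-regions (nam4, eur4, asia1) and their constituent regions, which you should verify against the current Cloud Storage locations list. Configurable dual-regions and multi-regions are not covered by the rules above, so the function returns None for them; `available_regions` is whatever set of Dataflow regions you consider usable.

```python
from typing import Optional

# Illustrative subset of predefined dual-regions and their constituent regions.
# Assumed mapping: check the Cloud Storage bucket locations list before relying on it.
PREDEFINED_DUAL_REGIONS = {
    "nam4": ("us-central1", "us-east1"),
    "eur4": ("europe-north1", "europe-west4"),
    "asia1": ("asia-northeast1", "asia-northeast2"),
}


def choose_import_region(bucket_location: str, available_regions: set[str]) -> Optional[str]:
    """Pick a Dataflow region that matches the bucket location, or None if none fits."""
    location = bucket_location.lower()  # bucket locations are often reported in upper case
    candidates = PREDEFINED_DUAL_REGIONS.get(location, (location,))
    for region in candidates:
        if region in available_regions:
            return region
    return None  # e.g. a multi-region such as "us", or no constituent region available


if __name__ == "__main__":
    usable = {"us-central1", "us-east1", "europe-west4"}
    print(choose_import_region("US-CENTRAL1", usable))  # us-central1
    print(choose_import_region("EUR4", usable))  # europe-west4
    print(choose_import_region("US", usable))  # None
```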

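View or troubleshoot jobs in the Dataflow UI

After you start an import job, you can view details of the job, including logs, in the Dataflow section of the Google Cloud console.

View Dataflow job details

To see details for any import or export jobs that you ran within the last week, including any jobs that are currently running:

- Go to the Database overview page for the database.

- Click the Import/Export menu item in the left pane. The database Import/Export page displays a list of recent jobs.

- On the database Import/Export page, click the job name in the Dataflow job name column.

The Google Cloud console displays details of the Dataflow job.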

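To view a job that you ran more than one week ago:

Go to the Dataflow jobs page in the Google Cloud console.

Find your job in the list, then click its name.

The Google Cloud console displays details of the Dataflow job.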

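View Dataflow logs for your job

To view a Dataflow job's logs, go to the job's details page, then click Logs to the right of the job's name.

If a job fails, look for errors in the logs. If there are errors, the error count is displayed next to Logs.

To view job errors:

Click the error count next to Logs.

The Google Cloud console displays the job's logs. You might need to scroll to see the errors.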

Locate entries with the error icon. Click an individual log entry to expand its contents.
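The same error entries can also be pulled from the command line. This sketch builds a Cloud Logging filter for a job's error-level logs and a `gcloud logging read` command around it. It assumes Dataflow job logs use the `dataflow_step` resource type with a `job_id` label; the job ID and project ID shown are hypothetical.

```python
import shlex


def dataflow_error_filter(job_id: str) -> str:
    """Cloud Logging filter for ERROR-or-worse entries from one Dataflow job."""
    return (
        'resource.type="dataflow_step" '
        f'AND resource.labels.job_id="{job_id}" '
        "AND severity>=ERROR"
    )


def read_errors_command(job_id: str, project_id: str, limit: int = 50) -> list[str]:
    """argv for `gcloud logging read` using the filter above."""
    return [
        "gcloud", "logging", "read", dataflow_error_filter(job_id),
        f"--project={project_id}", f"--limit={limit}",
    ]


if __name__ == "__main__":
    print(shlex.join(read_errors_command("2024-01-01_00_00_00-1234567890", "my-project")))
```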

For more information about troubleshooting Dataflow jobs, see Troubleshoot your pipeline.

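Troubleshoot failed import jobs

If you see the following errors in your job logs:

com.google.cloud.spanner.SpannerException: NOT_FOUND: Session not found

or

com.google.cloud.spanner.SpannerException: DEADLINE_EXCEEDED: Deadline expired before operation could complete.

Check the 99% write latency in the Monitoring tab of your Spanner database in the Google Cloud console. If it shows high values (multiple seconds), the instance is overloaded, causing writes to time out and fail.

One cause of high latency is that the Dataflow job is running with too many workers, putting too much load on the Spanner instance.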

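To limit the number of Dataflow workers, don't use the Import/Export tab on the instance details page of your Spanner database in the Google Cloud console. Instead, start the import with the Dataflow Cloud Storage Avro to Spanner template and specify the maximum number of workers as follows.

Console

If you are using the Dataflow console, the Max workers parameter is in the Optional parameters section of the Create job from template page.

gcloud

Run the gcloud dataflow jobs run command and specify the max-workers argument. For example: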

```shell
gcloud dataflow jobs run my-import-job \
  --gcs-location='gs://dataflow-templates/latest/GCS_Avro_to_Cloud_Spanner' \
  --region=us-central1 \
  --parameters='instanceId=test-instance,databaseId=example-db,inputDir=gs://my-gcs-bucket' \
  --max-workers=10 \
  --network=network-123
```
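When you launch imports repeatedly, it can help to build this command programmatically so that the worker cap is always set. A minimal Python sketch that assembles the same `gcloud dataflow jobs run` arguments as the example above. The job, instance, database, bucket, and network names are the example's placeholders, and passing the list to `subprocess.run(argv, check=True)` is one assumed way to execute it.

```python
import shlex
from typing import Optional

TEMPLATE = "gs://dataflow-templates/latest/GCS_Avro_to_Cloud_Spanner"


def import_job_command(
    job_name: str,
    region: str,
    instance_id: str,
    database_id: str,
    input_dir: str,
    max_workers: int,
    network: Optional[str] = None,
) -> list[str]:
    """argv for launching the Avro-to-Spanner template with a worker cap."""
    if max_workers < 1:
        raise ValueError("max_workers must be at least 1")
    parameters = f"instanceId={instance_id},databaseId={database_id},inputDir={input_dir}"
    argv = [
        "gcloud", "dataflow", "jobs", "run", job_name,
        f"--gcs-location={TEMPLATE}",
        f"--region={region}",
        f"--parameters={parameters}",
        f"--max-workers={max_workers}",
    ]
    if network is not None:
        argv.append(f"--network={network}")
    return argv


if __name__ == "__main__":
    argv = import_job_command(
        "my-import-job", "us-central1", "test-instance", "example-db",
        "gs://my-gcs-bucket", max_workers=10, network="network-123",
    )
    print(shlex.join(argv))
```

Because the arguments are passed as a list rather than a shell string, the quoting used in the shell example is not needed.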

Troubleshoot network errors

The following error might occur when you import your Spanner databases:

Workflow failed. Causes: Error: Message: Invalid value for field 'resource.properties.networkInterfaces[0].subnetwork': ''. Network interface must specify a subnet if the network resource is in custom subnet mode. HTTP Code: 400

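This error occurs because Spanner assumes that you intend to use an auto mode VPC network named default in the same project as the Dataflow job. If you don't have a default VPC network in the project, or if your VPC network is a custom mode VPC network, you must create a Dataflow job and specify an alternate network or subnetwork.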
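The `gcloud dataflow jobs run` command accepts `--network` and `--subnetwork` flags for this case. A sketch that formats the subnetwork value, assuming Dataflow's two accepted forms: the short `regions/REGION/subnetworks/SUBNETWORK` path, or the full `https://www.googleapis.com/compute/v1/projects/HOST_PROJECT/...` URL used for a Shared VPC host project. All names are hypothetical, and the region should match the job's `--region`.

```python
from typing import Optional


def subnetwork_flag(region: str, subnetwork: str, host_project: Optional[str] = None) -> str:
    """Return a --subnetwork argument; use the full URL for a Shared VPC host project."""
    if host_project is None:
        path = f"regions/{region}/subnetworks/{subnetwork}"
    else:
        path = (
            "https://www.googleapis.com/compute/v1/"
            f"projects/{host_project}/regions/{region}/subnetworks/{subnetwork}"
        )
    return f"--subnetwork={path}"


if __name__ == "__main__":
    print(subnetwork_flag("us-central1", "my-subnet"))
    print(subnetwork_flag("us-central1", "my-subnet", host_project="my-host-project"))
```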

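Optimize slow-running import jobs

If you have followed the suggestions in the initial settings, you should generally not have to make any other adjustments. If your job is running slowly, there are a few other optimizations you can try:

Optimize the job and data location: Run your Dataflow job in the same region where your Spanner instance and Cloud Storage bucket are located.

Ensure sufficient Dataflow resources: If the relevant Compute Engine quotas limit your Dataflow job's resources, the job's page in the Dataflow section of the Google Cloud console displays a warning icon and log messages.

In this situation, increasing the quotas for CPUs, in-use IP addresses, and standard persistent disk might shorten the run time of the job, but you might incur more Compute Engine charges.

Check the Spanner CPU utilization: If you see that the CPU utilization for the instance is over 65%, you can increase the compute capacity in that instance. The added capacity provides more Spanner resources and should speed up the job, but you incur more Spanner charges.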

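Factors affecting import job performance

Several factors influence the time it takes to complete an import job.

Spanner database size: Processing more data takes more time and resources.

Spanner database schema, including: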

- Number of tables

- Size of the rows

- Number of secondary indexes

- Number of foreign keys

- Number of change streams

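Note that index and foreign key creation continues after the Dataflow import job completes. Change streams are created before the import job completes, but only after all the data is imported.

Data location: Data is transferred between Spanner and Cloud Storage using Dataflow. Ideally, all three components are located in the same region. If they are not, moving the data across regions slows the job down.

Number of Dataflow workers: Optimal Dataflow workers are necessary for good performance. By using autoscaling, Dataflow chooses the number of workers for the job depending on the amount of work that needs to be done. The number of workers is, however, capped by the quotas for CPUs, in-use IP addresses, and standard persistent disk. The Dataflow UI displays a warning icon when the worker count reaches a quota cap; in this situation, progress is slower, but the job should still complete. When there is a large amount of data to import, autoscaling can overload Spanner, leading to errors.

Existing load on Spanner: An import job adds significant CPU load on a Spanner instance. If the instance already has a substantial existing load, the job runs more slowly.

Amount of Spanner compute capacity: If the CPU utilization for the instance is over 65%, the job runs more slowly.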

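Tune workers for good import performance

When starting a Spanner import job, you must set the number of Dataflow workers to an optimal value for good performance. Too many workers overload Spanner, and too few workers result in poor import performance.

The maximum number of workers depends heavily on the data size, but ideally, total Spanner CPU utilization should be between 70% and 90%. This provides a good balance between Spanner efficiency and error-free job completion.

To achieve that utilization target in most schemas and scenarios, we recommend setting the maximum number of worker vCPUs to between 4 and 6 times the number of Spanner nodes.

For example, for a 10-node Spanner instance using n1-standard-2 workers, you would set max workers to 25, giving 50 vCPUs.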
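The 4x to 6x rule is simple arithmetic. A sketch that reproduces the 10-node example; the function names are hypothetical, you supply the vCPU count per machine type (2 for n1-standard-2), and rounding down is a choice made here so the cap stays at or under the target rather than over it.

```python
import math


def recommended_max_workers(spanner_nodes: int, vcpus_per_worker: int,
                            multiplier: float = 5.0) -> int:
    """Max workers so that total worker vCPUs is about `multiplier` x Spanner nodes."""
    if not 4 <= multiplier <= 6:
        raise ValueError("multiplier should be within the recommended 4-6x range")
    target_vcpus = spanner_nodes * multiplier
    return max(1, math.floor(target_vcpus / vcpus_per_worker))


def worker_range(spanner_nodes: int, vcpus_per_worker: int) -> tuple[int, int]:
    """Worker counts at the low (4x) and high (6x) ends of the recommendation."""
    return (
        recommended_max_workers(spanner_nodes, vcpus_per_worker, 4),
        recommended_max_workers(spanner_nodes, vcpus_per_worker, 6),
    )


if __name__ == "__main__":
    # 10 nodes with n1-standard-2 (2 vCPUs): 5x gives 25 workers, 50 vCPUs.
    print(recommended_max_workers(10, 2))  # 25
    print(worker_range(10, 2))  # (20, 30)
```

The resulting value is what you would pass as max-workers when starting the template.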