Use the Bigtable change stream to BigQuery template

In this quickstart, you learn how to set up a Bigtable table with a change stream enabled, run a change stream pipeline, make changes to your table, and then see the changes streamed.

Before you begin

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role. You can select any project that you've been granted a role on.
  - Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Verify that billing is enabled for your Google Cloud project.

- Enable the Dataflow, Cloud Bigtable API, Cloud Bigtable Admin API, and BigQuery APIs.

  Roles required to enable APIs

  To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.

- In the Google Cloud console, activate Cloud Shell.
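Once Cloud Shell is active, the API-enablement step can likely be done from the command line instead of the console. The following is a minimal sketch, assuming the gcloud CLI is installed and authenticated. The function name `enable_quickstart_apis` is a hypothetical helper introduced here for illustration and is not part of the original guide.

```shell
# Hypothetical helper (not from the original guide): enables the four
# services this quickstart relies on in the given project.
enable_quickstart_apis() {
  local project_id="$1"
  gcloud services enable \
    dataflow.googleapis.com \
    bigtable.googleapis.com \
    bigtableadmin.googleapis.com \
    bigquery.googleapis.com \
    --project="$project_id"
}

# Usage (replace PROJECT_ID with your own project ID):
#   enable_quickstart_apis PROJECT_ID
```

Defining a function first means nothing runs until you call it with your own project ID.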

Create a BigQuery dataset

Use the Google Cloud console to create a dataset that stores the data.

In the Google Cloud console, go to the BigQuery page.

In the Explorer pane, click your project name.

Expand the Actions option and click Create dataset.

On the Create dataset page, do the following:

- For Dataset ID, enter bigtable_bigquery_quickstart.
- Leave the remaining default settings as they are, and click Create dataset.
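As an alternative to the console steps above, the dataset can probably be created with the bq command-line tool. This sketch assumes bq is available (it is preinstalled in Cloud Shell) and that the default dataset location is acceptable. `create_quickstart_dataset` is a hypothetical helper name.

```shell
# Hypothetical helper: creates the quickstart dataset with default settings.
create_quickstart_dataset() {
  local project_id="$1"
  bq mk --dataset "${project_id}:bigtable_bigquery_quickstart"
}

# Usage (replace PROJECT_ID with your own project ID):
#   create_quickstart_dataset PROJECT_ID
```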


Create a table with a change stream enabled

In the Google Cloud console, go to the Bigtable Instances page.

Click the ID of the instance that you're using for this quickstart.

If you don't have an instance available, create one with the default configurations in a region near you.

In the left navigation pane, click Tables.

Click Create a table.

Name the table bigquery-changestream-quickstart.

Add a column family named cf.

Select Enable change stream.

Click Create.
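The same table can likely be created from Cloud Shell. This is a sketch under stated assumptions: the one-day retention period (`1d`) is a value chosen here for illustration, since the guide does not specify one, and `create_changestream_table` is a hypothetical helper name.

```shell
# Hypothetical helper: creates the quickstart table with column family "cf"
# and a change stream. The 1d retention period is an assumption; the
# original guide does not state a value.
create_changestream_table() {
  local project_id="$1" instance_id="$2"
  gcloud bigtable instances tables create bigquery-changestream-quickstart \
    --project="$project_id" \
    --instance="$instance_id" \
    --column-families=cf \
    --change-stream-retention-period=1d
}

# Usage:
#   create_changestream_table PROJECT_ID BIGTABLE_INSTANCE_ID
```

Setting a retention period is what enables the change stream, which mirrors how the cleanup step later disables it with `--clear-change-stream-retention-period`.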

On the Bigtable Tables page, find your table bigquery-changestream-quickstart.

In the Change stream column, click Connect.

In the dialog, select BigQuery.

Click Create Dataflow job.

In the provided parameter fields, enter your parameter values. You don't need to provide any optional parameters.

- Set the Bigtable application profile ID to default.
- Set the BigQuery dataset to bigtable_bigquery_quickstart.


Click Run job.

Wait until the job status is Starting or Running before proceeding. This takes about 5 minutes after the job is queued.

Keep the job open in a tab so that you can stop it when you clean up your resources.
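For readers who want to launch the pipeline without the console, the job can probably be started with `gcloud dataflow flex-template run`. Treat the following as a sketch rather than a definitive command: the job name `bigtable-bigquery-quickstart`, the `latest` template version, and the helper name `run_changestream_pipeline` are assumptions made here. The template path and parameter names are written as best recalled from the template reference, so verify them against it before running.

```shell
# Hypothetical helper: launches the change-stream-to-BigQuery template with
# the same two values the console steps set (app profile and dataset).
# Job name, template version, and parameter names are assumptions to verify.
run_changestream_pipeline() {
  local project_id="$1" instance_id="$2" region="$3"
  local params="bigtableReadInstanceId=${instance_id}"
  params="${params},bigtableReadTableId=bigquery-changestream-quickstart"
  params="${params},bigtableChangeStreamAppProfile=default"
  params="${params},bigQueryDataset=bigtable_bigquery_quickstart"
  gcloud dataflow flex-template run bigtable-bigquery-quickstart \
    --project="$project_id" \
    --region="$region" \
    --template-file-gcs-location="gs://dataflow-templates-${region}/latest/flex/Bigtable_Change_Streams_to_BigQuery" \
    --parameters="$params"
}

# Usage:
#   run_changestream_pipeline PROJECT_ID BIGTABLE_INSTANCE_ID REGION
```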

Write some data to Bigtable

In Cloud Shell, write a few rows to Bigtable so that the change log can write some data to BigQuery. As long as you write the data after the job is created, the changes appear. You don't have to wait for the job status to become running.

    cbt -instance=BIGTABLE_INSTANCE_ID -project=PROJECT_ID \
        set bigquery-changestream-quickstart user123 cf:col1=abc
    cbt -instance=BIGTABLE_INSTANCE_ID -project=PROJECT_ID \
        set bigquery-changestream-quickstart user546 cf:col1=def
    cbt -instance=BIGTABLE_INSTANCE_ID -project=PROJECT_ID \
        set bigquery-changestream-quickstart user789 cf:col1=ghi

Replace the following:

- PROJECT_ID: the ID of the project that you're using
- BIGTABLE_INSTANCE_ID: the ID of the instance that contains the bigquery-changestream-quickstart table
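Before checking BigQuery, it can help to confirm that the three rows actually landed in Bigtable. A minimal sketch using the `cbt read` command follows. `read_quickstart_rows` is a hypothetical helper name, and the placeholders have the same meaning as above.

```shell
# Hypothetical helper: prints every row currently in the quickstart table.
read_quickstart_rows() {
  local project_id="$1" instance_id="$2"
  cbt -instance="$instance_id" -project="$project_id" \
    read bigquery-changestream-quickstart
}

# Usage:
#   read_quickstart_rows PROJECT_ID BIGTABLE_INSTANCE_ID
```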

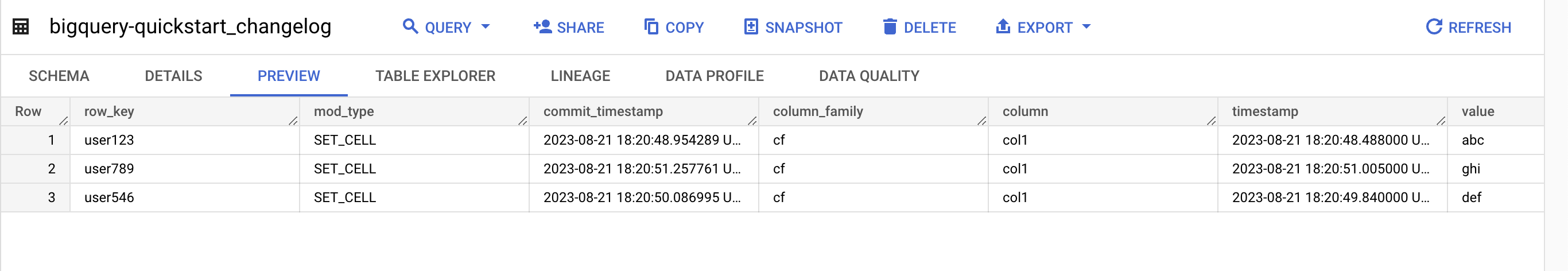

View the change logs in BigQuery

In the Google Cloud console, go to the BigQuery page.

In the Explorer pane, expand your project and the dataset bigtable_bigquery_quickstart.

Click the table bigquery-changestream-quickstart_changelog.

To see the change log, click Preview.
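A rough command-line equivalent of the Preview tab is `bq head`, which prints the first rows of a table without running a query. This sketch assumes the changelog table name shown above. The row limit of 10 and the helper name `preview_changelog` are choices made here for illustration.

```shell
# Hypothetical helper: shows up to 10 rows of the changelog table.
preview_changelog() {
  local project_id="$1"
  bq head --max_rows=10 \
    "${project_id}:bigtable_bigquery_quickstart.bigquery-changestream-quickstart_changelog"
}

# Usage (replace PROJECT_ID with your own project ID):
#   preview_changelog PROJECT_ID
```

If the table is not listed yet, the pipeline may still be starting, so retrying after a few minutes is reasonable.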

Clean up

To avoid incurring charges to your Google Cloud account for the resources used on this page, follow these steps.

Disable the change stream on the table:

    gcloud bigtable instances tables update bigquery-changestream-quickstart \
        --project=PROJECT_ID --instance=BIGTABLE_INSTANCE_ID \
        --clear-change-stream-retention-period

Delete the table bigquery-changestream-quickstart:

    cbt --instance=BIGTABLE_INSTANCE_ID --project=PROJECT_ID deletetable bigquery-changestream-quickstart

Stop the change stream pipeline:

In the Google Cloud console, go to the Dataflow Jobs page.

Select your streaming job from the job list.

In the navigation, click Stop.

In the Stop job dialog, select Cancel, and then click Stop job.
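The pipeline can probably also be cancelled from Cloud Shell. This sketch assumes you know the job's region and ID. `cancel_pipeline_job` is a hypothetical helper name, and the job ID can be looked up with `gcloud dataflow jobs list`.

```shell
# Hypothetical helper: cancels a Dataflow job by ID, matching the
# "Cancel" option in the console's Stop job dialog.
cancel_pipeline_job() {
  local project_id="$1" region="$2" job_id="$3"
  gcloud dataflow jobs cancel "$job_id" \
    --project="$project_id" \
    --region="$region"
}

# To find the job ID first:
#   gcloud dataflow jobs list --project=PROJECT_ID --region=REGION --status=active
# Usage:
#   cancel_pipeline_job PROJECT_ID REGION JOB_ID
```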

Delete the BigQuery dataset:

In the Google Cloud console, go to the BigQuery page.

In the Explorer pane, find the dataset bigtable_bigquery_quickstart and click it.

Click Delete, type delete, and then click Delete to confirm.
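The dataset deletion above can be sketched with `bq rm` as well. The `--recursive` flag removes the tables inside the dataset along with it. The force flag is deliberately left out here so that bq still asks for confirmation, similar to typing delete in the console. `delete_quickstart_dataset` is a hypothetical helper name.

```shell
# Hypothetical helper: deletes the quickstart dataset and every table in it.
# bq prompts for confirmation because no force flag is passed.
delete_quickstart_dataset() {
  local project_id="$1"
  bq rm --recursive --dataset "${project_id}:bigtable_bigquery_quickstart"
}

# Usage (replace PROJECT_ID with your own project ID):
#   delete_quickstart_dataset PROJECT_ID
```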

Optional: Delete the instance if you created a new one for this quickstart:

    cbt deleteinstance BIGTABLE_INSTANCE_ID

What's next