This tutorial shows how to onboard a new application, develop a feature for the application, and deploy the application to production using modern continuous integration/continuous delivery (CI/CD) techniques with Google Kubernetes Engine (GKE).

This document is part of a series:

- Modern CI/CD with GKE: A software delivery framework

- Modern CI/CD with GKE: Build a CI/CD system (reference architecture)

- Modern CI/CD with GKE: Apply the developer workflow (this document)

In this tutorial, you use tools such as Skaffold, kustomize, Artifact Registry, Config Sync, Cloud Build, and Cloud Deploy to develop, build, and deploy your application.

This document is intended for enterprise architects and application developers, as well as IT security, DevOps, and Site Reliability Engineering (SRE) teams. Some experience with automated deployment tools and processes is useful for understanding the concepts in this document.

Architecture

In this tutorial, you onboard a new application. Then, you develop a new feature and deploy the application in dev, staging, and production environments. The reference architecture contains the infrastructure and tools needed to onboard and release a new application with the workflow shown in the following diagram:

Starting at the code repository for CI, the workflow includes the following steps:

You share your application source code through your application repositories.

When you commit and push the code to the application repository, it automatically triggers a CI pipeline in Cloud Build. The CI process creates and pushes a container image to Artifact Registry.

The CI process also creates a CD release for the application in Cloud Deploy.

The CD release generates fully rendered Kubernetes manifests for dev using skaffold and deploys them in the dev GKE cluster.

The CD release is then promoted from dev to a staging target, which generates fully rendered staging manifests and deploys them in the staging GKE cluster.

Then, the CD release is promoted from staging to production, which generates fully rendered production manifests and deploys them in the production GKE clusters.

For more information about the tools and infrastructure used in this workflow, see Modern CI/CD with GKE: Build a CI/CD system.

Objectives

Onboard a new application.

Deploy the application in the dev environment.

Develop a new feature and deploy it in the dev environment.

Promote the new feature to staging and then release it into production.

Test the resiliency of the application.

Costs

In this document, you use the following billable components of Google Cloud:

To generate a cost estimate based on your projected usage, use the pricing calculator.

When you finish the tasks that are described in this document, you can avoid continued billing by deleting the resources that you created. For more information, see Clean up.

Before you begin

- For this tutorial, deploy the reference architecture in this series.

Prepare your environment

If you're continuing directly from Modern CI/CD with GKE: Build a CI/CD system, go to the next section. However, if you have a new session, or your session expired, open Cloud Shell and set the project where you installed the reference architecture infrastructure:

gcloud config set core/project PROJECT_ID

Replace PROJECT_ID with your Google Cloud project ID.

Onboard a new application

The reference architecture contains an application factory. This factory consists of a Git repository named application-factory-repo and the following Cloud Build triggers:

- create-app

- tf-plan

- tf-apply

- create-team

You use the application factory to onboard a new application from starter repositories. Application onboarding consists of the following steps:

Create the application definition: You create the application definition in a Terraform file and store it in application-factory-repo, which acts as the application catalog.

Create the application infrastructure: You run Terraform on the application definition file to create the application infrastructure. The application infrastructure consists of the following:

- A landing zone for the new application, which includes the namespace, service account, and base policies defined in the acm-gke-infrastructure-repo repository. When you onboard a new application, the landing zone is created only in the dev GKE cluster. This unblocks developers so that they can use the dev environment and start iterating on it. The landing zones in the staging and production clusters are created with the GitOps approach, which is demonstrated later in this document when you are ready to promote the release to those clusters.

- An infrastructure repository, created from the infrastructure starter repository, that hosts the code to create the CI pipeline in Cloud Build, the CD pipeline in Cloud Deploy, and the Artifact Registry repository for storing artifacts.

- An infrastructure Cloud Build trigger that takes the code in the infrastructure repository and creates the resources based on their definition.

- An application repository, created from the application starter repository, that hosts the application's source code.

Create the application's CI/CD resources: You use the application infrastructure to create CI/CD resources for the application.

Create application definition:

Run the create-app trigger to generate an application definition file in application-factory-repo. The definition file contains the declarative definition of the resources required to create an application.

In the Google Cloud console, go to the Cloud Build page:

Click the create-app trigger.

Click SHOW URL PREVIEW to display the URL required to invoke the webhook.

In Cloud Shell, invoke the trigger by making a curl request on the URL obtained from the previous step and passing the parameters as a payload to it.

curl "WEBHOOK_URL" -d '{"message": {"app": "sample","runtime": "python","trigger_type": "webhook","github_team": ""}}'

In the previous code sample:

- Replace WEBHOOK_URL with the URL obtained from the trigger.

- "app": "sample" specifies the name of the application.

- "runtime": "python" tells the application factory to use the Python template to create application repositories.

- "trigger_type": "webhook" specifies the type of CI/CD pipelines for the application.

- "github_team": "" is a team in GitHub that's going to be associated with the repositories that are created for the application. Because you haven't created a GitHub team yet, pass it as an empty string.
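If you onboard more than one application, it can help to build the payload from arguments instead of editing the JSON by hand. The following is a minimal sketch, not part of the reference architecture: the function name build_create_app_payload is hypothetical, and it assumes the payload fields are exactly the ones shown in the curl command above.

```shell
#!/bin/sh
# Hypothetical helper (not part of the reference architecture): print the JSON
# payload for the create-app webhook, using the same fields as the curl
# command in this tutorial.
# Arguments: APP RUNTIME [GITHUB_TEAM]; GITHUB_TEAM defaults to an empty string.
# Values are inserted verbatim, so they must not contain double quotes.
build_create_app_payload() {
  printf '{"message": {"app": "%s","runtime": "%s","trigger_type": "webhook","github_team": "%s"}}\n' \
    "$1" "$2" "${3:-}"
}

# Print the payload for the sample application used in this tutorial.
build_create_app_payload sample python
```

You could then pass the result to curl with -d "$(build_create_app_payload sample python)", where WEBHOOK_URL is still the URL obtained from the trigger.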

Check the pipeline for the create-app trigger:

Go to the Cloud Build History page.

There is a new pipeline for the create-app trigger. When it has completed, the application definition is created in application-factory-repo.

Review the application definition file:

In a web browser, go to GitHub and sign in to your account.

Click the picture icon and click Your organizations. Choose your organization.

Click the repository application-factory-repo, go to the folder apps/python, and open the new file named sample.tf created by the create-app trigger. Inspect the file, which contains Terraform code to create a new application.

Create application infrastructure:

Now that you've created the application definition, you run the trigger tf-apply to create the application infrastructure.

In the Google Cloud console:

Click the tf-apply trigger.

Click SHOW URL PREVIEW to display the URL required to invoke the webhook.

Invoke the trigger:

curl "WEBHOOK_URL" -d '{}'

In the previous code sample:

- Replace WEBHOOK_URL with the URL obtained from the trigger.

Check the pipeline for the tf-apply trigger:

Go to the Cloud Build History page.

There is a new pipeline for the tf-apply trigger. Wait for it to complete.

This trigger creates the application infrastructure.

Review the application infrastructure:

Review the various components of the application infrastructure.

Landing zone

Go to Cloud Shell and set the project.

gcloud config set core/project PROJECT_ID

Replace PROJECT_ID with your Google Cloud project ID.

Get credentials to the development GKE cluster.

gcloud container clusters get-credentials gke-dev-us-central1 --location us-central1-a

Check the namespace of the application. The namespace is named after the application, sample.

kubectl get namespaces sample

The output resembles the following:

NAME     STATUS   AGE
sample   Active   15m

Check the service account in the namespace.

kubectl get serviceaccounts -n sample

There is a service account in addition to the default. The output resembles the following:

NAME         SECRETS   AGE
default      0         15m
sample-ksa   0         15m

Infrastructure repository

In a web browser, go to GitHub and sign in to your account. Click the picture icon. Then click Your organizations. Choose your organization and click the sample-infra repository.

This repository has four branches: cicd-trigger, dev, staging, and prod. It also contains four folders: cicd-trigger, dev, staging, and prod. The default branch is cicd-trigger, and you can push code to it directly. The other branches have protection rules, so you can't push code directly to them; to change those branches, you need to create a pull request. The cicd-trigger folder has code to create CI/CD resources for the application, while the dev, staging, and prod folders have code to create infrastructure for the different environments of the application.

Infrastructure trigger

In the Google Cloud console:

There is a new trigger named deploy-infra-sample.

This trigger is connected to the repository sample-infra. When code is pushed to this repository, the trigger is invoked, identifies the branch where the push happened, goes to the corresponding folder on that branch, and runs Terraform there. For example, if the code is pushed to the cicd-trigger branch, the trigger runs Terraform on the cicd-trigger folder of the cicd-trigger branch. Similarly, when a push happens to the dev branch, the trigger runs Terraform on the dev folder of the dev branch, and so forth.
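The branch-to-folder mapping that the trigger applies can be sketched as a small shell function. This is only an illustration: the real logic lives in the trigger configuration, which this document doesn't show. The function name terraform_dir_for_branch is hypothetical, and the env/ prefix is an assumption inferred from the path env/cicd-trigger/main.tf that this tutorial edits in a later step.

```shell
#!/bin/sh
# Hypothetical sketch of the branch-to-folder mapping described above.
# Assumption: the environment folders live under env/ (inferred from the path
# env/cicd-trigger/main.tf); the real trigger configuration may differ.
terraform_dir_for_branch() {
  case "$1" in
    cicd-trigger|dev|staging|prod)
      printf 'env/%s\n' "$1"
      ;;
    *)
      echo "no Terraform folder for branch: $1" >&2
      return 1
      ;;
  esac
}

# Show the folder that would be used for each branch of sample-infra.
for branch in cicd-trigger dev staging prod; do
  printf '%s -> %s\n' "$branch" "$(terraform_dir_for_branch "$branch")"
done
```

Rejecting unknown branch names, instead of guessing a folder, mirrors the idea that only the four environment branches carry Terraform code.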

Application repository

- Go to GitHub and pull up the repositories under your organization. There is a new repository with the name sample. This repository hosts the source code, the steps to build containers in Dockerfile, kustomize configs that describe the needed configurations of the application, and skaffold.yaml, which defines the deployment steps to be used by Cloud Deploy for CD.

Create application CI/CD resources

Now that you've created the skeleton of the application, run the trigger deploy-infra-sample to create its CI/CD resources. You can either invoke the trigger manually using its webhook URL or by making a commit to the git repository sample-infra.

To invoke the Cloud Build trigger, add a new line in a file in the repository. Then, push the changes:

If you've never used Git in Cloud Shell, configure Git with your name and email address. Git uses this information to identify you as the author of the commits that you create in Cloud Shell:

git config --global user.email "GITHUB_EMAIL_ADDRESS"
git config --global user.name "GITHUB_USERNAME"

Replace the following:

- GITHUB_EMAIL_ADDRESS: the email address associated with your GitHub account

- GITHUB_USERNAME: the username associated with your GitHub account

Clone the git repository sample-infra:

git clone https://github.com/GITHUB_ORG/sample-infra
cd sample-infra

Replace GITHUB_ORG with your GitHub organization.

The default branch cicd-trigger is checked out.

Add a new line to the file env/cicd-trigger/main.tf:

echo "" >> env/cicd-trigger/main.tf

Commit and push the changes:

git add .
git commit -m "A dummy commit to invoke the infrastructure trigger"
git push
cd ..

As soon as the changes are pushed, the Cloud Build trigger deploy-infra-sample is started.

Monitor the status of the trigger:

Go to the Cloud Build History page to view the pipeline and wait for it to complete.

Review application CI/CD resources

Review the various CI/CD resources created for the application.

In the Google Cloud console:

Go to the Cloud Build page and view the deploy-app-sample trigger.

This is the CI pipeline trigger. It's connected to the application code repository sample. The trigger is invoked when a push is made to the application repository and performs the build steps defined in the trigger configuration. To view the steps that the trigger performs when invoked, click the trigger name and then click the OPEN EDITOR button.

Go to the Artifact Registry page and view the new repository with the name sample.

This Artifact Registry repository stores the application's artifacts.

Go to the Cloud Deploy pipeline page and view the pipeline with the name sample. This is the continuous deployment pipeline that deploys the application on the GKE clusters.

Deploy the application in the dev environment

The trigger deploy-app-sample is connected to the application repository named sample. You can either invoke the trigger manually, using the webhook URL, or through a push to the application repository.

Add a new line in a file in the repository sample and push the changes to invoke the Cloud Build trigger:

Clone the git repository sample:

In Cloud Shell:

git clone https://github.com/GITHUB_ORG/sample
cd sample

Replace GITHUB_ORG with your GitHub organization.

Add a new line to the skaffold.yaml file.

echo "" >> skaffold.yaml

Commit and push the changes:

git add .
git commit -m "A dummy commit to invoke CI/CD trigger"
git push

As soon as the changes are pushed, the Cloud Build trigger deploy-app-sample is started.

Monitor the status of the trigger:

Go to the Cloud Build History page to view the pipeline and wait for it to complete.

The trigger executes the steps defined in its configuration. The first step is to build a Docker image from the application code in the repository sample. The last step is to kick off the Cloud Deploy pipeline that deploys the application to the dev GKE cluster.

Check the deployment in the dev cluster:

Go to the Cloud Deploy pipeline page.

Click the sample pipeline. The deployment to the dev GKE cluster has started. Wait for it to complete.

Verify that the application has been deployed successfully:

Get credentials to the development cluster.

gcloud container clusters get-credentials gke-dev-us-central1 --location us-central1-a

Tunnel into the GKE cluster.

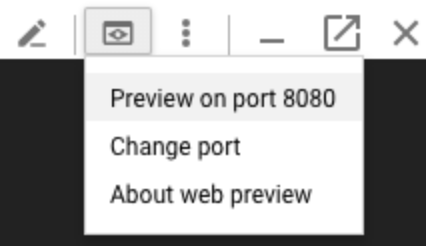

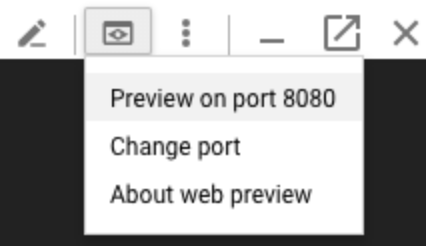

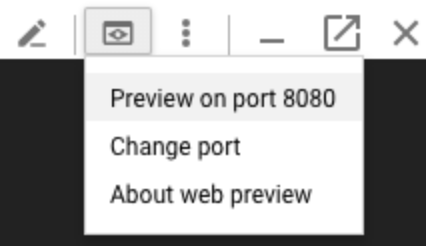

gcloud container clusters get-credentials gke-dev-us-central1 --location us-central1-a && kubectl port-forward --namespace sample $(kubectl get pod --namespace sample --selector="deploy.cloud.google.com/delivery-pipeline-id=sample" --output jsonpath='{.items[0].metadata.name}') 8080:8080

On the Cloud Shell toolbar, click Web Preview, and then click Preview on port 8080:

The output is the following:

Hello World!

In Cloud Shell, press CTRL+C to end the port forward.

Add a new feature to the application

When you develop a new feature, you need to quickly deploy your changes into the development environment in order to test and iterate on them. In this tutorial, you make changes in the application code repository and deploy them to the development environment.

In Cloud Shell, change directory to the already cloned sample repository.

Update the application to output a different message:

sed -i "s/Hello World/My new feature/g" main.py

Commit and push the changes:

git add .
git commit -m "Changed the message"
git push

As soon as the code is pushed to the GitHub repository, the webhook trigger deploy-app-sample is kicked off.

Monitor the status of the trigger on the Cloud Build History page and wait for it to complete.

Go to the Cloud Deploy pipeline page.

Click the sample pipeline. The deployment to the dev GKE cluster has started. Wait for it to complete.

Verify that the application has been deployed successfully:

Get credentials to the development cluster if you opened a new Cloud Shell:

gcloud container clusters get-credentials gke-dev-us-central1 --location us-central1-a

Tunnel into the GKE cluster:

gcloud container clusters get-credentials gke-dev-us-central1 --location us-central1-a && kubectl port-forward --namespace sample $(kubectl get pod --namespace sample --selector="deploy.cloud.google.com/delivery-pipeline-id=sample" --output jsonpath='{.items[0].metadata.name}') 8080:8080

On the Cloud Shell toolbar, click Web Preview, and then click Preview on port 8080:

The output is the following:

My new feature!

In Cloud Shell, press CTRL+C to end the port forward.

Promote your change to the staging and production clusters

Before promoting the application to the staging and production environments, you need to create the landing zone for the application in the GKE clusters for those environments. When you onboarded the application, the landing zone for dev was automatically created in the dev GKE cluster by adding code to acm-gke-infrastructure-repo in the dev branch.

Create landing zone in staging and prod GKE clusters

Create the landing zone in the staging GKE cluster: You need to create a pull request from the dev branch to the staging branch in acm-gke-infrastructure-repo and merge it.

Go to GitHub and navigate to the repository acm-gke-infrastructure-repo. Click Pull requests and then the New pull request button. In the Base menu, choose staging and in the Compare menu, choose dev. Click the Create pull request button.

Typically, someone who has access to the repository reviews the changes and then merges the PR to make sure that only the intended changes are promoted to the staging environment. To let individuals try out the reference architecture, the branch protection rules have been relaxed so that repository administrators can bypass the review and merge the PR. If you are an administrator on the repository, merge the pull request. Otherwise, get an administrator to merge it.

Config Sync syncs the changes that arrive on the staging branch of the repository acm-gke-infrastructure-repo with the staging GKE cluster, which results in the creation of the landing zone for the application on the staging GKE cluster.

Create the landing zone in the production GKE clusters: You need to create a pull request from the staging branch to the prod branch and merge it.

Click Pull requests and then the New pull request button. In the Base menu, choose prod and in the Compare menu, choose staging. Click the Create pull request button.

If you are an administrator on the repository, merge the pull request. Otherwise, get an administrator to merge it.

Config Sync syncs the changes that arrive on the prod branch of the repository acm-gke-infrastructure-repo with the production GKE clusters, which results in the creation of the landing zone for the application on the production GKE clusters.

Promote the changes from dev to staging

Now that you've created the landing zone for the application in staging and prod GKE clusters, promote the application from dev to staging environment.

Find the latest release name and save it as an environment variable:

export RELEASE=$(gcloud deploy targets describe dev --region=us-central1 --format="json" | jq -r '."Active Pipeline"[0]."projects/PROJECT_ID/locations/us-central1/deliveryPipelines/sample"."Latest release"' | awk -F '/' '{print $NF}')

Replace PROJECT_ID with your Google Cloud project ID.

Verify the environment variable has been set:

echo $RELEASE
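The last stage of the export RELEASE command, awk -F '/' '{print $NF}', keeps only the final segment of a slash-separated resource name. The following sketch isolates that step so you can see its effect without calling gcloud. The function name last_segment is hypothetical, and the resource name, including the release ID release-001, is made up for illustration.

```shell
#!/bin/sh
# Print the last '/'-separated segment of each input line, using the same awk
# expression as the export RELEASE command.
last_segment() {
  awk -F '/' '{print $NF}'
}

# Illustrative resource name; release-001 is a made-up release ID.
echo "projects/PROJECT_ID/locations/us-central1/deliveryPipelines/sample/releases/release-001" | last_segment
```

If echo $RELEASE prints an empty line or null, the jq path in the export command most likely didn't match, for example because PROJECT_ID wasn't replaced.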

In Cloud Shell, run the following command to trigger promotion of the release from dev to staging environment:

gcloud deploy releases promote --release=$RELEASE --delivery-pipeline=sample --region=us-central1 --to-target=staging --quiet

Check staging deployment:

Go to the Cloud Deploy pipeline page.

Click the sample pipeline. The deployment to the staging GKE cluster has started. Wait for it to complete.

Verify that the staging deployment succeeded:

Get credentials to the staging cluster:

gcloud container clusters get-credentials gke-staging-us-central1 --location us-central1-a

Tunnel into the GKE cluster:

gcloud container clusters get-credentials gke-staging-us-central1 --location us-central1-a && kubectl port-forward --namespace sample $(kubectl get pod --namespace sample --selector="deploy.cloud.google.com/delivery-pipeline-id=sample" --output jsonpath='{.items[0].metadata.name}') 8080:8080

On the Cloud Shell toolbar, click Web Preview, and then click Preview on port 8080:

The output is the following:

My new feature!

In Cloud Shell, press CTRL+C to end the port forward.

Promote the changes from staging to prod

Now, promote the release from staging to prod. You have two production clusters and Cloud Deploy has a target for each of them named prod1 and prod2 respectively.

In Cloud Shell, run the following command to trigger promotion of the release from staging to prod1 cluster:

gcloud deploy releases promote --release=$RELEASE --delivery-pipeline=sample --region=us-central1 --to-target=prod1 --quiet

Releases to the prod clusters require approval, so the rollout waits until you approve it. To view it:

Go to the Cloud Deploy pipeline page.

Click the sample pipeline. The rollout to prod1 requires approval, and the clouddeploy.approver role is needed to approve the rollout. Because you are the owner of the project, you have access to approve the release.

Approve the release to prod1:

Run the following command to fetch the name of the rollout pending approval and save it in an environment variable:

export ROLLOUT=$(gcloud deploy targets describe prod1 --region=us-central1 --format="json" | jq -r '."Pending Approvals"[]' | awk -F '/' '{print $NF}')

Approve the release:

gcloud deploy rollouts approve $ROLLOUT --delivery-pipeline=sample --region=us-central1 --release=$RELEASE --quiet

After the approval is given, the prod1 release starts. Monitor the progress on the Cloud Deploy pipeline page.

After the prod1 deployment is completed, kick off the prod2 release.

gcloud deploy releases promote --release=$RELEASE --delivery-pipeline=sample --region=us-central1 --to-target=prod2 --quiet

Release to prod2 also requires approval. Approve the release to prod2 cluster:

Run the following command to fetch the name of the rollout pending approval and save it in an environment variable:

export ROLLOUT=$(gcloud deploy targets describe prod2 --region=us-central1 --format="json" | jq -r '."Pending Approvals"[]' | awk -F '/' '{print $NF}')

Approve the release:

gcloud deploy rollouts approve $ROLLOUT --delivery-pipeline=sample --region=us-central1 --release=$RELEASE --quiet

After the approval is given, the prod2 release starts. Monitor the progress on the Cloud Deploy pipeline page.
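The promotion order followed in this tutorial (dev, staging, prod1, prod2) and the approval requirement on the prod targets can be summarized in a short sketch. The function names next_target and requires_approval are hypothetical; in the reference architecture, the actual order and approval settings are defined in the Cloud Deploy pipeline and targets, not in a script like this.

```shell
#!/bin/sh
# Hypothetical summary of the promotion order used in this tutorial.
# next_target TARGET: print the target that follows TARGET.
next_target() {
  case "$1" in
    dev)     echo staging ;;
    staging) echo prod1 ;;
    prod1)   echo prod2 ;;
    prod2)   echo "prod2 is the last target" >&2; return 1 ;;
    *)       echo "unknown target: $1" >&2; return 1 ;;
  esac
}

# requires_approval TARGET: succeed if rollouts to TARGET wait for approval.
# In this tutorial, only the prod targets require approval.
requires_approval() {
  case "$1" in
    prod1|prod2) return 0 ;;
    *)           return 1 ;;
  esac
}

# Walk the chain from dev and note where an approval is needed.
target=dev
while next="$(next_target "$target" 2>/dev/null)"; do
  if requires_approval "$next"; then
    echo "$target -> $next (approval required)"
  else
    echo "$target -> $next"
  fi
  target="$next"
done
```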

After the Cloud Deploy pipelines in prod1 and prod2 are completed, verify that the deployment in the production clusters was successful.

A Multi Cluster Ingress is created in the production clusters, and you use a load balancer to access the prod application. The Multi Cluster Ingress configurations are created using the YAML files k8s/prod/mci.yaml and k8s/prod/mcs.yaml in the repository sample. When you send a request to the IP address of the load balancer, Multi Cluster Ingress forwards the request to one of the two instances of the application running in two different GKE clusters.

List the forwarding rule associated with the load balancer to find the IP address.

gcloud compute forwarding-rules list

The output resembles the following:

NAME: mci-qqxs9x-fw-sample-sample-ingress
REGION:
IP_ADDRESS: 34.36.123.118
IP_PROTOCOL: TCP
TARGET: mci-qqxs9x-sample-sample-ingress

Open a web browser and enter the following in the URL:

http://IP_ADDRESS:80

Replace IP_ADDRESS with the IP address of the load balancer.

The output is the following:

My new feature!

This confirms the application is deployed as expected in production clusters.

Test resiliency of the application

In this section, you test the resiliency of the application running in production by restarting the nodes of one of the two production GKE clusters without impacting the application.

The application in production uses multi-cluster ingress and is accessible through a load balancer IP. When the application is accessed through that IP, multi-cluster ingress routes it to one of the two instances of the application running on two different GKE clusters. When one of the GKE clusters is unhealthy and the application instance running on it can't be reached, the multi-cluster ingress keeps sending the traffic to the healthy instance of the application running on the other GKE cluster. This makes the cluster outage invisible to the end user and the application continuously serves the requests.

To test the resiliency:

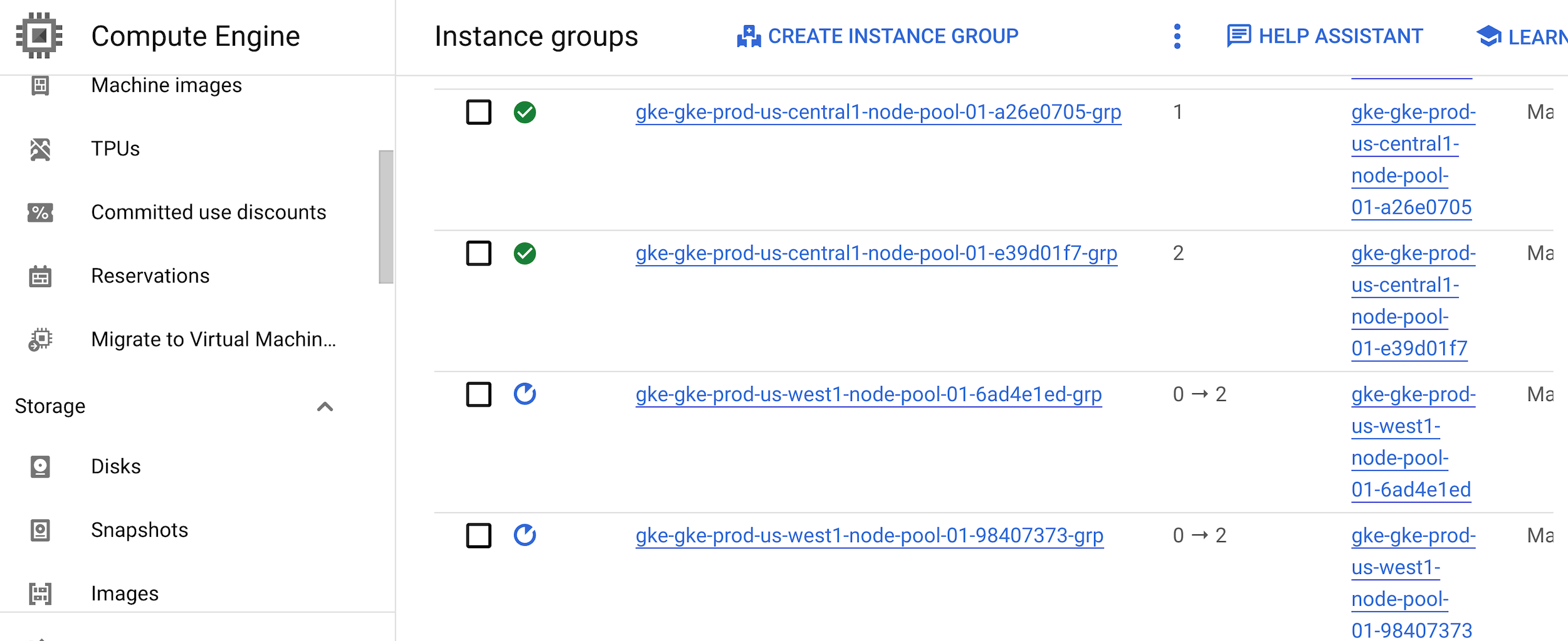

Find the node pool of the production GKE cluster running in us-west1.

gcloud container clusters describe gke-prod-us-west1 --location=us-west1-a --format=json | jq ".nodePools[0].instanceGroupUrls[]" | tr '"' ' ' | awk -F '/' '{for(i=NF-2; i<=NF; i=i+2) printf ("%s ",$i); print ""}'

The output resembles the following:

us-west1-b gke-gke-prod-us-west1-node-pool-01-6ad4e1ed-grp
us-west1-c gke-gke-prod-us-west1-node-pool-01-98407373-grp

The output has two columns: the first column is the zone, and the second column is the name of the instance group associated with the node pool of the production GKE cluster in the us-west1 region.
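The awk stage of the command above splits each instance group URL on / and keeps the zone and the group name. The following sketch shows that extraction on a single URL, without the jq and tr stages. The function name zone_and_group is hypothetical, and the URL is illustrative: its exact format is an assumption, chosen to be consistent with the output shown above.

```shell
#!/bin/sh
# Print "ZONE GROUP_NAME" for each instance group URL read from standard input.
# With '/' as the field separator, the group name is the last field and the
# zone is two fields before it.
zone_and_group() {
  awk -F '/' '{print $(NF-2), $NF}'
}

# Illustrative URL; the exact URL format is an assumption.
echo "https://www.googleapis.com/compute/v1/projects/PROJECT_ID/zones/us-west1-b/instanceGroupManagers/gke-gke-prod-us-west1-node-pool-01-6ad4e1ed-grp" | zone_and_group
```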

Restart the instance groups that correspond to the node pool:

gcloud compute instance-groups managed rolling-action restart INSTANCE_GROUP_1 --zone=ZONE_1 --max-unavailable=100%
gcloud compute instance-groups managed rolling-action restart INSTANCE_GROUP_2 --zone=ZONE_2 --max-unavailable=100%

Replace INSTANCE_GROUP_1 with the name of the first instance group.

Replace ZONE_1 with the zone of the first instance group.

Replace INSTANCE_GROUP_2 with the name of the second instance group.

Replace ZONE_2 with the zone of the second instance group.

Check the status of the instance groups.

The two instance groups are being restarted, while the other groups have a green check mark next to them.

Open a web browser and enter the following in the URL:

http://IP_ADDRESS:80

Replace IP_ADDRESS with the IP address of the load balancer.

Even when one of the two GKE clusters is down, the application is available and the output is the following:

My new feature!

This shows that your application is resilient and highly available.
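Instead of reloading the browser by hand, you could probe the load balancer repeatedly while the instance groups restart. The following is a minimal sketch under stated assumptions: the helper probe_n is hypothetical, it counts only exit statuses, and it doesn't pause between attempts.

```shell
#!/bin/sh
# probe_n TIMES COMMAND...: run COMMAND TIMES times and print "OK/TIMES",
# where OK is the number of runs that exited with status 0.
probe_n() {
  probe_total="$1"
  shift
  probe_ok=0
  probe_i=0
  while [ "$probe_i" -lt "$probe_total" ]; do
    if "$@" >/dev/null 2>&1; then
      probe_ok=$((probe_ok + 1))
    fi
    probe_i=$((probe_i + 1))
  done
  echo "$probe_ok/$probe_total"
}

# Self-check with a command that always succeeds.
probe_n 3 true
```

For example, probe_n 30 curl --silent --fail --max-time 5 "http://IP_ADDRESS:80" would report how many of 30 requests succeeded, where IP_ADDRESS is the load balancer address found earlier. A result of 30/30 during the restart would be consistent with the behavior described in this section.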

Manage the application

When you created this application from the application factory, you got separate Git repositories, infrastructure, and CI/CD pipelines for the application. You used these resources to deploy the application and add a new feature. To manage the application further, you only need to interact with these Git repositories and pipelines; you don't need to update the application factory. You can customize the application's pipelines and Git repositories based on your requirements. As an application owner, you can define who gets access to the pipelines and Git repositories of your application to manage it.

Clean up

To avoid incurring charges to your Google Cloud account for the resources used in this tutorial, delete the project that contains the resources.

Delete the project

- In the Google Cloud console, go to the Manage resources page.

- In the project list, select the project that you want to delete, and then click Delete.

- In the dialog, type the project ID, and then click Shut down to delete the project.

What's next

- Learn about best practices for setting up identity federation.

- Read Kubernetes and the challenges of continuous software deployment.

- Learn about hybrid and multi-cloud monitoring and logging patterns.

- Explore reference architectures, diagrams, and best practices about Google Cloud. Take a look at our Cloud Architecture Center.