This document describes how rule and alert evaluation is configured in a Managed Service for Prometheus deployment that uses managed collection.

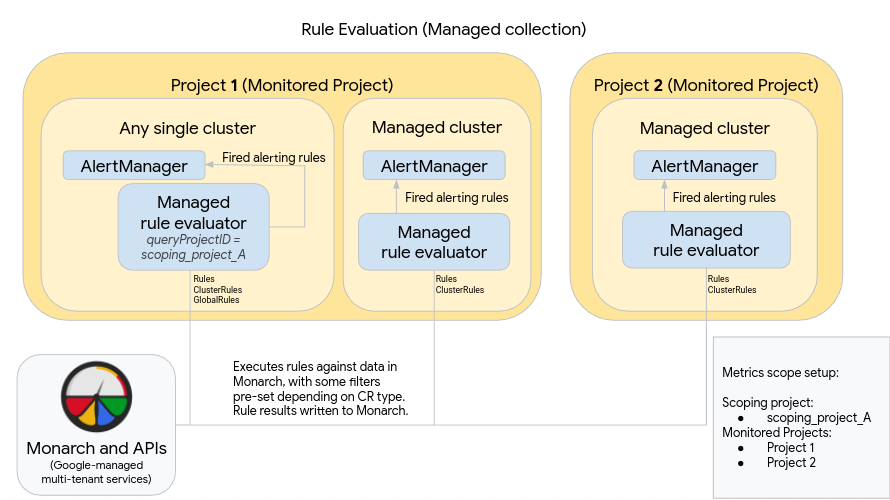

下图展示了在两个 Google Cloud 项目中使用多个集群,并使用规则和提醒评估以及可选的 GlobalRules 资源的部署:

To set up and use a deployment like the one in the diagram, note the following:

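The managed rule evaluator is automatically deployed to any cluster in which managed collection is running. These evaluators are configured as follows: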

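- Use Rules resources to run rules on data within a namespace. A Rules resource must be applied in every namespace in which you want to execute the rule.

- Use ClusterRules resources to run rules on data across a cluster. A ClusterRules resource should be applied once per cluster.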
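As an illustration, a namespace-scoped Rules resource and a cluster-scoped ClusterRules resource might look like the following sketch. The resource names, the namespace `my-namespace`, and the rule expressions are placeholders chosen for this example, not values from the diagram.

```yaml
# Namespace-scoped: apply one of these in every namespace that needs the rule.
apiVersion: monitoring.googleapis.com/v1
kind: Rules
metadata:
  namespace: my-namespace        # placeholder namespace
  name: example-rules            # placeholder name
spec:
  groups:
  - name: example
    interval: 30s
    rules:
    - record: job:up:sum
      expr: sum without(instance) (up)
---
# Cluster-scoped: apply once per cluster (no namespace in the metadata).
apiVersion: monitoring.googleapis.com/v1
kind: ClusterRules
metadata:
  name: example-clusterrules     # placeholder name
spec:
  groups:
  - name: example
    interval: 30s
    rules:
    - record: job:up:sum
      expr: sum without(instance) (up)
```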

All rule evaluation executes against the global datastore, Monarch.

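- Rules resources automatically filter rules to the project, location, cluster, and namespace in which they are installed.

- ClusterRules resources automatically filter rules to the project, location, and cluster in which they are installed.

- After evaluation, all rule results are written to Monarch.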

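You manually deploy a Prometheus AlertManager instance in every cluster. To configure the managed rule evaluators to send fired alerting rules to their local AlertManager instance, edit the OperatorConfig resource. Silences, acknowledgements, and incident management workflows are typically handled in a third-party tool such as PagerDuty.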
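A minimal sketch of that OperatorConfig change follows. It assumes the AlertManager instance is exposed through a Kubernetes Service named `alertmanager` in a namespace named `monitoring`, with a port named `web`; all three values are hypothetical and should be replaced with those of your own deployment.

```yaml
apiVersion: monitoring.googleapis.com/v1
kind: OperatorConfig
metadata:
  namespace: gmp-public
  name: config
rules:
  alerting:
    alertmanagers:
    - name: alertmanager       # hypothetical Service name
      namespace: monitoring    # hypothetical Service namespace
      port: web                # hypothetical port name on that Service
```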

You can centralize alert management for multiple clusters in a single AlertManager by using a Kubernetes Endpoints resource.
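One possible way to do this, sketched here under assumptions, is to pair a Service that has no selector with a manually maintained Endpoints object of the same name, so that a local Service name resolves to an AlertManager running elsewhere. The names, the namespace, the port, and the address `10.0.0.10` are hypothetical.

```yaml
# Service without a selector: Kubernetes does not manage its endpoints.
apiVersion: v1
kind: Service
metadata:
  name: central-alertmanager   # hypothetical name
  namespace: monitoring        # hypothetical namespace
spec:
  ports:
  - name: web
    port: 9093
    targetPort: 9093
---
# Endpoints object with the same name, pointing at the central AlertManager.
apiVersion: v1
kind: Endpoints
metadata:
  name: central-alertmanager
  namespace: monitoring
subsets:
- addresses:
  - ip: 10.0.0.10              # hypothetical address of the central AlertManager
  ports:
  - name: web
    port: 9093
```

Each cluster's OperatorConfig could then reference `central-alertmanager` as its AlertManager Service.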

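The preceding diagram also shows the optional GlobalRules resource. Use GlobalRules sparingly, for tasks such as calculating global SLOs across projects or evaluating rules across clusters within a single Google Cloud project. We strongly recommend using Rules and ClusterRules whenever possible; these resources provide better reliability and fit better with common Kubernetes deployment mechanisms and tenancy models.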

If you use the GlobalRules resource, note the following from the preceding diagram:

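A single cluster running in Google Cloud is designated as the global rule-evaluation cluster for a metrics scope. Its managed rule evaluator is configured to use scoping_project_A, which contains Project 1 and Project 2. Rules executed against scoping_project_A automatically fan out to Project 1 and Project 2.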

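The underlying service account must be granted the Monitoring Viewer permissions on scoping_project_A. For details about how to set these fields, see Multi-project and global rule evaluation.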
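As a rough sketch, the scoping project is set on the OperatorConfig resource of the designated cluster. The example assumes the `rules.queryProjectID` field and uses the placeholder `SCOPING_PROJECT_ID` for the ID of scoping_project_A; confirm the field names against the multi-project documentation before applying it.

```yaml
apiVersion: monitoring.googleapis.com/v1
kind: OperatorConfig
metadata:
  namespace: gmp-public
  name: config
rules:
  # Placeholder: replace with the project ID of scoping_project_A.
  queryProjectID: SCOPING_PROJECT_ID
```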

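As in all other clusters, this rule evaluator is set up with Rules and ClusterRules resources that evaluate rules scoped to a namespace or a cluster. These rules are automatically filtered to the local project, which is Project 1 in this case. Because scoping_project_A contains Project 1, rules configured through Rules and ClusterRules execute only against data from the local project, as expected.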

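This cluster also has GlobalRules resources that execute rules against scoping_project_A. GlobalRules are not automatically filtered, so they execute exactly as written across all projects, locations, clusters, and namespaces in scoping_project_A.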
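For illustration, a GlobalRules resource has the same shape as a ClusterRules resource but a different kind. The resource name and the rules in this sketch are placeholders; because nothing is filtered automatically, the expressions run against every time series visible in the metrics scope.

```yaml
apiVersion: monitoring.googleapis.com/v1
kind: GlobalRules
metadata:
  name: example-globalrules    # placeholder name
spec:
  groups:
  - name: example
    interval: 30s
    rules:
    # Evaluated across all projects, locations, clusters, and namespaces
    # in the metrics scope, exactly as written.
    - record: job:up:sum
      expr: sum without(instance) (up)
    - alert: AlwaysFiring
      expr: vector(1)
```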

Fired alerting rules are sent to the self-hosted AlertManager, as expected.

Using GlobalRules can have unexpected effects, depending on whether your rules preserve or aggregate the project_id, location, cluster, and namespace labels:

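- If your GlobalRules rule preserves the project_id label (by using a `by(project_id)` clause), then rule results are written back to Monarch using the original project_id value of the underlying time series. In this case, you must ensure that the underlying service account has the Monitoring Metric Writer permissions on each monitored project in scoping_project_A. If you add a new monitored project to scoping_project_A, then you must also manually grant the service account the new permission.

- If your GlobalRules rule does not preserve the project_id label (by not using a `by(project_id)` clause), then rule results are written back to Monarch using the project_id value of the cluster in which the global rule evaluator is running. In this case, you don't need to modify the underlying service account further.

- If your GlobalRules rule preserves the location label (by using a `by(location)` clause), then rule results are written back to Monarch using each original Google Cloud region from which the underlying time series originated. If your GlobalRules rule does not preserve the location label, then data is written back to the location of the cluster in which the global rule evaluator is running.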

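We strongly recommend preserving the cluster and namespace labels in rule evaluation results unless the purpose of the rule is to aggregate those labels away. Otherwise, query performance might degrade and you might hit cardinality limits. Removing both labels is strongly discouraged.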
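The label choices described above can be sketched as two recording rules in one GlobalRules resource. The metric `http_requests_total`, the resource name, and the recorded series names are hypothetical and serve only to contrast the two aggregation styles.

```yaml
apiVersion: monitoring.googleapis.com/v1
kind: GlobalRules
metadata:
  name: example-label-handling   # hypothetical name
spec:
  groups:
  - name: label-handling
    interval: 30s
    rules:
    # Preserves project_id, location, cluster, and namespace. Results are
    # written back under each original project and location, so the service
    # account needs Monitoring Metric Writer on every monitored project.
    - record: namespace:http_requests:rate5m
      expr: sum by (project_id, location, cluster, namespace) (rate(http_requests_total[5m]))
    # Aggregates all four labels away, which is appropriate only when that is
    # the purpose of the rule (for example, a global SLO). Results are written
    # back under the project and location of the evaluating cluster.
    - record: global:http_requests:rate5m
      expr: sum(rate(http_requests_total[5m]))
```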