
This page describes how to upgrade your environment to a new Cloud Composer or Airflow version.

About upgrade operations

When you change the version of Airflow or Cloud Composer used by your environment, your environment upgrades.

Upgrading does not change how you connect to the resources in your environment, such as the URL of your environment's bucket or of the Airflow web server.

During an upgrade, Cloud Composer:

- Re-deploys your environment's components using new versions of Cloud Composer images.

- Applies Airflow configuration changes, such as custom PyPI packages or Airflow configuration option overrides, if your environment had them before the upgrade.

- Updates the Airflow airflow_db connection to point to the new Cloud SQL database.

Before you begin

Upgrading is in Preview. Use this feature with caution in production environments.

You cannot downgrade to an earlier version of Cloud Composer or Airflow.

We recommend creating a new snapshot of your environment before you upgrade, so that you can re-create the environment if needed.

You can upgrade the Cloud Composer version, the Airflow version, or both at the same time.
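Two of the rules in this section, no downgrades and no in-place change of major version, can be checked locally before you start an upgrade. The following Python sketch is one way to do that. It assumes image versions strictly in the form composer-a.b.c-airflow-x.y.z; parse_image_version and in_place_upgrade_problems are hypothetical helpers, not part of any Google Cloud library.

```python
import re
from typing import List, NamedTuple, Tuple

# Hypothetical pattern for image versions of the form composer-a.b.c-airflow-x.y.z.
# Aliases and any other formats are rejected by this sketch.
_IMAGE_RE = re.compile(r"^composer-(\d+)\.(\d+)\.(\d+)-airflow-(\d+)\.(\d+)\.(\d+)$")


class ImageVersion(NamedTuple):
    composer: Tuple[int, int, int]
    airflow: Tuple[int, int, int]


def parse_image_version(image: str) -> ImageVersion:
    """Split an image version string into numeric Cloud Composer and Airflow versions."""
    match = _IMAGE_RE.match(image)
    if match is None:
        raise ValueError(f"unexpected image version format: {image!r}")
    numbers = tuple(int(part) for part in match.groups())
    return ImageVersion(composer=numbers[:3], airflow=numbers[3:])


def in_place_upgrade_problems(current: str, target: str) -> List[str]:
    """Return the reasons why target is not a valid in-place upgrade of current.

    An empty list only means the pair passes these version checks; it does
    not guarantee that the upgrade operation itself succeeds.
    """
    cur = parse_image_version(current)
    tgt = parse_image_version(target)
    problems: List[str] = []
    # Major versions cannot change in place.
    if tgt.composer[0] != cur.composer[0]:
        problems.append("different major Cloud Composer version")
    if tgt.airflow[0] != cur.airflow[0]:
        problems.append("different major Airflow version")
    # Downgrades are not supported; tuples compare element by element.
    if tgt.composer < cur.composer:
        problems.append("Cloud Composer downgrade")
    if tgt.airflow < cur.airflow:
        problems.append("Airflow downgrade")
    if tgt == cur:
        problems.append("target is the current version")
    return problems
```

For example, in_place_upgrade_problems("composer-1.20.12-airflow-1.10.15", "composer-2.0.0-airflow-2.2.3") reports both a different major Cloud Composer version and a different major Airflow version. Passing these checks does not mean that the target is offered as an upgrade for your environment.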

From any Cloud Composer version, you can upgrade only to the latest version, to one of the three previous versions of Cloud Composer, or to a version with an extended upgrade timeline.
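The upgrade window described above can be expressed as a membership test. This Python sketch assumes that you maintain your own list of published Cloud Composer versions ordered from newest to oldest, plus a set of versions with an extended upgrade timeline; the function name and the version strings used with it are illustrative only.

```python
from typing import Iterable, Sequence, Set


def is_allowed_upgrade_target(
    target: str,
    published_newest_first: Sequence[str],
    extended_support: Iterable[str] = (),
) -> bool:
    """Return True if target is the latest version, one of the three previous
    versions, or a version with an extended upgrade timeline.

    Hypothetical helper: both inputs must come from your own source of truth,
    such as the list of Cloud Composer versions.
    """
    # The latest version plus the three versions published before it.
    window: Set[str] = set(published_newest_first[:4])
    return target in window or target in set(extended_support)
```

The versions that the Google Cloud console, the list-upgrades command, or the API report for your environment remain the authoritative list of upgrade targets.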

To upgrade to a version other than the latest Cloud Composer version, use the Google Cloud CLI, the API, or Terraform. In the Google Cloud console, you can upgrade only to the latest version.

You cannot upgrade in place to a different major version of Cloud Composer or Airflow. Instead, you can manually transfer DAGs and configuration between environments. For more information, see:

You must have a role that can trigger environment upgrade operations. In addition, the service account of the environment must have a role that has enough permissions to perform upgrade operations. For more information, see Access control.

For the list of PyPI packages in a version that you want to upgrade to, see the list of Cloud Composer versions.

You cannot upgrade your environment if the Airflow database contains more than 16 GB of data. During an upgrade, a warning is shown if the Airflow database size is more than 16 GB. In this case, perform database maintenance to reduce the database size.
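Checking the 16 GB limit is simple arithmetic once you know how much data each Airflow database table holds. The following Python sketch assumes that you have already collected table sizes in bytes yourself (for example, with a query you run separately) and interprets 16 GB as 16 × 1024³ bytes. Whether the limit is counted in GB or GiB is an assumption here, so the threshold is a parameter, and database_size_report is a hypothetical helper.

```python
from typing import Dict, List, Tuple

# Assumption: 16 GB interpreted as 16 GiB; pass a different limit if needed.
DEFAULT_LIMIT_BYTES = 16 * 1024 ** 3


def database_size_report(
    table_sizes: Dict[str, int],
    limit_bytes: int = DEFAULT_LIMIT_BYTES,
    top_n: int = 3,
) -> Tuple[bool, int, List[Tuple[str, int]]]:
    """Return (within_limit, total_bytes, largest_tables).

    largest_tables holds the top_n tables by size, which are the first
    candidates for database maintenance when the limit is exceeded.
    """
    total = sum(table_sizes.values())
    largest = sorted(table_sizes.items(), key=lambda item: item[1], reverse=True)[:top_n]
    return total <= limit_bytes, total, largest
```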

The image version that you upgrade to must support your environment's current Python version.

Make sure that your DAGs are compatible with the new Airflow version and with all provider packages (including the Airflow provider package for Google Cloud and Google services). You can check the versions of provider packages that are included in a specific Cloud Composer version.

In the Google Cloud console, go to the IAM and Admin > Quotas and System Limits page and check that the Compute Engine API quota for CPUs is not exceeded. If usage is approaching the quota limit, request a quota increase before you start the upgrade operation.

Upgrades are possible to the last three published Cloud Composer versions, or to one of the "extended upgrade support" versions as described in Cloud Composer versions.

Check that your environment is up to date

Cloud Composer displays warnings when your environment's image approaches its end of support date. You can use these warnings to always keep your environments in the full support period.

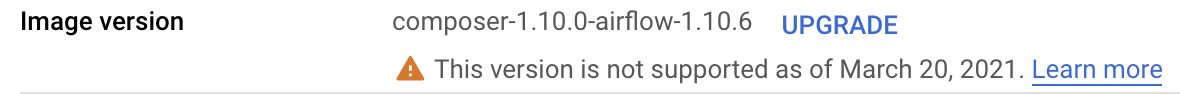

Cloud Composer keeps track of the Cloud Composer image version that your environment is based on. When the image approaches its end of support date, you can see a warning in the list of environments and on the Environment details page.

To check if your environment image is up to date:

Console

In Google Cloud console, go to the Environments page.

In the list of environments, click the name of your environment. The Environment details page opens.

Go to the Environment configuration tab.

In the Image version field, one of the following messages is displayed:

Newest available version. Your environment image is fully supported.

New version available. Your environment image is fully supported and you can upgrade it to a later version.

Support for this image version ends in... Your environment image approaches the end of the full support period.

This version is not supported as of... Your environment is past the full support period.

View available upgrades

To view Cloud Composer versions that you can upgrade to:

Console

In Google Cloud console, go to the Environments page.

In the list of environments, click the name of your environment. The Environment details page opens.

Go to the Environment configuration tab and click Upgrade Image Version.

For the list of available versions, click the Cloud Composer Image version drop-down menu.

gcloud

gcloud beta composer environments list-upgrades \
    ENVIRONMENT_NAME \
    --location LOCATION

Replace:

- ENVIRONMENT_NAME with the name of the environment.
- LOCATION with the region where the environment is located.

Example:

gcloud beta composer environments list-upgrades example-environment \
    --location us-central1

API

You can view available versions for a location. To do so, construct an imageVersions.list API request.

For example:

// GET https://composer.googleapis.com/v1/projects/example-project/
// locations/us-central1/imageVersions
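Because the imageVersions.list response covers every image version in the location, you might want to narrow it down, for example to versions that support your environment's current Python version. The following Python sketch builds the request URL from the example and filters an already parsed JSON response. It assumes that each entry in the imageVersions array carries the imageVersionId, supportedPythonVersions, and upgradeDisabled fields of the ImageVersion resource; verify these names against the API reference. Authentication, the HTTP call, and pagination are left out, and both function names are hypothetical.

```python
from typing import Any, Dict, List

COMPOSER_API = "https://composer.googleapis.com/v1"


def image_versions_url(project: str, location: str) -> str:
    """Build the imageVersions.list URL for a project and location."""
    return f"{COMPOSER_API}/projects/{project}/locations/{location}/imageVersions"


def upgrade_candidates(response: Dict[str, Any], python_version: str) -> List[str]:
    """Return image version IDs that list python_version as supported and are
    not marked as upgrade-disabled.

    Assumed response shape (not verified here):
    {"imageVersions": [{"imageVersionId": ..., "supportedPythonVersions": [...],
                        "upgradeDisabled": ...}, ...]}
    python_version is compared as an exact string, for example "3".
    """
    candidates: List[str] = []
    for version in response.get("imageVersions", []):
        if version.get("upgradeDisabled", False):
            continue
        if python_version in version.get("supportedPythonVersions", []):
            candidates.append(version["imageVersionId"])
    return candidates
```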

Check for PyPI package conflicts before upgrading

You can check if PyPI packages installed in your environment have any conflicts with preinstalled packages in the new Cloud Composer image.

A successful check means that there are no PyPI package dependency conflicts between the current and the specified version. An upgrade operation might still fail for other reasons.

Console

To run an upgrade check for your environment:

In Google Cloud console, go to the Environments page.

In the list of environments, click the name of your environment. The Environment details page opens.

Go to the Environment configuration tab, locate the Image version entry, and click Upgrade.

In the Environment version upgrade dialog, in the New version drop-down list, select a Cloud Composer version that you want to upgrade to.

In the PyPI packages compatibility section, click Check for conflicts.

Wait until the check is complete. If there are PyPI package dependency conflicts, then the displayed error messages contain details about conflicting packages and package versions.

gcloud

To run an upgrade check for your environment, run the environments check-upgrade command with the Cloud Composer image version that you want to upgrade to.

gcloud beta composer environments check-upgrade \
    ENVIRONMENT_NAME \
    --location LOCATION \
    --image-version COMPOSER_IMAGE

Replace:

- ENVIRONMENT_NAME with the name of the environment.
- LOCATION with the region where the environment is located.
- COMPOSER_IMAGE with the new Cloud Composer image version that you want to upgrade to, in the form composer-a.b.c-airflow-x.y.z.

Example:

gcloud beta composer environments check-upgrade example-environment \
    --location us-central1 \
    --image-version composer-1.20.12-airflow-1.10.15

Example output:

Waiting for [projects/example-project/locations/us-central1/environments/
example-environment] to be checked for PyPI package conflicts when upgrading
to composer-1.20.12-airflow-1.10.15. Operation [projects/example-project/locations/
us-central1/operations/04d0e8b2-...]...done.
...
Response:
'@type': type.googleapis.com/google.cloud.orchestration.airflow.service.v1beta1.CheckUpgradeResponse
buildLogUri: https://console.cloud.google.com/cloud-build/builds/79738aa7-...
containsPypiModulesConflict: CONFLICT
pypiConflictBuildLogExtract: |-
  The Cloud Build image build failed: Build failed; check build logs for
  details. Full log can be found at https://console.cloud.google.com/
  cloud-build/builds/79738aa7-...
  Error details: tensorboard 2.2.2 has requirement
  setuptools>=41.0.0, but you have setuptools 40.3.0.
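If you capture the check result as a dictionary (for example, the response of a completed check operation retrieved through the API), you can reduce it to a pass or fail result. This Python sketch relies only on the containsPypiModulesConflict, pypiConflictBuildLogExtract, and buildLogUri fields visible in the example output above; summarize_check_upgrade is a hypothetical helper, and treating only the value CONFLICT as a conflict is an assumption based on that output.

```python
from typing import Any, Dict


def summarize_check_upgrade(response: Dict[str, Any]) -> Dict[str, Any]:
    """Summarize a CheckUpgradeResponse-like dictionary.

    Returns whether a PyPI conflict was reported, the text after
    "Error details:" in the log extract (or the whole extract if that marker
    is missing), and the Cloud Build log URI.
    """
    has_conflict = response.get("containsPypiModulesConflict") == "CONFLICT"
    # Collapse the wrapped log extract into one line before searching it.
    extract = " ".join(response.get("pypiConflictBuildLogExtract", "").split())
    marker = "Error details:"
    details = extract.split(marker, 1)[1].strip() if marker in extract else extract
    return {
        "conflict": has_conflict,
        "details": details,
        "build_log": response.get("buildLogUri"),
    }
```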

As an alternative, you can run an upgrade check asynchronously. Use the --async argument to make an asynchronous call, then check the result with the gcloud composer operations describe command.
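The asynchronous flow can be scripted. The following Python sketch builds the check-upgrade command from this section with --async, and then polls an operation with gcloud composer operations describe until it reports done. It assumes that you pass the operation name that the asynchronous call reports, and that the describe command accepts --location and the global --format=json flag; confirm both against your installed gcloud version. The run parameter is a hypothetical injection point so that the polling logic can be exercised without calling gcloud.

```python
import json
import subprocess
import time
from typing import Any, Callable, Dict, List

Runner = Callable[[List[str]], str]


def run_gcloud(args: List[str]) -> str:
    """Run a command and return its standard output; raises on a non-zero exit code."""
    return subprocess.run(args, check=True, capture_output=True, text=True).stdout


def check_upgrade_command(environment: str, location: str, image: str) -> List[str]:
    """Build the asynchronous check-upgrade command shown in this section."""
    return [
        "gcloud", "beta", "composer", "environments", "check-upgrade", environment,
        "--location", location,
        "--image-version", image,
        "--async",
    ]


def wait_for_operation(
    operation: str,
    location: str,
    run: Runner = run_gcloud,
    poll_seconds: float = 30.0,
    max_polls: int = 60,
) -> Dict[str, Any]:
    """Poll the operation until done; return its response or raise on error."""
    cmd = ["gcloud", "composer", "operations", "describe", operation,
           "--location", location, "--format=json"]
    for _ in range(max_polls):
        op = json.loads(run(cmd))
        if op.get("done"):
            if "error" in op:
                raise RuntimeError(f"operation failed: {op['error']}")
            return op.get("response", {})
        time.sleep(poll_seconds)
    raise TimeoutError(f"operation {operation} not done after {max_polls} polls")
```

For example, run run_gcloud(check_upgrade_command("example-environment", "us-central1", "composer-1.20.12-airflow-1.10.15")), take the operation name from the reported output, and pass it to wait_for_operation.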

API

Construct an environments.checkUpgrade API request.

Specify the image version in the imageVersion field:

{
  "imageVersion": "COMPOSER_IMAGE"
}

Replace COMPOSER_IMAGE with the new Cloud Composer image version that you want to upgrade to, in the form composer-a.b.c-airflow-x.y.z.

Upgrade your environment

To upgrade your environment to a later version of Cloud Composer or Airflow:

Console

In Google Cloud console, go to the Environments page.

In the list of environments, click the name of your environment. The Environment details page opens.

Go to the Environment configuration tab.

Locate the Image version item and click Upgrade.

From the Image version drop-down menu, select a version.

Click Upgrade.

gcloud

gcloud beta composer environments update \
    ENVIRONMENT_NAME \
    --location LOCATION \
    --image-version COMPOSER_IMAGE

Replace:

- ENVIRONMENT_NAME with the name of the environment.
- LOCATION with the region where the environment is located.
- COMPOSER_IMAGE with the new Cloud Composer image version that you want to upgrade to, in the form composer-a.b.c-airflow-x.y.z.

For example:

gcloud beta composer environments update \
    example-environment \
    --location us-central1 \
    --image-version composer-1.20.12-airflow-1.10.15

API

Construct an environments.patch API request (beta). In this request:

- In the updateMask parameter, specify the config.softwareConfig.imageVersion mask.
- In the request body, in the imageVersion field, specify the new Cloud Composer image version that you want to upgrade to, in the form composer-a.b.c-airflow-x.y.z.

For example:

// PATCH https://composer.googleapis.com/v1beta1/projects/example-project/
// locations/us-central1/environments/example-environment?updateMask=
// config.softwareConfig.imageVersion

{
  "config": {
    "softwareConfig": {
      "imageVersion": "composer-1.20.12-airflow-1.10.15"
    }
  }
}
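The PATCH request above can also be assembled in a script. The following Python sketch uses only the standard library to build the URL with the updateMask query parameter and the JSON body for the v1beta1 endpoint. It does not send the request or handle authentication, and build_upgrade_patch is a hypothetical helper name.

```python
import json
from typing import Tuple
from urllib.parse import quote, urlencode

COMPOSER_API_BETA = "https://composer.googleapis.com/v1beta1"


def build_upgrade_patch(project: str, location: str, environment: str, image: str) -> Tuple[str, str]:
    """Return (url, body) for an environments.patch request that sets only the image version."""
    name = f"projects/{project}/locations/{location}/environments/{environment}"
    # updateMask names the single field that this request changes.
    query = urlencode({"updateMask": "config.softwareConfig.imageVersion"})
    url = f"{COMPOSER_API_BETA}/{quote(name)}?{query}"
    body = json.dumps({"config": {"softwareConfig": {"imageVersion": image}}})
    return url, body
```

You can send the resulting URL and body with the HTTP client of your choice, using an OAuth 2.0 access token for authorization.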

Terraform

The image_version field in the config.software_config block controls the Cloud Composer image of your environment. In this field, specify a new Cloud Composer image.

resource "google_composer_environment" "example" {
  provider = google-beta
  name     = "ENVIRONMENT_NAME"
  region   = "LOCATION"

  config {
    software_config {
      image_version = "COMPOSER_IMAGE"
    }
  }
}

Replace:

- ENVIRONMENT_NAME with the name of the environment.
- LOCATION with the region where the environment is located.
- COMPOSER_IMAGE with the new Cloud Composer image version that you want to upgrade to, in the form composer-a.b.c-airflow-x.y.z.

Example:

resource "google_composer_environment" "example" {
  provider = google-beta
  name     = "example-environment"
  region   = "us-central1"

  config {
    software_config {
      image_version = "composer-1.20.12-airflow-1.10.15"
    }
  }
}