
This page describes how to use KubernetesPodOperator to deploy Kubernetes Pods from Cloud Composer into the Google Kubernetes Engine cluster that is part of your Cloud Composer environment.

KubernetesPodOperator launches Kubernetes Pods in your environment's cluster. In comparison, Google Kubernetes Engine operators run Kubernetes Pods in a specified cluster, which can be a separate cluster that is not related to your environment. You can also create and delete clusters using Google Kubernetes Engine operators.

KubernetesPodOperator is a good option if you require:

- Custom Python dependencies that are not available through the public PyPI repository.

- Binary dependencies that are not available in the stock Cloud Composer worker image.

Before you begin

- We recommend using the latest version of Cloud Composer. At a minimum, the version you use must be supported under the deprecation and support policy.

- Make sure that your environment has sufficient resources. Launching Pods into a resource-starved environment can cause Airflow worker and Airflow scheduler errors.

Set up your Cloud Composer environment resources

When you create a Cloud Composer environment, you specify its performance parameters, including performance parameters for the environment's cluster. Launching Kubernetes Pods into the environment's cluster can cause competition for cluster resources, such as CPU or memory. Because the Airflow scheduler and workers run in the same GKE cluster, they won't work correctly if this competition results in resource starvation.

To prevent resource starvation, take one or more of the following actions:

- (Recommended) Create a node pool

- Increase the number of nodes in your environment

- Specify the appropriate machine type

Create a node pool

The preferred way to prevent resource starvation in your Cloud Composer environment is to create a new node pool and configure Kubernetes Pods to run using only resources from that pool.

Console

In the Google Cloud console, go to the Environments page.

Click the name of your environment.

On the Environment details page, go to the Environment configuration tab.

In the Resources > GKE cluster section, follow the view cluster details link.

Create a node pool as described in Adding a node pool.

gcloud

Determine the name of your environment's cluster:

gcloud composer environments describe ENVIRONMENT_NAME \
    --location LOCATION \
    --format="value(config.gkeCluster)"

Replace:

- ENVIRONMENT_NAME with the name of the environment.
- LOCATION with the region where the environment is located.

The output contains the name of your environment's cluster. For example, this can be europe-west3-example-enviro-af810e25-gke.

Create a node pool as described in Adding a node pool.

Increase the number of nodes in your environment

Increasing the number of nodes in your Cloud Composer environment increases the computing power available to your workloads. This increase does not provide additional resources for tasks that require more CPU or RAM than the specified machine type provides.

To increase the number of nodes, update your environment.

Specify the appropriate machine type

During Cloud Composer environment creation, you can specify machine types. To ensure that resources are available, choose machine types that fit the kind of computing that happens in your Cloud Composer environment.

Minimal configuration

To create a KubernetesPodOperator, only the Pod's name, the image to use, and task_id are required. The /home/airflow/composer_kube_config file contains credentials to authenticate to GKE.
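A minimal DAG along these lines could look as follows. It is a sketch, assuming Airflow 2.4 or later (for the `schedule` argument) and a recent apache-airflow-providers-cncf-kubernetes release that exposes the operator in `airflow.providers.cncf.kubernetes.operators.pod`; the DAG ID, task ID, Pod name, and image are hypothetical placeholders.

```python
# Sketch: assumes Airflow 2.4+ and a recent cncf.kubernetes provider.
import datetime

from airflow import models
from airflow.providers.cncf.kubernetes.operators.pod import KubernetesPodOperator

with models.DAG(
    dag_id="kubernetes_pod_minimal_example",  # hypothetical DAG ID
    schedule=None,  # run only when triggered manually
    start_date=datetime.datetime(2024, 1, 1),
    catchup=False,
) as dag:
    # task_id, name, and image are the required parameters.
    pod_ex_minimum = KubernetesPodOperator(
        task_id="pod-ex-minimum",
        name="pod-ex-minimum",
        image="ubuntu:22.04",  # illustrative public image
        cmds=["echo", "hello from the Pod"],
        # Credentials for the environment's GKE cluster.
        config_file="/home/airflow/composer_kube_config",
    )
```

After you upload the file to the environment's DAGs folder and trigger the DAG, the task should start one Pod whose output appears in the task log.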


Pod affinity configuration

When you configure the affinity parameter in KubernetesPodOperator, you control which nodes Pods are scheduled on, for example only nodes in a particular node pool. In this example, the operator runs only on node pools named pool-0 and pool-1. Your Cloud Composer 1 environment nodes are in the default-pool, so your Pods do not run on the nodes in your environment.
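The affinity described above can be written as a plain dictionary that uses Kubernetes' camelCase field names; KubernetesPodOperator converts such a dictionary to a V1Affinity object. The sketch below builds it with a hypothetical helper, node_pool_affinity, and relies on the cloud.google.com/gke-nodepool label that GKE puts on every node:

```python
def node_pool_affinity(pool_names: list[str]) -> dict:
    """Return an affinity dict that restricts Pods to the given GKE node pools.

    node_pool_affinity is a hypothetical helper, not part of Airflow.
    """
    return {
        "nodeAffinity": {
            # Hard requirement: the Pod is scheduled only on matching nodes.
            "requiredDuringSchedulingIgnoredDuringExecution": {
                "nodeSelectorTerms": [
                    {
                        "matchExpressions": [
                            {
                                # GKE labels each node with its node pool name.
                                "key": "cloud.google.com/gke-nodepool",
                                "operator": "In",
                                "values": list(pool_names),
                            }
                        ]
                    }
                ]
            }
        }
    }


if __name__ == "__main__":
    print(node_pool_affinity(["pool-0", "pool-1"]))
```

Passing `affinity=node_pool_affinity(["pool-0", "pool-1"])` to KubernetesPodOperator reproduces the pool-0/pool-1 restriction; the list becomes the `values` entry that determines which node pools must exist.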


As the example is configured, the task fails. If you look at the logs, you can see that the task fails because the pool-0 and pool-1 node pools do not exist.

To make sure that the node pools listed in values exist, make one of the following configuration changes:

- If you created a node pool earlier, replace pool-0 and pool-1 with the names of your node pools, and then upload the DAG again.
- Create a node pool named pool-0 or pool-1. You can create both, but the task needs only one to succeed.
- Replace pool-0 and pool-1 with default-pool, which is the default pool that Airflow uses. Then upload your DAG again.

After you make the changes, wait a few minutes for your environment to update.

Then run the ex-pod-affinity task again and verify that it succeeds.

Additional configuration

This example shows additional parameters that you can configure in KubernetesPodOperator.

See the following resources for more information:

- For information about using Kubernetes Secrets and ConfigMaps, see Use Kubernetes Secrets and ConfigMaps.

- For information about using Jinja templates with KubernetesPodOperator, see Use Jinja templates.

- For information about KubernetesPodOperator parameters, see the operator's reference in the Airflow documentation.
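As a sketch of what such a configuration can include, the following DAG sets several optional parameters. It assumes Airflow 2.4 or later, a recent apache-airflow-providers-cncf-kubernetes release (module `airflow.providers.cncf.kubernetes.operators.pod`), and the kubernetes Python client that the provider depends on; the DAG ID, image, command, labels, and resource values are illustrative only.

```python
# Sketch: assumes Airflow 2.4+ and a recent cncf.kubernetes provider.
import datetime

from airflow import models
from airflow.providers.cncf.kubernetes.operators.pod import KubernetesPodOperator
from kubernetes.client import models as k8s

with models.DAG(
    dag_id="kubernetes_pod_extra_config_example",  # hypothetical DAG ID
    schedule=None,
    start_date=datetime.datetime(2024, 1, 1),
    catchup=False,
) as dag:
    ex_all_configs = KubernetesPodOperator(
        task_id="ex-all-configs",
        name="ex-all-configs",
        image="perl:5.34",  # illustrative public image
        # Container entrypoint and its arguments (override the image defaults).
        cmds=["perl"],
        arguments=["-Mbignum=bpi", "-wle", "print bpi(200)"],
        # Environment variables set inside the container.
        env_vars={"EXAMPLE_VAR": "/example/value"},
        # Labels attached to the Pod object.
        labels={"pod-label": "label-name"},
        # Copy the container's stdout into the Airflow task log.
        get_logs=True,
        # Reuse an image already present on the node instead of always pulling.
        image_pull_policy="IfNotPresent",
        # CPU and memory the container requests, and the limits it cannot exceed.
        container_resources=k8s.V1ResourceRequirements(
            requests={"cpu": "500m", "memory": "512Mi"},
            limits={"cpu": "1", "memory": "1Gi"},
        ),
        config_file="/home/airflow/composer_kube_config",
    )
```

The Kubernetes scheduler uses requests when it places the Pod, so setting requests and limits is one way to keep these Pods from starving the Airflow scheduler and workers that share the cluster.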


Use Jinja templates

Airflow supports Jinja templates in DAGs.

You must declare the required Airflow parameters (task_id, name, and image) with the operator. As shown in the following example, you can template all other parameters with Jinja, including cmds, arguments, env_vars, and config_file.

The env_vars parameter in the example is set from an Airflow variable named my_value. The example DAG gets its value from the var template variable in Airflow. Airflow has more template variables that give access to different kinds of information. For example, you can use the conf template variable to access values of Airflow configuration options. For more information and a list of the variables available in Airflow, see the Templates reference in the Airflow documentation.

Unless you change the DAG or create the my_value variable, the ex-kube-templates task in the example fails because the variable does not exist. Create this variable in the Airflow UI or with Google Cloud CLI:

Airflow UI

Go to the Airflow UI.

In the toolbar, select Admin > Variables.

On the List Variable page, click Add a new record.

On the Add Variable page, enter the following information:

- Key: my_value
- Val: example_value

Click Save.

If your environment uses Airflow 1, follow these steps instead:

Go to the Airflow UI.

In the toolbar, select Admin > Variables.

On the Variables page, click the Create tab.

On the Variables page, enter the following information:

- Key: my_value
- Val: example_value

Click Save.

gcloud

Enter the following command:

gcloud composer environments run ENVIRONMENT \
    --location LOCATION \
    variables set -- \
    my_value example_value

If your environment uses Airflow 1, run the following command:

gcloud composer environments run ENVIRONMENT \
    --location LOCATION \
    variables -- \
    --set my_value example_value

Replace:

- ENVIRONMENT with the name of the environment.
- LOCATION with the region where the environment is located.

The following example shows how to use Jinja templates with KubernetesPodOperator:
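A sketch of such a task, assuming Airflow 2.4 or later and a recent apache-airflow-providers-cncf-kubernetes release; the DAG ID and image are illustrative. task_id, name, and image are plain strings, while cmds, arguments, env_vars, and config_file are templated fields that Airflow renders before the Pod starts.

```python
# Sketch: assumes Airflow 2.4+ and a recent cncf.kubernetes provider.
import datetime

from airflow import models
from airflow.providers.cncf.kubernetes.operators.pod import KubernetesPodOperator

with models.DAG(
    dag_id="kubernetes_pod_template_example",  # hypothetical DAG ID
    schedule=None,
    start_date=datetime.datetime(2024, 1, 1),
    catchup=False,
) as dag:
    ex_kube_templates = KubernetesPodOperator(
        # Required parameters, declared without templates.
        task_id="ex-kube-templates",
        name="ex-kube-templates",
        image="bash",  # illustrative public image
        cmds=["echo"],
        # {{ ds }} renders to the DAG run's logical date as YYYY-MM-DD.
        arguments=["{{ ds }}"],
        # Reads the Airflow variable my_value; rendering fails if it doesn't exist.
        env_vars={"MY_VALUE": "{{ var.value.my_value }}"},
        # config_file is templated too; a literal path renders unchanged.
        config_file="/home/airflow/composer_kube_config",
    )
```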


Use Kubernetes Secrets and ConfigMaps

A Kubernetes Secret is an object that contains sensitive data. A Kubernetes ConfigMap is an object that contains non-confidential data in key-value pairs.

In Cloud Composer 2, you can create Secrets and ConfigMaps using Google Cloud CLI, API, or Terraform, and then access them from KubernetesPodOperator.

About YAML configuration files

When you create a Kubernetes Secret or a ConfigMap using Google Cloud CLI and API, you provide a file in YAML format. This file must follow the same format as Kubernetes Secrets and ConfigMaps. The Kubernetes documentation provides many code samples of ConfigMaps and Secrets. To get started, see Distribute Credentials Securely Using Secrets and ConfigMaps.

As in Kubernetes Secrets, use the base64 representation when you define values in Secrets.

To encode a value, you can use the following command (this is one of many ways to get a base64-encoded value). Put -n before the string so that echo does not append a newline, which would otherwise become part of the encoded value:

echo -n "postgresql+psycopg2://root:example-password@127.0.0.1:3306/example-db" | base64

Output:

cG9zdGdyZXNxbCtwc3ljb3BnMjovL3Jvb3Q6ZXhhbXBsZS1wYXNzd29yZEAxMjcuMC4wLjE6MzMwNi9leGFtcGxlLWRi

GNU coreutils base64 wraps output longer than 76 characters; use base64 -w 0 to keep the value on a single line.

The following two example YAML files are used in examples later in this guide. Example YAML configuration file for a Kubernetes Secret:

apiVersion: v1
kind: Secret
metadata:
  name: airflow-secrets
data:
  sql_alchemy_conn: cG9zdGdyZXNxbCtwc3ljb3BnMjovL3Jvb3Q6ZXhhbXBsZS1wYXNzd29yZEAxMjcuMC4wLjE6MzMwNi9leGFtcGxlLWRi
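The same encoding can be done in Python with the standard base64 module, which encodes exactly the bytes of the string (no trailing newline) and does not wrap long output. The helper names below are hypothetical:

```python
import base64


def encode_secret_value(value: str) -> str:
    """Return the base64 form of value for a Secret's data field.

    b64encode works on the exact UTF-8 bytes, so no newline is added,
    and the result is a single unwrapped line.
    """
    return base64.b64encode(value.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded: str) -> str:
    """Decode a Secret data value back to text, e.g. to check what it holds."""
    return base64.b64decode(encoded).decode("utf-8")


if __name__ == "__main__":
    conn = "postgresql+psycopg2://root:example-password@127.0.0.1:3306/example-db"
    print(encode_secret_value(conn))
```

Decoding an existing value is a quick way to spot a stray newline or other unintended characters before the Secret is created.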

Another example shows how to include a file. As in the previous example, first encode the contents of the file (cat ./key.json | base64), and then provide this value in the YAML file:

apiVersion: v1
kind: Secret
metadata:
  name: service-account
data:
  service-account.json: |
    ewogICJ0eXBl...mdzZXJ2aWNlYWNjb3VudC5jb20iCn0K

Example YAML configuration file for a ConfigMap. You don't need to use the base64 representation in ConfigMaps:

apiVersion: v1
kind: ConfigMap
metadata:
  name: example-configmap
data:
  example_key: example_value
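If you generate these files from a script, the difference between the two kinds of objects comes down to how data is stored: Secret values are base64-encoded, ConfigMap values are plain strings. A minimal sketch with hypothetical helper names; it prints JSON, which `kubectl apply -f` accepts as well as YAML:

```python
import base64
import json


def secret_manifest(name: str, data: dict[str, bytes]) -> dict:
    """Build a v1 Secret manifest; each value in data is stored base64-encoded."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "data": {
            key: base64.b64encode(value).decode("ascii")
            for key, value in data.items()
        },
    }


def configmap_manifest(name: str, data: dict[str, str]) -> dict:
    """Build a v1 ConfigMap manifest; values are stored as plain strings."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name},
        "data": dict(data),
    }


if __name__ == "__main__":
    secret = secret_manifest("airflow-secrets", {"sql_alchemy_conn": b"test_value"})
    configmap = configmap_manifest("example-configmap", {"example_key": "example_value"})
    # Print both manifests as JSON documents.
    print(json.dumps(secret, indent=2))
    print(json.dumps(configmap, indent=2))
```

For a file-based value, pass the file's bytes, for example `{"service-account.json": pathlib.Path("key.json").read_bytes()}`.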

Manage Kubernetes Secrets

In Cloud Composer 2, you create Secrets using Google Cloud CLI and kubectl:

Get information about your environment's cluster:

Run the following command:

gcloud composer environments describe ENVIRONMENT \
    --location LOCATION \
    --format="value(config.gkeCluster)"

Replace:

- ENVIRONMENT with the name of your environment.
- LOCATION with the region where the Cloud Composer environment is located.

The output of this command uses the following format:

projects/<your-project-id>/zones/<zone-of-composer-env>/clusters/<your-cluster-id>

To get the GKE cluster ID, copy the part of the output after /clusters/ (it ends in -gke).

To get the zone, copy the part of the output between /zones/ and /clusters/.
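Extracting these values can also be scripted. The sketch below, with hypothetical function names, splits a string in the projects/.../zones/.../clusters/... format shown above and assembles the get-credentials command used to connect to the cluster:

```python
def parse_cluster_path(path: str) -> tuple[str, str, str]:
    """Split 'projects/<project>/zones/<zone>/clusters/<cluster-id>'.

    Returns (project, zone, cluster_id); raises ValueError for other formats.
    """
    parts = path.strip().split("/")
    if (
        len(parts) != 6
        or parts[0] != "projects"
        or parts[2] != "zones"
        or parts[4] != "clusters"
    ):
        raise ValueError(f"unexpected cluster path: {path!r}")
    return parts[1], parts[3], parts[5]


def get_credentials_command(path: str) -> list[str]:
    """Build the gcloud command that fetches kubectl credentials for the cluster."""
    project, zone, cluster_id = parse_cluster_path(path)
    return [
        "gcloud", "container", "clusters", "get-credentials", cluster_id,
        "--project", project,
        "--zone", zone,
    ]


if __name__ == "__main__":
    example = (
        "projects/example-project/zones/europe-west3-a/"
        "clusters/europe-west3-example-enviro-af810e25-gke"
    )
    print(" ".join(get_credentials_command(example)))
```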

Connect to your GKE cluster with the following command:

gcloud container clusters get-credentials CLUSTER_ID \
    --project PROJECT_ID \
    --zone ZONE

Replace:

- CLUSTER_ID with your environment's cluster ID.
- PROJECT_ID with the Project ID.
- ZONE with the zone where your environment's cluster is located.

Create Kubernetes Secrets:

The following commands demonstrate two different approaches to creating Kubernetes Secrets. The --from-literal approach uses key-value pairs. The --from-file approach uses file contents.

- To create a Kubernetes Secret by providing key-value pairs, run the following command. This example creates a Secret named airflow-secrets that has a sql_alchemy_conn field with the value test_value.

kubectl create secret generic airflow-secrets \
    --from-literal sql_alchemy_conn=test_value

- To create a Kubernetes Secret by providing file contents, run the following command. This example creates a Secret named service-account that has a service-account.json field with the value taken from the contents of a local ./key.json file.

kubectl create secret generic service-account \
    --from-file service-account.json=./key.json

Use Kubernetes Secrets in your DAGs

This example shows two ways of using Kubernetes Secrets: as an environment variable, and as a volume mounted by the Pod.

The first Secret, airflow-secrets, is set to a Kubernetes environment variable named SQL_CONN (as opposed to an Airflow or Cloud Composer environment variable).

The second Secret, service-account, mounts service-account.json, a file with a service account token, to /var/secrets/google.

Here's what the Secret objects look like:
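A sketch of the two Secret objects described in this section, assuming a recent apache-airflow-providers-cncf-kubernetes release where `Secret` is importable from `airflow.providers.cncf.kubernetes.secret`:

```python
# Sketch: assumes a recent cncf.kubernetes provider (import path may differ in old versions).
from airflow.providers.cncf.kubernetes.secret import Secret

# Exposes the sql_alchemy_conn key of the airflow-secrets Secret
# as the environment variable SQL_CONN inside the container.
secret_env = Secret(
    deploy_type="env",
    deploy_target="SQL_CONN",
    secret="airflow-secrets",
    key="sql_alchemy_conn",
)

# Mounts the service-account.json key of the service-account Secret
# as a file under /var/secrets/google.
secret_volume = Secret(
    deploy_type="volume",
    deploy_target="/var/secrets/google",
    secret="service-account",
    key="service-account.json",
)
```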


The first Kubernetes Secret is defined in the secret_env variable. This Secret is named airflow-secrets. The deploy_type parameter specifies that it must be exposed as an environment variable. The environment variable's name is SQL_CONN, as specified in the deploy_target parameter. Finally, the value of the SQL_CONN environment variable is set to the value of the sql_alchemy_conn key.

The second Kubernetes Secret is defined in the secret_volume variable. This Secret is named service-account. It is exposed as a volume, as specified in the deploy_type parameter. The path of the file to mount, deploy_target, is /var/secrets/google. Finally, the key of the Secret that is stored in the deploy_target is service-account.json.

Here's what the operator configuration looks like:
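A sketch of an operator that attaches both Secrets, assuming Airflow 2.4 or later and a recent apache-airflow-providers-cncf-kubernetes release. The Secret objects are written positionally here, in the order deploy_type, deploy_target, secret, key; the DAG ID, task ID, image, and shell command are hypothetical. The command only checks that the values arrived, without printing the secret.

```python
# Sketch: assumes Airflow 2.4+ and a recent cncf.kubernetes provider.
import datetime

from airflow import models
from airflow.providers.cncf.kubernetes.operators.pod import KubernetesPodOperator
from airflow.providers.cncf.kubernetes.secret import Secret

# The two Secrets described above: (deploy_type, deploy_target, secret, key).
secret_env = Secret("env", "SQL_CONN", "airflow-secrets", "sql_alchemy_conn")
secret_volume = Secret(
    "volume", "/var/secrets/google", "service-account", "service-account.json"
)

with models.DAG(
    dag_id="kubernetes_pod_secrets_example",  # hypothetical DAG ID
    schedule=None,
    start_date=datetime.datetime(2024, 1, 1),
    catchup=False,
) as dag:
    ex_kube_secrets = KubernetesPodOperator(
        task_id="ex-kube-secrets",
        name="ex-kube-secrets",
        image="ubuntu:22.04",  # illustrative public image
        # Attach both Secrets: one as an env var, one as a mounted file.
        secrets=[secret_env, secret_volume],
        # Fails if SQL_CONN is empty; lists the mounted file names only.
        cmds=["sh", "-c"],
        arguments=['test -n "$SQL_CONN" && ls /var/secrets/google'],
        # Points Google client libraries in the container at the mounted key file.
        env_vars={
            "GOOGLE_APPLICATION_CREDENTIALS": "/var/secrets/google/service-account.json"
        },
        config_file="/home/airflow/composer_kube_config",
    )
```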


About the CNCF Kubernetes provider

KubernetesPodOperator is implemented in the apache-airflow-providers-cncf-kubernetes provider.

For detailed release notes for the CNCF Kubernetes provider, see the CNCF Kubernetes Provider website.

Version 6.0.0

In version 6.0.0 of the CNCF Kubernetes Provider package, KubernetesPodOperator uses the kubernetes_default connection by default.

If you specified a custom connection in version 5.0.0, the operator still uses this custom connection. To switch back to the kubernetes_default connection, adjust your DAGs accordingly.

Version 5.0.0

This version introduces a few backward-incompatible changes compared to version 4.4.0. The most important ones relate to the kubernetes_default connection, which is not used in version 5.0.0.

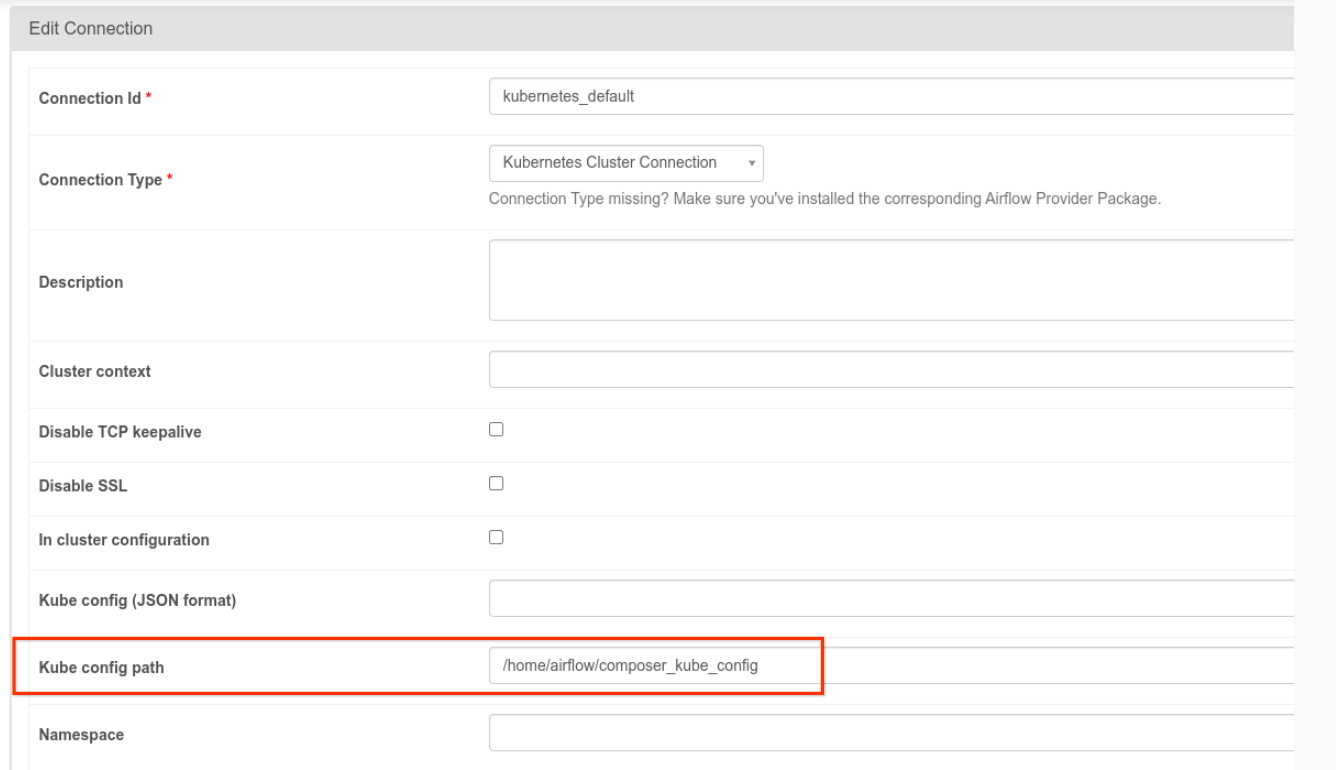

- The kubernetes_default connection needs to be modified. The Kubernetes config path must be set to /home/airflow/composer_kube_config (as shown in the image). Alternatively, config_file must be added to the KubernetesPodOperator configuration (as shown in the following code example).

- Modify the code of a task that uses KubernetesPodOperator in the following way:

KubernetesPodOperator(
    # config_file parameter - can be skipped if connection contains this setting
    config_file="/home/airflow/composer_kube_config",
    # definition of connection to be used by the operator
    kubernetes_conn_id='kubernetes_default',
    ...
)

For more information about version 5.0.0, see the CNCF Kubernetes Provider Release Notes.

Troubleshooting

This section provides advice for troubleshooting common KubernetesPodOperator issues:

View logs

When troubleshooting issues, you can check logs in the following order:

Airflow task logs:

In the Google Cloud console, go to the Environments page.

In the list of environments, click the name of your environment. The Environment details page opens.

Go to the DAGs tab.

Click the name of the DAG, and then click the DAG run to view its details and logs.

Airflow scheduler logs:

Go to the Environment details page.

Go to the Logs tab.

Inspect the Airflow scheduler logs.

Pod logs in the Google Cloud console, under GKE workloads. These logs include the Pod definition YAML file, Pod events, and Pod details.

Non-zero return codes

When you use KubernetesPodOperator (and GKEStartPodOperator), the return code of the container's entry point determines whether the task is considered successful. Non-zero return codes indicate failure.

A common pattern is to run a shell script as the container entry point to group together multiple operations within the container.

If you write such a script, we recommend that you include the set -e command at the top of the script, so that a failed command terminates the script and propagates the failure to the Airflow task instance.
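The effect of set -e on the exit code, which is what KubernetesPodOperator looks at, can be checked locally without a cluster. This sketch, with a hypothetical run_entrypoint helper, runs two small scripts through `sh -c` the way a container entry point might and returns their exit codes; it assumes a POSIX `sh` on the PATH:

```python
import subprocess


def run_entrypoint(script: str) -> int:
    """Run script with `sh -c`, like a container entry point, and return its exit code."""
    completed = subprocess.run(["sh", "-c", script], capture_output=True, text=True)
    return completed.returncode


# Without set -e, the failing `false` is ignored and the script exits with the
# status of its last command (echo), which is 0: the task would look successful.
SCRIPT_WITHOUT_SET_E = "false\necho 'still running'\n"

# With set -e, the shell stops at the first failing command and exits non-zero,
# so the failure reaches the Airflow task instance.
SCRIPT_WITH_SET_E = "set -e\nfalse\necho 'never printed'\n"

if __name__ == "__main__":
    print("without set -e:", run_entrypoint(SCRIPT_WITHOUT_SET_E))
    print("with set -e:", run_entrypoint(SCRIPT_WITH_SET_E))
```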

Pod timeouts

The default timeout for KubernetesPodOperator is 120 seconds, which can cause a timeout before a larger image finishes downloading. You can increase the timeout by changing the startup_timeout_seconds parameter when you create the KubernetesPodOperator.
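For example, a task that pulls a large image might raise the limit to ten minutes. The value 600, the DAG and task IDs, and the image path below are hypothetical; the sketch assumes Airflow 2.4 or later and a recent apache-airflow-providers-cncf-kubernetes release:

```python
# Sketch: assumes Airflow 2.4+ and a recent cncf.kubernetes provider.
import datetime

from airflow import models
from airflow.providers.cncf.kubernetes.operators.pod import KubernetesPodOperator

with models.DAG(
    dag_id="kubernetes_pod_large_image_example",  # hypothetical DAG ID
    schedule=None,
    start_date=datetime.datetime(2024, 1, 1),
    catchup=False,
) as dag:
    ex_large_image = KubernetesPodOperator(
        task_id="ex-large-image",
        name="ex-large-image",
        # Hypothetical large image in Artifact Registry.
        image="europe-west3-docker.pkg.dev/example-project/example-repo/large-image:latest",
        # Wait up to 600 seconds (instead of the default 120) for the Pod to start,
        # which leaves time for the image pull.
        startup_timeout_seconds=600,
        config_file="/home/airflow/composer_kube_config",
    )
```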

When a Pod times out, the task-specific log is available in the Airflow UI. For example:

Executing <Task(KubernetesPodOperator): ex-all-configs> on 2018-07-23 19:06:58.133811
Running: ['bash', '-c', u'airflow run kubernetes-pod-example ex-all-configs 2018-07-23T19:06:58.133811 --job_id 726 --raw -sd DAGS_FOLDER/kubernetes_pod_operator_sample.py']
Event: pod-name-9a8e9d06 had an event of type Pending
...
...
Event: pod-name-9a8e9d06 had an event of type Pending
Traceback (most recent call last):
  File "/usr/local/bin/airflow", line 27, in <module>
    args.func(args)
  File "/usr/local/lib/python2.7/site-packages/airflow/bin/cli.py", line 392, in run
    pool=args.pool,
  File "/usr/local/lib/python2.7/site-packages/airflow/utils/db.py", line 50, in wrapper
    result = func(*args, **kwargs)
  File "/usr/local/lib/python2.7/site-packages/airflow/models.py", line 1492, in _run_raw_task
    result = task_copy.execute(context=context)
  File "/usr/local/lib/python2.7/site-packages/airflow/contrib/operators/kubernetes_pod_operator.py", line 123, in execute
    raise AirflowException('Pod Launching failed: {error}'.format(error=ex))
airflow.exceptions.AirflowException: Pod Launching failed: Pod took too long to start

Pod timeouts can also occur when the Cloud Composer service account lacks the IAM permissions required for the task at hand. To verify this, look at Pod-level errors by using the GKE dashboards to view the logs for your particular workload, or use Cloud Logging.

Failed to establish a new connection

Auto-upgrade is enabled by default in GKE clusters. If a node pool is in a cluster that is being upgraded, you might see the following error:

<Task(KubernetesPodOperator): gke-upgrade> Failed to establish a new connection: [Errno 111] Connection refused

To check whether your cluster is being upgraded, in the Google Cloud console, go to the Kubernetes clusters page and look for the loading icon next to your environment's cluster name.