Google Kubernetes Engine (GKE)

Kubernetes, evolved: The foundation for platform builders

Put your containers on autopilot and securely run your enterprise workloads at scale—with little to no Kubernetes operational expertise required.

Get one free zonal or Autopilot cluster per month. Plus, new customers get $300 in free credits to try out GKE.

Features

Simplified cluster management and improved resource efficiency

GKE Autopilot is a mode of operation in which Google manages your node infrastructure, scaling, security, and pre-configured features. With automatic capacity right-sizing and per-pod pricing, you can avoid overprovisioning, overpaying, and underutilization. With Autopilot’s container-optimized compute platform, you get near real-time, vertically and horizontally scalable compute that provides the capacity you need, when you need it, at the best price and performance.

Production-ready platform for agentic AI workloads and gen AI models

With support for up to 65,000-node clusters, integration with AI Hypercomputer, and GPU and TPU support, GKE makes it easy to run ML, HPC, and other workloads that benefit from specialized hardware accelerators.

GKE inference capabilities, with gen AI-aware scaling and load balancing techniques, help reduce serving costs by over 30% and tail latency by 60%, and increase throughput by up to 40%, compared to other managed and open source Kubernetes offerings.

Secure by design

GKE runs on a secure-by-design foundation, now enhanced with always-on essential security enabled by default. Get instant visibility into cluster misconfigurations, risks, and agentless scanning for critical vulnerabilities directly in the GKE security dashboard. Protect data-in-use with hardware-based encryption on Confidential GKE Nodes and isolate workloads with GKE Sandbox. Use built-in IaC scanning to proactively detect misconfigurations in your Terraform plans before they reach production.

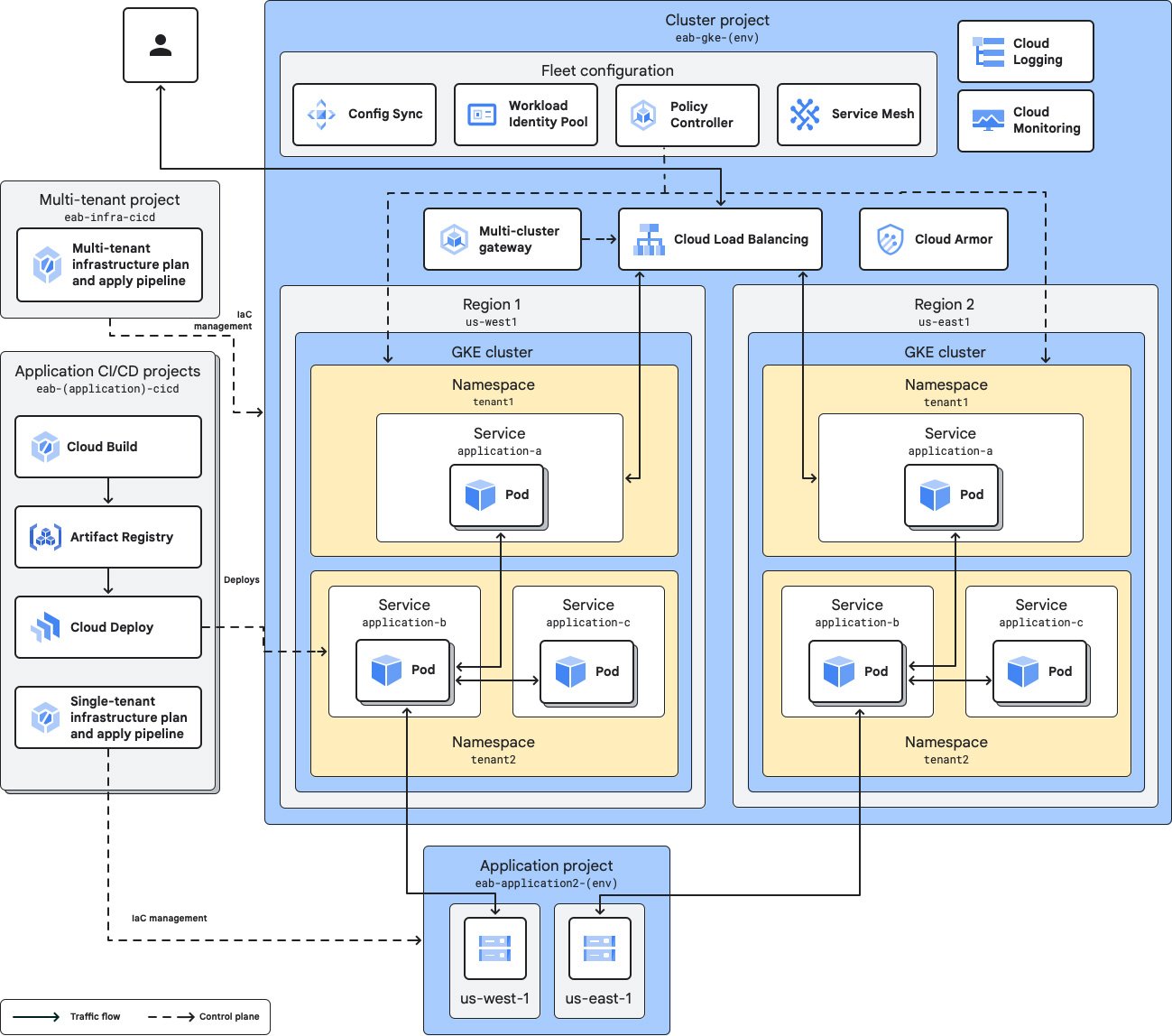

Multi-team and multi-cluster management

Fleets and Teams can be used to organize clusters and workloads and assign resources to multiple teams easily to improve velocity and delegate ownership. Team scopes let you define subsets of fleet resources on a per-team basis, with each scope associated with one or more fleet member clusters.

You might choose multiple clusters to separate services across environments, tiers, locales, teams, or infrastructure providers. Fleets strive to make managing multiple clusters as easy as possible.

Workload portability with multi-cloud support

GKE runs Certified Kubernetes and embraces open standards to let customers run their applications, unmodified, on existing on-premises hardware investments or in the public cloud.

GKE attached clusters lets you register, or attach, any conformant Kubernetes cluster you’ve created yourself to the GKE management environment. Attaching a cluster gives you GKE management and control over it, along with access to additional features like Config Sync, Cloud Service Mesh, and Fleets.

How It Works

Within each GKE cluster, GKE manages the Kubernetes control plane life cycle from cluster creation to deletion. With Autopilot enabled, GKE can also manage your nodes, including automated provisioning, scaling, and scheduling. Or, you can opt for more control and manage the nodes yourself.

Build platforms for all of your workloads

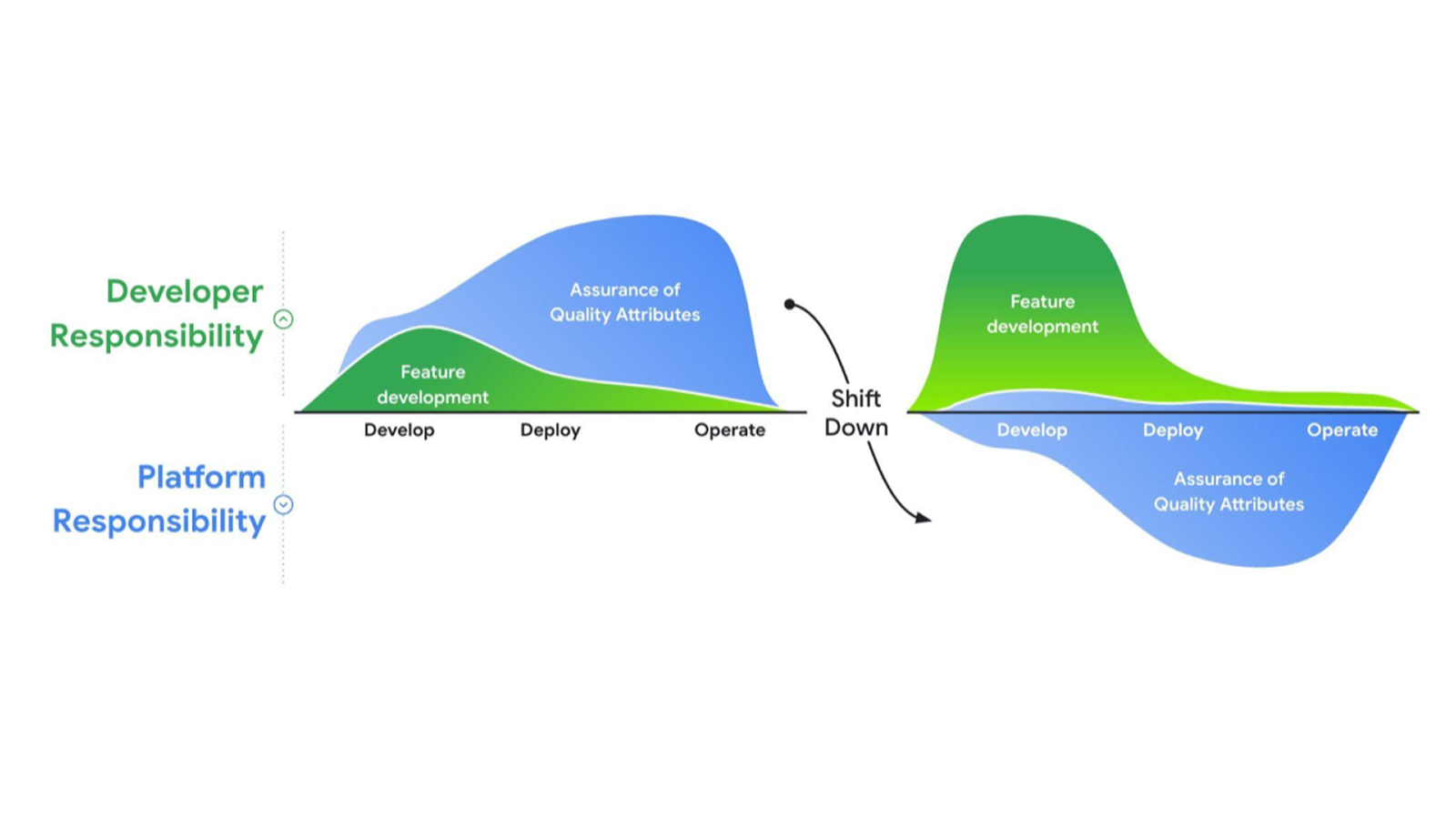

Build an enterprise developer platform for fast, reliable app delivery

Google Cloud offers a comprehensive suite of managed services and runtimes that act as building blocks for your platform, so you can find the right combination of services for your use cases. GKE’s deep integration with the Google Cloud ecosystem, unmatched scalability, and built-in security posture make it an ideal foundation for your platform.

What is platform engineering?

Train, serve, and scale gen AI models

Deploy gen AI inference with GKE

GKE not only provides a platform for AI, but it also simplifies and automates Kubernetes operations with AI. With support for up to 65,000 nodes and integration with AI Hypercomputer, you can train and scale your largest gen AI models on GKE.

Plus, GKE’s gen AI-aware inference capabilities provide up to 30% lower serving costs, 60% lower tail latency, and 40% higher throughput than open source Kubernetes.

Multi-agent orchestration

Deploy and orchestrate multi-agent applications

Agentic AI is centered around the orchestration and execution of agents that use LLMs as a "brain" to perform actions through tools.

GKE is the definitive open platform to support agents and orchestrate your compute so you can embrace the next generation of agentic AI workloads.

Pricing

How GKE pricing works

After free credits are used, the total cost is based on cluster operation mode, cluster management fees, and applicable inbound data transfer fees.

| Service | Description | Price (USD) |
|---|---|---|
| Free tier | The GKE free tier provides $74.40 in monthly credits per billing account that are applied to zonal and Autopilot clusters. | Free |
| Cluster management fee | Includes fully automated cluster life cycle management, pod and cluster autoscaling, cost visibility, automated infrastructure cost optimization, and multi-cluster management features, at no extra cost. | $0.10 per cluster per hour |
| Compute | When using Autopilot, only pay for the CPU, memory, and compute resources that are provisioned for your pods. For node pools and compute classes that don't use Autopilot, you're billed for the nodes' underlying Compute Engine instances until the nodes are deleted. | Refer to Compute Engine pricing |

Learn more about GKE pricing. View all pricing details.
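
To see how the cluster management fee and the free tier credit relate, here is a minimal Python sketch of the arithmetic. It uses only the two figures quoted above ($0.10 per cluster per hour and $74.40 in monthly credits). The function names are hypothetical, and the sketch assumes that every cluster is eligible for the credit and that the credit offsets only the management fee. Compute, data transfer, and any other charges are left out, so treat the result as a rough estimate rather than a bill.

```python
from decimal import Decimal

# Figures quoted in the pricing section above.
MANAGEMENT_FEE_PER_HOUR = Decimal("0.10")    # USD per cluster per hour
FREE_TIER_MONTHLY_CREDIT = Decimal("74.40")  # USD per billing account per month


def management_fee(clusters: int, hours: int) -> Decimal:
    """Gross cluster management fee for `clusters` clusters running `hours` hours each."""
    return MANAGEMENT_FEE_PER_HOUR * clusters * hours


def fee_after_credit(clusters: int, hours: int) -> Decimal:
    """Management fee left after the monthly credit is applied.

    Assumption (not stated in the source): all clusters are eligible
    zonal or Autopilot clusters on a single billing account, and the
    credit is applied only against the management fee.
    """
    gross = management_fee(clusters, hours)
    return max(Decimal("0"), gross - FREE_TIER_MONTHLY_CREDIT)


if __name__ == "__main__":
    hours_in_31_day_month = 24 * 31
    for clusters in (1, 3):
        gross = management_fee(clusters, hours_in_31_day_month)
        net = fee_after_credit(clusters, hours_in_31_day_month)
        print(f"{clusters} cluster(s): gross ${gross}, after credit ${net}")
```

Under these assumptions, one cluster running for all 744 hours of a 31-day month accrues a management fee of exactly $74.40, which is the size of the monthly credit. That is consistent with the offer of one free zonal or Autopilot cluster per month.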

Business Case

Learn from GKE customers

Rembrand reduces multicloud complexity with GKE

By leveraging Google Cloud GPUs, Rembrand cut training costs by 50% and accelerated development 6x.

Unlock AI innovation on GKE

AI-powered advertising provider Moloco gets 10x faster model training times with TPUs on GKE.

With TPUs on GKE, HubX reduces latency by up to 66%, leading to a better user experience and increased conversion rates.

10 years and counting: Why Signify chose GKE. With GKE as its foundation, the Philips Hue Platform has scaled its infrastructure to support a 1,150% increase in transactions and commands over the past decade.