Gemini Enterprise Agent Platform

Innova, crea e implementa agentes listos para empresas

Gemini Enterprise Agent Platform es la plataforma integral de Google Cloud para que los desarrolladores creen, escalen, administren y optimicen agentes. Es un destino único para que los equipos técnicos creen agentes que puedan transformar las aplicaciones y los flujos de trabajo empresariales en potentes sistemas de agentes.

Los clientes nuevos obtienen hasta $300 en créditos gratuitos para probar Agent Platform y otros productos de Google Cloud.

Funciones

Crea, escala, administra y optimiza agentes de IA de nivel empresarial

Agent Platform es nuestra plataforma abierta y completa que les permite a las empresas crear, escalar, administrar y optimizar rápidamente agentes de nivel empresarial basados en sus datos. Proporciona la base full stack y la amplia variedad de opciones para desarrolladores que necesitas para transformar tus aplicaciones y flujos de trabajo en sistemas agentes potentes a escala global.

Desarrollo y flujos de trabajo potenciados por agentes

Google Antigravity ahora está disponible a través de Agent Platform y proporciona una app centralizada para dirigir, personalizar y organizar agentes. Puedes implementar varios agentes para ejecutar simultáneamente flujos de trabajo completos, como lanzamientos de productos, lo que automatiza la generación de código para tu sitio web, la creación de activos de marcas y la producción de correos electrónicos para clientes. Descarga Antigravity y accede a la aplicación para computadoras de escritorio o a la CLI de Antigravity con tus credenciales estándar de Google Cloud.

Más de 200 modelos y herramientas de IA de Google y terceros

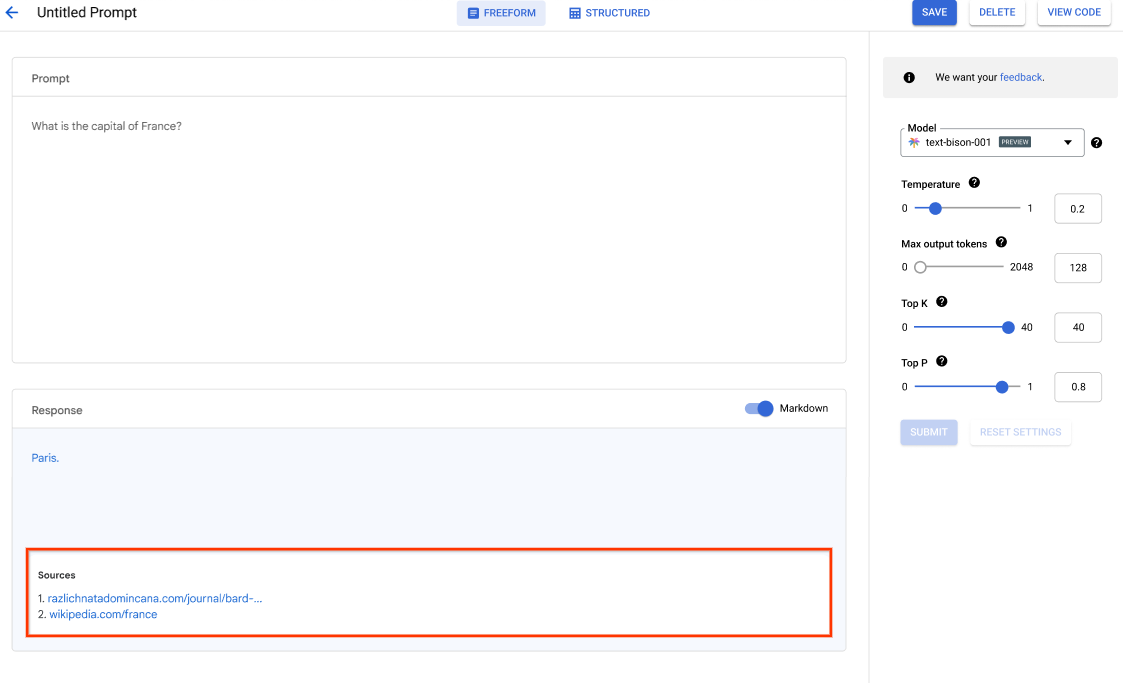

Elige entre los modelos multimodales más recientes de Google (como Gemini 3.5), modelos de terceros (como la familia de modelos Claude de Anthropic) y modelos de código abierto (como Gemma) en Model Garden. También puedes personalizar los modelos según tu caso de uso con una variedad de opciones de ajuste.

Nuestro servicio de evaluación de modelos ofrece herramientas de nivel empresarial para la evaluación objetiva y basada en datos de modelos de IA generativa.

Plataforma de IA integrada y abierta

Los científicos de datos pueden avanzar más rápido con las herramientas de Agent Platform para entrenar, ajustar e implementar modelos de AA.

Los notebooks de Agent Platform, incluida tu elección de Colab Enterprise o Workbench, se integran de forma nativa en BigQuery, lo que proporciona una sola plataforma en todas las cargas de trabajo de IA y datos.

El entrenamiento y la predicción de Agent Platform te ayudan a reducir el tiempo de entrenamiento y a implementar modelos en producción con facilidad a través de los frameworks de código abierto que elijas y la infraestructura de IA optimizada.

MLOps para IA predictiva y generativa

Agent Platform ofrece herramientas de MLOps específicas para que los científicos de datos y los ingenieros de AA automaticen, estandaricen y administren proyectos de AA.

Las herramientas modulares te ayudan a colaborar con los equipos y a mejorar los modelos durante todo el ciclo de vida del desarrollo. Identifica el mejor modelo para un caso de uso con Model Evaluation de IA generativa, organiza flujos de trabajo con canalizaciones, administra cualquier modelo con Model Registry, entrega, comparte y reutiliza atributos de AA con Feature Store, y supervisa los modelos para el sesgo de entrada y el desvío.

Cómo funciona

Agent Platform ofrece varias opciones para la creación de agentes, el entrenamiento de modelos y la implementación:

- Agent Platform te permite crear, escalar, administrar y optimizar agentes listos para la empresa en una plataforma unificada.

- Agent Studio te brinda acceso a grandes modelos, incluido Gemini 3, para que puedas evaluarlos, ajustarlos e implementarlos, y usarlos en tus aplicaciones potenciadas por IA.

- Model Garden te permite descubrir, probar, personalizar e implementar en Agent Platform, y seleccionar modelos y elementos de código abierto (OSS).

- El entrenamiento personalizado te brinda control total sobre el proceso de entrenamiento, incluido el uso de tu marco de trabajo de AA preferido, la escritura de tu propio código de entrenamiento y la elección de las opciones de ajuste de hiperparámetros.

Agent Platform ofrece varias opciones para la creación de agentes, el entrenamiento de modelos y la implementación:

- Agent Platform te permite crear, escalar, administrar y optimizar agentes listos para la empresa en una plataforma unificada.

- Agent Studio te brinda acceso a grandes modelos, incluido Gemini 3, para que puedas evaluarlos, ajustarlos e implementarlos, y usarlos en tus aplicaciones potenciadas por IA.

- Model Garden te permite descubrir, probar, personalizar e implementar en Agent Platform, y seleccionar modelos y elementos de código abierto (OSS).

- El entrenamiento personalizado te brinda control total sobre el proceso de entrenamiento, incluido el uso de tu marco de trabajo de AA preferido, la escritura de tu propio código de entrenamiento y la elección de las opciones de ajuste de hiperparámetros.

Crea e implementa agentes de IA

Aprovecha las capacidades avanzadas de IA con la Agent Platform

Aprovecha las capacidades avanzadas de IA con la Agent Platform

Crea agentes y aplicaciones de IA generativa listos para producción en una plataforma que se adapta a tu crecimiento. Nuestra plataforma de agentes ofrece un entorno seguro para desarrollar e implementar modelos y aplicaciones de IA.

Para los desarrolladores, Agent Platform sigue siendo nuestra plataforma avanzada en la que pueden crear, personalizar y ajustar agentes sofisticados con frameworks como el Kit de desarrollo de agentes (ADK).

Comienza con este codelab y crea tu primera aplicación de IA hoy mismo

Instructivos, guías de inicio rápido y labs

Aprovecha las capacidades avanzadas de IA con la Agent Platform

Aprovecha las capacidades avanzadas de IA con la Agent Platform

Crea agentes y aplicaciones de IA generativa listos para producción en una plataforma que se adapta a tu crecimiento. Nuestra plataforma de agentes ofrece un entorno seguro para desarrollar e implementar modelos y aplicaciones de IA.

Para los desarrolladores, Agent Platform sigue siendo nuestra plataforma avanzada en la que pueden crear, personalizar y ajustar agentes sofisticados con frameworks como el Kit de desarrollo de agentes (ADK).

Comienza con este codelab y crea tu primera aplicación de IA hoy mismo

Compila con modelos de Gemini

Comienza a crear con Agent Studio

Comienza a crear con Agent Studio

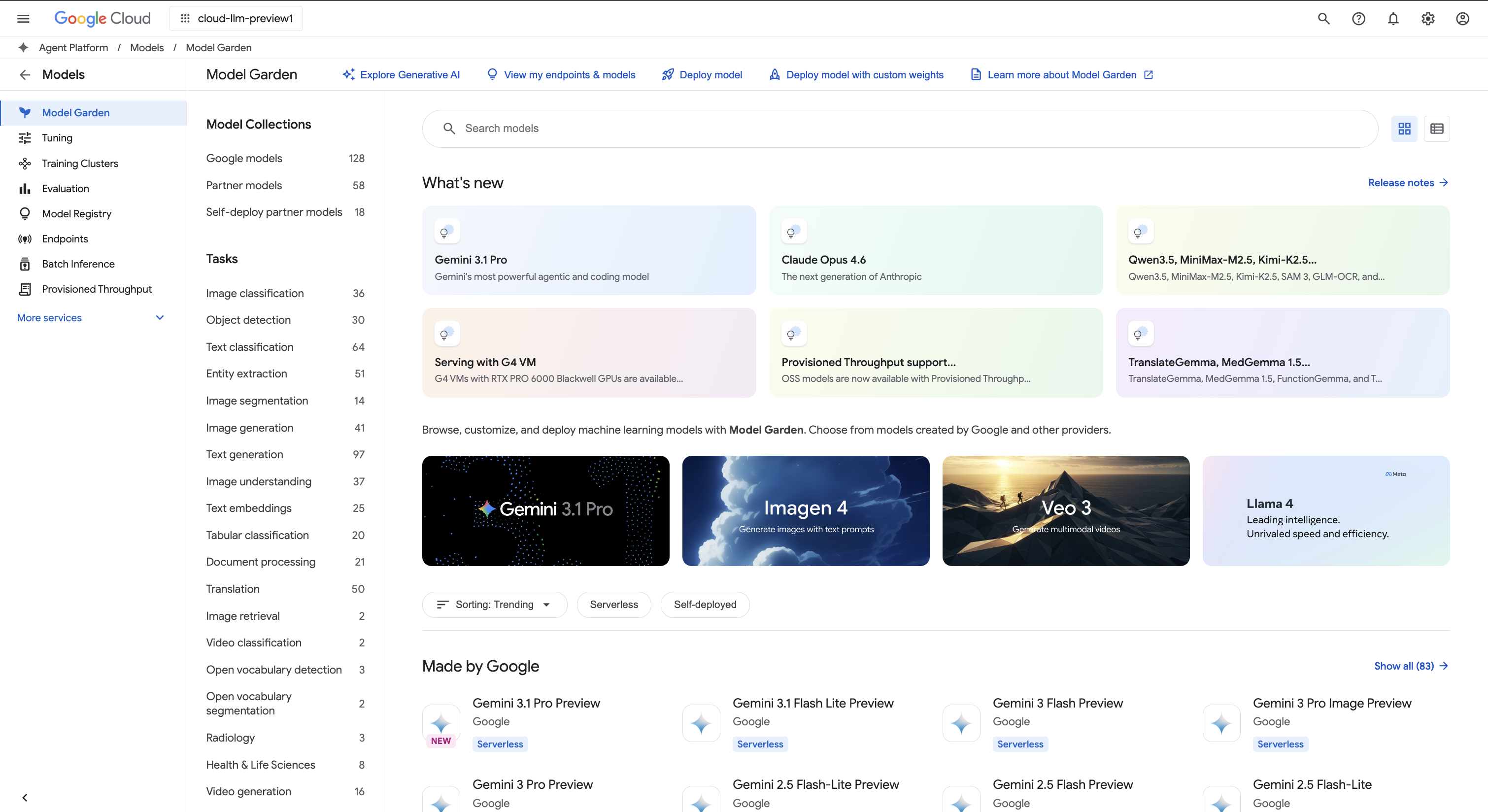

Usa Agent Studio para diseñar, probar y administrar instrucciones para modelos de Gemini con lenguaje natural, código, imágenes o video. Prueba instrucciones de muestra para extraer texto de las imágenes, realizar simulaciones de imágenes en formato HTML o incluso generar respuestas sobre las imágenes o los videos subidos.

También puedes comenzar a probar Gemini en Agent Platform con una clave de API.

Accede a modelos de Gemini a través de la API de Gemini en Agent Platform

- Python

- JavaScript

- Java

- Ir

- Curl

Instructivos, guías de inicio rápido y labs

Comienza a crear con Agent Studio

Comienza a crear con Agent Studio

Usa Agent Studio para diseñar, probar y administrar instrucciones para modelos de Gemini con lenguaje natural, código, imágenes o video. Prueba instrucciones de muestra para extraer texto de las imágenes, realizar simulaciones de imágenes en formato HTML o incluso generar respuestas sobre las imágenes o los videos subidos.

También puedes comenzar a probar Gemini en Agent Platform con una clave de API.

Muestra de código

Accede a modelos de Gemini a través de la API de Gemini en Agent Platform

- Python

- JavaScript

- Java

- Ir

- Curl

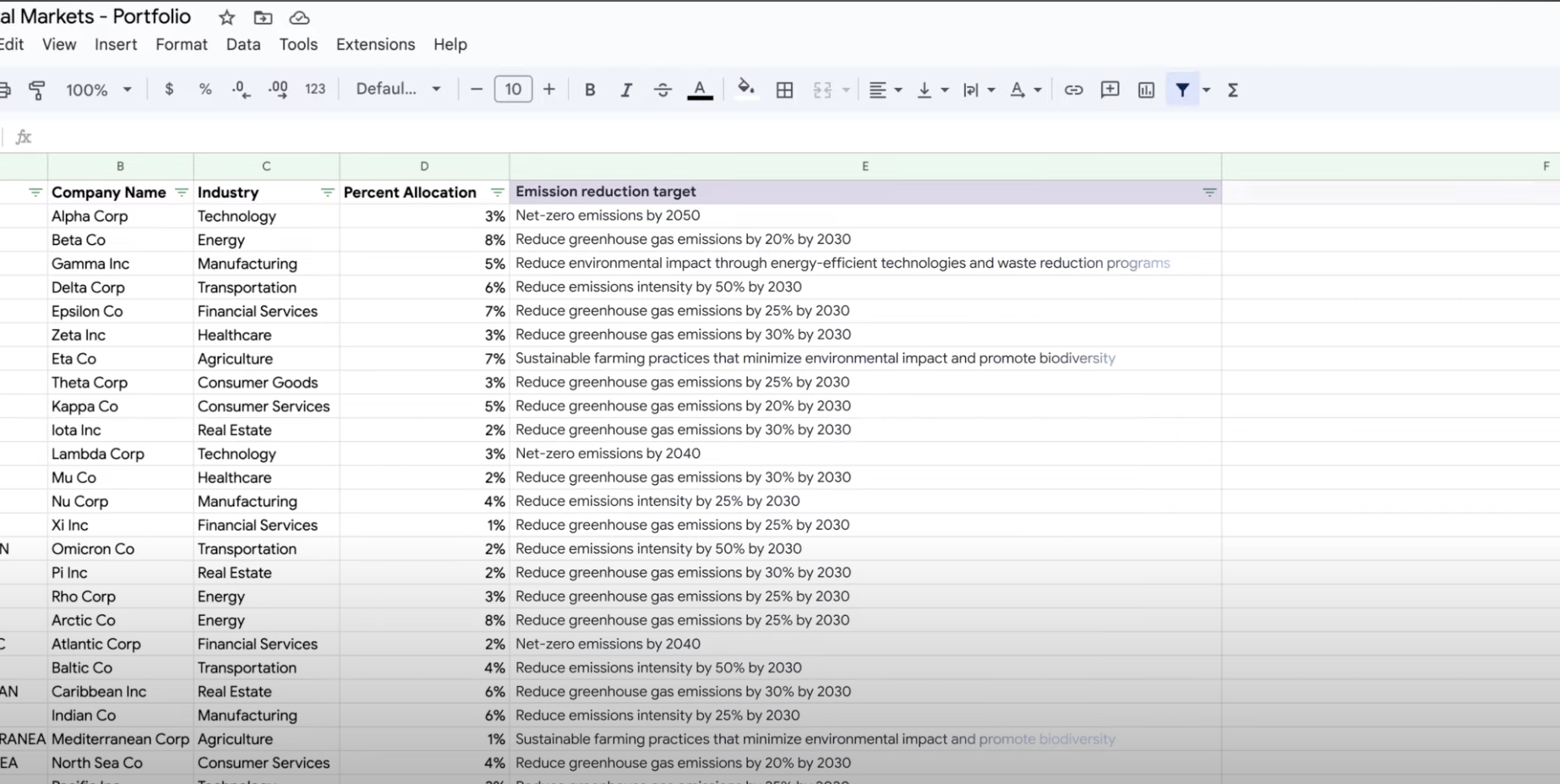

Extrae, resume y clasifica datos

Usa IA generativa para resumir, clasificar y extraer

Usa IA generativa para resumir, clasificar y extraer

Aprende a crear instrucciones de texto para controlar cualquier cantidad de tareas con la asistencia de la IA generativa de Agent Platform. Algunas de las tareas más comunes son la clasificación, el resumen y la extracción. Gemini en Agent Platform te permite diseñar instrucciones con flexibilidad en términos de su estructura y formato.

Instructivos, guías de inicio rápido y labs

Usa IA generativa para resumir, clasificar y extraer

Usa IA generativa para resumir, clasificar y extraer

Aprende a crear instrucciones de texto para controlar cualquier cantidad de tareas con la asistencia de la IA generativa de Agent Platform. Algunas de las tareas más comunes son la clasificación, el resumen y la extracción. Gemini en Agent Platform te permite diseñar instrucciones con flexibilidad en términos de su estructura y formato.

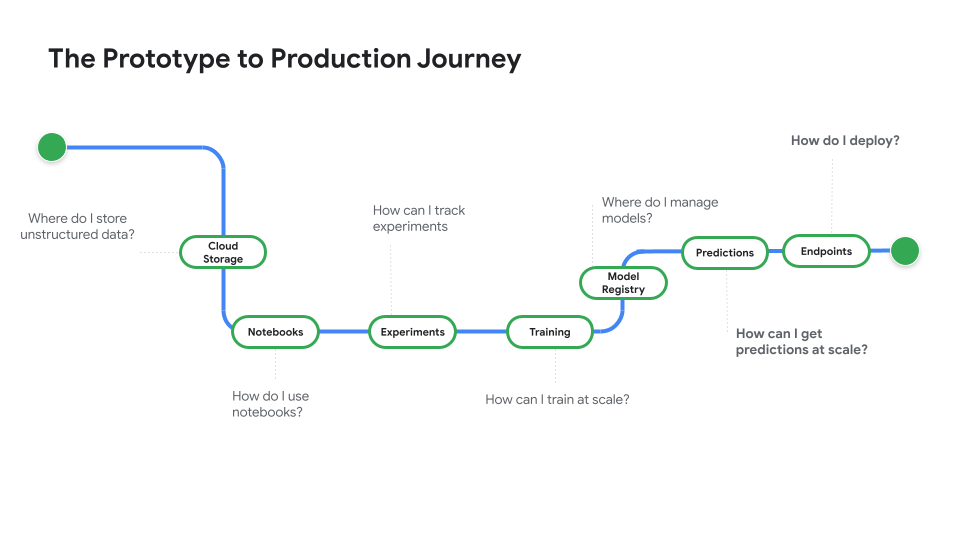

Implementa un modelo para usar en la producción

Implementa modelos para predicciones en línea o por lotes

Implementa modelos para predicciones en línea o por lotes

Cuando tengas todo listo para usar tu modelo para resolver un problema real, regístralo en Model Registry y usa el servicio de predicción de Agent Platform para realizar predicciones por lotes y en línea.

Mira Del prototipo a la producción, una serie de videos que te llevará del código de notebook a un modelo implementado.

Instructivos, guías de inicio rápido y labs

Implementa modelos para predicciones en línea o por lotes

Implementa modelos para predicciones en línea o por lotes

Cuando tengas todo listo para usar tu modelo para resolver un problema real, regístralo en Model Registry y usa el servicio de predicción de Agent Platform para realizar predicciones por lotes y en línea.

Mira Del prototipo a la producción, una serie de videos que te llevará del código de notebook a un modelo implementado.

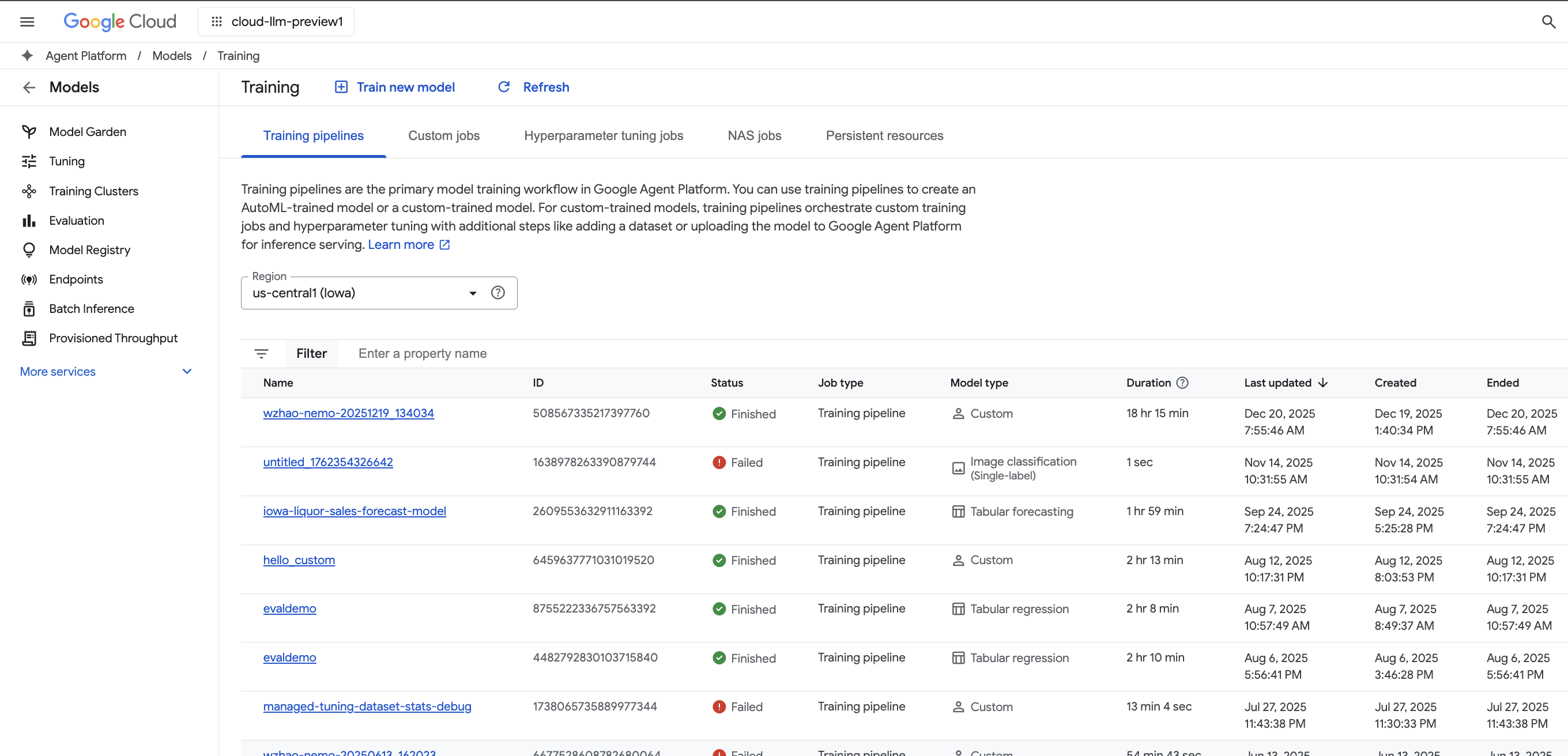

Entrenamiento de modelos personalizados

Descripción general y documentación del entrenamiento de modelos personalizados

Descripción general y documentación del entrenamiento de modelos personalizados

Obtén una descripción general del flujo de trabajo del entrenamiento personalizado en Agent Platform, sus beneficios y las diversas opciones disponibles. En esta página, también se detalla cada paso del flujo de trabajo del entrenamiento del AA, desde la preparación de los datos hasta las predicciones.

Mira un video explicativo de los pasos necesarios para entrenar modelos personalizados en Agent Platform.

Instructivos, guías de inicio rápido y labs

Descripción general y documentación del entrenamiento de modelos personalizados

Descripción general y documentación del entrenamiento de modelos personalizados

Obtén una descripción general del flujo de trabajo del entrenamiento personalizado en Agent Platform, sus beneficios y las diversas opciones disponibles. En esta página, también se detalla cada paso del flujo de trabajo del entrenamiento del AA, desde la preparación de los datos hasta las predicciones.

Mira un video explicativo de los pasos necesarios para entrenar modelos personalizados en Agent Platform.

Precios

| Cómo funcionan los precios de Agent Platform | Paga por las herramientas de Agent Platform, el almacenamiento, el procesamiento y los recursos de Cloud que uses. Los clientes nuevos obtienen $300 en créditos gratuitos para probar Agent Platform y otros productos de Google Cloud. | |

|---|---|---|

| Herramientas y uso | Descripción | Precio |

IA generativa | Modelo de imagen para la generación de imágenes Según los precios de la entrada de imágenes, la entrada de caracteres o del entrenamiento personalizado. | A partir de $0.0001 |

Generación de mensajes, chat y código Según cada 1,000 caracteres de entrada (mensaje) y cada 1,000 caracteres de salida (respuesta). | A partir de $0.0001 por cada 1,000 caracteres | |

Modelos entrenados de forma personalizada | Entrenamiento personalizado de modelos Según el tipo de máquina que se usa por hora, la región y los aceleradores utilizados. Obtén una estimación con el equipo de ventas o nuestra calculadora de precios. | Comunicarse con Ventas |

Notebooks de Agent Platform | Recursos de procesamiento y almacenamiento Según las mismas tarifas que Compute Engine y Cloud Storage. | Consulta los productos |

Tarifas de administración Además del uso de recursos mencionado anteriormente, se aplican tarifas de administración según la región, las instancias, los notebooks y los notebooks administrados que se usen. Ver detalles. | Consulta los detalles | |

Canalizaciones de Agent Platform | Tarifas de ejecución y adicionales Según el cargo de ejecución, los recursos usados y cualquier cargo de servicio adicional. | A partir de $0.03 por ejecución de canalización |

Búsqueda de vectores de Agent Platform | Costos de entrega y compilación Según el tamaño de tus datos, la cantidad de consultas por segundo (QPS) que deseas ejecutar y la cantidad de nodos que usas. Ver ejemplo. | Consulta el ejemplo |

Consulta los detalles de precios de todas las funciones y los servicios de Agent Platform.

Cómo funcionan los precios de Agent Platform

Paga por las herramientas de Agent Platform, el almacenamiento, el procesamiento y los recursos de Cloud que uses. Los clientes nuevos obtienen $300 en créditos gratuitos para probar Agent Platform y otros productos de Google Cloud.

IA generativa

Modelo de imagen para la generación de imágenes

Según los precios de la entrada de imágenes, la entrada de caracteres o del entrenamiento personalizado.

Starting at

$0.0001

Generación de mensajes, chat y código

Según cada 1,000 caracteres de entrada (mensaje) y cada 1,000 caracteres de salida (respuesta).

Starting at

$0.0001

por cada 1,000 caracteres

Modelos entrenados de forma personalizada

Entrenamiento personalizado de modelos

Según el tipo de máquina que se usa por hora, la región y los aceleradores utilizados. Obtén una estimación con el equipo de ventas o nuestra calculadora de precios.

Comunicarse con Ventas

Notebooks de Agent Platform

Recursos de procesamiento y almacenamiento

Según las mismas tarifas que Compute Engine y Cloud Storage.

Consulta los productos

Tarifas de administración

Además del uso de recursos mencionado anteriormente, se aplican tarifas de administración según la región, las instancias, los notebooks y los notebooks administrados que se usen. Ver detalles.

Consulta los detalles

Canalizaciones de Agent Platform

Tarifas de ejecución y adicionales

Según el cargo de ejecución, los recursos usados y cualquier cargo de servicio adicional.

Starting at

$0.03

por ejecución de canalización

Búsqueda de vectores de Agent Platform

Costos de entrega y compilación

Según el tamaño de tus datos, la cantidad de consultas por segundo (QPS) que deseas ejecutar y la cantidad de nodos que usas. Ver ejemplo.

Consulta el ejemplo

Consulta los detalles de precios de todas las funciones y los servicios de Agent Platform.

Comienza tu prueba de concepto

Caso empresarial

Aprovecha todo el potencial de la IA generativa

"La precisión de la solución de IA generativa de Google Cloud y la practicidad de Agent Platform nos dan la confianza que necesitábamos para implementar esta tecnología de vanguardia en el centro de nuestra empresa y lograr nuestro objetivo a largo plazo de obtener un tiempo de respuesta de cero minutos".

Abdol Moabery, director general de GA Telesis

Contenido relacionado

Informes de analistas

Se nombró a Google líder en la Evaluación de proveedores de software de modelos de base de ciclo de vida de IA generativa de IDC MarketScape de 2025. Descargue el informe

Se nombró a Google líder en el Cuadrante Mágico™ de Gartner para plataformas de desarrollo de aplicaciones de IA, 4º trimestre de 2025. Leer el informe

Se nombró líder a Google en el informe The Forrester Wave™: AI/ML Platforms del 3ᵉʳ trim. de 2024. Leer el informe