Secure AI innovation without interruption

Innovation can’t scale without security. Discover how to build, deploy, and govern AI responsibly—with visibility, control, and trust built into every layer.

Google Cloud helps teams build, deploy, and govern AI responsibly

Securing the foundations

Protect your AI innovation with Google Cloud IAM

Learn about the latest innovations in IAM, access risk, cloud governance, and Gemini-powered assistance.

Securing the AI lifecycle

Unsupervised autonomy: How to secure AI agents and limit risk

Learn how Google Cloud helps secure the entire AI agent life cycle with visibility, data protection, and threat mitigation.

Building trust through transparency and sovereignty

Sovereign Cloud from Google: The power of choice

Learn how Google’s Sovereign Cloud delivers the power of choice with residency, access, and transparency controls.

Real-world outcomes: Securing AI at scale

Organizations across industries are already using AI protection capabilities to scale AI safely and confidently.

Secure what's next

Explore the latest threat intelligence and AI-driven security strategies to protect and accelerate your innovation.

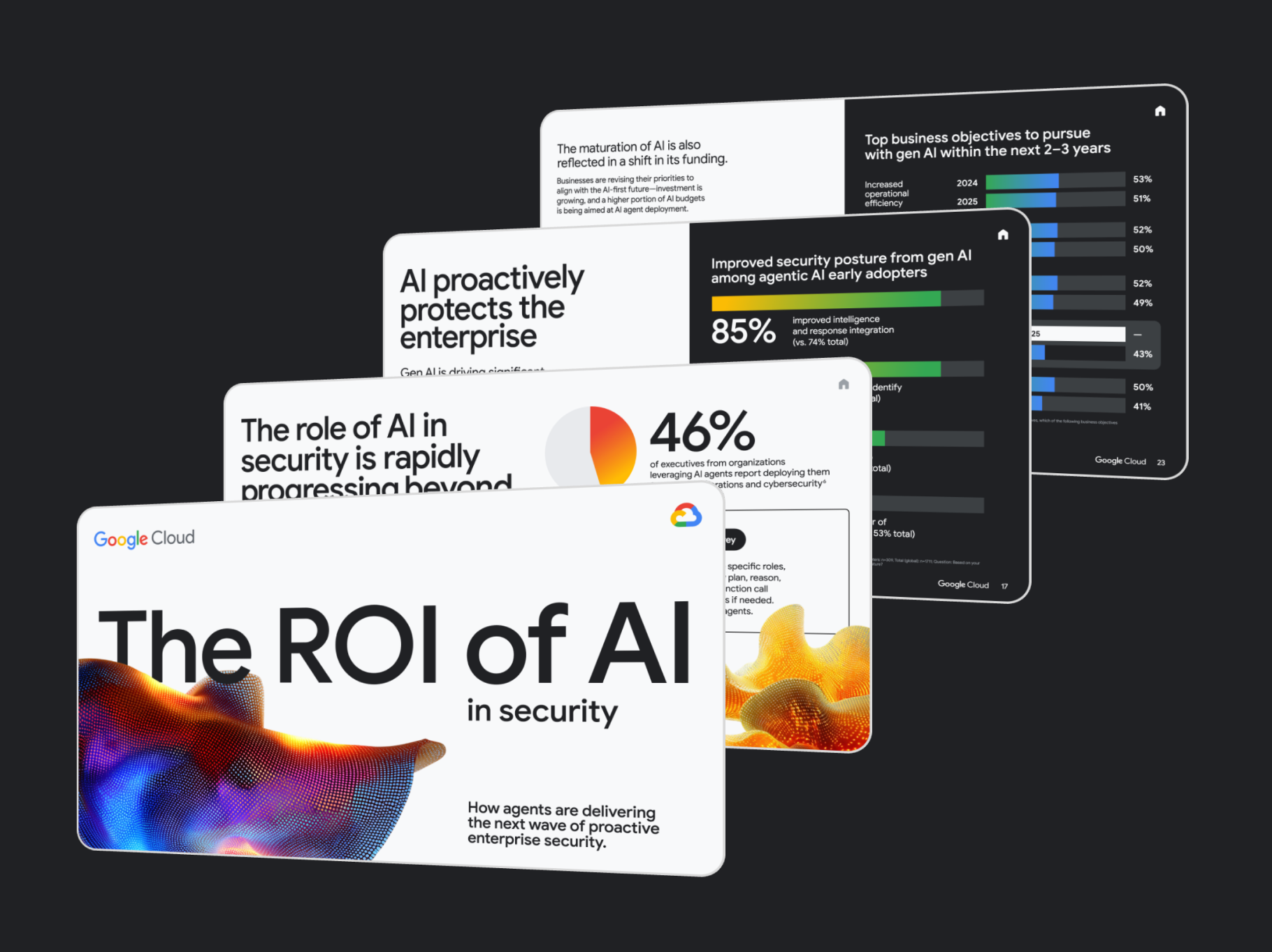

The State of AI Security and Governance

Download the full survey report to understand the critical state of AI security and governance and learn how to build the mature policies needed to accelerate adoption with confidence.

Download the report

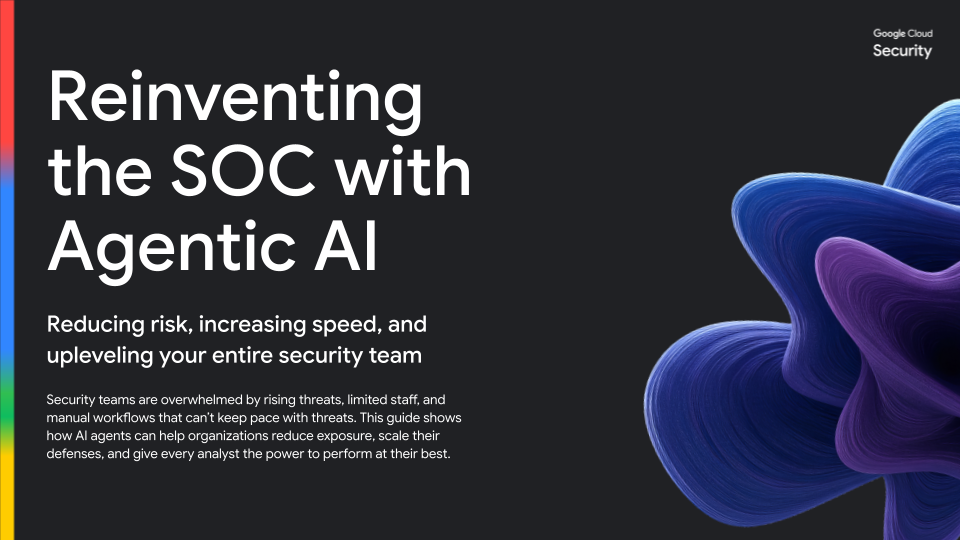

Cybersecurity Forecast 2026

Explore the future of AI-driven threats. Learn how adversaries are operationalizing "Shadow Agents," deepfakes, and prompt injection, and how defenders can stay ahead.

Download the forecast

Reinventing the SOC with Agentic AI

Discover how to reinvent your security operations with agentic AI to reduce risk, increase speed, and empower your entire security team.

Download the ebook

Ready to get started?

Google Cloud equips you with the frameworks, controls, and expertise to innovate responsibly—from model to market. Whether you are assessing risk, structuring governance, or deploying autonomous agents, we offer a clear path forward.

- Apply the Secure AI Framework (SAIF): Take a practical approach to addressing AI security challenges with a conceptual framework for secure AI systems. Take the assessment.

- Secure the entire AI stack and life cycle: Confidently build, deploy, run, and govern your AI workloads in a secure, compliant, and private manner. Learn more.

- Securing the use of AI: Assess the architecture, data defenses, and applications built on AI models with expert-led threat readiness and AI-specific assessments from Mandiant Consulting. Talk to an expert.

Frequently Asked Questions

What are the top security risks when adopting Generative AI?

According to the 2025 State of AI Security and Governance Report, the top risks cited by enterprises are sensitive data exposure (52%) and regulatory compliance (50%). Other emerging threats include Shadow AI, prompt injection, and model theft. A comprehensive defense requires securing the data, the model, and the user interaction layer simultaneously.

What is Shadow AI and how can my organization detect it?

Shadow AI occurs when employees use unsanctioned models or datasets, bypassing IT governance. Detecting it requires automated discovery tools. Google Cloud’s Security Command Center provides a real-time inventory of all AI assets, including unauthorized "shadow" models, so security teams have the visibility to apply consistent security policies to them and reduce risk without disrupting business workflows.

Do I need specialized security skills to secure AI workloads?

While skill gaps are a common barrier (cited by 53% of organizations), you don’t need to build everything from scratch. Google Cloud embeds AI security directly into its existing platform. Tools like Security Command Center use AI to summarize threats and recommend fixes, helping your existing security team manage AI risks without needing data science expertise.

How do I secure Agentic AI and non-human identities?

Securing Agentic AI requires treating autonomous agents as high-value identities. Unlike standard users, agents need strict Identity and Access Management (IAM) policies that enforce "least privilege." Google Cloud helps you map agent interaction paths and assign specific agent identities, ensuring they can only access the data required for their specific tasks.
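As a rough illustration of least privilege for agent identities, the sketch below audits an IAM policy document for roles granted to an agent beyond an approved set. It assumes the standard IAM policy JSON shape (a `bindings` list of `role` and `members` entries). The service account name, the approved role set, and the helper `excess_roles` are hypothetical, and this is a local check rather than a call to any Google Cloud API.

```python
from typing import Dict, List, Set

# Hypothetical agent identity and the only roles it is supposed to hold.
AGENT = "serviceAccount:report-agent@example-project.iam.gserviceaccount.com"
APPROVED_ROLES: Set[str] = {"roles/storage.objectViewer"}


def excess_roles(policy: Dict, member: str, approved: Set[str]) -> List[str]:
    """Return roles bound to `member` that are not in the approved set."""
    found = []
    for binding in policy.get("bindings", []):
        if member in binding.get("members", []) and binding["role"] not in approved:
            found.append(binding["role"])
    return sorted(found)


# Example policy in the IAM policy JSON shape (contents are made up).
policy = {
    "bindings": [
        {"role": "roles/storage.objectViewer", "members": [AGENT]},
        {"role": "roles/editor", "members": [AGENT, "user:alice@example.com"]},
    ]
}

print(excess_roles(policy, AGENT, APPROVED_ROLES))  # prints ['roles/editor']
```

A check like this could run in a review pipeline to flag agents that have picked up broad roles such as `roles/editor`. The approved set per agent would come from your own governance policy.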

Can Google Cloud secure third-party and open-source AI models?

Yes. Google Cloud supports a multi-model security strategy. Whether you are using first-party models like Gemini or third-party open-source models on Vertex AI, you can apply unified runtime guardrails using Model Armor. This protects your stack from prompt injections and data leakage regardless of the underlying model provider.

How does Google Cloud protect against prompt injection attacks?

Model Armor provides a dedicated security layer that screens prompts for injection attempts and sensitive data before they reach the model, eliminating the need for custom-coded interceptors. It detects and blocks malicious prompts (such as attempts to jailbreak the model) and filters unsafe content before it reaches your applications, protecting both your data and your brand reputation.

How can I ensure my AI models don't leak private data?

Preventing data leakage requires a "defense-in-depth" approach. Google Cloud offers Confidential Computing to encrypt data while it is being processed (in use), along with Data Loss Prevention (DLP) controls that can automatically scan and redact sensitive information (like PII) from model responses before they are shown to users.
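To make the redaction step concrete, here is a minimal sketch of scanning a model response and masking sensitive values before display. It is a toy stand-in, not the Google Cloud DLP API: the two regular expressions, the `PATTERNS` table, and the `redact` helper are illustrative assumptions, and a managed service detects far more data types than this.

```python
import re

# Deliberately simple patterns for illustration; real detectors cover far more.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


# Hypothetical model response containing made-up personal data.
response = "Contact jane.doe@example.com, SSN 123-45-6789, about the refund."
print(redact(response))
# prints: Contact [EMAIL], SSN [US_SSN], about the refund.
```

Redaction sits between the model and the user, so the same step applies whichever model produced the response.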

How does Google Cloud ensure data sovereignty for AI workloads?

For regulated industries, data sovereignty means keeping sensitive data within specific physical and digital boundaries. Google Sovereign Cloud enables you to adopt AI while maintaining full control over data residency and encryption keys (using External Key Manager), ensuring compliance with regional regulations like GDPR or local data laws.

What is the Secure AI Framework (SAIF)?

The Secure AI Framework (SAIF) is Google’s blueprint for responsible AI adoption, designed to help organizations integrate security into their AI development lifecycle. It aligns with industry standards to help you assess risks, automate controls, and build a culture of security that scales as fast as your AI innovation.

What is the difference between governance and security for AI?

Security focuses on technical defenses (like firewalls and encryption), while governance focuses on policy, accountability, and risk management. As highlighted in our AI Innovation Without Interruption guide, successful AI adoption requires both: robust security tools to enforce controls, and a governance framework to define who is responsible for AI decisions and data usage.