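For Cloud Run services, each revision is automatically scaled to the number of instances needed to handle all incoming requests.

When more instances process requests, more CPU and memory are used, which increases costs.

To give you more control, Cloud Run provides a maximum concurrent requests per instance setting, which specifies the maximum number of requests that a given instance can process at the same time.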

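Maximum concurrent requests per instance

You can configure the maximum concurrent requests per instance. By default, each Cloud Run instance can receive up to 80 requests at the same time; you can increase this to a maximum of 1000.

Although the default value is recommended, you can lower the maximum concurrency if needed. For example, if your code cannot process concurrent requests, set concurrency to 1.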

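The specified concurrency value is an upper limit. If an instance's CPU is already highly utilized, Cloud Run might send fewer requests to that instance than the limit allows. In that case, the instance might show that the maximum concurrency is not being used. For example, if CPU utilization stays high, Cloud Run might scale up the number of instances instead.

The following diagram shows how the maximum concurrent requests per instance setting affects the number of instances needed to handle incoming concurrent requests: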
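The same relationship can also be sketched numerically. The snippet below is a minimal illustration, not Cloud Run's actual autoscaling algorithm: the function name `instances_needed` is hypothetical, and the calculation deliberately ignores CPU-based scaling, instance startup time, and any minimum or maximum instance settings. It only computes the smallest number of instances that could hold a given number of in-flight requests under a concurrency limit.

```python
import math


def instances_needed(concurrent_requests: int, max_concurrency: int) -> int:
    """Smallest instance count that can hold all in-flight requests.

    Hypothetical helper for illustration only; real autoscaling also
    reacts to CPU utilization and other signals.
    """
    if max_concurrency < 1:
        raise ValueError("max_concurrency must be at least 1")
    return math.ceil(concurrent_requests / max_concurrency)


# Compare a few concurrency limits for 400 requests in flight at once.
for limit in (1, 8, 80):
    print(f"max concurrency {limit}: {instances_needed(400, limit)} instances")
```

With 400 requests in flight, a limit of 1 needs 400 instances, a limit of 8 needs 50, and the default of 80 needs 5.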

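Tune concurrency for autoscaling and resource utilization

Adjusting the maximum concurrency per instance significantly affects how your service scales and uses resources.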

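- Lower concurrency: forces Cloud Run to use more instances for the same request volume, because each instance handles fewer requests. This can improve responsiveness for applications that are not optimized for high internal parallelism, or for applications that you want to scale more quickly in response to request load.

- Higher concurrency: lets each instance handle more requests, which can reduce the number of active instances and lower cost. This suits applications that handle concurrent I/O-bound work efficiently or that can genuinely use multiple vCPUs to process concurrent requests.

Start with the default concurrency (80), monitor your application's performance and utilization closely, and adjust as needed.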

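Concurrency with multi-vCPU instances

Tuning concurrency is especially important if your service uses multiple vCPUs but your application is single-threaded or effectively single-threaded (CPU-bound).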

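- vCPU hotspots: on a multi-vCPU instance, a single-threaded application might max out one vCPU while the others sit idle. The Cloud Run CPU autoscaler measures average CPU utilization across all vCPUs. In this scenario, the average can stay low, which prevents effective CPU-based scaling.

- Using concurrency to drive scaling: if CPU-based autoscaling is ineffective because of vCPU hotspots, lowering the maximum concurrency becomes an important tool. vCPU hotspots often occur when a multi-vCPU instance is chosen for a single-threaded application because of its high memory needs. Using concurrency to drive scaling forces scaling based on request throughput. This ensures that more instances start to handle the load, which reduces per-instance queuing and latency.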
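The averaging effect behind a vCPU hotspot is easy to see with a little arithmetic. The sketch below assumes a hypothetical 4-vCPU instance and made-up utilization figures; `average_cpu_utilization` is an illustrative helper, not a Cloud Run API, and no particular autoscaler threshold is implied.

```python
def average_cpu_utilization(per_vcpu: list[float]) -> float:
    """Mean utilization across vCPUs, each given as a fraction from 0.0 to 1.0."""
    return sum(per_vcpu) / len(per_vcpu)


# Hypothetical 4-vCPU instance running a single-threaded, CPU-bound app:
# one vCPU is saturated while the other three are idle.
hotspot = [1.0, 0.0, 0.0, 0.0]

print(f"busiest vCPU: {max(hotspot):.0%}")
print(f"average across vCPUs: {average_cpu_utilization(hotspot):.0%}")
```

The busiest vCPU is at 100%, yet the average is only 25%, so a signal based on the average alone would not indicate that the instance is already saturated.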

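When should you limit concurrency to one request at a time?

You can limit concurrency so that only one request at a time is sent to each running instance. Consider doing so in the following cases:

- Each request uses most of the available CPU or memory.

- Your container image is not designed to handle multiple requests at the same time, for example, if your container relies on global state that two requests cannot share.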
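To make the global-state case concrete, here is a small, self-contained sketch. It is not Cloud Run code: the handler `handle`, the module-level variable `current_user`, and the user names are all hypothetical, and `asyncio.sleep(0)` merely stands in for I/O that lets another request run on the same instance.

```python
import asyncio

# Module-level state shared by every request handled in this process.
current_user = None


async def handle(user: str) -> tuple[str, str]:
    """Store the caller in global state, wait on simulated I/O, then read it back."""
    global current_user
    current_user = user
    await asyncio.sleep(0)  # yields control so another request can run
    return user, current_user


async def concurrent() -> list[tuple[str, str]]:
    # Two requests overlap on the same instance (concurrency above 1).
    return list(await asyncio.gather(handle("alice"), handle("bob")))


async def one_at_a_time() -> list[tuple[str, str]]:
    # Requests never overlap (what a concurrency of 1 guarantees).
    return [await handle("alice"), await handle("bob")]


print("concurrent:   ", asyncio.run(concurrent()))
print("one at a time:", asyncio.run(one_at_a_time()))
```

When the two requests overlap, the first request reads back "bob" instead of "alice" because the second request overwrote the shared variable. Handled one at a time, each request sees its own value. Removing the shared state is the more durable fix; limiting concurrency to 1 is the workaround when the code cannot be changed.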

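Note that a concurrency of 1 is likely to hurt scaling performance, because many instances have to start up to handle a spike in incoming requests. For more considerations, see "Throughput versus latency tradeoffs".

Case study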

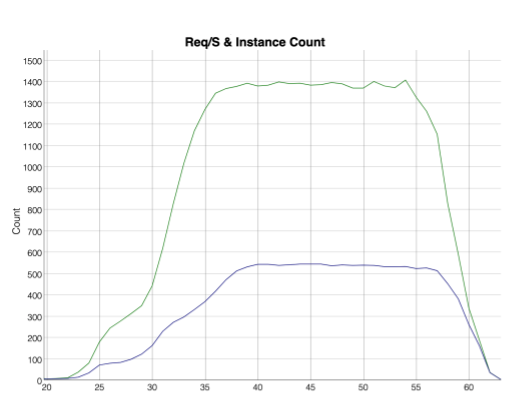

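The following metrics show a use case in which 400 clients make 3 requests per second to a Cloud Run service whose maximum concurrent requests per instance is set to 1. The green line at the top shows the number of requests over time, and the blue line at the bottom shows the number of instances started to handle them.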

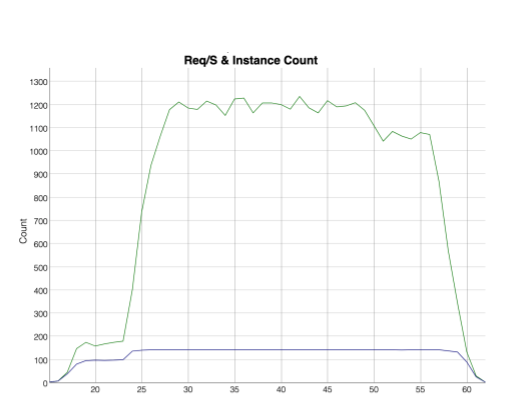

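The following metrics show 400 clients making 3 requests per second to a Cloud Run service whose maximum concurrent requests per instance is set to 80. The green line at the top shows the number of requests over time, and the blue line at the bottom shows the number of instances started to handle them. Notice that far fewer instances are needed to handle the same request volume.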
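A back-of-the-envelope estimate shows why the two settings differ so much. The sketch below rests on assumptions that are not in the case study: it reads the load as each of the 400 clients sending 3 requests per second, it picks a hypothetical average request latency of 0.5 seconds, and it uses Little's law (requests in flight = arrival rate × latency). The resulting numbers are illustrative and are not the values shown in the charts.

```python
import math

CLIENTS = 400
REQUESTS_PER_CLIENT_PER_S = 3   # assumed to be per client
ASSUMED_LATENCY_S = 0.5         # hypothetical; not stated in the case study


def estimate_instances(max_concurrency: int) -> int:
    """Rough instance estimate from Little's law; ignores CPU-based scaling."""
    request_rate = CLIENTS * REQUESTS_PER_CLIENT_PER_S   # requests per second
    in_flight = request_rate * ASSUMED_LATENCY_S         # requests in flight
    return math.ceil(in_flight / max_concurrency)


for limit in (1, 80):
    print(f"max concurrency {limit}: about {estimate_instances(limit)} instances")
```

Under these assumptions, roughly 600 requests are in flight at any moment, which works out to about 600 instances at a concurrency of 1 and about 8 at a concurrency of 80.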

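Concurrency for source deployments

When concurrency is enabled, Cloud Run does not isolate concurrent requests processed by the same instance from one another. In that case, you must make sure that your code is safe to run concurrently. To change this behavior, set a different concurrency value. We recommend starting with a lower concurrency, such as 8, and increasing it gradually. Starting with a concurrency that is too high can cause unintended behavior because of resource constraints such as memory or CPU.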

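Language runtimes can also affect concurrency. The following list describes the impact for some languages:

- Node.js: Node.js is inherently single-threaded. To take full advantage of concurrency, use JavaScript's asynchronous code style, which is idiomatic in Node.js. For details, see "Asynchronous flow control" in the official Node.js documentation.

- Python 3.8 and later: supporting high concurrency per instance requires enough threads to handle the concurrent requests. We recommend setting a runtime environment variable so that the thread count equals the concurrency value, for example, THREADS=8.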
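As an illustration of matching thread count to concurrency, the sketch below shows one way application code could size its own worker pool from the same variable. This is an assumption made for the example: how the Python runtime itself consumes THREADS is not described here, and `thread_count` and the fallback of 8 are hypothetical.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def thread_count(default: int = 8) -> int:
    """Read THREADS from the environment; fall back to `default` if unset or invalid."""
    raw = os.environ.get("THREADS", "")
    try:
        value = int(raw)
    except ValueError:
        return default
    return value if value > 0 else default


# Hypothetical application-level pool sized to the configured concurrency.
executor = ThreadPoolExecutor(max_workers=thread_count())
print(f"worker threads: {thread_count()}")
```

Keeping the pool size equal to the concurrency setting means every request the instance accepts can have a thread available, without creating threads that would never be used.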

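What's next

To manage the maximum concurrent requests per instance of your Cloud Run services, see "Setting maximum concurrent requests per instance".

To optimize your maximum concurrent requests per instance setting, see the development tips for tuning concurrency.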