Google Cloud's 8th generation TPUs: The computing foundation for the agentic era

Accelerate the AI lifecycle with specialized architectures purpose-built for frontier-model training and real-time reasoning.

Connect with a Google Cloud specialist to learn more.

The way we build and deploy AI is undergoing a profound shift. As models evolve from providing simple predictions to executing multi-step reasoning loops, the architectural requirements for training and inference have diverged sharply. Training demands massive compute throughput and scale-up bandwidth, while real-time inference requires massive memory bandwidth and ultra-low latency.

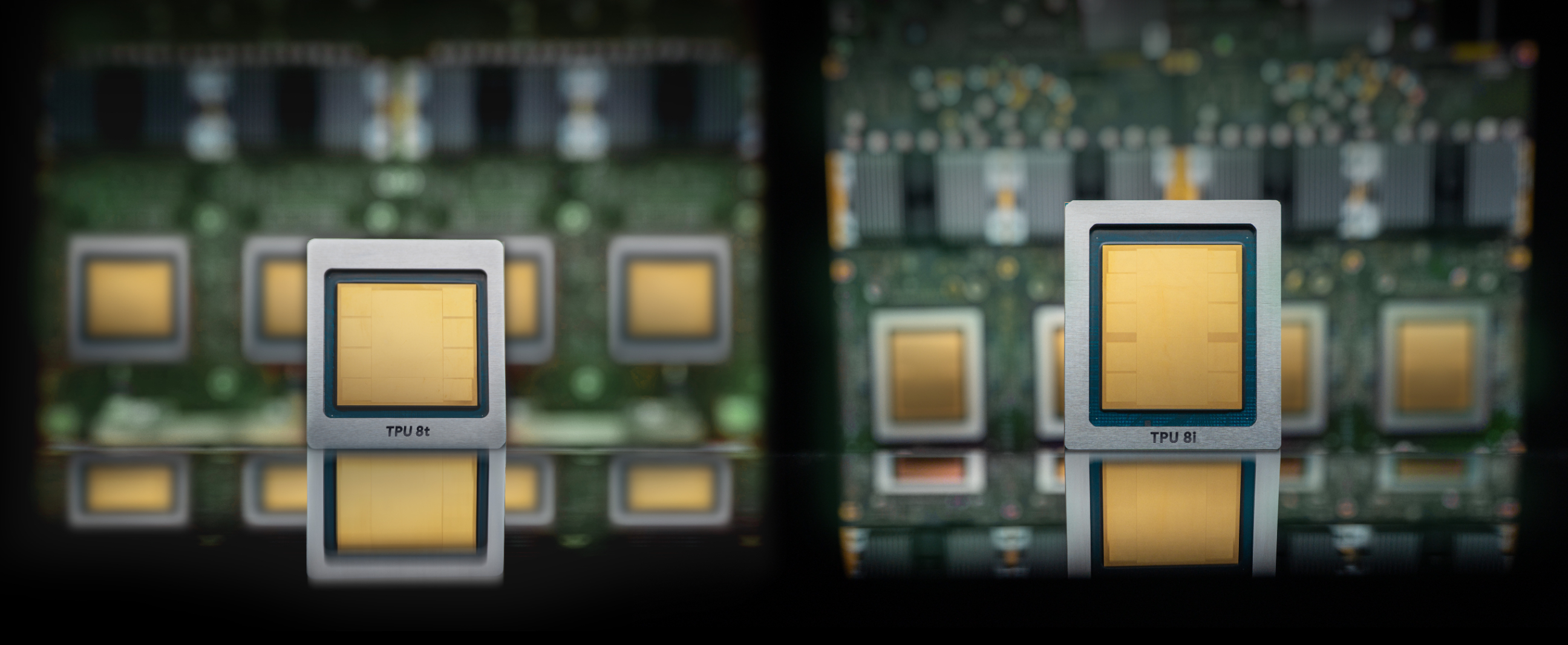

To lead in the agentic era, you cannot rely on one-size-fits-all hardware. Our 8th generation TPU family introduces two purpose-built architectures: TPU 8t for training and TPU 8i for inference. Hosted for the first time on our own Axion Arm-based processors, they provide a fully optimized, co-designed foundation to help your teams build what comes next.

Here is how we empower your teams to drive rapid innovation:

- Performance without compromise: accelerate the AI lifecycle with infrastructure purpose-built for frontier-model training and real-time reinforcement learning for inference.
- Sustainable economics at scale: deliver unrivaled price-performance through system-level co-design that optimizes the entire infrastructure stack.
- Open, flexible, and portable operations: speed up development with familiar open-source frameworks and a portable ecosystem for global scaling.

Ready to scale your AI operations? Connect with our experts to build your future on Google Cloud's 8th generation TPUs.

Cloud AI products comply with our SLA policies. They may offer different latency or availability guarantees from other Google Cloud services.