Foundations for TPU development

Build with your preferred framework and diagnostic tools to drive peak performance

Learn about TPU architecture (Tutorial): Tensor Processing Units (TPUs) are application-specific integrated circuits (ASICs) designed by Google to accelerate machine learning workloads. Google Cloud makes TPUs available as a scalable resource.

Set up your Cloud TPU environment (Tutorial): An end-to-end checklist for getting started with TPUs on Google Cloud.

A developer's guide to debugging JAX on TPUs (Blog): A practical guide to debugging and profiling techniques for JAX on Cloud TPUs.

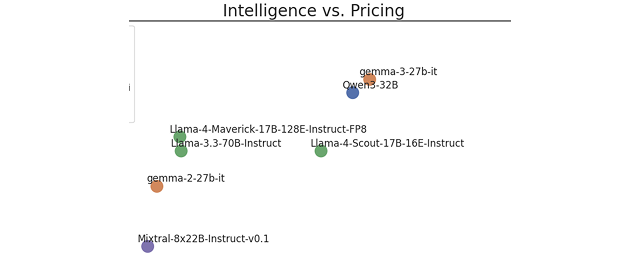

Accelerate production inference on TPUs

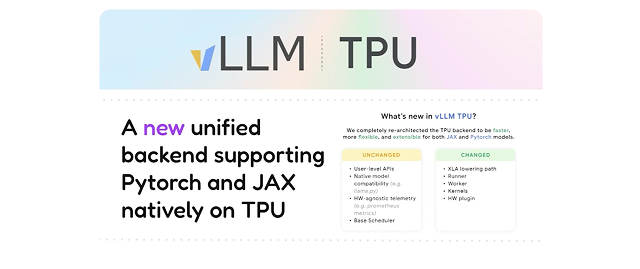

Deploy high-throughput, low-latency workloads using vLLM and optimized TPU serving stacks

Gemma 4 is now available on vLLM TPUvLLM introduces support & community recipes for Gemma 4, with Day 0 support on Google TPUs.Guide

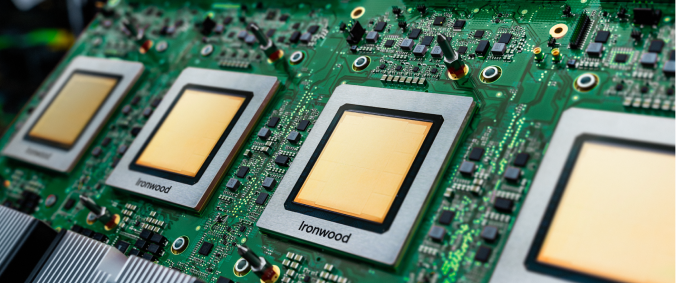

Gemma 4 is now available on vLLM TPUvLLM introduces support & community recipes for Gemma 4, with Day 0 support on Google TPUs.Guide Serve Qwen3-Coder with vLLM on Ironwood TPUIn this guide, we show how to serve Qwen3-Coder-480B-A35B-Instruct-FP8-Dynamic on Ironwood (TPU7x).Guide

Serve Qwen3-Coder with vLLM on Ironwood TPUIn this guide, we show how to serve Qwen3-Coder-480B-A35B-Instruct-FP8-Dynamic on Ironwood (TPU7x).Guide Inference on TPULearn the essential skills to design and implement efficient AI inference solutions using Google Cloud's specialized hardware and popular open-source frameworks.Course

Inference on TPULearn the essential skills to design and implement efficient AI inference solutions using Google Cloud's specialized hardware and popular open-source frameworks.Course

Scale model pre-training on TPUs

Achieve higher training throughput using JAX, PyTorch, and Keras on TPUs

Build an LLM with JAX (Course): Build a GPT-2 style language model with 20 million parameters from scratch using JAX, the open-source library behind Google’s Gemini, Veo, and Nano Banana models.

How to scale your model (eBook): Training LLMs often feels like alchemy, but understanding and optimizing the performance of your models doesn't have to. This book aims to demystify the science of scaling language models.

A developer’s guide to training with Ironwood TPUs (Guide): This technical overview explores the methods and tools within the JAX and MaxText ecosystems designed to improve training efficiency and reach peak performance on Ironwood hardware.

Optimize post-training on TPUs

Efficiently customize and align open models for high-performance serving and deployment

Profile and debug TPU workloads

Uncover bottlenecks and optimize your model’s execution

Advanced TPU Optimization with XProf (Blog): Introducing three advanced capabilities designed to provide instruction-level insights: Continuous Profiling Snapshots, the Utilization Viewer, and LLO Bundle Visualization.

Supercharge ML Performance with XProf (Blog): Learn how XProf, our core ML profiler, pairs with the new Cloud Diagnostics XProf library to easily identify model bottlenecks.

Community contributions

Resources and code samples from our developer community

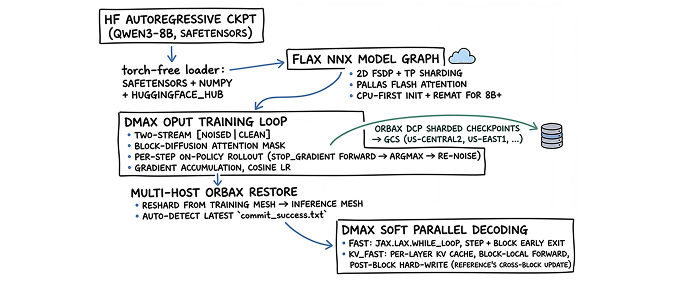

dLLM into TPU: An End-to-End Diffusion LM Stack in Pure JAX (Guide): A JAX reference implementation of Diffusion Language Models (dLLMs), the most interesting alternative paradigm to autoregressive LLMs right now.

Building a Medical Q&A Assistant with LoRA, Keras Kinetic, and Cloud TPU (Guide): Learn how to use a single Python decorator to fine-tune a medical question-answering model on a remote TPU: no Docker, no Kubernetes YAML, just @kinetic.run().

Pallas for Beginners (Guide): How to get started with Pallas, an experimental JAX extension for writing custom kernels for GPUs and TPUs.

TPU developer resources

Explore official documentation, workload recipes, and the latest technical updates for Cloud TPUs