Accelerate innovation on Google Cloud and NVIDIA

NVIDIA and Google Cloud provide accelerator-optimized solutions that support demanding workloads, including generative AI, high-performance computing, data analytics, graphics, and gaming.

A recap of NVIDIA GTC 2026 in San Jose. See the highlights:

- View industry-leading announcements

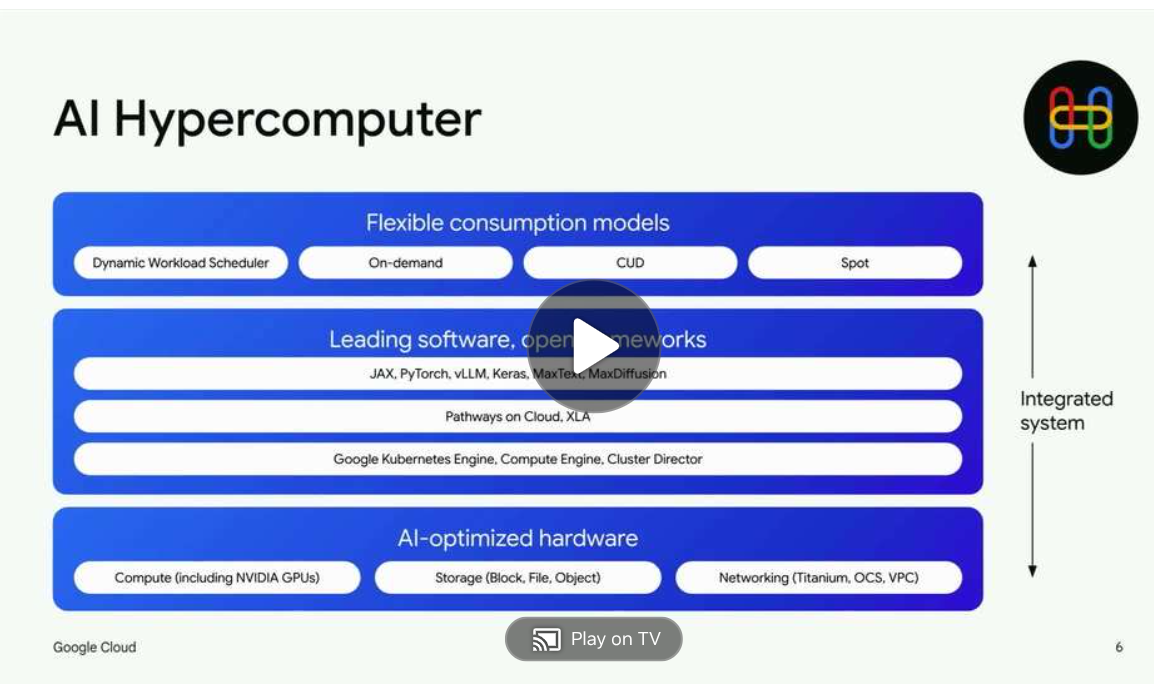

- Explore sponsored sessions on demand: “Blueprint for AI scale: How industry Architects success with NVIDIA GPUs on Google Cloud” and “Scale Foundation Models with Google Cloud AI Hypercomputer”

- Watch our latest AI Podcast

- View our session catalog

“We are moving from training AI to producing intelligence. These data centers are no longer just storing information. They are factories generating tokens, generating intelligence... Our expanded collaboration with Google Cloud will help developers accelerate their work with infrastructure that supercharges energy efficiency and reduces costs.”

Jensen Huang, GTC 2026 keynote

View Google Cloud-led sessions at NVIDIA GTC

High-Performing GPUs on Google Cloud

Speed up machine learning, scientific computing, and generative AI with high-performance GPUs on Google Cloud.

Key benefits:

- Run workloads (generative AI, 3D visualization, HPC) with advanced AI hardware/software

- Access diverse GPUs for varied performance and pricing

- Optimize workloads with flexible pricing and machine customizations

Key features

- Diverse GPU Offerings: Compute Engine offers the following NVIDIA GPUs: RTX PRO 6000, GB300, GB200, B200, H200, H100, L4, P100, P4, T4, V100, and A100. These options cover a range of cost and performance needs.

- Adaptable Performance: Balance processor, memory, high-performance disk, and up to 8 GPUs per instance to match each workload. Billing is per second.

- Use Google Cloud Advantages: Run GPU workloads on Google Cloud and access industry-leading storage, networking, and data analytics.
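To make the per-second billing point concrete, the following is a minimal sketch of the arithmetic, not a pricing tool. The hourly rate is a hypothetical placeholder rather than a published price, and the 60-second minimum charge is an assumption that should be checked against the current Compute Engine pricing documentation.

```python
import math


def per_second_cost(runtime_seconds, gpus, hourly_rate_per_gpu, minimum_seconds=60):
    """Estimate GPU cost when usage is billed per second.

    hourly_rate_per_gpu is a hypothetical price used for illustration only.
    minimum_seconds is an assumed minimum billing increment.
    """
    if not 1 <= gpus <= 8:
        raise ValueError("this sketch assumes 1 to 8 GPUs per instance")
    billed_seconds = max(math.ceil(runtime_seconds), minimum_seconds)
    return billed_seconds * gpus * hourly_rate_per_gpu / 3600


def hourly_rounded_cost(runtime_seconds, gpus, hourly_rate_per_gpu):
    """Same job priced as if every started hour were billed in full."""
    billed_hours = math.ceil(runtime_seconds / 3600)
    return billed_hours * gpus * hourly_rate_per_gpu


if __name__ == "__main__":
    # A 30-minute fine-tuning run on 8 GPUs at a made-up $2.00 per GPU-hour.
    print(per_second_cost(1800, 8, 2.0))
    print(hourly_rounded_cost(1800, 8, 2.0))
```

Under these assumed numbers, the short job is billed for half an hour of GPU time instead of a full hour, which is where per-second billing helps bursty or experimental workloads.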

NVIDIA technologies on Google Cloud

Google Kubernetes Engine (GKE)

Use GKE scalability, NVIDIA Multi-Instance GPU (MIG) support, and GPU time-sharing for efficient generative AI training, inference, and other compute-intensive workloads. Optimize resource utilization and minimize operational costs.
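As a rough illustration of how a workload might ask GKE for a shared or partitioned GPU, the sketch below builds a Pod manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts. The node selector keys are the GKE labels as best recalled from the GKE GPU documentation and should be verified there. The Pod name, the container image, and the helper `gpu_pod_manifest` are hypothetical.

```python
import json


def gpu_pod_manifest(name, image, accelerator, time_sharing_clients=None, mig_partition=None):
    """Build a Pod manifest that requests one NVIDIA GPU on GKE.

    The label keys below are assumptions based on the GKE GPU docs;
    confirm them before use.
    """
    node_selector = {"cloud.google.com/gke-accelerator": accelerator}
    if time_sharing_clients is not None:
        # Several containers take turns on one physical GPU.
        node_selector["cloud.google.com/gke-gpu-sharing-strategy"] = "time-sharing"
        node_selector["cloud.google.com/gke-max-shared-clients-per-gpu"] = str(time_sharing_clients)
    if mig_partition is not None:
        # Multi-Instance GPU: a hardware-isolated slice of one GPU.
        node_selector["cloud.google.com/gke-gpu-partition-size"] = mig_partition
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "nodeSelector": node_selector,
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "resources": {"limits": {"nvidia.com/gpu": 1}},
                }
            ],
        },
    }


if __name__ == "__main__":
    manifest = gpu_pod_manifest(
        "inference-demo",                 # hypothetical Pod name
        "example.com/my-model:latest",    # hypothetical image
        "nvidia-tesla-a100",
        mig_partition="1g.5gb",
    )
    print(json.dumps(manifest, indent=2))
```

The container requests `nvidia.com/gpu: 1` in both cases. The node selector determines whether that unit maps to a MIG slice or a time-shared GPU.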

Gemini Enterprise Agent Platform

Combine NVIDIA accelerated computing with Gemini Enterprise Agent Platform, a unified MLOps platform. Use NVIDIA GPUs and AI software (such as Triton™ Inference Server) within Agent Platform Managed Training, Agent Platform Inference, Pipelines, and Notebooks to accelerate generative AI development and deployment without managing infrastructure.

Cloud Run

Deploy generative AI faster with NVIDIA NIM on Cloud Run, a fully managed serverless platform. Cloud Run GPU support speeds up NIM to optimize performance and accelerate gen AI model deployment in a serverless environment.
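For a sense of what calling a NIM service deployed this way could look like, here is a minimal sketch that builds a chat request using only the Python standard library. It assumes the NIM container exposes an OpenAI-compatible `/v1/chat/completions` route, which is how NIM for LLMs is generally documented. The service URL and model name are placeholders, and a Cloud Run service that requires authentication would also need an `Authorization` header that is not shown here.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, max_tokens=128):
    """Build (but do not send) a chat completion request for a NIM endpoint.

    base_url and model are placeholders supplied by the caller.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Hypothetical Cloud Run URL and model name; replace with real values.
    req = build_chat_request("https://nim-demo.example.invalid", "example/model", "Hello")
    print(req.full_url)
```

Keeping request construction separate from `send` makes the payload easy to inspect before any traffic reaches the service.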

Dynamic Workload Scheduler

Access NVIDIA GPU capacity on Google Cloud for short-duration AI workloads (training, fine-tuning, experimentation). Flexible scheduling and atomic provisioning enhance resource utilization and optimize costs across services like GKE, Agent Platform, and Batch.

Google Distributed Cloud

The NVIDIA Blackwell platform on Google Distributed Cloud enables secure, on-premises deployment of advanced agentic AI (including Google Gemini models). This offers improved AI performance and scalability for sensitive, regulated workloads, ensuring data privacy, sovereignty, and compliance.

Technical resources for deploying NVIDIA technologies on Google Cloud

Google Cloud basics

- GPUs on Compute Engine: Compute Engine provides GPUs that you can add to your virtual machine instances. Learn more

- Using GPUs for training models in the cloud: Run the training process for many deep learning models, like image classification, video analysis, and natural language processing. Learn more

- Attaching GPUs to Managed Service for Apache Spark clusters: Attach GPUs to the master and worker Compute Engine nodes in a Managed Service for Apache Spark cluster to accelerate specific workloads, such as machine learning and data processing. Learn more

- Using GPUs with Dataflow: Using GPUs in Dataflow jobs lets you speed up some of your machine learning and other compute-intensive data processing tasks. Learn more

Tutorials

- Adding or removing GPUs: Learn how to add or remove GPUs from a Compute Engine VM. Learn more

- Installing GPU drivers: This guide shows ways to install NVIDIA proprietary drivers after you’ve created an instance with one or more GPUs. Learn more

- GPUs on Google Kubernetes Engine: Learn how to use GPU hardware accelerators in your Google Kubernetes Engine cluster nodes. Learn more
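After a driver installation like the one these tutorials describe, a quick check that the GPUs are visible can be scripted. The sketch below assumes `nvidia-smi` is on the `PATH` once the driver is installed and uses its `--query-gpu` CSV output. The helper names `parse_gpu_list` and `list_gpus` are illustrative, not part of any Google Cloud or NVIDIA tooling.

```python
import shutil
import subprocess

# Ask nvidia-smi for one CSV line per GPU: "<name>, <total memory>".
QUERY = ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"]


def parse_gpu_list(output):
    """Turn nvidia-smi CSV output into a list of dictionaries."""
    gpus = []
    for line in output.strip().splitlines():
        if not line.strip():
            continue
        # Split on the last comma so GPU names containing commas stay intact.
        name, memory = [field.strip() for field in line.rsplit(",", 1)]
        gpus.append({"name": name, "memory_total": memory})
    return gpus


def list_gpus():
    """Return visible GPUs, or an empty list if nvidia-smi is not installed."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_list(result.stdout)


if __name__ == "__main__":
    found = list_gpus()
    if not found:
        print("No GPUs visible; the driver may not be installed.")
    for gpu in found:
        print(gpu["name"], gpu["memory_total"])
```

An empty result on a VM that has GPUs attached usually points to a missing or failed driver installation rather than a hardware problem.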

Google Cloud and NVIDIA partnership

NVIDIA on Google Cloud marketplace

- NVIDIA Omniverse: A platform of APIs, SDKs, and services that let developers integrate OpenUSD and NVIDIA RTX™ rendering technologies into physical AI applications. Use virtual machines (VMs) on Google Cloud to streamline your application development.

- NVIDIA DGX Cloud: Accelerates AI workloads in the cloud and provides high-performance training, scalable inference, and global GPU access for developers and platform teams.

- NVIDIA AI Enterprise: A cloud-native platform that streamlines development and deployment of production-grade AI solutions, including generative AI, computer vision, and speech AI.

Customer stories

Compared with another inference platform, running on GKE with NVIDIA NIM and GPUs delivered 6.1x acceleration in average answer/response generation speed for the Amazfit AI agent.

Jia Li, Co-Founder and Chief AI Officer, LiveX AI