Nesta página, descrevemos como ler várias tabelas de um banco de dados do Microsoft SQL Server usando a fonte de várias tabelas. Use a fonte de várias tabelas quando quiser que o pipeline leia a partir de várias tabelas. Se você quiser que seu pipeline leia a partir de uma única tabela, consulte Como ler uma tabela do SQL Server.

A origem de várias tabelas gera dados com vários esquemas e inclui um campo de nome da tabela que indica a tabela de onde vieram os dados. Ao usar a fonte de várias tabelas, use um dos coletores de várias tabelas, BigQuery Table ou arquivo multiGCS para criar um anexo da VLAN de monitoramento.

Armazenar a senha do SQL Server como uma chave segura

Adicione a senha do SQL Server como uma chave segura para criptografar na instância do Cloud Data Fusion. Posteriormente neste guia, você garantirá que sua senha seja recuperada usando o Cloud KMS.

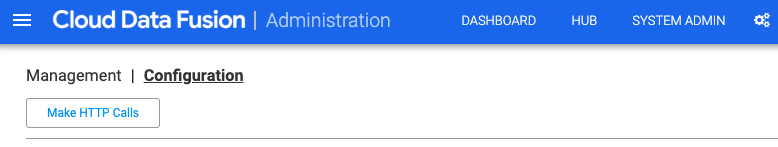

No canto superior direito de qualquer página do Cloud Data Fusion, clique em Administrador do sistema.

Clique na guia Configuration.

Clique em Fazer chamadas HTTP.

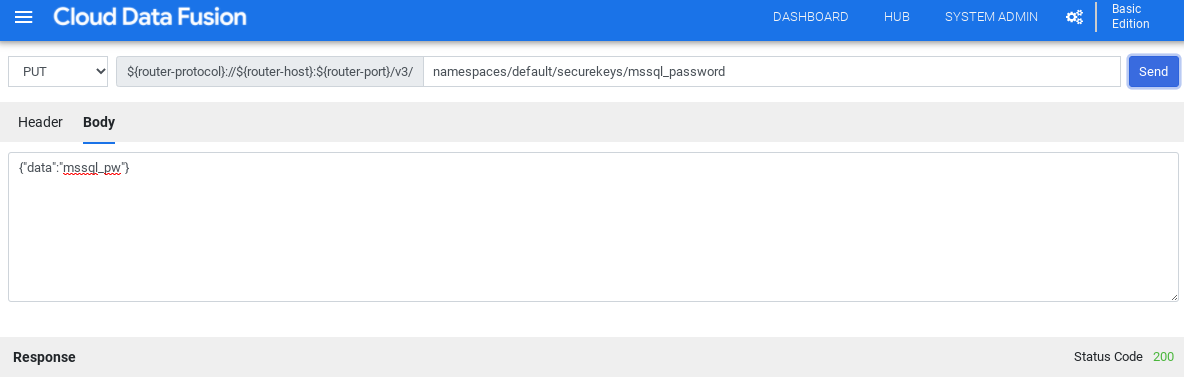

No menu suspenso, escolha PUT.

No campo do caminho, digite

namespaces/NAMESPACE_ID/securekeys/PASSWORD.No campo Corpo, digite

{"data":"SQL_SERVER_PASSWORD"}.Clique em Enviar.

Verifique se a Resposta recebida é o código de status 200.

Acessar o driver JDBC para SQL Server

Como usar o Hub

Na interface do Cloud Data Fusion, clique em Hub.

Na barra de pesquisa, digite

Microsoft SQL Server JDBC Driver.Clique em Driver JDBC do Microsoft SQL Server.

Clique em Fazer download. Siga as etapas de download mostradas.

Clique em Implantar. Faça o upload do arquivo JAR da etapa anterior.

Clique em Concluir.

Como usar o Studio

Acesse Microsoft.com.

Escolha o download e clique em Fazer o download.

Na interface do Cloud Data Fusion, clique em Menu e navegue até a página Studio.

Clique em Adicionar.

Em Driver, clique em Fazer upload.

Faça o upload do arquivo JAR baixado na etapa 2.

Clique em Próxima.

Configure o driver inserindo um Nome.

No campo Nome da classe, insira

com.microsoft.sqlserver.jdbc.SQLServerDriver.Clique em Concluir.

Implante os plug-ins de várias tabelas

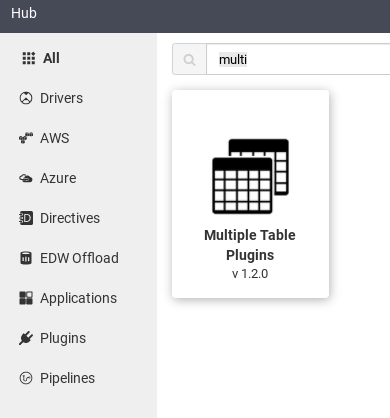

Na IU da Web do Cloud Data Fusion, clique em Hub.

Na barra de pesquisa, digite

Multiple table plugins.Clique em Múltiplos plug-ins de tabela.

Clique em Implantar.

Clique em Concluir.

Clique em Criar um pipeline.

Conectar-se ao SQL Server

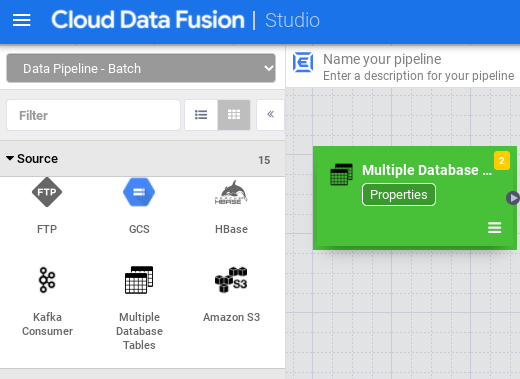

Na interface do Cloud Data Fusion, clique em Menu e navegue até a página Studio.

No Studio, expanda o menu Origem.

Clique em Várias tabelas de banco de dados.

Coloque o ponteiro sobre o nó Várias tabelas de banco de dados e clique em Propriedades.

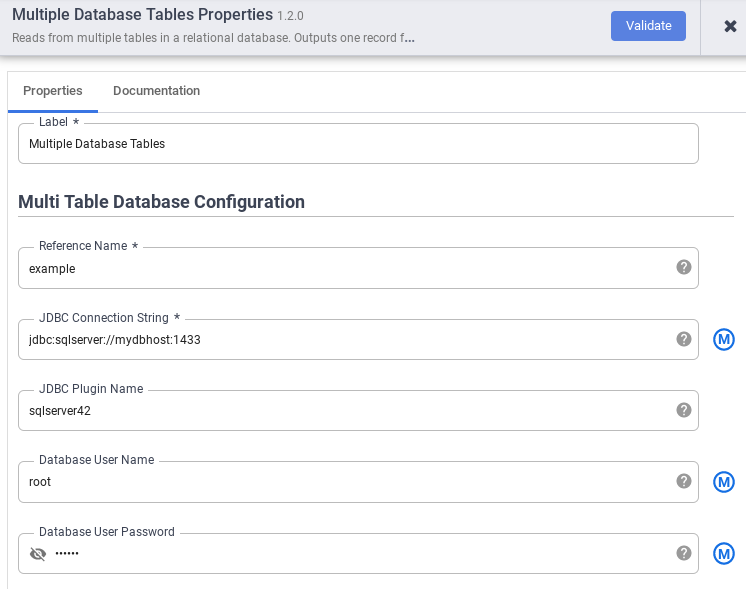

No campo Nome de referência, especifique um nome que será usado para identificar sua origem do SQL Server.

No campo String de conexão JDBC, insira a string de conexão JDBC. Por exemplo,

jdbc:sqlserver://mydbhost:1433. Para mais informações, consulte Como criar o URL de conexão.Insira o Nome do plug-in JDBC, o Nome de usuário do banco de dados e a Senha do usuário do banco de dados.

Clique em Validate (Validar).

Clique em Fechar.

Conectar-se ao BigQuery ou ao Cloud Storage

Na interface do Cloud Data Fusion, clique em Menu e navegue até a página Studio.

Expanda Gravador.

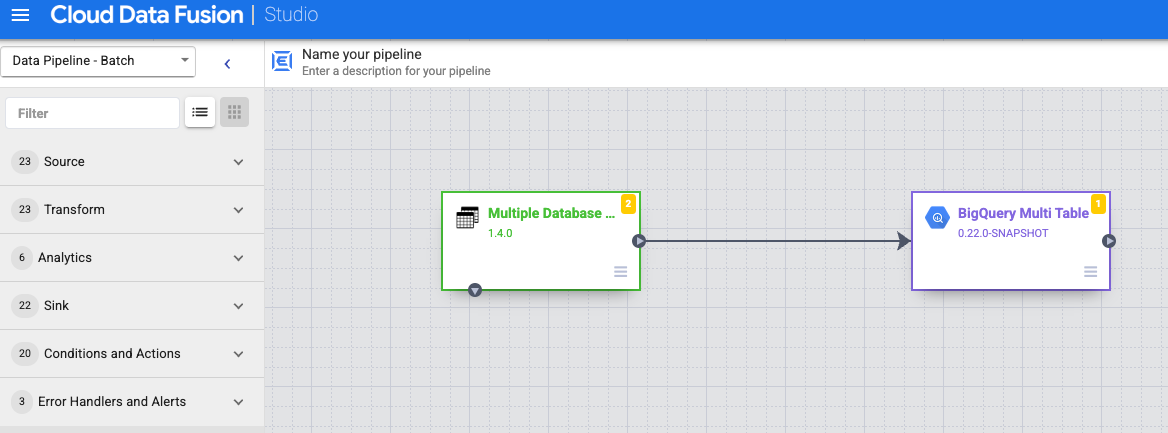

Clique em BigQuery Multi Table ou GCS Multi File.

Conecte o nó Várias tabelas de banco de dados com BigQuery Multi Table ou GCS Multi File.

Mantenha o ponteiro sobre o nó Várias tabelas do BigQuery ou Vários arquivos do GCS, clique em Propriedades e configure o coletor.

Para mais informações, consulte Coletor de várias tabelas do Google BigQuery e Coletor de vários arquivos do Google Cloud Storage.

Clique em Validate (Validar).

Clique em Fechar.

Executar a visualização do pipeline

Na interface do Cloud Data Fusion, clique em Menu e navegue até a página Studio.

Clique em Visualização.

Clique em Executar. Aguarde a conclusão da prévia.

Implante o pipeline.

Na interface do Cloud Data Fusion, clique em Menu e navegue até a página Studio.

Clique em Implantar.

Executar o pipeline

Na interface do Cloud Data Fusion, clique em Menu.

Clique em Lista.

Clique no pipeline.

Na página de detalhes do pipeline, clique em Executar.