Create a data pipeline

This quickstart shows you how to do the following:

- Create a Cloud Data Fusion instance.

- Deploy a sample pipeline that's provided with your Cloud Data Fusion instance. The pipeline does the following:

  - Reads a JSON file containing NYT bestseller data from Cloud Storage.

  - Runs transformations on the file to parse and clean the data.

  - Loads the top-rated books that were added in the last week and cost less than $25 into BigQuery.
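The selection rule in the last step can be sketched in plain Python. This is only an illustration of the filter logic, not how the pipeline's plugins implement it. The key names (`title`, `rating`, `price`, `date_added`), the sample records, and the `min_rating` threshold that stands in for "top-rated" are all assumptions made for this example.

```python
import json
from datetime import date, timedelta

# Hypothetical input: the key names below are assumptions for illustration,
# not the schema of the JSON file that the quickstart pipeline reads.
RAW_JSON = """
[
  {"title": "Book A", "rating": 4.8, "price": 19.99, "date_added": "2024-05-06"},
  {"title": "Book B", "rating": 4.9, "price": 30.00, "date_added": "2024-05-07"},
  {"title": "Book C", "rating": 4.7, "price": 12.50, "date_added": "2024-04-01"},
  {"title": "Book D", "rating": 3.0, "price": 9.99, "date_added": "2024-05-05"}
]
"""


def select_books(records, today, max_price=25.0, min_rating=4.0, window_days=7):
    """Keep records added within the window, priced under max_price,
    and rated at least min_rating (an assumed meaning of "top-rated")."""
    cutoff = today - timedelta(days=window_days)
    return [
        rec
        for rec in records
        if date.fromisoformat(rec["date_added"]) >= cutoff
        and rec["price"] < max_price
        and rec["rating"] >= min_rating
    ]


# Parse the raw JSON, then apply the filter relative to a fixed "today".
books = json.loads(RAW_JSON)
selected = select_books(books, today=date(2024, 5, 8))
print([book["title"] for book in selected])
```

Passing `today` explicitly keeps the example deterministic; a real job would typically derive it from the run date.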

Before you begin

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

Roles required to select or create a project

- Select a project: Selecting a project doesn't require a specific IAM role; you can select any project that you've been granted a role on.

- Create a project: To create a project, you need the Project Creator role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Enable the Cloud Data Fusion API.

Roles required to enable APIs

To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.

- Click Create an instance.

- Enter an Instance name.

- Enter a Description for your instance.

- Enter the Region in which to create the instance.

- Choose the Cloud Data Fusion Version to use.

- Choose the Cloud Data Fusion Edition.

- For Cloud Data Fusion version 6.2.3 and later, in the Authorization field, choose the Dataproc service account to use for running your Cloud Data Fusion pipeline in Dataproc. The default value, the Compute Engine account, is preselected.

- Click Create. Instance creation takes up to 30 minutes. While Cloud Data Fusion creates your instance, a progress wheel is displayed next to the instance name on the Instances page. When creation completes, the wheel turns into a green check mark, which indicates that you can start using the instance.
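The same fields can also be expressed as a request to the Cloud Data Fusion REST API. The sketch below only assembles the URL and JSON body for an instance-creation call and does not send anything. It assumes the v1 `projects.locations.instances.create` method and the `type`, `description`, `version`, and `dataprocServiceAccount` instance fields; check the API reference before relying on it. The helper name and the example project, region, and instance ID are hypothetical.

```python
import json
from urllib.parse import urlencode

# Assumed API root for the Cloud Data Fusion v1 REST API.
API_ROOT = "https://datafusion.googleapis.com/v1"


def build_create_instance_request(project_id, region, instance_id,
                                  edition="BASIC", description="",
                                  version=None, dataproc_service_account=None):
    """Return (url, body) for a POST that would create an instance.

    Hypothetical helper for illustration: it builds the request but
    never sends it, so no credentials are needed to run it.
    """
    parent = f"projects/{project_id}/locations/{region}"
    query = urlencode({"instanceId": instance_id})
    url = f"{API_ROOT}/{parent}/instances?{query}"

    body = {"type": edition, "description": description}
    # Optional fields are included only when the caller sets them,
    # so the service's defaults apply otherwise.
    if version is not None:
        body["version"] = version
    if dataproc_service_account is not None:
        body["dataprocServiceAccount"] = dataproc_service_account
    return url, body


# Example values are placeholders, not real resources.
url, body = build_create_instance_request(
    "my-project", "us-central1", "quickstart",
    description="Quickstart instance",
)
print(url)
print(json.dumps(body, indent=2))
```

Sending the request would additionally require an OAuth 2.0 access token in the `Authorization` header, and the call returns a long-running operation, which matches the wait described in the last step.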

In the Google Cloud console, you can do the following:

- Create a Google Cloud project

- Create and delete Cloud Data Fusion instances

- View Cloud Data Fusion instance details

In the Cloud Data Fusion web interface, you can use various pages, such as Studio or Wrangler, to access Cloud Data Fusion functionality.

- In the Google Cloud console, open the Instances page.

- In the instance's Actions column, click the View Instance link.

- In the Cloud Data Fusion web interface, use the left navigation panel to go to the page that you need.

- In the Cloud Data Fusion web interface, click Hub.

- In the left panel, click Pipelines.

- Click the Cloud Data Fusion Quickstart pipeline.

- Click Create.

- In the Cloud Data Fusion Quickstart configuration panel, click Finish.

Click Customize Pipeline.

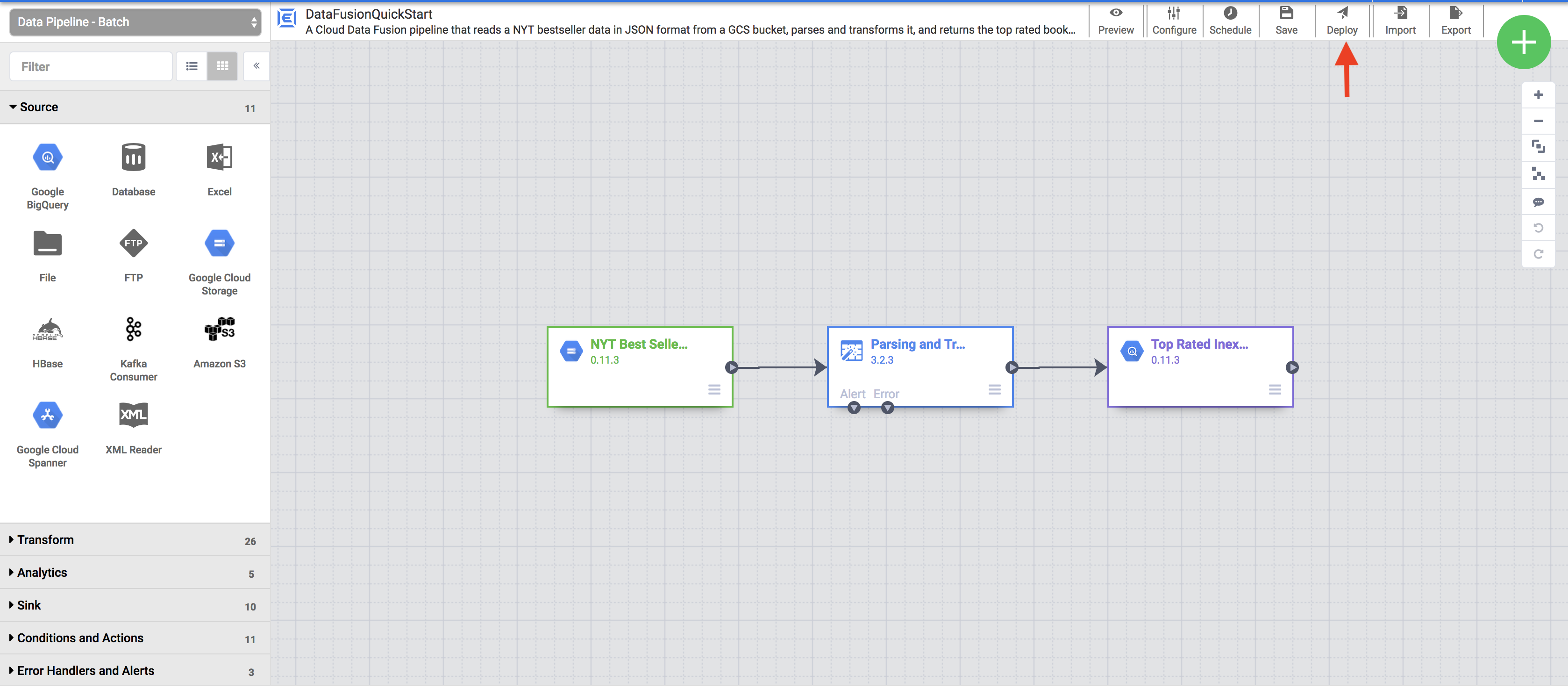

A visual representation of your pipeline appears on the Studio page, which is a graphical interface for developing data integration pipelines. Available pipeline plugins are listed on the left, and your pipeline is displayed on the main canvas area. You can explore your pipeline by holding the pointer over each pipeline node and clicking Properties. The properties menu for each node lets you view the objects and operations associated with the node.

In the top-right menu, click Deploy. This step submits the pipeline to Cloud Data Fusion. You run the pipeline in the next section of this quickstart.

- View the pipeline's structure and configuration.

- Run the pipeline manually or set up a schedule or a trigger.

- View a summary of the pipeline's historical runs, including execution times, logs, and metrics.

- Provisions an ephemeral Dataproc cluster

- Runs the pipeline on the cluster using Apache Spark

- Deletes the cluster

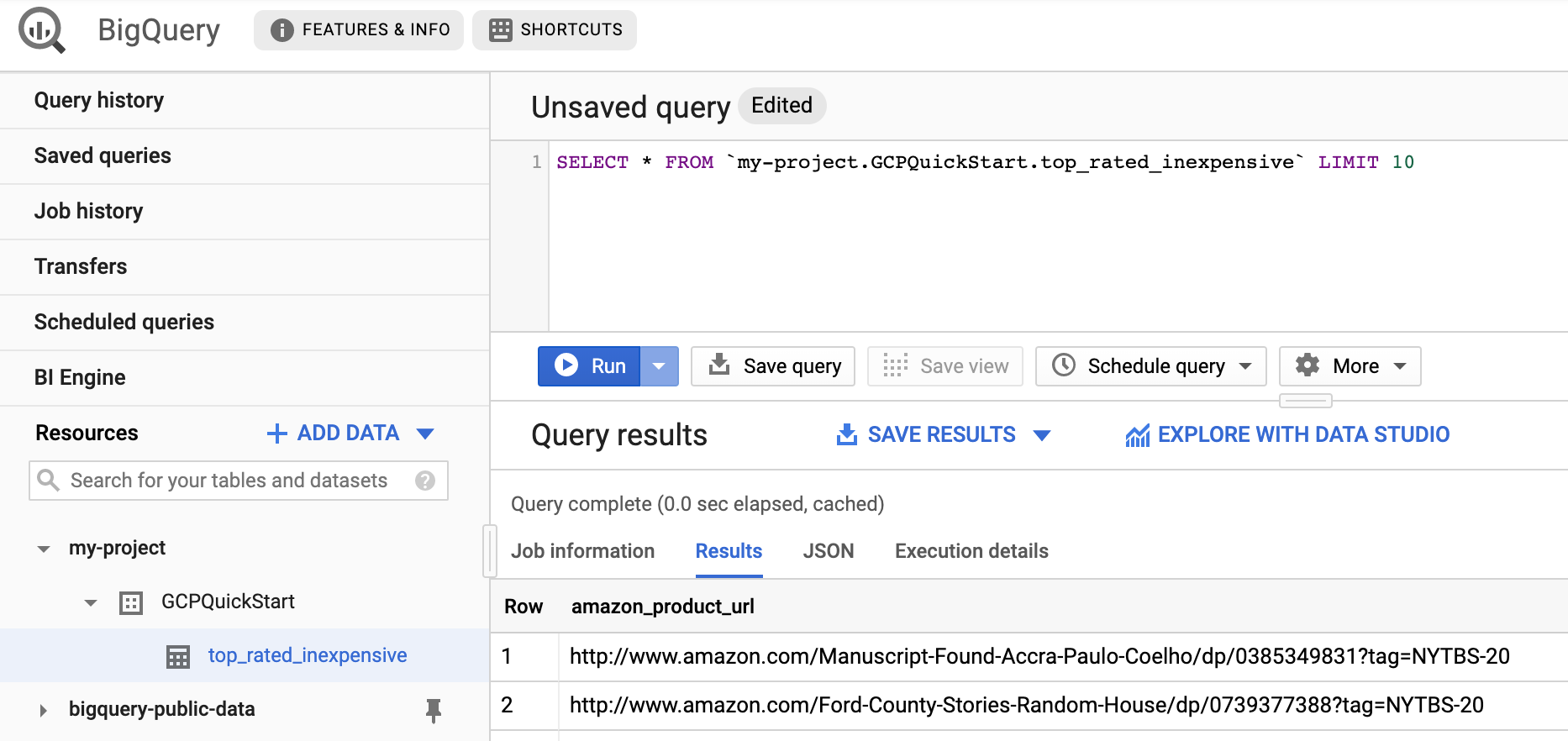

- Open the BigQuery web interface.

To view a sample of the results, go to the DataFusionQuickstart dataset in your project, click the top_rated_inexpensive table, and then run a simple query. For example:

SELECT * FROM PROJECT_ID.GCPQuickStart.top_rated_inexpensive LIMIT 10

Replace PROJECT_ID with your project ID.

- Delete the BigQuery dataset that your pipeline wrote to in this quickstart.

Optional: Delete the project.
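As a small convenience, the sample query can be assembled with the project ID filled in. This is a minimal sketch: the function name is hypothetical, and the dataset default simply copies the name used in the query above, so pass a different `dataset` if your table lives elsewhere. The backticks around the table path are added because BigQuery standard SQL needs them when a project ID contains hyphens.

```python
def build_sample_query(project_id, dataset="GCPQuickStart",
                       table="top_rated_inexpensive", limit=10):
    """Return a SELECT statement for the quickstart's output table.

    Hypothetical helper: it only formats a string and does not
    contact BigQuery.
    """
    # Backticks keep a hyphenated project ID from being parsed as
    # a subtraction expression.
    table_path = f"`{project_id}.{dataset}.{table}`"
    return f"SELECT * FROM {table_path} LIMIT {int(limit)}"


# "my-project" is a placeholder project ID.
print(build_sample_query("my-project"))
```

The resulting string can be pasted into the BigQuery query editor or passed to a client library of your choice.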

- In the Google Cloud console, go to the Manage resources page.

- In the project list, select the project that you want to delete, and then click Delete.

- In the dialog, type the project ID, and then click Shut down to delete the project.

Create a Cloud Data Fusion instance

Open the Cloud Data Fusion web interface

When you use Cloud Data Fusion, you work in both the Google Cloud console and the separate Cloud Data Fusion web interface.

To open the Cloud Data Fusion interface, follow these steps:

Deploy a sample pipeline

Sample pipelines are available through the Cloud Data Fusion Hub, which lets you share reusable Cloud Data Fusion pipelines, plugins, and solutions.

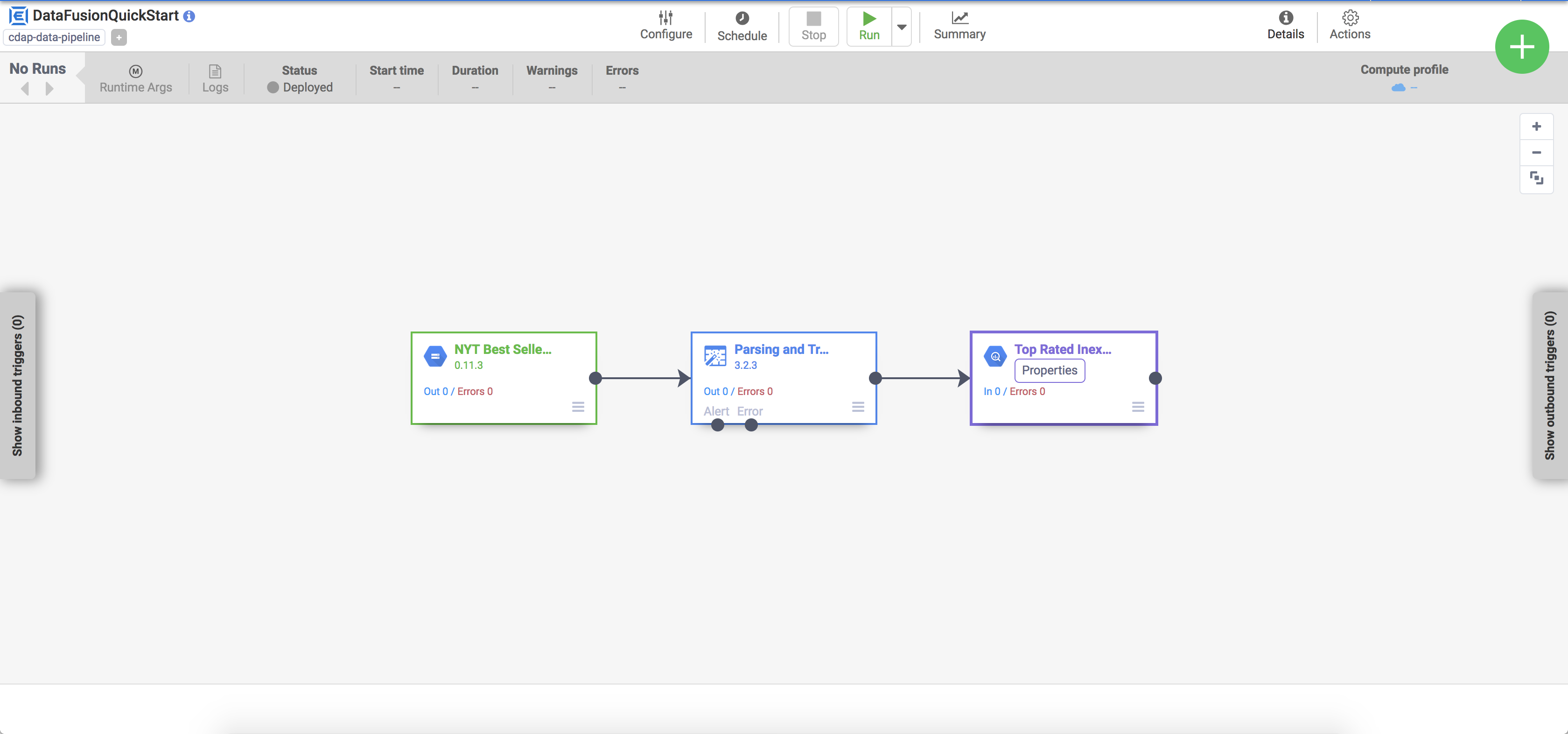

View your pipeline

The deployed pipeline appears in the pipeline details view, where you can do the following:

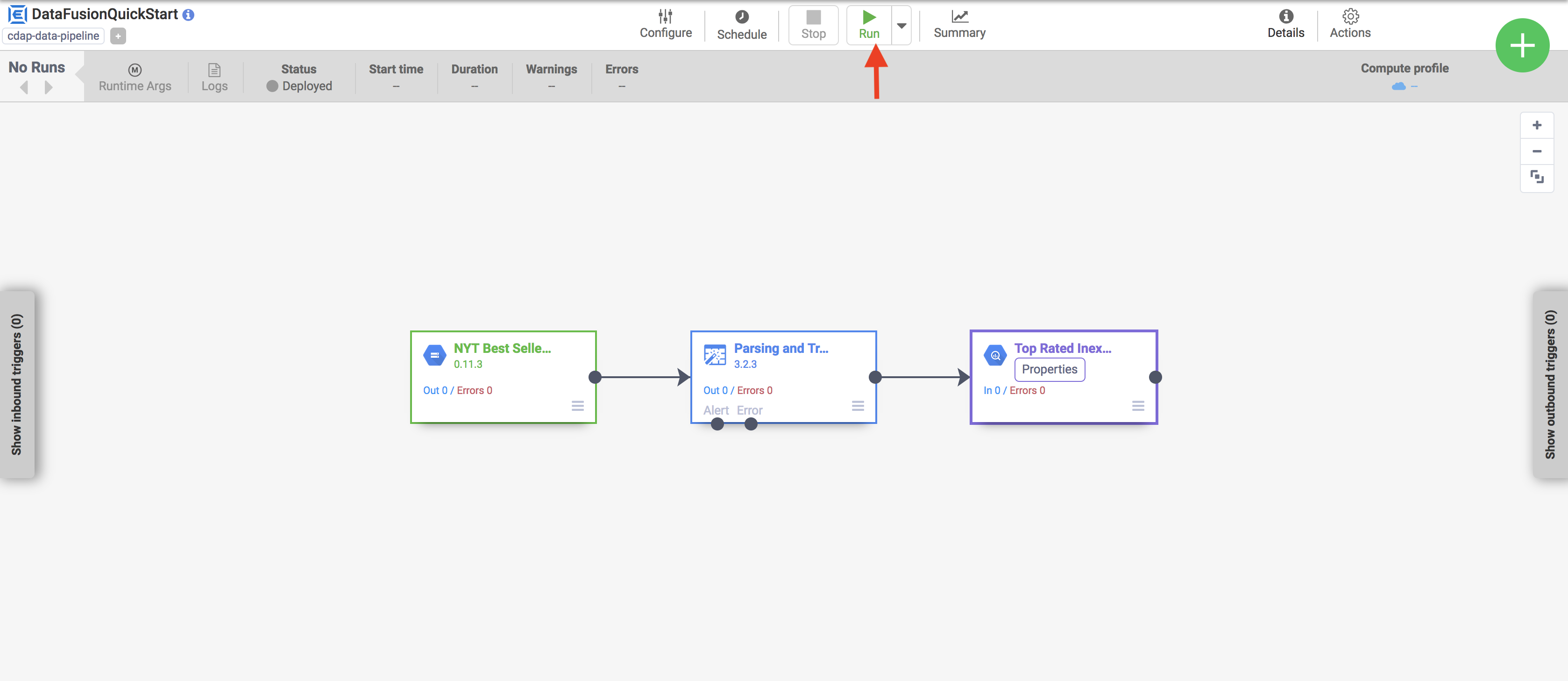

Run your pipeline

In the pipeline details view, click Run to run your pipeline.

When you run a pipeline, Cloud Data Fusion does the following:

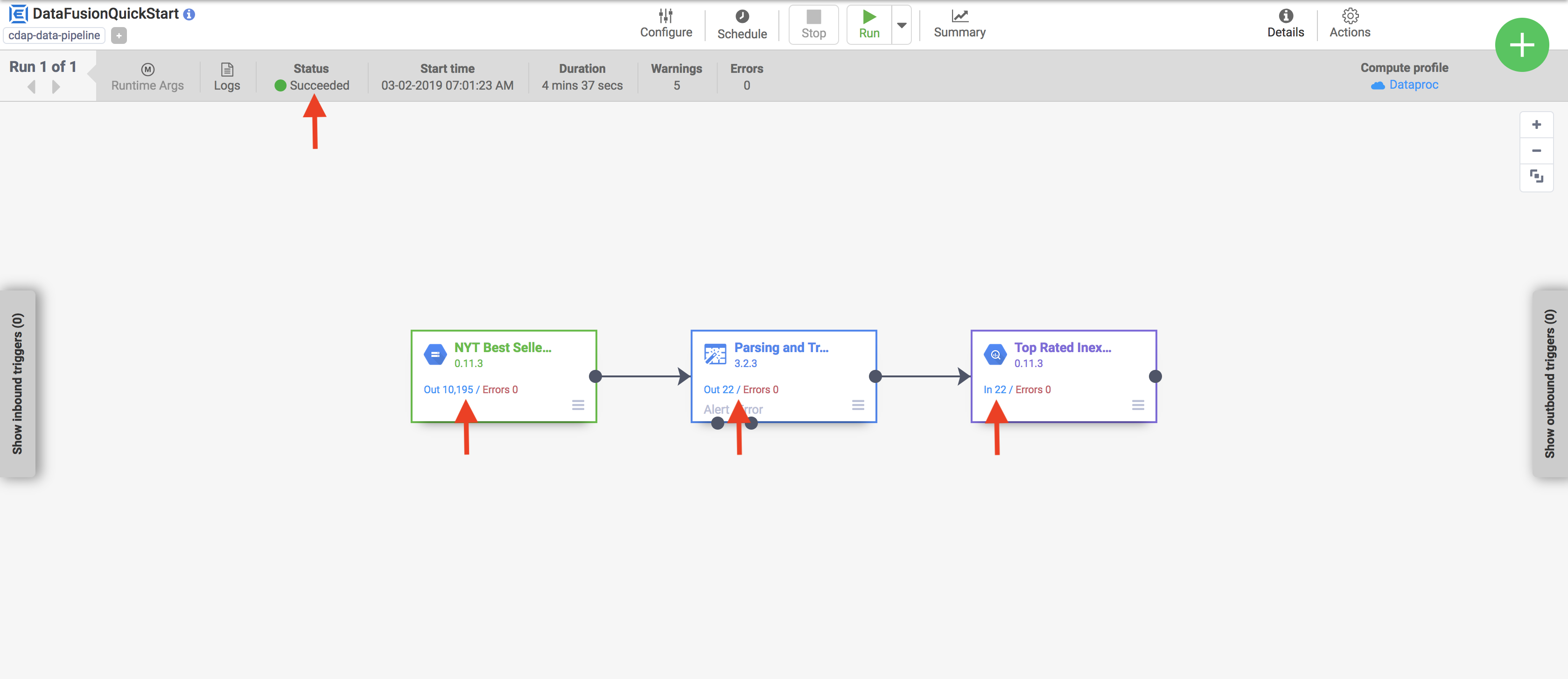

View the results

After a few minutes, the pipeline finishes. The pipeline status changes to Succeeded, and the number of records processed by each node is displayed.

Clean up

To avoid incurring charges to your Google Cloud account for the resources used on this page, follow these steps.