Create a data pipeline

This quickstart shows you how to do the following:

- Create a Cloud Data Fusion instance.

- Deploy a sample pipeline that's provided with your Cloud Data Fusion instance. The pipeline does the following:

  - Reads a JSON file containing NYT bestseller data from Cloud Storage.

  - Runs transformations on the file to parse and clean the data.

  - Loads the top-rated books that were added in the last week and cost less than $25 into BigQuery.
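The selection rule in the last item can be illustrated with a short Python sketch. This is not how the sample pipeline runs, because the pipeline uses Cloud Data Fusion plugins executed on a Dataproc cluster. All of the following are hypothetical assumptions: the record fields (`title`, `rating`, `price`, `added`), the `$`-prefixed price strings, the `min_rating` threshold standing in for "top-rated", and the reading of "last week" as the seven days up to `today`.

```python
import json
from datetime import date, timedelta

# Hypothetical sample input (one JSON object per line); the real file's schema may differ.
RAW = """\
{"title": "Book A", "rating": 4.8, "price": "$19.99", "added": "2024-05-10"}
{"title": "Book B", "rating": 4.9, "price": "$27.00", "added": "2024-05-11"}
{"title": "Book C", "rating": 3.1, "price": "$12.50", "added": "2024-05-12"}
{"title": "Book D", "rating": 4.7, "price": "$15.00", "added": "2024-04-01"}
"""


def parse_and_clean(line: str) -> dict:
    """Parse one JSON record, turn "$19.99" into 19.99 and the date string into a date."""
    record = json.loads(line)
    record["price"] = float(record["price"].lstrip("$"))
    record["added"] = date.fromisoformat(record["added"])
    return record


def top_rated_inexpensive(records, today, min_rating=4.5, max_price=25.0):
    """Keep books rated >= min_rating, priced < max_price, added in the 7 days up to today.

    min_rating is a hypothetical stand-in for "top-rated".
    """
    week_ago = today - timedelta(days=7)
    return [
        r
        for r in records
        if r["rating"] >= min_rating
        and r["price"] < max_price
        and week_ago <= r["added"] <= today
    ]


records = [parse_and_clean(line) for line in RAW.splitlines() if line.strip()]
selected = top_rated_inexpensive(records, today=date(2024, 5, 14))
print([r["title"] for r in selected])  # prints ['Book A']
```

Keeping the parsing step (`parse_and_clean`) separate from the filter reflects how the pipeline first parses and cleans the data and only then selects the rows it loads.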

Before you begin

- Sign in to your Google Cloud account. If you're new to Google Cloud, create an account to evaluate how our products perform in real-world scenarios. New customers also get $300 in free credits to run, test, and deploy workloads.

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

  Roles required to select or create a project

  - Select a project: Selecting a project doesn't require a specific IAM role; you can select any project that you've been granted a role on.

  - Create a project: To create a project, you need the Project Creator IAM role (roles/resourcemanager.projectCreator), which contains the resourcemanager.projects.create permission. Learn how to grant roles.

- Enable the Cloud Data Fusion API.

  Roles required to enable APIs

  To enable APIs, you need the Service Usage Admin IAM role (roles/serviceusage.serviceUsageAdmin), which contains the serviceusage.services.enable permission. Learn how to grant roles.
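Enabling the API can also be done through a REST call to the Service Usage API. The `gcloud services enable datafusion.googleapis.com` command is the CLI equivalent. The following Python sketch only builds the request URL and works out which of the roles named above are still missing. It does not send anything, because a real call needs an HTTP POST authorized with an OAuth 2.0 access token. The helper names are hypothetical. The `project` argument can be the project number, which is the form the API reference uses in its example. Mapping each permission to a single role is a simplification, because other roles may also include these permissions.

```python
from urllib.parse import quote

SERVICE_USAGE_ROOT = "https://serviceusage.googleapis.com/v1"

# Permission -> role pairs taken from the steps above. Other roles may also carry
# these permissions, so this one-to-one lookup is a simplification.
ROLE_FOR_PERMISSION = {
    "resourcemanager.projects.create": "roles/resourcemanager.projectCreator",
    "serviceusage.services.enable": "roles/serviceusage.serviceUsageAdmin",
}


def enable_api_url(project: str, service: str = "datafusion.googleapis.com") -> str:
    """Build the URL for a Service Usage services.enable request (sent as HTTP POST)."""
    return f"{SERVICE_USAGE_ROOT}/projects/{quote(project)}/services/{service}:enable"


def roles_to_request(needed_permissions, granted_roles):
    """Return, sorted, the roles from ROLE_FOR_PERMISSION that are not yet granted."""
    needed = {ROLE_FOR_PERMISSION[p] for p in needed_permissions}
    return sorted(needed - set(granted_roles))


# Hypothetical project number, for illustration only.
print(enable_api_url("123456789012"))
print(roles_to_request(["serviceusage.services.enable"], granted_roles=[]))
```

A user who is only selecting an existing project needs just the `serviceusage.services.enable` permission for this step. A user creating a new project needs both roles.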

In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

Roles required to select or create a project

- Select a project: Selecting a project doesn't require a specific IAM role—you can select any project that you've been granted a role on.

-

Create a project: To create a project, you need the Project Creator

(

roles/resourcemanager.projectCreator), which contains theresourcemanager.projects.createpermission. Learn how to grant roles.

-

Enable the Cloud Data Fusion API.

Roles required to enable APIs

To enable APIs, you need the Service Usage Admin IAM role (

roles/serviceusage.serviceUsageAdmin), which contains theserviceusage.services.enablepermission. Learn how to grant roles. - Haga clic en Crear una instancia.

- Ingresa un Nombre de instancia.

- Ingresa una Descripción para tu instancia.

- Ingresa la región en la que se creará la instancia.

- Elige la versión de Cloud Data Fusion que deseas usar.

- Elige la edición de Cloud Data Fusion.

- En las versiones de Cloud Data Fusion 6.2.3 y posteriores, en el campo Autorización, elige la cuenta de servicio de Dataproc para usar en la ejecución de tu canalización de Cloud Data Fusion en Dataproc. Se preselecciona como valor predeterminado la cuenta de Compute Engine.

- Haga clic en Crear. El proceso de creación de la instancia toma hasta 30 minutos en completarse. Mientras Cloud Data Fusion crea la instancia, se muestra una rueda de progreso junto al nombre de la instancia en la página Instances. Cuando se completa, se convierte en una marca de verificación verde y se indica que puedes comenzar a usar la instancia.

En la consola de Google Cloud , puedes hacer lo siguiente:

- Crea un Google Cloud proyecto de consola

- Crea y borra instancias de Cloud Data Fusion

- Consulta los detalles de la instancia de Cloud Data Fusion

En la interfaz web de Cloud Data Fusion, puedes usar varias páginas, como Studio o Wrangler, para usar la funcionalidad de Cloud Data Fusion.

- En la consola de Google Cloud , abre la página Instancias.

- En la columna Acciones de la instancia, haz clic en el vínculo Ver instancia.

- En la interfaz web de Cloud Data Fusion, usa el panel de navegación izquierdo para navegar a la página que necesites.

- En la interfaz web de Cloud Data Fusion, haz clic en Hub.

- En el panel izquierdo, haz clic en Canalizaciones.

- Haz clic en la canalización de la Guía de inicio rápido de Cloud Data Fusion.

- Haz clic en Crear.

- En el panel de configuración de inicio rápido de Cloud Data Fusion, haz clic en Finalizar.

Haz clic en Personalizar canalización.

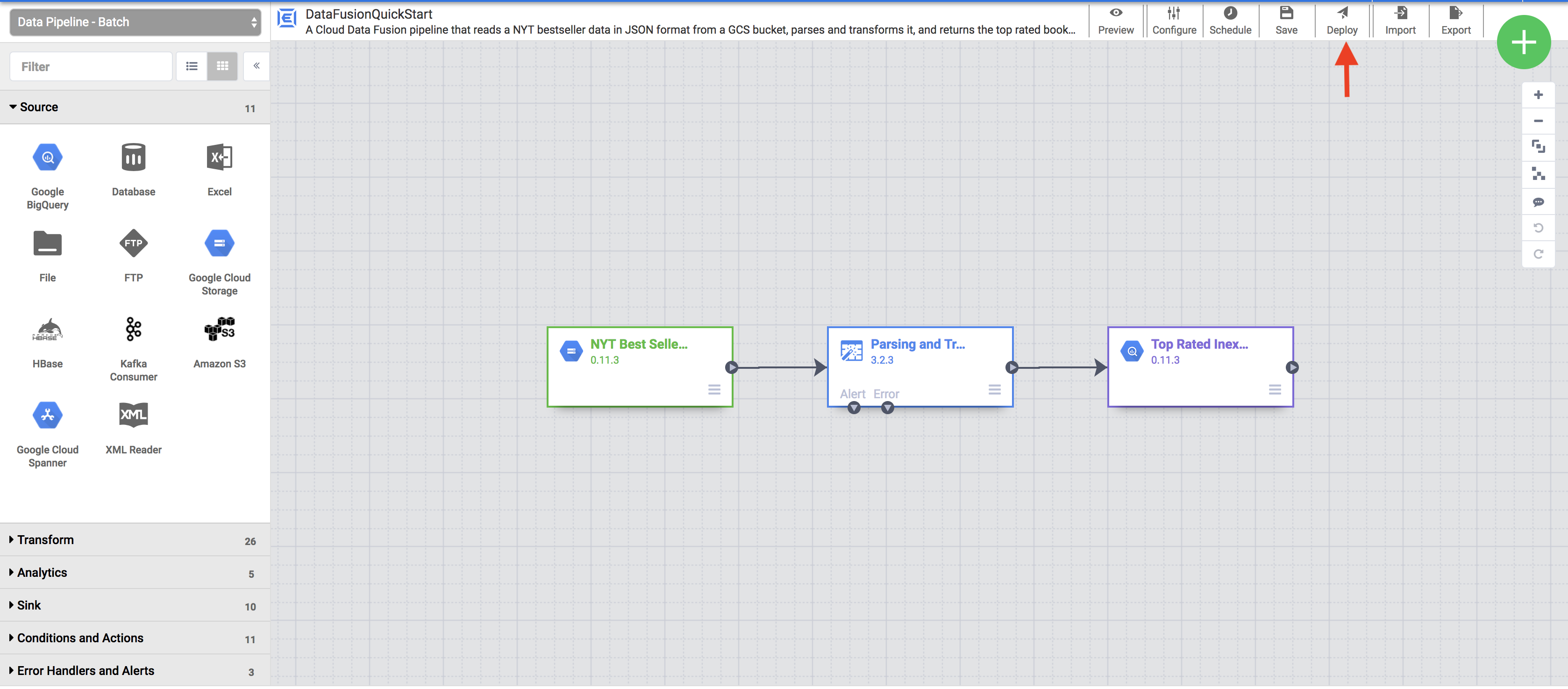

Una representación visual de tu canalización aparece en la página Studio, que es una interfaz gráfica para desarrollar canalizaciones de integración de datos. Los complementos de canalización disponibles se muestran a la izquierda y tu canalización se muestra en el área de lienzo principal. Para explorar tu canalización, mantén el puntero sobre cada nodo de la canalización y haz clic en Propiedades. El menú de propiedades de cada nodo te permite ver los objetos y las operaciones asociados con el nodo.

En el menú de la esquina superior derecha, haz clic en Implementar. En este paso, se envía la canalización a Cloud Data Fusion. Ejecutarás la canalización en la siguiente sección de esta guía de inicio rápido.

- Ver la estructura y la configuración de la canalización

- Ejecuta la canalización de forma manual o configura una programación o un activador.

- Ver un resumen de las ejecuciones históricas de la canalización, incluidos los registros, las métricas y los tiempos de ejecución

- Aprovisiona un clúster efímero de Dataproc

- Ejecuta la canalización en el clúster con Apache Spark

- Eliminación del clúster

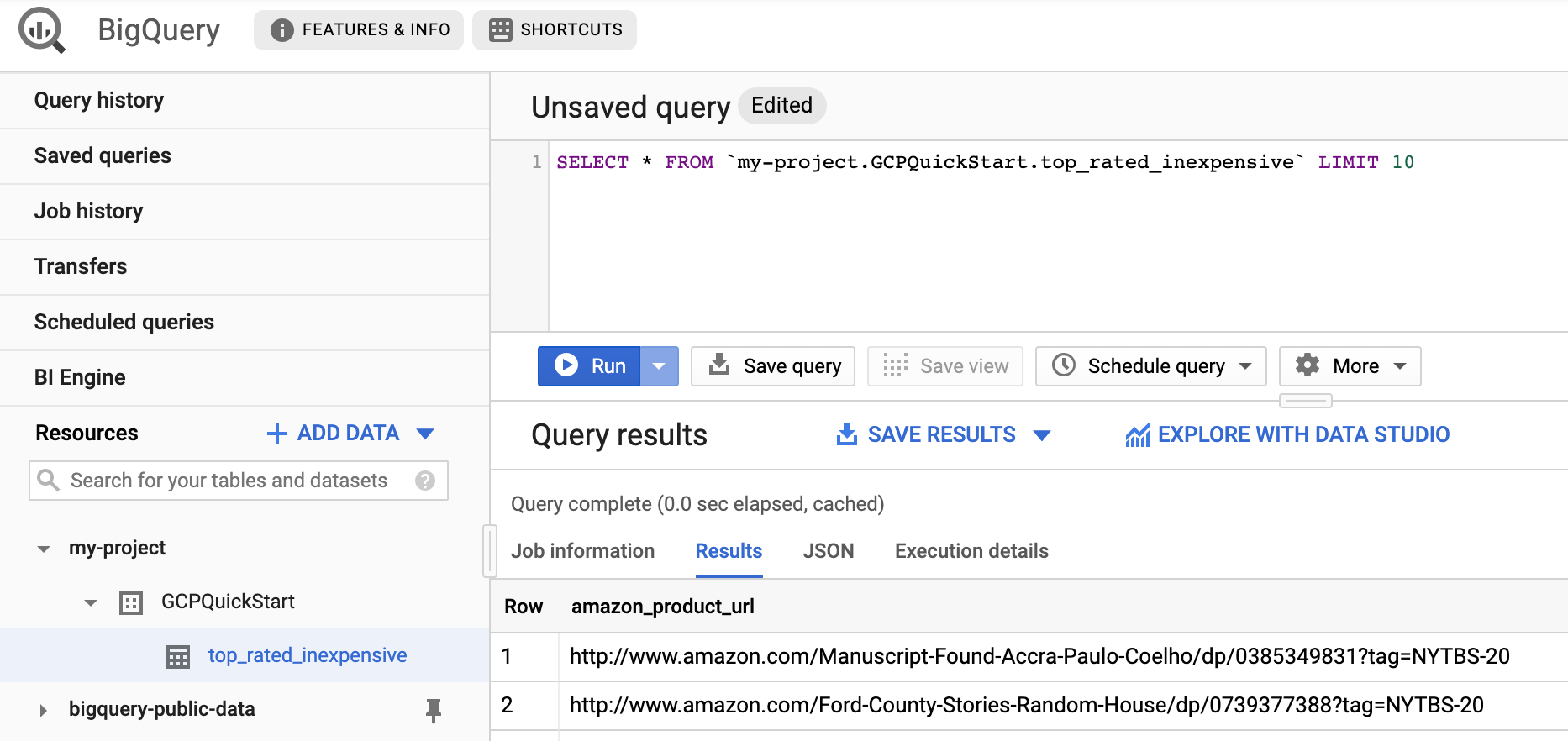

- Ve a la interfaz web de BigQuery.

Para ver una muestra de los resultados, ve al conjunto de datos

DataFusionQuickstartde tu proyecto, haz clic en la tablatop_rated_inexpensivey, luego, ejecuta una consulta simple. Por ejemplo:SELECT * FROM PROJECT_ID.GCPQuickStart.top_rated_inexpensive LIMIT 10Reemplaza PROJECT_ID con el ID del proyecto.

- Borra el conjunto de datos de BigQuery en el que tu canalización escribió en esta guía de inicio rápido.

Borra el proyecto (opcional).

- In the Google Cloud console, go to the Manage resources page.

- In the project list, select the project that you want to delete, and then click Delete.

- In the dialog, type the project ID, and then click Shut down to delete the project.

- Sigue el instructivo de Cloud Data Fusion

- Obtén información sobre los conceptos de Cloud Data Fusion.

Cree una instancia de Cloud Data Fusion

Navega por la interfaz web de Cloud Data Fusion

Cuando usas Cloud Data Fusion, usas la Google Cloud consola y la interfaz web independiente de Cloud Data Fusion.

Para navegar por la interfaz de Cloud Data Fusion, sigue estos pasos:

Implementa una canalización de muestra

Las canalizaciones de muestra están disponibles a través del Centro de noticias de Cloud Data Fusion, que te permite compartir canalizaciones, complementos y soluciones reutilizables de Cloud Data Fusion.

Visualiza tu canalización

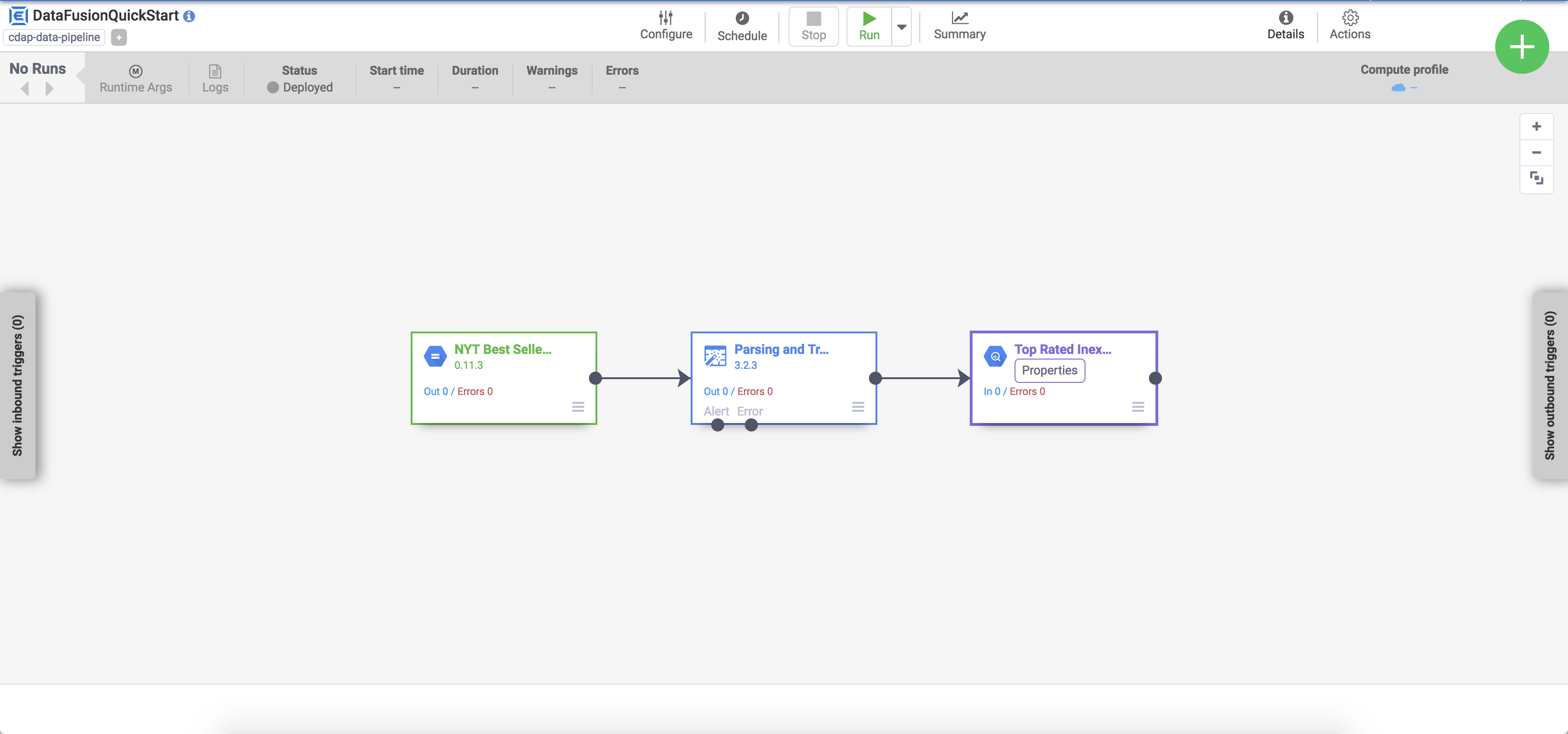

La canalización implementada aparecerá en la vista de detalles de la canalización, donde puedes hacer lo siguiente:

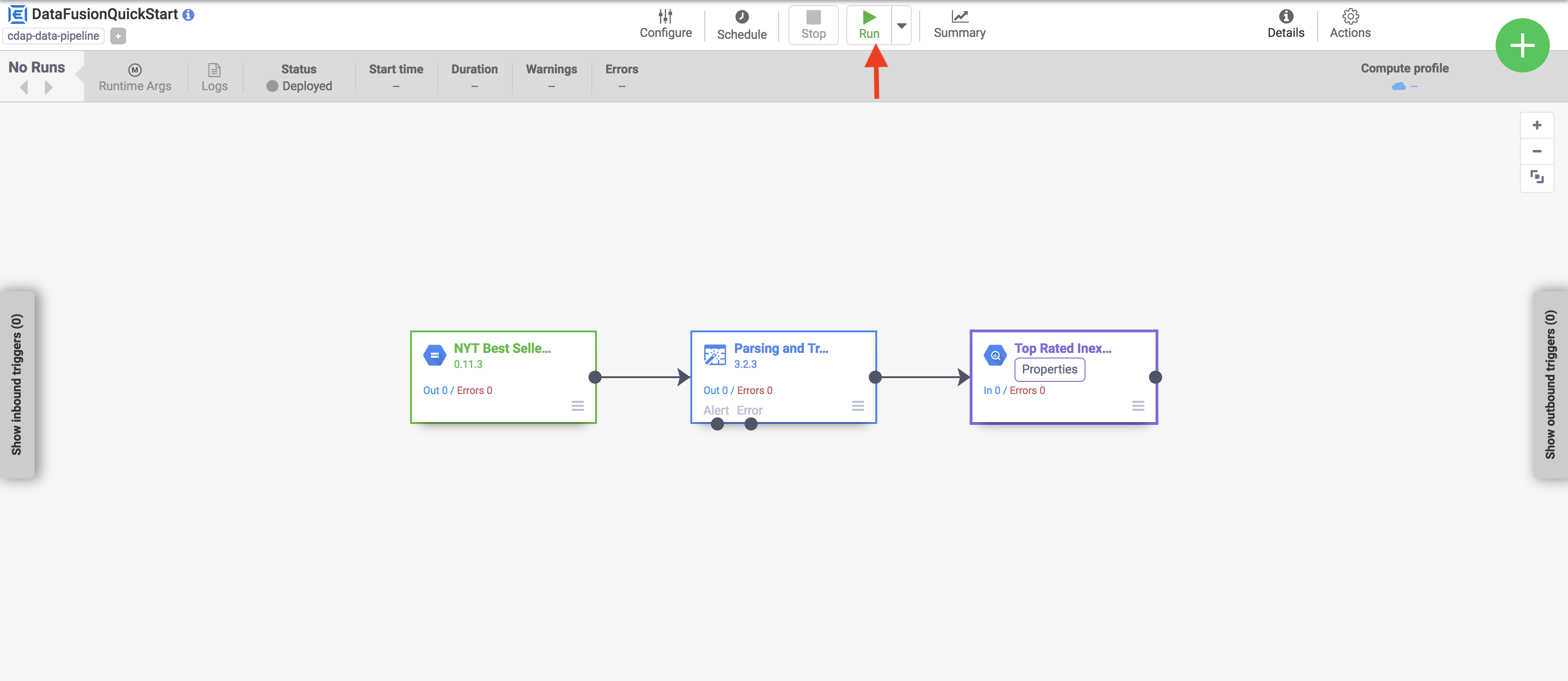

Ejecuta tu canalización

En la vista de detalles de la canalización, haz clic en Ejecutar para ejecutar su canalización.

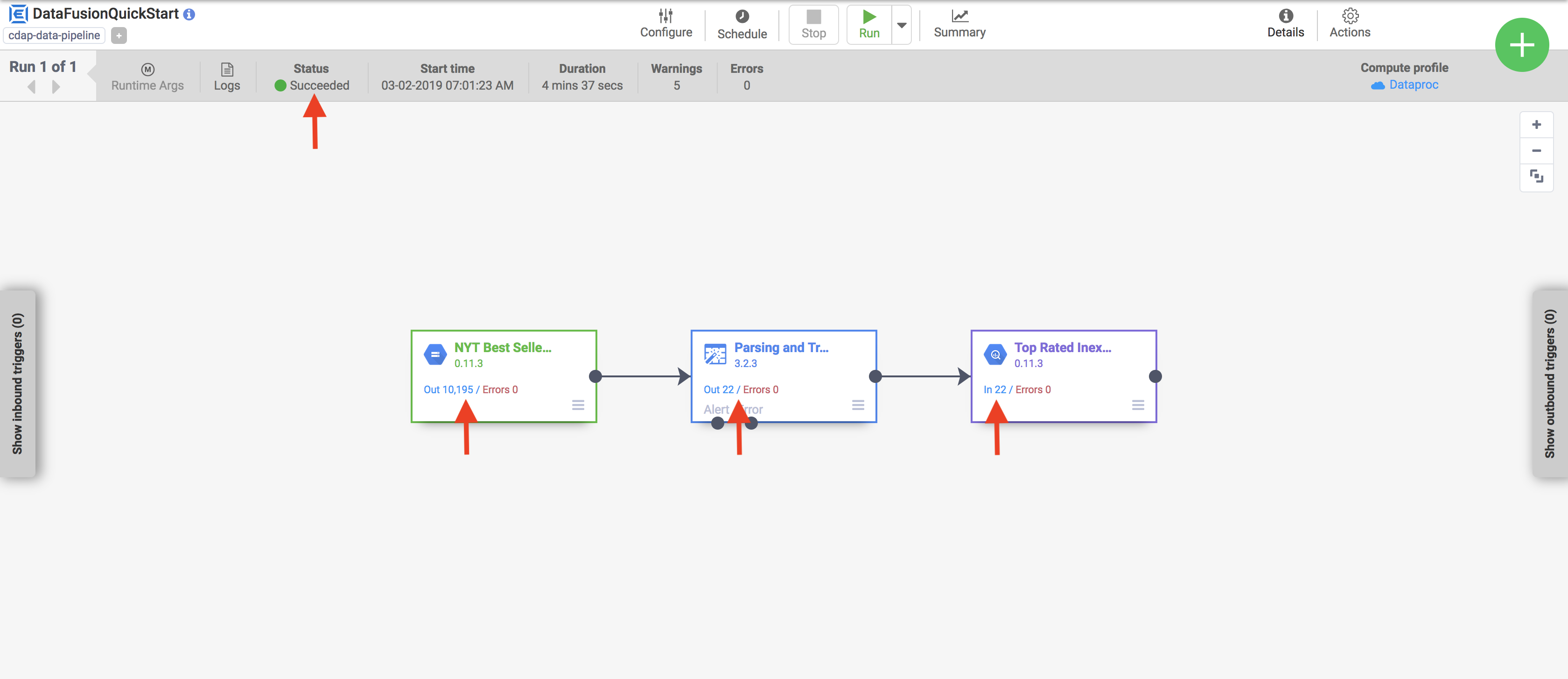

Cuando se ejecuta una canalización, Cloud Data Fusion hace lo siguiente:

Vea los resultados

Después de unos minutos, la canalización finaliza. El estado de la canalización cambia a Finalizada y se muestra la cantidad de registros que procesa cada nodo.

Limpia

Sigue estos pasos para evitar que se apliquen cargos a tu cuenta de Google Cloud por los recursos que usaste en esta página.