Provides deep insights and recommendations derived from retail analytics to customers through a chatbot enabled by Gemini 2.5 Flash

Combines cost-effectiveness with speed to manage intensive AI workloads with NVIDIA L4 GPUs available through Google Cloud

Achieves 99.99% availability with Google Kubernetes Engine (GKE) to ensure continuous service delivery

With Google Cloud, Google AI, and Cloud GPUs, Tictag.io is managing intensive AI processing workloads cost-effectively, powering a chatbot that provides intelligent responses and recommendations to retailers, and operating a secure, scalable data architecture.

The new way to improve retail performance with video analytics

Building on Google Cloud is fast and smooth, and its straightforward interface enables our engineers to deliver new features faster.

Lo Yihang

Chief Technology Officer, Tictag.io

Small issues in store execution, such as misplaced products or empty shelves, can quietly erode retail performance. Tictag.io's AI-powered platform analyzes in-store video to surface these issues in real time, helping retailers improve merchandising, inventory management, and overall store productivity. The platform is supported by a data collection and annotation engine that trains and fine-tunes models across image, text, and audio, and it uses AI agents to drive these store execution improvements.

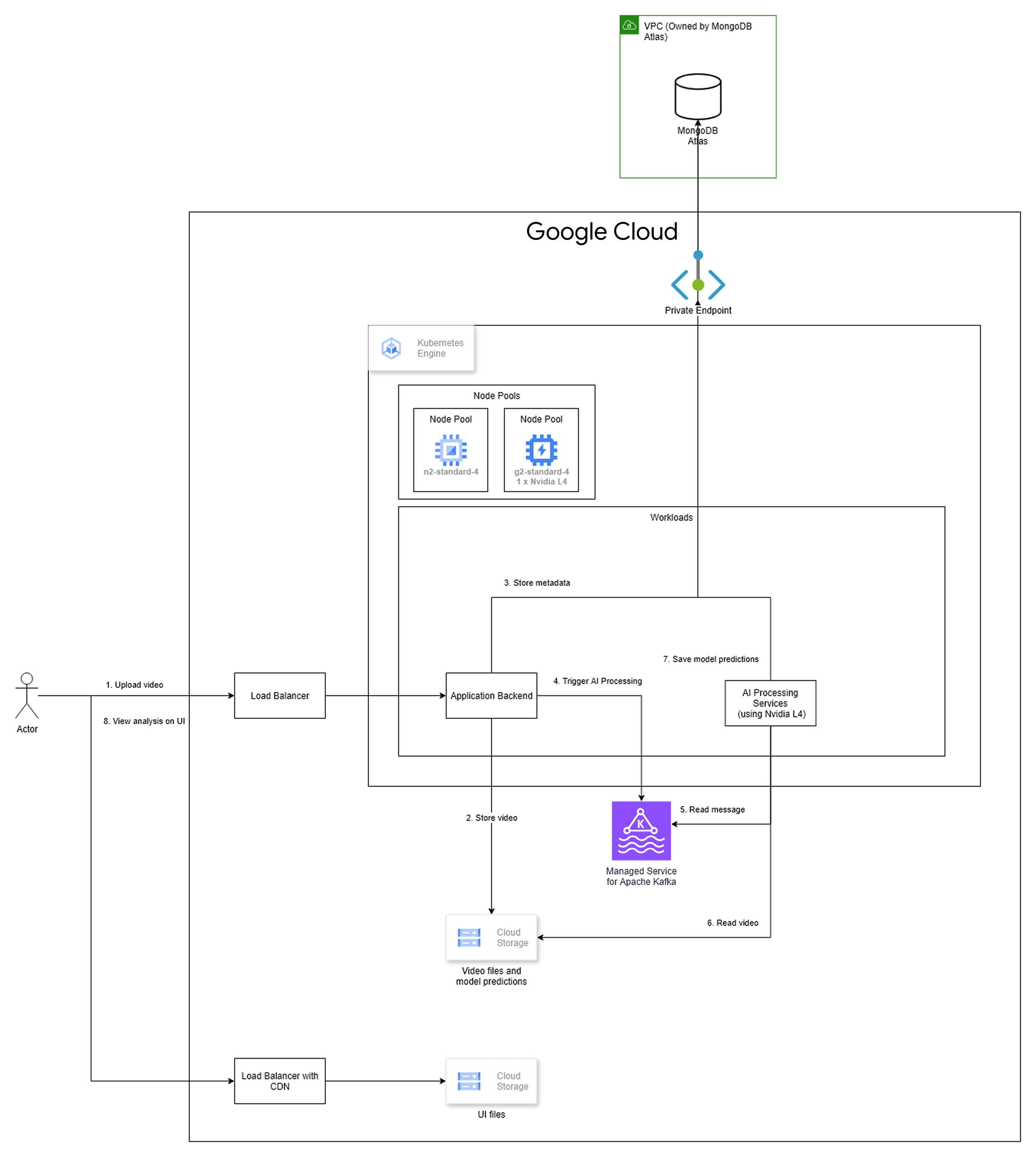

By running its platform in Google Cloud, the organization is using a scalable, robust architecture that handles video processing workloads from ingestion to analysis. Google Kubernetes Engine (GKE) orchestrates Tictag.io's containerized applications using specialized node pools, including a pool equipped with NVIDIA L4 Cloud GPUs to manage intensive AI processing workloads and ensure fast model inference. Google Cloud enables the organization to build and run AI models that, by detecting people, demographics, and events, provide insights into customer traffic, conversions, and other important retail metrics.
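As a rough illustration of how a workload can be steered onto a GPU node pool like the one described above, the sketch below builds a Kubernetes Pod manifest as a Python dictionary. The node label `cloud.google.com/gke-accelerator` and the resource name `nvidia.com/gpu` are the standard GKE conventions for GPU scheduling; the pod name, container image, and the helper `inference_pod_manifest` are hypothetical and do not come from Tictag.io's actual configuration.

```python
import json

# Standard GKE label and resource name for GPU scheduling.
GPU_NODE_LABEL = "cloud.google.com/gke-accelerator"
GPU_RESOURCE = "nvidia.com/gpu"


def inference_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a Pod manifest that targets nodes with NVIDIA L4 GPUs.

    The name and image are placeholders chosen for illustration only.
    """
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Only schedule onto nodes in a pool that carries L4 GPUs.
            "nodeSelector": {GPU_NODE_LABEL: "nvidia-l4"},
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # GPUs are requested through resource limits.
                    "resources": {"limits": {GPU_RESOURCE: gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical pod requesting both GPUs of a two-GPU node.
    manifest = inference_pod_manifest(
        "video-inference", "example.invalid/video-inference:latest", gpus=2
    )
    print(json.dumps(manifest, indent=2))
```

The resulting JSON could be passed to `kubectl apply -f -`. In practice a Deployment would usually wrap this Pod template, so the bare Pod is kept here only to keep the sketch short.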

Retail team members reviewing store performance can track these insights on dashboards and ask a natural-language chatbot, enabled by Gemini 2.5 Flash, for further analysis and recommendations. "For example, managers may ask the chatbot to compare the performance of two different stores over the previous seven days, or act on recommendations such as adding a new team member near a new store display on Saturday afternoon to reduce unattended customer time," explains Lo Yihang, Chief Technology Officer, Tictag.io.

With a Google Cloud data infrastructure underpinning its AI innovation strategy, Tictag.io is ideally positioned to realize plans to expand into mining, ecommerce fulfillment, and smart city applications.

The infrastructure comprises:

- Cloud Load Balancing, Cloud Storage, and Managed Service for Apache Kafka to process data quickly and easily

- A private endpoint within Virtual Private Cloud (VPC) that connects the company's cloud environment securely and with low latency to its primary MongoDB Atlas database, and

- Application Load Balancer integrated with Cloud CDN to deliver UI files from Cloud Storage to ensure a fast, responsive user experience.

Why Google Cloud

To power intensive AI processing for its retail video analysis platform, Tictag.io needed a GPU that balanced cost and performance for the organization's needs. Its incumbent cloud provider could not meet this requirement, and the platform, AI models, and data also required scalable, secure cloud infrastructure and services, so Tictag.io turned to Google Cloud.

"We selected the NVIDIA L4 Cloud GPU because we can fit two into one node and it sits at the sweet spot of cost effectiveness and speed for us," says Lo.

The Gemini LLM also meets Tictag.io's cost requirements, which is crucial as the organization did not want to impose any restrictions on the number of questions each client could ask of the chatbot each month. "With Gemini 2.5 Flash, we could strike the right balance between cost and being able to offer that capability," says Lo.

The LLM's large context window—the amount of data the model can process to respond to a single query—also plays a key role in its ability to understand data and generate high-quality recommendations. "Many queries require context about the retail business and individual store, as well as auxiliary information such as the number of holidays in a given month," explains Lo. "With a larger context window, we can fit more context in, enabling the Gemini 2.5 Flash-assisted chatbot to understand users' intent and provide more appropriate responses."
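To make the role of the context window concrete, here is a minimal sketch of how supporting context might be packed into a prompt under a token budget. It is an illustration under stated assumptions, not Tictag.io's implementation: the `ContextItem` type, the `build_prompt` helper, the priority scheme, and the four-characters-per-token estimate are all hypothetical, and a real system would use the model's own token counting.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    label: str     # e.g. "store profile" or "holiday calendar"
    text: str
    priority: int  # lower number means more important


def estimate_tokens(text: str) -> int:
    # Rough heuristic of about 4 characters per token. This is an
    # assumption, not the tokenizer the model actually uses.
    return max(1, len(text) // 4)


def build_prompt(question: str, items: list[ContextItem], budget_tokens: int) -> str:
    """Add context in priority order while it still fits the budget.

    Only the item text is counted against the budget; the short
    section labels are ignored to keep the sketch simple.
    """
    remaining = budget_tokens - estimate_tokens(question)
    chosen = []
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if cost <= remaining:
            chosen.append(item)
            remaining -= cost
    sections = [f"[{item.label}]\n{item.text}" for item in chosen]
    sections.append(f"[question]\n{question}")
    return "\n\n".join(sections)
```

With a small budget, lower-priority items such as a holiday calendar are dropped first. A larger context window raises the budget, so more of the business, store, and auxiliary context reaches the model alongside the question.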

Onboarding to the new cloud infrastructure proved seamless, with the organization utilizing frictionless workflows to deliver best-practice security and networking architectures. With uptime on Google Cloud at 99.99%, ensuring continuous service delivery, Tictag.io can focus on innovation, product, and expansion. "Building on Google Cloud is fast and smooth, and its straightforward interface enables our engineers to deliver new features faster," concludes Lo. Tictag.io is now looking at using Vertex AI to train AI models monthly on new datasets as the organization moves into a fully operational phase.

Founded in 2019 in Singapore, with operations across Southeast Asia and Korea, Tictag.io provides an integrated platform that transforms retail and field data into measurable insights.

Industry: Technology

Location: Singapore

Products: Cloud CDN, Cloud GPUs, Cloud Load Balancing, Cloud Storage, Gemini 2.5 Flash, Google Cloud, Google Kubernetes Engine (GKE), Managed Service for Apache Kafka, Vertex AI, Virtual Private Cloud (VPC)