This tutorial shows how to upgrade a multi-cluster Google Kubernetes Engine (GKE) environment by using Multi Cluster Ingress. It is a continuation of the document about multi-cluster GKE upgrades using Multi Cluster Ingress, which explains the process, architecture, and terms in more detail. We recommend that you read the concept document before this tutorial.

For a detailed comparison between Multi Cluster Ingress (MCI), Multi-cluster Gateway (MCG), and load balancer with Standalone Network Endpoint Groups (LB and Standalone NEGs), see Choose your multi-cluster load balancing API for GKE.

This document is intended for Google Cloud administrators who are responsible for maintaining fleets of GKE clusters.

We recommend that you auto-upgrade your GKE clusters. Auto-upgrade is a fully managed way of upgrading your clusters (nodes and control plane) automatically on a release schedule that Google Cloud determines. It requires no operator intervention. However, if you want more control over how and when clusters are upgraded, this tutorial walks through a method for upgrading multiple clusters where your apps run on all of the clusters. The method uses Multi Cluster Ingress to drain one cluster at a time before you upgrade it.

Architecture

This tutorial uses the following architecture. There are three clusters in total: two clusters (blue and green) act as identical clusters with the same app deployed, and the third cluster (ingress-config) acts as the control plane cluster that configures Multi Cluster Ingress. In this tutorial, you deploy a sample app to the two app clusters (blue and green).

Objectives

- Create three GKE clusters and register them as a fleet.

- Configure one GKE cluster (ingress-config) as the central configuration cluster.

- Deploy a sample app to the other GKE clusters.

- Configure Multi Cluster Ingress to send client traffic to the app that runs on both app clusters.

- Set up a load generator for the app and configure monitoring.

- Remove (drain) one app cluster from the Multi Cluster Ingress and upgrade the drained cluster.

- Return traffic to the upgraded cluster by using Multi Cluster Ingress.

Costs

In this document, you use billable components of Google Cloud, such as the GKE clusters and the Cloud Load Balancing resources that you create.

To generate a cost estimate based on your projected usage, use the pricing calculator.

When you finish the tasks that are described in this document, you can avoid continued billing by deleting the resources that you created. For more information, see Clean up.

Before you begin

- This tutorial requires that you set up Multi Cluster Ingress so that the following is in place:

  - Two or more clusters with the same apps, such as namespaces, Deployments, and Services, running on all clusters.

  - Auto-upgrade is turned off for all clusters.

  - The clusters are VPC-native clusters that use alias IP address ranges.

  - HTTP load balancing is enabled (it is enabled by default).

  - gcloud --version must report 369 or higher. The GKE cluster registration steps depend on this version or higher.
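The version requirement above can be checked before you start. The following is a minimal sketch, not part of the original tutorial: the helper name version_ge is hypothetical, it relies on the -V option of GNU sort, and the commented gcloud invocation assumes that gcloud version --format=json exposes the SDK version under the "Google Cloud SDK" key.

```shell
# Returns success (exit 0) when version $1 is greater than or equal to version $2.
# Assumption: GNU sort with -V (version sort) is available, as it is in Cloud Shell.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Possible use in Cloud Shell (assumed output key, not run here):
#   installed=$(gcloud version --format=json | jq -r '."Google Cloud SDK"')
#   version_ge "${installed}" "369.0.0" || echo "Update the gcloud CLI first"
```

The comparison sorts the two versions and checks that the required one sorts first, which avoids parsing the version components by hand.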

- In the Google Cloud console, on the project selector page, select or create a Google Cloud project.

- Make sure that billing is enabled for your Google Cloud project.

- In the Google Cloud console, activate Cloud Shell.

Set your default project:

    export PROJECT=$(gcloud info --format='value(config.project)')
    gcloud config set project ${PROJECT}

Enable the GKE, Hub, and multiclusteringress APIs:

    gcloud services enable container.googleapis.com \
        gkehub.googleapis.com \
        multiclusteringress.googleapis.com \
        multiclusterservicediscovery.googleapis.com

Set up the environment

In Cloud Shell, clone the repository to get the files for this tutorial:

    cd ${HOME}
    git clone https://github.com/GoogleCloudPlatform/kubernetes-engine-samples

Change to the tutorial directory and store its path in the WORKDIR variable:

    cd kubernetes-engine-samples/networking/gke-multicluster-upgrade-mci/
    export WORKDIR=`pwd`

Create and register GKE clusters to Hub

In this section, you create three GKE clusters and register them to GKE Enterprise Hub.

Create GKE clusters

In Cloud Shell, create three GKE clusters:

    gcloud container clusters create ingress-config --zone us-west1-a \
        --release-channel=None --no-enable-autoupgrade --num-nodes=4 \
        --enable-ip-alias --workload-pool=${PROJECT}.svc.id.goog --quiet --async
    gcloud container clusters create blue --zone us-west1-b --num-nodes=3 \
        --release-channel=None --no-enable-autoupgrade --enable-ip-alias \
        --workload-pool=${PROJECT}.svc.id.goog --quiet --async
    gcloud container clusters create green --zone us-west1-c --num-nodes=3 \
        --release-channel=None --no-enable-autoupgrade --enable-ip-alias \
        --workload-pool=${PROJECT}.svc.id.goog --quiet

For the purposes of this tutorial, you create the clusters in a single region, in three different zones: us-west1-a, us-west1-b, and us-west1-c. For more information about regions and zones, see Geography and regions.

Wait a few minutes until all clusters are created successfully. Make sure that the clusters are running:

    gcloud container clusters list

The output is similar to the following:

    NAME: ingress-config
    LOCATION: us-west1-a
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 35.233.186.135
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 4
    STATUS: RUNNING

    NAME: blue
    LOCATION: us-west1-b
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 34.82.35.222
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 3
    STATUS: RUNNING

    NAME: green
    LOCATION: us-west1-c
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 35.185.204.26
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 3
    STATUS: RUNNING

Create a kubeconfig file and connect to all clusters to generate entries in the kubeconfig file:

    touch gke-upgrade-kubeconfig
    export KUBECONFIG=gke-upgrade-kubeconfig
    gcloud container clusters get-credentials ingress-config \
        --zone us-west1-a --project ${PROJECT}
    gcloud container clusters get-credentials blue --zone us-west1-b \
        --project ${PROJECT}
    gcloud container clusters get-credentials green --zone us-west1-c \
        --project ${PROJECT}

The kubeconfig file holds a user and a context for each cluster, which is how you authenticate to the clusters. After you create the kubeconfig file, you can quickly switch context between clusters.

Verify that you have three clusters in the kubeconfig file:

    kubectl config view -ojson | jq -r '.clusters[].name'

The output is the following:

    gke_gke-multicluster-upgrades_us-west1-a_ingress-config
    gke_gke-multicluster-upgrades_us-west1-b_blue
    gke_gke-multicluster-upgrades_us-west1-c_green

Get the context for the three clusters for later use:

    export INGRESS_CONFIG_CLUSTER=$(kubectl config view -ojson | jq \
        -r '.clusters[].name' | grep ingress-config)
    export BLUE_CLUSTER=$(kubectl config view -ojson | jq \
        -r '.clusters[].name' | grep blue)
    export GREEN_CLUSTER=$(kubectl config view -ojson | jq \
        -r '.clusters[].name' | grep green)
    echo -e "${INGRESS_CONFIG_CLUSTER}\n${BLUE_CLUSTER}\n${GREEN_CLUSTER}"

The output is the following:

    gke_gke-multicluster-upgrades_us-west1-a_ingress-config
    gke_gke-multicluster-upgrades_us-west1-b_blue
    gke_gke-multicluster-upgrades_us-west1-c_green
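The grep-based selection above matches any name that contains the search string, so a project that also had a cluster such as blue-canary would return two names. As a hedged alternative, the following sketch selects a context by its exact cluster name. The helper name pick_context is hypothetical, and the sketch assumes the gke_PROJECT_ZONE_CLUSTER naming shown in the output above.

```shell
# Reads context names on stdin (one per line) and prints the single name whose
# last "_"-separated field equals $1. Fails if zero or several names match.
# Assumption: names follow the gke_PROJECT_ZONE_CLUSTER pattern shown above.
pick_context() {
  awk -F '_' -v want="$1" '
    $NF == want { match_name = $0; count++ }
    END { if (count == 1) print match_name; else exit 1 }
  '
}

# Possible use (not run here):
#   export BLUE_CLUSTER=$(kubectl config view -ojson \
#       | jq -r '.clusters[].name' | pick_context blue)
```

Failing on an ambiguous match is a deliberate choice here: an empty or multi-line context variable would otherwise surface later as a confusing kubectl error.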

Register GKE clusters to a fleet

Registering your clusters to a fleet lets you operate your Kubernetes clusters across hybrid environments. Clusters that are registered to fleets can use advanced GKE features such as Multi Cluster Ingress. To register a GKE cluster to a fleet, you can use a Google Cloud service account directly, or you can use the recommended Workload Identity Federation for GKE approach, which lets a Kubernetes service account in your GKE cluster act as an Identity and Access Management service account.

Register the three clusters as a fleet:

    gcloud container fleet memberships register ingress-config \
        --gke-cluster=us-west1-a/ingress-config \
        --enable-workload-identity
    gcloud container fleet memberships register blue \
        --gke-cluster=us-west1-b/blue \
        --enable-workload-identity
    gcloud container fleet memberships register green \
        --gke-cluster=us-west1-c/green \
        --enable-workload-identity

Verify that the clusters are registered:

    gcloud container fleet memberships list

The output is similar to the following:

    NAME: blue
    EXTERNAL_ID: 401b4f08-8246-4f97-a6d8-cf1b78c2a91d

    NAME: green
    EXTERNAL_ID: 8041c36a-9d42-40c8-a67f-54fcfd84956e

    NAME: ingress-config
    EXTERNAL_ID: 65ac48fe-5043-42db-8b1e-944754a0d725

To configure the ingress-config cluster as the configuration cluster for Multi Cluster Ingress, enable the multiclusteringress feature through Hub:

    gcloud container fleet ingress enable --config-membership=ingress-config

The preceding command adds the MultiClusterIngress and MultiClusterService CRDs (Custom Resource Definitions) to the ingress-config cluster. This command takes a few minutes to complete. Wait before you proceed to the next step.

Verify that the ingress-config cluster was configured successfully for Multi Cluster Ingress:

    watch gcloud container fleet ingress describe

Wait until the output is similar to the following:

    createTime: '2022-07-05T10:21:40.383536315Z'
    membershipStates:
      projects/662189189487/locations/global/memberships/blue:
        state:
          code: OK
          updateTime: '2022-07-08T10:59:44.230329189Z'
      projects/662189189487/locations/global/memberships/green:
        state:
          code: OK
          updateTime: '2022-07-08T10:59:44.230329950Z'
      projects/662189189487/locations/global/memberships/ingress-config:
        state:
          code: OK
          updateTime: '2022-07-08T10:59:44.230328520Z'
    name: projects/gke-multicluster-upgrades/locations/global/features/multiclusteringress
    resourceState:
      state: ACTIVE
    spec:
      multiclusteringress:
        configMembership: projects/gke-multicluster-upgrades/locations/global/memberships/ingress-config
    state:
      state:
        code: OK
        description: Ready to use
        updateTime: '2022-07-08T10:57:33.303543609Z'
    updateTime: '2022-07-08T10:59:45.247576318Z'

To exit the watch command, press Control+C.
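If you script these steps instead of watching the output interactively, a small polling helper can replace watch. The following is a minimal sketch under stated assumptions: the names wait_until and feature_is_ready are hypothetical, and the commented readiness check assumes that the state.state.code field shown in the output above can be read with --format='value(state.state.code)'.

```shell
# Runs the given command every INTERVAL seconds until it succeeds or until
# TIMEOUT seconds have elapsed. Returns 0 on success and 1 on timeout.
# Usage: wait_until TIMEOUT INTERVAL COMMAND [ARGS...]
wait_until() {
  timeout_s="$1"
  interval_s="$2"
  shift 2
  elapsed=0
  until "$@"; do
    if [ "${elapsed}" -ge "${timeout_s}" ]; then
      return 1
    fi
    sleep "${interval_s}"
    elapsed=$((elapsed + interval_s))
  done
  return 0
}

# Possible use (assumed field path, not run here):
#   feature_is_ready() {
#     gcloud container fleet ingress describe \
#         --format='value(state.state.code)' | grep -qx OK
#   }
#   wait_until 600 15 feature_is_ready || echo "Feature is not ready yet"
```

The same helper can wrap any later wait in this tutorial, such as waiting for the load balancer VIP to appear.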

Deploy a sample application to the blue and green clusters

In Cloud Shell, deploy the sample whereami app to the blue and green clusters:

    kubectl --context ${BLUE_CLUSTER} apply -f ${WORKDIR}/application-manifests
    kubectl --context ${GREEN_CLUSTER} apply -f ${WORKDIR}/application-manifests

Wait a few minutes and make sure that all Pods in the blue and green clusters have a Running status:

    kubectl --context ${BLUE_CLUSTER} get pods
    kubectl --context ${GREEN_CLUSTER} get pods

The output is similar to the following:

    NAME                                   READY   STATUS    RESTARTS   AGE
    whereami-deployment-756c7dc74c-zsmr6   1/1     Running   0          74s
    NAME                                   READY   STATUS    RESTARTS   AGE
    whereami-deployment-756c7dc74c-sndz7   1/1     Running   0          68s

Configure Multi Cluster Ingress

In this section, you create a Multi Cluster Ingress that sends traffic to the application that runs on the blue and green clusters. You use Cloud Load Balancing to create a load balancer that uses the whereami app in the blue and green clusters as backends. To create the load balancer, you need two resources: a MultiClusterIngress and one or more MultiClusterServices.

MultiClusterIngress and MultiClusterService objects are multi-cluster analogs of the existing Kubernetes Ingress and Service resources that are used in the single-cluster context.

In Cloud Shell, deploy the MultiClusterIngress resource to the ingress-config cluster:

    kubectl --context ${INGRESS_CONFIG_CLUSTER} apply -f ${WORKDIR}/multicluster-manifests/mci.yaml

The output is the following:

    multiclusteringress.networking.gke.io/whereami-mci created

Deploy the MultiClusterService resource to the ingress-config cluster:

    kubectl --context ${INGRESS_CONFIG_CLUSTER} apply -f ${WORKDIR}/multicluster-manifests/mcs-blue-green.yaml

The output is the following:

    multiclusterservice.networking.gke.io/whereami-mcs created

To compare the two resources, do the following:

Inspect the MultiClusterIngress resource:

    kubectl --context ${INGRESS_CONFIG_CLUSTER} get multiclusteringress -o yaml

The output contains the following:

    spec:
      template:
        spec:
          backend:
            serviceName: whereami-mcs
            servicePort: 8080

The MultiClusterIngress resource is similar to the Kubernetes Ingress resource, except that the serviceName specification points to a MultiClusterService resource.

Inspect the MultiClusterService resource:

    kubectl --context ${INGRESS_CONFIG_CLUSTER} get multiclusterservice -o yaml

The output contains the following:

    spec:
      clusters:
      - link: us-west1-b/blue
      - link: us-west1-c/green
      template:
        spec:
          ports:
          - name: web
            port: 8080
            protocol: TCP
            targetPort: 8080
          selector:
            app: whereami

The MultiClusterService resource is similar to a Kubernetes Service resource, except that it has a clusters specification. The clusters value is the list of registered clusters where the MultiClusterService resource is created.

Verify that the MultiClusterIngress resource created a load balancer with a backend service that points to the MultiClusterService resource:

    watch kubectl --context ${INGRESS_CONFIG_CLUSTER} \
        get multiclusteringress -o jsonpath="{.items[].status.VIP}"

This process can take up to 10 minutes. Wait until the output is similar to the following:

    34.107.246.9

To exit the watch command, press Control+C.

In Cloud Shell, get the Cloud Load Balancing VIP:

    export GCLB_VIP=$(kubectl --context ${INGRESS_CONFIG_CLUSTER} \
        get multiclusteringress -o json | jq -r '.items[].status.VIP') \
        && echo ${GCLB_VIP}

The output is similar to the following:

    34.107.246.9

Use curl to access the load balancer and the deployed application:

    curl ${GCLB_VIP}

The output is similar to the following:

    {
      "cluster_name": "green",
      "host_header": "34.107.246.9",
      "pod_name": "whereami-deployment-756c7dc74c-sndz7",
      "pod_name_emoji": "😇",
      "project_id": "gke-multicluster-upgrades",
      "timestamp": "2022-07-08T14:26:07",
      "zone": "us-west1-c"
    }

Run the curl command several times. Notice that the requests are load-balanced between the whereami application instances that are deployed to the two clusters, blue and green.
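Instead of reading individual responses, you can count how many responses each cluster served. The following sketch is illustrative only: the helper name tally_clusters is hypothetical, and it assumes that every response contains a cluster_name field like the one in the sample output above. It uses grep and sed so that it has no dependency beyond standard tools.

```shell
# Reads whereami JSON responses on stdin and prints one "cluster: count" line
# per cluster, sorted by cluster name.
# Assumption: each response has a "cluster_name": "<value>" field.
tally_clusters() {
  grep -o '"cluster_name": *"[^"]*"' \
    | sed 's/.*"\([^"]*\)"$/\1/' \
    | sort | uniq -c | awk '{ print $2 ": " $1 }'
}

# Possible use (not run here):
#   for i in $(seq 1 20); do curl -s "${GCLB_VIP}"; echo; done | tally_clusters
```

With both clusters in the load balancing pool, you would expect to see a line for blue and a line for green. After a cluster is drained, only the remaining cluster should appear.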

Set up the load generator

In this section, you set up a loadgenerator service that generates client traffic to the Cloud Load Balancing VIP. At first, traffic is sent to the blue and green clusters because the MultiClusterService resource is set up to send traffic to both clusters. Later, you configure the MultiClusterService resource to send traffic to a single cluster.

From the ${WORKDIR} directory, configure the loadgenerator manifest to send client traffic to Cloud Load Balancing:

    TEMPLATE=loadgen-manifests/loadgenerator.yaml.templ && envsubst < ${TEMPLATE} > ${TEMPLATE%.*}

Deploy the loadgenerator to the ingress-config cluster:

    kubectl --context ${INGRESS_CONFIG_CLUSTER} apply -f ${WORKDIR}/loadgen-manifests

Verify that the loadgenerator Pods in the ingress-config cluster have a Running status:

    kubectl --context ${INGRESS_CONFIG_CLUSTER} get pods

The output is similar to the following:

    NAME                             READY   STATUS    RESTARTS   AGE
    loadgenerator-5498cbcb86-hqscp   1/1     Running   0          53s
    loadgenerator-5498cbcb86-m2z2z   1/1     Running   0          53s
    loadgenerator-5498cbcb86-p56qb   1/1     Running   0          53s

If any of the Pods don't have a Running status, wait a few minutes and then run the command again.

Supervisar el tráfico

En esta sección, supervisarás el tráfico a la app whereami mediante la consola de Google Cloud.

En la sección anterior, configuraste una implementación loadgenerator que simula el tráfico de cliente mediante el acceso a la app whereami a través de la VIP de Cloud Load Balancing. Puedes supervisar estas métricas en la consola de Google Cloud. Primero debes configurar la supervisión para que puedas realizarla mientras desvías los clústeres para las actualizaciones (se describe en la siguiente sección).

Crea un panel para mostrar el tráfico que llega a Ingress de varios clústeres:

export DASH_ID=$(gcloud monitoring dashboards create \ --config-from-file=dashboards/cloud-ops-dashboard.json \ --format=json | jq -r ".name" | awk -F '/' '{print $4}')El resultado es similar a este:

Created [721b6c83-8f9b-409b-a009-9fdf3afb82f8]Las métricas de Cloud Load Balancing están disponibles en la consola de Google Cloud. Genera la URL:

echo "https://console.cloud.google.com/monitoring/dashboards/builder/${DASH_ID}/?project=${PROJECT}&timeDomain=1h"El resultado es similar a este:

https://console.cloud.google.com/monitoring/dashboards/builder/721b6c83-8f9b-409b-a009-9fdf3afb82f8/?project=gke-multicluster-upgrades&timeDomain=1h"En un navegador, ve a la URL generada por el comando anterior.

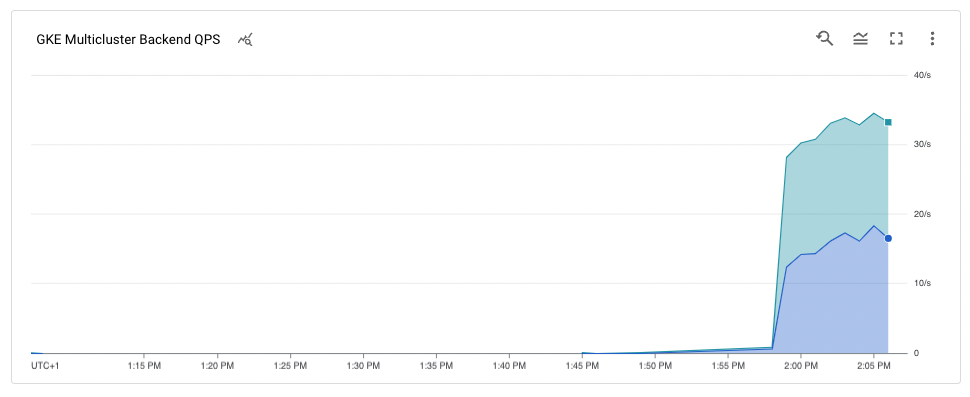

El tráfico a la aplicación de muestra va del generador de cargas a los clústeres

blueygreen(como lo indican las dos zonas en las que se encuentran los clústeres). En el gráfico de cronograma de las métricas, se muestra el tráfico que se dirige a ambos backends. Los valoresk8s1-de desplazamiento del mouse indican que los grupos de extremos de red (NEG) de los dosMulticlusterServicesde frontend se ejecutan en los clústeresblueygreen.

Drain and upgrade the blue cluster

In this section, you drain the blue cluster. Draining a cluster means that you remove it from the load balancing pool. After you drain the blue cluster, all client traffic that is destined for the application goes to the green cluster.

You can monitor this process as described in the previous section. After the cluster is drained, you can upgrade it. After the upgrade, you can put it back in the load balancing pool. You repeat these steps to upgrade the other cluster (not shown in this tutorial).

To drain the blue cluster, you update the MultiClusterService resource in the ingress-config cluster and remove the blue cluster from the clusters specification.
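To make the drain mechanism concrete, the following sketch prints a MultiClusterService manifest whose clusters specification lists only the cluster links that you pass in. It is an illustration, not the content of the repository's manifest files: the helper name render_mcs is hypothetical, the fields are taken from the kubectl output shown earlier in this tutorial, and details such as the namespace are omitted because the source does not show them.

```shell
# Prints a MultiClusterService manifest whose spec.clusters lists only the
# cluster links passed as arguments (for example "us-west1-c/green").
# Assumption: field values mirror the whereami-mcs resource shown earlier;
# the files in the tutorial repository may differ.
render_mcs() {
  cat <<'EOF'
apiVersion: networking.gke.io/v1
kind: MultiClusterService
metadata:
  name: whereami-mcs
spec:
  template:
    spec:
      selector:
        app: whereami
      ports:
      - name: web
        protocol: TCP
        port: 8080
        targetPort: 8080
  clusters:
EOF
  for link in "$@"; do
    printf '  - link: "%s"\n' "${link}"
  done
}

# Possible use (not run here): compare the drained and the full pool.
#   render_mcs us-west1-c/green
#   render_mcs us-west1-b/blue us-west1-c/green
```

Draining a cluster therefore changes only the clusters list. The template, and with it the selector and ports, stays the same, which is why the Pods in the drained cluster keep running while they stop receiving traffic.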

Desvía el clúster azul

En Cloud Shell, actualiza el recurso

MulticlusterServiceen el clústeringress-config:kubectl --context ${INGRESS_CONFIG_CLUSTER} \ apply -f ${WORKDIR}/multicluster-manifests/mcs-green.yamlVerifica que solo tengas el clúster

greenen la especificaciónclusters:kubectl --context ${INGRESS_CONFIG_CLUSTER} get multiclusterservice \ -o json | jq '.items[].spec.clusters'Este es el resultado:

[ { "link": "us-west1-c/green" } ]Solo el clúster

greenaparece en la especificaciónclusters, por lo que únicamente el clústergreenestá en el grupo de balanceo de cargas.Puedes ver las métricas de Cloud Load Balancing en la consola de Google Cloud. Genera la URL:

echo "https://console.cloud.google.com/monitoring/dashboards/builder/${DASH_ID}/?project=${PROJECT}&timeDomain=1h"En un navegador, ve a la URL generada a partir del comando anterior.

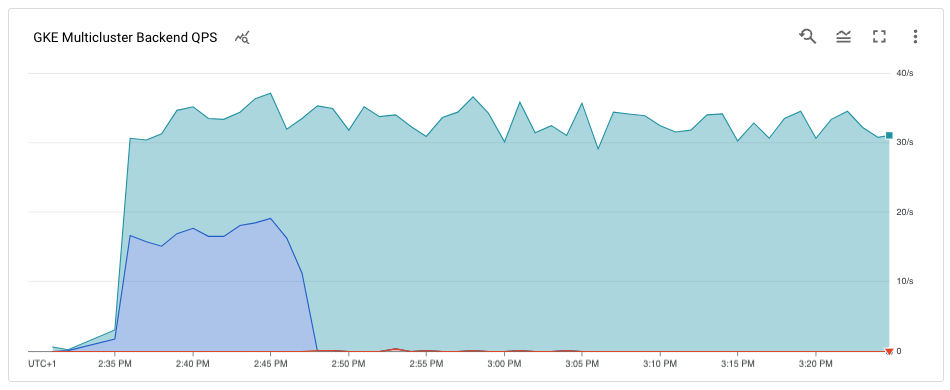

En el gráfico, se muestra que solo el clúster

greenrecibe tráfico.

Upgrade the blue cluster

Now that the blue cluster no longer receives client traffic, you can upgrade it (control plane and nodes).

In Cloud Shell, get the current version of the clusters:

    gcloud container clusters list

The output is similar to the following:

    NAME: ingress-config
    LOCATION: us-west1-a
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 35.233.186.135
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 4
    STATUS: RUNNING

    NAME: blue
    LOCATION: us-west1-b
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 34.82.35.222
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 3
    STATUS: RUNNING

    NAME: green
    LOCATION: us-west1-c
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 35.185.204.26
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 3
    STATUS: RUNNING

Your cluster versions might be different depending on when you complete this tutorial.

Get the list of available MasterVersions versions in the zone:

    gcloud container get-server-config --zone us-west1-b --format=json | jq \
        '.validMasterVersions[0:20]'

The output is similar to the following:

    [
      "1.24.1-gke.1400",
      "1.23.7-gke.1400",
      "1.23.6-gke.2200",
      "1.23.6-gke.1700",
      "1.23.6-gke.1501",
      "1.23.6-gke.1500",
      "1.23.5-gke.2400",
      "1.23.5-gke.1503",
      "1.23.5-gke.1501",
      "1.22.10-gke.600",
      "1.22.9-gke.2000",
      "1.22.9-gke.1500",
      "1.22.9-gke.1300",
      "1.22.8-gke.2200",
      "1.22.8-gke.202",
      "1.22.8-gke.201",
      "1.22.8-gke.200",
      "1.21.13-gke.900",
      "1.21.12-gke.2200",
      "1.21.12-gke.1700"
    ]

Get the list of available NodeVersions versions in the zone:

    gcloud container get-server-config --zone us-west1-b --format=json | jq \
        '.validNodeVersions[0:20]'

The output is similar to the following:

    [
      "1.24.1-gke.1400",
      "1.23.7-gke.1400",
      "1.23.6-gke.2200",
      "1.23.6-gke.1700",
      "1.23.6-gke.1501",
      "1.23.6-gke.1500",
      "1.23.5-gke.2400",
      "1.23.5-gke.1503",
      "1.23.5-gke.1501",
      "1.22.10-gke.600",
      "1.22.9-gke.2000",
      "1.22.9-gke.1500",
      "1.22.9-gke.1300",
      "1.22.8-gke.2200",
      "1.22.8-gke.202",
      "1.22.8-gke.201",
      "1.22.8-gke.200",
      "1.22.7-gke.1500",
      "1.22.7-gke.1300",
      "1.22.7-gke.900"
    ]

Set an environment variable for a version that appears in both the MasterVersions and NodeVersions lists and that is later than the current version of the blue cluster, for example:

    export UPGRADE_VERSION="1.22.10-gke.600"

This tutorial uses version 1.22.10-gke.600. Your cluster versions might be different depending on the versions that are available when you complete this tutorial. For more information about upgrading, see Manually upgrading a cluster or node pool.

Upgrade the control plane of the blue cluster:

    gcloud container clusters upgrade blue \
        --zone us-west1-b --master --cluster-version ${UPGRADE_VERSION}

To confirm the upgrade, press Y.

This process takes a few minutes to complete. Wait until the upgrade is complete before you proceed.

After the upgrade is complete, the output is the following:

    Updated [https://container.googleapis.com/v1/projects/gke-multicluster-upgrades/zones/us-west1-b/clusters/blue].

Upgrade the nodes in the blue cluster:

    gcloud container clusters upgrade blue \
        --zone=us-west1-b --node-pool=default-pool \
        --cluster-version ${UPGRADE_VERSION}

To confirm the upgrade, press Y.

This process takes a few minutes to complete. Wait until the node upgrade is complete before you proceed.

After the upgrade is complete, the output is the following:

    Upgrading blue... Done with 3 out of 3 nodes (100.0%): 3 succeeded...done.
    Updated [https://container.googleapis.com/v1/projects/gke-multicluster-upgrades/zones/us-west1-b/clusters/blue].

Verify that the blue cluster is upgraded:

    gcloud container clusters list

The output is similar to the following:

    NAME: ingress-config
    LOCATION: us-west1-a
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 35.233.186.135
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 4
    STATUS: RUNNING

    NAME: blue
    LOCATION: us-west1-b
    MASTER_VERSION: 1.22.10-gke.600
    MASTER_IP: 34.82.35.222
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.10-gke.600
    NUM_NODES: 3
    STATUS: RUNNING

    NAME: green
    LOCATION: us-west1-c
    MASTER_VERSION: 1.22.8-gke.202
    MASTER_IP: 35.185.204.26
    MACHINE_TYPE: e2-medium
    NODE_VERSION: 1.22.8-gke.202
    NUM_NODES: 3
    STATUS: RUNNING
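The step in this section that sets UPGRADE_VERSION asks you to pick a version by eye from the two lists. The following sketch shows one way to automate that choice. It is an assumption-laden illustration, not the tutorial's method: the helper name pick_patch_upgrade is hypothetical, it deliberately stays within the current minor version (a conservative policy that this sketch adds), and it relies on the -V option of GNU sort.

```shell
# Reads candidate versions on stdin (one per line) and prints the newest one
# that shares the major.minor of $1 and is strictly newer than $1.
# Fails (non-zero exit) if no such version exists.
# Assumption: GNU sort -V orders GKE versions such as 1.22.9-gke.2000 and
# 1.22.10-gke.600 correctly.
pick_patch_upgrade() {
  current="$1"
  minor="$(printf '%s' "${current}" | cut -d. -f1,2)"
  result="$( { cat; printf '%s\n' "${current}"; } \
    | awk -v prefix="${minor}." 'index($0, prefix) == 1' \
    | sort -V -u \
    | awk -v cur="${current}" '
        seen { newest = $0 }
        $0 == cur { seen = 1 }
        END { if (newest != "") print newest }')"
  [ -n "${result}" ] && printf '%s\n' "${result}"
}

# Possible use (not run here):
#   export UPGRADE_VERSION=$(gcloud container get-server-config \
#       --zone us-west1-b --format=json \
#       | jq -r '.validMasterVersions[]' | pick_patch_upgrade 1.22.8-gke.202)
```

Because the chosen version must be valid for both the control plane and the nodes, you would still confirm that the result also appears in the validNodeVersions list before you use it.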

Agrega el clúster blue de vuelta al grupo de balanceo de cargas

En esta sección, volverás a agregar el clúster blue al grupo de balanceo de cargas.

En Cloud Shell, verifica que la implementación de la aplicación se ejecute en el clúster

blueantes de agregarla de vuelta al grupo de balanceo de cargas:kubectl --context ${BLUE_CLUSTER} get podsEl resultado es similar a este:

NAME READY STATUS RESTARTS AGE whereami-deployment-756c7dc74c-xdnb6 1/1 Running 0 17mActualiza el recurso

MutliclusterServicepara volver a agregar el clústerblueal grupo de balanceo de cargas:kubectl --context ${INGRESS_CONFIG_CLUSTER} apply \ -f ${WORKDIR}/multicluster-manifests/mcs-blue-green.yamlVerifica que tengas los clústeres

blueygreenen la especificación de clústeres:kubectl --context ${INGRESS_CONFIG_CLUSTER} get multiclusterservice \ -o json | jq '.items[].spec.clusters'Este es el resultado:

[ { "link": "us-west1-b/blue" }, { "link": "us-west1-c/green" } ]Los clústeres

blueygreenahora están en la especificaciónclusters.Las métricas de Cloud Load Balancing están disponibles en la consola de Google Cloud. Genera la URL:

echo "https://console.cloud.google.com/monitoring/dashboards/builder/${DASH_ID}/?project=${PROJECT}&timeDomain=1h"En un navegador, ve a la URL generada por el comando anterior.

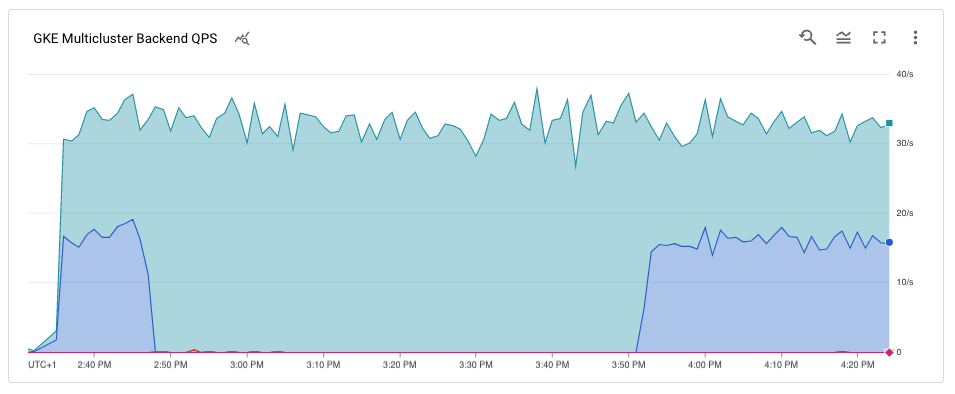

En el gráfico, se muestra que los clústeres azul y verde reciben tráfico del generador de cargas a través del balanceador de cargas.

Felicitaciones. Actualizaste de forma correcta un clúster de GKE en una arquitectura de varios clústeres con la entrada de varios clústeres.

A fin de actualizar el clúster

green, repite el proceso para desviar y actualizar el clúster azul y reemplazablueporgreen.

Clean up

To avoid incurring charges to your Google Cloud account for the resources used in this tutorial, either delete the project that contains the resources, or keep the project and delete the individual resources.

The easiest way to eliminate billing is to delete the Google Cloud project that you created for the tutorial. Alternatively, you can delete the individual resources.

Delete the clusters

In Cloud Shell, unregister and delete the blue and green clusters:

    gcloud container fleet memberships unregister blue --gke-cluster=us-west1-b/blue
    gcloud container clusters delete blue --zone us-west1-b --quiet
    gcloud container fleet memberships unregister green --gke-cluster=us-west1-c/green
    gcloud container clusters delete green --zone us-west1-c --quiet

Delete the MultiClusterIngress resource from the ingress-config cluster:

    kubectl --context ${INGRESS_CONFIG_CLUSTER} delete -f ${WORKDIR}/multicluster-manifests/mci.yaml

This command deletes the Cloud Load Balancing resources from the project.

Unregister and delete the ingress-config cluster:

    gcloud container fleet memberships unregister ingress-config --gke-cluster=us-west1-a/ingress-config
    gcloud container clusters delete ingress-config --zone us-west1-a --quiet

Verify that all clusters are deleted:

    gcloud container clusters list

The command lists no clusters.

Reset the kubeconfig file:

    unset KUBECONFIG

Remove the WORKDIR folder:

    cd ${HOME}
    rm -rf ${WORKDIR}

Delete the project

- In the Google Cloud console, go to the Manage resources page.

- In the project list, select the project that you want to delete, and then click Delete.

- In the dialog, type the project ID, and then click Shut down to delete the project.

What's next

- Learn more about Multi Cluster Ingress.

- Learn how to deploy Multi Cluster Ingress across clusters.

- Explore reference architectures, diagrams, and best practices about Google Cloud. Take a look at our Cloud Architecture Center.