This page describes how to use the "Execution details" tab in the Dataflow monitoring interface.

Overview

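When Dataflow runs a job, it converts the pipeline's steps into stages. Each step represents an individual transform, and each stage represents a single unit of work that Dataflow executes. To optimize the pipeline, Dataflow might fuse multiple steps into a single stage.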
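As a rough mental model of how steps map to stages, the following Python sketch groups a linear sequence of steps into stages, starting a new stage whenever a step needs a data shuffle (for example, a GroupByKey). This is a simplifying assumption for illustration only: Dataflow's actual fusion optimizer works on the full pipeline graph and weighs more factors. The `Step` class, the `fuse_steps` function, and the step names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    """A hypothetical pipeline step (one transform)."""
    name: str
    # Assumption: a step that needs a shuffle (e.g. GroupByKey) cannot be
    # fused with the steps before it, so it starts a new stage.
    needs_shuffle: bool = False


def fuse_steps(steps: List[Step]) -> List[List[str]]:
    """Group a linear sequence of steps into stages (simplified model)."""
    stages: List[List[str]] = []
    for step in steps:
        if not stages or step.needs_shuffle:
            stages.append([step.name])    # start a new stage
        else:
            stages[-1].append(step.name)  # fuse into the current stage
    return stages


if __name__ == "__main__":
    pipeline = [
        Step("ReadFromPubSub"),
        Step("ParseJson"),
        Step("AddKeys"),
        Step("GroupByKey", needs_shuffle=True),
        Step("SumValues"),
        Step("WriteToBigQuery"),
    ]
    for i, stage in enumerate(fuse_steps(pipeline)):
        print(f"stage {i}: {stage}")
```

Running the script prints two stages: the read, parse, and key steps fused into one, and the grouping, summing, and write steps fused into another.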

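The "Execution details" tab in the Dataflow monitoring interface shows information about a job's stages. You can use the "Execution details" tab to troubleshoot performance problems such as the following:

- Slow stages that cause performance bottlenecks

- Stuck stages that aren't making progress

- Worker VMs that lag behind other workers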

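View execution details

To view the execution details for a job, follow these steps:

In the Google Cloud console, go to the "Dataflow" > "Jobs" page.

Select a job.

Click the "Execution details" tab.

Select one of the following views:

- Stage progress

- Stage workflow

- Worker progress (batch jobs only)

The following sections describe these views.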

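Stage progress view

In the "Stage progress" view, you can observe the overall progress of the job and compare the relative progress of its stages. The layout of the "Stage progress" view differs between batch jobs and streaming jobs.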

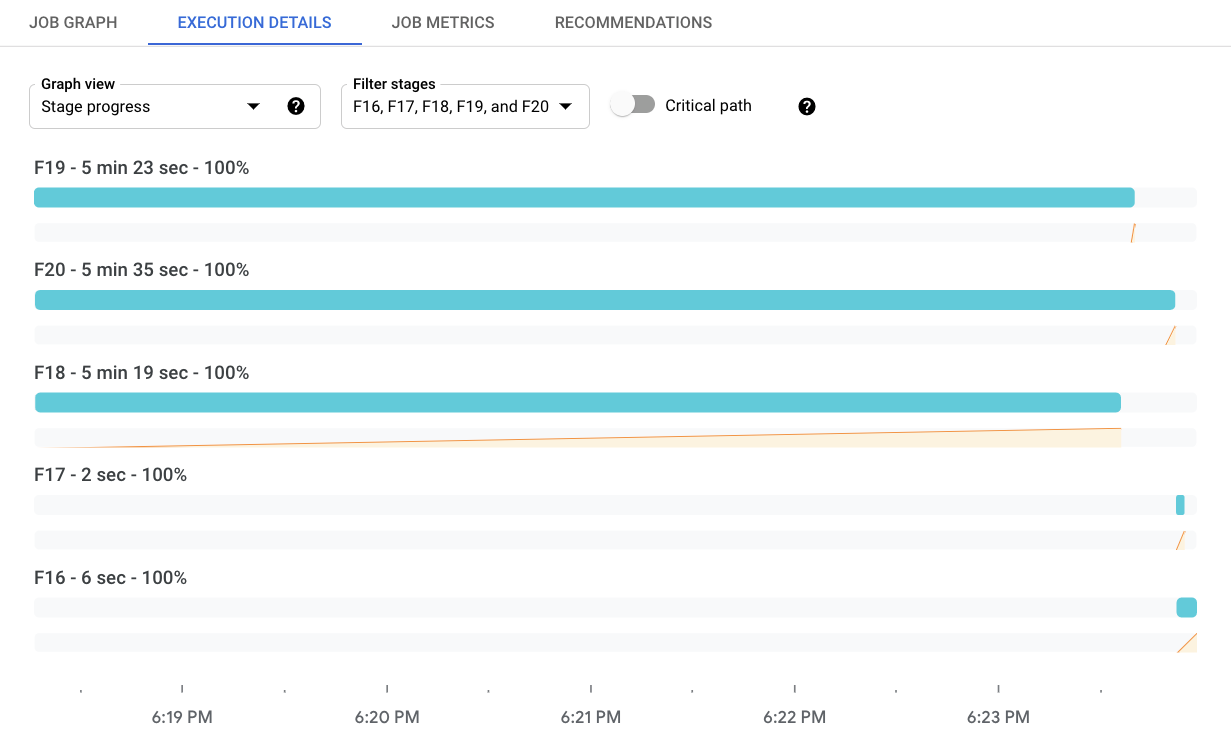

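Stage progress for batch jobs

For batch jobs, the "Stage progress" view lists the job's stages in order of start time. Each stage shows the following elements:

- A bar that shows the stage's start and end times.

- A line chart that shows the stage's progress as a percentage of the stage's total work.

- The total time spent in the stage.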
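The percentage and total time shown for each batch stage come down to simple arithmetic. The sketch below is a hypothetical model of one row in the view, assuming that completed work and total work are available in the same arbitrary units; the `BatchStageRow` class, its fields, and the stage name are illustrative, not part of any Dataflow API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class BatchStageRow:
    """Hypothetical model of one row in the batch "Stage progress" view."""
    name: str
    start: datetime
    end: datetime
    work_done: float   # completed work, in arbitrary units (assumption)
    total_work: float  # total work for the stage, in the same units

    @property
    def progress_pct(self) -> float:
        """Progress as a percentage of the stage's total work."""
        if self.total_work <= 0:
            return 0.0
        return 100.0 * self.work_done / self.total_work

    @property
    def duration(self) -> timedelta:
        """Total time spent in the stage (end minus start)."""
        return self.end - self.start


if __name__ == "__main__":
    row = BatchStageRow(
        name="F12",
        start=datetime(2024, 1, 1, 10, 0, 0),
        end=datetime(2024, 1, 1, 10, 7, 30),
        work_done=45,
        total_work=60,
    )
    print(f"{row.name}: {row.progress_pct:.0f}% in {row.duration}")
```

For this hypothetical row, the script reports 75% progress over 7 minutes 30 seconds.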

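To filter which stages are displayed, click "Filter stages". To see the critical path, turn on the "Critical path" toggle. The critical path is the sequence of stages that contributes to the job's overall runtime. For example, branches that finish before the overall job does, and inputs that don't delay downstream processing, are excluded from the critical path.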
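One way to reason about the critical path is as the longest duration-weighted path through the graph of stages. The sketch below assumes, for simplicity, that a stage starts only after all of its upstream stages finish. Dataflow does not necessarily schedule work that way (stages can overlap), so treat this as an approximation of the idea rather than the console's calculation. The `critical_path` function, stage names, and durations are hypothetical.

```python
from functools import lru_cache
from typing import Dict, List, Tuple


def critical_path(
    durations: Dict[str, float], upstream: Dict[str, List[str]]
) -> Tuple[List[str], float]:
    """Return the longest (critical) path through a DAG of stages.

    Simplified assumption: a stage starts only after all of its upstream
    stages finish, so its finish time is its own duration plus the latest
    finish time among its upstream stages.
    """

    @lru_cache(maxsize=None)
    def finish(stage: str) -> float:
        deps = upstream.get(stage, [])
        return durations[stage] + max((finish(d) for d in deps), default=0.0)

    # The job ends when the latest-finishing stage ends.
    end = max(durations, key=finish)
    path = [end]
    # Walk backwards, always following the upstream stage that finished last.
    while upstream.get(path[-1]):
        path.append(max(upstream[path[-1]], key=finish))
    path.reverse()
    return path, finish(end)


if __name__ == "__main__":
    durations = {"Read": 5, "ParseA": 10, "ParseB": 2, "Join": 4, "Write": 3}
    upstream = {
        "ParseA": ["Read"],
        "ParseB": ["Read"],
        "Join": ["ParseA", "ParseB"],
        "Write": ["Join"],
    }
    path, total = critical_path(durations, upstream)
    print(" -> ".join(path), total)
```

With these inputs, `ParseB` finishes early and doesn't delay `Join`, so it is left off the path `Read -> ParseA -> Join -> Write`, the same kind of branch that the critical path excludes.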

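The "Stage info" panel shows details about a stage. To view a stage's details, click its progress bar. The "Stage info" panel shows the following information about the stage:

- Status

- Progress percentage

- Start and end times

- The pipeline steps included in this stage

- The steps with the longest wall time

- Details of any stragglers

If the panel isn't visible, click the "Stage info" panel toggle.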

Stage progress for streaming jobs

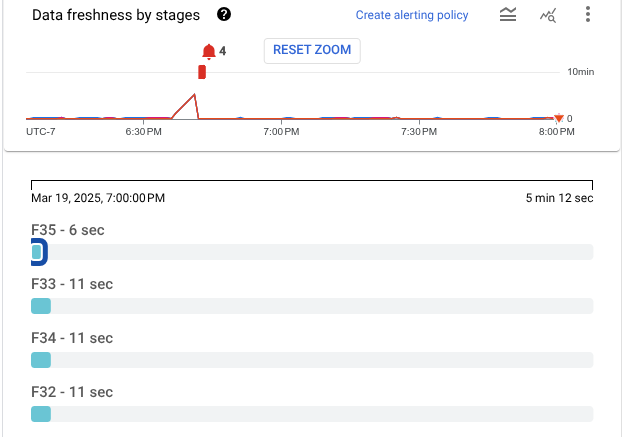

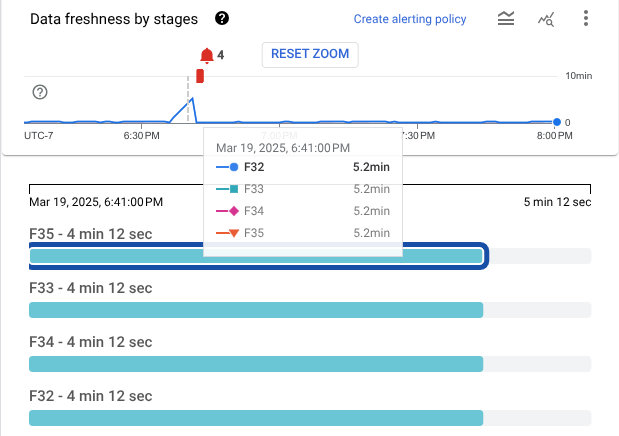

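For streaming jobs, the "Stage progress" view shows two visualizations of data freshness. Data freshness is the time between a data element's timestamp and the time at which the element is processed. A larger value means that the pipeline is taking longer to process its input data.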
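The definition of data freshness above amounts to a subtraction. The following sketch computes it for a single element with a hypothetical timestamp and processing time. How the console aggregates per-element values into a per-stage figure isn't described on this page, so the sketch doesn't attempt that; `data_freshness` is an illustrative name.

```python
from datetime import datetime, timedelta, timezone


def data_freshness(element_timestamp: datetime, processed_at: datetime) -> timedelta:
    """Gap between an element's timestamp and the time it was processed.

    A larger value means the pipeline is taking longer to process its input.
    """
    return processed_at - element_timestamp


if __name__ == "__main__":
    # Hypothetical element: timestamp 12:00:00 UTC, processed at 12:00:09 UTC.
    event_time = datetime(2024, 5, 1, 12, 0, 0, tzinfo=timezone.utc)
    processed = datetime(2024, 5, 1, 12, 0, 9, tzinfo=timezone.utc)
    print(data_freshness(event_time, processed).total_seconds())  # 9.0
```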

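The first visualization is a line chart of each stage's data freshness. To see the data freshness at a specific point in time, hold the pointer over the chart. To select a time range, use the time picker, or click and drag across the chart. To filter which stages are displayed, click "Filter stages".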

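The chart also highlights anomalies in the data:

- Possibly slow: data freshness exceeds the 95th percentile for the selected time range.

- Possibly stuck: data freshness exceeds the 99th percentile for the selected time range.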
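The following sketch shows how freshness samples could be labeled against these two thresholds. It assumes a nearest-rank percentile computed over the samples in the selected time range; the console's exact percentile method isn't documented on this page and may differ. The `percentile` and `classify` functions and the sample values are hypothetical.

```python
import math
from typing import List, Sequence


def percentile(values: Sequence[float], p: float) -> float:
    """Nearest-rank percentile (an assumption; the console may differ)."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    # Multiply before dividing so common cases like p=95, n=100 stay exact.
    rank = math.ceil(p * len(ordered) / 100)  # 1-based nearest rank
    return ordered[min(max(rank, 1), len(ordered)) - 1]


def classify(freshness: Sequence[float]) -> List[str]:
    """Label each sample against the window's 95th and 99th percentiles."""
    p95 = percentile(freshness, 95)
    p99 = percentile(freshness, 99)
    labels = []
    for value in freshness:
        if value > p99:
            labels.append("possibly stuck")
        elif value > p95:
            labels.append("possibly slow")
        else:
            labels.append("normal")
    return labels


if __name__ == "__main__":
    # Hypothetical window: 95 samples at 10 s, then a few spikes (seconds).
    samples = [10.0] * 95 + [30.0, 40.0, 50.0, 60.0, 300.0]
    print(classify(samples)[-5:])
```

In this window, the 95 samples at 10 s set the 95th percentile, the four moderate spikes are flagged as possibly slow, and only the 300 s sample exceeds the 99th percentile (60 s) and is flagged as possibly stuck.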

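The second visualization shows the stages as a series of bars arranged in topological order: stages with no upstream stages appear first, followed by their descendants. The length of each bar represents data freshness. To see data freshness values at a specific point in time, click the chart. The bars update to show the data freshness at the selected time.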
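Topological order means that each stage appears after every stage that feeds it. As an illustration, the following sketch applies Kahn's algorithm to a hypothetical stage graph. Sorting ready stages by name is an arbitrary tie-break chosen to make the output deterministic, not necessarily the order the console uses; `topological_order` is an illustrative name.

```python
from collections import deque
from typing import Dict, List


def topological_order(downstream: Dict[str, List[str]]) -> List[str]:
    """Order stages so that every stage appears after all of its upstream stages.

    Kahn's algorithm: start with stages that have no upstream stage, then
    release each descendant once all of its inputs have been listed.
    """
    stages = set(downstream)
    for children in downstream.values():
        stages.update(children)
    indegree = {s: 0 for s in stages}
    for children in downstream.values():
        for child in children:
            indegree[child] += 1
    # sorted() keeps the output deterministic when several stages are ready.
    ready = deque(sorted(s for s in stages if indegree[s] == 0))
    order: List[str] = []
    while ready:
        stage = ready.popleft()
        order.append(stage)
        for child in sorted(downstream.get(stage, [])):
            indegree[child] -= 1
            if indegree[child] == 0:
                ready.append(child)
    if len(order) != len(stages):
        raise ValueError("stage graph contains a cycle")
    return order


if __name__ == "__main__":
    graph = {
        "Read": ["ParseA", "ParseB"],
        "ParseA": ["Join"],
        "ParseB": ["Join"],
        "Join": ["Write"],
    }
    print(topological_order(graph))
```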

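The following image shows a job with four stages. At the selected timestamp, data freshness ranges from 9 to 13 seconds.

The following image shows the same job with a different timestamp selected. At this time, data freshness exceeds 4 minutes for every stage, which indicates that the pipeline might be stuck.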

The "Stage info" panel shows details about a stage. To view a stage's details, click that stage's bar.

If the panel isn't visible, click the "Stage info" panel toggle.

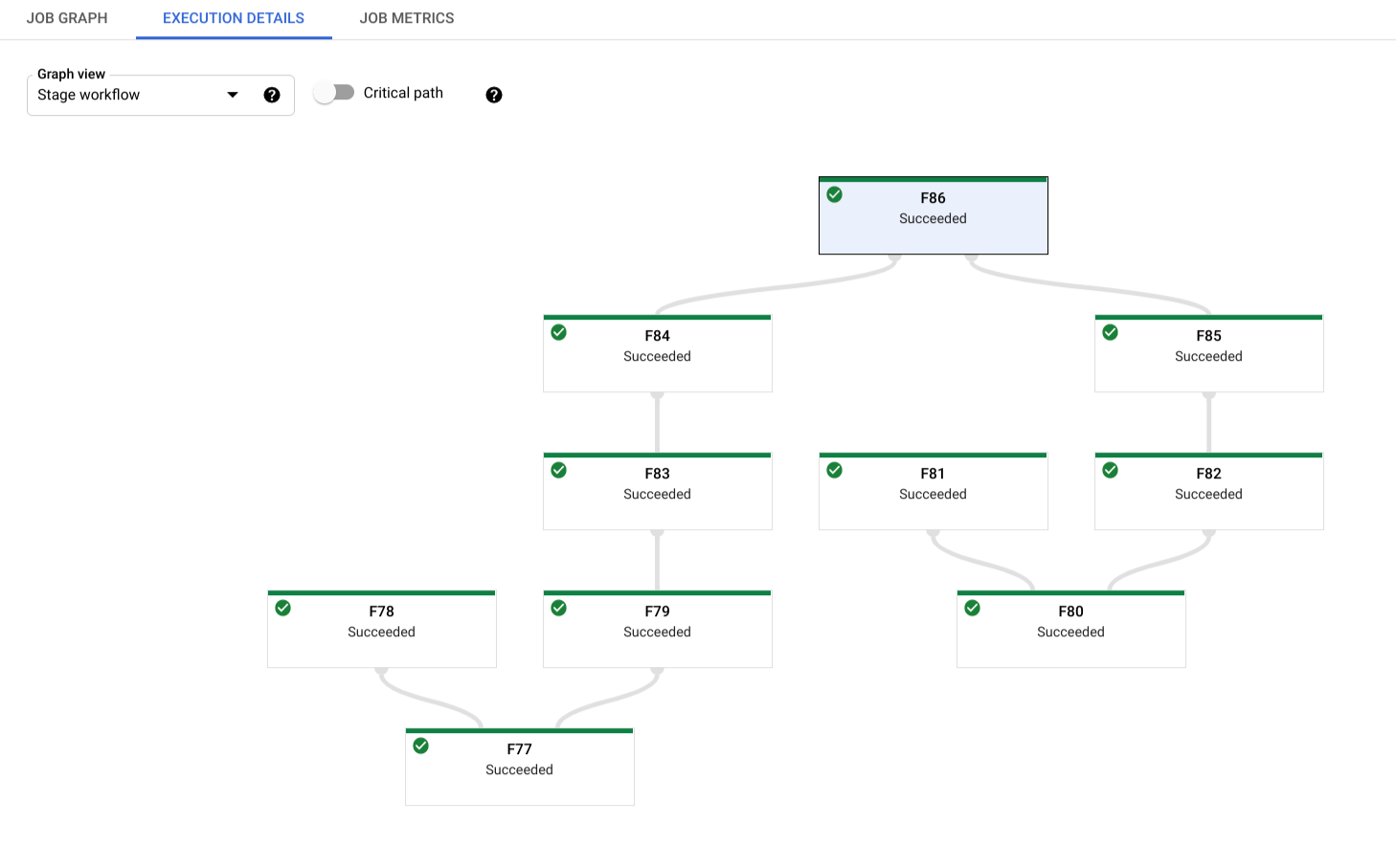

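Stage workflow

The "Stage workflow" view shows the job's stages as a workflow graph. To view details for a stage, click that stage's box.

For batch jobs, click "Critical path" to show only the stages that directly affect the job's overall runtime.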

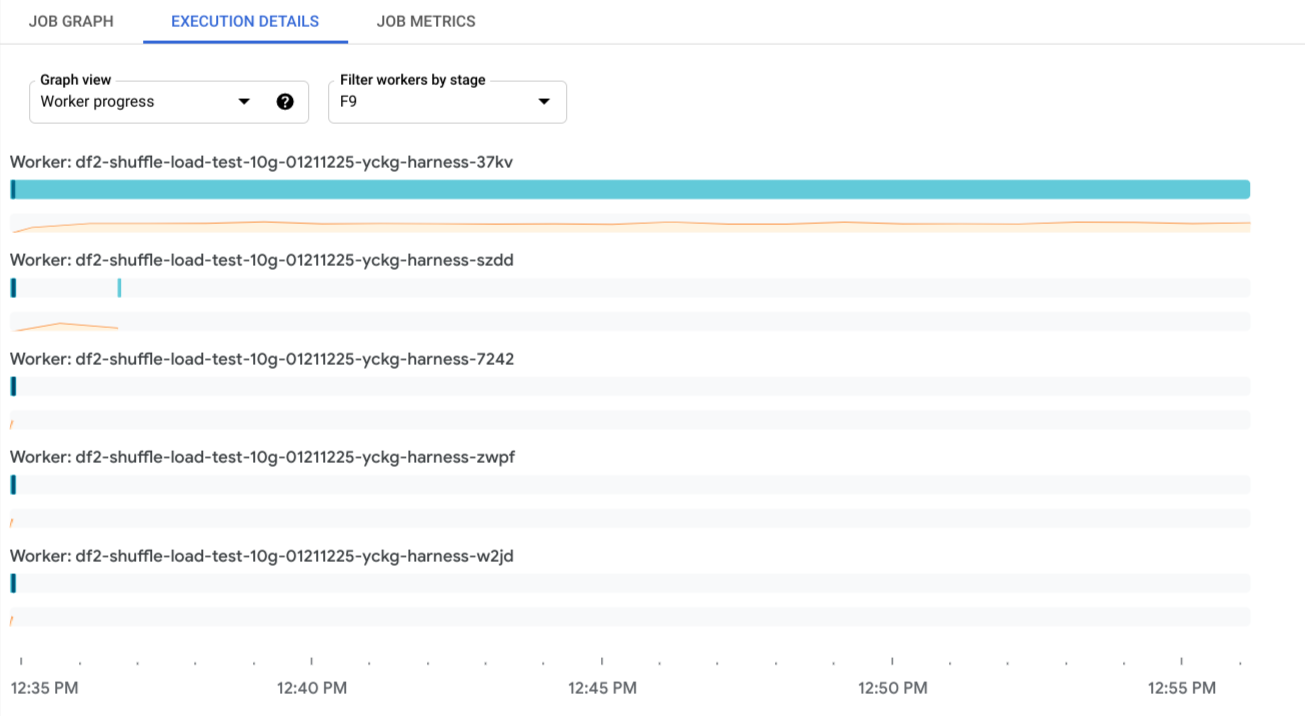

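Worker progress

For batch jobs, the "Worker progress" view shows the workers for a specific stage. This view isn't available for streaming jobs. To open this view, select "Worker progress", and then select a stage in "Filter workers by stage". Alternatively, you can open this view from the "Stage progress" view by following these steps:

- In the "Stage progress" view, find the stage that you want to inspect.

- Hold the pointer over that stage's bar.

- On the "Stage" card, click "View workers". The "Worker progress" view opens with the stage preselected.

Each bar corresponds to a work item scheduled on a worker. Next to each worker is a sparkline of its CPU utilization, which helps you spot underutilized workers.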
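To illustrate what spotting underutilization from those sparklines involves, the following sketch flags workers whose average CPU utilization falls below a threshold. The 20% cut-off, the `underutilized_workers` function, the worker names, and the sample values are all hypothetical; pick a threshold that fits your workload.

```python
from statistics import mean
from typing import Dict, List


def underutilized_workers(
    cpu_samples: Dict[str, List[float]], threshold_pct: float = 20.0
) -> List[str]:
    """Return workers whose average CPU utilization is below threshold_pct.

    The 20% default is a hypothetical cut-off for illustration,
    not a Dataflow setting. Workers with no samples are skipped.
    """
    return sorted(
        worker
        for worker, samples in cpu_samples.items()
        if samples and mean(samples) < threshold_pct
    )


if __name__ == "__main__":
    # Hypothetical CPU utilization samples (percent) per worker.
    samples = {
        "worker-0": [85.0, 90.0, 88.0],
        "worker-1": [12.0, 8.0, 15.0],
        "worker-2": [70.0, 65.0, 72.0],
    }
    print(underutilized_workers(samples))
```

Here only `worker-1`, averaging roughly 12% CPU, falls below the hypothetical threshold.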

Next steps

- Learn more about troubleshooting Dataflow pipelines.

- Learn about the different components of the Dataflow web-based monitoring interface.