This page explains how to enable Cloud Trace on your agent and view traces to analyze query response times and the operations that were executed.

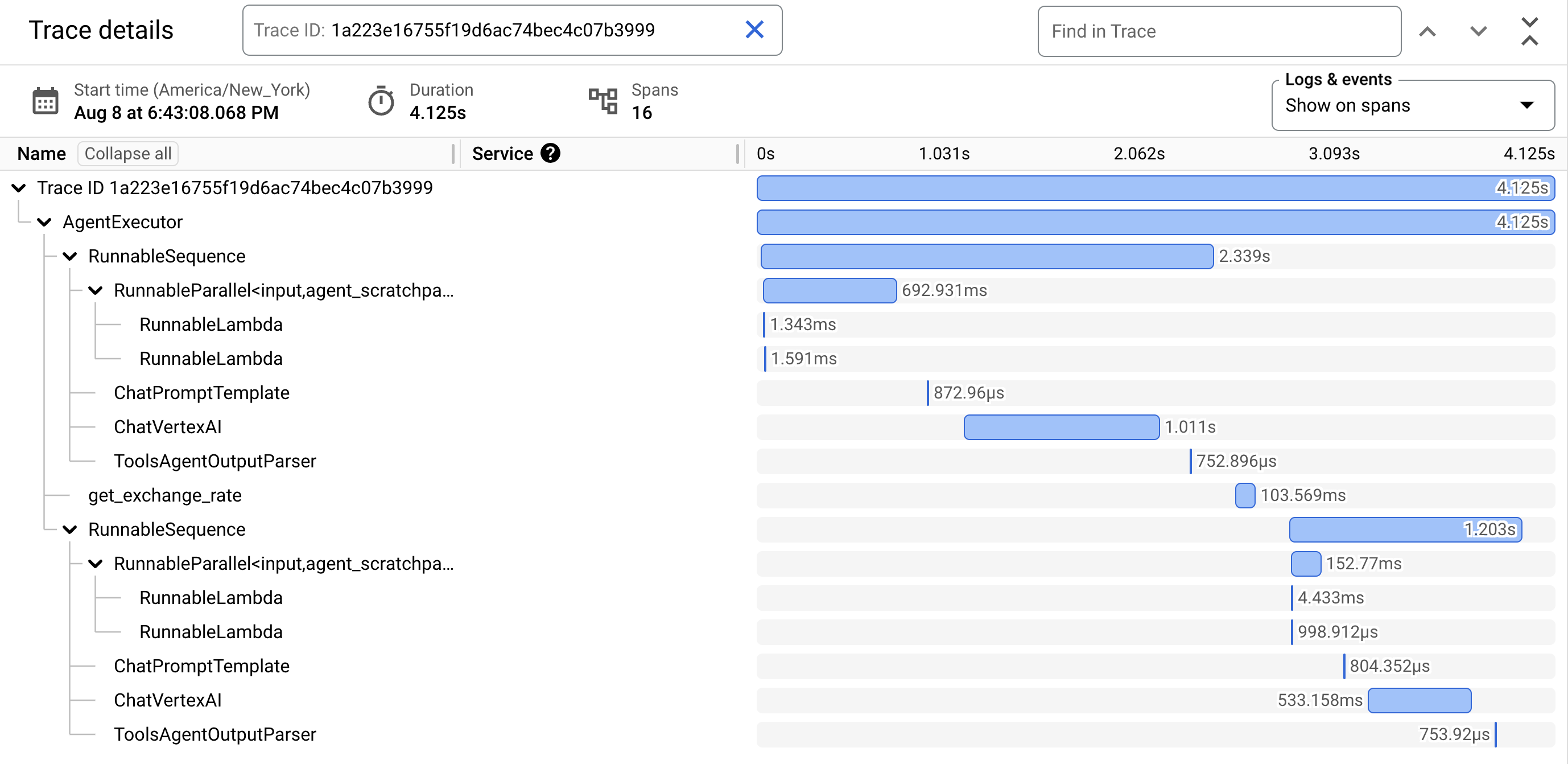

Une trace est une chronologie des requêtes lorsque votre agent répond à chaque requête. Par exemple, le diagramme de Gantt suivant montre un exemple de trace provenant d'un LangchainAgent :

The first row in the Gantt chart is for the trace. A trace is composed of individual spans, each of which represents a single unit of work, such as a function call or an interaction with an LLM. The first span represents the overall request. Each span provides details about a specific operation, such as its name, its start and end times, and any relevant attributes of the request. For example, the following JSON shows a single span that represents a call to a large language model (LLM):

    {
      "name": "llm",
      "context": {
        "trace_id": "ed7b336d-e71a-46f0-a334-5f2e87cb6cfc",
        "span_id": "ad67332a-38bd-428e-9f62-538ba2fa90d4"
      },
      "span_kind": "LLM",
      "parent_id": "f89ebb7c-10f6-4bf8-8a74-57324d2556ef",
      "start_time": "2023-09-07T12:54:47.597121-06:00",
      "end_time": "2023-09-07T12:54:49.321811-06:00",
      "status_code": "OK",
      "status_message": "",
      "attributes": {
        "llm.input_messages": [
          {
            "message.role": "system",
            "message.content": "You are an expert Q&A system that is trusted around the world.\nAlways answer the query using the provided context information, and not prior knowledge.\nSome rules to follow:\n1. Never directly reference the given context in your answer.\n2. Avoid statements like 'Based on the context, ...' or 'The context information ...' or anything along those lines."
          },
          {
            "message.role": "user",
            "message.content": "Hello?"
          }
        ],
        "output.value": "assistant: Yes I am here",
        "output.mime_type": "text/plain"
      },
      "events": []
    }

For more information, see the Cloud Trace documentation on traces and spans and on trace context.
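
As a rough illustration of how the span fields fit together, the following sketch computes a span's duration from its timestamps and groups spans under their parent. The helper names and the `query` root span are hypothetical; only the `llm` span's IDs and timestamps come from the JSON example on this page.

```python
from collections import defaultdict
from datetime import datetime


def span_duration_seconds(span: dict) -> float:
    """Return the elapsed time of a span from its ISO 8601 timestamps."""
    start = datetime.fromisoformat(span["start_time"])
    end = datetime.fromisoformat(span["end_time"])
    return (end - start).total_seconds()


def children_by_parent(spans: list[dict]) -> dict:
    """Group span names by parent_id; a root span has parent_id None."""
    tree = defaultdict(list)
    for span in spans:
        tree[span.get("parent_id")].append(span["name"])
    return dict(tree)


# Values copied from the "llm" span in the JSON example.
llm_span = {
    "name": "llm",
    "context": {"span_id": "ad67332a-38bd-428e-9f62-538ba2fa90d4"},
    "parent_id": "f89ebb7c-10f6-4bf8-8a74-57324d2556ef",
    "start_time": "2023-09-07T12:54:47.597121-06:00",
    "end_time": "2023-09-07T12:54:49.321811-06:00",
}

# Hypothetical root span standing in for the overall request.
root_span = {
    "name": "query",
    "context": {"span_id": "f89ebb7c-10f6-4bf8-8a74-57324d2556ef"},
    "parent_id": None,
}

llm_seconds = span_duration_seconds(llm_span)
tree = children_by_parent([root_span, llm_span])
```

Here `llm_seconds` is the time spent in the LLM call, and `tree` maps each parent span ID to the names of its child spans, which mirrors how the rows of the Gantt chart nest.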

Write traces for an agent

To write traces for an agent:

ADK

To enable tracing for AdkApp, specify enable_tracing=True when you develop an Agent Development Kit agent.

Example:

    from vertexai.agent_engines import AdkApp
    from google.adk.agents import Agent

    agent = Agent(
        model=model,
        name=agent_name,
        tools=[get_exchange_rate],
    )

    app = AdkApp(
        agent=agent,          # Required.
        enable_tracing=True,  # Optional.
    )
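
The examples on this page pass a `get_exchange_rate` tool without defining it. A minimal stand-in, assuming a tool that returns a fixed rate from an in-memory table, could look like the following. The function signature, the return shape, and the rates are placeholders chosen for illustration, not real market data or the tool used by the original examples.

```python
# Placeholder rates for illustration only; they are not real market data.
_SAMPLE_RATES = {("USD", "EUR"): 0.90, ("USD", "SEK"): 10.50}


def get_exchange_rate(currency_from: str = "USD", currency_to: str = "EUR") -> dict:
    """Look up the exchange rate between two currencies.

    Args:
        currency_from: ISO 4217 code of the base currency.
        currency_to: ISO 4217 code of the target currency.

    Returns:
        A dict with the base currency, target currency, and rate.
    """
    pair = (currency_from.upper(), currency_to.upper())
    if pair not in _SAMPLE_RATES:
        raise ValueError(f"No sample rate for {pair[0]} to {pair[1]}")
    return {"base": pair[0], "target": pair[1], "rate": _SAMPLE_RATES[pair]}
```

With tracing enabled, each call the agent makes to a tool like this one would be expected to appear as its own span under the span for the overall request.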

LangchainAgent

To enable tracing for LangchainAgent, specify enable_tracing=True when you develop a LangChain agent.

Example:

    from vertexai.agent_engines import LangchainAgent

    agent = LangchainAgent(
        model=model,                # Required.
        tools=[get_exchange_rate],  # Optional.
        enable_tracing=True,        # [New] Optional.
    )

LanggraphAgent

To enable tracing for LanggraphAgent, specify enable_tracing=True when you develop a LangGraph agent.

Example:

    from vertexai.agent_engines import LanggraphAgent

    agent = LanggraphAgent(
        model=model,                # Required.
        tools=[get_exchange_rate],  # Optional.
        enable_tracing=True,        # [New] Optional.
    )

LlamaIndex

To enable tracing for LlamaIndexQueryPipelineAgent, specify enable_tracing=True when you develop a LlamaIndex agent.

Example:

    from vertexai.preview import reasoning_engines

    def runnable_with_tools_builder(model, runnable_kwargs=None, **kwargs):
        from llama_index.core.query_pipeline import QueryPipeline
        from llama_index.core.tools import FunctionTool
        from llama_index.core.agent import ReActAgent

        # Wrap each Python callable as a LlamaIndex tool.
        # Fall back to an empty list when no runnable_kwargs are passed.
        llama_index_tools = []
        for tool in (runnable_kwargs or {}).get("tools", []):
            llama_index_tools.append(FunctionTool.from_defaults(tool))
        agent = ReActAgent.from_tools(llama_index_tools, llm=model, verbose=True)
        return QueryPipeline(modules={"agent": agent})

    agent = reasoning_engines.LlamaIndexQueryPipelineAgent(
        model="gemini-2.0-flash",
        runnable_kwargs={"tools": [get_exchange_rate]},
        runnable_builder=runnable_with_tools_builder,
        enable_tracing=True,  # Optional.
    )

Custom

To enable tracing for custom agents, see Tracing using OpenTelemetry.

This exports traces to Cloud Trace under the project specified in Set up your Google Cloud project.
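
For a custom agent, spans are created through the OpenTelemetry API rather than an enable_tracing flag. The following is a minimal sketch of that idea, not the documented setup: `EchoAgent`, its `query` method, the `_span` helper, and the span and attribute names are all hypothetical. It assumes the `opentelemetry-api` package and falls back to doing nothing if the package is not installed. Configuring a tracer provider and an exporter that sends spans to Cloud Trace is not shown here; without one, the calls succeed but no spans are exported.

```python
from contextlib import nullcontext

try:
    # Requires opentelemetry-api. Without a configured SDK and exporter,
    # the tracer below produces non-recording spans.
    from opentelemetry import trace

    _tracer = trace.get_tracer("custom_agent_example")
except ImportError:
    _tracer = None


def _span(name: str):
    """Start a span if OpenTelemetry is installed; otherwise do nothing."""
    if _tracer is None:
        return nullcontext()
    return _tracer.start_as_current_span(name)


class EchoAgent:
    """Hypothetical custom agent; class and method names are illustrative."""

    def query(self, text: str) -> str:
        # Outer span: the overall request.
        with _span("query") as span:
            if span is not None:
                span.set_attribute("input.length", len(text))
            # Inner span: one unit of work inside the request.
            with _span("generate_reply"):
                return f"echo: {text}"


agent = EchoAgent()
reply = agent.query("Hello?")
```

Nesting the `with` blocks is what makes `generate_reply` a child of `query`, which matches the parent and child rows in the Gantt chart.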

View traces for an agent

You can view your traces by using the Trace Explorer:

1. To get the permissions that you need to view trace data in the Google Cloud console or to select a trace scope, ask your administrator to grant you the Cloud Trace User (roles/cloudtrace.user) IAM role on your project.

2. Go to the Trace Explorer in the Google Cloud console.

3. At the top of the page, select your Google Cloud project (corresponding to PROJECT_ID).

For more information, see the Cloud Trace documentation.

Quotas and limits

Some attribute values might be truncated when they reach quota limits. For more information, see the Cloud Trace quotas page.
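
If you set long attribute values yourself, one way to keep truncation predictable is to trim them before they are written. The following sketch shortens a string so that its UTF-8 encoding fits a byte budget. The helper name and the 256-byte default are assumptions for illustration; check the Cloud Trace quotas page for the actual limits.

```python
def truncate_attribute(value: str, max_bytes: int = 256) -> str:
    """Trim a string so that its UTF-8 encoding fits within max_bytes.

    The 256-byte default is a placeholder, not a documented limit.
    """
    encoded = value.encode("utf-8")
    if len(encoded) <= max_bytes:
        return value
    # errors="ignore" drops a multi-byte character cut in half at the boundary.
    return encoded[:max_bytes].decode("utf-8", errors="ignore")


short_value = truncate_attribute("Hello?")
long_value = truncate_attribute("x" * 1000)
```

Trimming by bytes rather than by characters matters for non-ASCII text, where a single character can take several bytes.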

Pricing

Cloud Trace has a free tier. For more information, see the Cloud Trace pricing page.