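This page describes how to install and configure the components required to create a backup of your audit logs. It also explains how to restore from a backup and regain access to historical audit logs.

Set up a backup in a remote bucket

This section describes how to create a backup of audit logs in an S3-compatible bucket.

Before you begin

To create a backup of audit logs, you must have access to the following resources:

- A remote S3 bucket with an endpoint, a secret access key, and an access key ID.

- A certificate authority (CA) certificate for the storage system.

- A working cluster.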

Get the credentials to access the source bucket

Follow these steps to find the credentials for the bucket that contains the audit logs:

On the root admin cluster, list the buckets in your project namespace:

    kubectl get bucket -n PROJECT_NAMESPACE

The output must look like the following example, in which the audit log buckets show a name and an endpoint:

    NAME                         BUCKET NAME                  DESCRIPTION                                                        STORAGE CLASS  FULLY-QUALIFIED-BUCKET-NAME        ENDPOINT                                                REGION  BUCKETREADY  REASON                   MESSAGE
    audit-logs-loki-all          audit-logs-loki-all          Bucket for storing audit-logs-loki-all logs                        Standard       wwq2y-audit-logs-loki-all          https://appliance-objectstorage.zone1.google.gdch.test  zone1   True         BucketCreationSucceeded  Bucket successfully created.
    cortex-metrics-alertmanager  cortex-metrics-alertmanager  storage bucket for cortex metrics alertmanager configuration data  Standard       wwq2y-cortex-metrics-alertmanager  https://appliance-objectstorage.zone1.google.gdch.test  zone1   True         BucketCreationSucceeded  Bucket successfully created.
    cortex-metrics-blocks        cortex-metrics-blocks        storage bucket for cortex metrics data                             Standard       wwq2y-cortex-metrics-blocks        https://appliance-objectstorage.zone1.google.gdch.test  zone1   True         BucketCreationSucceeded  Bucket successfully created.
    cortex-metrics-ruler         cortex-metrics-ruler         storage bucket for cortex metrics rules data                       Standard       wwq2y-cortex-metrics-ruler         https://appliance-objectstorage.zone1.google.gdch.test  zone1   True         BucketCreationSucceeded  Bucket successfully created.
    ops-logs-loki-all            ops-logs-loki-all            Bucket for storing ops-logs-loki-all logs                          Standard       wwq2y-ops-logs-loki-all            https://appliance-objectstorage.zone1.google.gdch.test

Using the information from the output, set the following environment variables for the transfer:

    SRC_BUCKET=BUCKET_NAME
    SRC_ENDPOINT=ENDPOINT
    SRC_PATH=FULLY_QUALIFIED_BUCKET_NAME

Replace the following:

- BUCKET_NAME: the name of the bucket that contains the audit logs you want to back up. This value is in the BUCKET NAME field of the output.
- ENDPOINT: the endpoint of the bucket that contains the audit logs you want to back up. This value is in the ENDPOINT field of the output.
- FULLY_QUALIFIED_BUCKET_NAME: the fully qualified name of the bucket that contains the audit logs you want to back up. This value is in the FULLY-QUALIFIED-BUCKET-NAME field of the output.

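Get the secret for the bucket that you selected in the previous step:

    kubectl get secret -n PROJECT_NAMESPACE -o json | jq --arg jq_src "$SRC_BUCKET" '.items[].metadata | select(.annotations."object.gdc.goog/subject"==$jq_src) | .name'

The output must look like the following example, which shows the secret name of the bucket:

    "object-storage-key-sysstd-sa-olxv4dnwrwul4bshu37ikebgovrnvl773owaw3arx225rfi56swa"

Using the secret name that you obtained, set the following environment variable:

    SRC_CREDENTIALS="PROJECT_NAMESPACE/SECRET_NAME"

Replace SECRET_NAME with the secret name from the previous output.

Create a secret for the CA certificate of the storage system:

    kubectl create secret generic -n PROJECT_NAMESPACE audit-log-loki-ca \
      --from-literal=ca.crt=CERTIFICATE

Replace CERTIFICATE with the CA certificate of the storage system.

Set the following environment variable:

    SRC_CA_CERTIFICATE=PROJECT_NAMESPACE/audit-log-loki-ca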
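The jq filter above prints the secret name as a JSON string, so the value arrives wrapped in double quotes that must not end up inside SRC_CREDENTIALS. The following is a minimal sketch of removing them with shell parameter expansion. The function name strip_quotes and the sample secret name are hypothetical and not part of the product. Passing the -r (raw output) flag to jq is an alternative that avoids the quotes at the source.

```shell
# Hypothetical helper: remove one leading and one trailing double quote, if present.
strip_quotes() {
  value="$1"
  value="${value#\"}"   # drop a leading double quote
  value="${value%\"}"   # drop a trailing double quote
  printf '%s\n' "$value"
}

# Example with a made-up secret name, quoted the way jq prints it by default.
SECRET_NAME=$(strip_quotes '"object-storage-key-example"')
SRC_CREDENTIALS="PROJECT_NAMESPACE/$SECRET_NAME"
```

The helper leaves unquoted input unchanged, so it is safe to apply whether or not jq was run with -r.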

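Get the credentials to access the remote bucket

Follow these steps to find the credentials for the bucket in which you want to create the backup:

Set the following environment variables:

    DST_ACCESS_KEY_ID=ACCESS_KEY
    DST_SECRET_ACCESS_KEY=ACCESS_SECRET
    DST_ENDPOINT=REMOTE_ENDPOINT
    DST_PATH=REMOTE_BUCKET_NAME

Replace the following:

- ACCESS_KEY: the access key of the destination remote bucket.
- ACCESS_SECRET: the access secret of the destination remote bucket.
- REMOTE_ENDPOINT: the endpoint of the destination remote bucket.
- REMOTE_BUCKET_NAME: the name of the destination remote bucket.

Create a secret for the remote bucket:

    kubectl create secret generic -n PROJECT_NAMESPACE s3-bucket-credentials \
      --from-literal=access-key-id="$DST_ACCESS_KEY_ID" \
      --from-literal=secret-access-key="$DST_SECRET_ACCESS_KEY"

Set the following environment variable with the location of the secret:

    DST_CREDENTIALS=PROJECT_NAMESPACE/s3-bucket-credentials

If the destination bucket requires a CA certificate, create a secret with the CA certificate of the bucket:

    kubectl create secret generic -n PROJECT_NAMESPACE s3-bucket-ca \
      --from-literal=ca.crt=REMOTE_CERTIFICATE

Replace REMOTE_CERTIFICATE with the CA certificate of the destination remote bucket.

Set the following environment variable with the location of the certificate:

    DST_CA_CERTIFICATE=PROJECT_NAMESPACE/s3-bucket-ca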
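The transfer job relies on every SRC_ and DST_ variable set in the preceding steps, and an unset variable silently expands to an empty string. The following sketch shows one way to check them before continuing. The function name require_vars is hypothetical; the variable names are the ones used on this page.

```shell
# Hypothetical helper: report every named variable that is unset or empty.
# Returns 0 when all are set, 1 otherwise.
require_vars() {
  missing=0
  for name in "$@"; do
    eval "value=\${$name-}"   # read the variable whose name is stored in $name
    if [ -z "$value" ]; then
      echo "missing environment variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Possible usage before creating the transfer job:
# require_vars SRC_BUCKET SRC_ENDPOINT SRC_PATH SRC_CREDENTIALS SRC_CA_CERTIFICATE \
#   DST_ENDPOINT DST_PATH DST_CREDENTIALS || echo "set the variables listed above first"
```

DST_CA_CERTIFICATE is left out of the usage line because that certificate is optional for the destination bucket.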

Configure the audit log transfer

Follow these steps to configure the transfer of audit logs from the source bucket to the destination bucket for the backup:

Create a service account for the audit log transfer job. You must grant the service account access to read the source bucket and the secrets in the project namespace.

    kubectl apply -f - <<EOF
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: audit-log-transfer-sa
      namespace: PROJECT_NAMESPACE
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      name: read-secrets-role
      namespace: PROJECT_NAMESPACE
    rules:
    - apiGroups: [""]
      resources: ["secrets"]
      verbs: ["get", "watch", "list"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: read-secrets-rolebinding
      namespace: PROJECT_NAMESPACE
    subjects:
    - kind: ServiceAccount
      name: audit-log-transfer-sa
      namespace: PROJECT_NAMESPACE
    roleRef:
      kind: Role
      name: read-secrets-role
      apiGroup: rbac.authorization.k8s.io
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      name: audit-log-read-bucket-role
      namespace: PROJECT_NAMESPACE
    rules:
    - apiGroups:
      - object.gdc.goog
      resourceNames:
      - $SRC_BUCKET # Source bucket name
      resources:
      - buckets
      verbs:
      - read-object
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: audit-log-transfer-role-binding
      namespace: PROJECT_NAMESPACE
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: audit-log-read-bucket-role
    subjects:
    - kind: ServiceAccount
      name: audit-log-transfer-sa
      namespace: PROJECT_NAMESPACE
    ---
    EOF

Create the transfer job that exports the logs to the remote bucket:

    kubectl apply -f - <<EOF
    ---
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: audit-log-transfer-job
      namespace: PROJECT_NAMESPACE
    spec:
      template:
        spec:
          serviceAccountName: audit-log-transfer-sa
          containers:
            - name: storage-transfer-pod
              image: gcr.io/private-cloud-staging/storage-transfer:latest
              imagePullPolicy: Always
              command:
                - /storage-transfer
              args:
                - '--src_endpoint=$SRC_ENDPOINT'
                - '--dst_endpoint=$DST_ENDPOINT'
                - '--src_path=\$SRC_PATH'
                - '--dst_path=\$DST_PATH'
                - '--src_credentials=$SRC_CREDENTIALS'
                - '--dst_credentials=$DST_CREDENTIALS'
                - '--dst_ca_certificate_reference=$DST_CA_CERTIFICATE' # Optional. Based on destination type.
                - '--src_ca_certificate_reference=$SRC_CA_CERTIFICATE'
                - '--src_type=s3'
                - '--dst_type=s3'
                - '--bandwidth_limit=100M' # Optional, of the form '10K', '100M', '1G' bytes per second
          restartPolicy: OnFailure # Will restart on failure.
    ---
    EOF
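Because the manifest reaches kubectl through an unquoted here-document (<<EOF), the shell substitutes references such as $SRC_ENDPOINT before kubectl reads the text, even inside single quotes. A backslash-escaped reference such as \$SRC_PATH is instead passed through as a literal dollar sign followed by the name. The sketch below previews that behavior with cat in place of kubectl. The function name render_args and the endpoint value are hypothetical.

```shell
# Made-up value for illustration only.
SRC_ENDPOINT="https://objectstorage.example.test"

# Hypothetical helper: print two argument lines the way the shell renders them
# inside an unquoted here-document.
render_args() {
  cat <<EOF
- '--src_endpoint=$SRC_ENDPOINT'
- '--src_path=\$SRC_PATH'
EOF
}

render_args
```

The first line is printed with the endpoint filled in, while the second still contains the text $SRC_PATH. If a literal $SRC_PATH in the job arguments is not what you intend, removing the backslash lets the shell substitute the value.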

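After you schedule the job, you can monitor the data transfer by providing the job name, such as audit-log-transfer-job, and the project namespace.

The job ends when all data has been transferred to the destination bucket.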
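One possible way to monitor the job from the command line is sketched below, using standard kubectl subcommands (get, wait, logs). The function name watch_transfer_job, the 30-minute timeout, and the number of log lines are assumptions for illustration; adjust them to the size of your transfer.

```shell
# Hypothetical helper: show the job status, block until the job completes
# (or the timeout expires), then print the last lines of its log.
watch_transfer_job() {
  ns="$1"
  job="$2"
  kubectl get job "$job" -n "$ns"
  kubectl wait --for=condition=complete "job/$job" -n "$ns" --timeout=30m
  kubectl logs "job/$job" -n "$ns" --tail=20
}

# Possible usage:
# watch_transfer_job PROJECT_NAMESPACE audit-log-transfer-job
```

The same function can be pointed at any other job in the namespace by changing the second argument.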

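Restore audit logs from a backup

This section describes how to restore audit logs from a backup.

Before you begin

To restore audit logs, you must have access to the following resources:

- The audit log backup bucket, with an endpoint, a secret access key, and an access key ID.

- A certificate authority (CA) certificate for the storage system.

- A working cluster.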

Create a bucket to restore audit logs

Follow these steps to create a bucket that stores the restored audit logs:

Create the bucket resource and a service account:

    kubectl apply -f - <<EOF
    ---
    apiVersion: object.gdc.goog/v1
    kind: Bucket
    metadata:
      annotations:
        object.gdc.goog/audit-logs: IO
      labels:
        logging.private.gdch.goog/loggingpipeline-name: default
      name: audit-logs-loki-restore
      namespace: PROJECT_NAMESPACE
    spec:
      bucketPolicy:
        lockingPolicy:
          defaultObjectRetentionDays: 1
      description: Bucket for storing audit-logs-loki logs restore
      storageClass: Standard
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      name: audit-logs-loki-restore-buckets-role
      namespace: PROJECT_NAMESPACE
    rules:
    - apiGroups:
      - object.gdc.goog
      resourceNames:
      - audit-logs-loki-restore
      resources:
      - buckets
      verbs:
      - read-object
      - write-object
    ---
    apiVersion: v1
    automountServiceAccountToken: false
    kind: ServiceAccount
    metadata:
      labels:
        logging.private.gdch.goog/loggingpipeline-name: default
      name: audit-logs-loki-restore-sa
      namespace: PROJECT_NAMESPACE
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: audit-logs-loki-restore
      namespace: PROJECT_NAMESPACE
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: audit-logs-loki-restore-buckets-role
    subjects:
    - kind: ServiceAccount
      name: audit-logs-loki-restore-sa
      namespace: PROJECT_NAMESPACE
    EOF

The system creates the bucket and a secret.

View the bucket that you created:

    kubectl get bucket audit-logs-loki-restore -n PROJECT_NAMESPACE

The output must look like the following example and show the bucket that you created. Creating the bucket might take a few minutes.

    NAME                     BUCKET NAME              DESCRIPTION                                      STORAGE CLASS  FULLY-QUALIFIED-BUCKET-NAME    ENDPOINT                                      REGION  BUCKETREADY  REASON                   MESSAGE
    audit-logs-loki-restore  audit-logs-loki-restore  Bucket for storing audit-logs-loki logs restore  Standard       dzbl6-audit-logs-loki-restore  https://objectstorage.zone1.google.gdch.test  zone1   True         BucketCreationSucceeded  Bucket successfully created.

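Using the information from the output, set the following environment variables:

    DST_BUCKET=RESTORE_BUCKET_NAME
    DST_ENDPOINT=RESTORE_ENDPOINT
    DST_PATH=RESTORE_FULLY_QUALIFIED_BUCKET_NAME

Replace the following:

- RESTORE_BUCKET_NAME: the name of the bucket used to restore audit logs. This value is in the BUCKET NAME field of the output.
- RESTORE_ENDPOINT: the endpoint of the bucket used to restore audit logs. This value is in the ENDPOINT field of the output.
- RESTORE_FULLY_QUALIFIED_BUCKET_NAME: the fully qualified name of the bucket used to restore audit logs. This value is in the FULLY-QUALIFIED-BUCKET-NAME field of the output.

Get the secret for the bucket that you created:

    kubectl get secret -n PROJECT_NAMESPACE -o json | jq --arg jq_src "$DST_BUCKET" '.items[].metadata | select(.annotations."object.gdc.goog/subject"==$jq_src) | .name'

The output must look like the following example, which shows the secret name of the bucket:

    "object-storage-key-sysstd-sa-olxv4dnwrwul4bshu37ikebgovrnvl773owaw3arx225rfi56swa"

Using the secret name that you obtained, set the following environment variables:

    DST_SECRET_NAME=RESTORE_SECRET_NAME
    DST_CREDENTIALS="PROJECT_NAMESPACE/RESTORE_SECRET_NAME"

Replace RESTORE_SECRET_NAME with the secret name from the previous output.

Create a secret for the CA certificate of the storage system:

    kubectl create secret generic -n PROJECT_NAMESPACE audit-log-loki-restore-ca \
      --from-literal=ca.crt=CERTIFICATE

Replace CERTIFICATE with the CA certificate of the storage system.

Set the following environment variable with the location of the certificate:

    DST_CA_CERTIFICATE=PROJECT_NAMESPACE/audit-log-loki-restore-ca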
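Because creating the restore bucket can take a few minutes, a script that automates the steps above may need to poll until the bucket is ready. The following is a minimal retry sketch. The function names retry and bucket_ready are hypothetical, and matching the text BucketCreationSucceeded is an assumption based on the REASON column in the example output, not a documented interface.

```shell
# Hypothetical helper: run a command up to ATTEMPTS times, sleeping DELAY
# seconds between tries. Returns 0 as soon as the command succeeds.
retry() {
  attempts="$1"
  delay="$2"
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    if [ "$i" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    i=$((i + 1))
  done
  return 1
}

# Assumption: the bucket is ready once the example REASON value appears.
bucket_ready() {
  kubectl get bucket audit-logs-loki-restore -n PROJECT_NAMESPACE |
    grep -q BucketCreationSucceeded
}

# Possible usage: check every 10 seconds for up to 5 minutes.
# retry 30 10 bucket_ready
```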

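Get the credentials to access the backup bucket

Follow these steps to find the credentials for the bucket that contains the audit log backup:

Set the following environment variables:

    SRC_ACCESS_KEY_ID=ACCESS_KEY
    SRC_SECRET_ACCESS_KEY=ACCESS_SECRET
    SRC_ENDPOINT=REMOTE_ENDPOINT
    SRC_PATH=REMOTE_BUCKET_NAME

Replace the following:

- ACCESS_KEY: the access key of the backup bucket.
- ACCESS_SECRET: the access secret of the backup bucket.
- REMOTE_ENDPOINT: the endpoint of the backup bucket.
- REMOTE_BUCKET_NAME: the name of the backup bucket.

Create a secret for the backup bucket:

    kubectl create secret generic -n PROJECT_NAMESPACE s3-backup-bucket-credentials \
      --from-literal=access-key-id="$SRC_ACCESS_KEY_ID" \
      --from-literal=secret-access-key="$SRC_SECRET_ACCESS_KEY"

Set the following environment variable with the location of the secret:

    SRC_CREDENTIALS=PROJECT_NAMESPACE/s3-backup-bucket-credentials

Create a secret with the CA certificate of the bucket:

    kubectl create secret generic -n PROJECT_NAMESPACE s3-backup-bucket-ca \
      --from-literal=ca.crt=BACKUP_CERTIFICATE

Replace BACKUP_CERTIFICATE with the CA certificate of the backup bucket.

Set the following environment variable with the location of the certificate:

    SRC_CA_CERTIFICATE=PROJECT_NAMESPACE/s3-backup-bucket-ca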
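A PEM certificate spans several lines, which makes it awkward to paste as a literal on the command line. As an alternative sketch, kubectl can read the value from a file with the --from-file=KEY=PATH form of the same command. The function name create_ca_secret and the file path in the usage line are hypothetical.

```shell
# Hypothetical helper: create a generic secret whose ca.crt key holds the
# contents of a certificate file. Fails early if the file cannot be read.
create_ca_secret() {
  ns="$1"
  name="$2"
  cert_file="$3"
  if [ ! -r "$cert_file" ]; then
    echo "cannot read certificate file: $cert_file" >&2
    return 1
  fi
  kubectl create secret generic "$name" -n "$ns" --from-file=ca.crt="$cert_file"
}

# Possible usage (the path is an assumption):
# create_ca_secret PROJECT_NAMESPACE s3-backup-bucket-ca ./backup-ca.pem
```

The resulting secret has the same name and key as the one created with --from-literal, so the SRC_CA_CERTIFICATE value stays the same.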

Configure the audit log restoration

Follow these steps to configure the transfer of audit logs from the backup bucket to the restore bucket:

Create a service account for the audit log transfer job. You must grant the service account access to read from and write to the bucket and to read the secrets in the project namespace.

    kubectl apply -f - <<EOF
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: audit-log-restore-sa
      namespace: PROJECT_NAMESPACE
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      name: read-secrets-role
      namespace: PROJECT_NAMESPACE
    rules:
    - apiGroups: [""]
      resources: ["secrets"]
      verbs: ["get", "watch", "list"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: read-secrets-rolebinding-restore
      namespace: PROJECT_NAMESPACE
    subjects:
    - kind: ServiceAccount
      name: audit-log-restore-sa
      namespace: PROJECT_NAMESPACE
    roleRef:
      kind: Role
      name: read-secrets-role
      apiGroup: rbac.authorization.k8s.io
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      name: audit-log-restore-bucket-role
      namespace: PROJECT_NAMESPACE
    rules:
    - apiGroups:
      - object.gdc.goog
      resourceNames:
      - $DST_BUCKET # Restore (destination) bucket name
      resources:
      - buckets
      verbs:
      - read-object
      - write-object
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: audit-log-restore-role-binding
      namespace: PROJECT_NAMESPACE
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: audit-log-restore-bucket-role
    subjects:
    - kind: ServiceAccount
      name: audit-log-restore-sa
      namespace: PROJECT_NAMESPACE
    ---
    EOF

Create the transfer job that restores the logs from the remote backup bucket:

    kubectl apply -f - <<EOF
    ---
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: audit-log-restore-job
      namespace: PROJECT_NAMESPACE
    spec:
      template:
        spec:
          serviceAccountName: audit-log-restore-sa
          containers:
            - name: storage-transfer-pod
              image: gcr.io/private-cloud-staging/storage-transfer:latest
              imagePullPolicy: Always
              command:
                - /storage-transfer
              args:
                - '--src_endpoint=$SRC_ENDPOINT'
                - '--dst_endpoint=$DST_ENDPOINT'
                - '--src_path=\$SRC_PATH'
                - '--dst_path=\$DST_PATH'
                - '--src_credentials=$SRC_CREDENTIALS'
                - '--dst_credentials=$DST_CREDENTIALS'
                - '--dst_ca_certificate_reference=$DST_CA_CERTIFICATE'
                - '--src_ca_certificate_reference=$SRC_CA_CERTIFICATE' # Optional. Based on destination type.
                - '--src_type=s3'
                - '--dst_type=s3'
                - '--bandwidth_limit=100M' # Optional, of the form '10K', '100M', '1G' bytes per second
          restartPolicy: OnFailure # Will restart on failure.
    ---
    EOF

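After you schedule the job, you can monitor the data transfer by providing the job name, such as audit-log-restore-job, and the project namespace.

The job ends when all data has been transferred to the destination bucket.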

Deploy an audit log instance to access logs

You must deploy a Loki instance, also known as an audit log instance, to access the restored logs.

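The audit log instance runs under the audit-logs-loki-restore-sa service account, which you created together with the restore bucket. Follow these steps to deploy the instance: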

Create a ConfigMap object for the configuration of the instance:

    kubectl apply -f - <<EOF
    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: audit-logs-loki-restore
      namespace: PROJECT_NAMESPACE
    data:
      loki.yaml: |-
        auth_enabled: true
        common:
          ring:
            kvstore:
              store: inmemory
        chunk_store_config:
          max_look_back_period: 0s
        compactor:
          shared_store: s3
          working_directory: /data/loki/boltdb-shipper-compactor
          compaction_interval: 10m
          retention_enabled: true
          retention_delete_delay: 2h
          retention_delete_worker_count: 150
        ingester:
          chunk_target_size: 1572864
          chunk_encoding: snappy
          max_chunk_age: 2h
          chunk_idle_period: 90m
          chunk_retain_period: 30s
          autoforget_unhealthy: true
          lifecycler:
            ring:
              kvstore:
                store: inmemory
              replication_factor: 1
              heartbeat_timeout: 10m
          max_transfer_retries: 0
          wal:
            enabled: true
            flush_on_shutdown: true
            dir: /wal
            checkpoint_duration: 1m
            replay_memory_ceiling: 20GB
        limits_config:
          retention_period: 48h
          enforce_metric_name: false
          reject_old_samples: false
          ingestion_rate_mb: 256
          ingestion_burst_size_mb: 256
          max_streams_per_user: 20000
          max_global_streams_per_user: 20000
          per_stream_rate_limit: 256MB
          per_stream_rate_limit_burst: 256MB
          shard_streams:
            enabled: false
            desired_rate: 3MB
        schema_config:
          configs:
          - from: "2020-10-24"
            index:
              period: 24h
              prefix: index_
            object_store: s3
            schema: v11
            store: boltdb-shipper
        server:
          http_listen_port: 3100
          grpc_server_max_recv_msg_size: 104857600
          grpc_server_max_send_msg_size: 104857600
        analytics:
          reporting_enabled: false
        storage_config:
          boltdb_shipper:
            active_index_directory: /data/loki/boltdb-shipper-active
            cache_location: /data/loki/boltdb-shipper-cache
            cache_ttl: 24h
            shared_store: s3
          aws:
            endpoint: $DST_ENDPOINT
            bucketnames: $DST_PATH
            access_key_id: \${S3_ACCESS_KEY_ID}
            secret_access_key: \${S3_SECRET_ACCESS_KEY}
            s3forcepathstyle: true
    EOF

The backslashes before ${S3_ACCESS_KEY_ID} and ${S3_SECRET_ACCESS_KEY} keep the shell from expanding those references inside the here-document, so that Loki resolves them at startup (-config.expand-env=true) from the container environment variables that the StatefulSet sets.

Deploy the instance with a service that provides access to the logs from the restore bucket:

    kubectl apply -f - <<EOF
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      labels:
        app: audit-logs-loki-restore
        logging.private.gdch.goog/loggingpipeline-name: default
      name: audit-logs-loki-restore
      namespace: PROJECT_NAMESPACE
    spec:
      persistentVolumeClaimRetentionPolicy:
        whenDeleted: Retain
        whenScaled: Retain
      podManagementPolicy: OrderedReady
      replicas: 1
      revisionHistoryLimit: 10
      selector:
        matchLabels:
          app: audit-logs-loki-restore
      serviceName: audit-logs-loki-restore
      template:
        metadata:
          labels:
            app: audit-logs-loki-restore
            app.kubernetes.io/part-of: audit-logs-loki-restore
            egress.networking.gke.io/enabled: "true"
            istio.io/rev: default
            logging.private.gdch.goog/log-type: audit
        spec:
          affinity:
            nodeAffinity:
              preferredDuringSchedulingIgnoredDuringExecution:
              - preference:
                  matchExpressions:
                  - key: node-role.kubernetes.io/control-plane
                    operator: DoesNotExist
                  - key: node-role.kubernetes.io/master
                    operator: DoesNotExist
                weight: 1
            podAntiAffinity:
              preferredDuringSchedulingIgnoredDuringExecution:
              - podAffinityTerm:
                  labelSelector:
                    matchExpressions:
                    - key: app
                      operator: In
                      values:
                      - audit-logs-loki-restore
                  topologyKey: kubernetes.io/hostname
                weight: 100
          containers:
          - args:
            - -config.file=/etc/loki/loki.yaml
            - -config.expand-env=true
            - -target=all
            env:
            - name: S3_ACCESS_KEY_ID
              valueFrom:
                secretKeyRef:
                  key: access-key-id
                  name: $DST_SECRET_NAME
                  optional: false
            - name: S3_SECRET_ACCESS_KEY
              valueFrom:
                secretKeyRef:
                  key: secret-access-key
                  name: $DST_SECRET_NAME
                  optional: false
            image: gcr.io/private-cloud-staging/loki:v2.8.4-gke.2
            imagePullPolicy: Always
            livenessProbe:
              failureThreshold: 3
              httpGet:
                path: /ready
                port: http-metrics
                scheme: HTTP
              initialDelaySeconds: 330
              periodSeconds: 10
              successThreshold: 1
              timeoutSeconds: 1
            name: audit-logs-loki-restore
            ports:
            - containerPort: 3100
              name: http-metrics
              protocol: TCP
            - containerPort: 7946
              name: gossip-ring
              protocol: TCP
            readinessProbe:
              failureThreshold: 3
              httpGet:
                path: /ready
                port: http-metrics
                scheme: HTTP
              initialDelaySeconds: 45
              periodSeconds: 10
              successThreshold: 1
              timeoutSeconds: 1
            resources:
              limits:
                ephemeral-storage: 2000Mi
                memory: 8000Mi
              requests:
                cpu: 300m
                ephemeral-storage: 2000Mi
                memory: 1000Mi
            securityContext:
              readOnlyRootFilesystem: true
            terminationMessagePath: /dev/termination-log
            terminationMessagePolicy: File
            volumeMounts:
            - mountPath: /etc/loki
              name: config
            - mountPath: /data
              name: loki-storage
            - mountPath: /tmp
              name: temp
            - mountPath: /tmp/loki/rules-temp
              name: tmprulepath
            - mountPath: /etc/ssl/certs/storage-cert.crt
              name: storage-cert
              subPath: ca.crt
            - mountPath: /wal
              name: loki-storage
          dnsPolicy: ClusterFirst
          priorityClassName: audit-logs-loki-priority
          restartPolicy: Always
          schedulerName: default-scheduler
          securityContext:
            fsGroup: 10001
            runAsGroup: 10001
            runAsUser: 10001
          serviceAccount: audit-logs-loki-restore-sa
          serviceAccountName: audit-logs-loki-restore-sa
          terminationGracePeriodSeconds: 4800
          volumes:
          - emptyDir: {}
            name: temp
          - configMap:
              defaultMode: 420
              name: audit-logs-loki-restore
            name: config
          - emptyDir: {}
            name: tmprulepath
          - name: storage-cert
            secret:
              defaultMode: 420
              secretName: web-tls
      updateStrategy:
        type: RollingUpdate
      volumeClaimTemplates:
      - apiVersion: v1
        kind: PersistentVolumeClaim
        metadata:
          creationTimestamp: null
          name: loki-storage
        spec:
          accessModes:
          - ReadWriteOnce
          resources:
            requests:
              storage: 50Gi
          storageClassName: standard-rwo
          volumeMode: Filesystem
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: audit-logs-loki-restore
      namespace: PROJECT_NAMESPACE
    spec:
      internalTrafficPolicy: Cluster
      ipFamilies:
      - IPv4
      ipFamilyPolicy: SingleStack
      ports:
      - name: http-metrics
        port: 3100
        protocol: TCP
        targetPort: http-metrics
      selector:
        app: audit-logs-loki-restore
      sessionAffinity: None
      type: ClusterIP
    ---
    EOF

設定監控執行個體,查看資料來源的記錄

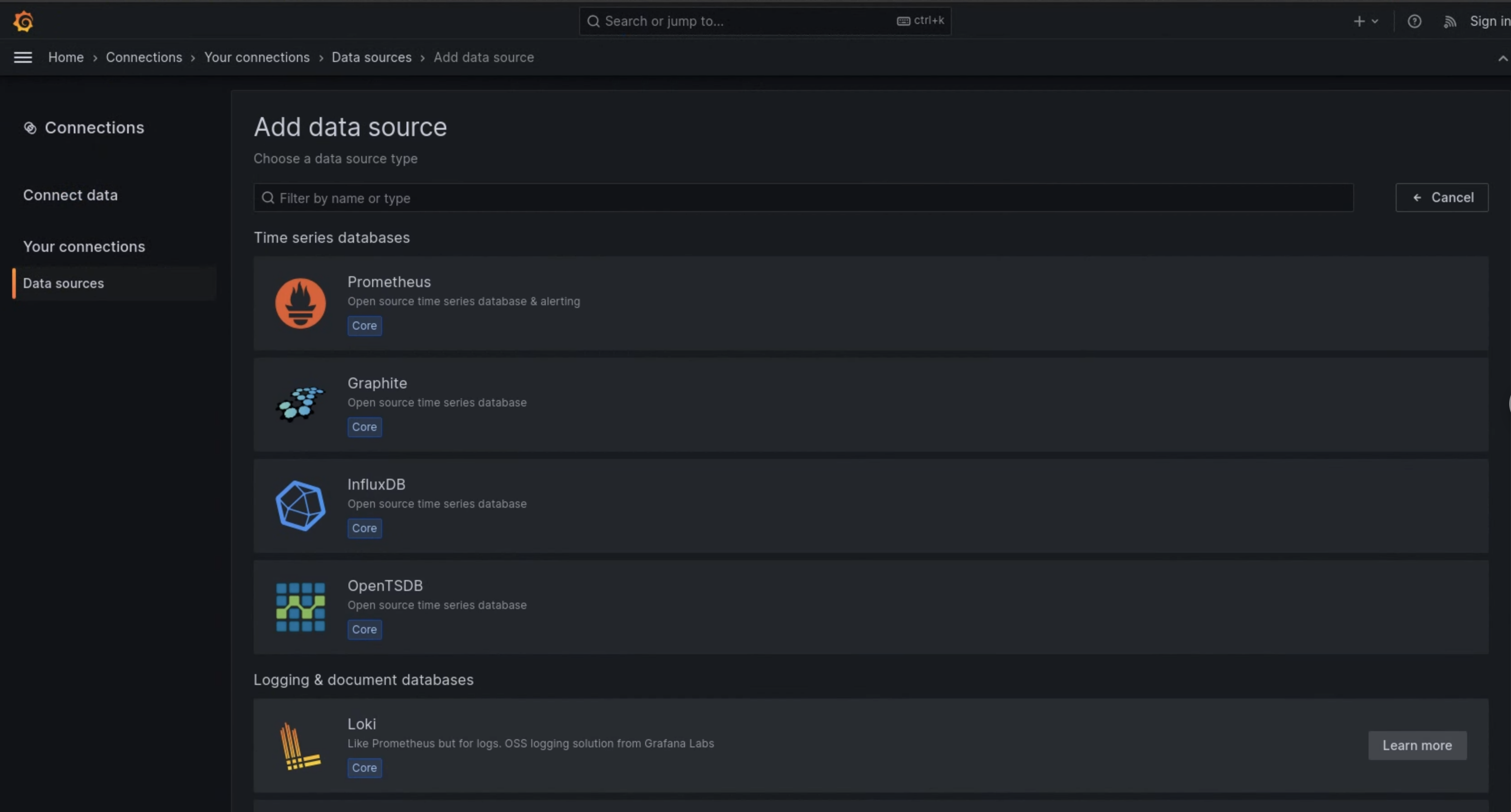

請按照下列步驟設定 Grafana (也稱為監控執行個體),以便查看稽核記錄執行個體中還原的稽核記錄:

- 前往專案監控執行個體的端點。

- 在使用者介面 (UI) 的導覽選單中,依序點選「管理」>「資料來源」。

- 按一下 「新增資料來源」。

- 在「新增資料來源」頁面中,選取「Loki」。

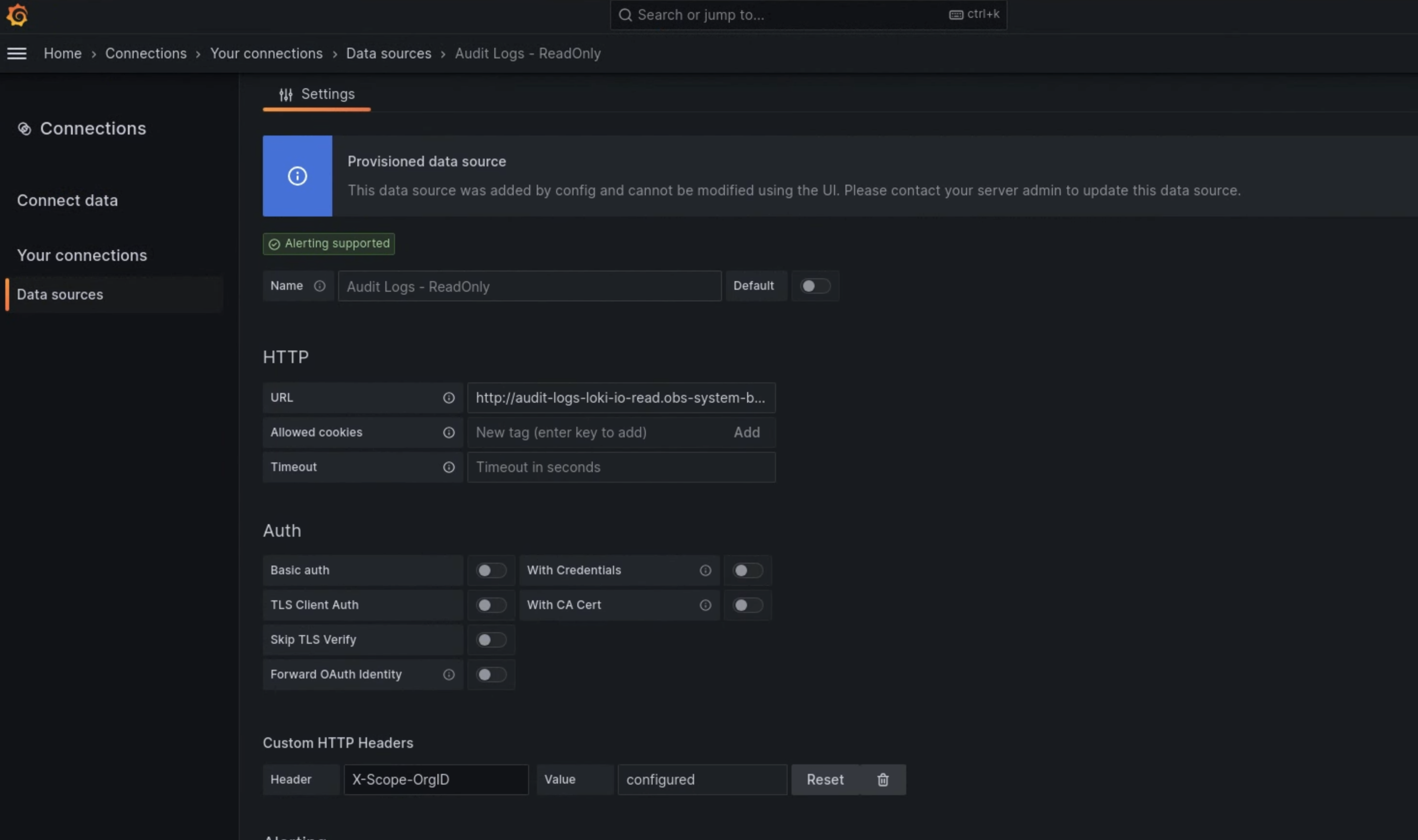

- 在「設定」頁面中,為「名稱」欄位輸入

Audit Logs - Restore值。 在「HTTP」部分,為「URL」欄位輸入下列值:

http://audit-logs-loki-restore.PROJECT_NAMESPACE.svc:3100在「Custom HTTP Headers」(自訂 HTTP 標頭) 部分,於對應欄位中輸入下列值:

- Header:

X-Scope-OrgID - Value:

infra-obs

- Header:

如圖 1 所示,在監控執行個體 UI 的「Add data source」(新增資料來源) 頁面中,Loki 會顯示為選項。圖 2 的「設定」頁面會顯示設定資料來源時必須填寫的欄位。

圖 1. 監控執行個體 UI 的「新增資料來源」頁面。

圖 2:監控執行個體的使用者介面「設定」頁面。

「記錄探索器」現在提供 Audit Logs - Restore 選項做為資料來源。
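To check the restored instance independently of the monitoring UI, you can query the Loki HTTP API directly with the same tenant header. The sketch below wraps a curl call to the /loki/api/v1/labels endpoint. The function name loki_labels is hypothetical, and reaching the service at localhost:3100 assumes that you have forwarded the port first, for example with kubectl port-forward, and that network policies in your cluster allow it.

```shell
# Hypothetical helper: list the label names known to a Loki instance,
# sending the tenant ID in the X-Scope-OrgID header.
loki_labels() {
  base_url="$1"
  tenant="$2"
  curl -sS -H "X-Scope-OrgID: $tenant" "$base_url/loki/api/v1/labels"
}

# Possible usage, after forwarding the service port in another terminal:
# kubectl port-forward -n PROJECT_NAMESPACE svc/audit-logs-loki-restore 3100:3100
# loki_labels http://localhost:3100 infra-obs
```

A JSON response that lists label names suggests that the instance can read the index from the restore bucket.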