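Although there is no right or wrong way to design a prompt, there are common strategies you can use to influence the model's responses. Rigorous testing and evaluation remain crucial for optimizing model performance.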

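Large language models (LLMs) are trained on vast amounts of text data to learn the patterns and relationships between units of language. Given some text (the prompt), a language model predicts what is likely to come next, like a sophisticated autocompletion tool. When you design a prompt, therefore, consider the different factors that can influence what the model predicts.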
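To make the autocompletion analogy concrete, here is a toy sketch of next-word prediction based on word-pair counts. It is only an illustration: real LLMs use neural networks over tokens rather than a lookup table, and the function names and the tiny corpus are made up for this example.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram_model(text: str) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the continuation seen most often in training, or None if unseen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training text, chosen only for illustration.
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram_model(corpus)

# "cat" follows "the" twice; "mat" and "fish" follow it once each.
print(predict_next(model, "the"))  # cat
```

Changing the text that precedes the prediction changes the prediction, which is the same lever a prompt pulls on a much larger scale.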

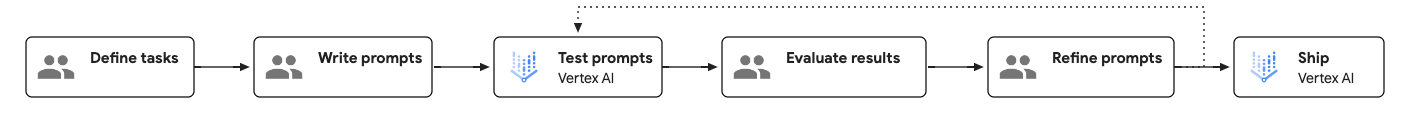

提示工程工作流

提示工程是测试驱动型迭代过程,可提高模型性能。 创建提示时,务必明确定义每个提示的目标和预期结果,并系统地测试这些提示,以确定需要改进的方面。

下图展示了提示工程工作流:
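The test-driven loop described above can be sketched as a small evaluation harness: define a check for the expected outcome, run every candidate prompt against the same test cases, and keep the best scorer. Everything here is an assumption made for illustration: `fake_model` is a hypothetical stand-in for a real model call, and the check (at most 3 sentences) is just one possible success criterion.

```python
from typing import Callable, Dict, List


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns a canned reply."""
    if "3 sentences" in prompt:
        return "One. Two. Three."
    return "One. Two. Three. Four. Five."


def count_sentences(text: str) -> int:
    """Rough sentence count: non-empty chunks between periods."""
    return len([chunk for chunk in text.split(".") if chunk.strip()])


def evaluate(
    prompt_template: str,
    cases: List[str],
    check: Callable[[str], bool],
    model: Callable[[str], str] = fake_model,
) -> float:
    """Return the fraction of test cases whose response passes the check."""
    passed = sum(check(model(prompt_template.format(text=case))) for case in cases)
    return passed / len(cases)


# Expected outcome: the summary has no more than 3 sentences.
def check(response: str) -> bool:
    return count_sentences(response) <= 3


cases = ["article A", "article B"]
candidates: Dict[str, str] = {
    "v1": "Write a short summary of: {text}",
    "v2": "Write a summary of no more than 3 sentences of: {text}",
}

scores = {name: evaluate(template, cases, check) for name, template in candidates.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

With a real model, the same harness would be rerun after each prompt revision so that regressions show up as a drop in score.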

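How to create an effective prompt

Two aspects of a prompt ultimately affect its effectiveness: content and structure.

- Content:

To complete a task, the model needs all of the information relevant to that task. This information can include instructions, examples, contextual information, and so on. For details, see Components of a prompt.

- Structure:

Even when the prompt supplies all of the required information, giving that information structure helps the model parse it. Ordering, labeling, and the use of delimiters can all affect the quality of responses. For an example of prompt structure, see Sample prompt template.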

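Components of a prompt

The following table shows the essential and optional components of a prompt:

| Component | Description | Example |
|---|---|---|
| Objective | What you want the model to achieve. Be specific and include any overarching objectives. Also called "mission" or "goal." | Your objective is to help students with math problems without directly giving them the answer. |
| Instructions | Step-by-step instructions on how to perform the task at hand. Also called "task," "steps," or "directions." | |
| Optional components | | |
| System instructions | Technical or environmental directives that may involve controlling or altering the model's behavior across a set of tasks. For many model APIs, system instructions are specified in a dedicated parameter. System instructions are available for Gemini 2.0 Flash and later models. | You are a coding expert who specializes in rendering code for front-end interfaces. When I describe a component of a website I want to build, return the HTML and CSS needed to do so. Do not give an explanation for this code. Also offer some UI design suggestions. |
| Persona | Who or what the model is acting as. Also called "role" or "vision." | You are a math teacher who helps students with their math homework. |
| Constraints | Restrictions on what the model must adhere to when generating a response, including what the model can and can't do. Also called "guardrails," "boundaries," or "controls." | Don't give the answer to the student directly. Instead, give hints for the next step in solving the problem. If the student is completely lost, give them the detailed steps to solve the problem. |
| Tone | The tone of the response. You can also influence the style and tone by specifying a persona. Also called "style," "voice," or "mood." | Respond in a casual and technical manner. |
| Context | Any information that the model needs to refer to in order to perform the task at hand. Also called "background," "documents," or "input data." | A copy of the student's lesson plans for math. |
| Few-shot examples | Examples of what the response should look like for a given prompt. Also called "exemplars" or "samples." | input: I'm trying to calculate how many golf balls can fit into a box that has a volume of one cubic meter. I've converted one cubic meter into cubic centimeters and divided it by the volume of a golf ball in cubic centimeters, but the system says my answer is wrong. output: Golf balls are spheres and can't be packed into a space with perfect efficiency. Your calculation needs to account for the maximum packing efficiency of spheres. |
| Reasoning steps | Tell the model to explain its reasoning. This can sometimes improve the model's reasoning capability. Also called "thinking steps." | Explain your reasoning step by step. |
| Response format | The format that you want the response to be in. For example, you can tell the model to output the response as JSON, a table, Markdown, a paragraph, a bulleted list, keywords, an elevator pitch, and so on. Also called "structure," "presentation," or "layout." | Format your response in Markdown. |
| Recap | Restate the key points of the prompt at the end of the prompt, especially the constraints and the response format. | Don't give away the answer; provide hints instead. Always format your response in Markdown. |
| Safeguards | Keeps questions grounded in the chatbot's mission. Also called "safety rules." | N/A |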

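Depending on the specific task at hand, you might choose to include or exclude some of the optional components. You can also adjust the order of the components and check how that affects the response.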
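One way to check how component order affects responses is to generate every ordering of the optional components and send each variant through the same evaluation. The sketch below only builds the variants; the dictionary keys and helper names are assumptions made for this example, and the component texts are taken from the table above.

```python
from itertools import permutations
from typing import Dict, Iterator, Sequence

components: Dict[str, str] = {
    "objective": "Your objective is to help students with math problems "
    "without directly giving them the answer.",
    "persona": "You are a math teacher who helps students with their math homework.",
    "constraints": "Don't give the answer to the student directly.",
    "response_format": "Format your response in Markdown.",
}


def build_prompt(parts: Dict[str, str], order: Sequence[str]) -> str:
    """Join the named components in the given order, skipping absent ones."""
    return "\n\n".join(parts[name] for name in order if name in parts)


def ordering_variants(
    parts: Dict[str, str],
    required: Sequence[str] = ("objective",),
    optional: Sequence[str] = ("persona", "constraints", "response_format"),
) -> Iterator[str]:
    """Yield prompts with required components first and optional ones in every order."""
    present = [name for name in optional if name in parts]
    for ordering in permutations(present):
        yield build_prompt(parts, list(required) + list(ordering))


variants = list(ordering_variants(components))
print(len(variants))  # 3 optional components give 3! = 6 orderings
```

The number of variants grows factorially, so with more than a handful of optional components it is more practical to test a few deliberately chosen orderings.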

Sample prompt template

The following prompt template shows an example of a well-structured prompt:

<OBJECTIVE_AND_PERSONA>

You are a [insert a persona, such as a "math teacher" or "automotive expert"]. Your task is to...

</OBJECTIVE_AND_PERSONA>

<INSTRUCTIONS>

To complete the task, you need to follow these steps:

1.

2.

...

</INSTRUCTIONS>

------------- Optional Components ------------

<CONSTRAINTS>

Dos and don'ts for the following aspects

1. Dos

2. Don'ts

</CONSTRAINTS>

<CONTEXT>

The provided context

</CONTEXT>

<OUTPUT_FORMAT>

The output format must be

1.

2.

...

</OUTPUT_FORMAT>

<FEW_SHOT_EXAMPLES>

Here we provide some examples:

1. Example #1

Input:

Thoughts:

Output:

...

</FEW_SHOT_EXAMPLES>

<RECAP>

Re-emphasize the key aspects of the prompt, especially the constraints, output format, etc.

</RECAP>

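The template above can be filled in programmatically. The following is a minimal sketch, assuming the tag names from the template and treating the first two sections as required and the rest as optional; `render_prompt` and its validation rules are illustrative, not part of any library.

```python
from typing import Dict, List

# Section tags in the order they appear in the template above.
SECTION_ORDER: List[str] = [
    "OBJECTIVE_AND_PERSONA",
    "INSTRUCTIONS",
    "CONSTRAINTS",
    "CONTEXT",
    "OUTPUT_FORMAT",
    "FEW_SHOT_EXAMPLES",
    "RECAP",
]
REQUIRED = {"OBJECTIVE_AND_PERSONA", "INSTRUCTIONS"}


def render_prompt(sections: Dict[str, str]) -> str:
    """Wrap each provided section in its tag pair; omit empty optional sections."""
    missing = REQUIRED - set(sections)
    if missing:
        raise ValueError(f"missing required sections: {sorted(missing)}")
    unknown = set(sections) - set(SECTION_ORDER)
    if unknown:
        raise ValueError(f"unknown sections: {sorted(unknown)}")

    parts = []
    for tag in SECTION_ORDER:
        body = sections.get(tag, "").strip()
        if body:
            parts.append(f"<{tag}>\n{body}\n</{tag}>")
    return "\n\n".join(parts)


prompt = render_prompt(
    {
        "OBJECTIVE_AND_PERSONA": "You are a math teacher. Your task is to help "
        "students with math problems without directly giving them the answer.",
        "INSTRUCTIONS": "To complete the task, you need to follow these steps:\n"
        "1. Read the student's question.\n2. Give a hint for the next step.",
        "OUTPUT_FORMAT": "Format your response in Markdown.",
    }
)
print(prompt)
```

Keeping the section order in one list makes it easy to experiment with a different ordering without touching the section contents.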

Best practices

Prompt design best practices include the following:

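Prompt health checklist

If a prompt isn't performing as expected, use the following checklist to identify potential issues and improve the prompt's performance.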

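Writing issues

- Typos: Check the keywords that define the task (for example, sumarize instead of summarize), technical terms, and entity names, because misspellings can lead to poor results.

- Grammar: If a sentence is hard to parse, contains run-on fragments, has mismatched subjects and verbs, or feels structurally awkward, the model might not understand the prompt correctly.

- Punctuation: Check your use of commas, periods, quotation marks, and other separators, because incorrect punctuation can cause the model to misinterpret the prompt.

- Undefined jargon: Avoid domain-specific terms, acronyms, and initialisms unless they are explicitly defined in the prompt. Don't assume that they have a universal meaning.

- Clarity: If you find yourself wondering about the scope, the specific steps to take, or the implicit assumptions being made, the prompt is probably not clear enough.

- Ambiguity: Avoid subjective or relative qualifiers that lack a concrete, measurable definition. Provide objective constraints instead (for example, "write a summary of no more than 3 sentences" rather than "write a short summary").

- Missing key information: If the task requires knowledge of a specific document, company policy, user history, or dataset, make sure that information is explicitly included in the prompt.

- Poor word choice: Check the prompt for unnecessarily complex, vague, or wordy phrasing, because it can confuse the model.

- Secondary review: If the model continues to perform poorly, have someone else review your prompt.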
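Some of the writing checks above can be partly automated. The sketch below flags two of them, subjective qualifiers and acronyms that the prompt never defines, under loudly simplified assumptions: the word list is illustrative rather than exhaustive, and an acronym counts as defined only if it appears in parentheses, as in "quarterly business review (QBR)". Well-known names such as JSON would also be flagged, so treat the output as hints for a human reviewer.

```python
import re
from typing import List

# Illustrative list of subjective qualifiers; extend it for your own prompts.
VAGUE_QUALIFIERS = {"short", "brief", "simple", "few", "several", "good"}


def lint_prompt(prompt: str) -> List[str]:
    """Return warnings for vague qualifiers and acronyms without a definition."""
    warnings = []

    for word in re.findall(r"[A-Za-z]+", prompt):
        if word.lower() in VAGUE_QUALIFIERS:
            warnings.append(f"vague qualifier: {word!r}")

    # Treat 2 to 6 consecutive capital letters as an acronym.
    for acronym in sorted(set(re.findall(r"\b[A-Z]{2,6}\b", prompt))):
        if f"({acronym})" not in prompt:
            warnings.append(f"undefined acronym: {acronym!r}")

    return warnings


print(lint_prompt("Write a short summary of the QBR notes."))
# ["vague qualifier: 'short'", "undefined acronym: 'QBR'"]

print(
    lint_prompt(
        "Summarize the quarterly business review (QBR) notes in no more than 3 sentences."
    )
)
# []
```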

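Issues with instructions and examples

- Overt manipulation: Remove language outside of the core task that tries to influence performance through emotional appeals, flattery, or artificial pressure. Although first-generation foundation models showed improvement in some cases with instructions such as "very bad things will happen if you don't answer this question correctly," current foundation models no longer improve with such language and in many cases perform worse.

- Conflicting instructions and examples: Audit the prompt for logical contradictions and for mismatches between instructions, or between instructions and examples.

- Redundant instructions and examples: Check the prompt and examples for the same instruction or concept stated multiple times in slightly different ways without adding new information or nuance.

- Irrelevant instructions and examples: Check whether every instruction and example is essential to the core task. If removing an instruction or example doesn't reduce the model's ability to perform the core task, it is probably irrelevant.

- Use of few-shot examples: If the task is complex, requires a specific format, or has a nuanced tone, make sure to provide concrete, illustrative examples that show a sample input and the corresponding output.

- Missing output format specification: Don't leave the model to guess the structure of the output. Instead, specify the format with clear, explicit instructions and show the output structure in your few-shot examples.

- Missing role definition: If you want the model to act in a specific role, make sure that role is defined in the system instructions.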
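For the few-shot and output-format items above, it helps to render every example with the same labels so that the examples themselves demonstrate the expected structure. Here is a minimal sketch; the `input:` and `output:` labels follow the few-shot example in the components table, while the `Example` type and `format_few_shot` helper are hypothetical names used only for illustration.

```python
from typing import List, NamedTuple


class Example(NamedTuple):
    input_text: str
    output_text: str


def format_few_shot(examples: List[Example], new_input: str) -> str:
    """Render input/output pairs uniformly and end with the new input to complete."""
    blocks = []
    for index, example in enumerate(examples, start=1):
        if not example.input_text.strip() or not example.output_text.strip():
            raise ValueError(f"example {index} needs both an input and an output")
        blocks.append(
            f"input: {example.input_text.strip()}\n"
            f"output: {example.output_text.strip()}"
        )
    # Leave the final output empty so the model continues the established pattern.
    blocks.append(f"input: {new_input.strip()}\noutput:")
    return "\n\n".join(blocks)


few_shot = format_few_shot(
    [
        Example("What is 12 x 12? I got 124.", "Check the ones digit: what is 2 x 2?"),
        Example("Is 91 prime? I think so.", "Try dividing 91 by 7."),
    ],
    "What is the square root of 169? I guessed 12.",
)
print(few_shot)
```

Rejecting examples that lack an input or an output catches incomplete pairs before they reach the model.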

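Prompt and system design issues

- Underspecified task: Make sure the prompt's instructions provide a clear path for handling edge cases and unexpected inputs, and include instructions for handling missing data rather than assuming that inserted data is always present and well formed.

- Task outside of model capabilities: Avoid prompts that ask the model to perform tasks for which it has known, fundamental limitations.

- Too many tasks: If the prompt asks the model to perform several distinct cognitive operations in a single pass (for example, 1. summarize, 2. extract entities, 3. translate, and 4. draft an email), it is probably trying to do too much. Split the request into separate prompts.

- Non-standard data format: If the model's output must be machine readable or follow a specific format, use a widely recognized standard that common libraries can parse, such as JSON, XML, Markdown, or YAML. If your use case requires a non-standard format, consider having the model output a common format and then converting the output with code.

- Incorrect chain of thought (CoT) order: Avoid providing examples in which the model generates the final structured answer before it completes its step-by-step reasoning.

- Conflicting internal references: Avoid prompts with non-linear logic or conditionals that require the model to piece together scattered instructions from several different places in the prompt.

- Prompt injection risk: Check whether there are explicit safeguards around untrusted user input that is inserted into the prompt, because such input can pose a serious security risk.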
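The non-standard data format item above suggests asking the model for a common format and converting it in code. The following sketch assumes the model was instructed to return JSON with `summary` and `entities` keys; that schema and the pipe-delimited target format are both hypothetical and stand in for whatever your application needs.

```python
import json
from typing import Any, Dict

# Hypothetical schema that the prompt asks the model to follow.
REQUIRED_KEYS = ("summary", "entities")


def parse_model_output(raw: str) -> Dict[str, Any]:
    """Parse the model's JSON output and verify that the expected keys are present."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        raise ValueError(f"model output is not valid JSON: {err}") from err
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")
    missing = [key for key in REQUIRED_KEYS if key not in data]
    if missing:
        raise ValueError(f"model output is missing keys: {missing}")
    return data


def to_pipe_format(data: Dict[str, Any]) -> str:
    """Convert to a hypothetical legacy format: summary|entity1;entity2."""
    return f"{data['summary']}|{';'.join(data['entities'])}"


raw = '{"summary": "Q3 revenue grew.", "entities": ["Acme", "Q3"]}'
print(to_pipe_format(parse_model_output(raw)))  # Q3 revenue grew.|Acme;Q3
```

Validating the parsed output also gives the application an explicit failure path for malformed responses, which lines up with the underspecified-task item in the same list.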

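What's next

- Explore more prompt examples in the prompt gallery.

- Learn how to optimize prompts for use with Google models by using the Vertex AI prompt optimizer (Preview).

- Learn about Responsible AI best practices and Vertex AI's safety filters.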