You're viewing Apigee and Apigee hybrid documentation.

By default, Apigee stores HTTP request and response payloads in an in-memory buffer before the policies in the API proxy process them.

If streaming is enabled, request and response payloads are streamed without modification to the client app (for responses) and the target endpoint (for requests). Streaming is especially useful when an application accepts or returns large payloads, or when it returns data in chunks over time.
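As a rough sketch, streaming is typically switched on through the `request.streaming.enabled` and `response.streaming.enabled` properties on the proxy and target endpoints, as covered in Streaming requests and responses. The endpoint names, base path, and target URL below are placeholder assumptions, not values taken from this page.

```xml
<!-- ProxyEndpoint: stream between the client app and Apigee.
     The name and BasePath are hypothetical. -->
<ProxyEndpoint name="default">
  <HTTPProxyConnection>
    <BasePath>/v1/large-files</BasePath>
    <Properties>
      <Property name="request.streaming.enabled">true</Property>
      <Property name="response.streaming.enabled">true</Property>
    </Properties>
  </HTTPProxyConnection>
</ProxyEndpoint>

<!-- TargetEndpoint: stream between Apigee and the backend.
     The URL is a hypothetical backend. -->
<TargetEndpoint name="default">
  <HTTPTargetConnection>
    <URL>https://backend.example.com/files</URL>
    <Properties>
      <Property name="request.streaming.enabled">true</Property>
      <Property name="response.streaming.enabled">true</Property>
    </Properties>
  </HTTPTargetConnection>
</TargetEndpoint>
```

Each endpoint lives in its own file in a proxy bundle; they are shown together here only for brevity.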

Antipattern

Accessing the request/response payload with streaming enabled causes Apigee to go back to the default buffering mode.

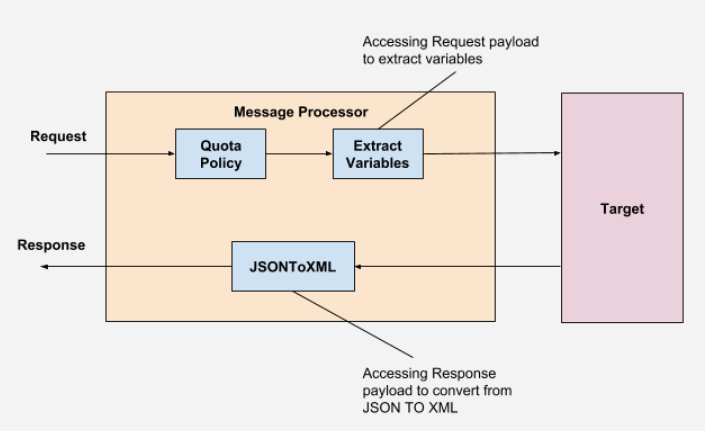

The illustration above shows a proxy that extracts variables from the request payload and converts the JSON response payload to XML using the JSONToXML policy. Because both policies access the payload, streaming is disabled in Apigee.
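The policy definitions behind the illustration are not reproduced on this page. A minimal sketch of policies of this kind, assuming a hypothetical JSON request with an `order.id` field and hypothetical policy and variable names, might look like the following. Both read the message body, which is what forces Apigee back into buffering.

```xml
<!-- Reads the request body to pull out a value (hypothetical field and variable name). -->
<ExtractVariables name="EV-ExtractOrderId">
  <Source>request</Source>
  <JSONPayload>
    <Variable name="orderId">
      <JSONPath>$.order.id</JSONPath>
    </Variable>
  </JSONPayload>
</ExtractVariables>

<!-- Reads the entire JSON response body and rewrites it as XML. -->
<JSONToXML name="JX-ConvertResponse">
  <Source>response</Source>
  <OutputVariable>response</OutputVariable>
</JSONToXML>
```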

Impact

- Streaming is disabled, which can increase latency in processing the data.

- Heap memory usage can increase, or `OutOfMemory` errors can occur on Message Processors, because payloads are held in in-memory buffers, especially when request/response payloads are large.

Best practice

- Don't access the request/response payload when streaming is enabled.
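One way to follow this practice, sketched here under the assumption that reading headers does not require Apigee to buffer the message body, is to have clients send the values a policy needs in a header rather than in the payload. The header name `X-Order-Id`, the policy name, and the variable name are hypothetical.

```xml
<!-- Extracts a value from a request header instead of the request body.
     Assumption: header access leaves the payload untouched. -->
<ExtractVariables name="EV-ExtractOrderIdFromHeader">
  <Source>request</Source>
  <Header name="X-Order-Id">
    <Pattern ignoreCase="true">{orderId}</Pattern>
  </Header>
</ExtractVariables>
```

Whether this fits depends on the API contract. It only helps when the needed values can reasonably travel outside the body.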

Further reading

- Streaming requests and responses

- How does Apigee streaming work?

- How to handle streaming data together with normal request/response payload in a single API Proxy

- Best practices for API proxy design and development