Before you can run your training application with AI Platform Training, you must upload your code and any dependencies into a Cloud Storage bucket that your Google Cloud project can access. This page shows you how to package and stage your application in the cloud.

You'll get the best results if you test your training application locally before uploading it to the cloud. Training with AI Platform Training incurs charges to your account for the resources used.

Before you begin

Before you can move your training application to the cloud, you must complete the following steps:

- Configure your development environment, as described in the getting-started guide.

Develop your training application with one of AI Platform Training's hosted machine learning frameworks: TensorFlow, scikit-learn, or XGBoost. Alternatively, build a custom container to customize the environment of your training application. This gives you the option to use machine learning frameworks other than AI Platform Training's hosted frameworks.

If you want to deploy your trained model to AI Platform Prediction after training, read the guide to exporting your model for prediction to make sure your training package exports model artifacts that AI Platform Prediction can use.

Follow the guide to setting up a Cloud Storage bucket where you can store your training application's data and files.

Know all of the Python libraries that your training application depends on, whether they're custom packages or freely available through PyPI.

This document discusses the following factors that influence how you package your application and upload it to Cloud Storage:

- Using the gcloud CLI (recommended) or coding your own solution.

- Building your package manually if necessary.

- How to include additional dependencies that aren't installed by the AI Platform Training runtime that you're using.

Using gcloud to package and upload your application (recommended)

The simplest way to package your application and upload it along with its

dependencies is to use the gcloud CLI. You use a single command

(gcloud ai-platform jobs submit training) to

package and upload the application and to submit your first training job.

For convenience, it's useful to define your configuration values as shell variables:

PACKAGE_PATH='LOCAL_PACKAGE_PATH'

MODULE_NAME='MODULE_NAME'

STAGING_BUCKET='BUCKET_NAME'

JOB_NAME='JOB_NAME'

JOB_DIR='JOB_OUTPUT_PATH'

REGION='REGION'

Replace the following:

LOCAL_PACKAGE_PATH: the path to the directory of your Python package in your local environmentMODULE_NAME: the fully-qualified name of your training moduleBUCKET_NAME: the name of a Cloud Storage bucketJOB_NAME: a name for your training jobJOB_OUTPUT_PATH: the URI of a Cloud Storage directory where you want your training job to save its outputREGION: the region where you want to run your training job

See more details about the requirements for these values in the list after the following command.

The following example shows a

gcloud ai-platform jobs submit training

command that packages an application and submits the training job:

gcloud ai-platform jobs submit training $JOB_NAME \

--staging-bucket=$STAGING_BUCKET \

--job-dir=$JOB_DIR \

--package-path=$PACKAGE_PATH \

--module-name=$MODULE_NAME \

--region=$REGION \

-- \

--user_first_arg=first_arg_value \

--user_second_arg=second_arg_value

--staging-bucketspecifies a Cloud Storage bucket where you want to stage your training and dependency packages. Your Google Cloud project must have access to this Cloud Storage bucket, and the bucket must be in the same region where you run the job. See the available regions for AI Platform Training services. If you don't specify a staging bucket, AI Platform Training stages your packages in in the location specified in thejob-dirparameter.--job-dirspecifies the Cloud Storage location that you want to use for your training job's output files. Your Google Cloud project must have access to this Cloud Storage bucket, and the bucket should be in the same region that you run the job. See the available regions for AI Platform Training services.--package-pathspecifies the local path to the directory of your application. The gcloud CLI builds a.tar.gzdistribution package from your code based on thesetup.pyfile in the parent directory of the one specified by--package-path. Then it uploads this.tar.gzfile to Cloud Storage and uses it to run your training job.If there is no

setup.pyfile in the expected location, the gcloud CLI creates a simple, temporarysetup.pyand includes only the directory specified by--package-pathin the.tar.gzfile it builds.--module-namespecifies the name of your application's main module, using your package's namespace dot notation. This is the Python file that you run to start your application. For example, if your main module is.../my_application/trainer/task.py(see the recommended project structure), then the module name istrainer.task.

- If you specify an option both in your configuration file

(

config.yaml) and as a command-line flag, the value on the command line overrides the value in the configuration file. - The empty

--flag marks the end of thegcloudspecific flags and the start of theUSER_ARGSthat you want to pass to your application. - Flags specific to AI Platform Training, such as

--module-name,--runtime-version, and--job-dir, must come before the empty--flag. The AI Platform Training service interprets these flags. - The

--job-dirflag, if specified, must come before the empty--flag, because AI Platform Training uses the--job-dirto validate the path. - Your application must handle the

--job-dirflag too, if specified. Even though the flag comes before the empty--, the--job-diris also passed to your application as a command-line flag. - You can define as many

USER_ARGSas you need. AI Platform Training passes--user_first_arg,--user_second_arg, and so on, through to your application.

You can find out more about the job-submission flags in the guide to running a training job.

Working with dependencies

Dependencies are packages that you import in your code. Your application may

have many dependencies that it needs to make it work.

When you run a training job on AI Platform Training, the job runs on training instances (specially-configured virtual machines) that have many common Python packages already installed. Check the packages included in the runtime version that you use for training, and note any of your dependencies that are not already installed.

There are 2 types of dependencies that you may need to add:

- Standard dependencies, which are common Python packages available on PyPI.

- Custom packages, such as packages that you developed yourself, or those internal to an organization.

The sections below describe the procedure for each type.

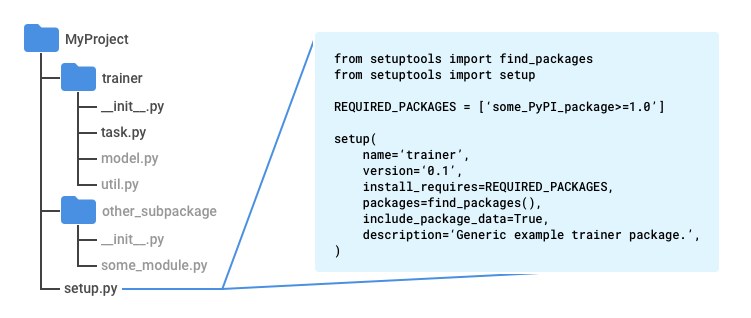

Adding standard (PyPI) dependencies

You can specify your package's standard dependencies as part of its setup.py

script. AI Platform Training uses pip to install your

package on the training instances that it allocates for your job. The

pip install

command looks for configured dependencies and installs them.

Create a file called setup.py in the root directory of your

application (one directory up from your trainer directory if you follow the

recommended pattern).

Enter the following script in setup.py, inserting your own values:

from setuptools import find_packages

from setuptools import setup

REQUIRED_PACKAGES = ['some_PyPI_package>=1.0']

setup(

name='trainer',

version='0.1',

install_requires=REQUIRED_PACKAGES,

packages=find_packages(),

include_package_data=True,

description='My training application package.'

)

If you are using the Google Cloud CLI to submit your training job, it

automatically uses your setup.py file to make the package.

If you submit the training job without using gcloud, use the following command

to run the script:

python setup.py sdist

For more information, see the section about manually packaging your training application.

Adding custom dependencies

You can specify your application's custom dependencies by passing their paths as

part of your job configuration. You need the URI to the package of each

dependency. The custom dependencies must be in a

Cloud Storage location. AI Platform Training uses

pip install

to install custom dependencies, so they can have standard dependencies of

their own in their setup.py scripts.

If you use the gcloud CLI to run your training job, you can specify

dependencies on your local machine, as well as on Cloud Storage, and

the tool will stage them in the cloud for you: when you run the

gcloud ai-platform jobs submit training command, set the

--packages flag to include the dependencies in a comma-separated list.

Each URI you include is the path to a distribution package, formatted as a

tarball (.tar.gz) or as a wheel (.whl). AI Platform Training installs each

package using pip install

on every virtual machine it allocates for your training job.

The example below specifies packaged dependencies named dep1.tar.gz

and dep2.whl (one each of the supported package types) along with

a path to the application's sources:

gcloud ai-platform jobs submit training $JOB_NAME \

--staging-bucket $PACKAGE_STAGING_PATH \

--package-path /Users/mluser/models/faces/trainer \

--module-name $MODULE_NAME \

--packages dep1.tar.gz,dep2.whl \

--region us-central1 \

-- \

--user_first_arg=first_arg_value \

--user_second_arg=second_arg_value

Similarly, the example below specifies packaged dependencies named dep1.tar.gz

and dep2.whl (one each of the supported package types), but with

a built training application:

gcloud ai-platform jobs submit training $JOB_NAME \

--staging-bucket $PACKAGE_STAGING_PATH \

--module-name $MODULE_NAME \

--packages trainer-0.0.1.tar.gz,dep1.tar.gz,dep2.whl

--region us-central1 \

-- \

--user_first_arg=first_arg_value \

--user_second_arg=second_arg_value

If you run training jobs using the AI Platform Training and Prediction API directly, you must stage your dependency packages in a Cloud Storage location yourself and then use the paths to the packages in that location.

Building your package manually

Packaging Python code is an expansive topic that is largely beyond the scope of this documentation. For convenience, this section provides an overview of using Setuptools to build your package. There are other libraries you can use to do the same thing.

Follow these steps to build your package manually:

In each directory of your application package, include a file named

__init__.py, which may be empty or may contain code that runs when that package (any module in that directory) is imported.In the parent directory of all the code you want to include in your

.tar.gzdistribution package (one directory up from yourtrainerdirectory if you follow the recommended pattern), include the Setuptools file namedsetup.pythat includes:Import statements for

setuptools.find_packagesandsetuptools.setup.A call to

setuptools.setupwith (at a minimum) these parameters set:_name_ set to the name of your package namespace._version_ set to the version number of this build of your package._install_requires_ set to a list of packages that are required by your application, with version requirements, like'docutils>=0.3'._packages_ set tofind_packages(). This tells Setuptools to include all subdirectories of the parent directory that contain an__init__.pyfile as "import packages" (you import modules from these in Python with statements likefrom trainer import util) in your "distribution package" (the `.tar.gz file containing all the code)._include_package_data_ set toTrue.

Run

python setup.py sdistto create your.tar.gzdistribution package.

Recommended project structure

You can structure your training application in any way you like. However, the following structure is commonly used in AI Platform Training samples, and having your project's organization be similar to the samples can make it easier for you to follow the samples.

Use a main project directory, containing your

setup.pyfile.Use the

find_packages()function fromsetuptoolsin yoursetup.pyfile to ensure all subdirectories get included in the.tar.gzdistribution package you build.Use a subdirectory named

trainerto store your main application module.Name your main application module

task.py.Create whatever other subdirectories in your main project directory that you need to implement your application.

Create an

__init__.pyfile in every subdirectory. These files are used by Setuptools to identify directories with code to package, and may be empty.

In the AI Platform Training samples, the trainer directory usually contains

the following source files:

task.pycontains the application logic that manages the training job.model.pycontains the logic of the model.util.pyif present, contains code to run the training application.

When you run gcloud ai-platform jobs submit training, set the --package-path

to trainer. This causes the gcloud CLI to look for a setup.py file in the

parent of trainer, your main project directory.

Python modules

Your application package can contain multiple modules (Python files). You must identify the module that contains your application entry point. The training service runs that module by invoking Python, just as you would run it locally.

For example, if you follow the recommended structure from the preceding section,

you main module is task.py. Since it's inside an import package (directory

with an __init__.py file) named trainer, the fully qualified name of this

module is trainer.task. So if you submit your job with

gcloud ai-platform jobs submit training, set the

--module-name flag to trainer.task.

Refer to the Python guide to packages for more information about modules.

Using the gcloud CLI to upload an existing package

If you build your package yourself,

you can upload it with the gcloud CLI. Run the

gcloud ai-platform jobs submit training command:

Set the

--packagesflag to the path to your packaged application.Set the

--module-nameflag to the name of your application's main module, using your package's namespace dot notation. This is the Python file that you run to start your application. For example, if your main module is.../my_application/trainer/task.py(see the recommended project structure), then the module name istrainer.task.

The example below shows you how to use a zipped tarball package (called

trainer-0.0.1.tar.gz here) that is in the same directory where you run the

command. The main function is in a module called task.py:

gcloud ai-platform jobs submit training $JOB_NAME \

--staging-bucket $PACKAGE_STAGING_PATH \

--job-dir $JOB_DIR \

--packages trainer-0.0.1.tar.gz \

--module-name $MODULE_NAME \

--region us-central1 \

-- \

--user_first_arg=first_arg_value \

--user_second_arg=second_arg_value

Using the gcloud CLI to use an existing package already in the cloud

If you build your package yourself

and upload it to a Cloud Storage location, you can upload it with

gcloud. Run the

gcloud ai-platform jobs submit training command:

Set the

--packagesflag to the path to your packaged application.Set the

--module-nameflag to the name of your application's main module, using your package's namespace dot notation. This is the Python file that you run to start your application. For example, if your main module is.../my_application/trainer/task.py(see the recommended project structure), then the module name istrainer.task.

The example below shows you how to use a zipped tarball package that is in a Cloud Storage bucket:

gcloud ai-platform jobs submit training $JOB_NAME \

--job-dir $JOB_DIR \

--packages $PATH_TO_PACKAGED_TRAINER \

--module-name $MODULE_NAME \

--region us-central1 \

-- \

--user_first_arg=first_arg_value \

--user_second_arg=second_arg_value

Where $PATH_TO_PACKAGED_TRAINER is an environment variable that represents

the path to an existing package already in the cloud. For example, the path

could point to the following Cloud Storage location, containing a

zipped tarball package called trainer-0.0.1.tar.gz:

PATH_TO_PACKAGED_TRAINER=gs://$CLOUD_STORAGE_BUCKET_NAME/trainer-0.0.0.tar.gz

Uploading packages manually

You can upload your packages manually if you have a reason to. The most common

reason is that you want to call the AI Platform Training and Prediction API directly to start your

training job. The easiest way to manually upload your package and any custom

dependencies to your Cloud Storage bucket is to use the

gsutil tool:

gsutil cp /local/path/to/package.tar.gz gs://bucket/path/

However, if you can use the command line for this operation, you should just use

gcloud ai-platform jobs submit training to

upload your packages as part of setting up a

training job. If you can't use the command line, you can use the

Cloud Storage client library to

upload programmatically.

What's next

- Configure and run a training job.

- Monitor your training job while it runs.

- Learn more about how training works.