This page describes two ways to ground a model's responses with Vertex AI and shows you how to make grounding work in your applications using the grounding API.

Vertex AI lets you ground model outputs by using the following data sources:

- Google Search: Grounds responses by using publicly available web data.

- Your data: Grounds responses by using your data from Vertex AI Search (Preview).

Grounding with Google Search

Use Grounding with Google Search if you want to connect the model with world knowledge, the widest possible range of topics, or up-to-date information on the internet.

Grounding with Google Search supports dynamic retrieval, which lets you generate grounded responses with Google Search only when necessary. The dynamic retrieval configuration evaluates whether a prompt requires knowledge about recent events and, if so, enables Grounding with Google Search. For more information, see Dynamic retrieval.

To learn more about model grounding in Vertex AI, see the Grounding overview.

Supported models

The following models support grounding:

- Gemini 1.5 Pro with text input only

- Gemini 1.5 Flash with text input only

- Gemini 1.0 Pro with text input only

Supported languages

- English (en)

- Spanish (es)

- Japanese (ja)

Ground your model with Google Search

Use the following instructions to ground a model with publicly available web data.

Dynamic retrieval

You can use dynamic retrieval in your request to choose when to turn off grounding with Google Search. This is useful when the prompt doesn't require an answer grounded in Google Search and the supported models can provide an answer based on their knowledge without grounding. This helps you manage latency, quality, and cost more effectively.

Before you invoke the dynamic retrieval configuration in your request, understand the following terminology:

Prediction score: When you request a grounded answer, Vertex AI assigns a prediction score to the prompt. The prediction score is a floating point value in the range [0,1]. Its value depends on whether the prompt can benefit from grounding the answer with the most up-to-date information from Google Search. A prompt that requires an answer grounded in the most recent facts on the web has a higher prediction score. A prompt for which a model-generated answer is sufficient has a lower prediction score.

Here are examples of some prompts and their prediction scores.

| Prompt | Prediction score | Comment |
|---|---|---|
| "Write a poem about peonies" | 0.13 | The model can rely on its knowledge and the answer doesn't need grounding |
| "Suggest a toy for a 2yo child" | 0.36 | The model can rely on its knowledge and the answer doesn't need grounding |
| "Can you give a recipe for an asian-inspired guacamole?" | 0.55 | Google Search can give a grounded answer, but grounding isn't strictly required; the model knowledge might be sufficient |
| "What's Agent Builder? How is grounding billed in Agent Builder?" | 0.72 | Requires Google Search to generate a well-grounded answer |
| "Who won the latest F1 grand prix?" | 0.97 | Requires Google Search to generate a well-grounded answer |

Threshold: In your request, you can specify a dynamic retrieval configuration with a threshold. The threshold is a floating point value in the range [0,1] and defaults to 0.7. If the threshold value is zero, the response is always grounded with Google Search. For all other values of threshold, the following is applicable:

- If the prediction score is greater than or equal to the threshold, the answer is grounded with Google Search. A lower threshold implies that more prompts have responses that are generated using Grounding with Google Search.

- If the prediction score is less than the threshold, the model might still generate the answer, but it isn't grounded with Google Search.
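The threshold rule above can be mirrored locally as a small Python sketch. Vertex AI makes the actual decision on the server; the function name `is_grounded_with_search` is hypothetical and only restates the documented comparison so you can reason about a candidate threshold.

```python
def is_grounded_with_search(prediction_score: float, threshold: float = 0.7) -> bool:
    """Restate the documented rule: ground when the score reaches the threshold."""
    for name, value in (("prediction_score", prediction_score), ("threshold", threshold)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in the range [0, 1], got {value}")
    # A threshold of zero always grounds; otherwise compare score and threshold.
    return threshold == 0.0 or prediction_score >= threshold


# Prediction scores copied from the example prompts on this page.
EXAMPLE_SCORES = {
    "Write a poem about peonies": 0.13,
    "Can you give a recipe for an asian-inspired guacamole?": 0.55,
    "Who won the latest F1 grand prix?": 0.97,
}

for prompt, score in EXAMPLE_SCORES.items():
    grounded = is_grounded_with_search(score)  # uses the default threshold of 0.7
    print(f"{score:.2f}  grounded={grounded}  {prompt}")
```

With the default threshold, only the last prompt in this sample would be grounded; lowering the threshold to 0.5 would also ground the recipe prompt.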

To find a good threshold that suits your business needs, you can create a representative set of queries that you expect to encounter. Then you can sort the queries according to the prediction score in the response and select a good threshold for your use case.
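One possible way to turn a representative query set into a threshold is sketched below. It assumes you have recorded the prediction score returned for each query and labeled each query yourself with whether it should be grounded; the `should_ground` labels and the `pick_threshold` helper are hypothetical, and the agreement-counting heuristic is just one reasonable choice, not a documented method.

```python
def pick_threshold(samples):
    """Pick the observed score that best separates the labeled samples.

    samples: list of (prediction_score, should_ground) pairs, where
    should_ground is your own judgment for that query (an assumption here).
    """
    if not samples:
        raise ValueError("samples must not be empty")
    best_threshold, best_correct = None, -1
    # Try every observed score as a candidate threshold, lowest first.
    for candidate in sorted({score for score, _ in samples}):
        # A query counts as correct when the rule "score >= threshold"
        # agrees with the label assigned to it.
        correct = sum((score >= candidate) == label for score, label in samples)
        if correct > best_correct:
            best_threshold, best_correct = candidate, correct
    return best_threshold


# Scores from the example table on this page; the labels are illustrative.
labeled = [(0.13, False), (0.36, False), (0.55, False), (0.72, True), (0.97, True)]
print(pick_threshold(labeled))
```

In practice you would likely review the sorted scores by hand as well, because the cost of an unnecessary grounded call and the cost of a stale answer are rarely equal.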

Considerations

To use grounding with Google Search, you must enable Google Search Suggestions. See more at Google Search Suggestions.

For ideal results, use a temperature of 0.0. To learn more about setting this config, see the Gemini API request body from the model reference.

Grounding with Google Search has a limit of one million queries per day. If you require more queries, contact Google Cloud support for assistance.

REST

Before using any of the request data, make the following replacements:

- LOCATION: The region to process the request.

- PROJECT_ID: Your project ID.

- MODEL_ID: The model ID of the multimodal model.

- TEXT: The text instructions to include in the prompt.

- DYNAMIC_THRESHOLD: An optional field to set the threshold to invoke the dynamic retrieval configuration. It is a floating point value in the range [0,1]. If you don't set the dynamicThreshold field, the threshold value defaults to 0.7.

HTTP method and URL:

POST https://LOCATION-aiplatform.googleapis.com/v1beta1/projects/PROJECT_ID/locations/LOCATION/publishers/google/models/MODEL_ID:generateContent

Request JSON body:

{

"contents": [{

"role": "user",

"parts": [{

"text": "TEXT"

}]

}],

"tools": [{

"googleSearchRetrieval": {

"dynamicRetrievalConfig": {

"mode": "MODE_DYNAMIC",

"dynamicThreshold": DYNAMIC_THRESHOLD

}

}

}],

"model": "projects/PROJECT_ID/locations/LOCATION/publishers/google/models/MODEL_ID"

}
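As a minimal sketch, the request above can be assembled in Python with only the standard library. The helper name `build_search_grounding_request` is hypothetical, and the `Authorization: Bearer` header assumes you already hold an OAuth access token (for example, one printed by `gcloud auth print-access-token`). The sketch builds the request but does not send it.

```python
import json
import urllib.request


def build_search_grounding_request(project_id, location, model_id, text,
                                   access_token, dynamic_threshold=None):
    """Build (but do not send) a generateContent request grounded in Google Search."""
    model_path = (f"projects/{project_id}/locations/{location}"
                  f"/publishers/google/models/{model_id}")
    url = f"https://{location}-aiplatform.googleapis.com/v1beta1/{model_path}:generateContent"

    retrieval_config = {"mode": "MODE_DYNAMIC"}
    if dynamic_threshold is not None:
        # Optional: when omitted, the service default of 0.7 applies.
        retrieval_config["dynamicThreshold"] = dynamic_threshold

    body = {
        "contents": [{"role": "user", "parts": [{"text": text}]}],
        "tools": [{"googleSearchRetrieval": {"dynamicRetrievalConfig": retrieval_config}}],
        "model": model_path,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",  # assumed auth scheme
            "Content-Type": "application/json; charset=utf-8",
        },
        method="POST",
    )


# Placeholder values only; replace them as described in the list above.
request = build_search_grounding_request(
    "PROJECT_ID", "LOCATION", "MODEL_ID", "TEXT", "ACCESS_TOKEN", dynamic_threshold=0.7)
print(request.full_url)
print(request.data.decode("utf-8"))
```

Passing the built request to `urllib.request.urlopen` would send it; the service then returns a JSON response.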


You should receive a JSON response similar to the following:

{

"candidates": [

{

"content": {

"role": "model",

"parts": [

{

"text": "Chicago weather changes rapidly, so layers let you adjust easily. Consider a base layer, a warm mid-layer (sweater-fleece), and a weatherproof outer layer."

}

]

},

"finishReason": "STOP",

"safetyRatings":[

"..."

],

"groundingMetadata": {

"webSearchQueries": [

"What's the weather in Chicago this weekend?"

],

"searchEntryPoint": {

"renderedContent": "....................."

},

"groundingSupports": [

{

"segment": {

"startIndex": 0,

"endIndex": 65,

"text": "Chicago weather changes rapidly, so layers let you adjust easily."

},

"groundingChunkIndices": [

0

],

"confidenceScores": [

0.99

]

}

],

"retrievalMetadata": {

"webDynamicRetrievalScore": 0.96879

}

}

}

],

"usageMetadata": { "..."

}

}
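The grounding fields in a response like the one above can be read with a short Python sketch. It assumes the response has already been parsed into a dictionary (for example with `json.loads`) and uses only the field names shown in the sample; the helper name `summarize_grounding` is hypothetical.

```python
def summarize_grounding(response: dict) -> list:
    """Collect the grounding details of each candidate in a parsed response."""
    summaries = []
    for candidate in response.get("candidates", []):
        # groundingMetadata may be absent when the answer was not grounded.
        metadata = candidate.get("groundingMetadata", {})
        summaries.append({
            "queries": metadata.get("webSearchQueries", []),
            "retrieval_score": metadata.get("retrievalMetadata", {}).get(
                "webDynamicRetrievalScore"),
            "supported_segments": [
                support.get("segment", {}).get("text", "")
                for support in metadata.get("groundingSupports", [])
            ],
        })
    return summaries


# A trimmed copy of the sample response shown above.
sample = {
    "candidates": [{
        "groundingMetadata": {
            "webSearchQueries": ["What's the weather in Chicago this weekend?"],
            "groundingSupports": [{
                "segment": {
                    "startIndex": 0,
                    "endIndex": 65,
                    "text": "Chicago weather changes rapidly, so layers let you adjust easily.",
                },
                "groundingChunkIndices": [0],
                "confidenceScores": [0.99],
            }],
            "retrievalMetadata": {"webDynamicRetrievalScore": 0.96879},
        },
    }],
}

for summary in summarize_grounding(sample):
    print(summary)
```

Every lookup uses `.get` with a default so that an ungrounded candidate yields empty lists and a `None` score instead of raising a `KeyError`.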

Console

To use Grounding with Google Search in Vertex AI Studio, follow these steps:

- In the Google Cloud console, go to the Vertex AI Studio page.

- Click the Freeform tab.

- In the side panel, click the Ground model responses toggle.

- Click Customize and set Google Search as the source.

- Enter your prompt in the text box and click Submit.

Your prompt responses now ground to Google Search.

Python

To learn how to install or update the Vertex AI SDK for Python, see Install the Vertex AI SDK for Python. For more information, see the Python API reference documentation.

Understand your response

If your model prompt successfully grounds to Google Search from the Vertex AI Studio or from the API, then the responses include metadata with source links (web URLs). However, there are several reasons this metadata might not be provided, and the prompt response won't be grounded. These reasons include low source relevance or incomplete information within the model's response.

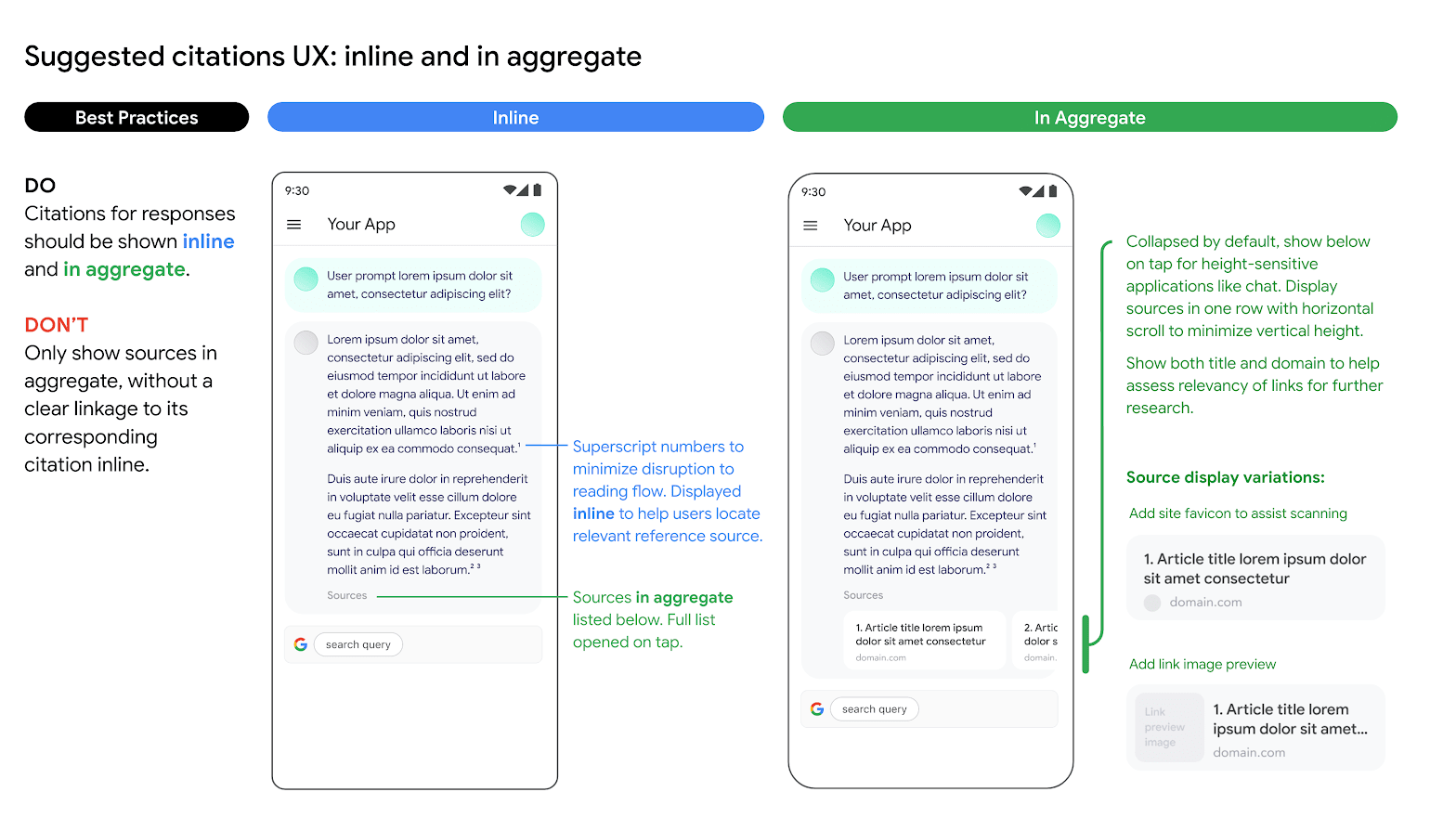

Citations

Displaying citations is highly recommended. They aid users in validating responses from publishers themselves and add avenues for further learning.

Citations for responses from Google Search sources should be shown both inline and in aggregate. See the following image as a suggestion on how to do this.
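One way to produce inline and aggregate citations from the grounding metadata is sketched below. It assumes that `startIndex` and `endIndex` can be used as character offsets into the response text (which holds for the ASCII sample on this page but is an assumption in general), and that you supply your own list of source labels; the helper names and the `sources` list are hypothetical.

```python
def add_inline_citations(text: str, supports: list) -> str:
    """Insert [n] markers right after each supported segment."""
    result = text
    # Work from the end of the text so earlier offsets stay valid.
    for support in sorted(supports, key=lambda s: s["segment"]["endIndex"], reverse=True):
        end = support["segment"]["endIndex"]
        # Chunk indices are zero-based; show them to readers as 1-based.
        marker = "".join(f"[{index + 1}]" for index in support["groundingChunkIndices"])
        result = result[:end] + marker + result[end:]
    return result


def aggregate_citations(supports: list, sources: list) -> list:
    """List every cited source once, in index order."""
    cited = sorted({index for s in supports for index in s["groundingChunkIndices"]})
    return [f"[{index + 1}] {sources[index]}" for index in cited if index < len(sources)]


# Text and support taken from the sample response on this page.
answer = ("Chicago weather changes rapidly, so layers let you adjust easily. "
          "Consider a base layer, a warm mid-layer (sweater-fleece), "
          "and a weatherproof outer layer.")
supports = [{"segment": {"startIndex": 0, "endIndex": 65}, "groundingChunkIndices": [0]}]
sources = ["SOURCE_LINK_0"]  # placeholder; use the links from your own response

print(add_inline_citations(answer, supports))
print("\n".join(aggregate_citations(supports, sources)))
```

Inserting markers from the last segment backwards avoids having to shift the remaining offsets after each insertion.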

Use of alternative search engine options

Customer's use of Grounding with Google Search does not prevent Customer from offering alternative search engine options, making alternative search options the default option for Customer Applications, or displaying their own or third-party search suggestions or search results in Customer Applications, provided that any such non-Google Search services or associated results are displayed separately from the Grounded Results and Search Suggestions and can't reasonably be attributed to, or confused with results provided by, Google.

Ground Gemini to your data

This section shows you how to ground text responses to a Vertex AI Agent Builder data store by using the Vertex AI API. You can't specify a website search data store as the grounding source.

Supported models

The following models support grounding:

- Gemini 1.5 Pro with text input only

- Gemini 1.5 Flash with text input only

- Gemini 1.0 Pro with text input only

Prerequisites

Before you can ground model output to your data, complete the following prerequisites:

- Enable Vertex AI Agent Builder and activate the API.

- Create a Vertex AI Agent Builder data source and app.

- Link your data store to your app in Vertex AI Agent Builder. The data source serves as the foundation for grounding Gemini 1.0 Pro in Vertex AI. You can't specify a website search data store as the grounding source.

- Enable Enterprise edition for your data store.

See the Introduction to Vertex AI Search for more.

Enable Vertex AI Agent Builder

In the Google Cloud console, go to the Agent Builder page.

Read and agree to the terms of service, then click Continue and activate the API.

Vertex AI Agent Builder is available in the global location, or the eu and us multi-region. To learn more, see Vertex AI Agent Builder locations.

Create a data store in Vertex AI Agent Builder

To ground your models to your source data, you need to have prepared and saved your data to Vertex AI Search. To do this, you need to create a data store in Vertex AI Agent Builder.

If you are starting from scratch, you need to prepare your data for ingestion into Vertex AI Agent Builder. See Prepare data for ingesting to get started. Depending on the size of your data, ingestion can take several minutes to several hours. Only unstructured data stores are supported for grounding.

After you've prepared your data for ingestion, you can Create a search data store. After you've successfully created a data store, Create a search app to link to it and Turn Enterprise edition on.

Example: Ground the Gemini 1.5 Flash model

Use the following instructions to ground a model with your own data.

If you don't know your data store ID, follow these steps:

In the Google Cloud console, go to the Vertex AI Agent Builder page and in the navigation menu, click Data stores.

Click the name of your data store.

On the Data page for your data store, get the data store ID. You can't specify a website search data store as the grounding source.

REST

To test a text prompt by using the Vertex AI API, send a POST request to the publisher model endpoint.

Before using any of the request data, make the following replacements:

- LOCATION: The region to process the request.

- PROJECT_ID: Your project ID.

- MODEL_ID: The model ID of the multimodal model.

- TEXT: The text instructions to include in the prompt.

- DATA_STORE_ID: The ID of your data store.

HTTP method and URL:

POST https://LOCATION-aiplatform.googleapis.com/v1beta1/projects/PROJECT_ID/locations/LOCATION/publishers/google/models/MODEL_ID:generateContent

Request JSON body:

{

"contents": [{

"role": "user",

"parts": [{

"text": "TEXT"

}]

}],

"tools": [{

"retrieval": {

"vertexAiSearch": {

"datastore": "projects/PROJECT_ID/locations/global/collections/default_collection/dataStores/DATA_STORE_ID"

}

}

}],

"model": "projects/PROJECT_ID/locations/LOCATION/publishers/google/models/MODEL_ID"

}
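The request body above can also be generated in Python, which avoids quoting mistakes in the data store path. This is a minimal sketch using only the standard library; the helper names `datastore_path` and `build_datastore_grounding_body` are hypothetical, and the path format is the one shown in the body above.

```python
import json


def datastore_path(project_id: str, data_store_id: str) -> str:
    """Return the full resource path of a data store in the global location."""
    return (f"projects/{project_id}/locations/global"
            f"/collections/default_collection/dataStores/{data_store_id}")


def build_datastore_grounding_body(project_id, location, model_id, text, data_store_id):
    """Build the generateContent body that grounds the answer in your data store."""
    return {
        "contents": [{"role": "user", "parts": [{"text": text}]}],
        "tools": [{
            "retrieval": {
                "vertexAiSearch": {
                    # The path is a JSON string, so it must be quoted when serialized.
                    "datastore": datastore_path(project_id, data_store_id),
                },
            },
        }],
        "model": (f"projects/{project_id}/locations/{location}"
                  f"/publishers/google/models/{model_id}"),
    }


# Placeholder values only; replace them as described in the list above.
body = build_datastore_grounding_body(
    "PROJECT_ID", "LOCATION", "MODEL_ID", "TEXT", "DATA_STORE_ID")
print(json.dumps(body, indent=2))
```

Posting this body to the URL shown above, with your usual authentication, returns a JSON response.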


You should receive a JSON response similar to the following:

{

"candidates": [

{

"content": {

"role": "model",

"parts": [

{

"text": "You can make an appointment on the website https://dmv.gov/"

}

]

},

"finishReason": "STOP",

"safetyRatings": [

"..."

],

"groundingMetadata": {

"retrievalQueries": [

"How to make appointment to renew driving license?"

],

"groundingChunks": [

{

"retrievedContext": {

"uri": "https://vertexaisearch.cloud.google.com/grounding-api-redirect/AXiHM.....QTN92V5ePQ==",

"title": "dmv"

}

}

],

"groundingSupport": [

{

"segment": {

"startIndex": 25,

"endIndex": 147

},

"segment_text": "ipsum lorem ...",

"supportChunkIndices": [1, 2],

"confidenceScore": [0.9541752, 0.97726375]

},

{

"segment": {

"startIndex": 294,

"endIndex": 439

},

"segment_text": "ipsum lorem ...",

"supportChunkIndices": [1],

"confidenceScore": [0.9541752, 0.9325467]

}

]

}

}

],

"usageMetadata": {

"..."

}

}
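To show users where a grounded answer came from, you can list the retrieved sources in the response. The sketch below assumes the response has been parsed into a dictionary and reads only the `groundingChunks` and `retrievedContext` fields shown in the sample; the helper name `list_sources` is hypothetical.

```python
def list_sources(response: dict) -> list:
    """Return (title, uri) pairs for every retrieved chunk in a parsed response."""
    sources = []
    for candidate in response.get("candidates", []):
        chunks = candidate.get("groundingMetadata", {}).get("groundingChunks", [])
        for chunk in chunks:
            context = chunk.get("retrievedContext")
            if context:  # skip chunks that carry no retrieved context
                sources.append((context.get("title", ""), context.get("uri", "")))
    return sources


# A trimmed copy of the sample response shown above (the URI is elided there too).
sample_response = {
    "candidates": [{
        "groundingMetadata": {
            "groundingChunks": [{
                "retrievedContext": {
                    "uri": "https://vertexaisearch.cloud.google.com/grounding-api-redirect/AXiHM.....QTN92V5ePQ==",
                    "title": "dmv",
                },
            }],
        },
    }],
}

for title, uri in list_sources(sample_response):
    print(f"{title}: {uri}")
```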

Understand the response that's grounded with Google Search

When a grounded result is generated, the metadata contains a URI that redirects to the publisher of the content that was used to generate the grounded result. The metadata also contains the publisher's domain. The provided URIs remain accessible for 30 days after the grounded result is generated.

Python

To learn how to install or update the Vertex AI SDK for Python, see Install the Vertex AI SDK for Python. For more information, see the Python API reference documentation.

Console

To ground your model output to Vertex AI Agent Builder by using Vertex AI Studio in the Google Cloud console, follow these steps:

- In the Google Cloud console, go to the Vertex AI Studio page.

- Click the Freeform tab.

- In the side panel, click the Ground model responses toggle to enable grounding.

- Click Customize and set Vertex AI Agent Builder as the source. The path should follow this format: projects/project_id/locations/global/collections/default_collection/dataStores/data_store_id.

- Enter your prompt in the text box and click Submit.

Your prompt responses now ground to Vertex AI Agent Builder.

More generative AI resources

- To learn how to send chat prompt requests, see Multiturn chat.

- To learn about responsible AI best practices and Vertex AI's safety filters, see Safety best practices.

- To learn how to ground the PaLM models, see Grounding in Vertex AI.