Grounding with Google Search

Use Grounding with Google Search when you want to connect the model to world knowledge, a wide range of topics, or up-to-date information from the internet.

You have to display a Google Search entry point when using this feature. To learn more about the requirements, see Google Search entry point.

To learn more about model grounding in Vertex AI, see the Grounding overview.

Supported models

The following models support grounding:

- Gemini 1.0 Pro

If you use Grounding with Google Search, we suggest setting the temperature to 0.0. To learn more about setting this configuration, see the Gemini API request body in the model reference.
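As a rough illustration, the temperature suggestion could be applied to a request body as follows. This sketch assumes the request body accepts a `generationConfig` object with a `temperature` field; the helper name `with_zero_temperature` is hypothetical and not part of any SDK.

```python
import copy
import json


def with_zero_temperature(body: dict) -> dict:
    """Return a copy of a generateContent request body with temperature 0.0.

    Assumption: the body accepts a "generationConfig" object with a
    "temperature" field. Check the model reference for the exact schema.
    """
    updated = copy.deepcopy(body)
    # setdefault keeps any other generation settings the caller already set.
    updated.setdefault("generationConfig", {})["temperature"] = 0.0
    return updated


if __name__ == "__main__":
    body = {"contents": [{"role": "user", "parts": [{"text": "Hello"}]}]}
    print(json.dumps(with_zero_temperature(body), indent=2))
```

The helper copies the body rather than mutating it, so the same base request can be reused with other settings.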

REST

Before using any of the request data, make the following replacements:

- LOCATION: The region to process the request.

- PROJECT_ID: Your project ID.

- MODEL_ID: The model ID of the multimodal model.

- MODEL: The full resource name of the model, for example projects/acme/locations/us-central1/publishers/google/models/gemini-pro.

- ROLE: The role in a conversation associated with the content. Specifying a role is required even in single-turn use cases. Acceptable values include the following: USER: Specifies content that's sent by you.

- TOOLS: The resource you're using to ground to. Use googleSearchRetrieval for grounding with Google Search.

- TEXT: The text instructions to include in the prompt.

HTTP method and URL:

POST https://LOCATION-prediction-aiplatform.googleapis.com/v1beta1/projects/PROJECT_ID/locations/LOCATION/publishers/google/models/MODEL_ID:generateContent

Request JSON body:

{
  "contents": [{
    "role": "user",
    "parts": [{
      "text": "TEXT"
    }]
  }],
  "tools": [{
    "googleSearchRetrieval": {}
  }],
  "model": "MODEL"
}
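The replacements and request body above can be assembled programmatically. The following is a minimal sketch using only the Python standard library; the function name `build_grounded_request` is hypothetical, the host name simply follows the URL template shown above, and the sketch stops short of sending the request because that requires credentials.

```python
import json


def build_grounded_request(location: str, project_id: str, model_id: str, text: str):
    """Return (url, body) for a generateContent call grounded with Google Search."""
    # Full model resource name, following the pattern used for MODEL above.
    model = (
        f"projects/{project_id}/locations/{location}"
        f"/publishers/google/models/{model_id}"
    )
    # URL built from the "HTTP method and URL" template above.
    url = (
        f"https://{location}-prediction-aiplatform.googleapis.com/v1beta1/"
        f"{model}:generateContent"
    )
    body = {
        "contents": [{"role": "user", "parts": [{"text": text}]}],
        # An empty googleSearchRetrieval object selects Google Search grounding.
        "tools": [{"googleSearchRetrieval": {}}],
        "model": model,
    }
    return url, body


if __name__ == "__main__":
    url, body = build_grounded_request(
        "us-central1", "acme", "gemini-pro", "What's the weather in Chicago?"
    )
    print("POST", url)
    print(json.dumps(body, indent=2))
```

Serializing with `json.dumps` guarantees that string values such as the prompt text are quoted and escaped correctly.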


You should receive a JSON response similar to the following:

{
  "candidates": [
    {
      "content": {
        "role": "model",
        "parts": [
          {
            "text": "Chicago's forecast: Today, March 1st, the weather is rainy, overcast, and chilly with highs in the low 50s. A jacket for the evenings is recommended, since the evenings will be closer to 30 degrees."
          }
        ]
      },
      "finishReason": "STOP",
      "safetyRatings": [
        "..."
      ],
      "groundingMetadata": {
        "webSearchQueries": [
          "What's the weather in Chicago this weekend and do I need to bring a coat?"
        ]
      }
    }
  ],
  "usageMetadata": {}
}
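A response shaped like the sample above could be unpacked as follows. This is a sketch that relies only on the fields visible in the sample (`candidates`, `content.parts[].text`, and `groundingMetadata.webSearchQueries`); the function name `extract_answer` is hypothetical.

```python
SAMPLE_RESPONSE = {
    "candidates": [
        {
            "content": {
                "role": "model",
                "parts": [
                    {
                        "text": "Chicago's forecast: Today, March 1st, the "
                        "weather is rainy, overcast, and chilly with highs "
                        "in the low 50s."
                    }
                ],
            },
            "finishReason": "STOP",
            "groundingMetadata": {
                "webSearchQueries": [
                    "What's the weather in Chicago this weekend and do I "
                    "need to bring a coat?"
                ]
            },
        }
    ],
    "usageMetadata": {},
}


def extract_answer(response: dict):
    """Return (text, search_queries) from the first candidate.

    Missing fields yield empty values instead of raising, because the
    grounding metadata is not guaranteed to be present.
    """
    candidates = response.get("candidates", [])
    if not candidates:
        return "", []
    first = candidates[0]
    parts = first.get("content", {}).get("parts", [])
    # Concatenate the text of all parts into a single answer string.
    text = "".join(part.get("text", "") for part in parts)
    queries = first.get("groundingMetadata", {}).get("webSearchQueries", [])
    return text, list(queries)


if __name__ == "__main__":
    text, queries = extract_answer(SAMPLE_RESPONSE)
    print(text)
    print(queries)
```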

Console

To use Grounding with Google Search in Vertex AI Studio, follow these steps:

- In the Google Cloud console, go to the Vertex AI Studio page.

- Click the Multimodal tab.

- Click Open to view the single prompt design page.

- In the side panel, click Advanced to view advanced settings.

- Click the Enable Grounding toggle.

- Click Customize and set Google Search as the source.

- Enter your prompt in the text box and click Submit.

Your prompt responses now ground to Google Search.

Python

To learn how to install or update the Vertex AI SDK for Python, see Install the Vertex AI SDK for Python. For more information, see the Python API reference documentation.

Understand your response

If your prompt successfully grounds to Google Search, whether from Vertex AI Studio or from the API, the responses include metadata with source links (web URLs). However, this metadata might be missing for several reasons, in which case the prompt response isn't grounded. These reasons include low source relevance or incomplete information within the model's response.
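Because the metadata can be absent, client code may want to tell grounded candidates apart from ungrounded ones before displaying them. The sketch below assumes that an absent or empty `groundingMetadata` object means the candidate was not grounded; that interpretation and both function names are assumptions for illustration.

```python
def is_grounded(candidate: dict) -> bool:
    """Return True if the candidate carries non-empty grounding metadata.

    Assumption: a missing or empty "groundingMetadata" object means the
    response was not grounded.
    """
    return bool(candidate.get("groundingMetadata"))


def split_by_grounding(response: dict):
    """Partition the response's candidates into (grounded, ungrounded) lists."""
    grounded, ungrounded = [], []
    for candidate in response.get("candidates", []):
        target = grounded if is_grounded(candidate) else ungrounded
        target.append(candidate)
    return grounded, ungrounded


if __name__ == "__main__":
    response = {
        "candidates": [
            {"groundingMetadata": {"webSearchQueries": ["weather in Chicago"]}},
            {"finishReason": "STOP"},
        ]
    }
    grounded, ungrounded = split_by_grounding(response)
    print(len(grounded), "grounded,", len(ungrounded), "ungrounded")
```

An application could use the ungrounded list to skip citation rendering or to show the answer without source links.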

Citations

Displaying citations is highly recommended. They help users validate responses against the publishers' own pages and offer avenues for further learning.

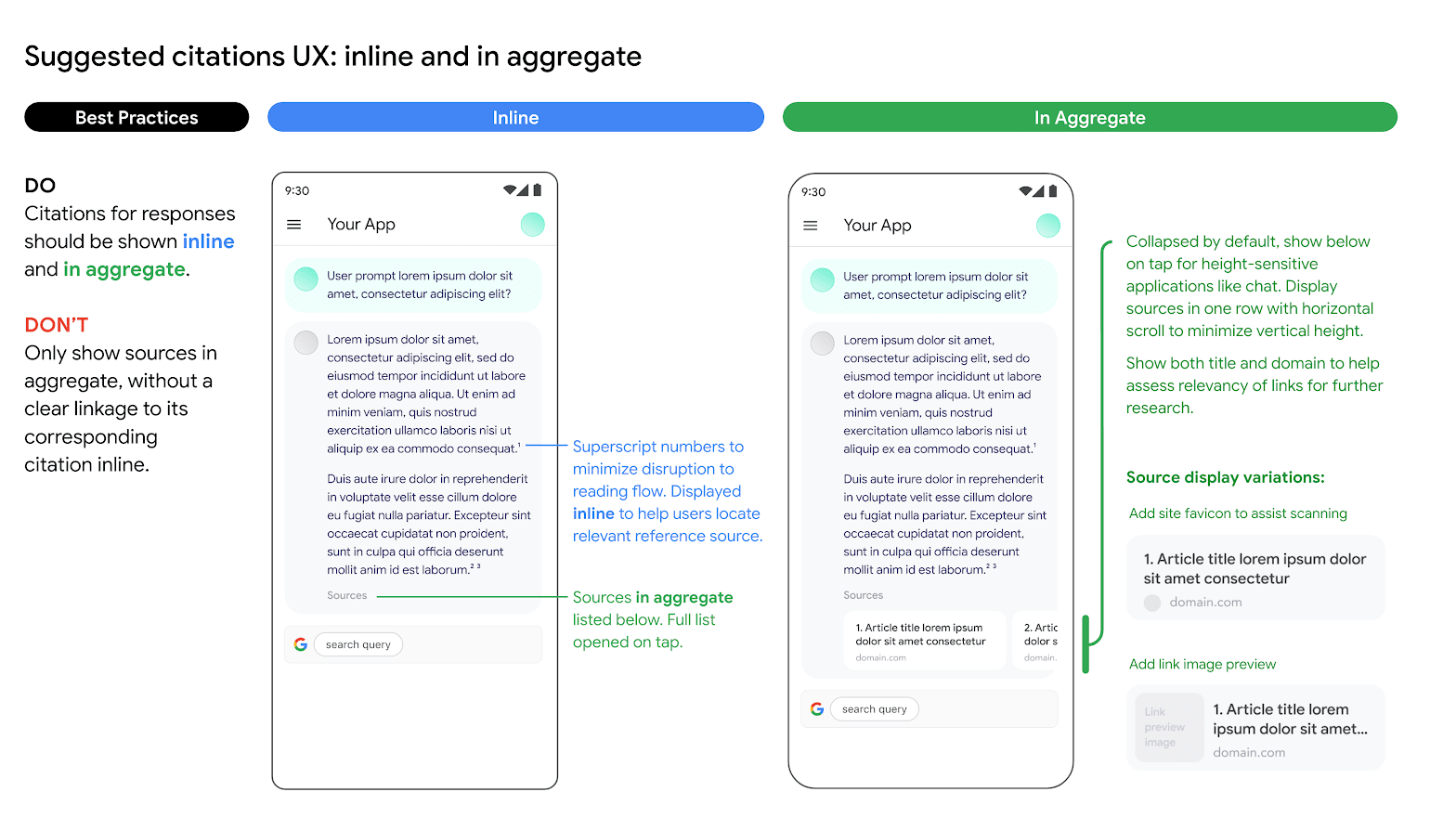

Citations for responses from Google Search sources should be shown both inline and in aggregate. See the following image as a suggestion on how to do this.
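One possible way to render citations both inline and in aggregate is sketched below. The input shape is hypothetical: each citation is a dict with an `end` character offset (where the supported text segment ends) and a `url`. It is not the API's response schema, so real grounding metadata would have to be mapped into this shape first.

```python
def render_citations(text: str, citations: list) -> str:
    """Insert numbered markers inline and append an aggregated source list.

    `citations` is a hypothetical structure: a list of dicts with "end"
    (int character offset into `text`) and "url" (str).
    """
    # Number each distinct URL once, in order of first appearance in the text.
    numbers = {}
    for citation in sorted(citations, key=lambda c: c["end"]):
        numbers.setdefault(citation["url"], len(numbers) + 1)

    # Insert markers from the end backwards so earlier offsets stay valid.
    rendered = text
    for citation in sorted(citations, key=lambda c: c["end"], reverse=True):
        marker = f" [{numbers[citation['url']]}]"
        end = citation["end"]
        rendered = rendered[:end] + marker + rendered[end:]

    # Aggregate view: one line per distinct source.
    sources = "\n".join(f"[{n}] {url}" for url, n in numbers.items())
    return f"{rendered}\n\nSources:\n{sources}"


if __name__ == "__main__":
    answer = "Rain is expected. Bring a coat."
    cites = [
        {"end": answer.index(".") + 1, "url": "https://example.com/forecast"},
        {"end": len(answer), "url": "https://example.com/advice"},
    ]
    print(render_citations(answer, cites))
```

Repeated URLs share one number, so the aggregated list stays short even when a source supports several segments.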

Use of alternative search engine options

Customer's use of Grounding with Google Search does not prevent Customer from offering alternative search engine options, making alternative search options the default option for Customer Applications, or displaying their own or third party search suggestions or search results in Customer Applications, provided that any such non-Google search services or associated results are displayed separately from the Grounded Results and Search Entry Points and cannot reasonably be attributed to, or confused with results provided by, Google.

Ground Gemini to your data

This section shows you how to ground Gemini 1.0 Pro text responses to a Vertex AI Search datastore by using the Vertex AI API.

The following models support grounding:

- Gemini 1.0 Pro

Before you can ground Gemini 1.0 Pro, complete the following prerequisites:

- Enable Vertex AI Search and activate the API.

- Create a Vertex AI Search data source and app.

- Link your datastore to your app in Vertex AI Search. The data source serves as the foundation for grounding Gemini 1.0 Pro in Vertex AI.

- Enable Enterprise edition for your datastore.

See the Introduction to Vertex AI Search for more.

Enable Vertex AI Search

In the Google Cloud console, go to the Search & Conversation page.

Read and agree to the terms of service, then click Continue and activate the API.

Vertex AI Search is available in the global location, or the eu and us multi-region. To learn more, see Vertex AI Search locations.

Create a datastore in Vertex AI Search

To ground your models to your source data, you need to have prepared and saved your data to Vertex AI Search. To do this, you need to create a data store in Vertex AI Search.

If you are starting from scratch, you need to prepare your data for ingestion into Vertex AI Search. See Prepare data for ingesting to get started. Depending on the size of your data, ingestion can take several minutes to several hours. Only unstructured data stores are supported for grounding.

After you've prepared your data for ingestion, create a search data store. After you've successfully created the data store, create a search app to link to it and turn on Enterprise edition.

Ground the Gemini 1.0 Pro model

If you don't know your datastore ID, follow these steps:

- In the Google Cloud console, go to the Vertex AI Search page and in the navigation menu, click Data stores.

- Click the name of your datastore.

- On the Data page for your datastore, get the datastore ID.

REST

To test a text prompt by using the Vertex AI API, send a POST request to the publisher model endpoint.

PROJECT_ID=PROJECT_ID

curl -X POST \
  -H "Authorization: Bearer $(gcloud auth print-access-token)" \
  -H "Content-Type: application/json" \
  "https://us-central1-aiplatform.googleapis.com/v1beta1/projects/PROJECT_ID/locations/us-central1/publishers/google/models/gemini-1.0-pro:generateContent" \
  -d '{
    "contents": [{
      "role": "user",
      "parts": [{
        "text": "TEXT"
      }]
    }],
    "model": "projects/PROJECT_ID/locations/us-central1/publishers/google/models/gemini-1.0-pro",
    "tools": [{
      "retrieval": {
        "vertexAiSearch": {
          "datastore": "projects/PROJECT_ID/locations/global/collections/default_collection/dataStores/DATA_STORE_ID"
        }
      }
    }]
  }'
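The same request body can be generated in code, which avoids quoting mistakes in hand-written JSON. The following sketch mirrors the fields of the curl example using only the standard library; the function names are hypothetical, and the region and model are fixed to the values in the example above.

```python
import json


def datastore_path(project_id: str, data_store_id: str) -> str:
    """Build the Vertex AI Search datastore resource name used in the request."""
    return (
        f"projects/{project_id}/locations/global/collections/"
        f"default_collection/dataStores/{data_store_id}"
    )


def build_datastore_request(project_id: str, data_store_id: str, text: str) -> dict:
    """Return a generateContent body grounded on a Vertex AI Search datastore."""
    return {
        "contents": [{"role": "user", "parts": [{"text": text}]}],
        # Region and model match the curl example above.
        "model": (
            f"projects/{project_id}/locations/us-central1"
            "/publishers/google/models/gemini-1.0-pro"
        ),
        "tools": [
            {
                "retrieval": {
                    "vertexAiSearch": {
                        "datastore": datastore_path(project_id, data_store_id)
                    }
                }
            }
        ],
    }


if __name__ == "__main__":
    body = build_datastore_request("my-project", "my-datastore", "Summarize our policy.")
    print(json.dumps(body, indent=2))
```

The printed JSON can be saved to a file and passed to curl with `-d @request.json`.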

Python

To learn how to install or update the Vertex AI SDK for Python, see Install the Vertex AI SDK for Python. For more information, see the Python API reference documentation.

Console

To ground your model output to Vertex AI Search by using Vertex AI Studio in the Google Cloud console, follow these steps:

- In the Google Cloud console, go to the Vertex AI Studio page.

- Click the Language tab.

- Click Text prompt to view the single prompt design page.

- In the side panel, click Advanced to view advanced settings.

- Click the Enable Grounding toggle to enable grounding.

- Click Customize and set Vertex AI Search as the source. The path should follow this format: projects/project_id/locations/global/collections/default_collection/dataStores/data_store_id.

- Enter your prompt in the text box and click Submit.

Your prompt responses now ground to Vertex AI Search.
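Before pasting a datastore path into the console or a request, it can help to check that it follows the format given in the steps above. The sketch below validates the path with a regular expression; the function name `parse_datastore_path` is hypothetical, and the pattern accepts only the `global` location and `default_collection` because that is the format the steps specify.

```python
import re

# Mirrors the documented format:
# projects/project_id/locations/global/collections/default_collection/dataStores/data_store_id
_DATASTORE_PATH = re.compile(
    r"projects/(?P<project_id>[^/]+)/locations/global/"
    r"collections/default_collection/dataStores/(?P<data_store_id>[^/]+)"
)


def parse_datastore_path(path: str) -> dict:
    """Return the project and datastore IDs, or raise ValueError if malformed."""
    match = _DATASTORE_PATH.fullmatch(path)
    if match is None:
        raise ValueError(f"Not a valid datastore path: {path!r}")
    return match.groupdict()


if __name__ == "__main__":
    example = (
        "projects/my-project/locations/global/collections/"
        "default_collection/dataStores/my-datastore"
    )
    print(parse_datastore_path(example))
```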

What's next

- Learn how to send chat prompt requests.

- Learn about responsible AI best practices and Vertex AI's safety filters.

- To learn how to ground the PaLM models, see Grounding in Vertex AI.