Year in review: smart analytics makes great strides

Sudhir Hasbe

Sr. Director of Product Management, Google Cloud

2019 was an incredible year for us, and I had the opportunity to meet so many of our customers, partners, and industry analysts. It has been truly inspiring to see how our customers and partners are developing analytics solutions and using data insights to solve some of their most complex business challenges.

We were inspired by HSBC tackling their fast-growing volumes of data, and by Otto Group migrating their on-premises Hadoop data lake to Google Cloud. MoneySuperMarket shifted their on-prem analytics to the cloud to run bigger tasks faster and serve customers even better. And S4 Agtech described how they're using smart cloud analytics to de-risk crop production for farmers and innovate faster.

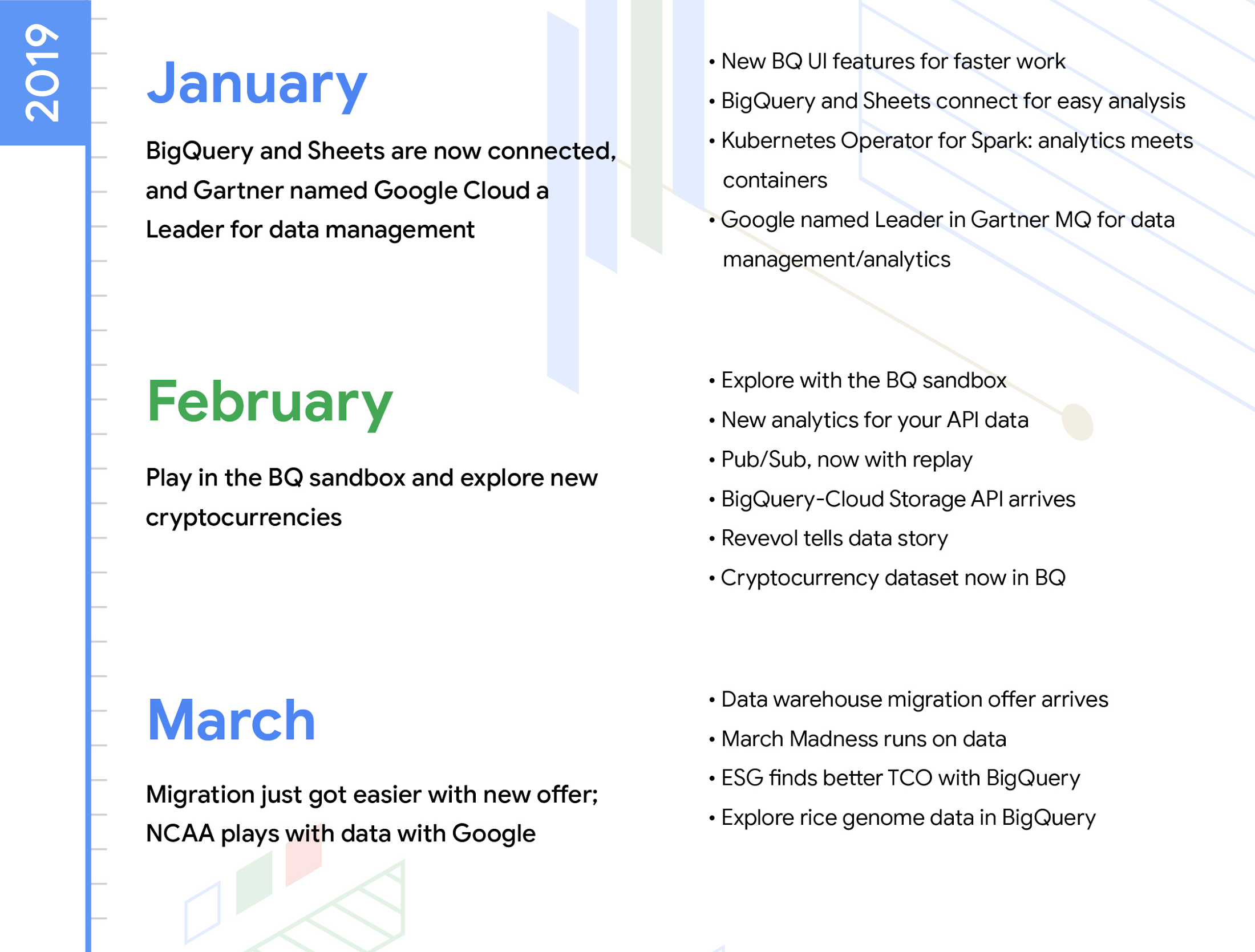

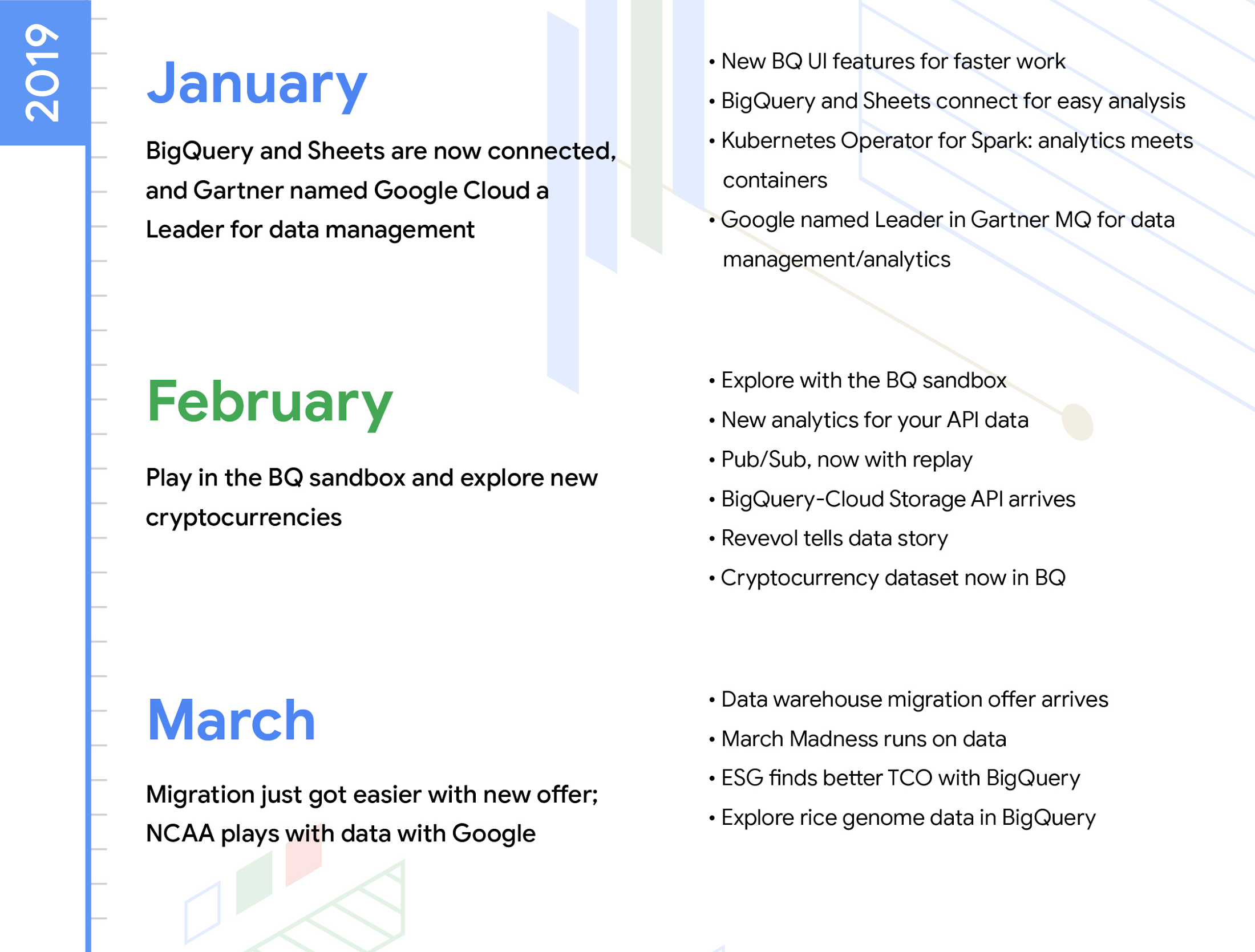

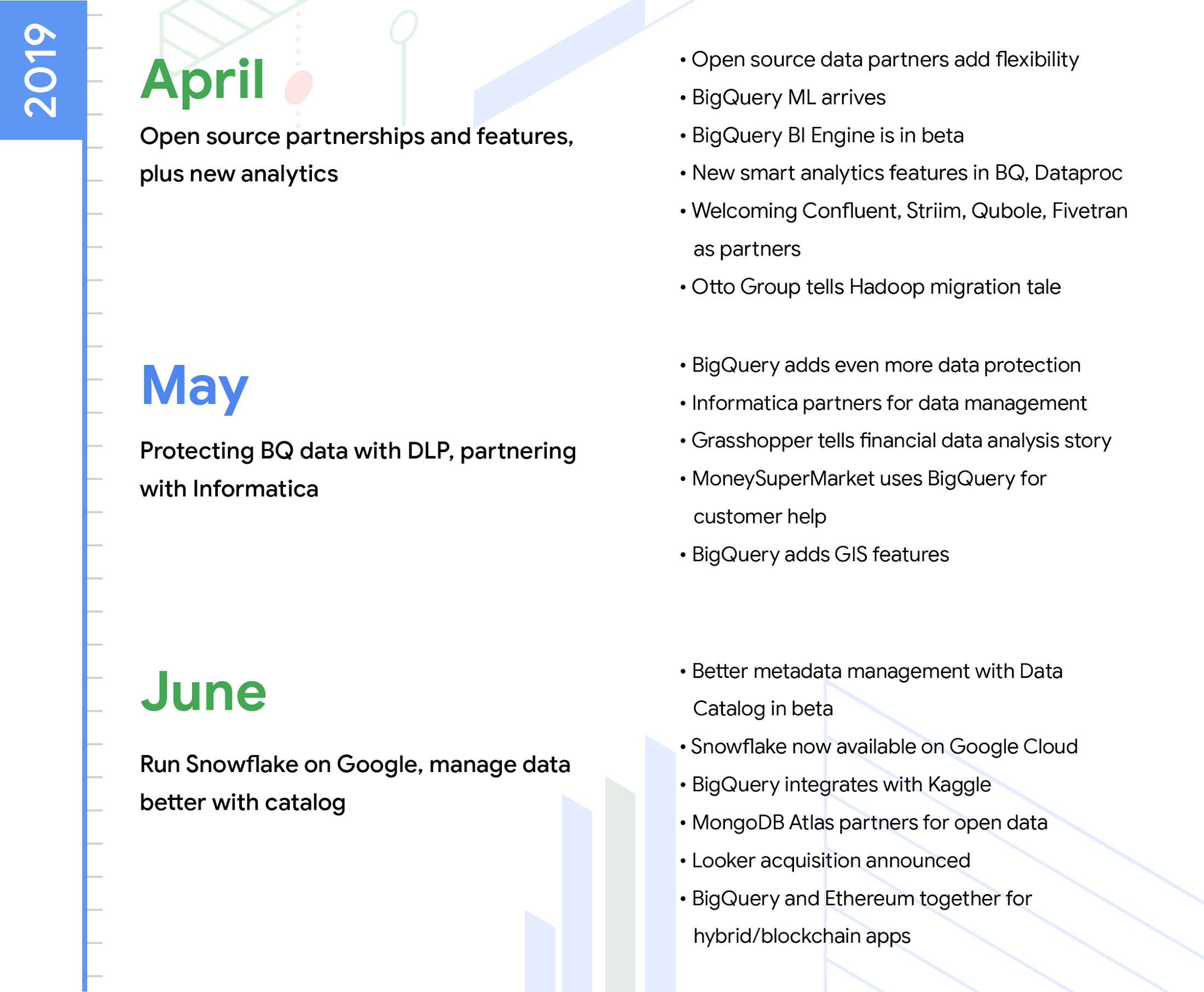

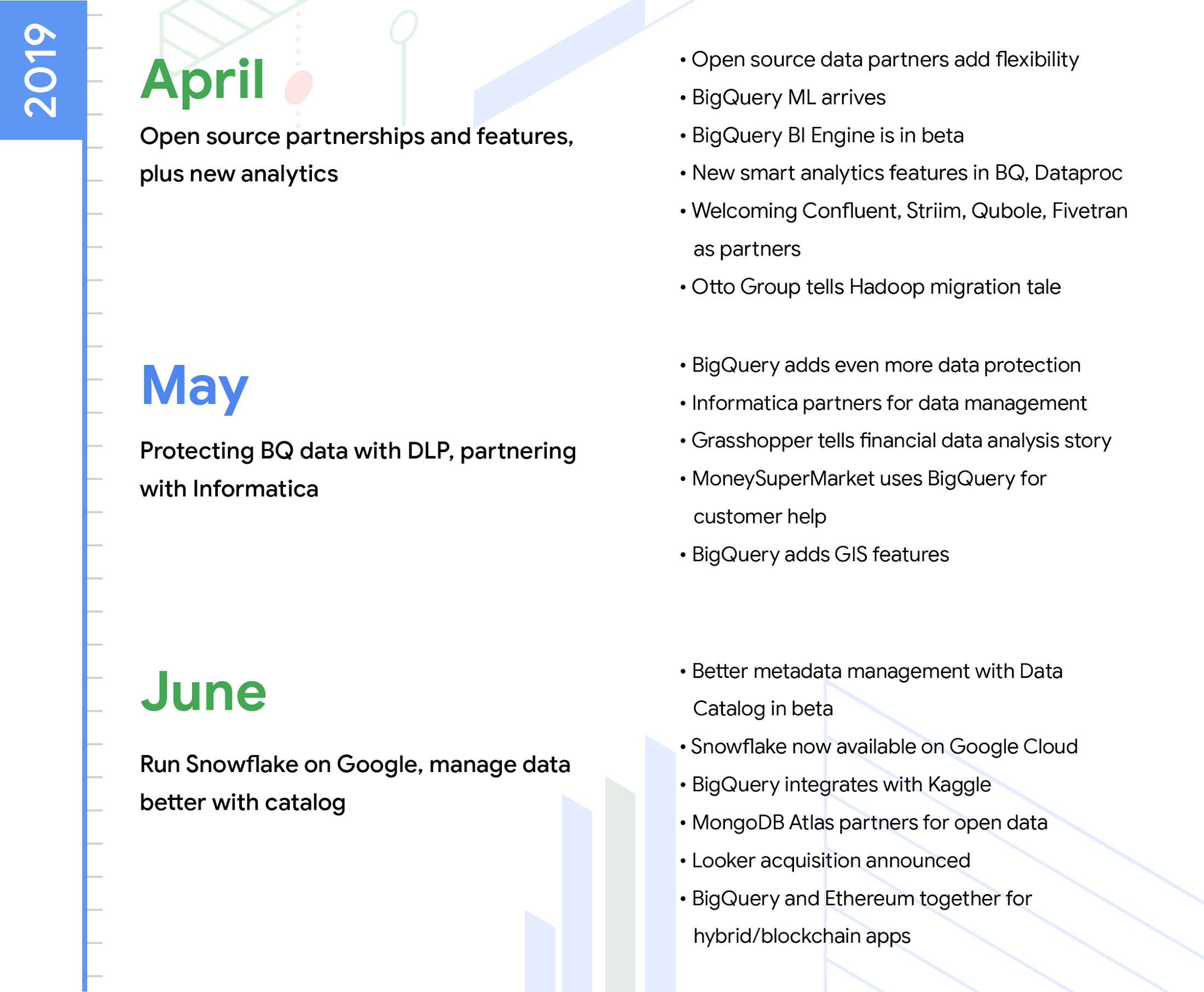

We’ve learned a lot about what’s important to you, and we put that information to good use as we continue investing in our smart analytics platform. Our years working with distributed computing and data analytics at Google have led us to consider a lot of factors when we design and build our cloud products. We are investing in building a radically simple, serverless data analytics platform that offers any user the ability to perform real-time and predictive analytics on any data without breaking the bank. The platform is open and multi-cloud by design, and it’s letting enterprises and cloud-native organizations accelerate their digital transformations with the flexibility and the choice that they need. We launched more than 100 new capabilities for smart analytics in 2019. Here are the highlights from our 2019 launches!

Data warehousing

Our customers are building highly scalable enterprise data warehouses on the smart analytics platform. This year, we focused on three major areas for the data warehousing solution:

Seamless modernization: One of our main focus areas was making sure you can apply Google Cloud's step-by-step migration framework to modernize legacy data warehouses with minimal friction. We launched the BigQuery Data Transfer Service for Teradata and Amazon Redshift to help organizations like John Lewis Partnership move their data, schema, and workloads to BigQuery more seamlessly. Recently, Enterprise Strategy Group released a report finding that BigQuery can provide a three-year TCO that is 26% to 34% lower than cloud data warehouse alternatives. We also added support for scripting and stored procedures, so it's easier for you to operationalize that kind of logic in BigQuery. Plus, you can take advantage of the data warehouse migration offer to accelerate your migration process.

Ease of use: We also launched 100+ partner SaaS connectors for BigQuery, making it easier for business analysts to move data from business applications into the warehouse for analysis. The new BigQuery user interface and the new query federation functionality make it even easier for you to analyze data in Bigtable, Cloud Storage, Cloud SQL, and Google Sheets. Additionally, we announced BigQuery Reservations, a self-service way to take advantage of BigQuery flat-rate pricing. Reservations makes it even simpler to plan your spending, and it adds flexibility and visibility to your data analytics use cases.

Intelligent insights: Our continued investments in BigQuery ML and BigQuery GIS mean that data analysts and data scientists can do advanced machine learning and geospatial analytics right within the warehouse. This year, we announced BigQuery ML support for clustering and classification models, as well as native support for importing TensorFlow models.
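To make "machine learning right within the warehouse" a little more concrete, here is a minimal sketch of what training a clustering model in BigQuery ML can look like. It only assembles a `CREATE MODEL` statement using the `kmeans` model type and the `num_clusters` option; the helper name `build_kmeans_model_sql` and all project, dataset, table, and column names are hypothetical placeholders, not part of any Google Cloud API.

```python
from __future__ import annotations


def build_kmeans_model_sql(model_id: str, source_table: str,
                           feature_columns: list[str], num_clusters: int) -> str:
    """Return a BigQuery ML statement that trains a k-means clustering model.

    Hypothetical helper for illustration: it builds SQL text only and does not
    contact BigQuery.
    """
    # k-means needs at least two clusters to be meaningful.
    if num_clusters < 2:
        raise ValueError("num_clusters must be at least 2")
    if not feature_columns:
        raise ValueError("at least one feature column is required")
    columns = ",\n  ".join(feature_columns)
    return (
        f"CREATE OR REPLACE MODEL `{model_id}`\n"
        f"OPTIONS(model_type = 'kmeans', num_clusters = {num_clusters}) AS\n"
        f"SELECT\n  {columns}\n"
        f"FROM `{source_table}`"
    )


if __name__ == "__main__":
    # Hypothetical names: a customer table segmented into four clusters.
    print(build_kmeans_model_sql(
        "my-project.analytics.customer_segments",
        "my-project.analytics.customers",
        ["total_spend", "visits_per_month"],
        num_clusters=4,
    ))
```

The generated statement could then be submitted with the `google-cloud-bigquery` client, for example `bigquery.Client().query(sql).result()`, after which `ML.PREDICT` assigns each new row to a cluster. Keeping the training step as plain SQL is the point: the data never leaves the warehouse.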

We were also excited that analyst firm Gartner recognized BigQuery as a leader in their 2019 Data Management Solutions for Analytics (DMSA) Magic Quadrant.

Streaming analytics

In 2019, we invested in making streaming data analytics simpler to use, more scalable, and more intelligent. Our customers are using Dataflow, Pub/Sub, and BigQuery to build real-time analytics solutions on large-scale streaming data. Gaming companies like Unity Technologies are able to personalize their user experience in real time, and financial services companies like Dow Jones can do real-time financial assessments and data aggregations using Google Cloud's stream analytics solution.

With the Dataflow SQL launch, we opened up real-time streaming data analytics to millions of data analysts and developers, allowing more people to analyze large-scale streaming data with simple SQL. With the general availability (GA) of Dataflow Streaming Engine, the architectural benefit of separating compute from state storage means you can deploy more responsive, efficient, and supportable streaming pipelines. Additionally, the BigQuery team completely redesigned the streaming back end to increase the default Streaming API quota by a factor of 10, from 100,000 to 1,000,000 rows per second per project, so you can build massively scalable streaming analytics solutions. And analyst firm Forrester recognized Google Cloud as a leader in its 2019 Wave evaluation of streaming analytics.
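The quota figure above is easiest to reason about in terms of request volume. The sketch below assumes rows are streamed in batches of 500 rows per insert request; that batch size is an assumption chosen for illustration, not a quoted BigQuery limit. It splits rows into batches and checks a target ingest rate against the 1,000,000 rows-per-second default quota cited above. The helper names are hypothetical.

```python
from __future__ import annotations

import math
from typing import Iterator

# Default per-project streaming quota cited in the post (rows per second).
STREAMING_QUOTA_ROWS_PER_SEC = 1_000_000
# Assumption for illustration: rows are sent in batches of 500 per request.
ROWS_PER_REQUEST = 500


def batch_rows(rows: list[dict], batch_size: int = ROWS_PER_REQUEST) -> Iterator[list[dict]]:
    """Yield consecutive slices of at most batch_size rows."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(rows), batch_size):
        yield rows[start:start + batch_size]


def requests_per_second(rows_per_sec: int, batch_size: int = ROWS_PER_REQUEST) -> int:
    """Insert requests per second needed to sustain rows_per_sec."""
    return math.ceil(rows_per_sec / batch_size)


def within_quota(rows_per_sec: int, quota: int = STREAMING_QUOTA_ROWS_PER_SEC) -> bool:
    """True if a target ingest rate fits under the per-project quota."""
    return rows_per_sec <= quota


if __name__ == "__main__":
    events = [{"event_id": i} for i in range(1_234)]
    print([len(b) for b in batch_rows(events)])
    print(requests_per_second(1_000_000), within_quota(1_000_000))
    # The old 100,000 rows/sec default would not have covered this rate.
    print(within_quota(1_000_000, quota=100_000))
```

In a real pipeline, each batch could be handed to `client.insert_rows_json(table_id, batch)` from the `google-cloud-bigquery` library, which returns a list of per-row errors (empty on success). At the assumed batch size, the full default quota corresponds to 2,000 requests per second per project.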

Data lake

Enterprises around the world, from Twitter and Pandora to Vodafone Group, are moving their data lakes to Google Cloud to lower TCO, unlock scale, and open up new analytics possibilities. In 2019, we continued to blend the best of open source and Google Cloud to securely modernize data lakes across industries. This year, we made significant investments and launched many new features, with highlights in three areas: hybrid and multi-cloud, security, and user access.

Hybrid and multi-cloud: We launched Dataproc on Kubernetes (in alpha), so those of you using Apache Spark can build Spark jobs and deploy them on Google Kubernetes Engine (GKE), wherever GKE might live. By deploying new Spark-based analytics and data pipelines on Kubernetes, Dataproc users can now build once and deploy anywhere without worrying about downstream tech stack dependencies.

Security: In 2019, we launched multiple security improvements, including Kerberos and Hadoop secure mode (GA). This helps improve the overall security of Google’s data lake solution while making it easier for enterprises to migrate existing security controls from on-prem Hadoop-based data lakes to the cloud.

User access: SQL continues to be the language of choice for data analysts looking to access and analyze data lake information. By extending BigQuery federated queries to include open file formats like Parquet and ORC, you can now access the data lake from BigQuery and downstream BI applications. The new BigQuery Storage API breaks down data silos by making it easy for Dataproc users to run blazing fast Spark jobs against BigQuery data. 2019 marked the onset of a new era, in which data warehouses and data lakes are truly starting to converge.

Business intelligence

A key part of our vision in smart analytics is to enable analysts to perform enterprise-class, interactive analytics at scale without compromising data freshness or speed. In this regard, 2019 was a monumental year for BI at Google Cloud. Early in the year at Next, we launched BigQuery BI Engine, a new column-oriented, in-memory feature of BigQuery that’s helping customers like AirAsia, Vendasta, Zalando and many more democratize insights. The new feature enables higher concurrency and subsecond responsiveness for interactive dashboarding and reporting for data in BigQuery.

Earlier this year, we also announced our intent to acquire Looker to round out our already powerful smart analytics portfolio with a platform for enterprise BI, tailored data applications, and embedded analytics. Finally, when it comes to democratizing data and insights, there is no more ubiquitous interface for working with data than the spreadsheet. Just last month at Next UK, we announced beta availability of a new feature in Sheets, called connected sheets, that lets you analyze and collaborate on billions of rows of BigQuery data right from within Sheets (without needing SQL!) using standard pivot tables, charts, and functions. Along with the scalability and performance of BigQuery, connected sheets was one of the key reasons HSBC chose to migrate their analytics workloads to Google Cloud.

Data governance and security

In 2019, we invested deeply in data governance, data discovery, and data security. First, we announced Cloud Data Catalog at Next, which takes design inspiration from how we catalog data internally at Google. Go-Jek, Sky, and many other customers are using Data Catalog's API and UI to enable governed data discovery and metadata management across their organizations. Taking a few pages from Google's two decades of data governance, we published a new whitepaper, Principles and Best Practices for Data Governance in the Cloud, to help you on your secure cloud journey. We also announced strategic partnerships with Collibra and Informatica to bring unified data discovery experiences to hybrid and multi-cloud scenarios, as well as Data Catalog integrations with Tableau and Looker. Finally, we're continually investing in simple-to-use, robust data security and privacy controls to help thwart the increasingly sophisticated cyber attacks many organizations contend with every day.

Data integration

As our customers continue to operate in a multi-cloud world, it's a priority for us to make sure that data engineers and data analysts can more easily bring in data from a variety of applications and systems. In April this year, we announced Data Fusion, a fully managed, code-free data integration service, which is now generally available. Data Fusion equips developers, data engineers, and business analysts to easily build and manage data pipelines that cleanse, transform, and blend data from a broad range of sources. Data Fusion shifts an organization's focus away from code and integration and toward insights and action. Built on the open source project CDAP, the product's open core offers portability for users across hybrid and multi-cloud environments. CDAP's broad integration with on-premises and public cloud platforms gives Data Fusion users like Vodafone Group the ability to break down silos and deliver more value through Google's industry-leading big data tools.

Learn more about all of the solutions that make up smart analytics at Google Cloud. We can’t wait to see what you build next with our smart analytics platform in 2020.