This page describes how to get online (single, low-latency) predictions from AutoML Tables.

Introduction

After you have created (trained) a model, you can deploy the model and request online (real-time) predictions. Online predictions accept one row of data and provide a predicted result based on your model for that data. You use online predictions when you need a prediction as input for your business logic flow.

Before you can request an online prediction, you must deploy your model. Deployed models incur charges. When you are finished making online predictions, you can undeploy your model to avoid further deployment charges. Learn more.

Models must be retrained every six months so that they can continue to serve predictions.

Getting an online prediction

Console

Generally, you use online predictions to get predictions from within your business applications. However, you can use AutoML Tables in the Google Cloud console to test your data format or your model with a specific set of input.

Visit the AutoML Tables page in the Google Cloud console.

Select Models and select the model that you want to use.

Select the Test & Use tab and click Online prediction.

If your model is not yet deployed, deploy it now by clicking Deploy model.

Your model must be deployed to use online predictions. Deploying your model incurs costs. For more information, see the pricing page.

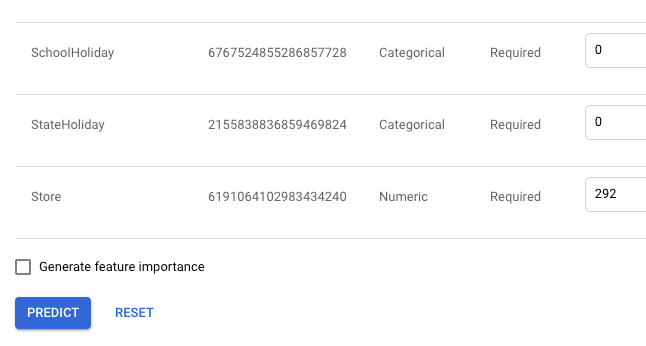

Provide your input values in the text boxes provided.

Alternatively, you can select JSON Code View to provide your input values in JSON format.

If you want to see how each feature impacted the prediction, select Generate feature importance.

The Google Cloud console truncates the local feature importance values for readability. If you need an exact value, use the Cloud AutoML API to make the prediction request.

For information about feature importance, see Local feature importance.

Click Predict to get your prediction.

For information about interpreting your prediction results, see Interpreting your prediction results. For information about local feature importance, see Local feature importance.

(Optional) If you do not plan to request more online predictions, you can undeploy your model to avoid deployment charges by clicking Undeploy model.

curl

You request a prediction for a set of values by

creating your JSON object with your feature values, and then using the

model.predict method to get the prediction.

The values must contain the exact columns you included in training, and they must be in the same order as shown on the Evaluate tab by clicking on the included columns link.

If you want to reorder the values, you can optionally include a set of column spec IDs in the order of the values. You can get the column spec IDs from your model object; they are found in the TablesModelMetadata.inputFeatureColumnSpecs field.

The data type of each value (feature) in the Row object depends on the AutoML Tables data type of the feature. For a list of accepted data types by AutoML Tables data type, see Row object format.

If you haven't deployed your model yet, deploy it now. Learn more.

Request the prediction.

Before using any of the request data, make the following replacements:

-

endpoint:

automl.googleapis.comfor the global location, andeu-automl.googleapis.comfor the EU region. - project-id: your Google Cloud project ID.

- location: the location for the resource:

us-central1for Global oreufor the European Union. - model-id: the ID of the model. For example,

TBL543. - valueN: the values for each column, in the correct order.

HTTP method and URL:

POST https://endpoint/v1beta1/projects/project-id/locations/location/models/model-id:predict

Request JSON body:

{ "payload": { "row": { "values": [ value1, value2,... ] } } }To send your request, choose one of these options:

To include local feature importance results, include thecurl

Save the request body in a file named

request.json, and execute the following command:curl -X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "x-goog-user-project: project-id" \

-H "Content-Type: application/json; charset=utf-8" \

-d @request.json \

"https://endpoint/v1beta1/projects/project-id/locations/location/models/model-id:predict"PowerShell

Save the request body in a file named

request.json, and execute the following command:$cred = gcloud auth print-access-token

$headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "project-id" }

Invoke-WebRequest `

-Method POST `

-Headers $headers `

-ContentType: "application/json; charset=utf-8" `

-InFile request.json `

-Uri "https://endpoint/v1beta1/projects/project-id/locations/location/models/model-id:predict" | Select-Object -Expand Contentfeature_importanceparameter. For more information about local feature importance, see Local feature importance.-

endpoint:

View your results.

For a classification model, you should see output similar to the following example. Note that two results are returned, each with a confidence estimate (

score). The confidence estimate is between 0 and 1, and shows how likely the model thinks this is the correct prediction value. For more information about how to use the confidence estimate, see Interpreting your prediction results.{ "payload": [ { "tables": { "score": 0.11210235, "value": "1" } }, { "tables": { "score": 0.8878976, "value": "2" } } ] }For a regression model, the results include a prediction value and a prediction interval. The prediction interval provides a range that includes the true value 95% of the time (based on the data that the model was trained on). Note that the predicted value might not be centered in the interval (it might even fall outside the interval), because the prediction interval is centered around the median, whereas the predicted value is the expected value (or mean).

{ "payload": [ { "tables": { "value": 207.18209838867188, "predictionInterval": { "start": 29.712770462036133, "end": 937.42041015625 } } } ] }For information about local feature importance results, see Local feature importance.

(Optional) If you are finished requesting online predictions, you can undeploy your model to avoid deployment charges. Learn more.

Java

If your resources are located in the EU region, you must explicitly set the endpoint. Learn more.

Node.js

If your resources are located in the EU region, you must explicitly set the endpoint. Learn more.

Python

The client library for AutoML Tables includes additional Python methods that simplify using the AutoML Tables API. These methods refer to datasets and models by name instead of id. Your dataset and model names must be unique. For more information, see the Client reference.

If your resources are located in the EU region, you must explicitly set the endpoint. Learn more.

What's next

- Learn how to interpret your prediction results.

- Learn about local feature importance.