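The Vision API can provide online (immediate) annotation of multiple pages or frames from PDF, TIFF, or GIF files stored in Cloud Storage.

For each file, you can request online feature detection and annotation of up to five frames (GIF; 'image/gif') or pages (PDF; 'application/pdf', or TIFF; 'image/tiff') of your choosing.

The example annotations on this page are for DOCUMENT_TEXT_DETECTION, but small batch online annotation is available for all Vision features.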

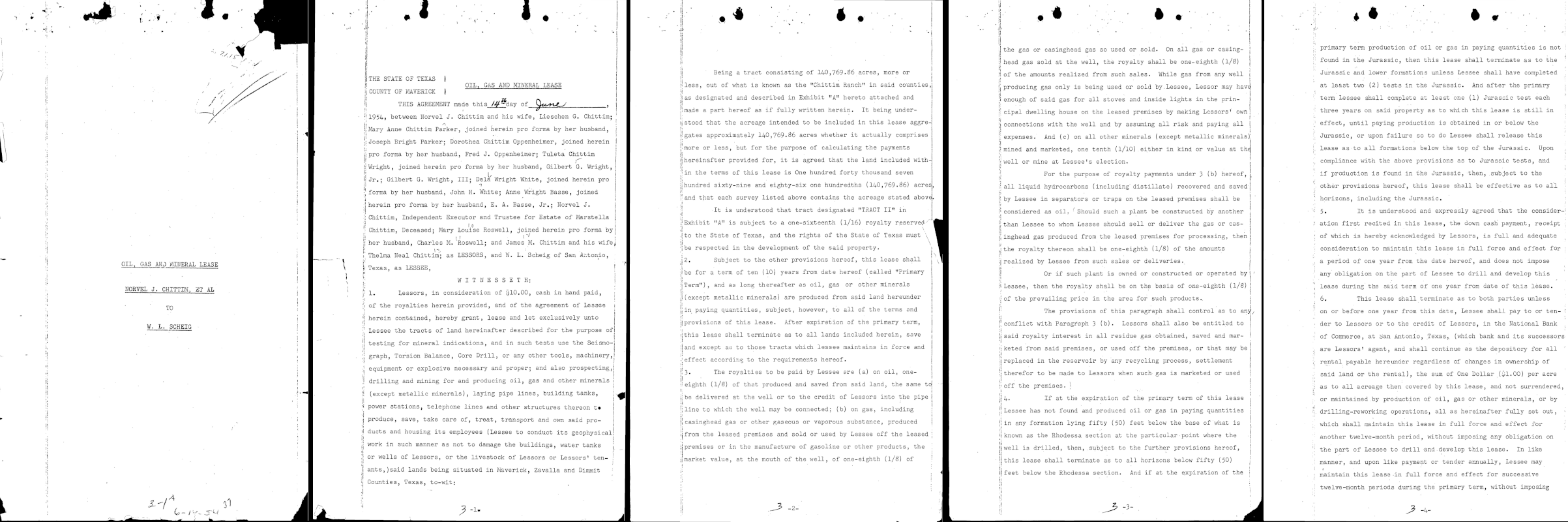

Page 1

... "text": "á\n7.1.15\nOIL, GAS AND MINERAL LEASE \nNORVEL J. CHITTIM, ET AL\n.\n. \nTO\nW. L. SCHEIG\n" }, "context": {"pageNumber": 1} ... |

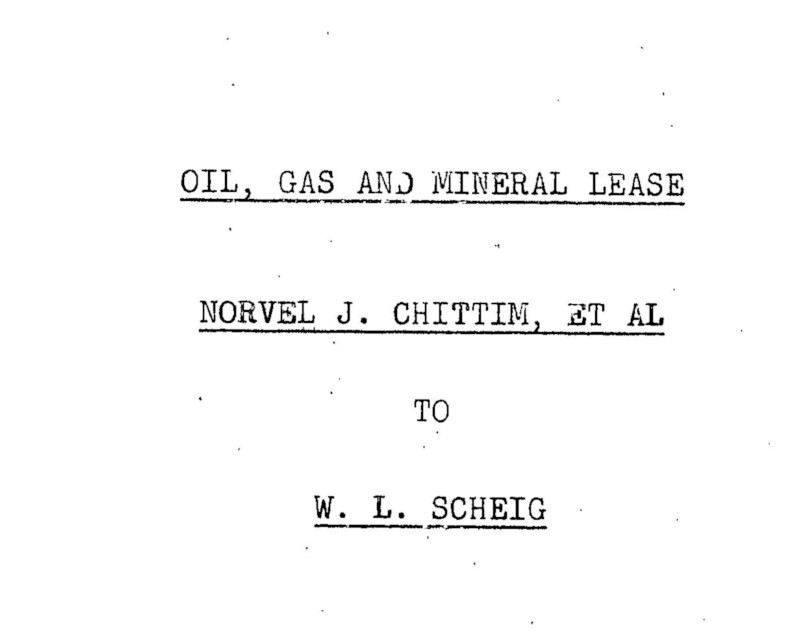

Page 2

... "text": "...\n.\n*\n.\n.\n.\nA\nNY\nALA...\n7 \n| THE STATE OF TEXAS \nOIL, GAS AND MINERAL LEASE \nCOUNTY OF MAVERICK ] \nTHIS AGREEMENT made this 14 day of_June \n1954, between Norvel J. Chittim and his wife, Lieschen G. Chittim; \nMary Anne Chittim Parker, joined herein pro forma by her husband, \nJoseph Bright Parker; Dorothea Chittim Oppenheimer, joined herein \npro forma by her husband, Fred J. Oppenheimer; Tuleta Chittim \nWright, joined herein pro forma by her husband, Gilbert G. Wright, \nJr.; Gilbert G. Wright, III; Dela Wright White, joined herein pro \nforma by her husband, John H. White; Anne Wright Basse, joined \nherein pro forma by her husband, E. A. Basse, Jr.; Norvel J. \nChittim, Independent Executor and Trustee for Estate of Marstella \nChittim, Deceased; Mary Louise Roswell, joined herein pro forma by \nher husband, Charles M. 'Roswell; and James M. Chittim and his wife, \nThelma Neal Chittim; as LESSORS, and W. L. Scheig of San Antonio, \nTexas, as LESSEE, |

\nW I T N E s s E T H: \n1. Lessors, in consideration of $10.00, cash in hand paid, \nof the royalties herein provided, and of the agreement of Lessee \nherein contained, hereby grant, lease and let exclusively unto \nLessee the tracts of land hereinafter described for the purpose of \ntesting for mineral indications, and in such tests use the Seismo- \ngraph, Torsion Balance, Core Drill, or any other tools, machinery, \nequipment or explosive necessary and proper; and also prospecting, \ndrilling and mining for and producing oil, gas and other minerals \n(except metallic minerals), laying pipe lines, building tanks, \npower stations, telephone lines and other structures thereon to \nproduce, save, take care of, treat, transport and own said pro- \nducts and housing its employees (Lessee to conduct its geophysical \nwork in such manner as not to damage the buildings, water tanks \nor wells of Lessors, or the livestock of Lessors or Lessors' ten- ! \nants, )said lands being situated in Maverick, Zavalla and Dimmit \nCounties, Texas, to-wit:\n3-1.\n" }, "context": {"pageNumber": 2} ... |

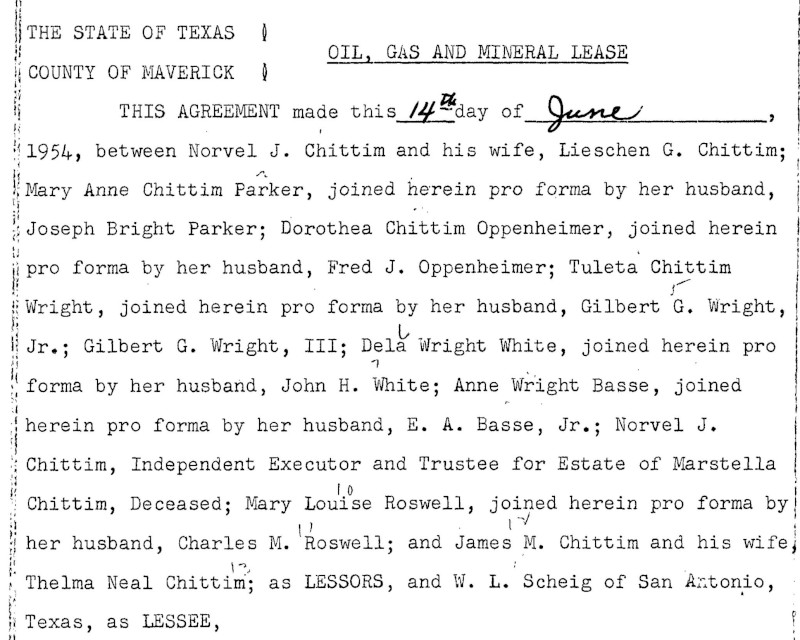

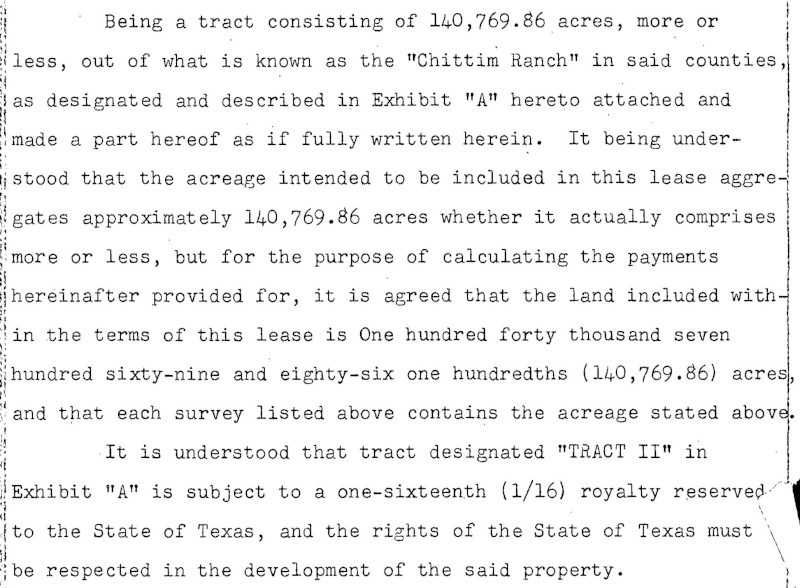

Page 3

... "text": "Being a tract consisting of 140,769.86 acres, more or \nless, out of what is known as the \"Chittim Ranch\" in said counties, \nas designated and described in Exhibit \"A\" hereto attached and \nmade a part hereof as if fully written herein. It being under- \nstood that the acreage intended to be included in this lease aggre- \ngates approximately 140,769.86 acres whether it actually comprises \nmore or less, but for the purpose of calculating the payments \nhereinafter provided for, it is agreed that the land included with- \nin the terms of this lease is One hundred forty thousand seven \nhundred sixty-nine and eighty-six one hundredths (140,769.86) acres, \nand that each survey listed above contains the acreage stated above. \nIt is understood that tract designated \"TRACT II\" in \nExhibit \"A\" is subject to a one-sixteenth (1/16) royalty reserved. \nto the State of Texas, and the rights of the State of Texas must \nbe respected in the development of the said property. |

\n2. Subject to the other provisions hereof, this lease shall \nbe for a term of ten (10) years from date hereof (called \"Primary \nTerm\"), and as long thereafter as oil, gas or other minerals \n(except metallic minerals) are produced from said land hereunder \nin paying quantities, subject, however, to all of the terms and \nprovisions of this lease. After expiration of the primary term, \nthis lease shall terminate as to all lands included herein, save \nand except as to those tracts which lessee maintains in force and \neffect according to the requirements hereof. \n3. The royalties to be paid by Lessee are (a) on oil, one- \neighth (1/8) of that produced and saved from said land, the same to \nbe delivered at the well or to the credit of Lessors into the pipe i \nline to which the well may be connected; (b) on gas, including \ni casinghead gas or other gaseous or vaporous substance, produced \nfrom the leased premises and sold or used by Lessee off the leased \npremises or in the manufacture of gasoline or other products, the \nmarket value, at the mouth of the well, of one-eighth (1/8) of \n.\n3-2-\n?\n" }, "context": {"pageNumber": 3} ... |

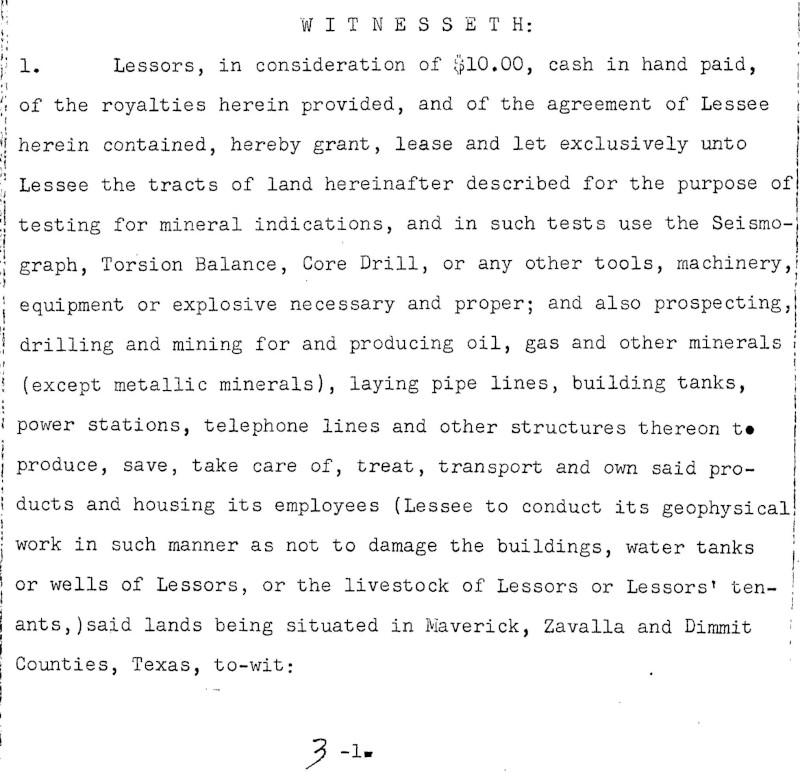

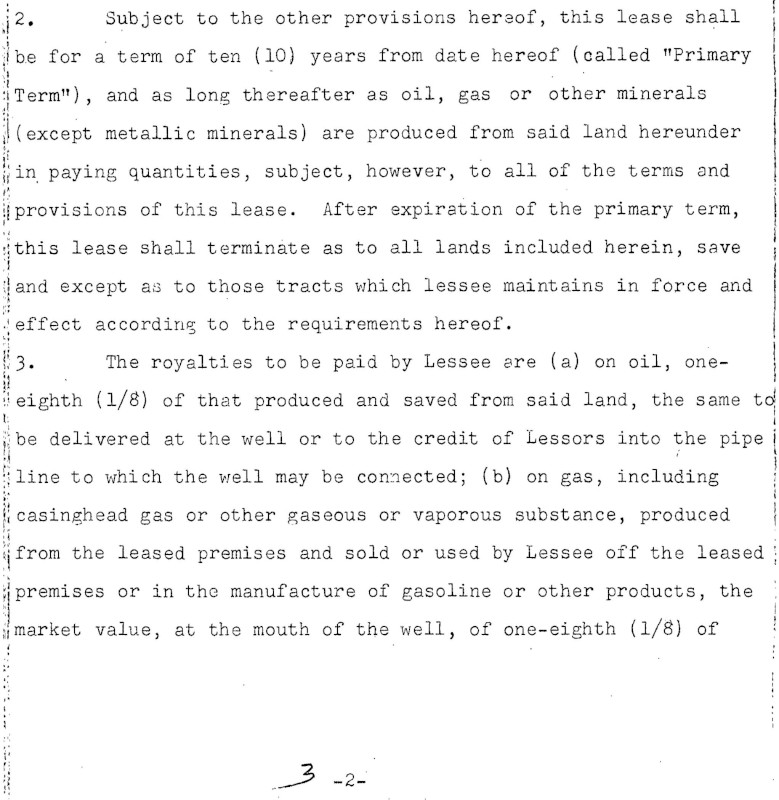

Page 4

... "text": "•\n:\n.\nthe gas or casinghead gas so used or sold. On all gas or casing- \nhead gas sold at the well, the royalty shall be one-eighth (1/8) \nof the amounts realized from such sales. While gas from any well \nproducing gas only is being used or sold by. Lessee, Lessor may have \nenough of said gas for all stoves and inside lights in the prin- \ncipal dwelling house on the leased premises by making Lessors' own \nconnections with the well and by assuming all risk and paying all \nexpenses. And (c) on all other minerals (except metallic minerals) \nmined and marketed, one tenth (1/10). either in kind or value at the \nwell or mine at Lessee's election. \nFor the purpose of royalty payments under 3 (b) hereof, \nall liquid hydrocarbons (including distillate) recovered and saved n| by Lessee in separators or traps on the leased premises shall be \nconsidered as oil. Should such a plant be constructed by another \nthan Lessee to whom Lessee should sell or deliver the gas or cas- \ninghead gas produced from the leased premises for processing, then \nthe royalty thereon shall be one-eighth (1/8) of the amounts \nrealized by Lessee from such sales or deliveries. |

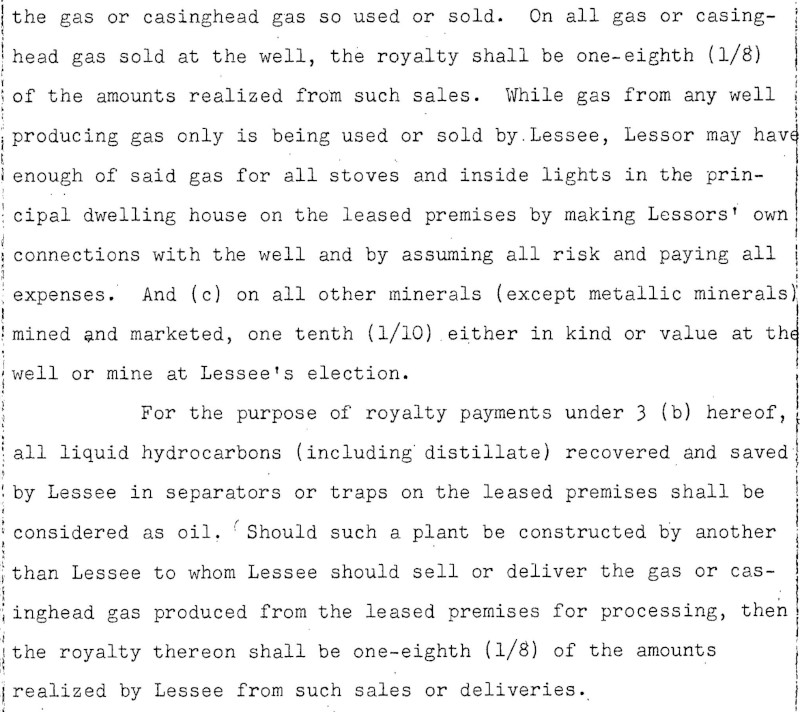

\nOr if such plant is owned or constructed or operated by \nLessee, then the royalty shall be on the basis of one-eighth (1/8) | \nof the prevailing price in the area for such products.. \nThe provisions of this paragraph shall control as to any \nconflict with Paragraph 3 (b). Lessors shall also be entitled to \nsaid royalty interest in all residue gas .obtained, saved and mar- \nketed from said premises, or used off the premises, or that may be \nreplaced in the reservoir by 'any recycling process, settlement \ntherefor to be made to Lessors when such gas is marketed or used \noff the premises. ! \nIf at the expiration of the primary term of this lease \nLessee has not found and produced oil or gas in paying quantities \nin any formation lying fifty (50) feet below the base of what is \nknown as the Rhodessa section at the particular point where the \nwell is drilled, then, subject to the further provisions hereof, \nthis lease shall terminate as to all horizons below fifty (50) \nI feet below the Rhodessa section. And if at the expiration of the \n3 -3-\n" }, "context": {"pageNumber": 4} ... |

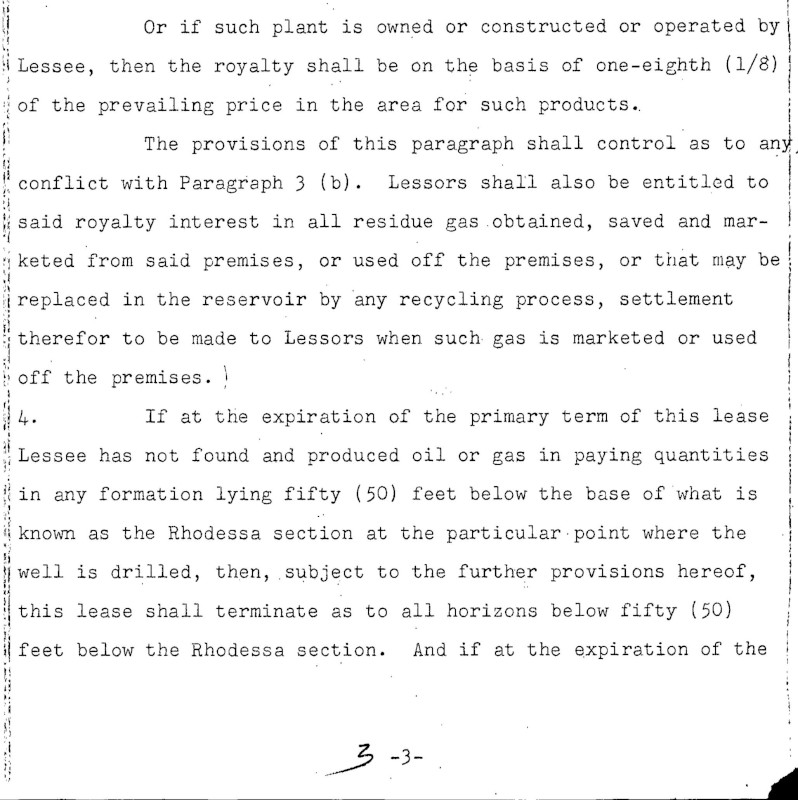

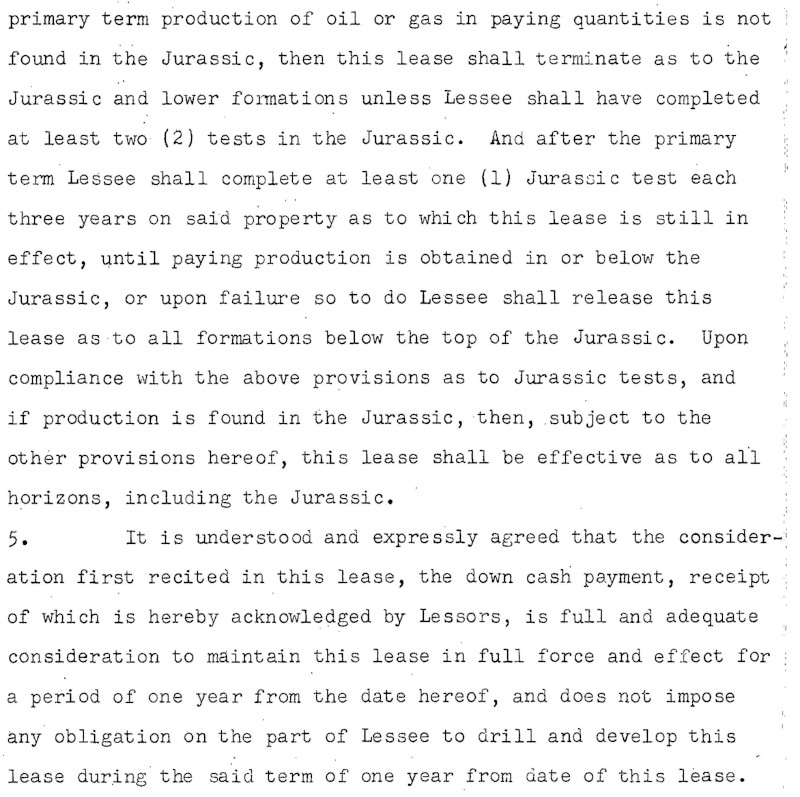

Page 5

... "text": ".\n.\n:\nI\n.\n.\n.:250:-....\n.\n...\n.\n....\n....\n..\n..\n. .. \n.\n..\n.\n...\n...\n.-\n.\n.\n..\n..\n17\n.\n:\n-\n-\n-\n.\n..\n. \nprimary term production of oil or gas in paying quantities is not \nfound in the Jurassic, then this lease shall terminate as to the \nJurassic and lower formations unless Lessee shall have completed \nat least two (2) tests in the Jurassic. And after the primary \nterm Lessee shall complete at least one (1) Jurassic test each \nthree years on said property as to which this lease is still in \neffect, until paying production is obtained in or below the \nJurassic, or upon failure so to do Lessee shall release this \nlease as to all formations below the top of the Jurassic. Upon \ncompliance with the above provisions as to Jurassic tests, and \nif production is found in the Jurassic, then, subject to the \nother provisions hereof, this lease shall be effective as to all \nhorizons, including the Jurassic.. \n5. It is understood and expressly agreed that the consider- \niation first recited in this lease, the down cash payment, receipt \nof which is hereby acknowledged by Lessors, is full and adequate \nconsideration to maintain this lease in full force and effect for \na period of one year from the date hereof, and does not impose \nany obligation on the part of Lessee to drill and develop this \nlease during the said term of one year from date of this lease. |

\n6. This lease shall terminate as to both parties unless \non or before one year from this date, Lessee shall pay to or ten- ! \nder to Lessors or to the credit of Lessors, in the National Bank \nof Commerce, at San Antonio, Texas, (which bank and its successors \nare Lessors' agent, and shall continue as the depository for all \" \nrental payable hereunder regardless of changes in ownership of \nsaid land or the rental), the sum of One Dollar ($1.00) per acre \nas to all acreage then covered by this lease, and not surrendered, \nor maintained by production of oil, gas or other minerals, or by \ndrilling-reworking operations, all as hereinafter fully set out, : \nwhich shall maintain this lease in full force and effect for \nanother twelve-month period, without imposing any obligation on \nthe part of Lessee to drill and develop this lease. In like \nmanner, and upon like payment or tender annually, Lessee may \nmaintain this lease .in full force and effect for successive \ntwelve-month periods during the primary term, without imposing \n.\n--.\n.\n.\n.\n-\n::\n--- \n-\n3\n.\n..-\n-\n-\n:.\n.\n::\n. \n3-4-\n" }, "context": {"pageNumber": 5} ... |
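The excerpts above pair each page's recognized text with a context.pageNumber value. The following Python sketch collects those pairs from a parsed JSON response. It assumes that the response nests one entry per file and one entry per page under "responses", and that the text sits under "fullTextAnnotation"; that nesting and the helper name page_texts are assumptions for illustration, not details taken from this page.

```python
from typing import Any, Dict, List, Optional, Tuple


def page_texts(file_response: Dict[str, Any]) -> List[Tuple[Optional[int], str]]:
    """Return (page_number, text) pairs from a parsed annotate response."""
    results: List[Tuple[Optional[int], str]] = []
    # Assumed layout: outer "responses" has one entry per input file,
    # inner "responses" has one entry per annotated page or frame.
    for file_result in file_response.get("responses", []):
        for page in file_result.get("responses", []):
            number = page.get("context", {}).get("pageNumber")
            # Pages with no detected text yield an empty string.
            text = page.get("fullTextAnnotation", {}).get("text", "")
            results.append((number, text))
    return results
```

Missing keys are treated as empty rather than as errors, so a page without detected text still appears in the result with an empty string.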

Limitations

At most five pages are annotated. You can specify up to five specific pages to annotate.
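If a file has more pages of interest than the limit allows, one possible approach is to split the page list into groups of five and send one request per group. That workflow is an assumption rather than something this page prescribes, and chunk_pages is a hypothetical helper name.

```python
from typing import List, Sequence

MAX_PAGES_PER_REQUEST = 5  # the limit stated in this section


def chunk_pages(pages: Sequence[int], size: int = MAX_PAGES_PER_REQUEST) -> List[List[int]]:
    """Split a list of page numbers into consecutive groups of at most `size`."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return [list(pages[i:i + size]) for i in range(0, len(pages), size)]
```

Each returned group can then be used as the "pages" value of a separate request.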

Authentication

Set up your Google Cloud project and authentication.

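Currently supported feature types

| Feature type | Description |
|---|---|
| CROP_HINTS | Determines suggested vertices for a crop region on an image. |
| DOCUMENT_TEXT_DETECTION | Performs OCR on dense text images, such as documents (PDF/TIFF), and on images with handwriting. TEXT_DETECTION can be used for sparse text images. Takes precedence when both DOCUMENT_TEXT_DETECTION and TEXT_DETECTION are present. |
| FACE_DETECTION | Detects faces within the image. |
| IMAGE_PROPERTIES | Computes a set of image properties, such as the image's dominant colors. |
| LABEL_DETECTION | Adds labels based on image content. |
| LANDMARK_DETECTION | Detects landmarks within the image. |
| LOGO_DETECTION | Detects company logos within the image. |
| OBJECT_LOCALIZATION | Detects and extracts multiple objects in an image. |
| SAFE_SEARCH_DETECTION | Runs SafeSearch to detect potentially unsafe or undesirable content. |
| TEXT_DETECTION | Performs optical character recognition (OCR) on text within the image. Text detection is optimized for areas of sparse text within a larger image. If the image is a document (PDF/TIFF), has dense text, or contains handwriting, use DOCUMENT_TEXT_DETECTION instead. |
| WEB_DETECTION | Uses Google Image Search to detect topical entities such as news, events, or celebrities within the image, and to find similar images on the web. |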

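Sample code

You can send an annotation request with a locally stored file, or use a file that is stored in Cloud Storage.

Using a locally stored file

Use the following code samples to get feature annotations for a locally stored file.

REST

To perform online PDF/TIFF/GIF feature detection for a small batch of files, make a POST request and provide the appropriate request body.

Before using any of the request data, make the following replacements:

- BASE64_ENCODED_FILE: The base64 representation (ASCII string) of your binary file data. This string should look similar to the following string:

  JVBERi0xLjUNCiW1tbW1...ydHhyZWYNCjk5NzM2OQ0KJSVFT0Y=

- PROJECT_ID: Your Google Cloud project ID.

Field-specific considerations:

- inputConfig.mimeType: One of 'application/pdf', 'image/tiff', or 'image/gif'.
- pages: The specific pages of the file on which to perform feature detection.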
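As a small illustration of the inputConfig.mimeType field, the following Python sketch picks one of the three allowed values from a file name. The extension-to-type mapping (including both .tif and .tiff) and the helper name mime_type_for are assumptions for illustration; the API itself only sees the string you send.

```python
from pathlib import Path

# The three MIME types listed above, keyed by common file extensions (assumed).
SUPPORTED_MIME_TYPES = {
    ".pdf": "application/pdf",
    ".tif": "image/tiff",
    ".tiff": "image/tiff",
    ".gif": "image/gif",
}


def mime_type_for(filename: str) -> str:
    """Return the mimeType value for a file name, or raise ValueError."""
    suffix = Path(filename).suffix.lower()
    if suffix not in SUPPORTED_MIME_TYPES:
        raise ValueError(f"unsupported file type: {filename!r}")
    return SUPPORTED_MIME_TYPES[suffix]
```

Rejecting unknown extensions before building a request surfaces the mistake locally instead of in an API error.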

HTTP method and URL:

    POST https://vision.googleapis.com/v1/files:annotate

Request JSON body:

    {
      "requests": [
        {
          "inputConfig": {
            "content": "BASE64_ENCODED_FILE",
            "mimeType": "application/pdf"
          },
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            }
          ],
          "pages": [
            1, 2, 3, 4, 5
          ]
        }
      ]
    }
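The request body above can also be generated rather than written by hand. The sketch below, using only the Python standard library, base64-encodes raw file bytes and fills in the same fields. The function name build_local_request and its parameters are hypothetical; it is one possible way to produce the body, not an official client.

```python
import base64
from typing import Iterable


def build_local_request(
    file_bytes: bytes,
    mime_type: str,
    pages: Iterable[int],
    feature_type: str = "DOCUMENT_TEXT_DETECTION",
) -> dict:
    """Build a request body that embeds the file as base64 text."""
    # The "content" field carries the base64 representation as an ASCII string.
    content = base64.b64encode(file_bytes).decode("ascii")
    return {
        "requests": [
            {
                "inputConfig": {"content": content, "mimeType": mime_type},
                "features": [{"type": feature_type}],
                "pages": list(pages),
            }
        ]
    }
```

Passing the result to json.dumps and writing it to request.json gives a file that can be used with the commands in this section.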

To send your request, choose one of these options:

curl

Save the request body in a file named request.json, and execute the following command:

    curl -X POST \
         -H "Authorization: Bearer $(gcloud auth print-access-token)" \
         -H "x-goog-user-project: PROJECT_ID" \
         -H "Content-Type: application/json; charset=utf-8" \
         -d @request.json \
         "https://vision.googleapis.com/v1/files:annotate"

PowerShell

Save the request body in a file named request.json, and execute the following command:

    $cred = gcloud auth print-access-token
    $headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "PROJECT_ID" }

    Invoke-WebRequest `
      -Method POST `
      -Headers $headers `
      -ContentType: "application/json; charset=utf-8" `
      -InFile request.json `
      -Uri "https://vision.googleapis.com/v1/files:annotate" | Select-Object -Expand Content
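For readers who prefer Python to curl or PowerShell, the same HTTP request can be assembled with the standard library. This sketch only builds the request object with the headers used in the commands above; the function name make_annotate_request is hypothetical, and the access token is assumed to come from gcloud auth print-access-token or an equivalent source.

```python
import json
import urllib.request

ENDPOINT = "https://vision.googleapis.com/v1/files:annotate"


def make_annotate_request(body: dict, access_token: str, project_id: str) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying the JSON body."""
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "x-goog-user-project": project_id,
            "Content-Type": "application/json; charset=utf-8",
        },
        method="POST",
    )
```

To send it, pass the returned object to urllib.request.urlopen and parse the response body with json.load. Keeping construction separate from sending makes the request easy to inspect before any network call is made.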

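A successful annotate request immediately returns a JSON response.

For this feature (DOCUMENT_TEXT_DETECTION), the JSON response is similar to that of an image's document text detection request. The response contains bounding boxes for blocks broken down by paragraphs, words, and individual symbols. The full text is also detected. The response also contains a context field showing the location of the PDF or TIFF that was specified and the result's page number in the file.

The following JSON response is for a single page (page 2), and has been shortened for clarity.

Java

Before trying this sample, follow the Java setup instructions in the Vision API quickstart using client libraries. For more information, see the Vision API Java reference documentation.

Node.js

Before trying this sample, follow the Node.js setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Node.js API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

Python

Before trying this sample, follow the Python setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Python API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.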

Using a file on Cloud Storage

Use the following code samples to get feature annotations for a file on Cloud Storage.

REST

To perform online PDF/TIFF/GIF feature detection for a small batch of files, make a POST request and provide the appropriate request body.

Before using any of the request data, make the following replacements:

- CLOUD_STORAGE_FILE_URI: The path to a valid file (PDF/TIFF) in a Cloud Storage bucket. You must have at least read privileges to the file. Example:

  gs://cloud-samples-data/vision/document_understanding/custom_0773375000.pdf

- PROJECT_ID: Your Google Cloud project ID.

Field-specific considerations:

- inputConfig.mimeType: One of 'application/pdf', 'image/tiff', or 'image/gif'.
- pages: The specific pages of the file on which to perform feature detection.

HTTP method and URL:

    POST https://vision.googleapis.com/v1/files:annotate

Request JSON body:

    {
      "requests": [
        {
          "inputConfig": {
            "gcsSource": {
              "uri": "CLOUD_STORAGE_FILE_URI"
            },
            "mimeType": "application/pdf"
          },
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            }
          ],
          "pages": [
            1, 2, 3, 4, 5
          ]
        }
      ]
    }
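A Cloud Storage request differs from the local-file form only in that inputConfig carries a gcsSource.uri instead of inline content. The following Python sketch builds that body and checks two things up front: that the URI uses the gs:// scheme, and that no more than five pages are requested. The function name build_gcs_request and the choice to validate locally are assumptions for illustration.

```python
from typing import Iterable, List


def build_gcs_request(gcs_uri: str, mime_type: str, pages: Iterable[int]) -> dict:
    """Build a request body that points at a file in Cloud Storage."""
    page_list: List[int] = list(pages)
    if not gcs_uri.startswith("gs://"):
        raise ValueError("expected a Cloud Storage URI starting with gs://")
    if len(page_list) > 5:
        raise ValueError("at most five pages can be annotated")
    return {
        "requests": [
            {
                "inputConfig": {
                    "gcsSource": {"uri": gcs_uri},
                    "mimeType": mime_type,
                },
                "features": [{"type": "DOCUMENT_TEXT_DETECTION"}],
                "pages": page_list,
            }
        ]
    }
```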

To send your request, choose one of these options:

curl

Save the request body in a file named request.json, and execute the following command:

    curl -X POST \
         -H "Authorization: Bearer $(gcloud auth print-access-token)" \
         -H "x-goog-user-project: PROJECT_ID" \
         -H "Content-Type: application/json; charset=utf-8" \
         -d @request.json \
         "https://vision.googleapis.com/v1/files:annotate"

PowerShell

Save the request body in a file named request.json, and execute the following command:

    $cred = gcloud auth print-access-token
    $headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "PROJECT_ID" }

    Invoke-WebRequest `
      -Method POST `
      -Headers $headers `
      -ContentType: "application/json; charset=utf-8" `
      -InFile request.json `
      -Uri "https://vision.googleapis.com/v1/files:annotate" | Select-Object -Expand Content

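A successful annotate request immediately returns a JSON response.

For this feature (DOCUMENT_TEXT_DETECTION), the JSON response is similar to that of an image's document text detection request. The response contains bounding boxes for blocks broken down by paragraphs, words, and individual symbols. The full text is also detected. The response also contains a context field showing the location of the PDF or TIFF that was specified and the result's page number in the file.

The following JSON response is for a single page (page 2), and has been shortened for clarity.

Java

Before trying this sample, follow the Java setup instructions in the Vision API quickstart using client libraries. For more information, see the Vision API Java reference documentation.

Node.js

Before trying this sample, follow the Node.js setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Node.js API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.

Python

Before trying this sample, follow the Python setup instructions in the Vision quickstart using client libraries. For more information, see the Vision Python API reference documentation.

To authenticate to Vision, set up Application Default Credentials. For more information, see Set up authentication for a local development environment.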

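Try it

Try small batch online feature detection below.

You can use the PDF file already specified, or specify your own file in its place.

There are three feature types specified for this request:

- DOCUMENT_TEXT_DETECTION
- LABEL_DETECTION
- CROP_HINTS

You can add or remove feature types by changing the appropriate object in the request ({"type": "FEATURE_NAME"}).

Select Execute to send the request.

Request body: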

    {
      "requests": [
        {
          "inputConfig": {
            "gcsSource": {
              "uri": "gs://cloud-samples-data/vision/document_understanding/custom_0773375000.pdf"
            },
            "mimeType": "application/pdf"
          },
          "features": [
            {
              "type": "DOCUMENT_TEXT_DETECTION"
            },
            {
              "type": "LABEL_DETECTION"
            },
            {
              "type": "CROP_HINTS"
            }
          ],
          "pages": [
            1, 2, 3, 4, 5
          ]
        }
      ]
    }
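The "features" array in a request body like the one above can be produced from a plain list of feature names. The Python sketch below does that and rejects names that are not among the feature types listed on this page. The helper name features_block is hypothetical, and checking names locally is a convenience assumed here, not something the API requires.

```python
from typing import Dict, Iterable, List

# Feature types listed under "Currently supported feature types" on this page.
SUPPORTED_FEATURES = frozenset({
    "CROP_HINTS",
    "DOCUMENT_TEXT_DETECTION",
    "FACE_DETECTION",
    "IMAGE_PROPERTIES",
    "LABEL_DETECTION",
    "LANDMARK_DETECTION",
    "LOGO_DETECTION",
    "OBJECT_LOCALIZATION",
    "SAFE_SEARCH_DETECTION",
    "TEXT_DETECTION",
    "WEB_DETECTION",
})


def features_block(names: Iterable[str]) -> List[Dict[str, str]]:
    """Turn feature names into the list of {"type": ...} objects for a request."""
    name_list = list(names)
    unknown = [name for name in name_list if name not in SUPPORTED_FEATURES]
    if unknown:
        raise ValueError(f"unsupported feature types: {unknown}")
    return [{"type": name} for name in name_list]
```

Adding or removing a feature then means editing one list of names instead of the nested JSON objects.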