After training a model, AutoML Vision uses items from the TEST set to evaluate the quality and accuracy of the new model.

Evaluation overview

AutoML Vision provides an aggregate set of evaluation metrics indicating how well the model performs overall, as well as evaluation metrics for each category label, indicating how well the model performs for that label.

AuPRC : Area under Precision/Recall curve, also referred to as "average precision." Generally between 0.5 and 1.0. Higher values indicate more accurate models.

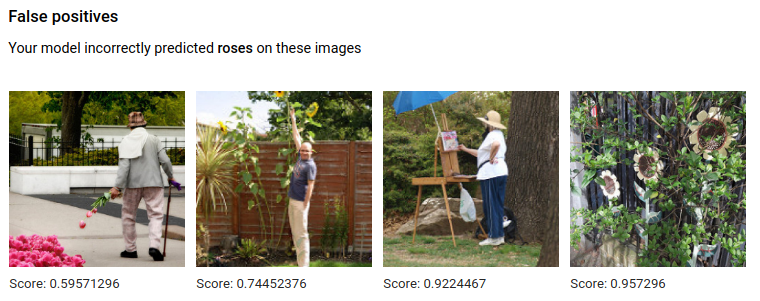

The Confidence threshold curves show how different confidence thresholds would affect precision, recall, true and false positive rates. Read about the relationship of precision and recall.

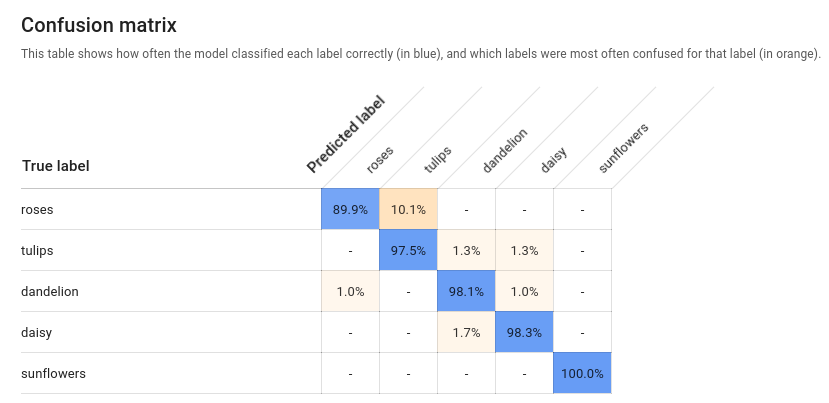

Confusion matrix: Only present for single-label-per-image models. Represents the percentage of times each label was predicted for each label in the training set during evaluation.

Ideally, label

onewould be assigned only to images classified as labelone, etc, so a perfect matrix would look like:100 0 0 0 0 100 0 0 0 0 100 0 0 0 0 100In the example above, if an image was classified as

onebut the model predictedtwo, the first row would instead look like:99 1 0 0More information can be found by searching for 'confusion matrix machine learning'.

AutoML Vision creates the confusion matrix for up to 10 labels. If you have more than 10 labels, the matrix includes the 10 labels with the most confusion (incorrect predictions).

Use this data to evaluate your model's readiness. High confusion, low AUC scores, or low precision and recall scores can indicate that your model needs additional training data or has inconsistent labels. A very high AUC score and perfect precision and recall can indicate that the data is too easy and may not generalize well.

List model evaluations

Once you have trained a model, you can list evaluation metrics for that model.

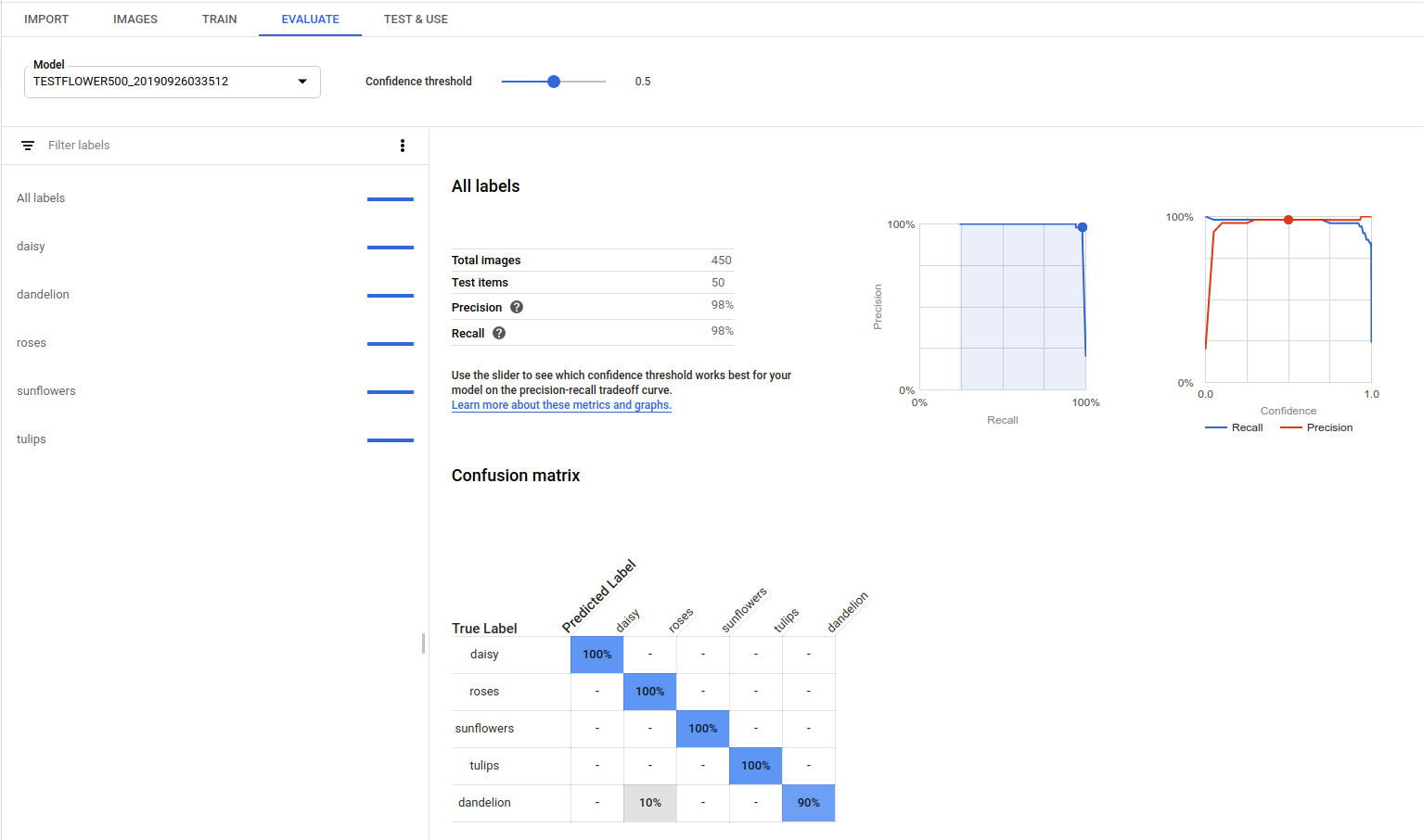

Web UI

Open the AutoML Vision UI and click the Models tab (with lightbulb icon) in the left navigation bar to display the available models.

To view the models for a different project, select the project from the drop-down list in the upper right of the title bar.

Click the row for the model you want to evaluate.

If necessary, click the Evaluate tab just below the title bar.

If training has been completed for the model, AutoML Vision shows its evaluation metrics.

REST

Before using any of the request data, make the following replacements:

- project-id: your GCP project ID.

- model-id: the ID of your model, from the

response when you created the model. The ID is the last element of the name of your model.

For example:

- model name:

projects/project-id/locations/location-id/models/IOD4412217016962778756 - model id:

IOD4412217016962778756

- model name:

- model-evaluation-id: the ID value of the model

evaluation. You can get model evaluation IDs from the

listmodel evaluations operation.

HTTP method and URL:

GET https://automl.googleapis.com/v1/projects/PROJECT_ID/locations/us-central1/models/MODEL_ID/modelEvaluations/MODEL_EVALUATION_ID

To send your request, choose one of these options:

curl

Execute the following command:

curl -X GET \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "x-goog-user-project: project-id" \

"https://automl.googleapis.com/v1/projects/PROJECT_ID/locations/us-central1/models/MODEL_ID/modelEvaluations/MODEL_EVALUATION_ID"

PowerShell

Execute the following command:

$cred = gcloud auth print-access-token

$headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "project-id" }

Invoke-WebRequest `

-Method GET `

-Headers $headers `

-Uri "https://automl.googleapis.com/v1/projects/PROJECT_ID/locations/us-central1/models/MODEL_ID/modelEvaluations/MODEL_EVALUATION_ID" | Select-Object -Expand Content

You should receive a JSON response similar to the following sample. Key object detection specific

fields are in bold, and a shortened version of classificationEvaluationMetrics

entries are shown for clarity:

Go

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

Java

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

Node.js

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

Python

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

Additional languages

C#: Please follow the C# setup instructions on the client libraries page and then visit the AutoML Vision reference documentation for .NET.

PHP: Please follow the PHP setup instructions on the client libraries page and then visit the AutoML Vision reference documentation for PHP.

Ruby: Please follow the Ruby setup instructions on the client libraries page and then visit the AutoML Vision reference documentation for Ruby.

Get model evaluation values

You can also get a specific model evaluation for a label (displayName) using

an evaluation ID. To get your model evaluation ID, run the list model evaluations

function shown in List model evaluations.

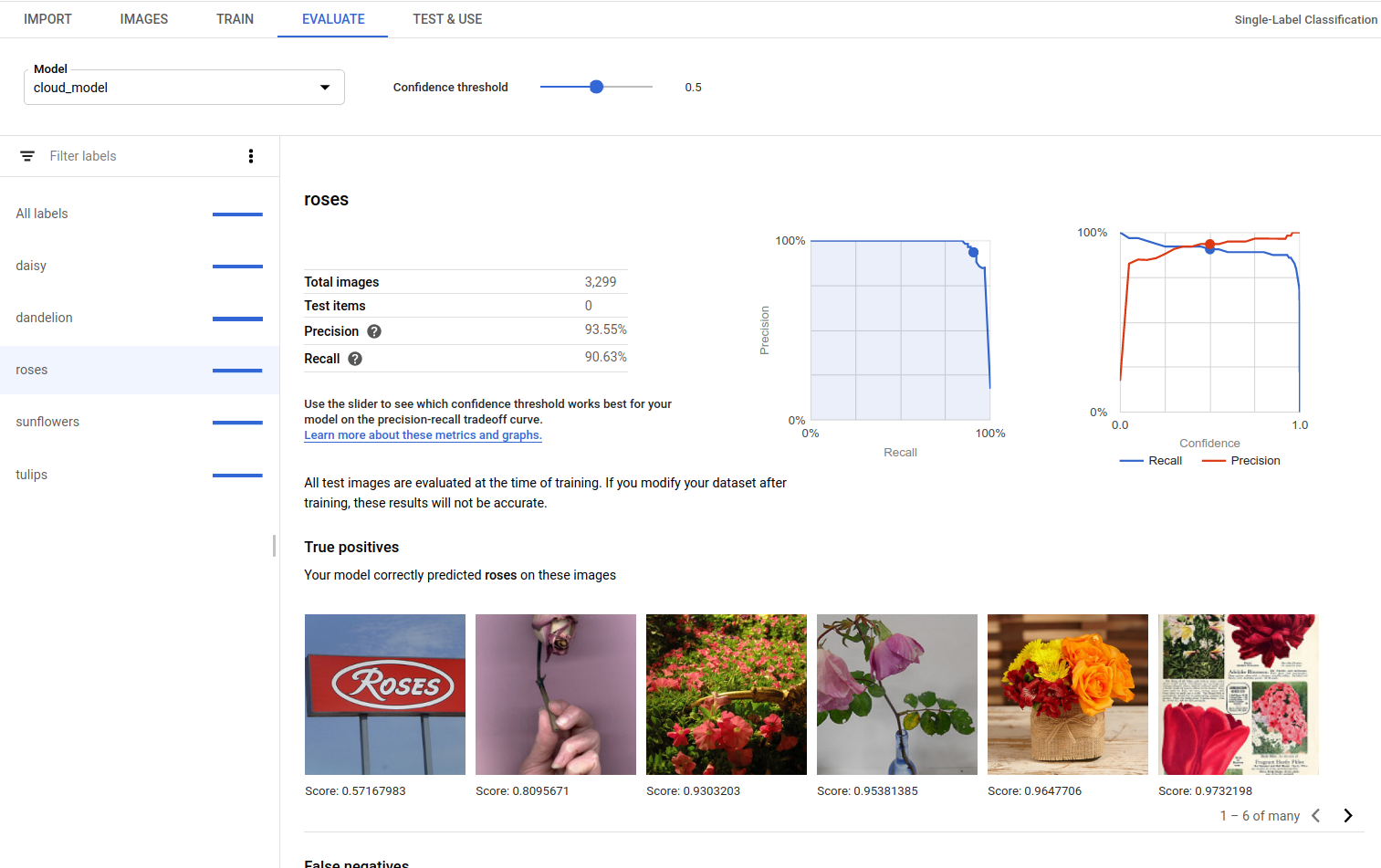

Web UI

Open the Vision Dashboard and click the lightbulb icon in the left navigation bar to display the available models.

To view the models for a different project, select the project from the drop-down list in the upper right of the title bar.

Click the row for the model you want to evaluate.

If necessary, click the Evaluate tab just below the title bar.

If training has been completed for the model, AutoML Vision shows its evaluation metrics.

To view the metrics for a specific label, select the label name from the list of labels in the lower part of the page.

REST

To get just the evaluation metrics for a specific label, add

/{MODEL_EVALUATION_ID} to the request above from the

response.

For example, you can find the model evaluation ID for the rose label

(displayName) in the evaluation name returned from the list

operation:

"name": "projects/PROJECT_ID/locations/us-central1/models/MODEL_ID/modelEvaluations/858136867710915695"

Before using any of the request data, make the following replacements:

- project-id: your GCP project ID.

- model-id: the ID of your model, from the

response when you created the model. The ID is the last element of the name of your model.

For example:

- model name:

projects/project-id/locations/location-id/models/IOD4412217016962778756 - model id:

IOD4412217016962778756

- model name:

- model-evaluation-id: the ID value of the model

evaluation. You can get model evaluation IDs from the

listmodel evaluations operation.

HTTP method and URL:

GET https://automl.googleapis.com/v1/projects/PROJECT_ID/locations/us-central1/models/MODEL_ID/modelEvaluations/MODEL_EVALUATION_ID

To send your request, choose one of these options:

curl

Execute the following command:

curl -X GET \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "x-goog-user-project: project-id" \

"https://automl.googleapis.com/v1/projects/PROJECT_ID/locations/us-central1/models/MODEL_ID/modelEvaluations/MODEL_EVALUATION_ID"

PowerShell

Execute the following command:

$cred = gcloud auth print-access-token

$headers = @{ "Authorization" = "Bearer $cred"; "x-goog-user-project" = "project-id" }

Invoke-WebRequest `

-Method GET `

-Headers $headers `

-Uri "https://automl.googleapis.com/v1/projects/PROJECT_ID/locations/us-central1/models/MODEL_ID/modelEvaluations/MODEL_EVALUATION_ID" | Select-Object -Expand Content

You should receive a JSON response similar to the following:

Go

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

Java

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

Node.js

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

Python

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

True Positives, False Negatives, and False Positives (UI only)

In the user interface you can observe specific examples of model performance, namely true positive (TP), false negative (FN), and false positive (FP) instances from your TRAINING and VALIDATION sets.

Web UI

Before trying this sample, follow the setup instructions for this language on the Client Libraries page.

You can access the TP, FN, and FP view in the UI by selecting the Evaluate tab, and then selecting any specific label.

By viewing trends in these predictions, you can modify your training set to improve model performance.

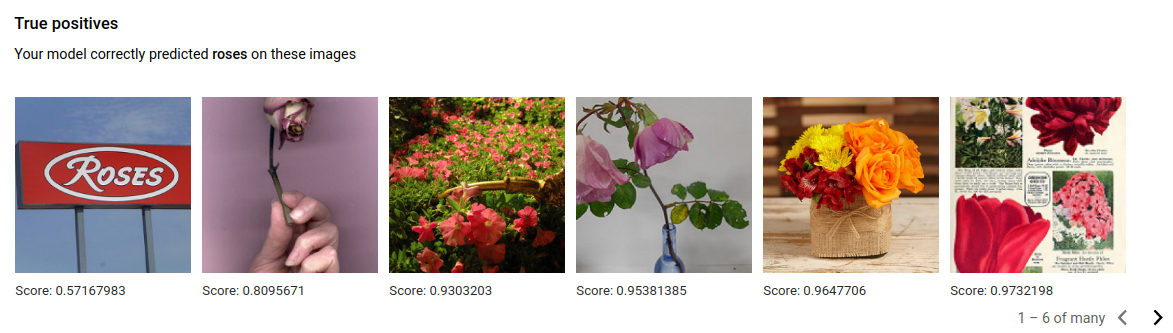

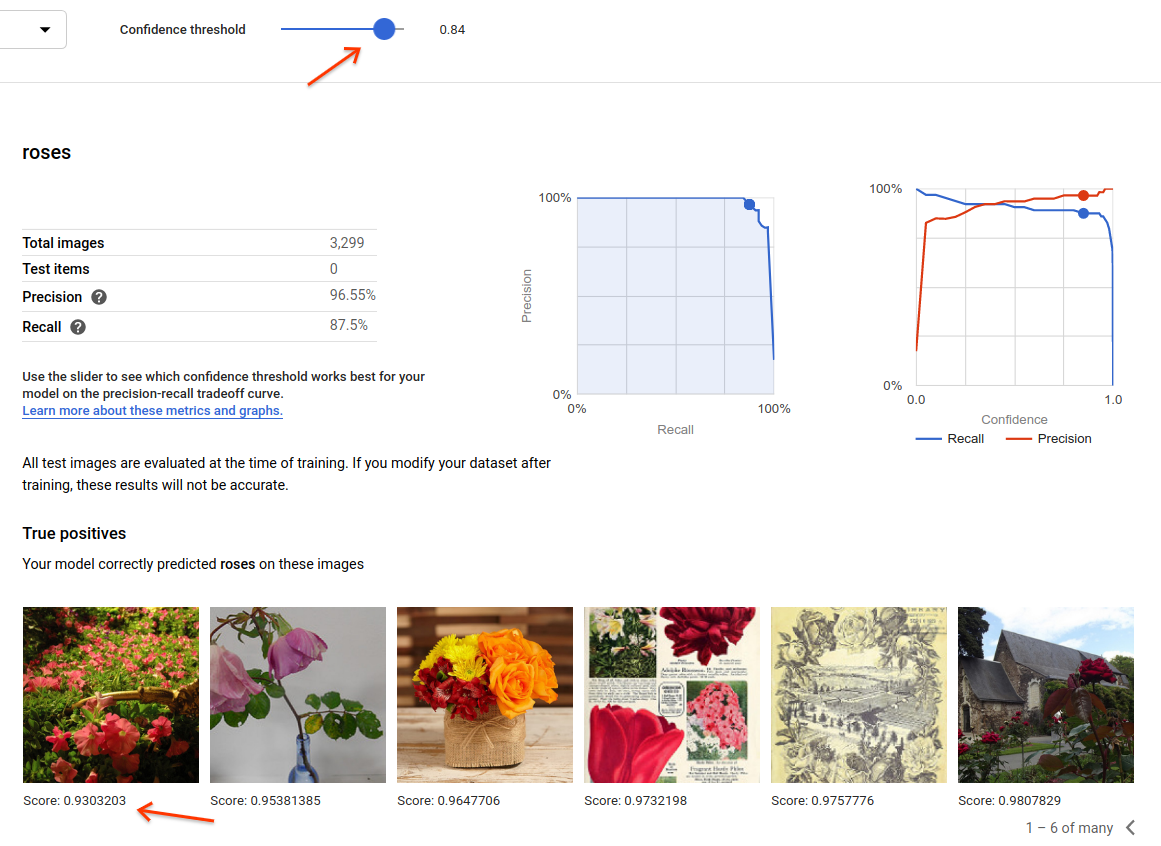

True positive images are sample images provided to the trained model that the model correctly annotated:

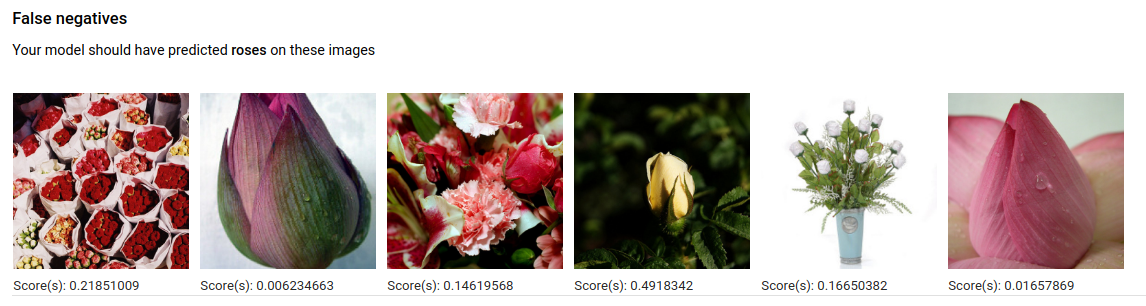

False negative images are similarly provided to the trained model, but the model failed to correctly annotate the image for the given label:

Lastly, false positive images are those provided to the trained model that were annotated with the given label, but should not have been annotated:

The model is selecting interesting corner cases, which presents an opportunity to refine your definitions and labels to help the model understand your label interpretations. For example, a stricter definition would help the model understand if you consider an abstract painting of a rose a "rose" (or not).

With repeated label, train, and evaluate loops your model will surface other such ambiguities in your data.

You can also adjust the score threshold in this view in the user interface, and the TP, FN, and FP images displayed will reflect the threshold change:

Iterate on your model

If you're not happy with the quality levels, you can go back to earlier steps to improve the quality:

- AutoML Vision allows you to sort the images by how “confused” the model is, by the true label and its predicted label. Look through these images and make sure they're labeled correctly.

- Consider adding more images to any labels with low quality.

- You may need to add different types of images (e.g. wider angle, higher or lower resolution, different points of view).

- Consider removing labels altogether if you don't have enough training images.

- Remember that machines can’t read your label name; it's just a random string of letters to them. If you have one label that says "door" and another that says "door_with_knob" the machine has no way of figuring out the nuance other than the images you provide it.

- Augment your data with more examples of true positives and negatives. Especially important examples are the ones that are close to the decision boundary (i.e. likely to produce confusion, but still correctly labeled).

- Specify your own TRAIN, TEST, VALIDATION split. The tool randomly assigns images, but near-duplicates may end up in TRAIN and VALIDATION which could lead to overfitting and then poor performance on the TEST set.

Once you've made changes, train and evaluate a new model until you reach a high enough quality level.