Das Streaming umfasst Antworten auf Prompts, sobald diese generiert werden.Das heißt, sobald das Modell Ausgabetokens generiert, werden die Ausgabetokens gesendet.

Über folgende Elemente können Sie Streaminganfragen an das Vertex AI Large Language Model (LLM) stellen:

- Die Vertex AI REST API mit vom Server gesendeten Ereignissen (SSE)

- Die Vertex AI REST API

- Das Vertex AI SDK für Python

- Eine Clientbibliothek

Die Streaming- und Nicht-Streaming-APIs verwenden dieselben Parameter; es gibt keinen Unterschied in Preisgestaltung und Kontingenten.

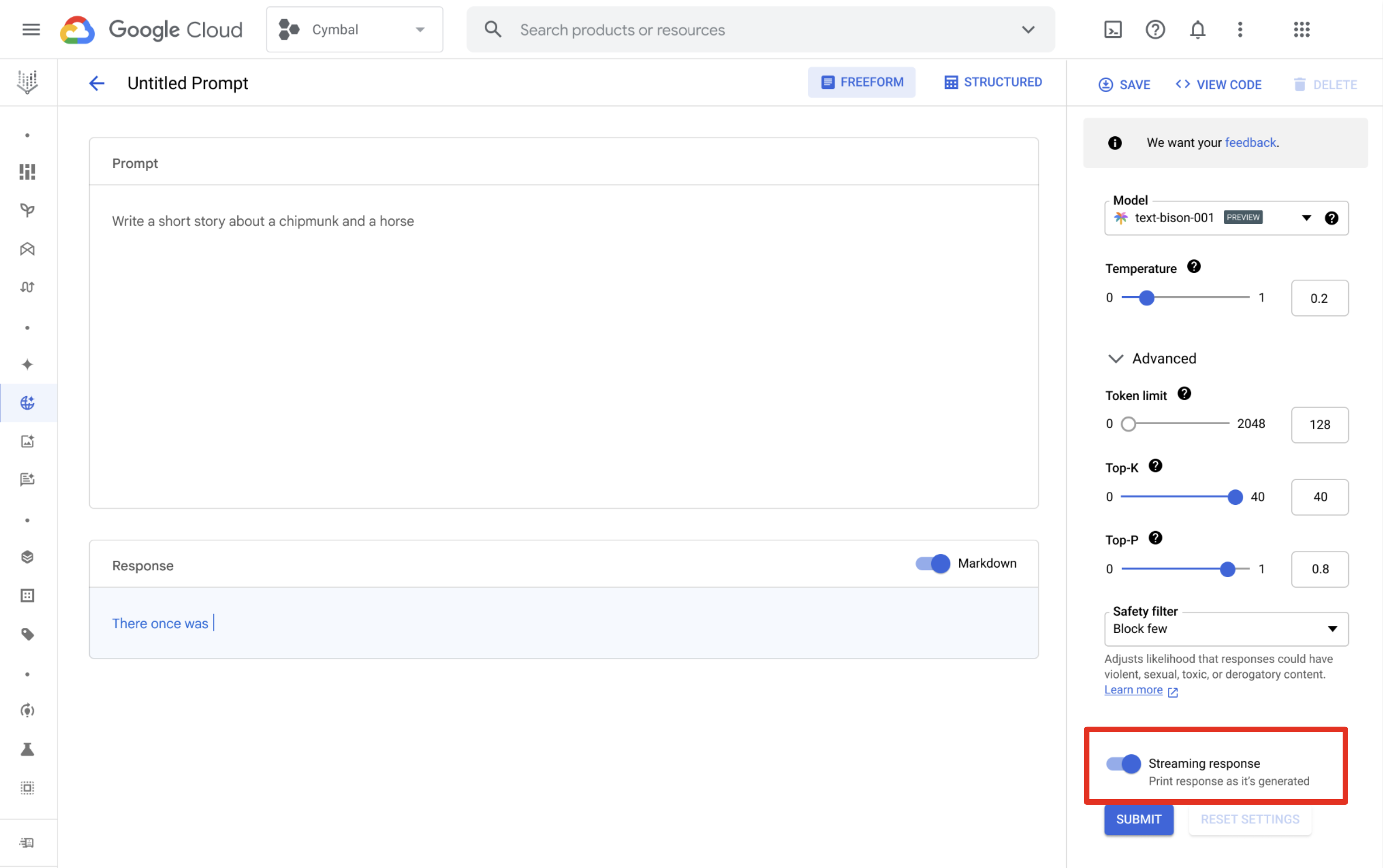

Vertex AI Studio

Mit Vertex AI Studio können Sie Prompts erstellen und ausführen und gestreamte Antworten erhalten. Klicken Sie auf der Designseite für Prompts auf die Schaltfläche Streamingantwort, um das Streaming zu aktivieren.

Unterstützte Sprachen

| Sprachcode | Sprache |

|---|---|

en |

Englisch |

es |

Spanisch |

pt |

Portugiesisch |

fr |

Französisch |

it |

Italienisch |

de |

Deutsch |

ja |

Japanisch |

ko |

Koreanisch |

hi |

Hindi |

zh |

Chinesisch |

id |

Indonesisch |

Beispiele

Sie haben folgende Möglichkeiten, um die Streaming API aufzurufen:

REST API mit vom Server gesendeten Ereignissen (SSE)

Die Parameter unterscheiden sich je nach Modelltyp, der in den folgenden Beispielen verwendet wird:

Text

Derzeit unterstützte Modelle sind text-bison und text-unicorn. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

MODEL_ID=text-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict?alt=sse -d \

'{

"inputs": [

{

"struct_val": {

"prompt": {

"string_val": [ "'"${PROMPT}"'" ]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.8 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

Antworten sind vom Server gesendete Ereignisnachrichten.

data: {"outputs": [{"structVal": {"content": {"stringVal": [RESPONSE]},"safetyAttributes": {"structVal": {"blocked": {"boolVal": [BOOLEAN]},"categories": {"listVal": [{"stringVal": [Safety category name]}]},"scores": {"listVal": [{"doubleVal": [Safety category score]}]}}},"citationMetadata": {"structVal": {"citations": {}}}}}]}

Chat

Das aktuell unterstützte Modell ist chat-bison. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

AUTHOR="USER"

MODEL_ID=chat-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict?alt=sse -d \

$'{

"inputs": [

{

"struct_val": {

"messages": {

"list_val": [

{

"struct_val": {

"content": {

"string_val": [ "'"${PROMPT}"'" ]

},

"author": {

"string_val": [ "'"${AUTHOR}"'"]

}

}

}

]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.5 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

Antworten sind vom Server gesendete Ereignisnachrichten.

data: {"outputs": [{"structVal": {"candidates": {"listVal": [{"structVal": {"author": {"stringVal": [AUTHOR]},"content": {"stringVal": [RESPONSE]}}}]},"citationMetadata": {"listVal": [{"structVal": {"citations": {}}}]},"safetyAttributes": {"structVal": {"blocked": {"boolVal": [BOOLEAN]},"categories": {"listVal": [{"stringVal": [Safety category name]}]},"scores": {"listVal": [{"doubleVal": [Safety category score]}]}}}}}]}

Code

Das aktuell unterstützte Modell ist code-bison. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

MODEL_ID=code-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict?alt=sse -d \

$'{

"inputs": [

{

"struct_val": {

"prefix": {

"string_val": [ "'"${PROMPT}"'" ]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.8 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

Antworten sind vom Server gesendete Ereignisnachrichten.

data: {"outputs": [{"structVal": {"citationMetadata": {"structVal": {"citations": {}}},"safetyAttributes": {"structVal": {"blocked": {"boolVal": [BOOLEAN]},"categories": {"listVal": [{"stringVal": [Safety category name]}]},"scores": {"listVal": [{"doubleVal": [Safety category score]}]}}},"content": {"stringVal": [RESPONSE]}}}]}

Codechat

Das aktuell unterstützte Modell ist codechat-bison. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

AUTHOR="USER"

MODEL_ID=codechat-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict?alt=sse -d \

$'{

"inputs": [

{

"struct_val": {

"messages": {

"list_val": [

{

"struct_val": {

"content": {

"string_val": [ "'"${PROMPT}"'" ]

},

"author": {

"string_val": [ "'"${AUTHOR}"'"]

}

}

}

]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.5 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

Antworten sind vom Server gesendete Ereignisnachrichten.

data: {"outputs": [{"structVal": {"safetyAttributes": {"structVal": {"blocked": {"boolVal": [BOOLEAN]},"categories": {"listVal": [{"stringVal": [Safety category name]}]},"scores": {"listVal": [{"doubleVal": [Safety category score]}]}}},"citationMetadata": {"listVal": [{"structVal": {"citations": {}}}]},"candidates": {"listVal": [{"structVal": {"content": {"stringVal": [RESPONSE]},"author": {"stringVal": [AUTHOR]}}}]}}}]}

REST API

Die Parameter unterscheiden sich je nach Modelltyp, der in den folgenden Beispielen verwendet wird:

Text

Derzeit unterstützte Modelle sind text-bison und text-unicorn. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

MODEL_ID=text-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict -d \

'{

"inputs": [

{

"struct_val": {

"prompt": {

"string_val": [ "'"${PROMPT}"'" ]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.8 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

{

"outputs": [

{

"structVal": {

"citationMetadata": {

"structVal": {

"citations": {}

}

},

"safetyAttributes": {

"structVal": {

"categories": {},

"scores": {},

"blocked": {

"boolVal": [

false

]

}

}

},

"content": {

"stringVal": [

RESPONSE

]

}

}

}

]

}

Chat

Das aktuell unterstützte Modell ist chat-bison. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

AUTHOR="USER"

MODEL_ID=chat-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict -d \

$'{

"inputs": [

{

"struct_val": {

"messages": {

"list_val": [

{

"struct_val": {

"content": {

"string_val": [ "'"${PROMPT}"'" ]

},

"author": {

"string_val": [ "'"${AUTHOR}"'"]

}

}

}

]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.5 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

{

"outputs": [

{

"structVal": {

"candidates": {

"listVal": [

{

"structVal": {

"content": {

"stringVal": [

RESPONSE

]

},

"author": {

"stringVal": [

AUTHOR

]

}

}

}

]

},

"citationMetadata": {

"listVal": [

{

"structVal": {

"citations": {}

}

}

]

},

"safetyAttributes": {

"listVal": [

{

"structVal": {

"categories": {},

"blocked": {

"boolVal": [

false

]

},

"scores": {}

}

}

]

}

}

}

]

}

Code

Das aktuell unterstützte Modell ist code-bison. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

MODEL_ID=code-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict -d \

$'{

"inputs": [

{

"struct_val": {

"prefix": {

"string_val": [ "'"${PROMPT}"'" ]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.8 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

{

"outputs": [

{

"structVal": {

"safetyAttributes": {

"structVal": {

"categories": {},

"scores": {},

"blocked": {

"boolVal": [

false

]

}

}

},

"citationMetadata": {

"structVal": {

"citations": {}

}

},

"content": {

"stringVal": [

RESPONSE

]

}

}

}

]

}

Codechat

Das aktuell unterstützte Modell ist codechat-bison. Siehe verfügbare Versionen.

Anfrage

PROJECT_ID=YOUR_PROJECT_ID

PROMPT="PROMPT"

AUTHOR="USER"

MODEL_ID=codechat-bison

curl \

-X POST \

-H "Authorization: Bearer $(gcloud auth print-access-token)" \

-H "Content-Type: application/json" \

https://us-central1-aiplatform.googleapis.com/v1/projects/${PROJECT_ID}/locations/us-central1/publishers/google/models/${MODEL_ID}:serverStreamingPredict -d \

$'{

"inputs": [

{

"struct_val": {

"messages": {

"list_val": [

{

"struct_val": {

"content": {

"string_val": [ "'"${PROMPT}"'" ]

},

"author": {

"string_val": [ "'"${AUTHOR}"'"]

}

}

}

]

}

}

}

],

"parameters": {

"struct_val": {

"temperature": { "float_val": 0.5 },

"maxOutputTokens": { "int_val": 1024 },

"topK": { "int_val": 40 },

"topP": { "float_val": 0.95 }

}

}

}'

Antwort

{

"outputs": [

{

"structVal": {

"candidates": {

"listVal": [

{

"structVal": {

"content": {

"stringVal": [

RESPONSE

]

},

"author": {

"stringVal": [

AUTHOR

]

}

}

}

]

},

"citationMetadata": {

"listVal": [

{

"structVal": {

"citations": {}

}

}

]

},

"safetyAttributes": {

"listVal": [

{

"structVal": {

"categories": {},

"blocked": {

"boolVal": [

false

]

},

"scores": {}

}

}

]

}

}

}

]

}

Vertex AI SDK für Python

Informationen zur Installation des Vertex AI SDK für Python finden Sie unter Vertex AI SDK für Python installieren.

Text

import vertexai

from vertexai.language_models import TextGenerationModel

def streaming_prediction(

project_id: str,

location: str,

) -> str:

"""Streaming Text Example with a Large Language Model"""

vertexai.init(project=project_id, location=location)

text_generation_model = TextGenerationModel.from_pretrained("text-bison")

parameters = {

"temperature": temperature, # Temperature controls the degree of randomness in token selection.

"max_output_tokens": 256, # Token limit determines the maximum amount of text output.

"top_p": 0.8, # Tokens are selected from most probable to least until the sum of their probabilities equals the top_p value.

"top_k": 40, # A top_k of 1 means the selected token is the most probable among all tokens.

}

responses = text_generation_model.predict_streaming(prompt="Give me ten interview questions for the role of program manager.", **parameters)

for response in responses:

`print(response)`

Chat

import vertexai

from vertexai.language_models import ChatModel, InputOutputTextPair

def streaming_prediction(

project_id: str,

location: str,

) -> str:

"""Streaming Chat Example with a Large Language Model"""

vertexai.init(project=project_id, location=location)

chat_model = ChatModel.from_pretrained("chat-bison")

parameters = {

"temperature": 0.8, # Temperature controls the degree of randomness in token selection.

"max_output_tokens": 256, # Token limit determines the maximum amount of text output.

"top_p": 0.95, # Tokens are selected from most probable to least until the sum of their probabilities equals the top_p value.

"top_k": 40, # A top_k of 1 means the selected token is the most probable among all tokens.

}

chat = chat_model.start_chat(

context="My name is Miles. You are an astronomer, knowledgeable about the solar system.",

examples=[

InputOutputTextPair(

input_text="How many moons does Mars have?",

output_text="The planet Mars has two moons, Phobos and Deimos.",

),

],

)

responses = chat.send_message_streaming(

message="How many planets are there in the solar system?", **parameters)

for response in responses:

`print(response)`

Code

import vertexai

from vertexai.language_models import CodeGenerationModel

def streaming_prediction(

project_id: str,

location: str,

) -> str:

"""Streaming Chat Example with a Large Language Model"""

vertexai.init(project=project_id, location=location)

code_model = CodeGenerationModel.from_pretrained("code-bison")

parameters = {

"temperature": 0.8, # Temperature controls the degree of randomness in token selection.

"max_output_tokens": 256, # Token limit determines the maximum amount of text output.

}

responses = code_model.predict_streaming(

prefix="Write a function that checks if a year is a leap year.", **parameters)

for response in responses:

`print(response)`

Codechat

import vertexai

from vertexai.language_models import CodeChatModel

def streaming_prediction(

project_id: str,

location: str,

) -> str:

"""Streaming Chat Example with a Large Language Model"""

vertexai.init(project=project_id, location=location)

codechat_model = CodeChatModel.from_pretrained("codechat-bison")

parameters = {

"temperature": 0.8, # Temperature controls the degree of randomness in token selection.

"max_output_tokens": 1024, # Token limit determines the maximum amount of text output.

}

codechat = codechat_model.start_chat()

responses = codechat.send_message_streaming(

message="Please help write a function to calculate the min of two numbers", **parameters)

for response in responses:

`print(response)`

Verfügbare Clientbibliotheken

Mit einer der folgenden Clientbibliotheken können Sie Antworten streamen:

- Python

- Node.js

- Java

- Go

- C#

Beispiele für die Verwendung von REST API-Beispielanfragen und -Antworten finden Sie unter Beispiele, die die REST-API verwenden.

Um Beispielcodeanfragen und -antworten mit dem Vertex AI SDK für Python anzuzeigen, lesen Sie Beispiele, die Vertex AI SDK für Python verwenden.

Responsible AI

RAI-Filter (Responsible Artificial Intelligence) scannen die Streamingausgabe, während das Modell sie generiert. Wird ein Verstoß erkannt, blockieren die Filter die problematischen Ausgabetokens und geben eine Ausgabe mit einem blockierten Flag unter safetyAttributes zurück, das den Stream beendet.

Nächste Schritte

- Weitere Informationen zum Entwerfen von Text-Prompts und Textchat-Prompts.

- Testen von Prompts in Vertex AI Studio

- Informationen zu Texteinbettungen

- Versuchen Sie, ein Sprach-Foundation Model zu optimieren.

- Weitere Informationen zu Best Practices für verantwortungsvolle KI und den Sicherheitsfiltern von Vertex AI